Archive

Not Either, But Both?

I recently dug up an old DDS tutorial pitch by distributed system middleware expert extraordinaire, Doug Schmidt. The last slide in the pitch shows a side-by-side, high-level feature comparison of CORBA and DDS:

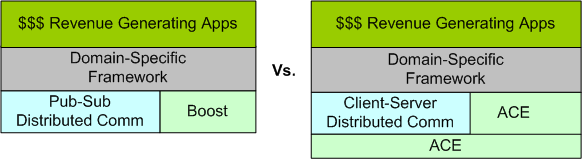

High performance middleware technologies like CORBA and DDS are big, necessarily complex beasts that have high learning curves. Thus, I’m not so sure I agree with Doug’s assessment that complex software systems (like sensor-based command and control systems) need both. One can build a pub-sub mechanism on top of CORBA (using the notification, event, or messaging services) and one can build a client-server, request-response mechanism on top of DDS (using the TCP/IP-like “reliability” QoS). What do you think about the tradeoff? Fill the holes yourself with a tad of home-grown infrastructure code, or use both and create a two-headed, fire-breathing dragon?

Message-Centric Vs. Data-Centric

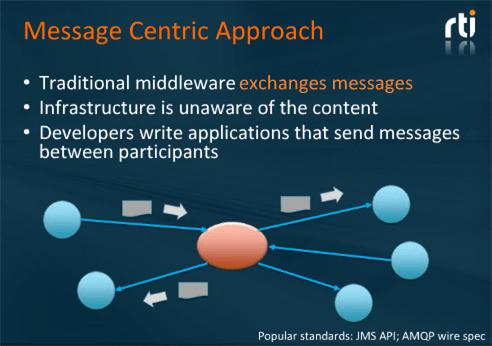

The slide below, plagiarized from a recent webinar presented by RTI Inc’s CEO Stan Schneider, shows the evolution of distributed system middleware over the years.

At first, I couldn’t understand the difference between the message-centric pub-sub (MCPS) and data-centric pub-sub (DCPS) patterns. I thought the difference between them was trivial, superficial, and unimportant. However, as Stan’s webinar unfolded, I sloowly started to “get it“.

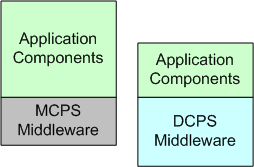

In MCPS, application tier messages are opaque to to the middleware (MW). The separation of concerns between the app and MW tiers is clean and simple:

In DCPS systems, app tier messages are transparent to the MW tier – which blurs the line between the two layers and violates the “ideal” separation of concerns tenet of layered software system design. Because of this difference, the term “message” is superceded in DCPS-based technologies (like the OMG‘s DDS) by the term “topic“. The entity formerly known as a “message” is now defined as a topic sample.

Unlike MCPS MW, DCPS MW supports being “told” by the app tier pub-sub components which Quality Of Service (QoS) parameters are important to each of them. For example, a publisher can “promise” to send topic samples at a minimum rate and/or whether it will use a best-effort UDP-like or reliable TCP-like protocol for transport. On the receive side, a subscriber can tell the MW that it only wants to see every third topic sample and/or only those samples in which certain data-field-filtering criteria are met. DCPS MW technologies like DDS support a rich set of QoS parameters that are usually hard-coded and frozen into MCPS MW – if they’re supported at all.

With smart, QoS-aware DCPS MW, app components tend to be leaner and take less time to develop because the tedious logic that implements the QoS functionality is pushed down into the MW. The app simply specifies these behaviors to the MW during launch and it gets notified by the MW during operation when QoS requirements aren’t being, or can’t be, met.

The cost of switching from an MCPS to a DCPS-based distributed system design approach is the increased upfront, one-time, learning curve (or more likely, the “unlearning” curve).

Related articles

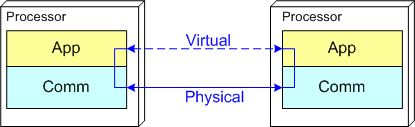

Push And Pull Message Retrieval

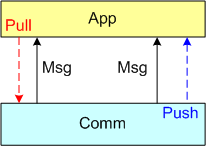

The figure below models a two layer distributed system. Information is exchanged between application components residing on different processor nodes via a cleanly separated, underlying communication “layer“. App-to-App communication takes place “virtually“, with the arcane, physical, over-the-wire, details being handled under the covers by the unheralded Comm layer.

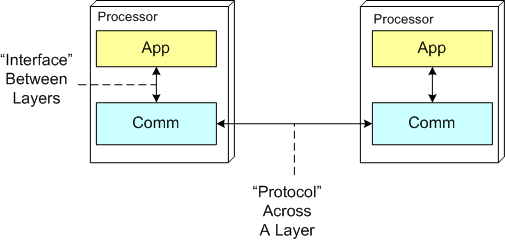

In the ISO OSI reference model for inter-machine communication, the vertical linkage between two layers in a software stack is referred to as an “interface” and the horizontal linkage between two instances of a layer running on different machines is called a “protocol“. This interface/protocol distinction is important because solving flow-control and error-control issues between machines is much more involved than handling them within the sheltered confines of a single machine.

In this post, I’m going to focus on the receiving end of a peer-to-peer information transfer. Specifically, I’m going to explore the two methods in which an App component can retrieve messages from the comm layer: Pull and Push. In the “Pull” approach, message transfer from the Comm layer to the App layer is initiated and controlled by the App component via polling. In the “Push” method, inversion of control is employed and the Comm layer initiates/controls the transfer by invoking a callback function installed by the App component on initialization. Any professional Comm subsystem worth its salt will make both methods of retrieval available to App component developers.

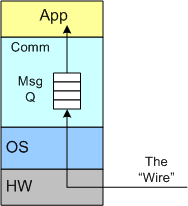

The figure below shows a model of a comm subsystem that supplies a message queue between the application layer and the “wire“. The purpose of this queue is to prevent high rate, bursty, asynchronous message senders from temporarily overwhelming slow receivers. By serving as a flow rate smoother, the queue gives a receiver App component a finite amount of time to “catch up” with bursts of messages. Without this temporary holding tank, or if the queue is not deep enough to accommodate the worst case burst size, some messages will be “dropped on the floor“. Of course, if the average send rate is greater than the average processing rate in the receiving App, messages will be consistently lost when the queue eventually overflows from the rate mismatch – bummer.

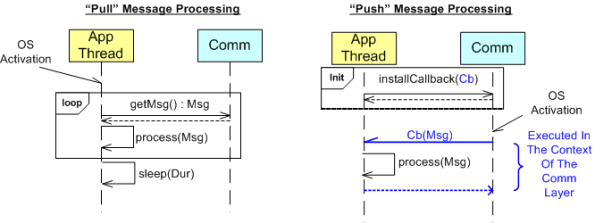

The UML sequence diagram below zeroes in on the interactions between an App component thread of execution and the Comm layer for both the “Push” and “Pull” methods of message retrieval. When the “Pull” approach is implemented, the OS periodically activates the App thread. On each activation, the App sucks the Comm layer queue dry; performing application-specific processing on each message as it is pulled out of the Comm layer. A nice feature of the “Pull” method, which the “Push” method doesn’t provide, is that the polling rate can be tuned via the sleep “Dur(ation)” parameter. For low data rate message streams, “Dur” can be set to a long time between polls so that the CPU can be voluntarily yielded for other processing tasks. Of course, the trade-off for long poll times is increased latency – the time from when a message becomes available within the Comm layer to the time it is actually pulled into the App layer.

In the”Push” method of message retrieval, during runtime the Comm layer activates the App thread by invoking the previously installed App callback function, Cb(Msg), for each newly received message. Since the App’s process(Msg) method executes in the context of a Comm layer thread, it can bog down the comm subsystem and cause it to miss high rate messages coming in over the wire if it takes too long to execute. On the other hand, the “Push” method can be more responsive (lower latency) than the “Pull” method if the polling “Dur” is set to a long time between polls.

So, which method is “better“? Of course, it depends on what the Application is required to do, but I lean toward the “Pull” Method in high rate streaming sensor applications for these reasons:

- In applications like sensor stream processing that require a lot of number crunching and/or data associations to be performed on each incoming message, the fact that the App-specific processing logic is performed within the context of the App thread in the “Pull” method (instead of the Comm layer) means that the Comm layer performance is not dependent on the App-specific performance. The layers are more loosely coupled.

- The “Pull” approach is simpler to code up.

- The “Pull” approach is tunable via the sleep “Dur” parameter.

How about you? Which do you prefer, and why?

Dissin’ Boost

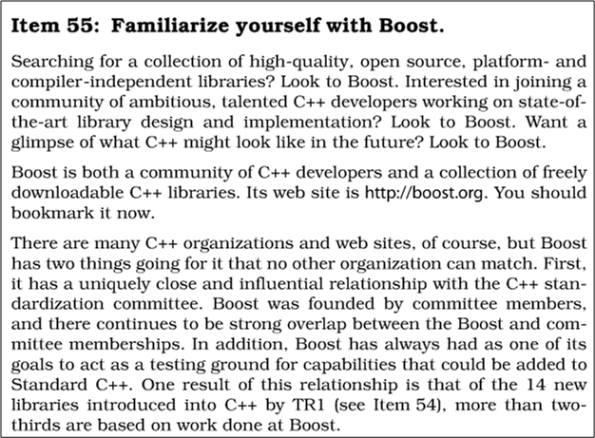

To support my yearning for learning, I continuously scan and probe all kinds of forums, books, articles, and blogs for deeper insights into, and mastery of, the C++ programming language. In all my external travels, I’ve never come across anyone in the C++ community that has ever trashed the boost libraries. Au contraire, every single reference that I’ve ever seen has praised boost as a world class open source organization that produces world class, highly efficient code for reuse. Here’s just one example of praise from Scott Meyers‘ classic “Effective C++: 55 Specific Ways To Improve Your Programs And Designs“:

Notice that in the first paragraph, I wrote the word external in bold. Internal, which means “at work” where politics is always involved, is another story. Sooooo, let me tell you one.

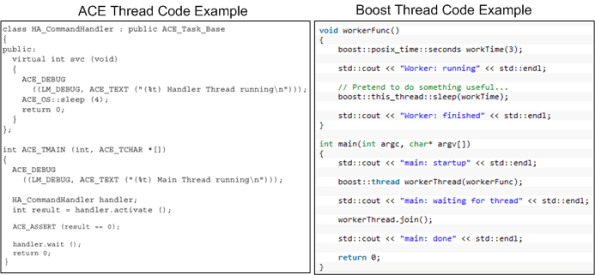

Years ago, a smart, highly productive, and dedicated developer who I respect started building a distributed “framework” on top of the ACE library set (not as a formal project – on his own time). There’s no doubt that ACE is a very powerful, robust, and battle-tested platform. However, because it was designed back in the days when C++ compiler technology was immature, I think its API is, let’s say “frumpy“, unconventional, and (dare I say) “obsolete” compared to the more modern Boost APIs. Boost-based code looks like natural C++, whereas ACE-based code looks like a macro derived dialect. In the functional areas where ACE and Boost overlap (which IMHO is large), I think that Boost is head over heels easier to learn and use. But that’s just me, and if you’re a long-time ACE advocate you might be mad at me now because you’re blinded by your bias – just like I am blinded by mine.

Fast forward to the present moment after other groups in the company (essentially, having no choice) have built their one-off applications on top of the homegrown, ACE-based, framework. Of course, you know through experience that “homegrown” means:

- the framework API is poorly documented,

- the build process is poorly documented,

- forks have been spawned because of the lack of a formally funded maintenance team and change process,

- the boundary between user and library code is jagged/blurry,

- example code tutorials are non-existent.

- it is most likely to cost less to build your own, lighter weight framework from scratch than to scale the learning curve by studying tens of 1,000s of lines of framework code to separate the API from the implementation and figure out how to use the dang thing.

Despite the time-proven assertions above, the framework author and a couple of “other” promoters who’ve never even tried to extract/build the framework, let alone learn the basics of the “jagged” API and write a simple sample distributed app on top of it, have naturally auto-assumed that reusing the framework in all new projects will save the company time and money.

Along comes a new project in which the evil Bulldozer00 (BD00) is a team member. Being suspicious of the internal marketing hype, and in response to the “indirect pressure and unspoken coercion” to architect, design, and build on top of the one and only homegrown framework, BD00 investigates the “product“. After spending the better part of a week browsing the code base and frustratingly trying to build the framework so that he could write a little distributed test app, BD00 gives up and concludes that the bulleted list definition above has withstood the test of time….. yet again.

When other members of BD00’s team, including one member who directly used the ACE-based framework on a previous project, investigate the qualities of the framework, they come to the same conclusion: thank you, but for our project, we’ll roll our own lighter weight, more targeted, and more “modern” framework on top of Boost. But of course, BD00 is the only politically incorrect and blatantly over-the-top rejector of the intended one-size-fits-all framework. In predictable cause-effect fashion, the homegrown framework advocates dig their heels in against BD00’s technical criticisms and step up their “cost and time savings” rhetoric – including a diss against Boost in their internal marketing materials. Hmmm.

Since application infrastructure is not a company core competence and certainly not a revenue generator, BD00 “cleverly” suggests releasing the framework into the open source community to test its viability and ability to attract an external following. The suggestion falls on deaf ears – of course. Even though BD00 (who’s deliberately evil foot-in-mouth approach to conflict-handling almost always triggers the classic auto-reject response in others) made the helpful(?) suggestion, the odds are that it would be ignored regardless of who had made it. Based on your personal experience, do you agree?

Note 1: If interested, check out this ACE vs Boost vs Poco libraries discussion on StackOverflow.com.

Note2: There’s a whole ‘nother sensitive socio-technical dimension to this story that may trigger yet another blog post in the future. If you’ve followed this blog, I’ve hinted about this bone of contention in several past posts. The diagram below gives a further hint as to its nature.

Where’s The Bug?

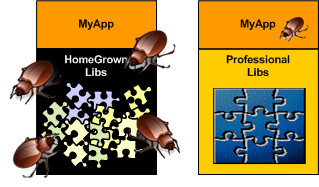

When you’re designing and happily coding away in the application layer and you discover a nasty bug, don’t you hate it when you find that the chances are high that the critter may not be hiding in your code – it may be in one of the cavernous homegrown libraries that prop your junk up. I hate when that happens because it forces me to do a mental context switch from the value-added application layer down into the support layer(s) – sometimes for days on end (ka-ching, ka-ching; tic-toc, tic-toc).

Compared to writing code on top of an undocumented, wobbly, homegrown BBoM, writing code on top of a professionally built infrastructure with great tutorials and API artifacts is a joy. When you do find a bug in the code base, the chances are astronomically high that it will be in your code and not down in the infrastructure. Unsurprisingly, preferring the professional over the amateur saves time, money, and frustration.

For the same strange reason (hint: ego) that command and control hierarchy is accepted without question as the “it just has to be this way” way of structuring a company for “success“, software developers love to cobble together their own BBoM middleware infrastructure. To reinforce this dysfunctional approach, managers are loathe to spend money on battle-tested middleware built by world class experts in the field. Yes, these are the same managers who’ll spend $100K on a logic analyzer that gets used twice a year by the two hardware designer dudes that cohabitate with the hundreds of software weenies and elite BMs inside the borg. C’est la vie.

Data-Centric, Transaction-Centric

The market (ka-ching!, ka-ching!) for transaction-centric enterprise IT (Information Technology) systems dwarfs that for real-time, data-centric sensor control systems. Because of this market disparity, the lion’s share of investment dollars is naturally and rightfully allocated to creating new software technologies that facilitate the efficient development of behemoth enterprise IT systems.

I work in an industry that develops and sells distributed, real-time, data-centric sensor systems and it frustrates me to no end when people don’t “see” (or are ignorant of) the difference between the domains. With innocence, and unfortunately, ignorance embedded in their psyche, these people try to jam-fit risky, transaction-centric technologies into data-centric sensor system designs. By risky, I mean an elevated chance of failing to meet scalability, real-time throughput and latency requirements that are much more stringent for data-centric systems than they are for most transaction-centric systems. Attributes like scalability, latency, capacity, and throughput are usually only measurable after a large investment has been made in the system development. To add salt to the wound, re-architecting a system after the mistake is discovered and ( more importantly) acknowledged, delays release and consumes resources by amounts that can seriously damage a company’s long term viability.

As an example, consider the CORBA and DDS OMG standard middleware technologies. CORBA was initially designed (by committee) from scratch to accommodate the development of big, distributed, client-server, transaction-centric systems. Thus, minimizing latency and maximizing throughout were not the major design drivers in its definition and development. DDS was designed to accommodate the development of big, distributed, publisher-subscriber, data-centric systems. It was not designed by a committee of competing vendors each eager to throw their pet features into a fragmented, overly complex quagmire. DDS was derived from the merger of two fielded and proven implementations, one by Thales (distributed naval shipboard sensor control systems) and the other by RTI (distributed robotic sensor and control systems). In contrast, as a well meaning attempt to be all things to all people, publish-subscribe capability was tacked on to the CORBA beast after the fact. Meanwhile, DDS has remained lean and mean. Because of the architecture busting risk for the types of applications DDS targets, no client-server capability has been back-fitted into its design.

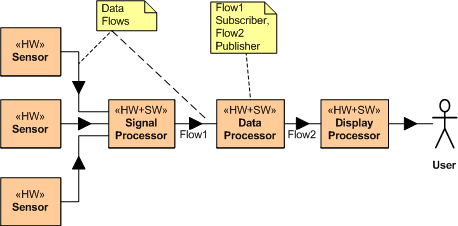

Consider the example distributed, data-centric, sensor system application layer design below. If this application sits on top of a DDS middleware layer, there is no “intrusion” of a (single point of failure) CORBA broker into the application layer. Each application layer system component simply boots up, subscribes to the topic (message) streams it needs, starts crunching them with it’s app-specific algorithms, and publishes its own topic instances (messages) to the other system components that have subscribed to the topic.

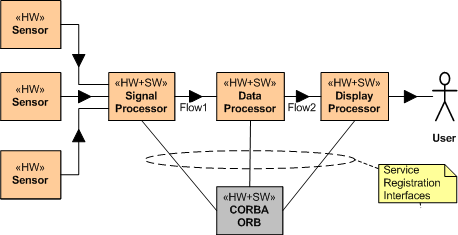

Now consider a CORBA broker-based instantiation of this application example (refer to the figure below). Because of the CORBA requirement for each system component to register with an all knowing centralized ORB (Object Request Broker) authority, CORBA “leaks” into the application layer design. After registration, and after each service has found (via post-registration ORB lookup) the other services it needs to subscribe to, the ORB can disappear from the application layer – until a crash occurs and the system gets hosed. DDS avoids leakage into the value-added application layer by avoiding the centralized broker concept and providing for fully distributed, under-the-covers, “auto-discovery” of publishers and subscribers. No DDS application process has to interact with a registrar at startup to find other components – it only has to tell DDS which topics it will publish and which topics it needs to subscribe to. Each CORBA component has to know about the topics it needs and the services it needs to subscribe to.

The more a system is required to accommodate future growth, the more inapplicable a centralized ORB-based architecture is. Relative to a fully distributed coordination and auto-discovery mechanism that’s transparent to each application component, attempting to jam fit a single, centralized coordinator into a large scale distributed system so that the components can find and interact with each other reduces robustness, fault tolerance, and increases long term maintenance costs.