Archive

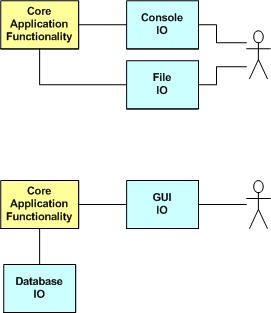

Console And Files First, GUI And Database Last

Adding database IO and GUI IO to a program ratchets up its complexity, and hence development time, immensely. In “Programming: Principles and Practice Using C++”, Bjarne Stroustrup recommends designing and writing your program to do IO over the console and filesystem first, and then adding GUI IO and database IO later. And only if you have to.

Eliminating, or at least delaying, GUI and database IO forces you to focus on getting the internal application design right early. It also helps to keep you from tangling the GUI IO and database IO code with your application code and creating an unmaintainable ball of mud. Thirdly, the practice also makes testing much simpler than trying to write and debug the whole quagmire at once. Good advice?
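To make the idea concrete, here is a minimal sketch of what "console and files first" can look like in C++. The function `summarize_lines` and its test are hypothetical names invented for this illustration, not code from Stroustrup's book. The assumption is that the application core talks only to `std::istream` and `std::ostream`, so the console, a file, or an in-memory string can all be plugged in without touching the core.

```cpp
#include <cassert>
#include <istream>
#include <ostream>
#include <sstream>
#include <string>

// Hypothetical application core: counts the non-empty lines read from `in`
// and writes a one-line summary to `out`. It knows nothing about consoles,
// files, GUIs, or databases; it only sees abstract streams.
int summarize_lines(std::istream& in, std::ostream& out)
{
    int count = 0;
    std::string line;
    while (std::getline(in, line)) {
        if (!line.empty()) {
            ++count;
        }
    }
    out << "non-empty lines: " << count << '\n';
    return count;
}

// Because the core only sees streams, a test can feed it an in-memory
// string instead of a console, a GUI, or a database.
void test_summarize_lines()
{
    std::istringstream in("alpha\n\nbeta\n");
    std::ostringstream out;
    assert(summarize_lines(in, out) == 2);
    assert(out.str() == "non-empty lines: 2\n");
}
```

Calling `summarize_lines(std::cin, std::cout)` gives you the console version, and passing a `std::ifstream` gives you the file version. If a GUI or database front end ever becomes unavoidable, it can be added as another caller of the same core.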

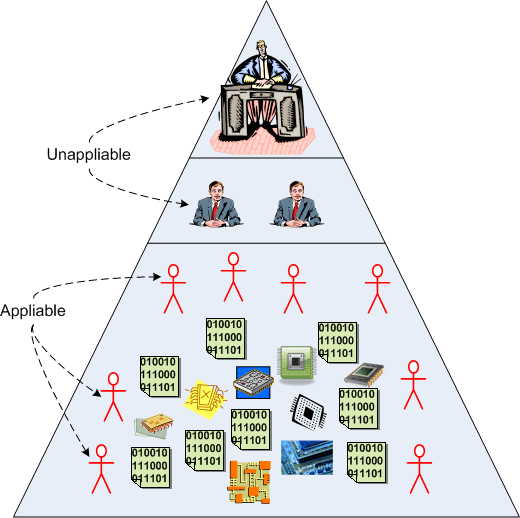

Appliability

In the “good ole’ days”, products, along with the development and production processes to create them, were much simpler. Modern day knowledge-intensive products require both deep and broad know-what and know-how to be successful in the marketplace. Accordingly, in the “good ole’ days” many front line and second tier managers were skilled enough to man the production lines when workers went on strike. In knowledge-intensive industries like software development, that’s no longer true – even if the manager was an engineer just prior to promotion. It’s especially true in today’s fast moving environment where skills become obsolete as soon as they’re mastered. D’oh!

Another way of expressing the idea above is in the corpo lingo of “appliability”. For the most part, managers don’t have the skills to be appliable anymore. They’re a pure overhead expense to the orgs they work for. Thus, unless they’re PHORs, they’re a drain on profits. The next time a non-PHOR manager tells you that “you’re expensive to employ” retort back “at least I’m appliable” – if you dare.

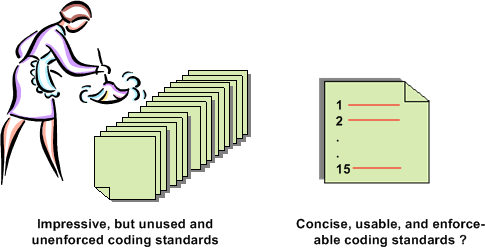

Project-Specific Coding Guidelines

I’m about to embark on the development of a distributed, scalable, data-centric, real-time, sensor system. Since some technical risks are at this point disturbingly high, especially meeting CPU loading and latency requirements, a team of three of us (two software engineers and one system engineer) are going to prototype several CPU-intensive system functions.

Assuming that our prototyping effort proves that our proposed application layer functional architecture is feasible, a much larger team will be applied to the effort in an attempt to meet schedule. In order to promote consistency across the code base, facilitate on-boarding of new team members, and lower long term maintenance costs, I’m proposing the following 15 design and C++ coding guidelines:

- Minimize macro usage.

- Use STL containers instead of homegrown ones.

- No unessential 3rd party libraries, with the exception of Boost.

- Strive for a clear and intuitive namespace-to-directory mapping.

- Use a consistent, uniform, communication scheme between application processes. Deviations must be justified.

- Use the same threading library (Boost) when multi-threading is needed within a process.

- Design a little, code a little, unit test a little, integrate a little, document a little. Repeat.

- Avoid casting. When casting is unavoidable, use the C++ cast operators so that the cast hacks stick out like a sore thumb.

- No naked pointers when heap memory is required. Use C++ auto and Boost smart pointers.

- Strive for pure layering. Document all non-adjacent layer interface breaches and wrap all forays into OS-specific functionality.

- Strive for < 100 lines of code per member function.

- Strive for < 4 levels of nesting in if-then-else code sections, and < 4 levels of depth in inheritance trees.

- Minimize “using” directives; liberally employ “using” declarations to keep verbosity low.

- Run the code through a static code analyzer frequently.

- Strive for zero compiler warnings.

Notice that the list is short, (maybe) memorize-able, and (maybe) enforceable. It’s an attempt to avoid imposing a 100 page tome of rules that nobody will read, let alone use or enforce. What do you think? What is YOUR list of 15?
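For what it's worth, here is a small sketch of how a few of the guidelines (STL containers, no naked pointers, C++ cast operators, and “using” declarations) might look together in code. Everything in it is hypothetical: `Sample`, `SampleList`, `add_sample`, and `rounded_mean` are made-up names, and it assumes a C++11 or later compiler, where `std::unique_ptr` stands in for the Boost smart pointers named in the list.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <vector>

// "using" declarations (not a "using namespace" directive) keep names short
// without pulling an entire namespace into scope.
using std::unique_ptr;
using std::vector;

// Hypothetical sensor sample type, for illustration only.
struct Sample {
    double value;
    explicit Sample(double v) : value(v) {}
};

// An STL container of smart pointers: no naked owning pointers and no
// manual delete anywhere.
using SampleList = vector<unique_ptr<Sample>>;

void add_sample(SampleList& samples, double value)
{
    samples.push_back(unique_ptr<Sample>(new Sample(value)));
}

// Mean of the samples, rounded to the nearest int (valid for non-negative
// means). The unavoidable conversions are spelled with static_cast so they
// are easy to find with a text search.
int rounded_mean(const SampleList& samples)
{
    assert(!samples.empty());
    double sum = 0.0;
    for (const auto& sample : samples) {
        sum += sample->value;
    }
    const double mean = sum / static_cast<double>(samples.size());
    return static_cast<int>(mean + 0.5);
}
```

A `grep static_cast` over the code base then turns up every place where a cast was judged unavoidable, which is the whole point of making the hacks stick out.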

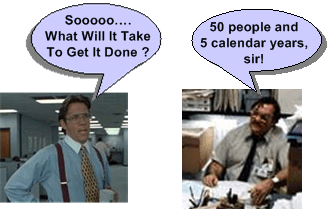

Dude, Read It The Freakin’ First Time

Recently, I was asked by Joe Manager to estimate the amount of resources (people and time) that I thought would be consumed in the development of a large, computationally-intensive, software-centric system. I dutifully did what was asked of me and posted a coarse, first cut estimate on the company wiki for all to scrutinize. I also provided a link to the page to Joe Manager. Unsurprisingly (to me at least), at the next “planning” meeting I was asked again by Joe, on-the-spot and in real-time, what it would take to do the job if I were to lead the project. Since my pre-meeting intuition told me to bring a hard copy of my estimate to the meeting, I presented it to Mr. Manager and gently reminded him that I had forwarded it to him earlier when he asked for it the (freakin’) first time.

Apparently, besides being forgetful, poor Joe wasn’t told of the not-so-secret policy that I’m not allowed to lead anyone, let alone a team on a large and costly software development project that could be pretty important to the company’s future. Plus, since Joe’s last name is “Manager”, isn’t he supposed to at least consider planning and leading the effort his own freakin’ self? Or is he just envisioning someone else struggling to get it done while he periodically samples status, rides the schedule, and watches from the grassy knoll?

In a bacon and eggs breakfast, the chicken is involved, but the pig is committed – Ken Schwaber

Iteration Frequency

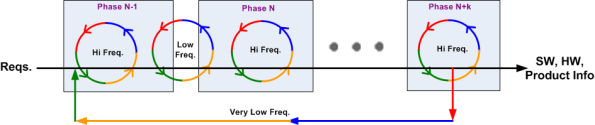

Obviously, even the most iterative agile development process marches forward in time. Thus, at least to a small extent, every process exhibits some properties of the classic and much maligned waterfall metaphor. On big projects, the schedule is necessarily partitioned into sequential time phases. The figure below (Reqs. = requirements, Freq. = frequency, HW = hardware, SW = software) attempts to model both forward progress and iteration as a function of time.

If the phases are designed to be internally cohesive and externally loosely coupled, and the project is managed correctly, the frequency of iteration triggered by the natural process of human learning and fixing mistakes is:

- high within a given project phase

- low between adjacent project phases

- very low between non-adjacent project phases

Of course, if managed stupidly by explicitly or implicitly “disallowing” any iterative learning loops in order to meet equally stupid and “aggressive” schedules handed down from the heavens, errors and mistakes will accumulate and weave themselves undetected into the product fabric – until the customer starts using the contraption. D’oh!

The Loop Of Woe

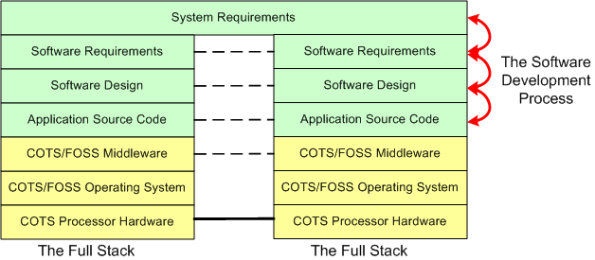

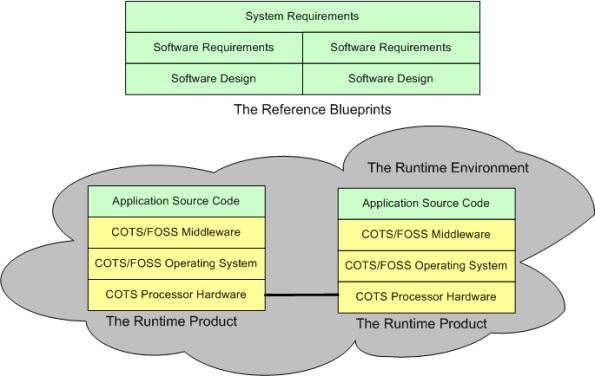

When a “side view” of a distributed software architecture is communicated, it’s sometimes presented with a specific instantiation of something like this four layer drawing, where COTS = Commercial Off The Shelf and FOSS = Free Open Source Software:

I think that neglecting the artifacts that capture the thinking and rationale in the more abstract higher layers of the stack is a recipe for high downstream maintenance costs, competitive disadvantage, and all around stakeholder damage. For “big” systems, trying to find/fix bugs, or determining where new feature source code must be inserted among 100s of thousands of lines of code, is a huge cost sink when a coherent full stack of artifacts is not available to steer the hunt. The artifacts don’t have to be high ceremony, heavyweight boat anchors; they just have to be useful. Simple, but not simplistic.

For safety-critical systems, besides being a boon to maintenance, another increasingly important reason for treating the upper layers with respect is certification. All certification agencies require an auditable and scrutably connected path from requirements down through the source code. The classic end run around the certification obstacle when the content of the upper layers is non-existent or resembles swiss cheese is to get the system classified as “advisory”.

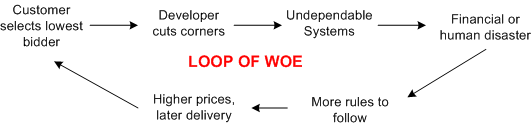

Frenetic, clock-watching managers and illiterate software developers are the obvious culprits of upper layer neglect but, ironically, the biggest contributors to undependable and uncertifiable systems are customers themselves. By consistently selecting the lowest bidder during acquisition, customers unconsciously encourage corner-cutting and apathy towards safety.

Got any ideas for breaking the loop of woe? I wish I did, but I don’t.

Background Daemons

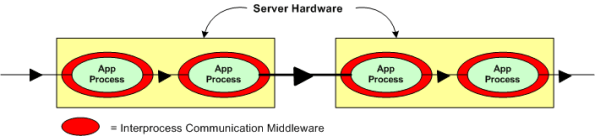

Assume that you have to build a distributed real-time system where your continuously communicating application components must run 24×7 on multiple processor nodes netted together over a local area network. In order to save development time and shield your programmers from the arcane coding details of setting up and monitoring many inter-component communication channels, you decide to investigate pre-written communication packages for inclusion into your product. After all, why would you want your programmers wasting company dollars developing non-application layer software that experts with decades of battle-hardened experience have already created?

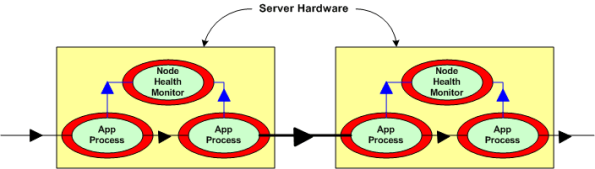

Now, assume that the figure below represents a two node portion of your many-node product where a distributed architecture middleware package has been linked (statically or dynamically) into each of your application components. By distributed architecture, I mean that the middleware doesn’t require any single point-of-failure daemons running in the background on each node.

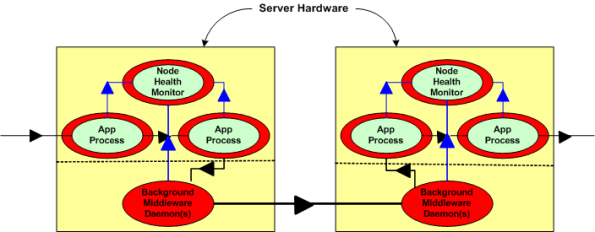

Next, assume that to increase reliability, your system design requires an application layer health monitor component running on each node as in the figure below. Since there are no daemons in the middleware architecture that can crash a node even when all the application components on that node are running flawlessly, the overall system architecture is more reliable than a daemon-based one; dontcha think? In both distributed and daemon-based architectures, a single application process crash may or may not bring down the system; the effect of failure is application-specific and not related to the middleware architecture.

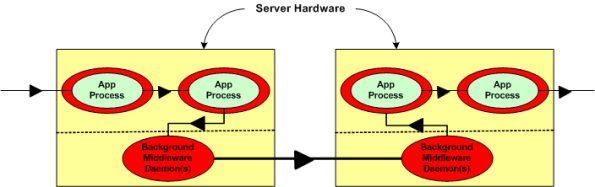

The two figures below represent a daemon-based alternative to the truly distributed design previously discussed. Note the added latency in the communication path between nodes introduced by the required insertion of two daemons between the application layer communication endpoints. Also note in the second figure that each “Node Health Monitor” now has to include “daemon aware” functionality that monitors daemon state in addition to the co-resident application components. All other things being equal (which they rarely are), which architecture would you choose for a system with high availability and low latency requirements? Can you see any benefits of choosing the daemon-based middleware package over a truly distributed alternative?
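One way to put rough numbers on that question is a back-of-the-envelope model, sketched below. It rests on loud assumptions that are mine and not measurements of any real middleware: every component on the path between two application endpoints must be up for a message to get through, failures are independent, and latency is simply the sum of the per-hop latencies. The function names are hypothetical.

```cpp
#include <cassert>
#include <vector>

// Availability of a chain of components that must ALL be up for a message
// to get through, assuming independent failures (a simplifying assumption).
// Each entry is the probability, between 0 and 1, that one component is up.
double series_availability(const std::vector<double>& parts)
{
    double result = 1.0;
    for (double availability : parts) {
        assert(availability >= 0.0 && availability <= 1.0);
        result *= availability;
    }
    return result;
}

// End-to-end latency of the same chain, modeled as the sum of the per-hop
// latencies in microseconds.
double path_latency_us(const std::vector<double>& hops_us)
{
    double total = 0.0;
    for (double hop : hops_us) {
        assert(hop >= 0.0);
        total += hop;
    }
    return total;
}
```

Feed it a two-element chain for the truly distributed case (application, application) and a four-element chain for the daemon-based case (application, daemon, daemon, application). With any per-component availability below 1 and any daemon latency above 0, the longer chain comes out less available and slower under this model. How much worse depends entirely on the numbers you plug in, which you would have to measure for your own system.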

The most reliable part in a system is the one that is not there – because it isn’t needed.

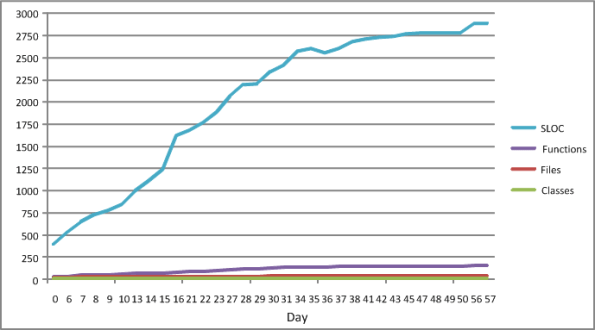

My Velocity

The figure below shows some source code level metrics that I collected on my last C++ programming project. I only collected them because the process was low ceremony, simple, and unobtrusive. I ran the source code tree through an easy to use metrics tool on a daily basis. The plots in the figure show the sequential growth in:

- The number of Source Lines Of Code (SLOC)

- The number of classes

- The number of class methods (functions)

- The number of source code files

So Whoopee. I kept track of metrics during the 60 day construction phase of this project. The question is: “How can a graph like this help me improve my personal software development process?”.

The slope of the SLOC curve, which measured my velocity throughout the duration, doesn’t tell me anything my intuition can’t deduce. For the first 30 days, my velocity was relatively constant as I coded, unit tested, and integrated my way toward the finished program. Whoopee. During the last 30 days, my velocity essentially went to zero as I ran end-to-end system tests (which were designed and documented before the construction phase, BTW) and refactored my way to the end game. Whoopee. Did I need a plot to tell me this?
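For the record, "velocity" here is nothing fancier than the slope between two points on the SLOC curve. A minimal sketch, assuming one SLOC sample per day stored in a vector (the function name and the data layout are hypothetical, not the output format of the metrics tool I used):

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Average velocity in SLOC per day between two sample days, where
// daily_sloc[i] is the total SLOC count recorded on day i.
double average_velocity(const std::vector<long>& daily_sloc,
                        std::size_t first_day,
                        std::size_t last_day)
{
    assert(first_day < last_day && last_day < daily_sloc.size());
    const long growth = daily_sloc[last_day] - daily_sloc[first_day];
    return static_cast<double>(growth) /
           static_cast<double>(last_day - first_day);
}
```

Run over the first half of a project and then over the second half, a function like this would reproduce the two regimes described above: a roughly constant positive slope, followed by a slope near zero.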

I’ll assert that the pattern in the plot will be unspectacularly similar for each project I undertake in the future. Depending on the nature/complexity/size of the application functionality that will need to be implemented, only the “tilt” and the time length will be different. Nevertheless, I can foresee a historical collection of these graphs being used to produce better future cost estimates, but not being used much to help me improve my personal “process”.

What’s not represented in the graph is a metric that captures the first 60 days of problem analysis and high level design effort that I did during the front end. OMG! Did I use the dreaded waterfall methodology? Shame on me.

iSpeed OPOS

A couple of years ago, I designed a “big” system development process blandly called MPDP2 = Modified Product Development Process version 2. It’s version 2 because I screwed up version 1 badly. Privately, I named it iSpeed to signify both quality (the Apple-esque “i”) and speed but didn’t promote it as such because it didn’t sound nerdy enough. Plus, I was too chicken to introduce the moniker into a conservative engineering culture that innocently but surely suppresses individuality.

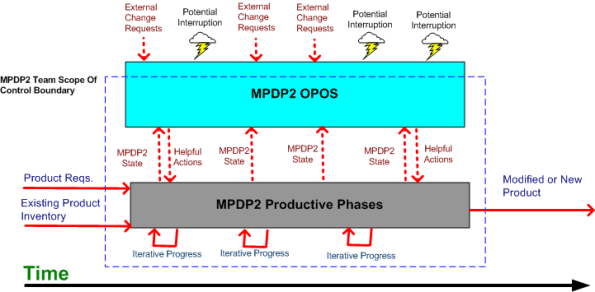

One of the MPDP2 activities, which stretches across and runs in parallel to the time sequenced development phases, is called OPOS = Ongoing Planning, Ongoing Steering. The figure below shows the OPOS activity gaz-intaz and gaz-outaz.

In the iSpeed process, the top priority of the project leader (no self-serving BMs allowed) is to buffer and shield the engineering team from external demands and distractions. Other lower priority OPOS tasks are to periodically “sample the value stream”, assess the project state, steer progress, and provide helpful actions to the multi-disciplined product development team. What do you think? Good, bad, fugly? Missing something?

The Rise Of The “ilities”

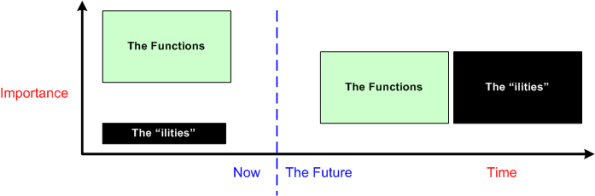

The title of this post should have been “The Rise Of Non-Functional Requirements”, but that sounds so much more gauche than the chosen title.

As software-centric systems get larger and necessarily more complex, they take commensurately more time to develop and build. Making poor up front architectural decisions on how to satisfy the cross-cutting non-functional requirements (scalability, distribute-ability, response-ability (latency), availability, usability, maintainability, evolvability, portability, secure-ability, etc.) imposed on the system is way more costly downstream than making bad up front decisions regarding localized, domain-specific functionality. To exacerbate the problem, the unglamorous “ilities” have been traditionally neglected and they’re typically hard to quantify and measure until the system is almost completely built. Adding fuel to the fire, many of the “ilities” conflict with each other (e.g. latency vs. maintainability, usability vs. security). Optimizing one often marginalizes one or more others.

When a failure to meet one or more non-functional requirements is discovered, correcting the mistake(s) can, at best, consume a lot of time and money, and at worst, cause the project to crash and burn (the money’s gone, the time’s gone, and the damn thang don’t work). That’s because the mechanisms and structures used to meet the “ilities” requirements cut globally across the entire system and they’re pervasively woven into the fabric of the product.

If you’re a software engineer trying to grow past the coding and design patterns phases of your profession, educating yourself on the techniques, methods, and COTS technologies (stay away from homegrown crap – including your own) that effectively tackle the highest priority “ilities” in your product domain and industry should be high on your list of priorities.

Because of the ubiquitous propensity of managers to obsess on short term results and avoid changing their mindsets while simultaneously calling for everyone else to change theirs, it’s highly likely that your employer doesn’t understand and appreciate the far reaching effects of hosing up the “ilities” during the front end design effort (the new age agile crowd doesn’t help very much here either). It’s equally likely that your employer ain’t gonna train you to learn how to confront the growing “ilities” menace.