Archive

Dissin’ Boost

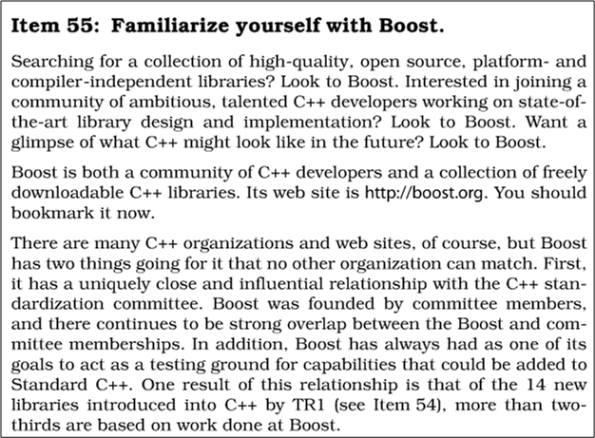

To support my yearning for learning, I continuously scan and probe all kinds of forums, books, articles, and blogs for deeper insights into, and mastery of, the C++ programming language. In all my **external** travels, I’ve never come across anyone in the C++ community who has ever trashed the Boost libraries. Au contraire, every single reference that I’ve ever seen has praised Boost as a world class open source organization that produces world class, highly efficient code for reuse. Here’s just one example of praise from Scott Meyers’ classic “Effective C++: 55 Specific Ways To Improve Your Programs And Designs”:

Notice that in the first paragraph, I wrote the word external in bold. Internal, which means “at work” where politics is always involved, is another story. Sooooo, let me tell you one.

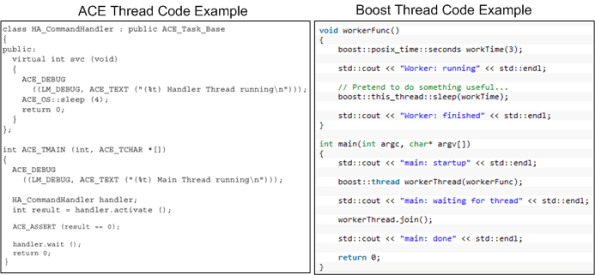

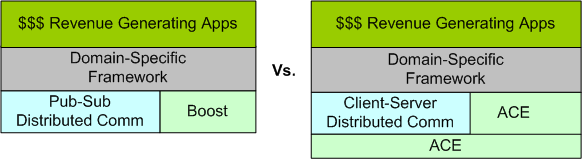

Years ago, a smart, highly productive, and dedicated developer who I respect started building a distributed “framework” on top of the ACE library set (not as a formal project – on his own time). There’s no doubt that ACE is a very powerful, robust, and battle-tested platform. However, because it was designed back in the days when C++ compiler technology was immature, I think its API is, let’s say, “frumpy”, unconventional, and (dare I say) “obsolete” compared to the more modern Boost APIs. Boost-based code looks like natural C++, whereas ACE-based code looks like a macro-derived dialect. In the functional areas where ACE and Boost overlap (which IMHO is large), I think that Boost is head and shoulders easier to learn and use. But that’s just me, and if you’re a long-time ACE advocate you might be mad at me now because you’re blinded by your bias – just like I am blinded by mine.

Fast forward to the present moment after other groups in the company (essentially, having no choice) have built their one-off applications on top of the homegrown, ACE-based, framework. Of course, you know through experience that “homegrown” means:

- the framework API is poorly documented,

- the build process is poorly documented,

- forks have been spawned because of the lack of a formally funded maintenance team and change process,

- the boundary between user and library code is jagged/blurry,

- example code tutorials are non-existent,

- it is most likely to cost less to build your own, lighter weight framework from scratch than to scale the learning curve by studying tens of 1,000s of lines of framework code to separate the API from the implementation and figure out how to use the dang thing.

Despite the time-proven assertions above, the framework author and a couple of “other” promoters who’ve never even tried to extract/build the framework, let alone learn the basics of the “jagged” API and write a simple sample distributed app on top of it, have naturally auto-assumed that reusing the framework in all new projects will save the company time and money.

Along comes a new project in which the evil Bulldozer00 (BD00) is a team member. Being suspicious of the internal marketing hype, and in response to the “indirect pressure and unspoken coercion” to architect, design, and build on top of the one and only homegrown framework, BD00 investigates the “product“. After spending the better part of a week browsing the code base and frustratingly trying to build the framework so that he could write a little distributed test app, BD00 gives up and concludes that the bulleted list definition above has withstood the test of time….. yet again.

When other members of BD00’s team, including one member who directly used the ACE-based framework on a previous project, investigate the qualities of the framework, they come to the same conclusion: thank you, but for our project, we’ll roll our own lighter weight, more targeted, and more “modern” framework on top of Boost. But of course, BD00 is the only politically incorrect and blatantly over-the-top rejector of the intended one-size-fits-all framework. In predictable cause-effect fashion, the homegrown framework advocates dig their heels in against BD00’s technical criticisms and step up their “cost and time savings” rhetoric – including a diss against Boost in their internal marketing materials. Hmmm.

Since application infrastructure is not a company core competence and certainly not a revenue generator, BD00 “cleverly” suggests releasing the framework into the open source community to test its viability and ability to attract an external following. The suggestion falls on deaf ears – of course. Even though BD00 (whose deliberately evil foot-in-mouth approach to conflict-handling almost always triggers the classic auto-reject response in others) made the helpful(?) suggestion, the odds are that it would have been ignored regardless of who had made it. Based on your personal experience, do you agree?

Note 1: If interested, check out this ACE vs Boost vs Poco libraries discussion on StackOverflow.com.

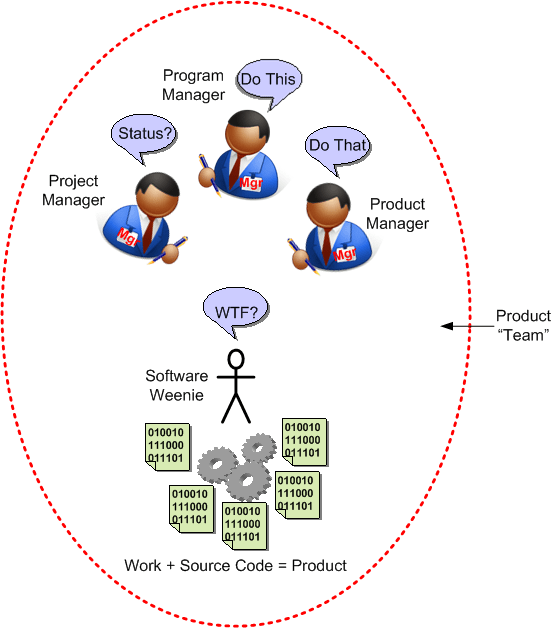

Note 2: There’s a whole ‘nother sensitive socio-technical dimension to this story that may trigger yet another blog post in the future. If you’ve followed this blog, you know I’ve hinted at this bone of contention in several past posts. The diagram below gives a further hint as to its nature.

Product Team

How can a software project have more managers and pseudo-managers “working” on it than developers – you know, those fungible people who write, debug, and test the product code that is the source of the borg’s income? You would think that this comically dysfunctional practice would stick out like a sore thumb and somebody upstairs would put the kibosh on it, no?

Tested And Untested

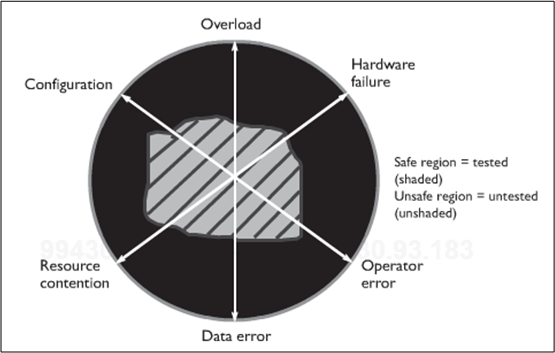

Being the e-klepto that I am, I stole this kool graphic from Humphrey and Over’s book “Leadership, Teamwork, And Trust“:

It represents the tested-to-untested (TU) region ratio of a generic, big software system. Humphrey/Over use it to prove that the ubiquitous, test-centric approach to quality doesn’t work very well and that by focusing on defect prevention via upfront review/inspection before testing, one can dramatically decrease the risk for any given TU ratio. Hard to argue with that, right?

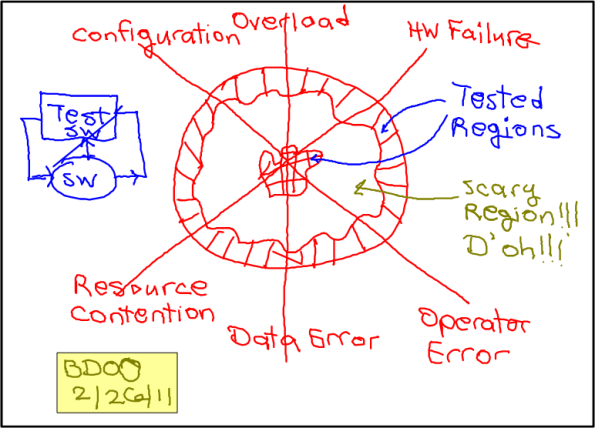

The point I’d like to make, which is different than the one Humphrey/Over focus on in their book (to sell their heavyweight PSP/TSP methodology, of course), is that when a software system is released into the wild, it most likely hasn’t been tested in the wacky configurations and states that can cause massive financial loss and/or personal injury. You know, those “stressful” system states on the edge of chaos where transient input overloads occur, or hardware failures occur, or corrupted data enters the system. I think building the test infrastructure that can duplicate these doomsday scenarios, and then actually testing how the system responds in these rare but possible environments, is far more effective than just adding political dog-and-pony reviews/inspections to one’s approach to quality. Instead of decreasing the risk for a fixed TU ratio, which reviews/inspections can achieve (if (and it’s a big if) they’re done right), it increases the TU ratio itself. However, since system-specific test tools are unglamorous and perceived as unnecessary costs by scrooge managers more concerned about their image than other stakeholders, scarily untested software is foisted upon the populace at an increasing rate. D’oh!
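To make the “corrupted data enters the system” case a bit more concrete, here is a minimal sketch of a fault injector. Everything in it is hypothetical and invented for illustration: the wire format (payload bytes followed by a one-byte additive checksum), the `accept_frame` function standing in for the system under test, and the single-bit-flip fault model. A real system would need a far richer set of faults, and an additive checksum is a weak guard (two flips can cancel each other out), so treat this as the shape of the idea and nothing more.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <random>
#include <vector>

// Hypothetical checksum: the low 8 bits of the sum of the first `count` bytes.
std::uint8_t checksum(const std::vector<std::uint8_t>& bytes, std::size_t count) {
    std::uint8_t sum = 0;
    for (std::size_t i = 0; i < count; ++i) {
        sum = static_cast<std::uint8_t>(sum + bytes[i]);
    }
    return sum;
}

// Builds a frame: the payload followed by its checksum byte.
std::vector<std::uint8_t> make_frame(const std::vector<std::uint8_t>& payload) {
    std::vector<std::uint8_t> frame = payload;
    frame.push_back(checksum(payload, payload.size()));
    return frame;
}

// Stand-in for the system under test: accepts a frame only if it is
// non-empty and its trailing checksum byte matches the payload.
bool accept_frame(const std::vector<std::uint8_t>& frame) {
    if (frame.empty()) return false;
    const std::size_t payload_size = frame.size() - 1;
    return checksum(frame, payload_size) == frame.back();
}

// Fault injector: flips one randomly chosen bit per trial and counts how
// many corrupted frames the system under test wrongly accepts.
std::size_t count_undetected_bit_flips(const std::vector<std::uint8_t>& payload,
                                       std::size_t trials, unsigned seed) {
    const std::vector<std::uint8_t> good = make_frame(payload);
    assert(accept_frame(good));  // sanity check: the clean frame must pass

    std::mt19937 rng(seed);
    std::uniform_int_distribution<std::size_t> pick_byte(0, good.size() - 1);
    std::uniform_int_distribution<int> pick_bit(0, 7);

    std::size_t undetected = 0;
    for (std::size_t t = 0; t < trials; ++t) {
        std::vector<std::uint8_t> bad = good;
        bad[pick_byte(rng)] ^= static_cast<std::uint8_t>(1u << pick_bit(rng));
        if (accept_frame(bad)) ++undetected;
    }
    return undetected;
}
```

Calling `count_undetected_bit_flips` with a fixed seed makes a failing run reproducible. Each fault model added to a harness like this pushes a few more system states from the untested region into the tested one.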

Note: The dorky hand made drawing above is the first one that I created with the $14.99 “Paper Tablet” app for my Livescribe Echo smartpen. “Paper Tablet” turns a notebook page into a surrogate computer tablet. When I run an “ink aware” app like Microsoft Visio, and then physically draw on a notebook page, the output goes directly to the computer app and it shows up on the screen – in addition to being stored in the pen. Thus, as I was drawing this putrid picture with my pen, it was being simultaneously regenerated on a Visio page in real-time (see clip below). Kool, eh? Maybe with some practice……

The Wevo Approach

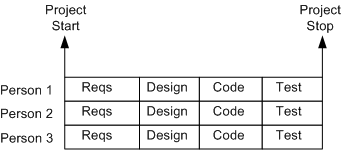

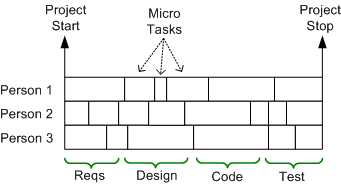

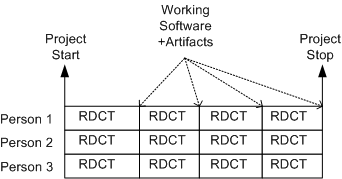

The figure below shows an example of a one-size-fits-all, waterfall schedule template that’s prevalent at many old school software companies. It sure looks nice, squeaky clean, and controllable, but as everyone knows, it’s always wrong. Out of fear or apathy, almost no one speaks out against this “best practice“, but those who do are quickly slapped down by the anointed controllers and meta-controllers of the project.

A more insidious, micro-grained, version of this waterboarding fiasco is shown below. It’s a self-medicating attempt to amplify the illusion of control that’s envisioned to take place throughout the execution of the project. Since schedules are concocted before an architecture or design has been reasonably sketched out and no one can possibly know up front what all the micro tasks are, let alone how long they’ll take (unless the project is to dig ditches), it’s monstrously wrong too. But shush, don’t say a word.

Once a monstrosity like this is baked into a huge Microsoft Project file or company proprietary scheduling document, those who conjured up the camouflage auto-become loath to modify it, even as the situation dynamically changes during the death march. Once the project starts churning, new unforeseen “popup” tasks emerge and some pre-planned micro-tasks become obsolete. These events disconnect the schedule from reality quicker than you can say “WTF?”.

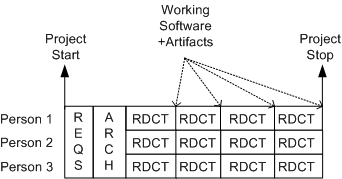

Moving on to a sunnier disposition, the template below shows a more “sane“, but not infallible, method of scheduling. It’s a model of the incremental “evo” strategy that I first stumbled upon from Tom Gilb – a bazillion years before the agile movement rose to prominence. In the evo(lutionary) approach, stable working software becomes visible early with each RDCT cycle and it grows and matures as the messy (it’s always messy) project lurches forward.

The figure below shows a tweaked version of the evo model. It’s a hybrid concoction of the waterboard and evolutionary development approaches – the “wevo”. Some upfront requirements and architecture exploration/definition/specification is performed by the elected team technical leaders before staffing up for the battle against the possibility of building a BBoM. The purpose of the upfront requirements and architecture efforts is to address major cross-cutting concerns and establish contextual boundaries – before letting the dogs loose.

Of course, the wevo approach is not enough. Another necessary but insufficient requirement is that the team leaders dive into the muck with the “coders” after the cross-cutting requirements and architecture definition activities have produced a stable, understandable blueprint. No jargon spewing software “rocketects” or “pure” software project leads allowed – everyone gets dirty – and for the duration.

Where’s The Bug?

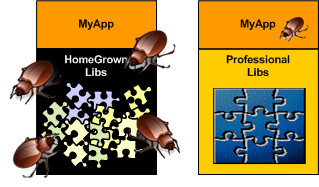

When you’re designing and happily coding away in the application layer and you discover a nasty bug, don’t you hate it when you find that the chances are high that the critter may not be hiding in your code – it may be in one of the cavernous homegrown libraries that prop your junk up? I hate when that happens because it forces me to do a mental context switch from the value-added application layer down into the support layer(s) – sometimes for days on end (ka-ching, ka-ching; tic-toc, tic-toc).

Compared to writing code on top of an undocumented, wobbly, homegrown BBoM, writing code on top of a professionally built infrastructure with great tutorials and API artifacts is a joy. When you do find a bug in the code base, the chances are astronomically high that it will be in your code and not down in the infrastructure. Unsurprisingly, preferring the professional over the amateur saves time, money, and frustration.

For the same strange reason (hint: ego) that command and control hierarchy is accepted without question as the “it just has to be this way” way of structuring a company for “success”, software developers love to cobble together their own BBoM middleware infrastructure. To reinforce this dysfunctional approach, managers are loath to spend money on battle-tested middleware built by world class experts in the field. Yes, these are the same managers who’ll spend $100K on a logic analyzer that gets used twice a year by the two hardware designer dudes that cohabitate with the hundreds of software weenies and elite BMs inside the borg. C’est la vie.

Performance Playground

Since I work on real-time software projects where tens of thousands of data samples per second must be filtered, manipulated, and transformed into higher level decision-support information, performance in the main processing pipeline is important. If the software can’t keep up with the unrelenting onslaught of data streaming in from the “real world“, internal buffers/queues will overflow at best and the system will crash at worst. D’oh!
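As a toy illustration of that failure mode, here is a minimal sketch of a bounded queue that rejects and counts samples once it is full, plus a tiny simulation of a producer outpacing a consumer. The class and function names (`BoundedSampleQueue`, `simulate_overload`) and the drop-the-newest policy are my own assumptions for the example, not anything taken from a real project, and the sketch is single-threaded to keep it short.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>

// Hypothetical bounded FIFO: rejects new samples when full and counts the drops.
class BoundedSampleQueue {
public:
    explicit BoundedSampleQueue(std::size_t capacity) : capacity_(capacity) {
        assert(capacity > 0);
    }

    // Returns false (and records a drop) if the queue is already full.
    bool push(double sample) {
        if (samples_.size() >= capacity_) {
            ++dropped_;
            return false;
        }
        samples_.push_back(sample);
        return true;
    }

    // Moves the oldest sample into `out`; returns false if the queue is empty.
    bool pop(double& out) {
        if (samples_.empty()) return false;
        out = samples_.front();
        samples_.pop_front();
        return true;
    }

    std::size_t size() const { return samples_.size(); }
    std::size_t dropped() const { return dropped_; }

private:
    std::size_t capacity_;
    std::size_t dropped_ = 0;
    std::deque<double> samples_;
};

// Simulates `ticks` time steps. In each step `arrivals_per_tick` samples
// arrive and at most `serviced_per_tick` are consumed. Returns the number
// of samples dropped because the queue was full.
std::size_t simulate_overload(std::size_t capacity,
                              std::size_t arrivals_per_tick,
                              std::size_t serviced_per_tick,
                              std::size_t ticks) {
    BoundedSampleQueue queue(capacity);
    for (std::size_t t = 0; t < ticks; ++t) {
        for (std::size_t a = 0; a < arrivals_per_tick; ++a) {
            queue.push(static_cast<double>(a));
        }
        double sample = 0.0;
        for (std::size_t s = 0; s < serviced_per_tick && queue.pop(sample); ++s) {
            // A real pipeline would filter/transform `sample` here.
        }
    }
    return queue.dropped();
}
```

When the service rate matches the arrival rate the drop count stays at zero. Shave the service rate even slightly and the queue fills, after which every tick loses data no matter how big the buffer is. A bigger buffer only buys time.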

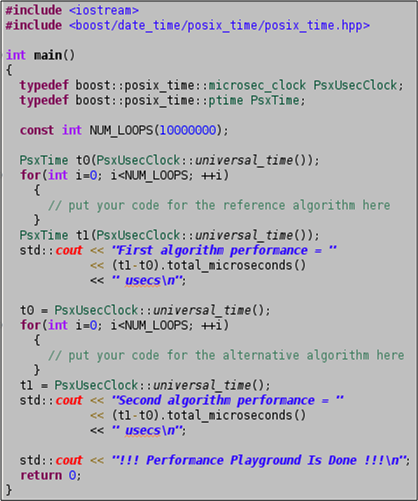

Because of the elevated importance of efficiency in real-time systems, I always keep a simple (one source code file) project named “performance_playground” open in my Eclipse IDE for algorithm/idiom/pattern prototyping and performance measurement. I use it to measure and optimize the performance of “chunks” of critical logic and to pit two or more candidates against each other in performance death matches. For each experiment I “branch” off of the project trunk and then I tag and commit the instantiation to archive the results.

The source code for the performance_playground project is shown below. The program’s sole external dependency is on the boost.date_time library for its platform-independent timestamping. Surely, you have the boost library set installed on all your development platforms, right?
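The original listing is not reproduced in this archive, so what follows is only a minimal sketch of what a one-file playground of this kind might look like, not the actual project code. Two loud assumptions: it swaps boost.date_time for `std::chrono::steady_clock` so that it builds with nothing but the standard library, and the helper names (`average_micros`, `run_death_match`) and the two summation candidates are invented purely for illustration.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <numeric>
#include <vector>

// Hypothetical helper: runs `work` `iterations` times and returns the
// average wall-clock time per iteration in microseconds.
template <typename Work>
double average_micros(Work work, std::size_t iterations) {
    assert(iterations > 0);
    using clock = std::chrono::steady_clock;
    const auto start = clock::now();
    for (std::size_t i = 0; i < iterations; ++i) {
        work();
    }
    const std::chrono::duration<double, std::micro> elapsed = clock::now() - start;
    return elapsed.count() / static_cast<double>(iterations);
}

// Candidate A: sum the samples with a hand-written loop.
long long sum_loop(const std::vector<int>& samples) {
    long long total = 0;
    for (int s : samples) total += s;
    return total;
}

// Candidate B: sum the samples with std::accumulate.
long long sum_accumulate(const std::vector<int>& samples) {
    return std::accumulate(samples.begin(), samples.end(), 0LL);
}

struct MatchResult {
    double loop_us;        // average microseconds per call, candidate A
    double accumulate_us;  // average microseconds per call, candidate B
};

// Pits the two candidates against each other on the same input.
MatchResult run_death_match(std::size_t sample_count, std::size_t iterations) {
    std::vector<int> samples(sample_count);
    std::iota(samples.begin(), samples.end(), 0);

    volatile long long sink = 0;  // keeps the optimizer from discarding the work
    MatchResult result{};
    result.loop_us = average_micros([&] { sink = sum_loop(samples); }, iterations);
    result.accumulate_us = average_micros([&] { sink = sum_accumulate(samples); }, iterations);
    (void)sink;
    return result;
}
```

Calling `run_death_match(100000, 1000)` from `main` and printing both fields gives a rough head-to-head number. The timings vary by machine, compiler, and optimization level, so the only meaningful comparison is between candidates built and run under the same conditions.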

How about you? Do you have something similar? Do you assume that all performance testing and algorithm vetting falls into the dreaded, time-wasting, “premature optimization” anti-pattern?

Avoiding The Big Ball Of Mud

Like other industries, the software industry is rife with funny and quirky language terms. One of my favorites is “Big Ball of Mud”, or BBoM. A BBoM is a mess of a software system that starts off soft and malleable but turns brittle and unstable over time. Inevitably, it hardens like a ball of mud baking in the sun, ready to crumble and wreak havoc on the stakeholder community when disturbed. D’oh!

“And they looked upon the SW and saw that it was good. But they just had to add one more feature…”

Joseph Yoder, one of the co-creators of “BBoM” along with Brian Foote, recently gave a talk titled “Big Balls of Mud in Agile Development: Can We Avoid Them?“. Out of curiosity and the desire to learn more, I watched a video of the talk at InfoQ. In his talk, Mr. Yoder listed these agile tenets as “possible” BBoM promoters:

- Lack of upfront design (i.e. no BDUF)

- Embrace late changes to requirements

- Continuously evolving architecture

- Piecemeal growth

- Focus on (agile) process instead of architecture

- Working code is the one true measure of success

For big software systems, steadfastly adhering to these process principles can hatch a BBoM just as skillfully as following a sequential and prescriptive waterfall process. It’ll just get you to the state of poverty that always accompanies a BBoM much quicker.

Unlike application layer code, infrastructure code should not be expected to change often. Even small architectural changes can have far reaching negative effects on all the value-added application code that relies on the structure and general functionality provided under the covers. If you look hard at Joe’s list, any one of his bullets can insidiously steer a team off the success profile below – and lead the team straight to BBoM hell.

The Curiously Recurring Scramble Pattern

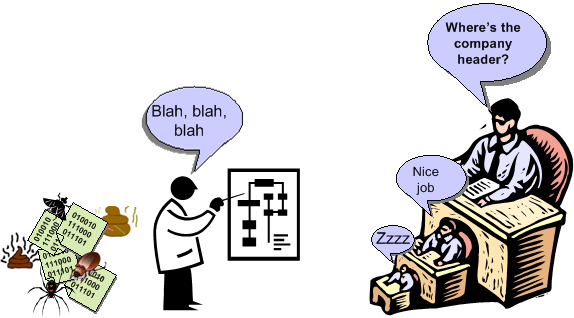

It’s funny to watch software development teams hack away for months building a just barely working patch-quilt monster that they can hardly understand themselves – and then scramble at the last minute generating design documents for some big upcoming management design review or “independent” auditor dog and pony show (woof woof!).

In this Curiously Recurring Scramble Pattern (CRSP), a successful attempt to avoid the labor of thinking is made as developers frantically sprinkle Doxygen annotations throughout the code and/or load the beast into a reverse engineering tool that mechanistically generates UML diagrams to model the as-built mess. It goes without saying that the tool’s “verbose” mode is selected in order to obscure meaning and promote the illusion of high falutin’ sophistication. Of course, all of this is a waste of time (= $$$$) because the dudes doing the reviewing (self-important managers and bureaucratic auditors) don’t want to understand a thing.

When the review or audit does take place, a couple of cream puff questions and comments are bandied about, check boxes are ticked off, a couple of superficial “action items” are generated, and the whole lovefest is rubber-stamped as a great success. Whoo Hoo, we rock!

Without a doubt, you and I have never been culturally forced to participate in an instantiation of the CRSP. We are above that nonsense, right? We do something like this.

“A meeting is a refuge from the dreariness of labor and the loneliness of thought.” – Bernard Baruch

Bugs Or Cash

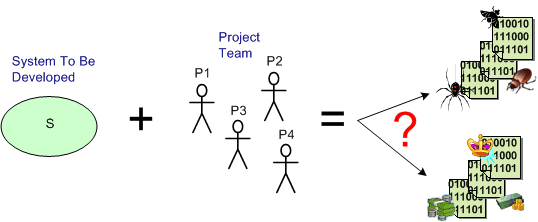

Assume that you have a system to build and a qualified team available to do the job. How can you increase the chance that you’ll build an elegant and durable money maker and not a money sink that may put you out of business?

The figure below shows one way to fail. It’s the well worn and oft repeated ready-fire-aim strategy. You blast the team at the project and hope for the best via the buzz phrase of the day – “emergent design“.

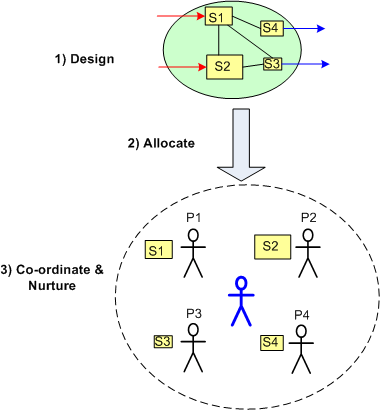

On the other hand, the figure below shows a necessary but not sufficient approach to success: design-allocate-coordinate. BTW, the blue stick dude at the center, not the top, of the project is you.

Start Big?

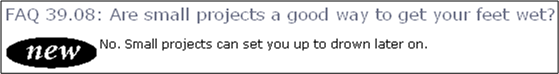

While browsing through the “C++ FAQs“, this particular FAQ caught my eye:

The authors’ “No” answer was rather surprising to me at first because I had previously thought the answer was an obvious “Yes“. However, the rationale behind their collective “No” was compelling. Rather than butcher and fragment their answer with a cut and paste summary, I present their elegant and lucid prose as is:

Small projects, whose intellectual content can be understood by one intelligent person, build exactly the wrong skills and attitudes for success on large projects…..The experience of the industry has been that small projects succeed most often when there are a few highly intelligent people involved who use a minimum of process and are willing to rip things apart and start over when a design flaw is discovered. A small program can be desk-checked by senior people to discover many of the errors, and static type checking and const correctness on a small project can be more grief than they are worth. Bad inheritance can be fixed in many ways, including changing all the code that relied on the base class to reflect the new derived class. Breaking interfaces is not the end of the world because there aren’t that many interconnections to start with. Finally, source code control systems and formalized build procedures can slow down progress.

On the other hand, big projects require more people, which implies that the average skill level will be lower because there are only so many geniuses to start with, and they usually don’t get along with each other that well, anyway. Since the volume of code is too large for any one person to comprehend, it is imperative that processes be used to formalize and communicate the work effort and that the project be decomposed into manageable chunks. Big programs need automated help to catch programming errors, and this is where the payback for static type checking and const correctness can be significant. There is usually so much code based on the promises of base classes that there is no alternative to following proper inheritance for all the derived classes; the cost of changing everything that relied on the base class promises could be prohibitive. Breaking an interface is a major undertaking, because there are so many possible ripple effects. Source code control systems and formalized build processes are necessary to avoid the confusion that arises otherwise.

So the issue is not just that big projects are different. The approaches and attitudes to small and large projects are so diametrically opposed that success with small projects breeds habits that do not scale and can lead to failure of large projects.

After reading this, I initially changed my previously un-investigated opinion. However, upon further reflection, a queasy feeling arose in my stomach because the implication of the authors is that the code bases on big projects aren’t as messy and undisciplined as those on smaller projects. Plus, it seems as though they imply that disciplined use of processes and tools has a strong correlation with a clean code base and that developers, knowing that the system will be large, will somehow change their behavior. My intuition and personal experience tell me that this may not be true, especially for large code bases that have been around for a long time and have been heavily hacked by lots of programmers (both novice and expert) under schedule pressure.

Small projects may set you up to drown later on, but big projects may start to drown you immediately. What are your thoughts, start small or big?