Archive

An Answer 10 Years Later

I’ve always wondered why one of my mentors from afar, Steve Mellor, was one of the original signatories of the “Agile Manifesto” 10 years ago. He’s always been a “model-based” guy, and his fellow pioneer agile dudes were obsessed with the idea that source code was the only truth – to hell with bogus models and camouflage documents. Even Grady Booch, another guy I admire, tempered the agilist obsession with code by stating something like this: “the code is the truth, but not the whole truth”.

Stephen recently sated my 10-year-old curiosity in this InfoQ interview: “A Personal Reflection on Agile Ten Years On”. Here’s Steve’s answer to the question that haunted me fer 10 years:

The other signatories were kind enough, back in 2001, to write the manifesto using the word “software” (which can include executable models), not “code” (which is more specific.) As such I felt able, in good conscience, to become a signatory to the Manifesto while continuing to promote executable modeling. Ten years on we have a standard action language for agile modeling. – Stephen J. Mellor

The reason I have great respect for Stephen (and his cohort Paul Ward) is this brilliant trilogy they wrote waaaayy back in the mid 80s:

Despite the dorky book covers and the dates they were written, I think the info in these short tomes is timeless and still relevant to real-time systems builders today. Of course, they were created before the object-oriented and multi-core revolutions occurred, but these books, using simple DeMarco/Plauger structured analysis modeling notation (before UML), nail it. By “it”, I mean the thinking, tools, techniques, idioms, and heuristics required to specify, design, and build concurrent, distributed, real-time systems that work. Buy em, read em, decide for yourself, bookmark this post, and please report your thoughts back to me.

Performance Playground

Since I work on real-time software projects where tens of thousands of data samples per second must be filtered, manipulated, and transformed into higher level decision-support information, performance in the main processing pipeline is important. If the software can’t keep up with the unrelenting onslaught of data streaming in from the “real world”, internal buffers/queues will overflow at best and the system will crash at worst. D’oh!

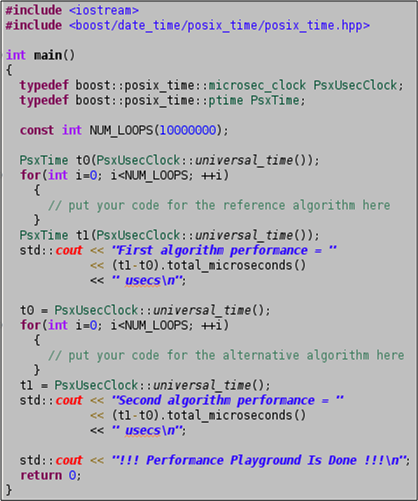

Because of the elevated importance of efficiency in real-time systems, I always keep a simple (one source code file) project named “performance_playground” open in my Eclipse IDE for algorithm/idiom/pattern prototyping and performance measurement. I use it to measure and optimize the performance of “chunks” of critical logic and to pit two or more candidates against each other in performance death matches. For each experiment I “branch” off of the project trunk and then I tag and commit the instantiation to archive the results.

The source code for the performance_playground project is shown below. The program’s sole external dependency is on the boost.date_time library for its platform-independent timestamping. Surely, you have the boost library set installed on all your development platforms, right?
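As a rough idea of what a one-file harness in this spirit can look like, here is a minimal sketch. It is not the performance_playground listing itself: it assumes the standard `std::chrono::steady_clock` instead of boost.date_time, and the names `mean_nanos_per_call`, `sum_with_loop`, and `sum_with_accumulate` are hypothetical, invented only to show two candidates being timed against each other.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <numeric>
#include <vector>

// Hypothetical helper: calls `candidate` `iterations` times and returns the
// mean wall-clock time per call in nanoseconds.
// Assumption: std::chrono::steady_clock stands in for boost.date_time here.
template <typename Candidate>
double mean_nanos_per_call(Candidate candidate, std::size_t iterations) {
    assert(iterations > 0);
    using Clock = std::chrono::steady_clock;  // monotonic, safe for intervals
    const auto start = Clock::now();
    for (std::size_t i = 0; i < iterations; ++i) {
        candidate();
    }
    const std::chrono::duration<double, std::nano> elapsed = Clock::now() - start;
    return elapsed.count() / static_cast<double>(iterations);
}

// Two hypothetical candidates that compute the same result in different ways.
long long sum_with_loop(const std::vector<int>& samples) {
    long long total = 0;
    for (int s : samples) {
        total += s;
    }
    return total;
}

long long sum_with_accumulate(const std::vector<int>& samples) {
    return std::accumulate(samples.begin(), samples.end(), 0LL);
}
```

To stage a death match, wrap each candidate in a lambda that writes its result to a `volatile` variable (so the optimizer can't throw the work away), pass each lambda to `mean_nanos_per_call` with the same iteration count, and compare the two numbers. Run it a few times, because single measurements are noisy.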

How about you? Do you have something similar? Do you assume that all performance testing and algorithm vetting falls into the dreaded, time-wasting, “premature optimization” anti-pattern?

Fixed Vs Variable Sleep Times

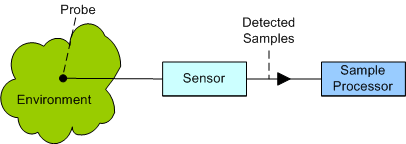

Consider the back end of a sensor system as shown below. Now, assume that you’re tasked with building the Sample Processor and you need some way of testing it. Thus, you need to simulate the continuous high speed sample stream that the Sensor will produce during operation in the real physical world.

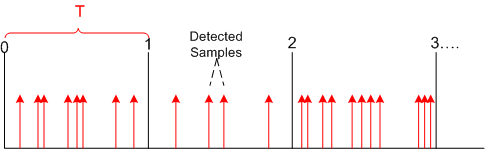

Since the analog real world is wonderfully messy and non-deterministic, the sensor/probe combo will produce data sample detections in bursty clumps as modeled below.

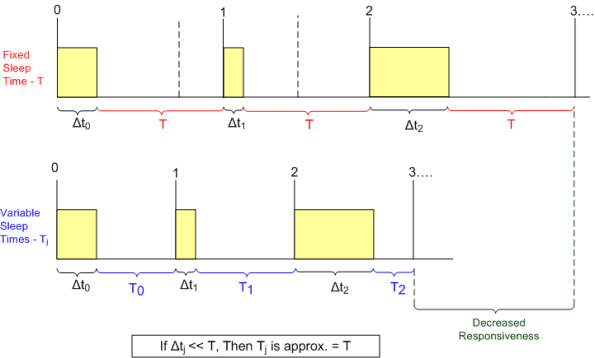

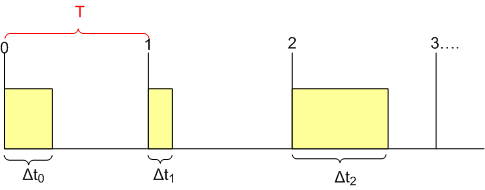

However, since you don’t need the high fidelity and fine-grained controllability in your sensor simulator implied by the figure, you simplify your approach by modeling the sensor output as a deterministically time-sliced (slice size = T) and “batched” device as shown by the yellow boxes in the diagram below.

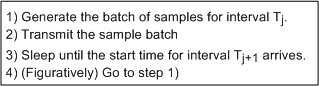
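To make the time-sliced, batched model concrete, here is a minimal sketch that groups bursty detection times into one batch per slice of size T. The function name `batch_by_slice` and the choice of millisecond arrival times are assumptions made for illustration only.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: groups detection arrival times (milliseconds since the
// start of the run) into one batch per time slice of length slice_ms (= T).
// Slices in which nothing arrived are kept as empty batches.
std::vector<std::vector<long long>> batch_by_slice(
        const std::vector<long long>& arrival_ms, long long slice_ms) {
    assert(slice_ms > 0);
    std::vector<std::vector<long long>> batches;
    for (long long t : arrival_ms) {
        assert(t >= 0);
        const auto slice_index = static_cast<std::size_t>(t / slice_ms);
        if (batches.size() <= slice_index) {
            batches.resize(slice_index + 1);  // make room up to this slice
        }
        batches[slice_index].push_back(t);
    }
    return batches;
}
```

With T = 100 ms, arrivals at 1, 2, 3, 250, 260, and 410 ms land in slices 0, 2, and 4, while slices 1 and 3 stay empty. That is the bursty clumping the simulator only has to reproduce at slice granularity.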

After thinking about it, you sketch out a simple core sensor simulation algorithm as:

As the figure below shows, there are two options for determining how long to “sleep” after each yellow sample batch has been generated and transmitted: fixed and variable. In the simpler approach, the code sleeps for a fixed time duration “T” after every batch – regardless of how long it took to generate and transmit the batch of sensor samples. In the higher fidelity variable sleep approach, the time to sleep is calculated on the fly during runtime as the slice time “T” minus the time it took to generate and send the current batch. As the batch processing time approaches the time slice period T, the software sleeps less in order to maintain true real-time operation.
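The two sleep options can be sketched as a small configurable loop. This is a sketch under stated assumptions, not the simulator from my project: `SleepPolicy`, `sleep_time`, and `run_simulator` are hypothetical names, `send_batch` stands in for whatever generates and transmits one batch, and clamping the variable sleep at zero when a batch takes longer than T is my assumption about the sensible fallback.

```cpp
#include <algorithm>
#include <cassert>
#include <chrono>
#include <thread>

using Clock = std::chrono::steady_clock;
using Duration = Clock::duration;

enum class SleepPolicy { Fixed, Variable };

// How long to sleep after a batch that took `batch_time` to generate and send.
// Fixed:    always the full slice T.
// Variable: T minus the batch time, clamped at zero (assumption: never
//           sleep a negative amount when a batch overruns the slice).
Duration sleep_time(SleepPolicy policy, Duration slice, Duration batch_time) {
    if (policy == SleepPolicy::Fixed) {
        return slice;
    }
    return std::max(Duration::zero(), slice - batch_time);
}

// Hypothetical simulator core: send a batch, then sleep according to policy.
// `send_batch(i)` is a caller-supplied callable that generates and
// transmits batch number i.
template <typename SendBatch>
void run_simulator(SleepPolicy policy, Duration slice, int num_batches,
                   SendBatch send_batch) {
    assert(num_batches >= 0);
    for (int i = 0; i < num_batches; ++i) {
        const auto start = Clock::now();
        send_batch(i);
        const Duration batch_time = Clock::now() - start;
        std::this_thread::sleep_for(sleep_time(policy, slice, batch_time));
    }
}
```

For example, with T = 100 ms and a batch that took 30 ms, the fixed policy sleeps 100 ms and the variable policy sleeps 70 ms. Since `sleep_for` may overshoot a little, sleeping until an absolute deadline with `std::this_thread::sleep_until` is probably the tighter variant if you need one.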

As the figure shows, implementing the sensor simulator with variable sleep time logic results in truer real-time behavior. In the fixed sleep design, the simulator starts lagging behind real-time immediately and the longer the sensor simulator runs, the further its behavior deviates from the real-time ideal. However, for short simulator runs and/or large relative time slice periods (see the box above), the simpler fixed sleep time approach tracks real-time just about as well.
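The size of the drift is easy to estimate. Here is a back-of-the-envelope sketch, assuming every batch takes the same time to generate and send and that sleeps are exact (neither is true in practice). The function name `lag_after` is hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <chrono>

using Millis = std::chrono::milliseconds;

// Lag behind ideal real-time after n batches.
// Assumptions: constant batch_time for every batch and exact sleeps.
// Ideal elapsed time is n * T; actual is n * (batch_time + sleep).
Millis lag_after(bool variable_sleep, Millis slice, Millis batch_time,
                 long long n) {
    assert(n >= 0);
    const Millis sleep = variable_sleep
        ? std::max(Millis::zero(), slice - batch_time)  // T - batch, >= 0
        : slice;                                        // always T
    const Millis actual = n * (batch_time + sleep);
    const Millis ideal = n * slice;
    return actual - ideal;
}
```

With T = 100 ms and 5 ms batches, the fixed design is 5 seconds behind after 1000 batches, while the variable design shows no lag. Variable sleep only falls behind once a batch takes longer than T: ten 120 ms batches against a 100 ms slice leave it 200 ms late.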

For the project I’m currently working on, I’ve coded up a sensor simulator and sample processor pair that can be configured either way. I thought I’d share the analysis/design thought process I went through in case it interests or helps anybody who’s working on something similar.