Archive

Whole, Part, Purposeful, Unpurposeful

Perhaps ironically, the various branches of “systems thinking” do not have a consensus definition of “system” archetypes. In “Ackoff’s Best”, Russell Ackoff lays down his definition as follows:

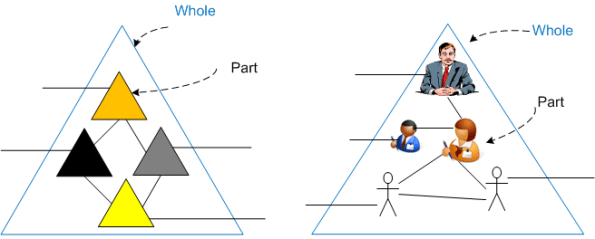

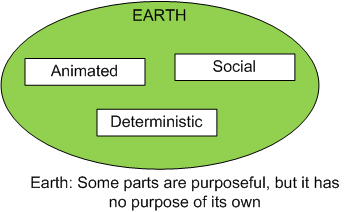

There are three basic types of systems and models of them, and a meta-system: one that contains all three types as parts of it.

1. Deterministic: Systems and models in which neither the parts nor the whole are purposeful (e.g. a computer).

2. Animated: Systems and models in which the whole is purposeful but the parts are not (e.g. you or me).

3. Social: Systems and models in which both the parts and the whole are purposeful (e.g. an institution).

All three types of systems are contained in ecological systems, some of whose parts are purposeful but not the whole. For example, Earth is an ecological system that has no purpose of its own but contains social and animate systems that do, and deterministic systems that don’t.

But wait! Why are there no Ackoffian systems whose parts are purposeful but whose whole is un-purposeful? Russ doesn’t say why, but BD00 (of course) can speculate.

As soon as one inserts a purposeful part into a deterministic system, the system auto-becomes purposeful?

Structure And The “ilities”

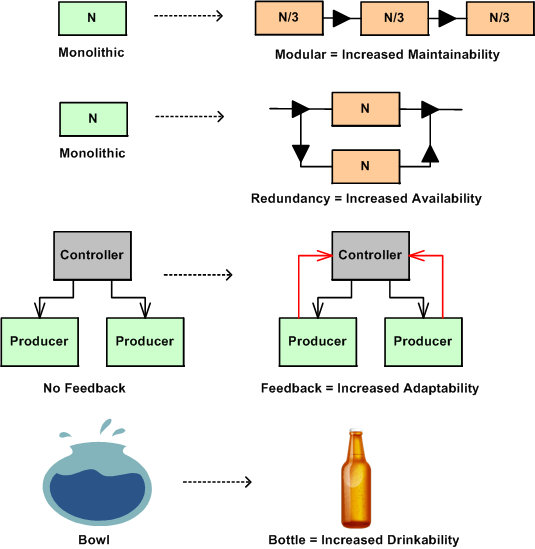

In nature, structure is an enabler or disabler of functional behavior. No hands – no grasping, no legs – no walking, no lungs – no living. Adding new functional components to a system enables new behavior, and subtracting components disables behavior. Changing the arrangement of an existing system’s components and how they interconnect can also trade off qualities of behavior, affectionately called the “ilities”. Thus, changes in structure effect changes in behavior.

The figure below shows a few examples of a change to an “ility” due to a change in structure. Given the structure on the left, the refactored structure on the right leads to an increase in the “ility” listed under the new structure. However, in moving from left to right, a trade-off has been made for the gain in the desired “ility”. For the monolithic->modular case, a decrease in end-to-end response-ability due to added box-to-box delay has been traded off. For the monolithic->redundant case, a decrease in buyability due to the added purchase cost of the duplicate component has been introduced. For the no feedback->feedback case, an increase in complexity has been effected due to the added interfaces. For the bowl->bottle example, a decrease in fill-ability has occurred because of the decreased diameter of the fill interface port.

The plea of this story is: “to increase your aware-ability of the law of unintended consequences”. What you don’t know CAN hurt you. When you are bound and determined to institute what you think is a “can’t lose” change to a system that you own and/or control, make an effort to discover and uncover the ilities that will be sacrificed for those that you are attempting to instill in the system. This is especially true for socio-technical systems (do you know of any system that isn’t a socio-technical system?) where the influence on system behavior by the technical components is always dwarfed by the influence of the components that are composed of groups of diverse individuals.

Surveillance Systems

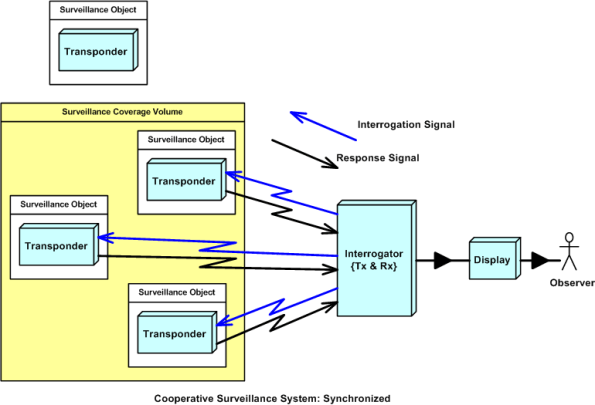

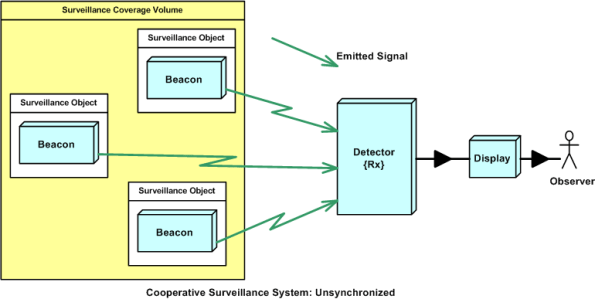

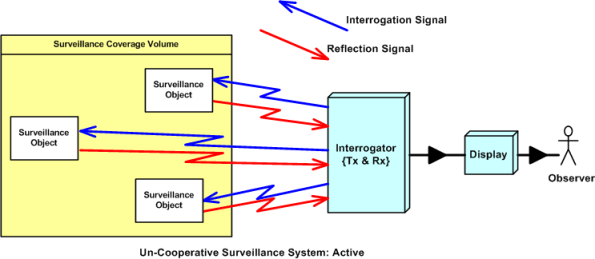

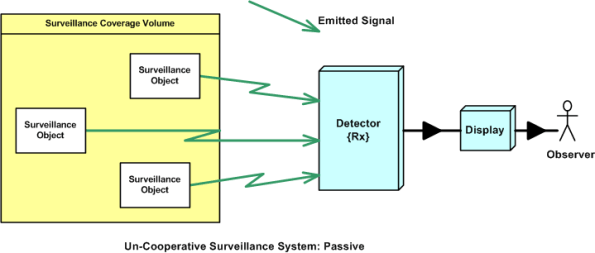

The purpose of a surveillance system is to detect and track Objects Of Interest (OOI) that are present within a spatial volume covered by a sensing device. Surveillance systems can be classified into four types:

- Cooperative and synchronized

- Cooperative and unsynchronized

- Un-cooperative and active

- Un-cooperative and passive

In cooperative systems, the OOI are equipped with a transponder device that voluntarily “cooperates” with the sensor. In the synchronized variant, the sensor continuously probes the surveillance volume by transmitting an interrogation signal that is recognized by the OOI transponders. When a transponder detects an interrogation, it transmits a response signal back to the interrogator. The response may contain identification and other information of interest to the interrogator. Air traffic control radar systems are examples of cooperative, synchronized surveillance systems.

In a cooperative and unsynchronized surveillance system, the sensor doesn’t actively probe the surveillance volume. It passively waits for signal emissions from beacon-equipped OOI. Cooperative and unsynchronized surveillance systems are less costly than cooperative and synchronized systems, but because the OOI beacon emissions aren’t synchronized by an interrogator, their signals can garble each other and make it difficult for the sensor detector to keep them separated.

In uncooperative surveillance systems, the OOI aren’t equipped with any man-made devices designed to work in conjunction with a remotely located sensor. The OOI are usually trying to evade detection and/or the sensor is trying to detect the OOI without letting the OOI know that they are under surveillance.

In an active, uncooperative surveillance system, the sensor’s radiated signal is specially designed to reflect off of an OOI. The round-trip delay of the reflected signal gives the range to the OOI, and successive measurements (or the Doppler shift of the echo) give its speed. Military radar and sonar equipment are good examples of active, uncooperative surveillance systems.
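The timing idea behind an active sensor can be sketched in a few lines of Python. This is only a back-of-the-envelope illustration: the function names and the sample delays are made up for this example, and real radar or sonar processing involves far more than this.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # propagation speed for a radar signal


def echo_range_m(round_trip_s, propagation_speed_m_s=SPEED_OF_LIGHT_M_S):
    """Range to the reflector: the signal travels out and back, so halve the path."""
    return propagation_speed_m_s * round_trip_s / 2.0


def radial_speed_m_s(range_1_m, range_2_m, interval_s):
    """Average range rate between two fixes (negative means the OOI is closing)."""
    return (range_2_m - range_1_m) / interval_s


# Hypothetical echoes measured one second apart.
first_fix = echo_range_m(200e-6)
second_fix = echo_range_m(198e-6)
closing_rate = radial_speed_m_s(first_fix, second_fix, 1.0)
print(first_fix, second_fix, closing_rate)

# The same arithmetic works for sonar with an assumed sound speed in water.
print(echo_range_m(2.0, propagation_speed_m_s=1500.0))
```

Passing a different propagation speed is the only change needed to move from the radar case to the sonar case, which is why it is a parameter rather than a hard-coded constant.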

In a passive, uncooperative surveillance system, the sensor is designed to detect some type of energy signal (e.g. heat, radioactivity, sound) that is naturally emitted or reflected (e.g. light) by an OOI. Since there is no man-made transmitter device in the system design, the detection range, and hence coverage volume, is typically much smaller than that of the other types of surveillance systems.

The dorky classification system presented in this blarticle is by no means formal, or official, or standardized. I just made it up out of the blue, so don’t believe a word that I said.

No Good Deed

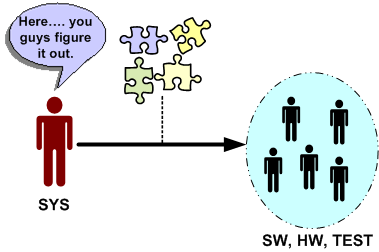

Let’s say that the system engineering culture at your hierarchically structured corpo org is such that virtually all work products handed off (down?) to hardware, software and test engineers are incomplete, inconsistent, fragmented, and filled with incomprehensible ambiguity. Another word that describes this type of low quality work is “camouflage”. Since it is baked into the “culture”, camouflage is expected, it’s taken for granted, and it’s burned into everyone’s mind that “that’s the way it is and that’s the way it always will be”.

Now, assume that someone comes along and breaks from the herd. He/she produces coherent, understandable, and directly usable outputs that let the SW, HW, and TEST engineers make rapid downstream progress. How do you think the maverick system engineer would be treated by his/her peers? If you guessed: “with open arms”, then you are wrong. Statements like “that’s too much detail”, “it took too much time”, “you’re not supposed to do that”, “that’s not what our process says we should do”, etc., will rain down on the maverick. No good deed goes unpunished. Sic.

Why would this seemingly irrational and dysfunctional behavior occur? Because hierarchical corpo cultures don’t accept “change” without a fight, regardless of whether the change is good or bad. By embracing change, the changees have to first acknowledge the fact that what they were doing before the change wasn’t working. For engineers, or non-engineers with an engineering mindset of infallibility, this level of self-awareness doesn’t exist. If a maverick can’t handle the psychological peer pressure to return to the norm and produce shoddy work products, then the status quo will remain entrenched. Sadly but surely, this is what everyone wants, including management, and even more outrageously, the HW, SW, and TEST engineers. Bummer.

Functional Allocation VIII

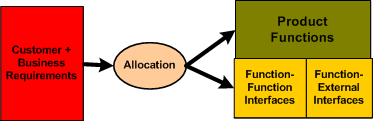

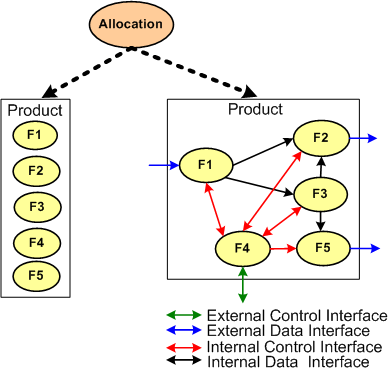

Typically, the first type of allocation work performed on a large and complex product is the shall-to-function (STF) allocation task. The figure below shows the inputs and outputs of the STF allocation process. Note that it is not enough to simply identify, enumerate, and define the product functions in isolation. An integral sub-activity of the process is to conjure up and define the internal and external functional interfaces. Since the dynamic interactions between the entities in an operational system (human or inanimate) give the system its power, I assert that interface definition is the most important part of any allocation process.

The figure below illustrates two alternate STF allocation outputs produced by different people. On the left, a bland list of unconnected product functions has been identified, but the functional structure has not been defined. On the right, the abstract functional product structure, defined by which functions are required to interact with other functions, is explicitly defined.

If the detailed design of each product function will require specialized domain expertise, then releasing a raw function list like the one on the left to the downstream process can result in all kinds of counterproductive behavior between the specialists whose functions need to communicate with each other in order to contribute to the product’s operation. Each function “owner” will try to dictate the interface details to the “others” based on the local optimization of his/her own functional piece(s) of the product. Disrespect between team members and/or groups may ensue and bad blood may be spilled. In addition, even when the time-consuming and contentious interface decision process is completed, the finished product will most likely suffer from a lack of holistic “conceptual integrity” because of the multitude of disparate interface specifications.

It is the lead system engineer’s or architect’s duty to define the function list and the interfaces that bind them together at the right level of detail that will preserve the conceptual integrity of the product. The danger is that if the system design owner goes too far, then the interfaces may end up being over-constrained and stifling to the function designers. Given a choice between leaving the interface design up to the team or doing it yourself, which approach would you choose?
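For readers who think in code, the difference between a raw function list and a defined functional structure can be sketched as a tiny data structure. Everything here is hypothetical: the `FunctionalStructure` class and the function names (`acquire`, `process`, `display`, `record`) are invented for illustration and are not taken from any figure in this blarticle.

```python
from dataclasses import dataclass, field


@dataclass
class FunctionalStructure:
    """Product functions plus the interfaces that bind them together."""
    functions: set = field(default_factory=set)
    interfaces: set = field(default_factory=set)  # each entry: frozenset of two names

    def add_interface(self, a, b):
        """Record that functions a and b must interact."""
        if a == b or a not in self.functions or b not in self.functions:
            raise ValueError(f"interface needs two distinct known functions: {a!r}, {b!r}")
        self.interfaces.add(frozenset((a, b)))

    def unconnected(self):
        """Functions that take part in no defined interface."""
        return self.functions - set().union(*self.interfaces)


names = {"acquire", "process", "display", "record"}  # hypothetical function names

# Left-hand style output: functions only, no structure.
raw_list = FunctionalStructure(functions=set(names))

# Right-hand style output: the same functions with their interactions defined.
structured = FunctionalStructure(functions=set(names))
for a, b in [("acquire", "process"), ("process", "display"), ("process", "record")]:
    structured.add_interface(a, b)

print(sorted(raw_list.unconnected()))    # every function is still an island
print(sorted(structured.unconnected()))  # no islands remain
```

A check like `unconnected()` says nothing about whether the interfaces are good ones. It only flags the functions that have been left for their owners to fight over.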

Functional Allocation VII

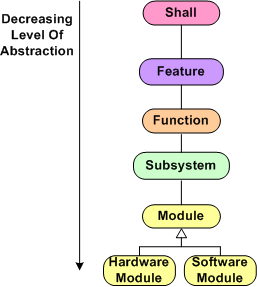

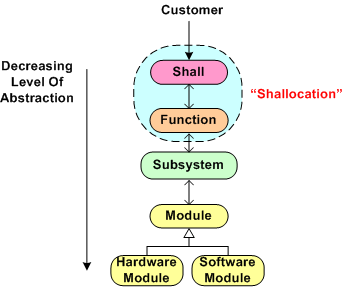

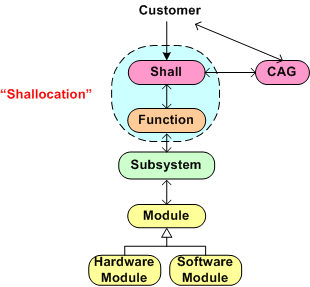

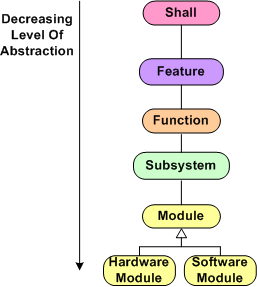

Here we are at blarticle number 7 on the unglamorous and boring topic of “Functional Allocation”. Once again, for a reference point of discussion, I present the hypothetical allocation tree below (your company does have a guidepost like this, doesn’t it?). In summary, product “shalls” are allocated to features, which are allocated to functions, which are allocated to subsystems, which are allocated to software and hardware modules. Depending on the size and complexity of the product to be built, one or more levels of abstraction can be skipped because the value added may not be worth the effort expended. For a simple software-only system that will run on Commercial-Off-The-Shelf (COTS) hardware, the only “allocation” work required to be performed is a shall-to-software module mapping.

During the performance of any intellectually challenging human endeavor, mistakes will be made and learning will take place in real-time as the task is performed. That’s how fallible humans work, period. Thus, for the output of a task like “allocation” to be of high quality, an iterative and low latency feedback loop approach should be executed. When one qualified person is involved, and there is only one “allocation” phase to be performed (e.g. shall-to-module), there isn’t a problem. All the mistake-making, learning, and looping activity takes place within a single mind at the speed of thought. For (hopefully) long periods of time, there are no distractions or external roadblocks to interrupt the performance of the task.

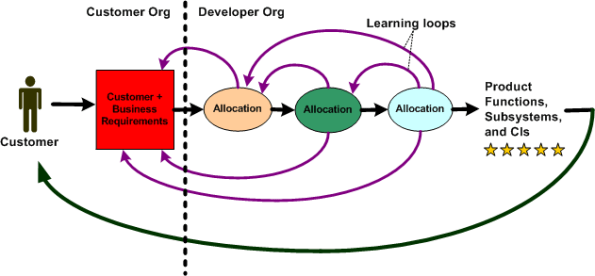

For a big and complex multi-technology product where multiple levels of “allocation” need to be performed and multiple people and/or specialized groups need to be involved, all kinds of socio-technical obstacles and roadblocks to downstream success will naturally emerge. The figure below shows an effective product development process where iteration and loop-based learning are unobstructed. Communication flows freely between the development groups and organizations to correct mistakes and converge on an effective solution. Everything turns out hunky dory and the customer gets a 5 star product that he/she/they want and the product meets all expectations.

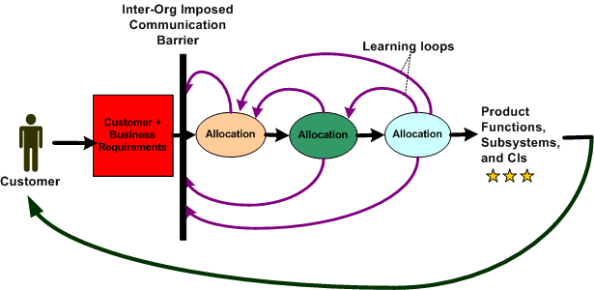

The figure below shows a dysfunctional product development process. For one reason or another, communication feedback from the developer org’s “allocation” groups is cut off from the customer organization. Since questions of understanding don’t get answered and mistakes/errors/ambiguities in the customer requirements go uncorrected, the end product delivered back to the customer underperforms and nobody ends up very happy. Bummer.

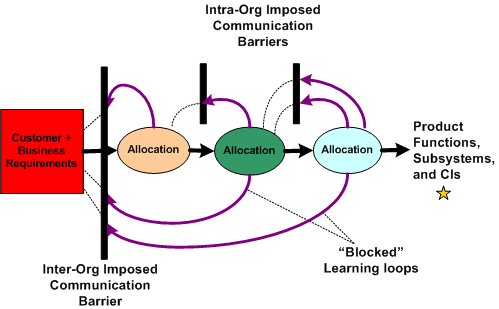

The figure below illustrates the worst possible case for everybody involved – a real mess. Not only do the customer and developer orgs not communicate; the “allocation” groups within the developer org don’t, or are prohibited from, communicating effectively with each other. The product that emerges from such a sequential linear-think process is a real stinker, oink oink. The money’s gone, the time’s gone, and the damn thang may not even work, let alone perform marginally.

Obviously, this situation is a massive failure of corpo leadership and sadly, I assert that it is the norm across the land. It is the norm because almost all big customer and developer orgs are structured as hierarchies of rank and stature with “standard” processes in place that require all kinds and numbers of unqualified people to “be in the loop” and approve (disapprove?) of every little step forward – lest their egos be hurt. Can a systemic, pervasive, baked-in problem like this be solved? If so, who, if anybody, has the ability to solve it? Can a single person overcome the massive forces of nature that keep a hierarchical ecosystem like this viable?

“The Biggest Problem To Communication Is The Illusion That It Has Taken Place.” – George Bernard Shaw

Functional Allocation VI

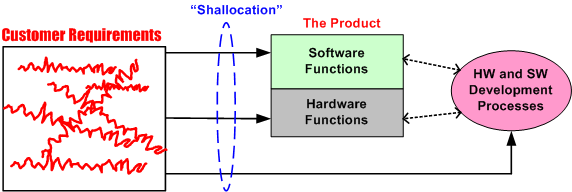

Every big system, multi-level, “allocation” process (like the one shown below) assumes that the process is initialized and kicked-off with a complete, consistent, and unambiguous set of customer-supplied “shalls”. These “shalls” need to be “shallocated” by a person or persons to an associated aggregate set of future product functions and/or features that will solve, or at least ameliorate, the customer’s problem. In my experience, a documented set of “shalls” is always provided with a contract, but the organization, consistency, completeness, and understandability of these customer level requirements often leave much to be desired.

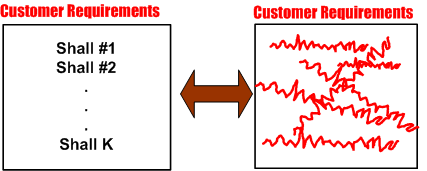

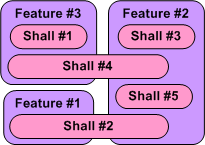

The figure below represents a hypothetical requirements mess. The mess might have been caused by “specification by committee”, where a bunch of people just haphazardly tossed “shalls” into the bucket according to different personal agendas and disparate perceptions of the problem to be solved.

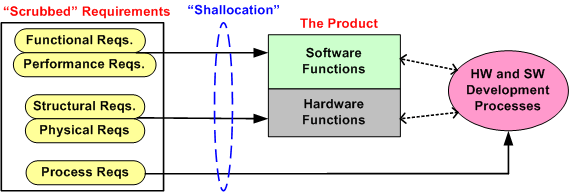

Given a fragmented and incoherent “mess”, what should be done next? Should one proceed directly to the Shall-To-Function (STF) process step? One alternative strategy, the performance of an intermediate step called Classify And Group (CAG), is shown below. CAG is also known by the vaguer phrase “requirements scrubbing”. As shown below, the intent is to remove as much ambiguity and inconsistency as possible by 1) intelligently grouping the “shalls” into classification categories and 2) restructuring the result into a more usable artifact for the next downstream STF allocation step in the process.
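The mechanical part of CAG can be sketched in Python. The shalls, the categories, and the keyword rule below are all invented for illustration. In practice the classifying is done by a thinking person, so treat `keyword_classifier` as a stand-in for human judgment, not as a recommended technique.

```python
from collections import defaultdict

# Hypothetical categories and trigger keywords; a real CAG effort would define its own.
HYPOTHETICAL_CATEGORIES = {
    "operator interface": ("display", "operator"),
    "performance": ("within", "per second"),
    "recording": ("record", "log"),
}


def keyword_classifier(text):
    """Stand-in for human judgment: pick the first category with a matching keyword."""
    lowered = text.lower()
    for category, keywords in HYPOTHETICAL_CATEGORIES.items():
        if any(keyword in lowered for keyword in keywords):
            return category
    return "needs customer clarification"


def classify_and_group(shalls, classify):
    """Group shall identifiers by the category that classify() assigns to their text."""
    groups = defaultdict(list)
    for shall_id, text in shalls.items():
        groups[classify(text)].append(shall_id)
    return dict(groups)


shalls = {  # made-up customer shalls
    "S1": "The system shall display track data to the operator.",
    "S2": "The system shall update tracks within 2 seconds.",
    "S3": "The system shall record all track data.",
    "S4": "The system shall be user friendly.",
}

grouped = classify_and_group(shalls, keyword_classifier)
for category, ids in grouped.items():
    print(category, ids)
```

The catch-all bucket is the interesting one: whatever lands there can’t be grouped without asking the people who wrote the shalls what they meant.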

The figure below shows the position of the (usually “hidden” and unaccounted for) CAG process within the allocation tree. Notice the connection between the CAG and the customer. The purpose of that interface is so that the customer can clarify meaning and intent to the person or persons performing the CAG work. If the people performing the CAG work aren’t allowed, or can’t obtain, access to the customer group that produced the initial set of “shalls”, then all may be lost right out of the gate. Misunderstandings and ambiguities will be propagated downstream and end up embedded in the fabric of the product. Bummer city.

Once the CAG effort is completed (after several iterations involving the customer(s) of course), the first allocation activity, Shall-To-Function (STF), can then be effectively performed. The figure below shows the initial state of two different approaches prior to commencement of the STF activity. In the top portion of the figure, CAG was performed prior to starting the STF. In the bottom portion, CAG was not performed. Which approach has a better chance of downstream success? Does your company’s formal product development process explicitly call out and describe a CAG step? Should it?

Functional Allocation V

Holy cow! We’re up to the fifth boring blarticle that delves into the mysterious nature of “Functional Allocation”. Let’s start here with the hypothetical 6-level allocation reference tree that was presented earlier.

Assume that our company is smart enough to define and standardize a reference tree like this one in their formal process documentation. Now, let’s assume that our company has been contracted to develop a Large And Complex (LAC) software-intensive system. My fuzzy and un-rigorous definition of large and complex is:

“The product has (or will have after it’s built) lots of parts, many different kinds of parts, lots of internal and external interfaces, and lots of different types of interfaces”.

The figure below shows a partial result of step one in the multi-level process: the Shall-To-Feature (STF) allocation process. Given a set of 5 customer-supplied abstract “shalls”, someone has made the design decisions that led to the identification and definition of 3 less-abstract features that the product must provide in order to satisfy the customer shalls. We’ve started the movement from the abstract to the less abstract.

Just imagine what the model below would look like in the case where we had 100s of shalls to wrestle with. How could anyone possibly conclude up front that the set of shalls has been completely covered by the feature set? At this stage of the game, I assert that you can’t. You have to make a commitment and move on. In all likelihood, the initial STF allocation result won’t work. Thus, if your process doesn’t explicitly include the concept of “iterating on mistakes made and on new knowledge gained” as the product development process lurches forward, you’ll get what you deserve.

Note that in the simple example above, there is no clean and proper one-to-one STF mapping and there are 2 cross-cutting “shalls”. Also, note that there is no logical rule or mathematical formula grounded in physics that enables a shallocator (robot or human) to mechanically compute an “optimum” feature set and perform the corresponding STF allocation. It’s abstract stuff, and different qualified people will come up with different designs. Management, take heed of that fact.
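The bookkeeping side of an STF allocation can at least be recorded and checked mechanically, even though the design decisions can’t be. Here is a minimal Python sketch. The shall and feature names and the mapping itself are made up (they are not read off the figure); they merely mimic its shape of 5 shalls, 3 features, and 2 cross-cutting shalls.

```python
def analyze_allocation(shall_to_features, features):
    """Report bookkeeping facts about a shall-to-feature allocation."""
    uncovered = sorted(s for s, fs in shall_to_features.items() if not fs)
    cross_cutting = sorted(s for s, fs in shall_to_features.items() if len(fs) > 1)
    used = set().union(*shall_to_features.values())
    unused_features = sorted(set(features) - used)
    return {
        "uncovered_shalls": uncovered,          # shalls allocated to nothing
        "cross_cutting_shalls": cross_cutting,  # shalls spread over several features
        "unused_features": unused_features,     # features that no shall asked for
    }


features = ["feature_A", "feature_B", "feature_C"]  # hypothetical feature set

allocation = {  # hypothetical allocation decisions made by a human shallocator
    "shall_1": {"feature_A"},
    "shall_2": {"feature_A", "feature_B"},
    "shall_3": {"feature_B"},
    "shall_4": {"feature_B", "feature_C"},
    "shall_5": {"feature_C"},
}

report = analyze_allocation(allocation, features)
print(report)
```

A clean report only shows that every shall points at something. It can’t tell you whether the features actually satisfy the shalls, which is why the iteration still has to happen.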

So, given the initial finished STF allocation output (recorded and made accessible and visible for others to evaluate, of course) how was it arrived at? Could the effort be codified in a step-by-step Standard Operating Procedure (SOP) so that it can be classified as “repeatable and predictable”? I say no, regardless of what bureaucrats and process managers who’ve never done it themselves think. What about you, what do you think?

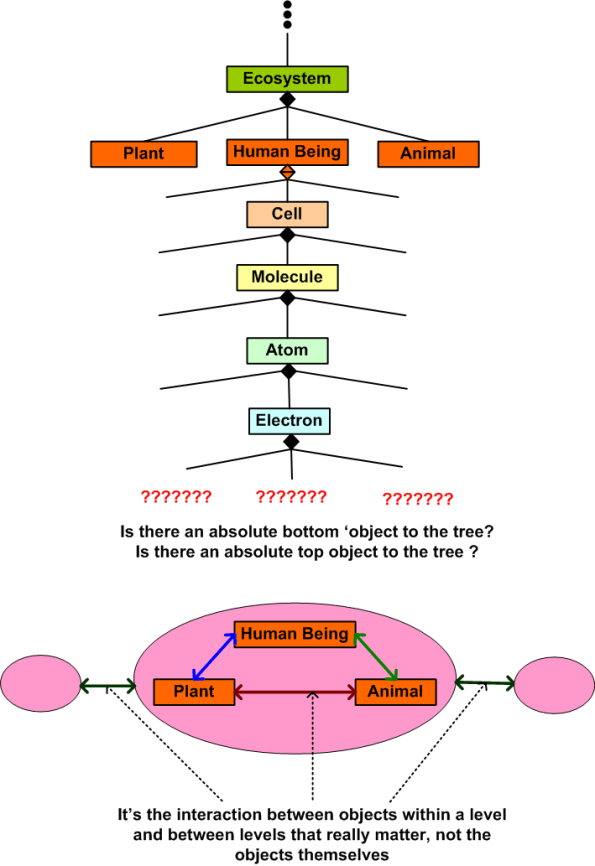

Trees

This tree below is my personal creation. Your tree would likely be different from my tree. Nature creates perfect trees. Man tends to destroy nature’s trees and to create arbitrary artificial trees to suit his needs. Man must create, either consciously or unconsciously, conceptual trees to make sense of the world. How attached are you to your trees? Are your trees THE right trees and are my trees wrong? Are trees created by ‘experts’ the trees that all should unquestioningly embrace? Who are the ‘experts’?

Creating the vertical aspect of the tree is called leveling. Creating the horizontal aspect of the tree is called balancing. Leveling and balancing, along with scoping and bounding, are powerful systems analysis and synthesis tools.

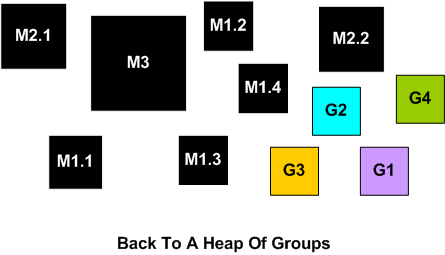

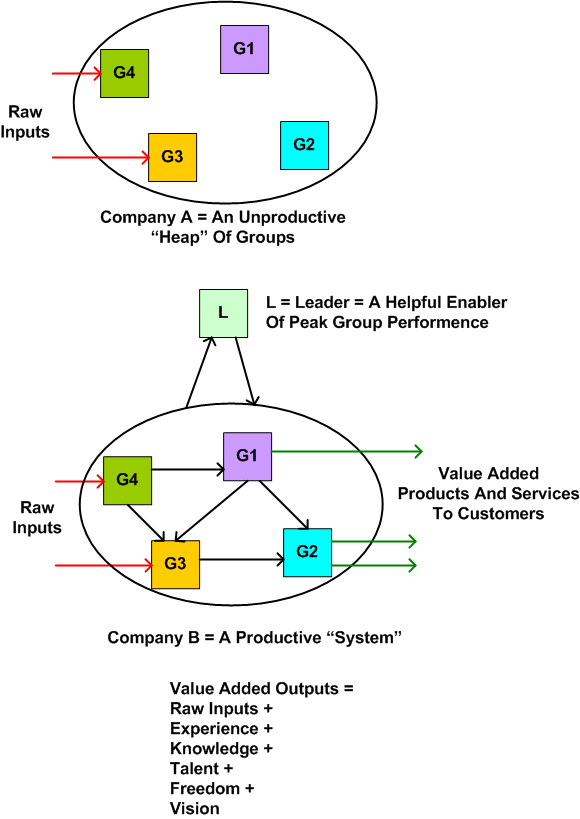

Heaps And Systems

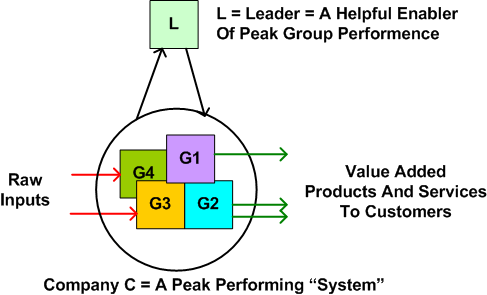

A “heap” is a collection of individual “parts”. A “system” is an intentionally designed set of interconnected parts with a purpose. The purpose of a system transcends AND includes the purpose of each of the individual parts. As an example, think of an automobile. If we disassemble one, we end up with a heap of individual parts. When these parts are assembled and interconnected in accordance with the purpose of human transportation as the goal, we may get a system. Structural design and interconnection are not enough. The system must be energized and steered so that purposeful behavior can be manifested. For a car, the energy is fuel and the steerer is a human being. For an organization of multi-disciplined groups of people, the energy is motivation and the steerer is a leader. Without motivation and a leader, an organization of human groups is just an unproductive heap that consumes natural resources and doesn’t produce any value-added output to share with the world.

The figure below shows two companies that are each comprised of 4 potentially diverse and productive groups of people. Company A is unconnected and leaderless. Thus, it just consumes resources from the external environment and produces nothing of value to share with the world. Company B is both connected and well led. What kind of company do you work for?
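The structural half of the heap-versus-system distinction can be expressed as a simple connectivity check. This Python sketch uses made-up group names and links, and it deliberately models only the “connected” part. Motivation and leadership don’t reduce to a graph.

```python
def is_connected(groups, links):
    """True if every group can reach every other group through the given links."""
    groups = set(groups)
    if not groups:
        return False
    neighbors = {group: set() for group in groups}
    for a, b in links:
        neighbors[a].add(b)
        neighbors[b].add(a)
    start = next(iter(groups))
    seen = {start}
    frontier = [start]
    while frontier:  # walk outward from the starting group
        current = frontier.pop()
        for other in neighbors[current] - seen:
            seen.add(other)
            frontier.append(other)
    return seen == groups


groups = ["engineering", "manufacturing", "sales", "support"]  # hypothetical groups

company_a_links = []  # nobody talks to anybody: a heap
company_b_links = [   # hypothetical working relationships
    ("engineering", "manufacturing"),
    ("manufacturing", "sales"),
    ("sales", "support"),
]

print(is_connected(groups, company_a_links))  # heap
print(is_connected(groups, company_b_links))  # structurally a candidate system
```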

Look at company C in the illustration below. In this company, the leader has propelled his/her company to the head of the pack by creating the internal environment for, and nurturing, the system’s internal groups and interfaces for peak performance. All of the internal connections and relationships between the groups consist of low latency and high bandwidth collaboration. Both high quality outputs and speed of execution distinguish company C from the rest of the herd.

In a high performing system, the danger of over-optimization looms in the form of inflexibility. A system optimized for a single purpose tends to harden and become resistant to change over time – corposclerosis sets in. The trick for the leader is to create and sustain a delicate balance between optimization and flexibility that adapts to the rapidly changing external environment.

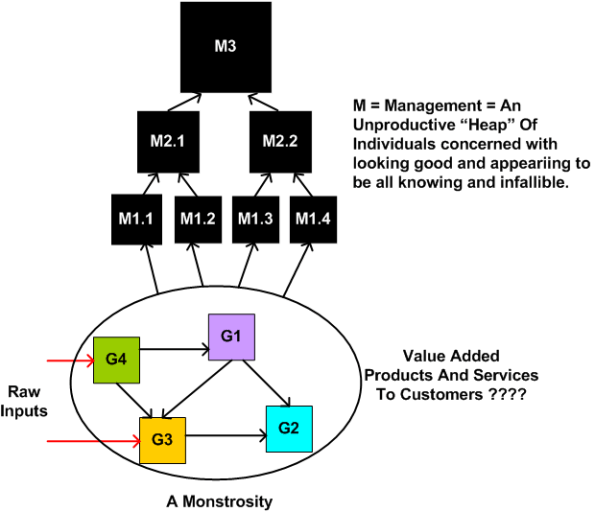

In an attempt to over-optimize performance, some leaders unknowingly morph into “managers”. They start inserting subordinate management layers of questionable value between themselves and the productive subsystems of the company. They start creating and accumulating titles that distance themselves from the productive groups. These and other symbols of status divide and alienate instead of integrate and endear. Instead of guiding, steering, and nurturing, they start commanding, controlling, and constraining. Productivity plummets and quality of workmanship deteriorates.

Because of increasing rules and procedures mandated by management, the internal interfaces between the formerly productive groups start transitioning into high latency and low bandwidth communication channels. In the worst case, like an overheated engine, the interfaces rupture and the system abruptly disintegrates, leaving an unconnected and purposeless heap of parts in its wake. Bummer.