
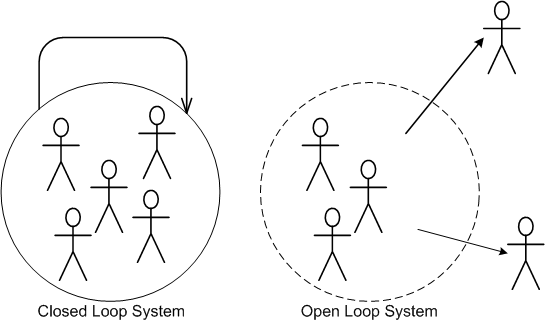

Open Loop

I’m currently working on a really exciting and fun software development project with several highly competent peers. Two of them, however, like to operate open loop and plow ahead with minimal collaboration with the more disciplined (and hence, slower) developers. These dudes not only insert complex designs and code into their components without first vetting their ideas with the rest of the team, but they also have no boundaries. Like elephants in a china shop, they tramp all over code in everybody else’s “area of ownership”.

“He who touches the code last, owns it.” — Anonymous

Because of the team cohesion that it encourages, I’m all for shared ownership, but there has to be some nominal boundary to arrest the natural growth in entropy inherent in open loop systems and to ensure local and global conceptual integrity.

Even though these colleagues are rogues, they’re truly very smart. So, I’m learning from them, albeit slooowly, since they don’t document very well (surprise!) and I have to laboriously reverse-engineer the code they write to understand what the freak they did. Even though feelings aren’t allowed, I “feel” for those dudes who will come on board and have to extend/maintain our code after we leave for the next best thing.

Cppcheck Test Run

Since I think that a static code analyzer can help me and my company produce higher quality code, I decided to download and test Cppcheck:

Cppcheck is an analysis tool for C/C++ code. Unlike C/C++ compilers and many other analysis tools, we don’t detect syntax errors. Cppcheck only detects the types of bugs that the compilers normally fail to detect. The goal is no false positives.

After the install, I ran Cppcheck on the root directory of a code base that contains over 200K lines of C++ code. As the figure below shows, 1077 style and error violations were flagged. The figure also shows a sample of the specific types of style and error violations that Cppcheck flagged within this particular code base.

After this test run, I ran Cppcheck on my current project’s code base, which contains a five-figure number of lines of code. Lo and behold, it didn’t flag any suspicious activity in my pristine code. Hah, hah, the last sentence was a joke! Cppcheck did flag some style warnings in my code, but (thankfully) it didn’t spew out any dreaded error warnings. And of course, I mopped up my turds.

Because of the painless install, its simplicity of use, and its speed of execution, I’ve added Cppcheck to my nerd toolbelt. I’m gonna run Cppcheck on every substantial piece of C++ code that I write in the future.

I want to sincerely thank all the programmers who contributed their free time to the Cppcheck project for the nice product they created and unselfishly donated to the world. You guys rock.

Emergent Design

I’m somewhat onboard with the agile community’s concept of “emergent design”. But like all techniques/heuristics/rules-of-thumb/idioms, context is everything. In the “emergent design” approach, you start writing code and, via a serendipitous, rapid, high-frequency, mistake-making, error-correcting process, a good design emerges from the womb – voila! This technique has worked well for me on “small” designs within an already-established architecture, but it doesn’t scale to global design, new architecture, or cross-cutting system functionality. For these types of efforts, “emergent modeling” may be a more appropriate approach. If you believe this, then you must make the effort to learn how to model, write (or preferably draw) your models up, and iterate on them much like you do with code. But wait, you don’t do, or ever plan to do, documentation, right? Your code is so self-expressive that you don’t even need to comment it, let alone write external documentation. That crap is for lowly English majors.

To religiously embrace “emergent design” and eschew modeling/documentation for big design efforts is to invite downstream disaster and massive post-delivery stakeholder damage. Beware, because one of those downstream stakeholders may be you.

PAYGO II

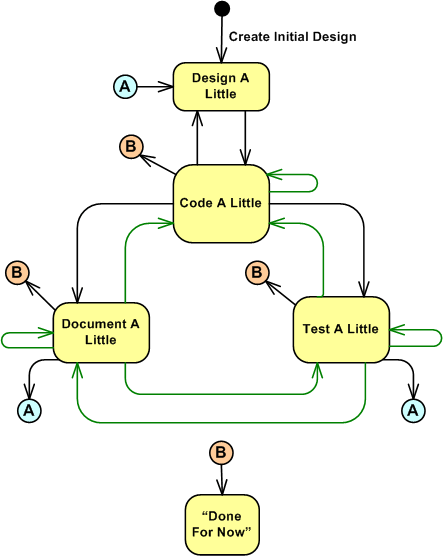

PAYGO stands for “Pay As You Go”. It’s the name of the personal process that I use to create or maintain software. There are five operational states in PAYGO:

- Design A Little

- Code A Little

- Test A Little

- Document A Little

- Done For Now

Yes, the fourth state is called “Document A Little”, and it’s a first-class citizen in the PAYGO process. Whatever process you use, if some sort of documentation activity is not an integral part of it, then you might be an incomplete and one-dimensional engineer, no?

“…documentation is a love letter that you write to your future self.” – Damian Conway

The UML state transition diagram below models the PAYGO states of operation along with the transitions between them. Even though the diagram indicates that the initial entry into the cyclical and iterative PAYGO process lands on the “Design A Little” state of activity, any state can be the point of entry into the process. Once you’re immersed in the process, you don’t kick out into the “Done For Now” state until your first successful product handoff occurs. Here, successful means that the receiver of your work, be it an end user or a tester or another programmer, is happy with the result. How do you know when that is the case? Simply ask the receiver.

Notice the plethora of transition arcs in the diagram (the green ones are intended to annotate feedback learning loops, as opposed to sequential forward movements). Any state can transition into any other state, and there is no fixed, well-defined set of conditions that must be satisfied before making any state-to-state leap. The process is fully under your control, and you freely choose to move from state to state as “God” (for lack of a better word) uses you as an instrument of creation. If clueless STSJ PWCE BMs issue mindless commands from on high like “pens down” and “no more bug fixing, you’re only allowed to write new code”, you fake it as best you can to avoid punishment and you go where your spirit takes you. If you get caught faking it and get fired, then uh….. soothe your conscience by blaming me.
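To make the state model concrete, here’s a minimal C++ sketch of my own (PAYGO has no official code, so treat this as an illustration only): the five states from the list above, unguarded movement between the working states, and a single guard that only lets you kick out into “Done For Now” once the receiver of your work says they’re happy. The names `PaygoState`, `Paygo`, `move_to`, and `receiver_happy` are all hypothetical.

```cpp
#include <string>

// The five PAYGO operational states, as listed in the post.
enum class PaygoState {
    DesignALittle,
    CodeALittle,
    TestALittle,
    DocumentALittle,
    DoneForNow
};

// Human-readable state names, matching the diagram labels.
std::string name_of(PaygoState s) {
    switch (s) {
        case PaygoState::DesignALittle:   return "Design A Little";
        case PaygoState::CodeALittle:     return "Code A Little";
        case PaygoState::TestALittle:     return "Test A Little";
        case PaygoState::DocumentALittle: return "Document A Little";
        case PaygoState::DoneForNow:      return "Done For Now";
    }
    return "Unknown";
}

// Hypothetical model of the process: any state may follow any other,
// except that DoneForNow requires a confirmed, successful handoff.
class Paygo {
public:
    // Any state can be the entry point; Design A Little is the default.
    explicit Paygo(PaygoState entry = PaygoState::DesignALittle) : state_(entry) {}

    // Returns false (and stays put) only when trying to leave for
    // DoneForNow before the receiver has said they're happy.
    bool move_to(PaygoState next, bool receiver_happy = false) {
        if (next == PaygoState::DoneForNow && !receiver_happy) {
            return false;
        }
        state_ = next;
        return true;
    }

    PaygoState state() const { return state_; }

private:
    PaygoState state_;
};
```

For example, calling `move_to(PaygoState::DoneForNow)` without the flag leaves the state unchanged and returns `false`; passing `true` after you’ve asked the receiver completes the handoff. Leaving every other transition unguarded is deliberate, since there’s no fixed set of conditions between states.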

The following quote in “The C++ Programming Language” by mentor-from-afar Bjarne Stroustrup triggered this blog post:

In the early years, there was no C++ paper design; design, documentation, and implementation went on simultaneously. There was no “C++ project” either, or a “C++ design committee.” Throughout, C++ evolved to cope with problems encountered by users and as a result of discussions between my friends, my colleagues, and me. – Bjarne Stroustrup

When I read it on my Nth excursion through the book (you’ve made multiple trips through the BS book too, no?), it occurred to me that my man Bjarne uses PAYGO too.

Say STFU to all the mindlessly mechanistic processes from highly credentialed and well-intentioned luminaries like Watts Humphrey’s PSP (because he wants to transform you into an accountant), to the committee-mandated process from your corpo process group (because chances are that the dudes who wrote the process manuals haven’t written software in decades), and to the TDD know-it-alls. Embrace whatever you discover is the best personal development process for you, be it PAYGO or whatever personal process the universe compels you to embrace. Out of curiosity, what process do you use?

If you’re interested in a higher level overview of the personal PAYGO process in the context of other development processes, you can check out this previous post: PAYGO I. And thanks for listening.

BS Design

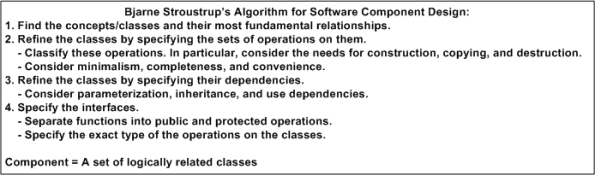

Sorry, but I couldn’t resist titling this post the way I did. However, despite what you may have thought at first glance, BS stands for dee man, Bjarne Stroustrup. In chapter 23 of “The C++ Programming Language”, Bjarne offers up this advice regarding the art of mid-level design:

To find out the details of executing each iterative step in the while(not_done){} loop, go buy the book. If C++ is your primary programming language and you don’t have the freakin’ book, then shame on you.

Bjarne makes a really good point when he states that his unit of composition is a “component”. He defines a component as a cluster of classes that are cohesively and logically related in some way.

A class should ideally map directly onto a problem domain concept, and concepts (like people) rarely exist in isolation. Unless it’s a really low-level concrete concept on the order of a built-in type like “int”, a class will be an integral player in a mini system of dynamically collaborating concepts. Thinking myopically while designing and writing classes (in any language that directly supports object-oriented design) can lead to big, piggy classes and unnecessary dependencies when other classes are conceived and added to the kludge under construction – sort of like managers designing an org to serve themselves instead of the greater community. 🙂
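Here’s a minimal sketch of the “component” idea, assuming a made-up sensor-monitoring domain (none of these names come from the book or from my project). Instead of one big, piggy monitoring class, a small cluster of cohesive classes collaborates inside one namespace, which plays the role of Bjarne’s component.

```cpp
#include <cstddef>
#include <deque>
#include <memory>
#include <numeric>
#include <utility>

// Hypothetical "component": a cluster of small, cohesive classes that
// collaborate, grouped in one namespace instead of one piggy class.
namespace sensing {

// Abstract source of readings; concrete sensors hide behind it.
class Sensor {
public:
    virtual ~Sensor() = default;
    virtual double read() = 0;
};

// Smooths readings with a fixed-width moving average.
class MovingAverage {
public:
    // A width of zero is bumped to one to avoid dividing by zero.
    explicit MovingAverage(std::size_t width) : width_(width == 0 ? 1 : width) {}

    double add(double x) {
        window_.push_back(x);
        if (window_.size() > width_) window_.pop_front();
        return std::accumulate(window_.begin(), window_.end(), 0.0) /
               static_cast<double>(window_.size());
    }

private:
    std::size_t width_;
    std::deque<double> window_;
};

// Ties a Sensor to a MovingAverage and a threshold; knows nothing else.
class Monitor {
public:
    Monitor(std::unique_ptr<Sensor> sensor, std::size_t width, double limit)
        : sensor_(std::move(sensor)), filter_(width), limit_(limit) {}

    // True when the smoothed reading exceeds the limit.
    bool poll() { return filter_.add(sensor_->read()) > limit_; }

private:
    std::unique_ptr<Sensor> sensor_;
    MovingAverage filter_;
    double limit_;
};

}  // namespace sensing
```

Each class maps onto one concept, and `Monitor` depends only on the abstract `Sensor`, so adding a new kind of sensor doesn’t drag new dependencies into the rest of the cluster.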

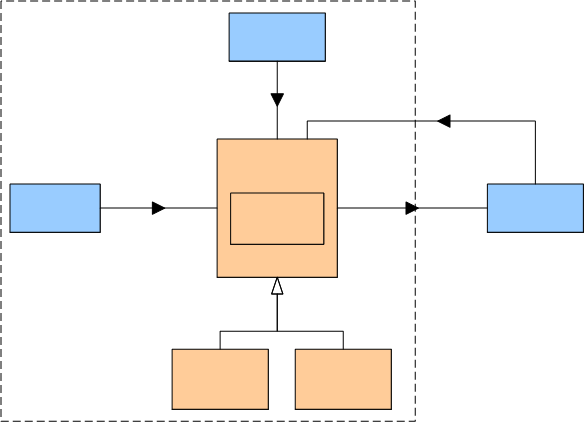

The figure below shows “revision 0” of a mini system of abstract classes that I’m designing and writing on my current project. The names of the classes have been elided so that I don’t get fired for publicly disclosing company secrets. I’ve been architecting and designing software like this from the time I finally made the painful but worthwhile switchover to C++ from C.

The class diagram is the result of “unconsciously” applying step one of Bjarne’s component design process. When I read Bjarne’s sage advice it immediately struck a chord within me because I’ve been operating this way for years without having been privy to his wisdom. That’s why I wrote this blowst – to share my joy at discovering that I may actually be doing something right for a change.

It’s Gotta Be Free!

I love ironies because they make me laugh.

I find it ironic that some software companies will staunchly refuse to pay a cent for software components that can drastically lower maintenance costs, yet are willing to charge their customers as much as they can get for the application software they produce.

When it comes to development tools and (especially) infrastructure components that directly bind with their application code, everything’s gotta be free! If it’s not, they’ll do one of two things:

- They’ll “roll their own” component/layer even though they have no expertise in the component domain that cries out for the work of specialized experts. In the old days it was device drivers and operating systems. Today, it’s entire layers like distributed messaging systems and database managers.

- They’ll download and jam-fit a crappy, unpolished, sporadically active, open source equivalent into their product – even if a high quality, battle-tested commercial component is available.

Like most things in life, cost is relative, right? If a component costs $100K, the misers will cry out “holy crap, we can’t waste that money”. However, when you look at it as costing less than one programmer-year of labor, the situation looks different, no?

How about you? Does this happen, or has it happened in your company?

Close Spatial Proximity

Even on software development projects with agreed-to coding rules, it’s hard (and often painful for all parties) to “enforce” the rules. This is especially true if the rules cover superficial items like indenting, brace alignment, comment frequency/formatting, and variable/method name capitalization/underscoring. IMHO, programmers are smart enough not to get obstructed from doing their jobs when trivial, fine-grained rules like those are violated. It (rightly) pisses them off if they are forced to waste time on minutiae dictated by software “leads” who don’t write any code and (especially) by former programmers who’ve been promoted to bureaucratic stooges.

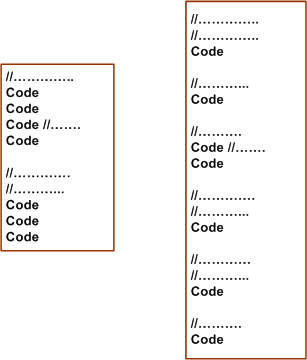

Take the example below. The segment on the right reflects (almost) correct conformance to the commenting rule “every line in a class declaration shall be prefaced with one or more comment lines”. A stricter version of the rule may be “every line in a class declaration shall be prefaced with one or more Doxygenated comment lines”.

Obviously, the code on the left violates the example commenting rule – but is it less understandable and maintainable than the code on the right? Diligently applying the “rule” arguably fragments and disperses the code, rendering it less understandable than the more sparsely commented code on the left. Personally, I like to see necessarily cohesive code lines in close spatial proximity to each other. It’s simply easier for me to understand the local picture and the essence of what makes the code cohesive.
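Since the original side-by-side figure isn’t reproduced in text, the hypothetical `Point` class below is my own stand-in for it, written both ways: once with sparse comments (the left-hand style) and once following the “every line is prefaced with a Doxygenated comment” rule (the right-hand style). The two versions live in separate namespaces only so they can coexist in one file.

```cpp
#include <cmath>

// Style A: sparse comments; the cohesive lines stay close together.
namespace sparse {
// A 2-D point that can measure Euclidean distance to another point.
class Point {
public:
    Point(double x, double y) : x_(x), y_(y) {}
    double x() const { return x_; }
    double y() const { return y_; }
    double distance_to(const Point& o) const { return std::hypot(x_ - o.x_, y_ - o.y_); }
private:
    double x_, y_;
};
}  // namespace sparse

// Style B: (almost) every declaration line prefaced with Doxygen comments.
namespace verbose {
/// A 2-D point that can measure Euclidean distance to another point.
class Point {
public:
    /// Construct a point.
    /// @param x horizontal coordinate
    /// @param y vertical coordinate
    Point(double x, double y) : x_(x), y_(y) {}
    /// Get the horizontal coordinate.
    double x() const { return x_; }
    /// Get the vertical coordinate.
    double y() const { return y_; }
    /// Compute the Euclidean distance to another point.
    /// @param o the other point
    /// @return the distance between this point and o
    double distance_to(const Point& o) const { return std::hypot(x_ - o.x_, y_ - o.y_); }
private:
    /// Horizontal coordinate.
    double x_;
    /// Vertical coordinate.
    double y_;
};
}  // namespace verbose
```

Both versions behave identically; the only question is which one a maintainer can take in at a glance.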

Even if you insert an automated tool into your process that explicitly nags about coding rule violations, forcing programmers to conform to standards that they think are a waste of time can provoke counterproductive, subversive, passive-aggressive behavior in other, more important areas of the project. So, if you’re a software lead crafting some coding rules to dump on your “team”, beware of the level of granularity at which you specify them. Better yet, don’t call them rules. Call them guidelines to show that you respect and trust your teammates.

If you’re a software lead that doesn’t write code anymore because it’s “beneath” you or a bureaucrat who doesn’t write code but who does write corpo level coding rules, this post probably went right over your head.

Note: For an example of a minimal set of C++ coding guidelines (except in rare cases, I don’t like to use the word “rule”) that I personally try to stick to, check this post out: project-specific coding guidelines.

Conceptual Integrity

As in his previous work, “The Mythical Man-Month”, Fred Brooks, in “The Design of Design”, holds steadfast to the assertion that creating and maintaining “conceptual integrity” is the key to successful and enduring designs. Being a long-time believer in this tenet, I’ve always been baffled by the success of Linux under the free-for-all open source model of software development. With thousands of people making changes and additions, even under Linus Torvalds’ benevolent dictatorship, how in the world could the product’s conceptual integrity defy the second law of thermodynamics under the onslaught of such a chaotic development process?

Fred comes through with the answers:

- A unifying functional specification is in place: UNIX.

- An overall design structure, initially created by Torvalds, exists.

- The builders are also the users – there are no middlemen to screw up requirements interpretation between the users and builders.

If you extend the reasoning of the third point, it explains why most open source successes are tools used by software developers and not applications used by the average person. Some applications have achieved moderate success, but not on the scale of Linux and the popular developer tools.

Architectural Choices

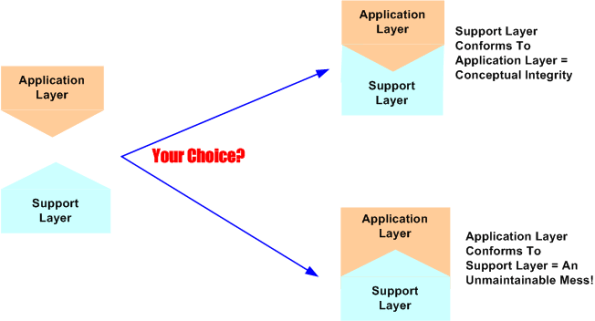

Assume that a software-intensive system you’re tasked with designing is big and complex. Because of this, you freely decide to partition the beast into two clearly delineated layers: a value-added application layer on top and, on the bottom, a support layer that is unwanted overhead but essential.

Now assume that a support layer for a different application layer already exists in your org – the investment has been made, it has been tested, and it “works” for those applications it’s been integrated with. As the left portion of the figure below shows, assume that the pre-existing support layer doesn’t match the structure of your yet-to-be-developed application layer. They just don’t naturally fit together. Would you:

- try to “bend” the support layer to fit your application layer (top right portion of the figure)?

- try to redesign and gronk your application layer to jam-fit with the support layer (bottom right portion of the figure)?

- ditch the support layer entirely and develop (from scratch) a more fitting one?

- purchase an externally developed support layer that was designed specifically to fit applications that fall into the same category as yours?

After contemplating long-term maintenance costs, my choice is the fourth option. Let support layer experts who know what they’re doing shoulder the maintenance burden associated with the arcane details of the support layer. The first three options are technically riskier and financially costlier because, unless your head is screwed on backwards, you should be tasking your programmers with designing and developing application layer functionality that is (supposedly) your breadwinner – not mundane, low value-added infrastructure functionality.

Layered Obsolescence

For big and long-lived software-intensive systems, the most powerful weapon in the fight against the relentless onslaught of hardware and software obsolescence is “layering”. The purer the layering, the better.

In a pure layered system, source code in a given layer is only cognizant of, and interfaces with, code in the next layer down in the stack. Inversion of control frameworks where callbacks are installed in the next layer down are a slight derivative of pure layering. Each given layer of code is purposefully designed and coded to provide a single coherent service for the next higher, and more abstract, layer. However, since nothing comes for free, the tradeoff for architecting a carefully layered system over a monolith is performance. Each layer in the cake steals processor cycles and adds latency to the ultimate value-added functionality at the top of the stack. When layering rules must be violated to meet performance requirements, each transgression must be well documented for the poor souls who come on-board for maintenance after the original development team hits the high road to promotion and fame.
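Here’s a minimal C++ sketch of pure layering, assuming a made-up three-layer communications stack (`Transport`, `Messaging`, and `Application` are hypothetical names). Each layer holds a reference only to the layer directly beneath it, and the bottom layer delivers incoming data upward through a callback installed by the layer above, which is the inversion of control variant described in the paragraph above.

```cpp
#include <functional>
#include <string>
#include <utility>
#include <vector>

// Bottom layer: raw transport (stands in for a driver or OS service).
// It knows nothing about the layers above it.
class Transport {
public:
    void send_bytes(const std::string& bytes) { wire_.push_back(bytes); }
    const std::vector<std::string>& wire() const { return wire_; }

    // Inversion of control: the layer above installs a callback here.
    void on_receive(std::function<void(const std::string&)> cb) { cb_ = std::move(cb); }
    void simulate_arrival(const std::string& bytes) { if (cb_) cb_(bytes); }

private:
    std::vector<std::string> wire_;
    std::function<void(const std::string&)> cb_;
};

// Middle layer: message framing, built only on Transport.
class Messaging {
public:
    explicit Messaging(Transport& t) : transport_(t) {
        transport_.on_receive([this](const std::string& b) { last_ = unframe(b); });
    }
    // The installed callback captures `this`, so forbid copies.
    Messaging(const Messaging&) = delete;
    Messaging& operator=(const Messaging&) = delete;

    void send(const std::string& msg) { transport_.send_bytes("[" + msg + "]"); }
    const std::string& last_received() const { return last_; }

private:
    static std::string unframe(const std::string& b) {
        return b.size() >= 2 ? b.substr(1, b.size() - 2) : b;
    }
    Transport& transport_;
    std::string last_;
};

// Top layer: application logic, built only on Messaging.
class Application {
public:
    explicit Application(Messaging& m) : messaging_(m) {}
    void report(int value) { messaging_.send("value=" + std::to_string(value)); }

private:
    Messaging& messaging_;
};
```

`Application` never touches `Transport`, so a performance-driven shortcut that did would stand out as exactly the kind of transgression that deserves documentation.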

Relative to a ragged and loosely controlled layering scheme (or heaven forbid – no discernible layering), the maintenance cost and technical risk of replacing any given layer in a purely layered system are greatly reduced. In a really haphazard system architecture, trying to replace obsolete, massively tentacled components with fuzzy layer boundaries can bring the entire house of cards down and cause great financial damage to the owning organization.
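To illustrate why replacement gets cheaper, this sketch assumes the layer boundary is an abstract interface (the `Storage`, `LegacyStorage`, `NewStorage`, and `Settings` names are hypothetical). Because the upper layer sees only the interface, retiring the obsolete implementation means changing one constructor argument rather than untangling tentacles.

```cpp
#include <map>
#include <memory>
#include <optional>
#include <string>
#include <unordered_map>
#include <utility>

// Hypothetical layer boundary: the only thing the upper layer sees.
class Storage {
public:
    virtual ~Storage() = default;
    virtual void put(const std::string& key, const std::string& value) = 0;
    virtual std::optional<std::string> get(const std::string& key) const = 0;
};

// The obsolete implementation being retired (stand-in: an ordered map).
class LegacyStorage : public Storage {
public:
    void put(const std::string& key, const std::string& value) override { data_[key] = value; }
    std::optional<std::string> get(const std::string& key) const override {
        auto it = data_.find(key);
        if (it == data_.end()) return std::nullopt;
        return it->second;
    }
private:
    std::map<std::string, std::string> data_;
};

// Its replacement (stand-in: a hash map; it could just as well wrap a vendor product).
class NewStorage : public Storage {
public:
    void put(const std::string& key, const std::string& value) override { data_[key] = value; }
    std::optional<std::string> get(const std::string& key) const override {
        auto it = data_.find(key);
        if (it == data_.end()) return std::nullopt;
        return it->second;
    }
private:
    std::unordered_map<std::string, std::string> data_;
};

// Upper layer: written once against Storage and untouched by the swap.
class Settings {
public:
    explicit Settings(std::unique_ptr<Storage> storage) : storage_(std::move(storage)) {}
    void set_user(const std::string& name) { storage_->put("user", name); }
    std::string user() const { return storage_->get("user").value_or("nobody"); }
private:
    std::unique_ptr<Storage> storage_;
};
```

If `Settings` (or anything above it) had reached around the interface into the container details, the swap would ripple upward through every layer that cheated.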

“The entire system also must have conceptual integrity, and that requires a system architect to design it all, from the top down.” – Fred Brooks

Having said all this motherhood and apple pie crap that every experienced software developer knows is true, why do we still read and hear about so many software maintenance messes? It’s because someone needs to take continuous ownership of the overall code base throughout the lifecycle and enforce the layering rules. Talking and agreeing 100% about “how it should be done” is not enough.

Since:

- it’s an unpopular job to scold fellow developers (especially those who want to put their own personal coat of arms on the code base),

- it’s under-appreciated by all non-software management,

- there’s nothing to be gained but extra heartache for any bozo who willfully signs up for the enforcement task,

very few step up to the plate to do the right thing. Can you blame them? Those who have done it, and have been rewarded with excommunication from the local development community plus indifference from management, rarely do it again – unless coerced.