Archive

Yin And Yang

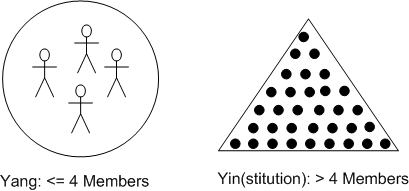

In the current incarnation of Bill Livingston’s D4P, the author distinguishes between two mutually exclusive types of orgs. For convenience of understanding, Bill arbitrarily labels them Yin (short for “Yinstitution”) and Yang (short for “Yang Gang”).

The critical number of “four” that separates the two types in Livingston’s thesis is called “the Starkermann bright line”. It’s based on decades of modeling and simulation using Starkermann’s control-theory-based approach to social unit behavior. According to the results, a group with more than four members is not so “bright” when it lands in a “mismatch” situation, where Business As Usual (BAU) doesn’t apply to a novel problem that threatens the viability of the institution – despite what the patriarchs in the head shed espouse. In order to retain their identities, Yinstitutions must, as dictated by natural laws (control theory, the 2nd law of thermodynamics, etc.), be structured hierarchically and obey an ideology of “infallibility” over “intelligence” as their MoA (Mechanism of Action).

According to Mr. Livingston, there is no such thing as a “mismatch” situation for a group of four or fewer capable members because they are unencumbered by a hierarchical class system. Yang Gangs don’t care about “impeccable identities” and thus they expend no energy promoting or defending themselves as “infallible”. A Yang Gang’s structure is flat and its MoA is “intelligence rules, infallibility be damned”.

The accrual of intelligence, defined by Ross Ashby as simply “appropriate selection”, requires knowledge-building through modeling and rapid run-break-learn-fix simulation (RBLF). Yinstitutions don’t do RBLF because it requires humility, and the “L” part of the process is forbidden. After all, if one is infallible, there is no need to learn.

Cross-Disciplinary Pariahs

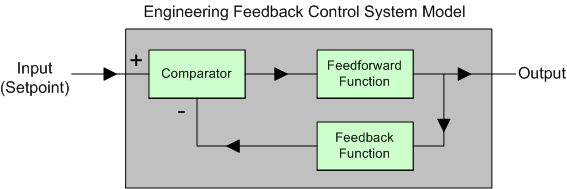

The figure below shows a simplified version of the classic engineering Feedback Control System (FCS). There are two significant features that distinguish an FCS from a typical engineering system. First, the input is not a raw signal to be manipulated in order to produce a derived output of added informational value. It is a “desired” setpoint (or goal, or reference) to be “achieved” by the system’s design.

The second feature is the feedback loop, which taps off the output signal and provides real-time evidence to the comparator of how well the output is converging to (or diverging from) the desired setpoint. For a given application, the system’s innards are designed such that the output tracks its input with high fidelity – even in the presence of “disturbances” (e.g. noise) that infiltrate the system.

In purely technical systems (as opposed to socio-technical systems), the FCS output would typically be connected to an “actuator” device like a motor, a switch, a valve, a furnace, etc. that affects an important measurable quantity in the external environment. The desired setpoints for these types of systems would be motor speed, switch position, valve position, and temperature, respectively. The mathematics of how engineering FCSs behave has been known since the 1930s.
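To make the setpoint/comparator/feedback idea concrete, here is a minimal sketch of a discrete-time loop in Python. Everything in it is an assumption made for illustration: the function name `simulate_fcs`, the trivially simple "plant" (output equals actuator command plus disturbance), and the integral-style controller. It is not taken from any of the figures or from a particular textbook design.

```python
def simulate_fcs(setpoint, disturbance=0.0, gain=0.5, steps=50):
    """Toy feedback control loop (illustrative assumptions throughout).

    The hypothetical plant is just: output = actuator + disturbance.
    The controller accumulates a fraction of the error on every step.
    """
    actuator = 0.0
    output = actuator + disturbance      # what the environment shows at the start
    trace = [output]
    for _ in range(steps):
        error = setpoint - output        # comparator: desired minus fed-back output
        actuator += gain * error         # controller nudges the actuator command
        output = actuator + disturbance  # disturbance infiltrates the plant
        trace.append(output)
    return trace


if __name__ == "__main__":
    # A furnace-like example: we want 70 degrees, a draft steadily pulls 5 away.
    trace = simulate_fcs(setpoint=70.0, disturbance=-5.0)
    print(f"start: {trace[0]:.2f}, end: {trace[-1]:.2f}")
```

Without the feedback path, a constant disturbance would simply offset the output. With it, the remaining error shrinks by a fixed factor on every pass around the loop, so the output settles on the setpoint in spite of the disturbance.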

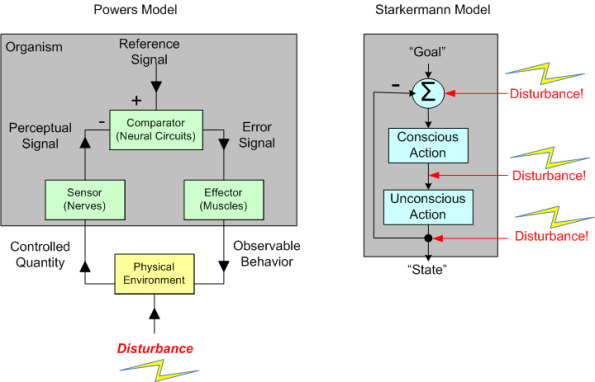

In defiance of mainstream psychology and sociology pedagogy, Bill Powers and Rudy Starkermann spent much of their careers applying control theory concepts to their own innovative theories of human behavior. Their heretical, cross-disciplinary approaches to psychology and sociology have kept them oppressed and out of the mainstream, much like Deming, Ackoff, and Argyris in management “science”.

The figure below shows (big simplifications of) the Powers and Starkermann models side by side. Note the similarities between them and also between them and the classic engineering FCS.

- Engineering FCS: Setpoint/Comparator/Feedback Loop

- Powers: Reference/Comparator/Feedback Loop

- Starkermann: Goal/Summing Node/Feedback Loop

The big (and it’s huge) difference between the Starkermann/Powers models and the engineering FCS model is that Starkermann’s goal and Powers’ reference signal originate from within the system whereas the dumb-ass engineering FCS must “be told” what the desired setpoint is by something outside of itself (a human or another mechanistic system designed by a human). In the Starkermann/Powers FCS models of human behavior, “being told” is processed as a disturbance.
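The "being told is a disturbance" point can be sketched in a few lines of Python. This is a toy of my own making, not Powers' or Starkermann's actual model: the class name `Agent`, the single scalar perception, and the rule that perception equals the agent's action plus the external push are all assumptions chosen to keep the example small.

```python
class Agent:
    """Toy controller whose reference lives inside it (illustrative only)."""

    def __init__(self, reference, gain=0.5):
        self.reference = reference   # internally generated goal, never passed in per step
        self.gain = gain
        self.action = 0.0

    def step(self, external_push):
        """One pass around the loop; external_push stands in for 'being told'."""
        perception = self.action + external_push   # the push arrives as a disturbance
        error = self.reference - perception        # comparator uses the internal reference
        self.action += self.gain * error           # act to cancel the error
        return self.action + external_push         # perception after acting


if __name__ == "__main__":
    agent = Agent(reference=10.0)
    for _ in range(50):
        perception = agent.step(external_push=3.0)
    print(f"perception: {perception:.2f}, action: {agent.action:.2f}")
```

In this sketch the agent ends up perceiving what it wants (10), and its action settles at 7, exactly enough to absorb the push of 3. The outside "instruction" never changes the goal; it only changes how hard the agent has to work to keep its own reference satisfied.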

If you delve deeper into the “obscure” work of Starkermann and Powers, your world view of the behavior of individuals and groups of individuals just may change – for the better or the worse.

Building The Perfect Beast

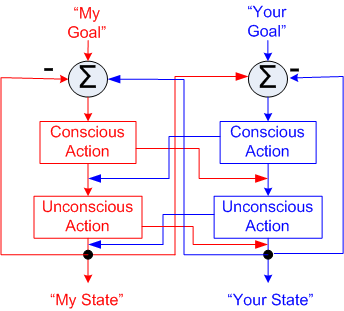

The figure below illustrates a simplified model of a Starkermann dualism. My behavior can contribute to (amity), or detract from (enmity) your well being and vice versa.

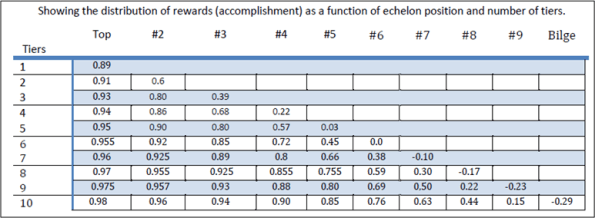
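For readers who like to poke at such things, here is a deliberately crude Python toy of two coupled control loops. It is not Starkermann's model or his mathematics. The function name `simulate_dualism`, the single `coupling` number (positive for amity, negative for enmity), and the linear way each party's well being depends on the other's action are all assumptions invented for this sketch.

```python
def simulate_dualism(coupling, goal=10.0, gain=0.5, steps=200):
    """Two toy agents, each controlling its own well being (hypothetical model).

    Assumed rule: my well being = my action + coupling * your action.
    coupling > 0 stands in for amity, coupling < 0 for enmity.
    Returns the final (action, well_being) of agent 1; agent 2 mirrors it.
    """
    a1 = a2 = 0.0
    for _ in range(steps):
        w1 = a1 + coupling * a2      # your behavior adds to or subtracts from my well being
        w2 = a2 + coupling * a1      # and vice versa
        a1 += gain * (goal - w1)     # each agent acts on its own error
        a2 += gain * (goal - w2)
    return a1, a1 + coupling * a2


if __name__ == "__main__":
    for label, c in (("amity", 0.5), ("enmity", -0.5)):
        action, well_being = simulate_dualism(c)
        print(f"{label}: effort {action:.2f} for well being {well_being:.2f}")
```

With these made-up numbers both pairs reach the same well being, but the amity pair gets there with an effort of about 6.67 each while the enmity pair has to spend 20 each. That is only a property of this toy, offered as a way to see why the sign of the coupling matters.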

Mr. Starkermann spent decades developing and running simulations of his models to gain an understanding of the behavior of groups. The table below (plucked from Bill Livingston’s D4P4D) shows the results of one of those simulation runs.

The table shows the deleterious effects of institutional hierarchy building. In a single-tier organization, the group at the top, which includes everyone since no one is above or below anybody else, attains high levels of achievement (89%). In a 10-layer monstrosity, those at the top benefit greatly (98% achievement) at the expense of those dwelling at the bottom – who actually gain nothing and suffer the negative consequences of being a member of the borg.

What do you think? Does this model correspond to reality? How many tiers are in your org and where are you located?

D4P Has Been Hatched

Friend and longtime mentor Bill Livingston has finished his latest book, “Design For Prevention” (D4P). I mildly helped Bill in his endeavor by providing feedback over the last year or so in the form of idiotic commentary and, mostly, typo exposure.

Bill, being a staunch promoter of SCRBF feedback and its natural power of convergence to excellence, continuously asked for feedback and contributory ideas throughout the book writing process. Being a blabbermouth and having great respect for the man because of the profound influence he’s had on my worldview for 20+ years, I truly wanted to contribute some ideas of substance. However, I struggled mightily to try and conjure up some worthy input because even though I understood the essence of this original work and it resonated deeply with me, I couldn’t quite form (and still can’t) a decent and coherent picture of the whole work in my mind.

D4P is a socio-technical process for designing a solution to a big hairy problem (in the face of powerful institutional resistance) that dissolves the problem without causing massive downstream stakeholder damage. Paradoxically, the book is a loosely connected, but also dense, artistic tapestry of seemingly unrelated topics and concepts such as:

- Alan Turing’s thesis of infallibility vs. intelligence

- Leveraging nature’s physical laws, with a special emphasis on suspending the 2nd law of thermodynamics and entropy growth

- W. Ross Ashby’s cybernetic law of requisite variety

- Thorstein Veblen’s theory of the leisure class

- Nash equilibria in game playing

- Rudolf Starkermann’s mathematical analysis of social group system behavior

Bill does a masterful and unprecedented job at connecting the dots. The book will set you back, uh, $250 beaners on Amazon.com, but wait….. there’s a reason for that astronomical price. He doesn’t really care if he sells it. He wants to give it away to people who are seriously interested in “Designing For Prevention”. Posers need not apply. If you’re intrigued and interested in trying to coerce Bill into sending you a copy, you can introduce yourself and make your case at vitalith “at” att “dot” net.