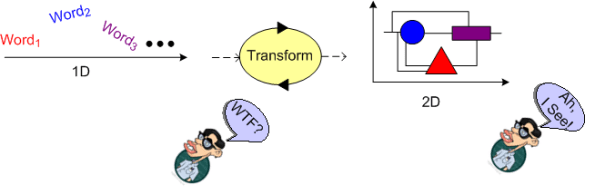

1D And 2D

In case you didn’t already know, I draw dorky diagrams often, really often. My motivation is to increase understanding by transforming a constricted, sequential, 1D word description of a new, interesting topic into a spatially loose, 2D visualization. To me, the resulting diagrams are not as important as the act of creating them. The iterative thinking and reflection required by the process anchors an understanding (which in fact may turn out to be wrong) in place. Maybe you should give it a try?

Stuck And Bother

I’m currently working on a project with a real, hard deadline. My team has to demonstrate a working, multi-million dollar radar in the near future to a potential deep-pocketed customer. As you can see below, the tower is up and the antenna is majestically perched on its pedestal. However, it ain’t spinning yet. Nor is it radiating energy, detecting signal returns, or extracting/estimating target information (range, speed, angle, size) buried in a mess of clutter and noise. But of course, we’re integrating the hardware and software and progressing toward the goal.

Lest you think otherwise, I’m no Ed Snowden and those pics aren’t classified. If you’re a radar nerd, you can even get this particular radar emblazoned on a god-awful t-shirt (like I did) from zazzle.com:

OK, enough of this bulldozarian levity. Let’s get serious again – oooh!

As a hard deadline approaches on a project, a perplexing question always comes to my mind:

How much time should I spend helping others who get “stuck”, versus getting the code I am responsible for writing done in time for the demo? And conversely, when I get stuck, how often should I “bother” someone who’s trying to get her own work done on time?

Of course, if you’re doing “agile”, situations like this never happen. In fact, I haven’t ever seen or heard a single agile big-wig address this thorny social issue. But reality being what it is, situations like this do indeed happen. I speculate that they happen more often than not – regardless of which methodology, practices, or tools you’re using. In fact, “agile” has the potential to amplify the dilemma by triggering the issue to surface on a per-sprint basis.

Save for the psychopaths among us, we all want to be altruistic simply because it’s the culturally right thing to do. But each one of us, although we’re sometimes loath to admit it, has been endowed by mother nature with “the selfish gene”. We want to serve ourselves and our families first. In addition, the situation is exacerbated by the fact that the vast majority of organizations unthinkingly have dumb-ass recognition and reward systems in place that celebrate individual performance over team performance – all the while assuming that the latter is a natural consequence of the former. Life can be a be-otch, no?

No More JAMB On My Toast

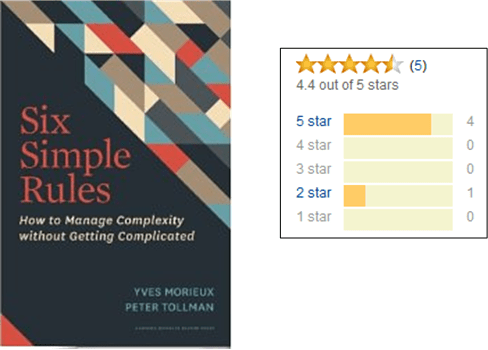

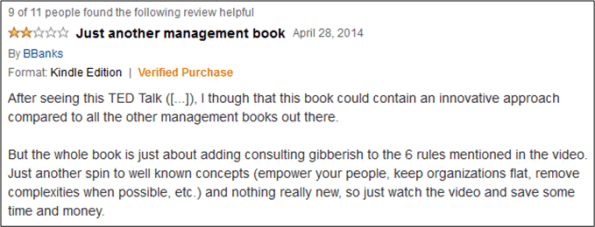

Amazon just sent me a recommendation for this book on the management of complexity:

Since four out of five reviewers gave it 5 stars, I scrolled down to peruse the reviews. As soon as I read the following JAMB review, I knew exactly what the reviewer was talking about. I can’t even begin to count how many boring, disappointing management books I’ve read over the years that fit the description. What I do know is that I don’t want to spend any more money or time on gobbledygook like this.

FUNGENOOP Programming

As you might know, the word “paradigm” and the concept of a “paradigm shift” were made insanely famous by Thomas Kuhn’s classic book: “The Structure Of Scientific Revolutions”. Mr. Kuhn’s premise is that science only advances via a progression of funerals. An old, inaccurate view of the world gets supplanted by a new, accurate view only when the powerfully entrenched supporters of the old view literally die off. The implication is that a paradigm shift is a binary, black and white event. The old stuff has been proven “wrong”, so you’re compelled to totally ditch it for the new “right” stuff – lest you be ostracized for being out of touch with reality.

In his recent talks on C++, Bjarne Stroustrup always sets aside a couple of minutes to go off on a mini-rant against “paradigm shifts”. Even though Einstein’s theory of relativity subsumes Newton’s classical physics, Newtonian physics is still extremely useful to practicing engineers. The discovery of multiplication/division did not make addition/subtraction useless. Likewise, in the programming world, the meteoric rise of the “object-oriented” programming style (and more recently, the “functional” programming style) did not render “procedural” and/or “generic” programming techniques totally useless.

The slide below is Bjarne’s cue to go off on his anti-paradigm rant.

If the system programming problem you’re trying to solve maps perfectly into a hierarchy of classes, then by all means use an OOP-centric language; perhaps Java, Smalltalk? If statefulness is not a natural part of your problem domain, then preclude its use by using something like Haskell. If you’re writing algorithmically simple but arcanely detailed device drivers that directly read/write hardware registers and FIFOs, then perhaps use procedural C. Otherwise, seriously think about using C++ to mix and match programming techniques in the most elegant and efficient way to attack your “multi-paradigm” system problem. FUNGENOOP (FUNctional + GENeric + Object Oriented + Procedural) programming rules!
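To make the mix-and-match idea a bit more concrete, here is a minimal sketch that uses all four styles in one small C++ program. Everything in it (`Shape`, `Square`, `Rect`, `sum_by`, `clamp_to_unit`, `fraction_of_budget`) is a made-up name for illustration only; it is not taken from Bjarne’s slides.

```cpp
#include <memory>
#include <vector>

// Procedural: a plain free function working on a raw value.
constexpr double clamp_to_unit(double x) {
    return x < 0.0 ? 0.0 : (x > 1.0 ? 1.0 : x);
}

// Object-oriented: a small class hierarchy behind a virtual interface.
struct Shape {
    virtual ~Shape() = default;
    virtual double area() const = 0;
};

struct Square : Shape {
    explicit Square(double s) : side(s) {}
    double area() const override { return side * side; }
    double side;
};

struct Rect : Shape {
    Rect(double w, double h) : width(w), height(h) {}
    double area() const override { return width * height; }
    double width;
    double height;
};

// Generic: works for any container and any callable that accepts its elements.
template <typename Container, typename Projection>
double sum_by(const Container& items, Projection project) {
    double total = 0.0;
    for (const auto& item : items) {
        total += project(item);
    }
    return total;
}

// Functional: behavior (a lambda) is passed around as a value.
inline double fraction_of_budget(double area_budget) {
    std::vector<std::unique_ptr<Shape>> shapes;
    shapes.push_back(std::unique_ptr<Shape>(new Square(2.0)));
    shapes.push_back(std::unique_ptr<Shape>(new Rect(3.0, 4.0)));
    const double total = sum_by(
        shapes, [](const std::unique_ptr<Shape>& s) { return s->area(); });
    return clamp_to_unit(total / area_budget);
}
```

Calling `fraction_of_budget(32.0)` sums a 2x2 square and a 3x4 rectangle (16 in total) and reports half the budget used. None of the four styles had to be thrown away to make room for the others.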

Context Is Everything

Right alongside POSIWID (the Purpose Of a System Is What It Does), one of my favorite sayings is CIE (Context Is Everything).

Given a problem to solve and a person (or team) designated to solve it, the person will seek a solution in accordance with the constraints imposed on him/her by the surrounding context. As the figure below shows, his/her perceptions and thoughts of the problem will be colored by the context. The problem itself will most likely be perceived differently as a function of the context (as signified in the picture by slightly different poop types). In one or more contexts, the problem might not even be perceived as a problem at all (it’s not a bug, it’s a feature)!

While writing this post, I suddenly realized that there is no difference between the words “context” and “culture” – but only in the context of this post. 😀

Please Help Me With The Narrative

This is another one of those BD00 posts where the dorky picture effortlessly drew itself, but an accompanying, plausible narrative did not reveal itself. These word clusters came to mind during the chaotic process of creation, but I gave up attempting to iteratively structure and weave them together into anything semi-sane: “role distinction”, “bottom-up vs. top-down evolution”, “dumb, uniform components vs. smart, diverse components”, “enduring vs. fragile foundation”, “excessive control”, “caste system”.

What words come to mind when you peruse the picture? Can you fuse a story line with the picture? Please help me with the narrative, dear reader. Secrete your creative hormones on the problem at hand. Revel in the possibility of making sense out of nonsense. Like Elton John’s music goes with Bernie Taupin’s words, we can have your words go with BD00’s dorky picture.

Of course, like the one or two other posts similar to this that I’ve hatched in the past, I don’t expect any takers.

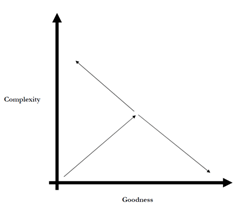

On Complexity And Goodness

While browsing around on Amazon.com for more books to read on simplicity/complexity, the pleasant memory of reading Dan Ward’s terrific little book, “The Simplicity Cycle”, somehow popped into my head. Because it has been 10 years since I read it, I decided to dig it up and re-read it.

In his little gem, Dan explores the relationships between complexity, goodness, and time. He starts out by showing this little graph, and then he spends the rest of the book eloquently explaining movements through the complexity-goodness space.

First things first. Let’s look at Mr. Ward’s parsimonious definitions of system complexity and goodness:

Complexity: Consisting of interconnected parts. Lots of interconnected parts equal high degree of complexity. Few interconnected parts equal a low degree of complexity.

Goodness: Operational functionality or utility or understandability or design maturity or beauty.

Granted, these definitions are just about as abstract as we can imagine, but (always) remember that context is everything:

The number 100 is intrinsically neither large nor small. 100 interconnected parts is a lot if we’re talking about a pencil sharpener, but few if we’re talking about a jet aircraft. – Dan Ward

When we start designing a system, we have no parts, no complexity (save for that in our heads), no goodness. Thus, we begin our effort close to the origin in the complexity-goodness space.

As we iteratively design/build our system, we conceive of parts and we connect them together, adding more parts as we continuously discover, learn, employ our knowledge of, and apply our design expertise to the problem at hand. Thus, we start moving out from the origin, increasing the complexity and (hopefully!) goodness of our baby as we go. The skills we apply at this stage of development are “learning and genesis”.

At a certain point in time during our effort, we hit a wall. The “increasing complexity increases goodness” relationship insidiously morphs into an “increasing complexity decreases goodness” relationship. We start veering off to the left in the complexity-goodness space:

Many designers, perhaps most, don’t realize they’ve rotated the vector to the left. We continue adding complexity without realizing we’re decreasing goodness.

We can often justify adding new parts independently, but each exists within the context of a larger system. We need to take a system level perspective when determining whether a component increases or decreases goodness. – Dan Ward

Once we hit the invisible but surely present wall, the only way to further increase goodness is to somehow start reducing complexity. We can do this by putting our “learning and genesis” skills on the shelf and switching over to our vastly underutilized “unlearning and synthesis” skills. Instead of creating and adding new parts, we need to reduce the part count by integrating some of the parts and discarding others that aren’t pulling their weight.

Perfection is achieved not when there is nothing more to add, but rather when there is nothing more to take away. – Antoine de Saint-Exupéry

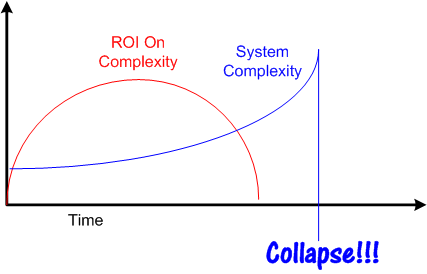

Dan’s explanation of the complexity-goodness dynamic is consistent with Joseph Tainter’s account in “The Collapse Of Complex Societies”. Mr. Tainter’s thesis is that as societies grow, they prosper by investing in, and adding layer upon layer of, complexity to the system. However, there is an often unseen downside at work during the process. Over time, the Return On Investment (ROI) in complexity starts to decrease in accordance with the law of diminishing returns. Eventually, further investment depletes the treasury while injecting more and more complexity into the system without adding commensurate “goodness”. The society becomes vulnerable to a “black swan” event, and when the swan paddles onto the scene, there are not enough resources left to recover from the calamity. It’s collapse city.

The only way out of the runaway increasing complexity dilemma is for the system’s stewards to conscientiously start reducing the tangled mess of complexity: integrating overlapping parts, fusing tightly coupled structures, and removing useless or no-longer-useful elements. However, since the biggest beneficiaries of increasing complexity are the stewards of the system themselves, the likelihood of an intervention taking place before a black swan’s arrival on the scene is low.

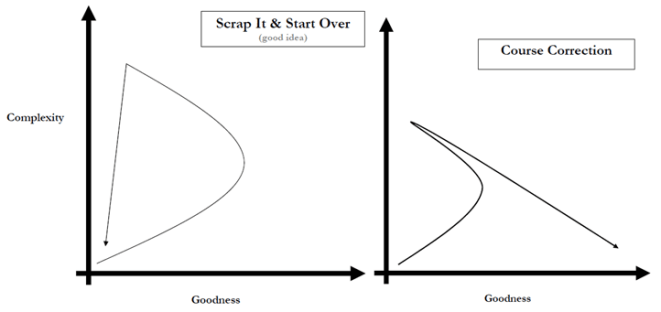

At the end of his book, Mr. Ward presents a few patterns of activity in the complexity-goodness space, two of which align with Mr. Tainter’s theory. Perhaps the one on the left should be renamed “Collapse”?

So, what does all this made up BD00 complexity-goodness-collapse crap mean to me in my little world (and perhaps you)? In my work as a software developer, when my intuition starts whispering in my ear that my architecture/sub-designs/code are starting to exceed my capacity to understand the product, I fight the urge to ignore it. I listen to that voice and do my best to suppress the mighty, culturally inculcated urge to over-learn, over-create, and over-complexify. I grudgingly bench my “learning and genesis” skills and put my “unlearning and synthesis” skills in the game.

An Intimate Act Of Communication

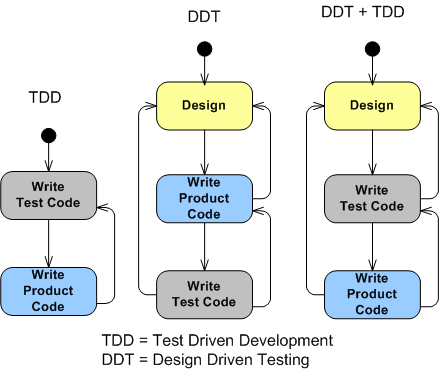

Take a look at these three state machine models for intimately developing a chunk of functionally cohesive software:

The key distinguishing feature of the two machines on the right from the pure TDD machine on the left is that some level of design is the initial driver, and informer, of the subsequent coding/testing development process. Note that all three methods contain feedback transitions triggered by “learning as we go” events.

In my understanding of TDD, no time is “wasted” upfront thinking about, or capturing, design data at any level of granularity. The pithy mandate from the TDD gods is “red-green-refactor” or die. The design bubbles up solely from the testing/coding cycle in a zen-like flow of intelligence.
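For anyone who hasn’t watched the cycle up close, here is a minimal sketch of one lap around red-green-refactor. It assumes a hypothetical `is_leap_year` function and uses plain `assert` in place of a real unit test framework; neither comes from the book mentioned in this post.

```cpp
#include <cassert>

// Step 1 (red): only the signature exists when the test is written, so the
// test below cannot pass (or even link) until step 2 is done.
constexpr bool is_leap_year(int year);

// The test comes first and is the only "design document" in pure TDD.
inline void test_is_leap_year() {
    assert(is_leap_year(2024));   // divisible by 4
    assert(!is_leap_year(2023));  // not divisible by 4
    assert(!is_leap_year(1900));  // century not divisible by 400
    assert(is_leap_year(2000));   // century divisible by 400
}

// Step 2 (green): the simplest implementation that makes the test pass.
// Step 3 (refactor): tidy it up while re-running the test after every change.
constexpr bool is_leap_year(int year) {
    return (year % 4 == 0 && year % 100 != 0) || (year % 400 == 0);
}
```

In the DDT flavor, the rule about centuries would be jotted down in some design artifact before either the test or the function got written. The code could well end up identical; what differs is where the thinking happens.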

Personally, I work in accordance with the DDT model. How about you? For newbies who were solely taught, and only know how to do, TDD, have you ever thought about trying the “traditional” DDT way?

BTW, I learned, tried, and then rejected, TDD as my personal process from “Unit Test Frameworks”. Except for the parts on TDD, it’s a terrific book for learning about unit testing.

Since “design” is an intimately personal process, whatever works for you is fine by BD00. But just because it’s “newer” and has a lot of rabid fan-boys promoting it (including some big and famous consultants), don’t auto-assume TDD is da bomb.

Design is an intimate act of communication between the creator and the created. – Unknown

Two Thousand Six Hundred And Sixty-Three

Check out the impressive number of media files that I’ve uploaded to the WordPress web site during my unimpressive five-year blogging career:

I briefly considered the possibility of creating an unsellable 134-page coffee table book of repulsive BD00 images, but I don’t think there is a single legal image in the bunch. 😦

Stopping The Spew!

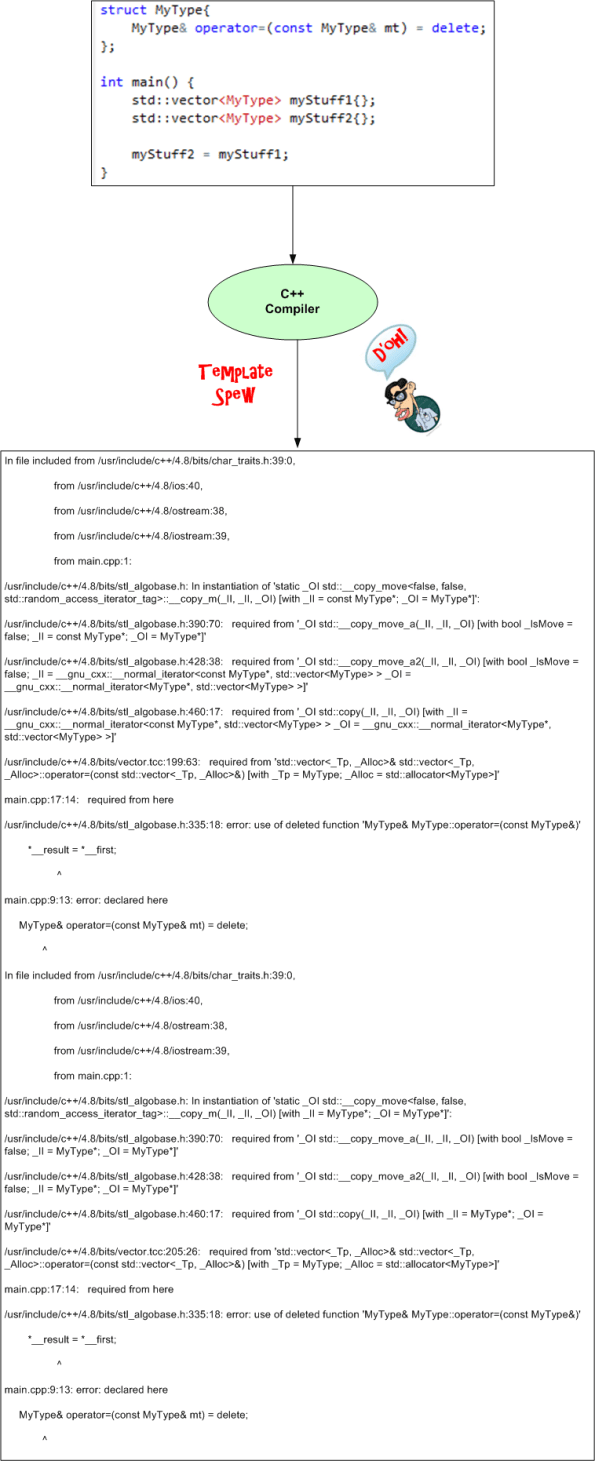

Every C++ programmer has experienced at least one, and most probably many, “Template Spew” (TS) moments. You know you’ve triggered a TS moment when, just after hitting the compile button on a program the compiler deems TS-worthy, you helplessly watch an undecipherable avalanche of error messages zoom down your screen at the speed of light. It is rumored that some novices who’ve experienced TS for the very first time have instantaneously entered a permanent catatonic state of unresponsiveness. It’s even said that some poor souls have swan-dived off of bridges to untimely deaths after having seen such carnage.

Note: The graphic image that follows may be highly disturbing. You may want to stop reading this post at this point and continue to waste company time by surfing over to facebook, reddit, etc.

TS occurs when one tries to use a class template or function template with a template parameter type that doesn’t provide the behavior “assumed” by the class or function. TS is such a scourge in the C++ world that guru Scott Meyers dedicates a whole item in “Effective STL”, number 49, to handling the trauma associated with deciphering TS gobbledygook.

For those who’ve never seen TS output, here is a woefully contrived example:

The above example doesn’t do justice to the havoc the mighty TS dragon can wreak on the mind because the problem (std::vector<T> requires its template arg to provide a copy assignment function definition) can actually be inferred from a couple of key lines in the sea of TS text.
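Screenshot aside, here is a minimal sketch of the kind of code that wakes the dragon. The `NoAssign` type and `make_items` function are hypothetical, and the offending line is commented out so that the snippet compiles. One caveat: with a C++11 or later compiler, `std::vector` only demands assignment from its element type for operations that shuffle existing elements around (such as `erase`), so `push_back` alone stays quiet.

```cpp
#include <type_traits>
#include <vector>

// Hypothetical element type: the const member causes the compiler to
// delete the implicitly generated assignment operators.
struct NoAssign {
    explicit NoAssign(int v) : value(v) {}
    const int value;
};

inline std::vector<NoAssign> make_items() {
    std::vector<NoAssign> items;
    items.push_back(NoAssign(1));  // fine: only needs copy/move construction
    items.push_back(NoAssign(2));
    // items.erase(items.begin());  // uncomment to provoke the spew: erase
    //                              // shifts the remaining elements by
    //                              // assigning them, which NoAssign forbids
    return items;
}
```

The actual error (no usable `operator=` for `NoAssign`) is a one-liner in spirit, but the compiler reports it from deep inside the library’s implementation, dragging every enclosing template instantiation along for the ride.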

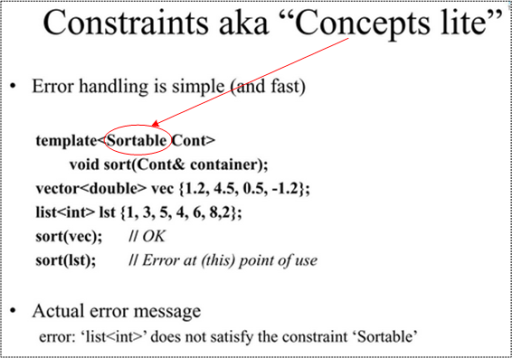

Ok, enough doom and gloom. Fear not, because help is on the way in the form of “concepts lite”, which was proposed for C++14 but ultimately arrived in C++20. A “concept” is simply a predicate evaluated on a template argument at compile time. If you employ them while writing a template, you inform the compiler of what kind(s) of behavior your template requires from its argument(s). As a use case illustration, behold this slide from Bjarne Stroustrup:

The “concept” being highlighted in this example is “Sortable”. Once the compiler knows that the sort<T> function requires its template argument to be “Sortable”, it checks that the argument type is indeed sortable. If not, the error message it emits will be something short, sweet, and to the point.
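The “predicate on a template argument” idea can be approximated without language-level concepts. The sketch below is not Bjarne’s slide code: it assumes a hand-rolled trait (`is_sortable_element`) and a wrapper (`checked_sort`), both hypothetical names, and uses a plain `static_assert` so that it builds with any C++11 or later compiler. Concepts as eventually standardized in C++20 express the same requirement directly on the template declaration.

```cpp
#include <algorithm>
#include <type_traits>
#include <utility>
#include <vector>

// Hypothetical stand-in for a "Sortable"-style predicate: true when two
// const T values can be compared with operator<.
template <typename T, typename = void>
struct is_sortable_element : std::false_type {};

template <typename T>
struct is_sortable_element<
    T, decltype(void(std::declval<const T&>() < std::declval<const T&>()))>
    : std::true_type {};

// The requirement is stated up front, so a violation is reported with one
// readable message at the top of the error output.
template <typename T>
void checked_sort(std::vector<T>& values) {
    static_assert(is_sortable_element<T>::value,
                  "checked_sort<T>: T must support operator<");
    std::sort(values.begin(), values.end());
}

// A type with no operator<, for trying out the failure case.
struct Opaque {};
```

Calling `checked_sort` on a `std::vector<Opaque>` puts the `static_assert` message first in the compiler output. The emulation is leaky, though: the compiler may still go on to complain about the `std::sort` call inside the body, whereas a real concept rejects the call before the body is ever instantiated.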

The concept of “concepts” has a long and sordid history. A lot of work was performed on the feature during the development of the C++11 specification. However, according to Mr. Stroustrup, the result was overly complicated (concept maps, new syntax, scope & lookup issues). Thus, the C++ standards committee decided to controversially sh*tcan the work:

C++11 attempt at concepts: 70 pages of description, 130 concepts – we blew it!

After regrouping and getting their act together, the committee whittled down the number of pages and concepts to something manageable enough (approximately 7 pages and 13 concepts) to propose for C++14. Hence, the “concepts lite” label. Hopefully, it won’t be long before the TS dragon is relegated back to the dungeon from whence it came.