Archive

Stacks And Icebergs

The picture below attempts to communicate the explosive growth in software infrastructure complexity that has taken place over the past few decades. The growth in the “stack” has been driven by the need for bigger, more capable, and more complex software-intensive systems required to solve commensurately growing complex social problems.

In the beginning there was relatively simple, “fixed” function hardware circuitry. Then, along came the programmable CPU (Central Processing Unit). Next, the need to program these CPUs to do “good things” led to the creation of “application software”. Moving forward, “operating system software” entered the picture to separate the arcane details and complexity of controlling the hardware from the application-specific problem solving software. Next, in order to keep up with the pace of growing application software size, the capability for application designers to spawn application tasks (same address space) and processes (separate address spaces) was designed into the operating system software. As the need to support geographically dispersed, distributed, and heterogeneous systems appeared, “communication middleware software” was developed. On and on we go as the arrow of time lurches forward.

As hardware complexity and capability continue to grow rapidly, the complexity and size of the software stack also grow rapidly in an attempt to keep pace. The ability of the human mind (which takes eons to evolve compared to the rate of technology change) to comprehend and manage this complexity has been overwhelmed by the pace of advancement in hardware and software infrastructure technology.

Thus, in order to appear “infallibly in control” and to avoid the hard work of “understanding” (which requires diligent study and knowledge acquisition), bozo managers tend to trivialize the development of software-intensive systems. To self-medicate against the pain of personal growth and development, these jokers tend to think of computer systems as simple black boxes. They camouflage their incompetence by pretending to have a “high level” of understanding. Because of this aversion to real work, these dudes have no problem committing their corpocracies to ridiculous and unattainable schedules in order to win “the business”. Have you ever heard the phrase “aggressive schedule”?

“You have to know a lot to be of help. It’s slow and tedious. You don’t have to know much to cause harm. It’s fast and instinctive.” – Rudolph Starkermann

Metrics Dilemma

“Not everything that counts can be counted. Not everything that can be counted counts.” – Albert Einstein.

When I started my current programming project, I decided to track and publicly record (via the internal company Wiki) the program’s source code growth. The list below shows the historical rise in SLOC (Source Lines Of Code) up until 10/08/09.

10/08/09: Files= 33, Classes=12, funcs=96, Lines Code=1890

10/07/09: Files= 31, Classes=12, funcs=89, Lines Code=1774

10/06/09: Files= 33, Classes=14, funcs=86, Lines Code=1683

10/01/09: Files= 33, Classes=14, funcs=83, Lines Code=1627

09/30/09: Files= 31, Classes=14, funcs=74, Lines Code=1240

09/29/09: Files= 28, Classes=13, funcs=67, Lines Code=1112

09/28/09: Files= 28, Classes=13, funcs=66, Lines Code=1004

09/25/09: Files= 28, Classes=14, funcs=57, Lines Code=847

09/24/09: Files= 28, Classes=14, funcs=53, Lines Code=780

09/23/09: Files= 28, Classes=14, funcs=50, Lines Code=728

09/22/09: Files= 28, Classes=14, funcs=48, Lines Code=652

09/21/09: Files= 26, Classes=10, funcs=35, Lines Code=536

09/15/09: Files= 26, Classes=10, funcs=29, Lines Code=398

The fact that I know that I’m tracking and publicizing SLOC growth is having a strange and negative effect on the way that I’m writing the code. As I iteratively add code, test it, and reflect on its low level physical design, I’m finding that I’m resisting the desire to remove code that I discover (after-the-fact) is not needed. I’m also tending to suppress the desire to replace unnecessarily bloated code blocks with more efficient segments comprised of fewer lines of code.

Hmm, so what’s happening here? I think that my subconscious mind is telling me two things:

- A drop in SLOC size from one day to the next is a bad thing – it could be perceived by corpo STSJs (Status Takers and Schedule Jockeys) as negative progress.

- If I spend time refactoring the existing code to enhance future maintainability and reduce size, it’ll put me behind schedule because that time could be better spent adding new code.

The moral of this story is that the “best practice” of tracking metrics, like everything in life, has two sides.

No BS, From BS

You certainly know what the first occurrence of “BS” in the title of this blarticle means, but the second occurrence stands for “Bjarne Stroustrup”. BS, the second one of course, is the original creator of the C++ programming language and one of my “mentors from afar” (it’s a good idea to latch on to mentors from afar because unless you’re extremely lucky, there’s a paucity of mentors “a-near”).

I just finished reading “The Design And Evolution of C++” by BS. If you do a lot of C++ programming, then this book is a must-read. BS gives a deeply personal account of the development of the C++ language from the very first time he realized that he needed a new programming tool in 1979, to the start of the formal standardization process in 1994. BS recounts the BS (the first one, of course) that he slogged through, and the thinking processes that he used, while deciding upon which features to include in C++ and which ones to exclude. The technical details and chronology of development of C++ are interesting, but the book is also filled with insightful and sage advice. Here’s a sampling of passages that rang my bell:

“Language design is not just design from first principles, but an art that requires experience, experiments, and sound engineering trade-offs.”

“Many C++ design decisions have their roots in my dislike for forcing people to do things in some particular way. In history, some of the worst disasters have been caused by idealists trying to force people into ‘doing what is good for them’.”

“Had it not been for the insights of members of Bell Labs, the insulation from political nonsense, the design of C++ would have been compromised by fashions, special interest groups and its implementation bogged down in a bureaucratic quagmire.”

“You don’t get a useful language by accepting every feature that makes life better for someone.”

“Theory itself is never sufficient justification for adding or removing a feature.”

“Standardization before genuine experience has been gained is abhorrent.”

“I find it more painful to listen to complaints from users than to listen to complaints from language lawyers.”

“The C++ Programming Language (book) was written with the fierce determination not to preach any particular technique.”

“No programming language can be understood by considering principles and generalizations only; concrete examples are essential. However, looking at the details without an overall picture to fit them into is a way of getting seriously lost.”

For an ivory tower trained Ph.D., BS is pretty down to earth and empathic toward his customers/users, no? Hopefully, you can now understand why the title of this blarticle is what it is.

Don’t Be Late!

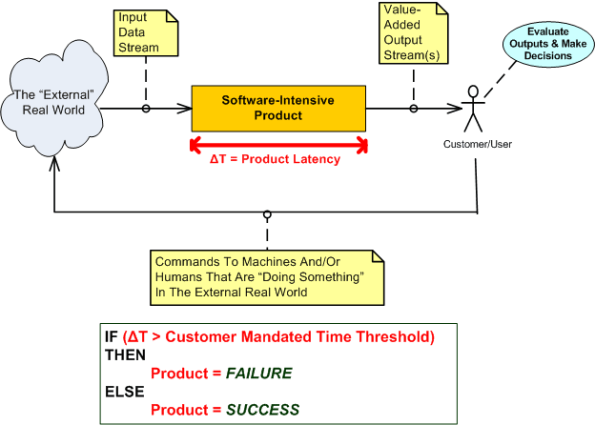

The software-intensive products that I get paid to specify, design, build, and test involve the real-time processing of continuous streams of raw input data samples. The sample streams are “scrubbed and crunched” in order to generate higher-level, human interpretable, value-added, output information and event streams. As the external stream flows into the product, some samples are discarded because of noise and others are manipulated with a combination of standard and proprietary mathematical algorithms. Important events are detected by monitoring various characteristics of the intermediate and final output streams. All this processing must take place fast enough so that the input stream rate doesn’t overwhelm the rate at which outputs can be produced by the product; the product must operate in “real-time”.

The human users on the output side of our products need to be able to evaluate the output information quickly in order to make important and timely command and control decisions that affect the physical well-being of hundreds of people. Thus, latency is one of the most important performance metrics used by our customers to evaluate the acceptability of our products. Forget about bells and whistles, exotic features, and entertaining graphical interfaces, we’re talking serious stuff here. Accuracy and timeliness of output are king.

Latency (or equivalently, response time) is the time it takes for an input sample or group of related samples to traverse the transformational processing path from the point of entry to the point of egress through the software-dominated product “box” or set of interconnected boxes. Low latency is good and high latency is bad. If product latency exceeds a time threshold that makes the output effectively unusable to our customers, the product is unacceptable. In some applications, failure of the absolute worst case latency to stay below the threshold can be the deal breaker (hard real time), and in other applications the average latency must stay within the threshold xx percent of the time, where xx is often greater than 95 (soft real time).
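To make the hard versus soft distinction concrete, here’s a minimal C++ sketch of how the two acceptance checks might be coded against a batch of measured latencies. The function names, the millisecond units, and the idea of treating each measurement as one observation are my assumptions for illustration; if your requirement is written against windowed averages, feed the averages in instead.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Hard real time: even the single worst-case latency must stay below the threshold.
// (Hypothetical helper; names and units are illustrative only.)
bool meetsHardDeadline(const std::vector<double>& latenciesMs, double thresholdMs) {
    assert(!latenciesMs.empty());
    return *std::max_element(latenciesMs.begin(), latenciesMs.end()) < thresholdMs;
}

// Soft real time: at least requiredFraction (e.g. 0.95) of the measured
// latencies must stay below the threshold.
bool meetsSoftDeadline(const std::vector<double>& latenciesMs,
                       double thresholdMs,
                       double requiredFraction) {
    assert(!latenciesMs.empty());
    const auto withinBudget =
        std::count_if(latenciesMs.begin(), latenciesMs.end(),
                      [thresholdMs](double latency) { return latency < thresholdMs; });
    return static_cast<double>(withinBudget) >=
           requiredFraction * static_cast<double>(latenciesMs.size());
}
```

With ten measurements where nine are under a 10 ms threshold and one is a 50 ms outlier, the hard check fails while a soft check at 80 percent passes. Same data, different verdicts.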

Latency is one of those funky, hard-to-measure-until-the-product-is-done, “non-functional” requirements. If you don’t respect its power to make or break your wonderful product from the start of design throughout the entire development effort, you’ll get what you deserve after all the time and money has been spent – lots of rework, stress, and angst. So, if you work on real-time systems, don’t be late!

Complexity Explosion

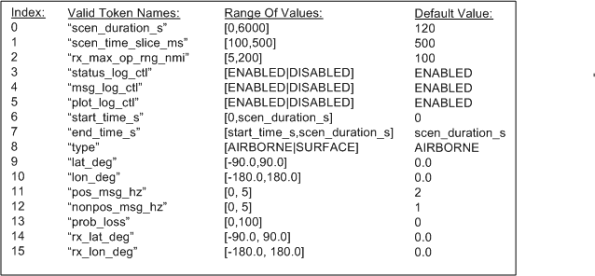

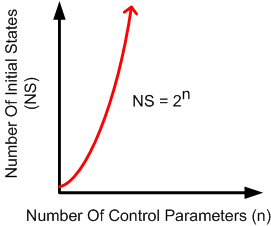

I’m in the process of writing a C++ program that will synthesize and inject simulated inputs into an algorithm that we need to test for viability before including it in one of our products. I’ve got over 1000 lines of code written so far, and about another 1000 to go before I can actually use it to run tests on the algorithm. Currently, the program requires 16 multi-valued control inputs to be specified by the user prior to running the simulation. The inputs define the characteristics of the simulated input stream that will be synthesized.

Even though most of the control parameters are multi-valued, assume that they are all binary-valued and can be set to either “ON” or “OFF”. It then follows that there are 2**16 = 65536 possible starting program states. When (not if) I need to add another control parameter to increase functionality, the number of states will soar to 131072, if, and only if, the new parameter is not multi-valued. D’oh! OMG! Holy shee-ite!
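The arithmetic is just a product of the number of values each control parameter can take. Here’s a small C++ sketch of that count; the function name is made up for illustration, and it makes no attempt to guard against overflow for very large parameter sets.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Number of distinct starting states: the product of the number of values
// each control parameter can take. (Hypothetical helper; no overflow check.)
std::uint64_t countStartingStates(const std::vector<std::uint64_t>& valuesPerParameter) {
    std::uint64_t states = 1;
    for (std::uint64_t values : valuesPerParameter) {
        assert(values > 0);  // a parameter with zero legal values makes no sense
        states *= values;
    }
    return states;
}
```

Sixteen binary parameters give 65536 states and seventeen give 131072. Make that seventeenth parameter three-valued instead and the count jumps to 196608, which is why the multi-valued reality is so much worse than the binary simplification.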

Is it possible to set up the program, run the program, and evaluate its generated outputs against its inputs for each of these initial input scenarios? Never say never, but it’s not economically viable. Even if the setup and run activities can be “automated”, manually calculating the expected outputs and comparing the actual outputs against the expected outputs for each starting state is impractical. I’d need to write another program to test the program, and then write another program to test the program that tests the program that tests the first program. This recursion would go on indefinitely and errors can be made at any step of the way. Bummer.

No matter what the “experts” who don’t have to do the work themselves have to say, in programming situations like this, you can’t “automate” away the need for human thought and decision making. Based on knowledge, experience, and more importantly, fallible intuition, I have to judiciously select a handful of the 65536 starting states to run. I then have to manually calculate the outputs for each of these scenarios, which is impractical and error-prone because the state machine algorithm that processes the inputs is relatively dense and complicated itself. What I’m planning to do is visually and qualitatively scan the recorded outputs of each program run for a select few of the 65536 states that I “feel” are important. I’ll intuitively analyze the results for anomalies in relation to the 16 chosen control input values.

Got a better way that’s “practical”? I’m all ears, except for a big nose and a bald head.

“Nothing is impossible for the man who doesn’t have to do it himself.” – A. H. Weiler

Four Or Two?

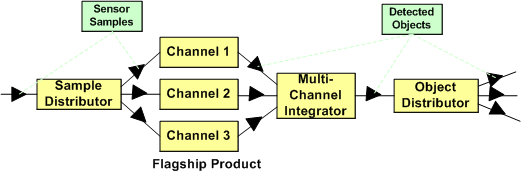

Assume that the figure below represents the software architecture within one of your flagship products. Also assume that each of the 6 software components is composed of N non-trivial SLOC (Source Lines Of Code) so that the total size of the system is 6N SLOC. For the third assumption, pretend that a new, long term, adjacent market opens up for a “channel 1” subset of your product.

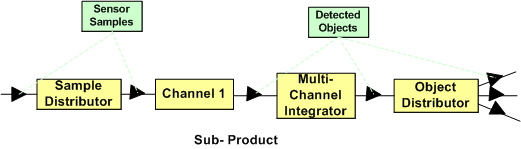

To address this new market’s need and increase your revenue without a ton of investment, you can choose to instantiate the new product from your flagship. As shown in the figure below, if you do that, you’ve reduced the complexity of your new product addition by 1/3 (6N to 4N SLOC) and hence, decreased the ongoing maintenance cost by at least that much (since maintainability is a non-linear function of software size).

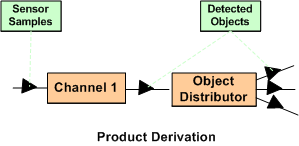

Nevertheless, your new product offering has two unneeded software components in its design: the “Sample Distributor” and the “Multi-Channel Integrator”. Thus, if as the diagram below shows, you decide to cut out the remaining fat (the most reliable part in a system is the one that’s not there – because it’s not needed), you’ll deflate your long term software maintenance costs even further. Your new product portfolio addition would be 1/3 the original size (6N to 2N SLOC) of your flagship product.

If you had the authority, which approach would you allocate your resources to? Would you leave it to chance? Is the answer a no brainer, or not? What factors would prevent you from choosing the simpler two component solution over the four component solution? What architecture would your new customers prefer? What would your competitors do?

Sum Ting Wong

- SYS = Systems

- SW = Software

- HW = Hardware

When the majority of SYS engineers in an org are constantly asking the HW and SW engineers how the product works, my Chinese friend would say “sum ting (is) wong”. Since they’re the “domain experts”, the SYS engineers supposedly designed and recorded the product blueprints before the box was built. They also supposedly verified that what was built is what was specified. To be fair, if no useful blueprints exist, then 2nd generation SYS engineers who are assigned by org corpocrats to maintain the product can’t be blamed for not understanding how the product works. These poor dudes have to deal with the inherited mess left behind by the sloppy and undisciplined first generation of geniuses who’ve moved on to cushy “staff” and “management” positions.

Leadership is exploring new ground while leaving trail markers for those who follow. Failing to demand that first generation product engineers leave breadcrumbs on the trail is a massive failure of leadership.

If the SYS engineers don’t know how the product works at the “white box” level of detail, then they won’t be able to efficiently solve system performance problems, or conceive of and propose continuous improvements. The net effect is that the mysterious “black box” product owns them instead of vice versa. Like an unloved child, a neglected product is perpetually unruly. It becomes a serial misbehaver and a constant source of problems for its parents; leaving them confounded and confused when problems manifest in the field.

A corpocracy whose leaders are so disconnected from the day-to-day work in the bowels of the boiler room that they don’t demand system engineering ownership of products gets what it deserves: crappy products and deteriorating financial performance.

My “Status” As Of 09-27-09

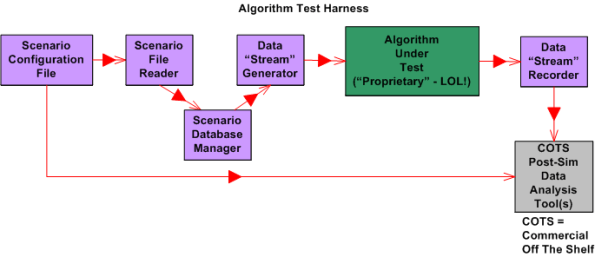

I recently finished a 2 month effort discovering, developing, and recording a state machine algorithm that produces a stream of integrated output “target” reports from a continuous stream of discrete, raw input message fragments. It’s not rocket science, but because of the complexity of the algorithm (the devil’s always in the details), a decision was made to emulate this proprietary algorithm in multiple, simulated external environmental scenarios. The purpose of the emulation-plus-simulation project is to work out the (inevitable) kinks in the algorithm design prior to integrating the logic into an existing product and foisting it on unsuspecting customers :^) .

The “bent” SysML diagram below shows the major “blocks” in the simulator design. Since there are no custom hardware components in the system, except for the scenario configuration file, every SysML block represents a software “class”.

Upon launch, the simulator:

- Reads in a simple, flat, ASCII scenario configuration file that specifies the attributes of targets operating in the simulated external environment. Each attribute is defined in terms of a <name=value> token pair.

- Generates a simulated stream of multiplexed input messages emitted by the target constellation.

- Demultiplexes and processes the input stream in accordance with the state machine algorithm specification to formulate output target reports.

- Records the algorithm output target report stream for post-simulation analysis via Commercial Off The Shelf (COTS) tools like Excel and MATLAB.
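For the curious, the first step in the list above might look something like the C++ sketch below. This is not the actual “Scenario File Reader” code. It assumes the <name=value> tokens are separated by whitespace, that names are unique, and that neither names nor values contain spaces; the function names and the attribute names in the usage note are hypothetical.

```cpp
#include <istream>
#include <map>
#include <sstream>
#include <stdexcept>
#include <string>

// Reads whitespace-separated name=value tokens from a stream into a map.
// Assumptions (not from the real simulator): unique names, no embedded spaces.
std::map<std::string, std::string> parseScenario(std::istream& in) {
    std::map<std::string, std::string> attributes;
    std::string token;
    while (in >> token) {
        const std::string::size_type eq = token.find('=');
        if (eq == std::string::npos || eq == 0) {
            // No '=' at all, or nothing before it: reject the token.
            throw std::runtime_error("malformed token: " + token);
        }
        attributes[token.substr(0, eq)] = token.substr(eq + 1);
    }
    return attributes;
}

// Convenience wrapper for parsing text that is already in memory.
std::map<std::string, std::string> parseScenarioText(const std::string& text) {
    std::istringstream in(text);
    return parseScenario(in);
}
```

Calling `parseScenarioText("targetCount=3 speed=250")` yields a two-entry map, and a token without an `=` throws instead of being silently ignored. For a real file, pass a `std::ifstream` to `parseScenario`.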

I’m currently in the process of writing the C++ code for all of the components except the COTS tools, of course. On Friday, I finished writing, unit testing, and integration testing the “Simulation Initialization” functionality (use case?) of the simulator. Yahoo!

The diagram below zooms in on the front end of the simulator that I’ve finished (100% of course) developing; the “Scenario File Reader” class, and the portion of the in-memory “Scenario Database Manager” class that stores the scenario configuration data in the two sub-databases.

The next step in my evil plan (moo ha ha!) is to code up, test, and integrate the much-more-interesting “Data Stream Generator” class into the simulator without breaking any of the crappy code that has already been written. 🙂

If someone (anyone?) actually reads this boring blog and is interested in following my progress until the project gets finished or canceled, then give me a shoutout. I might post another status update when I get the “Data Stream Generator” class coded, tested, and integrated.

What’s your current status?

Surveillance Systems

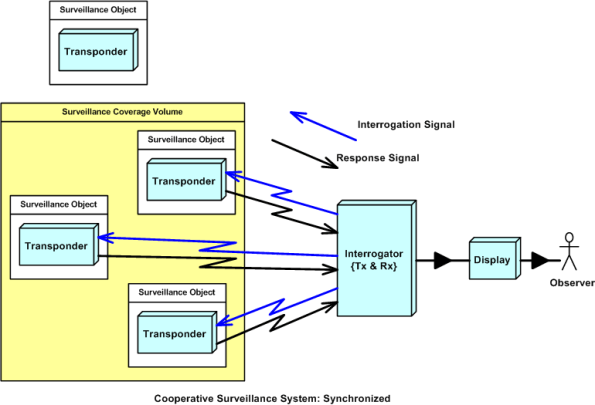

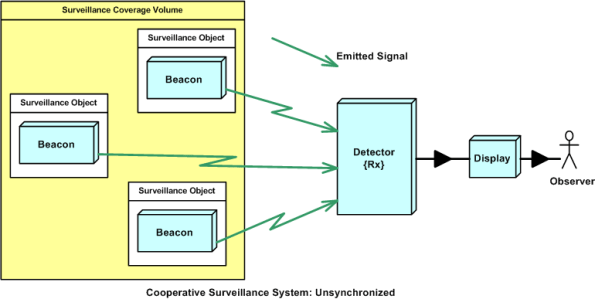

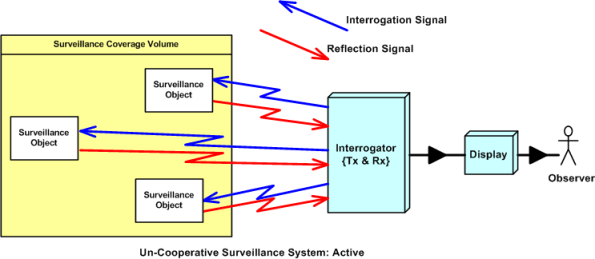

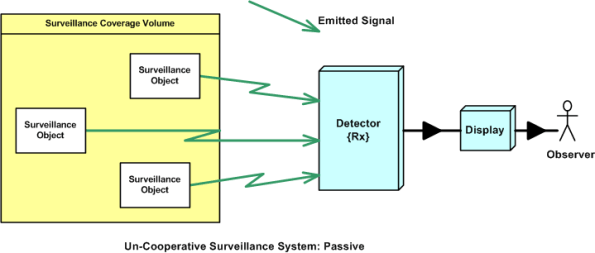

The purpose of a surveillance system is to detect and track Objects Of Interest (OOI) that are present within a spatial volume covered by a sensing device. Surveillance systems can be classified into four types:

- Cooperative and synchronized

- Cooperative and unsynchronized

- Un-cooperative and active

- Un-cooperative and passive

In cooperative systems, the OOI are equipped with a transponder device that voluntarily “cooperates” with the sensor. The sensor continuously probes the surveillance volume by transmitting an interrogation signal that is recognized by the OOI transponders. When a transponder detects an interrogation, it transmits a response signal back to the interrogator. The response may contain identification and other information of interest to the interrogator. Air traffic control radar systems are examples of cooperative, synchronized surveillance systems.

In a cooperative and unsynchronized surveillance system, the sensor doesn’t actively probe the surveillance volume. It passively waits for signal emissions from beacon-equipped OOI. Cooperative and unsynchronized surveillance systems are less costly than cooperative and synchronized systems, but because the OOI beacon emissions aren’t synchronized by an interrogator, their signals can garble each other and make it difficult for the sensor detector to keep them separated.

In uncooperative surveillance systems, the OOI aren’t equipped with any man made devices designated to work in conjunction with a remotely located sensor. The OOI are usually trying to evade detection and/or the sensor is trying to detect the OOI without letting the OOI know that they are under surveillance.

In an active, uncooperative surveillance system, the sensor’s radiated signal is specially designed to reflect off an OOI. The time of detection of the reflected signal can be used to determine the position and speed of an OOI. Military radar and sonar equipment are good examples of active, uncooperative surveillance systems.
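As a rough illustration of the timing idea, here’s a C++ sketch using the textbook echo-ranging relation: the signal travels out and back, so range is the propagation speed times the round-trip delay, divided by two. The function names are mine, and the radial speed estimate from two successive range measurements is a simplification; it’s a sketch of the principle, not a description of how any particular radar or sonar does it.

```cpp
#include <cassert>

// Speed of light in vacuum, in meters per second (radar case).
constexpr double kSpeedOfLightMps = 299792458.0;

// Range from round-trip delay. The signal covers the distance twice
// (out and back), hence the division by two.
constexpr double rangeFromRoundTrip(double roundTripSeconds, double propagationSpeedMps) {
    return propagationSpeedMps * roundTripSeconds / 2.0;
}

// Radial speed from two range measurements taken secondsBetween apart.
// Positive means the OOI is moving away from the sensor.
double radialSpeed(double firstRangeM, double secondRangeM, double secondsBetween) {
    assert(secondsBetween > 0.0);
    return (secondRangeM - firstRangeM) / secondsBetween;
}
```

For a sonar-like case with an assumed propagation speed of 1500 m/s, a 2 second round trip puts the OOI 1500 m away. Note that range alone only places the OOI on a sphere around the sensor; a full position also needs the direction the echo came from.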

In a passive, uncooperative surveillance system, the sensor is designed to detect some type of energy signal (e.g. heat, radioactivity, sound) that is naturally emitted or reflected (e.g. light) by an OOI. Since there is no man made transmitter device in the system design, the detection range, and hence coverage volume, is much smaller than that of any of the other types of surveillance systems.

The dorky classification system presented in this blarticle is by no means formal, or official, or standardized. I just made it up out of the blue, so don’t believe a word that I said.

Quantum Progress

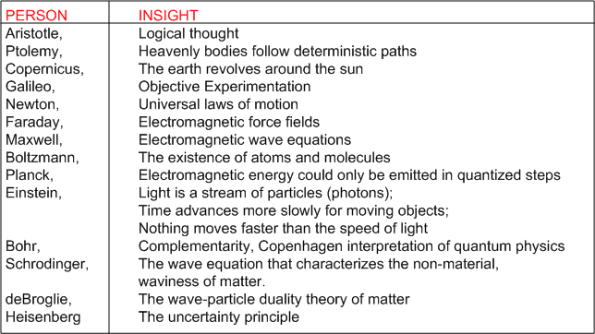

Kuttner and Rosenblum’s “Quantum Enigma” is the best book on quantum physics that I’ve read to date. The table below is my attempt to chronologically summarize some of the well known, brilliant people that led us to where we are today. I found it fascinating that most of the people developed their insights as a result of noticing and pursuing nagging “anomalies” in their work. I also found it fascinating that Einstein was constantly challenging quantum theory even though the math worked flawlessly at predicting outcomes.

The quote that I like most is Bohr’s: “If quantum mechanics hasn’t profoundly shocked you, you haven’t understood it yet”. Well, I don’t understand quantum physics, but I’m still shocked by it. However, since I’ve been consuming all kinds of spiritual teachings over the past 15 years, I’m not surprised by what quantum physics says. Academically “inferior”, but much wiser spiritual teachers have been saying the same thing for thousands, yes thousands, of years. From the Buddha, Lao Tzu, and Jesus, to Krishnamurti, Tolle, and Adyashanti; they didn’t need exotic and esoteric math skills to develop their ideas and thoughts.