
Architectural Choices

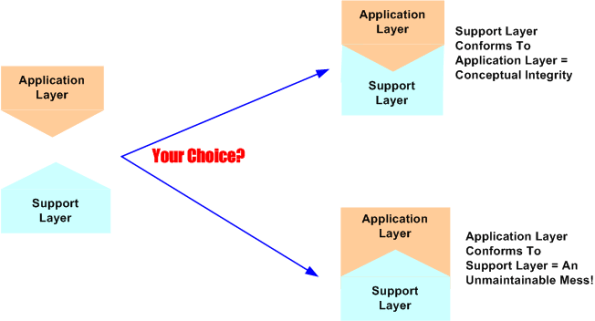

Assume that a software-intensive system you’re tasked with designing is big and complex. Because of this, you freely decide to partition the beast into two clearly delineated layers: a value-added application layer on top and, on the bottom, a support layer that is unwanted overhead but essential.

Now assume that a support layer for a different application layer already exists in your org – the investment has been made, it has been tested, and it “works” for those applications it’s been integrated with. As the left portion of the figure below shows, assume that the pre-existing support layer doesn’t match the structure of your yet-to-be-developed application layer. They just don’t naturally fit together. Would you:

- try to “bend” the support layer to fit your application layer (top right portion of the figure)?

- try to redesign and gronk your application layer to jam-fit with the support layer (bottom right portion of the figure)?

- ditch the support layer entirely and develop (from scratch) a more fitting one?

- purchase an externally developed support layer that was designed specifically to fit applications that fall into the same category as yours?

After contemplating long-term maintenance costs, I’d pick the fourth option: buy the support layer. Let support layer experts who know what they’re doing shoulder the maintenance burden associated with the arcane details of the support layer. The first three options are technically riskier and financially costlier because, unless your head is screwed on backwards, you should be tasking your programmers with designing and developing the application layer functionality that is (supposedly) your breadwinner – not mundane, low value-added infrastructure functionality.

Bah Hum BUG

Note: For those readers not familiar with C++ programming, you still might get some value out of this nerd-noid blarticle by skipping to the last paragraph.

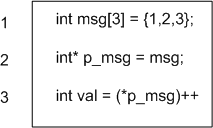

The other day, a colleague called me over to help him find a bug in some C++ code. The bug was reported from the field by system testers. Since the critter was causing the system to crash (which was better than not crashing and propagating bad “logical” results downstream), a slew of people, including the customer, were “waiting” for it to be fixed so the testers could continue testing. My friend had localized the bug to the following 3 simple lines:

After extracting the three lines of code from the production program and wrapping them in a simple test driver, he found that after line 3 was executed, “val” was set to “1” as expected. However, the “int” pointer, p_msg, was not being incremented as assumed by the downstream code – which subsequently crashed the system. WTF?

After figuring it out by looking up the C++ operator precedence rules, we recreated the crime scene in the diagram below. The left scenario at the bottom of the figure was what we initially thought the code was doing, but the actual behavior on the right was what we observed. Not only was the pointer not incremented, but the value in msg[0] was being incremented – a double freakin’ whammy.

Before analyzing the precedence rules, we thought that there was a bug in the compiler (yeah, right). However, after thinking about it for a while, we understood the behavior. Line 3 was:

- extracting the value in msg[0] (which is “1”)

- assigning it to “val”

- incrementing the value in msg[0]

Changing the third line to “int val = *p_msg++” solved the problem. Because of the operator precedence rules, the new behavior is what was originally intended:

- extract the value in msg[0] (which is “1”)

- assign it to “val”

- increment the pointer to point to the value in msg[1]

A simple “const” qualifier placed at the beginning of line 2 would have caused the compiler to barf on the code in line 3: “you cannot assign to a variable that is const”. The bug would’ve been squashed before making it out into the field.
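The original three lines aren't shown in the text, so here is a hedged reconstruction that matches the described behavior. The names msg, p_msg, and val come from the prose; the array contents, the parenthesized form of the buggy line, and the helper names (Result, buggy, fixed, compare_forms) are assumptions made purely for illustration.

```cpp
#include <cassert>

// Hypothetical reconstruction: only msg, p_msg, and val come from the post.
struct Result {
    int val;        // value read through the pointer
    int first;      // contents of msg[0] after the statement runs
    bool advanced;  // true if p_msg ended up pointing at msg[1]
};

// Assumed buggy form: the parentheses apply ++ to the pointed-to int.
Result buggy() {
    int msg[] = {1, 2, 3};
    int* p_msg = msg;
    int val = (*p_msg)++;  // val gets 1, msg[0] becomes 2, pointer stays put
    return {val, msg[0], p_msg == &msg[1]};
}

// Fixed form from the post: postfix ++ binds tighter than unary *.
Result fixed() {
    int msg[] = {1, 2, 3};
    const int* p_msg = msg;  // const pointee: (*p_msg)++ would not compile here
    int val = *p_msg++;      // val gets 1, then p_msg advances to msg[1]
    return {val, msg[0], p_msg == &msg[1]};
}

// Self-check that spells out the two outcomes side by side.
void compare_forms() {
    Result b = buggy();
    assert(b.val == 1 && b.first == 2 && !b.advanced);
    Result f = fixed();
    assert(f.val == 1 && f.first == 1 && f.advanced);
}
```

Both forms hand back the same val, which is why the bug hid so well: only the side effect differs. With the const qualifier in place, swapping the assumed buggy line into fixed() is rejected at compile time.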

It’s great to be brought down to earth and occasionally humbled by experiences like these, especially when you’re not the author of the bug 🙂 Plus, after-the-fact fire fighting is cherished by institutions over successful prevention. After all, how can you reward someone for a problem that didn’t occur because of his/her action? Even worse, most institutions actively thwart the application of prevention techniques by imposing Draconian schedules upon those doing the work.

The world is full of willing people, some willing to work, the rest willing to let them. – Robert Frost

My contortion of this quote is:

The world is full of willing people, some willing to work, the rest willing to manage them while simultaneously pressuring them into doing a poor job. – Bulldozer00

Skill Acquisition

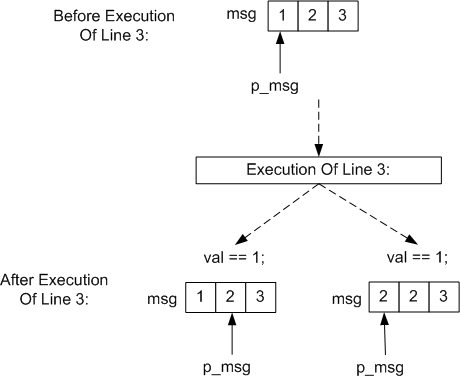

Check out the graph below. It is a totally made-up (cuz I like to make things up) fabrication of the relationship between software skill acquisition and time (tic-toc, tic-toc). The y-axis “models” a simplistic three-skill breakdown of technical software skills: programming (in-the-very-small), design (in-the-small), and architecture (in-the-large). The x-axis depicts time, and the slopes of the line segments are intended to convey the qualitative level of difficulty in transitioning from one area of expertise into the next higher one in the perceived value-added chain. Notice that the slopes decrease with each transition, which indicates that it’s tougher to achieve the next level of expertise than it was to achieve the previous one.

I assert that moving from level N to level N+1 takes longer than moving from N-1 to N because of the difficulty human beings have dealing with abstraction. The more concrete an idea, action, or thought is, the easier it is to learn and apply. It’s as simple as that.

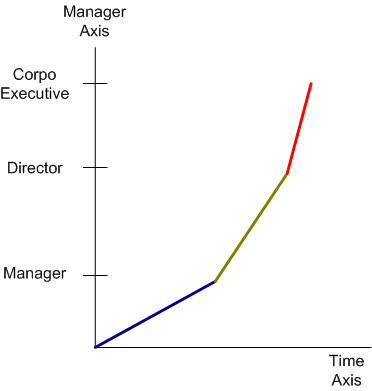

The figure below shows another made-up graph, this one for management skill acquisition. Note that unlike the technical skill acquisition graph, the slopes increase with each transition. This trend indicates that it’s easier to achieve the next level of expertise than it was to achieve the previous level of expertise. Note that even though the N+1 level skills are allegedly easier to acquire over time than the Nth level skill set, securing the next level title is not. That’s because fewer openings become available as the management ladder is ascended through whatever means available: true merit or impeccable image.

Error Acknowledgement: I forgot to add a notch with the label DIC at the lower left corner of the graph where T=0.

Meta-test Tools

A meta-test tool ties together other lower-level workhorse test tools in one place. Users interface with a meta-test tool to launch and control the underlying tool set that exercises the system under test. However, if you don’t have any low-level test tools (out of dumbass neglect or innocent ignorance), a meta-test tool is useless. But hey, the user interface and the camouflage it generates look good.

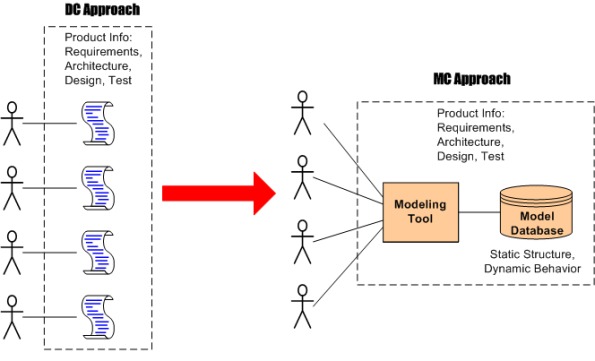

Docu-centric, Model-centric

Let’s say that your org has been developing products using a Docu-Centric (DC) approach for many years. Let’s also say that the passage of time and the experience of industry peers have proven that a Model-Centric (MC) mode of development is superior. By superior, I mean that MC developed products are created more quickly and with higher quality than DC developed products.

Now, assume that your org is heavily invested in the old DC way – the DC mindset is woven into the fabric of the org. Of course, your bazillions of (probably ineffective) corpo processes are all written and continuously being “improved” to require boatloads of manually generated, heavyweight documents that clearly and unassailably prove that you know what you’re doing (lol!) to internal and external auditors.

How would you move your org from a DC mindset to an MC mindset? Would you even risk trying to do it?

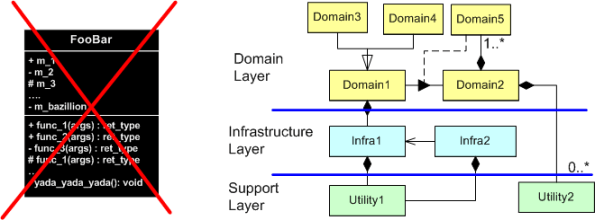

Hold The Details, Please

When documenting software designs (in UML, of course) before, during, or after writing code, I don’t like to put too much detail in my models. By details, I mean exposing all public/protected/private data members and functions and all intra-member processing sequences within each class. I don’t like doing it for these reasons:

- I don’t want to get stuck in a time-busting, endless analysis paralysis loop.

- I don’t want a reviewer or reader to lose the essence of the design in a sea of unnecessary details that obscure the meaning and usefulness of the model.

- I don’t want every little code change to require a model change – manual or automated.

I direct my attention to the higher levels of abstraction, focusing on the layered structure of the whole. By “layered structure”, I mean the creation and identification of all the domain level classes and the next lower level, cross-cutting infrastructure and utility classes. More importantly, I zero in on all the relationships between the domain and infrastructure classes because the real power of a system, any system, emerges from the dynamic interactions between its constituent parts. Agonizing over the details within each class and neglecting the inter-class relationships for a non-trivial software system can lead to huge, incoherent, woeful classes and an unmaintainable downstream mess. D’oh!

How about you? What personal guidelines do you try to stick to when modeling your software designs? What, you don’t “do documentation“? If you’re looking to move up the value chain and become more worthy to your employer and (more importantly) to yourself, I recommend that you continuously learn how to become a better, “light” documenter of your creations. But hey, don’t believe a word I say because I don’t have any authority-approved credentials/titles and I like to make things up.

Powerful Tools

Cybernetician W. Ross Ashby’s law of requisite variety states that “only variety can effectively control variety”. Another way of stating the law is that in order to control an innately complex problem with N degrees of freedom, a matched solution with at least N degrees of freedom is required. However, since solutions to hairy socio-technical problems introduce their own new problems into the environment, over-designing a problem controller with too many extra degrees of freedom may be worse than under-designing the controller.

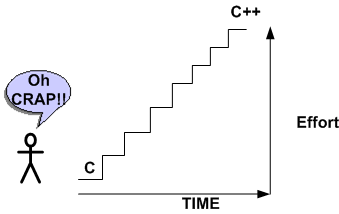

In an analogy with Ashby’s law, it takes powerful tools to solve powerful problems. Using a hammer where only a sledgehammer will get the job done produces wasted effort and leaves the problem unsolved. However, learning how to use and wield powerful new tools takes quite a bit of time and effort for non-genius people like me. And most people aren’t willing to invest prolonged time and effort to learn new things. Relative to adolescents, adults have an especially hard time learning powerful new tools because it requires sustained immersion and repetitive practice to become competent in their usage. That’s why they typically don’t stick with learning a new language or learning how to play an instrument.

In my case, it took quite a bit of effort and time before I successfully jumped the hurdle between the C and C++ programming languages. Ditto for the transition from ad-hoc modeling to UML modeling. These new additions to my toolbox have allowed me to tackle larger and more challenging software problems. How about you? Have you increased your ability to solve increasingly complex problems by learning how to wield commensurately new and necessarily complex tools and techniques? Are you still pointing a squirt gun in situations that cry out for a magnum?

Layered Obsolescence

For big and long-lived software-intensive systems, the most powerful weapon in the fight against the relentless onslaught of hardware and software obsolescence is “layering”. The purer the layering, the better.

In a pure layered system, source code in a given layer is only cognizant of, and interfaces with, code in the next layer down in the stack. Inversion of control frameworks, where callbacks are installed in the next layer down, are a slight derivative of pure layering. Each layer of code is purposefully designed and coded to provide a single coherent service for the next higher, and more abstract, layer. However, since nothing comes for free, the tradeoff for architecting a carefully layered system over a monolith is performance. Each layer in the cake steals processor cycles and adds latency to the ultimate value-added functionality at the top of the stack. When layering rules must be violated to meet performance requirements, each transgression must be well documented for the poor souls who come on board for maintenance after the original development team hits the high road to promotion and fame.
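As a rough illustration of the "only knows the next layer down" rule, here's a minimal sketch with three layers. All class names (Transport, LoopbackTransport, TaggingTransport, Messaging, Application) are made up for this example and don't come from any real system.

```cpp
#include <cassert>
#include <string>

// Bottom layer interface: a hypothetical stand-in for a driver or OS service.
class Transport {
public:
    virtual ~Transport() = default;
    virtual std::string send(const std::string& bytes) = 0;
};

// One implementation of the bottom layer: echoes the bytes back.
class LoopbackTransport : public Transport {
public:
    std::string send(const std::string& bytes) override { return bytes; }
};

// A replacement bottom layer, e.g. swapped in after the old one goes obsolete.
class TaggingTransport : public Transport {
public:
    std::string send(const std::string& bytes) override { return "v2:" + bytes; }
};

// Middle layer: depends only on the Transport interface beneath it.
class Messaging {
public:
    explicit Messaging(Transport& t) : transport_(t) {}
    std::string request(const std::string& body) {
        return transport_.send("[" + body + "]");  // frame, then hand down
    }
private:
    Transport& transport_;
};

// Top layer: depends only on Messaging and never mentions Transport.
class Application {
public:
    explicit Application(Messaging& m) : messaging_(m) {}
    std::string ping() { return messaging_.request("ping"); }
private:
    Messaging& messaging_;
};

// Wiring check: replacing the bottom layer touches no code in the upper layers.
void swap_bottom_layer() {
    LoopbackTransport old_hw;
    Messaging m1(old_hw);
    assert(Application(m1).ping() == "[ping]");

    TaggingTransport new_hw;
    Messaging m2(new_hw);
    assert(Application(m2).ping() == "v2:[ping]");
}
```

Each call through the stack costs a virtual dispatch and a string copy or two, which is the performance tax the layering imposes in this toy version.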

Relative to a ragged and loosely controlled layering scheme (or heaven forbid – no discernible layering), the maintenance cost and technical risk of replacing any given layer in a purely layered system are greatly reduced. In a really haphazard system architecture, trying to replace obsolete, massively tentacled components with fuzzy layer boundaries can bring the entire house of cards down and cause great financial damage to the owning organization.

“The entire system also must have conceptual integrity, and that requires a system architect to design it all, from the top down.” – Fred Brooks

Having said all this motherhood and apple pie crap that every experienced software developer knows is true, why do we still read and hear about so many software maintenance messes? It’s because someone needs to take continuous ownership of the overall code base throughout the lifecycle and enforce the layering rules. Talking and agreeing 100% about “how it should be done” is not enough.

Since:

- it’s an unpopular job to scold fellow developers (especially those who want to put their own personal coat of arms on the code base),

- it’s under-appreciated by all non-software management,

- there’s nothing to be gained but extra heartache for any bozo who willfully signs up to the enforcement task,

very few step up to the plate to do the right thing. Can you blame them? Those who have done it, and have been rewarded with excommunication from the local development community plus indifference from management, rarely do it again – unless coerced.

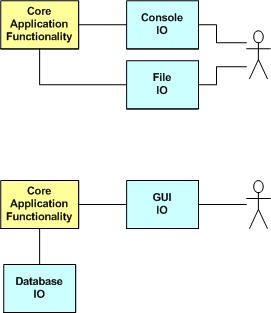

Console And Files First, GUI And Database Last

Adding database IO and GUI IO to a program ratchets up its complexity, and hence development time, immensely. In “Programming: Principles and Practice Using C++”, Bjarne Stroustrup recommends designing and writing your program to do IO over the console and filesystem first, and then adding GUI IO and database IO later. And only if you have to.

Eliminating, or at least delaying, GUI and database IO forces you to focus on getting the internal application design right early. It also helps to keep you from tangling the GUI IO and database IO code with your application code and creating an unmaintainable ball of mud. Finally, the practice makes testing much simpler than trying to write and debug the whole quagmire at once. Good advice?
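One way to apply the advice, sketched under my own assumptions (this is not code from the book): write the application logic against std::istream and std::ostream so the same functions work with the console, a file, or an in-memory string. The function names sum_values, report, and self_test are hypothetical.

```cpp
#include <cassert>
#include <istream>
#include <ostream>
#include <sstream>
#include <string>

// Hypothetical application logic: sums whitespace-separated integers.
// It only sees a generic input stream, not a console, file, GUI, or database.
int sum_values(std::istream& in) {
    int total = 0;
    for (int v; in >> v; ) {
        total += v;
    }
    return total;
}

// Output is equally generic: any std::ostream will do.
void report(std::ostream& out, int total) {
    out << "total=" << total << '\n';
}

// Because IO is just streams, a test needs no GUI or database at all.
void self_test() {
    std::istringstream in("1 2 3");
    std::ostringstream out;
    report(out, sum_values(in));
    assert(out.str() == "total=6\n");
}
```

In a console program the call would be report(std::cout, sum_values(std::cin)), and passing a std::ifstream reads from a file instead. A GUI or database front end can be bolted on later without touching sum_values.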

Continuous Husbandry

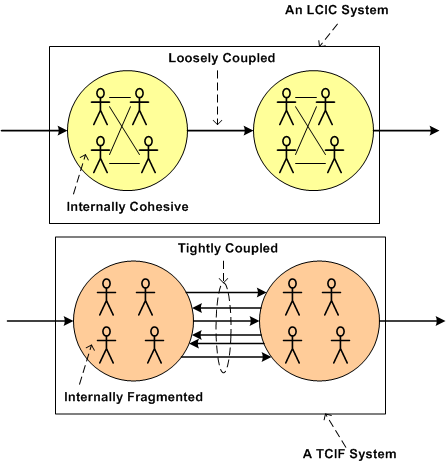

One definition of a system is “a collection of interacting elements designed to fulfill a purpose”. A well-known rule of thumb for designing robust and efficient social, technical, and socio-technical systems is:

Keep your system elements Loosely Coupled and Internally Cohesive (LCIC)

The opposite of this golden rule is to design a system that has Tightly Coupled and Internally Fragmented (TCIF) elements. TCIF systems are rigid, inflexible, and tough to troubleshoot when the system malfunctions.

Designing, building, testing, and deploying LCIC systems is not enough to ensure that the system’s purpose will be fulfilled over long periods of time. Because of the relentless increase in entropy dictated by the second law of thermodynamics, continuous husbandry (as my friend W. L. Livingston often says) is required to arrest the growth in entropy. Without husbandry, LCIC systems (like startup companies) morph into TCIF systems (like corpocracies). The transformation can be so slooow that it is undetectable – until it’s too late. In subtle LCIC-to-TCIF transformations, it takes a crisis to shake the system architect(s) into reality. In a sudden jolt of awareness, they realize that their cute and lovable baby has turned into an uncontrollable ogre capable of massive stakeholder destruction. Bummer.