Archive

Taking Stock

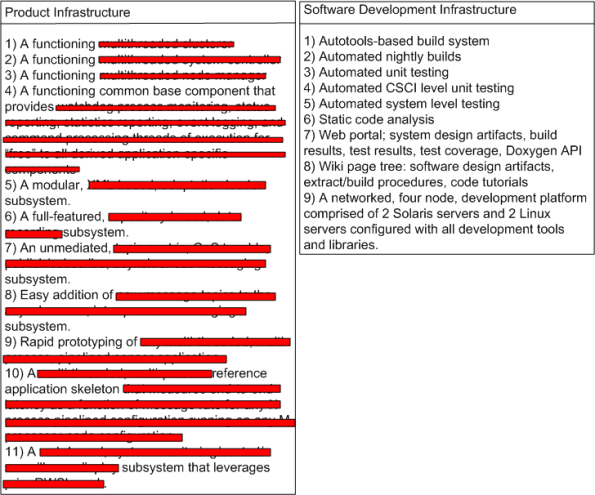

Every once in a while, it’s good to step back and take stock of your team’s accomplishments over a finite stretch of time. I did this recently, and here’s the marketing hype that I concocted:

I like to think of myself as a pretty open dude, but I don’t think I’m (that) stupid. The product infrastructure features have been redacted just in case one of the three regular readers of this blasphemous blog happens to be an evil competitor who is intent on raping and pillaging my company.

The team that I’m very grateful to be working on did all this “stuff” in X months – starting virtually from scratch. Over the course of those X months, we had an average of M developers working full time on the project. At an average cost of C dollars per developer-month, it cost roughly X * M * C = $ to arrive at this waypoint. Of course, I (roughly) know what X, M, C, and $ are, but as further evidence that I’m not too much of a frigtard (<- kudos to Joel Spolsky for adding this term of endearment to my vocabulary!), I ain’t tellin’.

So, what about you? What has your team accomplished over a finite period of time? What did it cost? If you don’t know, then why not? Does anyone in your group/org know?

Push And Pull Message Retrieval

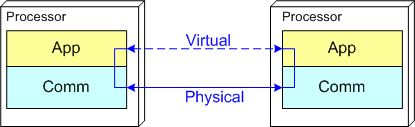

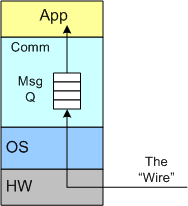

The figure below models a two-layer distributed system. Information is exchanged between application components residing on different processor nodes via a cleanly separated, underlying communication “layer”. App-to-App communication takes place “virtually”, with the arcane, physical, over-the-wire details being handled under the covers by the unheralded Comm layer.

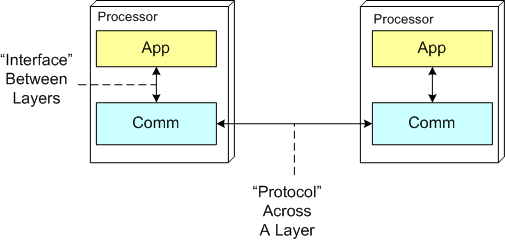

In the ISO OSI reference model for inter-machine communication, the vertical linkage between two layers in a software stack is referred to as an “interface” and the horizontal linkage between two instances of a layer running on different machines is called a “protocol”. This interface/protocol distinction is important because solving flow-control and error-control issues between machines is much more involved than handling them within the sheltered confines of a single machine.

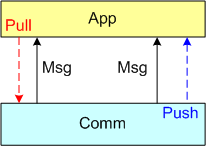

In this post, I’m going to focus on the receiving end of a peer-to-peer information transfer. Specifically, I’m going to explore the two ways in which an App component can retrieve messages from the Comm layer: Pull and Push. In the “Pull” approach, message transfer from the Comm layer to the App layer is initiated and controlled by the App component via polling. In the “Push” method, inversion of control is employed and the Comm layer initiates/controls the transfer by invoking a callback function installed by the App component on initialization. Any professional Comm subsystem worth its salt will make both methods of retrieval available to App component developers.

The figure below shows a model of a Comm subsystem that supplies a message queue between the application layer and the “wire”. The purpose of this queue is to prevent high-rate, bursty, asynchronous message senders from temporarily overwhelming slow receivers. By serving as a flow rate smoother, the queue gives a receiver App component a finite amount of time to “catch up” with bursts of messages. Without this temporary holding tank, or if the queue is not deep enough to accommodate the worst case burst size, some messages will be “dropped on the floor”. Of course, if the average send rate is greater than the average processing rate in the receiving App, messages will be consistently lost when the queue eventually overflows from the rate mismatch – bummer.

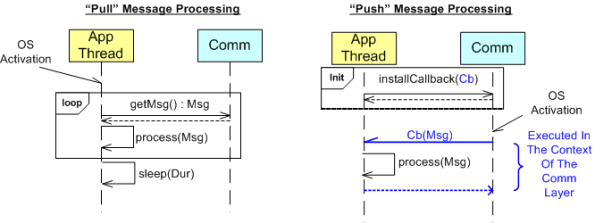
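The overflow behavior described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not any real Comm library: the `BoundedMsgQueue` class, its `depth` parameter, the `dropped` counter, and the `run_burst` helper are all names made up for illustration.

```python
from collections import deque


class BoundedMsgQueue:
    """Toy model of the Comm layer's holding tank (hypothetical names)."""

    def __init__(self, depth):
        self.depth = depth      # maximum number of messages held
        self.items = deque()
        self.dropped = 0        # messages "dropped on the floor"

    def put(self, msg):
        """Wire side: enqueue the message, or drop it if the queue is full."""
        if len(self.items) >= self.depth:
            self.dropped += 1
            return False
        self.items.append(msg)
        return True

    def get(self):
        """App side: return the oldest message, or None if the queue is empty."""
        return self.items.popleft() if self.items else None


def run_burst(depth, burst_size):
    """Deliver one burst while the receiver is idle, then let it catch up."""
    q = BoundedMsgQueue(depth)
    for n in range(burst_size):
        q.put(n)
    received = []
    while (msg := q.get()) is not None:
        received.append(msg)
    return received, q.dropped
```

With a depth of 4 and a burst of 6, the last two messages of the burst are lost; with a depth of 8, the same burst survives intact. Sizing the queue for the worst case burst only helps, of course, if the receiver keeps up on average.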

The UML sequence diagram below zeroes in on the interactions between an App component thread of execution and the Comm layer for both the “Push” and “Pull” methods of message retrieval. When the “Pull” approach is implemented, the OS periodically activates the App thread. On each activation, the App sucks the Comm layer queue dry, performing application-specific processing on each message as it is pulled out of the Comm layer. A nice feature of the “Pull” method, which the “Push” method doesn’t provide, is that the polling rate can be tuned via the sleep “Dur(ation)” parameter. For low data rate message streams, “Dur” can be set to a long time between polls so that the CPU can be voluntarily yielded for other processing tasks. Of course, the trade-off for long poll times is increased latency – the time from when a message becomes available within the Comm layer to the time it is actually pulled into the App layer.
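Here is a minimal sketch of the “Pull” loop, assuming Python’s standard `queue.Queue` stands in for the Comm layer queue. The function names (`pull_app`, `drain`, `demo_pull`) and the upper-casing used as “application-specific processing” are placeholders, not part of any real Comm API.

```python
import queue
import threading
import time


def drain(comm_queue, processed):
    """Pull messages until the Comm layer queue is empty."""
    while True:
        try:
            msg = comm_queue.get_nowait()
        except queue.Empty:
            return
        processed.append(msg.upper())  # stand-in for app-specific processing


def pull_app(comm_queue, dur, stop_event, processed):
    """App thread: wake up every `dur` seconds and suck the queue dry."""
    while not stop_event.is_set():
        drain(comm_queue, processed)
        time.sleep(dur)               # voluntarily yield the CPU between polls
    drain(comm_queue, processed)      # final sweep so nothing is left behind


def demo_pull(messages, dur=0.01):
    """Run the App thread against a few messages 'arriving over the wire'."""
    comm_queue = queue.Queue()
    stop_event = threading.Event()
    processed = []
    app = threading.Thread(target=pull_app,
                           args=(comm_queue, dur, stop_event, processed))
    app.start()
    for msg in messages:
        comm_queue.put(msg)
    stop_event.set()
    app.join()
    return processed
```

Raising `dur` frees up more CPU for other work but stretches the worst case latency to roughly one poll period, which is the trade-off described above.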

In the “Push” method of message retrieval, during runtime the Comm layer activates the App thread by invoking the previously installed App callback function, Cb(Msg), for each newly received message. Since the App’s process(Msg) method executes in the context of a Comm layer thread, it can bog down the comm subsystem and cause it to miss high rate messages coming in over the wire if it takes too long to execute. On the other hand, the “Push” method can be more responsive (lower latency) than the “Pull” method if the polling “Dur” is set to a long time between polls.
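The “Push” arrangement can be sketched the same way. Again, this is a toy under assumptions: `ToyCommLayer`, `install_callback`, `inject`, and the `comm-rx` thread name are invented for the example. The point it demonstrates is that the App’s callback runs on the Comm layer’s thread, not on a thread the App owns.

```python
import queue
import threading


class ToyCommLayer:
    """Toy Comm layer that pushes each message to an installed callback."""

    def __init__(self):
        self._wire = queue.Queue()    # stands in for the network
        self._callback = None
        self._thread = threading.Thread(target=self._rx_loop, name="comm-rx")

    def install_callback(self, cb):
        """Called once by the App during initialization."""
        self._callback = cb

    def start(self):
        self._thread.start()

    def inject(self, msg):
        """Simulate a message arriving over the wire."""
        self._wire.put(msg)

    def stop(self):
        self._wire.put(None)          # sentinel: shut the receive thread down
        self._thread.join()

    def _rx_loop(self):
        while True:
            msg = self._wire.get()    # block until something arrives
            if msg is None:
                return
            # The App's code runs here, on the Comm layer's own thread.
            # If it is slow, nothing else gets received in the meantime.
            self._callback(msg)


def demo_push(messages):
    """Install a callback that records each message and the calling thread."""
    seen = []

    def cb(msg):
        seen.append((msg, threading.current_thread().name))

    comm = ToyCommLayer()
    comm.install_callback(cb)
    comm.start()
    for msg in messages:
        comm.inject(msg)
    comm.stop()
    return seen
```

Every entry returned by `demo_push` carries the `comm-rx` thread name, which is exactly why a long-running callback can starve the receive path.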

So, which method is “better”? Of course, it depends on what the Application is required to do, but I lean toward the “Pull” method in high rate streaming sensor applications for these reasons:

- In applications like sensor stream processing that require a lot of number crunching and/or data associations to be performed on each incoming message, the fact that the App-specific processing logic is performed within the context of the App thread in the “Pull” method (instead of the Comm layer) means that the Comm layer performance is not dependent on the App-specific performance. The layers are more loosely coupled.

- The “Pull” approach is simpler to code up.

- The “Pull” approach is tunable via the sleep “Dur” parameter.

How about you? Which do you prefer, and why?

Apples And Oranges

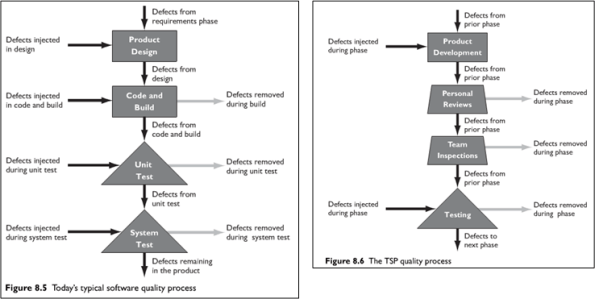

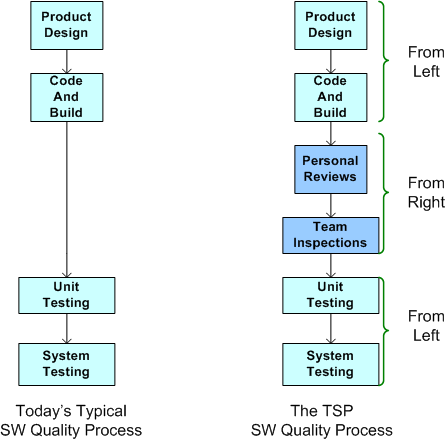

In “Leadership, Teamwork, and Trust”, Watts Humphrey and James Over build a case against the classic “Test To Remove Defects” mindset (see the left portion of the figure below). They assert that testing alone is not enough to ensure quality – especially as software systems grow larger and commensurately more complex. Their solution to the problem (shown on the right of the figure below) calls for more reviews and inspections, but I’m confused as to when they’re supposed to occur: before, after, or interwoven with design/coding?

If you compare the left and right hand sides of the figure, you may have come to the same conclusion as I have: it seems like an apples-to-oranges comparison. The left portion seems more “concrete” than the right portion, no? Since they’re not enumerated on the right, are the “concrete” design and code/build tasks shown on the left subsumed within the more generic “Product Development” box on the right?

In order to un-confuse myself, I generated the equivalent of the Humphrey/Over TSP (Team Software Process) quality process diagram on the right using the diagram on the left as the starting reference point. Here’s the resulting apples-to-apples comparison:

If this is what Humphrey and Over meant, then I’m no longer confused. Their TSP approach to software quality is to supplement unit and system testing with reviews and inspections before testing occurs. I wish they had said that in the first place. Maybe they did, but I missed it.

Mismatch

Assume that you’re tasked to create a two-component, distributed software system as shown in the figure below. The nature of the application is such that during runtime, component 1 will continuously transmit a “bursty”, asynchronous stream of messages to component 2. During evolution of the system in the future, you know that more and more stages will be tacked on to the “pipeline”, with each stage adding value to a growing customer base (if you don’t screw it up and hatch a BBoM, a Big Ball of Mud).

Note that the relationship between application components is peer-to-peer and not client-server like this:

One question is this: “Why on earth would anyone choose a client-server messaging system (with peer-to-peer capability tacked on) over a peer-to-peer messaging system for this class of application?”. The question especially applies to product organizations that strive to develop distinctly elegant and innovative solutions – which hopefully includes yours. A second question is: “What would technologically savvy customers think?”. Of course, if you think your customers are dumb-asses (and you won’t be in business for long if you do) and can’t tell the difference, then the situation is a “don’t care”, no?

Flouting Convention

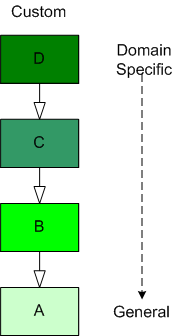

As software-centric systems get more complex, one of the most effective tools for preventing the creation of monstrous BBoMs downstream is “layering”. The figure below shows a generic model of the layering concept.

When you use layering, you partition your system into a vertical stack with the most “exciting” application-specific functions and objects at the top of the stack and the more mundane and boring functionality down in the basement. In a pure layered system, the higher layers depend on the services provided by the lower layers and there are no dependencies the other way. The cleaner and crisper your inter-layer boundaries, the lower your maintenance cost and frustration.
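As a rough illustration of the one-way dependency rule, here is a three-layer toy stack in Python. The layer names (`WireLayer`, `MessagingLayer`, `TrackerApp`) and the `topic:text` framing are invented for this sketch. Each class holds a reference only to the layer directly beneath it, and the bottom layer knows nothing about anything above.

```python
class WireLayer:
    """Basement: moves bytes and knows nothing about the layers above it."""

    def send(self, payload: bytes) -> int:
        return len(payload)  # pretend the bytes went out over the wire


class MessagingLayer:
    """Middle: frames messages; depends only on the WireLayer below."""

    def __init__(self, wire: WireLayer):
        self._wire = wire

    def publish(self, topic: str, text: str) -> int:
        frame = f"{topic}:{text}".encode("utf-8")
        return self._wire.send(frame)


class TrackerApp:
    """Top: the 'exciting' app-specific logic; depends only on MessagingLayer."""

    def __init__(self, messaging: MessagingLayer):
        self._messaging = messaging

    def report(self, track_id: int) -> int:
        return self._messaging.publish("tracks", f"id={track_id}")


# The stack is wired from the bottom up; calls flow from the top down.
app = TrackerApp(MessagingLayer(WireLayer()))
bytes_sent = app.report(7)
```

Because nothing in `WireLayer` or `MessagingLayer` mentions `TrackerApp`, either lower layer can be swapped or tested without touching the application code.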

The figure below shows the conventional approach of representing an inheritance hierarchy in an object-oriented design. What’s wrong with this picture? Relative to the layered model, it’s “upside down”. The most general class is on top and the most domain-specific class is at the bottom. WTF and D’oh!

Since “layering” has been around much longer than object-orientation, Bulldozer00 thinks that a layered, object-oriented software system should always be presented to stakeholders like this:

This method of representation aligns cleanly with the layered “view” of the system and is thus less confusing and disorienting to all audiences, dontcha think? To hell with convention – at least in this situation.

Horse And Buggy

Werner Vogels is Amazon.com’s Chief Technology Officer and author of the blog “All Things Distributed – Building Scalable And Robust Distributed Systems”. I just couldn’t stop myself from laughing when he announced that fellow distributed-systems guru Steve Vinoski had just joined Twitter:

Specifically, the last sentence put me in stitches. As you can see from the book cover below, Steve is a CORBA expert and way back in the dark ages he was a CORBA advocate. However, he’s moved into the 21st century and left that horse and buggy behind – unlike others who cling to their horse whips and ostracize those who point out the obvious.

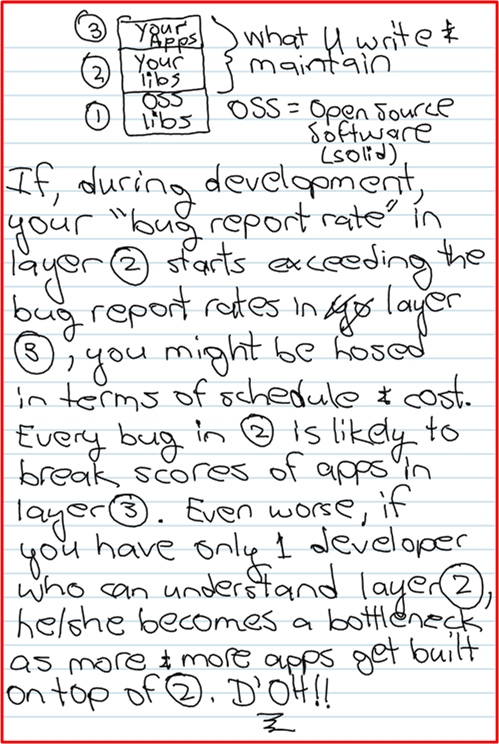

Bug Reporting Rate

My Erlang Learning Status – II

In my quest to learn the Erlang programming language, I’ve been sloowly making my way through Cesarini and Thompson’s wonderful book: “Erlang Programming”.

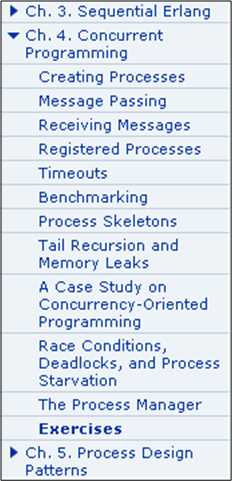

In terms of the book’s table of contents, I’ve just finished reading Chapter 4 (twice):

As I expected, programming concurrent software systems in Erlang is stunningly elegant compared to the language I currently love and use, C++. Unlike C++ and (AFAIK) all the other popularly used programming languages, Erlang had concurrency designed into it from day one. Thus, the amount of code one needs to write to get a program composed of communicating processes up and running is breathtakingly small.

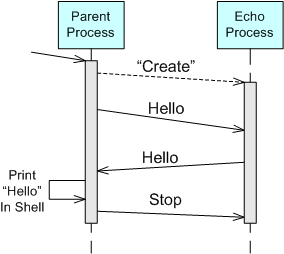

For example, take a look at the “bent” UML sequence diagram of the simple two-process “echo” program below. When the parent process is launched by the user, it spawns an “Echo” process. The parent process then asynchronously sends a “Hello” message to the “Echo” process and suspends until it receives a copy of the “Hello” message back from the “Echo” process. Finally, the parent process prints “Hello” in the shell, sends a “Stop” message to the “Echo” process, and self-terminates. Of course, upon receiving the “Stop” message, the “Echo” process is required to self-terminate too.

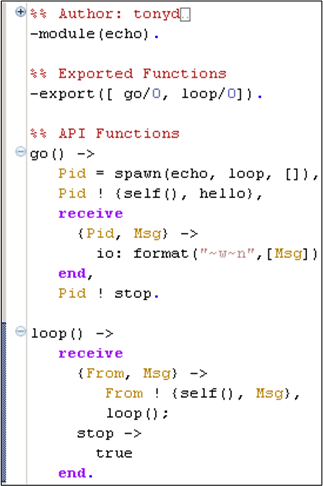

Here’s an Erlang program module from the book that implements the model:

The go() function serves as the “Parent” process and the loop() function maps to the “Echo” process in the UML sequence diagram. When the parent is launched by typing “echo:go().” into the Erlang runtime shell, it should:

- Spawn a new process that executes the loop() function that co-resides in the echo module file with the go() function.

- Send the “Hello” message to the “Echo” process (identified by the process ID bound to the “Pid” variable during the return from the spawn() function call) in the form of a tuple containing the parent’s process ID (returned from the call to self()) and the “hello” atom.

- Suspend (in the receive-end clause) until it receives a message from the “Echo” process.

- Print out the content of the received “Msg” to the console (via the io:format/2 function).

- Issue a “Stop” message to the “Echo” process in the form of the “stop” atom.

- Self-terminate (returning control and the “stop” atom to the shell after the Pid ! stop. expression).

When the loop() function executes, it should:

- Suspend in the receive-end clause until it receives a message in the form of either: A) a two-element tuple – from any other running process, or, B) an atom labeled “stop”.

- If the received message is of type A), send a copy of the received Msg tuple element back to the process whose ID was bound to the From variable upon message reception. In addition to the content of the Msg variable, the transmitted message will contain the loop() process ID returned from the call to self(). After sending the tuple, suspend again and wait for the next message (via the recursive call to loop()).

- If the received message is of type B), self-terminate.
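For readers who don’t speak Erlang, the steps above can be approximated in Python. This is only a loose analogue and not the book’s code: threads stand in for Erlang processes, a `queue.Queue` mailbox stands in for a process ID, and the string `"stop"` stands in for the `stop` atom. Unlike the Erlang go(), which hands `stop` back to the shell, this `go()` returns the echoed message so that it is easy to check.

```python
import queue
import threading


def loop(mailbox):
    """Analogue of loop(): echo tuples back to the sender, exit on 'stop'."""
    while True:
        msg = mailbox.get()              # suspend until a message arrives
        if msg == "stop":
            return                       # type B): self-terminate
        sender, payload = msg            # type A): the {From, Msg} tuple
        sender.put((mailbox, payload))   # reply with {self(), Msg}


def go():
    """Analogue of go(): spawn, send hello, wait for the echo, print, stop."""
    parent_mailbox = queue.Queue()       # plays the role of self()
    echo_mailbox = queue.Queue()         # plays the role of Pid
    echo = threading.Thread(target=loop, args=(echo_mailbox,))
    echo.start()
    echo_mailbox.put((parent_mailbox, "hello"))
    sender, msg = parent_mailbox.get()   # suspend until the echo comes back
    assert sender is echo_mailbox        # the reply carries the echo's "Pid"
    print(msg)
    echo_mailbox.put("stop")
    echo.join()
    return msg
```

Even with Python doing the locking inside `queue.Queue`, the mailboxes and the thread have to be created and wired together by hand, which is bookkeeping the Erlang runtime takes care of for you.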

I typed this tiny program into the Eclipse Erlide plugin editor:

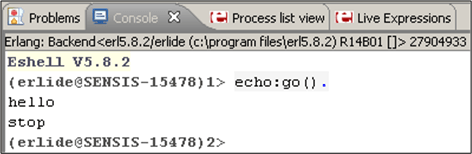

After I compiled the code, started the Erlang VM in the Eclipse console view, and ran the program, here’s what I got:

It worked like a charm – Whoo Hoo! Now, imagine what an equivalent, two-process C++ program would look like. For starters, one would have to write, compile, and run two executables that communicate via sockets or some other form of relatively arcane inter-process OS mechanism (pipe, shared memory, etc.), no? For a two-thread, single-process C++ equivalent, a threads library must be used with some form of synchronized inter-thread communication (two lock-free ring buffers, two mutex-protected FIFO queues, etc.).

Note: If you’re interested in reading my first Erlang learning status report, click here.

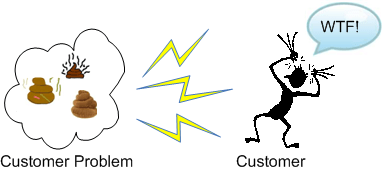

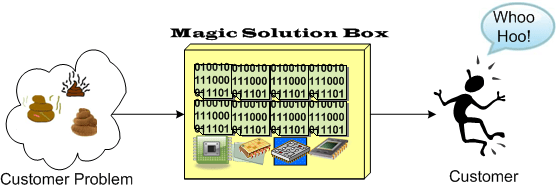

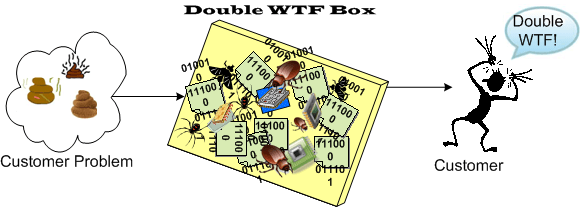

I’ll Have A Double, Please

An Answer 10 Years Later

I’ve always questioned why one of my mentors from afar, Steve Mellor, was one of the original signatories of the “Agile Manifesto” 10 years ago. He’s always been a “model-based” guy and his fellow pioneer agile dudes were obsessed with the idea that source code was the only truth – to hell with bogus models and camouflage documents. Even Grady Booch, another guy I admire, tempered the agilist obsession with code by stating something like this: “the code is the truth, but not the whole truth”.

Stephen recently sated my 10-year-old curiosity in this InfoQ interview: “A Personal Reflection on Agile Ten Years On”. Here’s Steve’s answer to the question that haunted me fer 10 years:

The other signatories were kind enough, back in 2001, to write the manifesto using the word “software” (which can include executable models), not “code” (which is more specific.) As such I felt able, in good conscience, to become a signatory to the Manifesto while continuing to promote executable modeling. Ten years on we have a standard action language for agile modeling. – Stephen J. Mellor

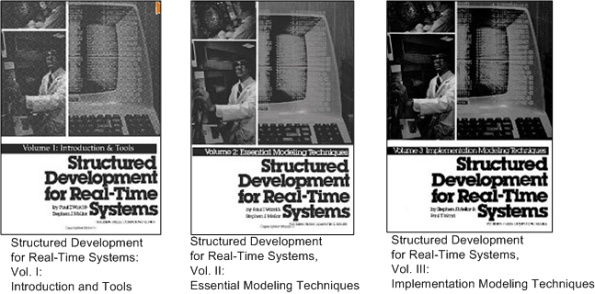

The reason I have great respect for Stephen (and his cohort Paul Ward) is this brilliant trilogy they wrote waaaayy back in the mid 80s:

Despite the dorky book covers and the dates they were written, I think the info in these short tomes is timeless and still relevant to real-time systems builders today. Of course, they were created before the object-oriented and multi-core revolutions occurred, but these books, using simple DeMarco/Plauger structured analysis modeling notation (before UML), nail it. By “it”, I mean the thinking, tools, techniques, idioms, and heuristics required to specify, design, and build concurrent, distributed, real-time systems that work. Buy em, read em, decide for yourself, bookmark this post, and please report your thoughts back to me.