Archive

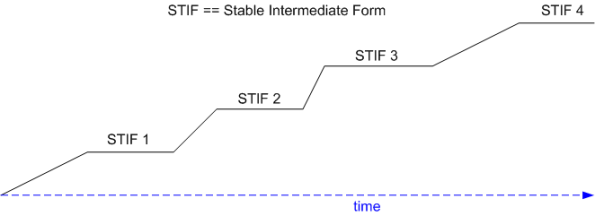

A Bunch Of STIFs

Grady Booch states that good software architectures evolve incrementally via a progressive sequence of STable Intermediate Forms (STIFs). At each point of equilibrium, the “released” STIF is exercised by an independent group of external customers and/or internal testers. Defects, unneeded functionality, missing functionality, and unmet “ilities” are ferreted out and corrected early and often.

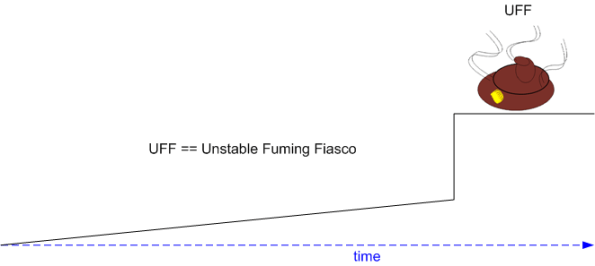

The alternative to a series of STIFs is the ubiquitous, one-shot, Unstable Fuming Fiasco (UFF):

Note: After I converted this draft post into a publishable one and queued it up, I experienced a sense of déjà vu. As a consequence, I searched back through my draft (91) and published (987) post lists, and I found this one. D’oh! and LOL! I’m sure I’ve repeated myself many times during my blogging “career”, but hey, a steady drumbeat is more effective than a single cymbal crash. No?

Yet Another Nit

Programming in C++, I “prefer” composition over inheritance. Thus, for that reason alone, I’d use “ComposedLookupTable” over “InheritedLookupTable” every time:
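The original code snippets aren’t reproduced in this archive, so here is only a hypothetical sketch of the two designs, assuming a `std::string`-to-`int` table built on `std::map`. The member functions (`insert`, `lookup`, `size`) are illustrative assumptions, not the author’s code.

```cpp
#include <cstddef>
#include <map>
#include <optional>
#include <string>

// Hypothetical sketch: key/value types and member functions are assumptions.

// Composition: the table *has a* std::map and exposes only what callers need.
class ComposedLookupTable {
public:
    void insert(const std::string& key, int value) { table_[key] = value; }

    std::optional<int> lookup(const std::string& key) const {
        auto it = table_.find(key);
        if (it == table_.end()) return std::nullopt;
        return it->second;
    }

    std::size_t size() const { return table_.size(); }

private:
    std::map<std::string, int> table_;  // hidden detail; can be swapped later
};

// Inheritance: the table *is a* std::map, so every public std::map member
// (operator[], erase, clear, ...) becomes part of its interface, like it or not.
class InheritedLookupTable : public std::map<std::string, int> {
public:
    std::optional<int> lookup(const std::string& key) const {
        auto it = find(key);
        if (it == end()) return std::nullopt;
        return it->second;
    }
};
```

With composition, swapping `std::map` for `std::unordered_map` later touches only the private member; with inheritance, any caller that leaned on an inherited `std::map` member would have to change too.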

In addition, since (on purpose) no STL container has a virtual destructor, I’d never even consider inheriting from one – even though those who do would never try to write this monstrosity:
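A minimal sketch of the kind of code that makes inheriting from a container risky, assuming a hypothetical `InheritedLookupTable` that owns a data member of its own. The `static_assert` uses the standard `std::has_virtual_destructor_v` trait to confirm that `std::map` has no virtual destructor; `monstrosity()` exists only to show the pattern and should never be called.

```cpp
#include <map>
#include <string>
#include <type_traits>

// Compile-time check: std::map does not declare a virtual destructor.
static_assert(!std::has_virtual_destructor_v<std::map<std::string, int>>,
              "std::map is not meant to be a polymorphic base class");

// Hypothetical derived table that owns extra state.
class InheritedLookupTable : public std::map<std::string, int> {
public:
    std::string description = "lookup table";  // extra member owned by the derived class
};

// The "monstrosity": owning the derived object through a base-class pointer.
// Because ~map() is not virtual, this delete has undefined behavior; in practice
// the derived part (including `description`) is typically never destroyed.
inline void monstrosity() {
    std::map<std::string, int>* table = new InheritedLookupTable;
    delete table;  // undefined behavior: the base destructor is not virtual
}
```

Destroying an `InheritedLookupTable` through its own type (for example, as a local variable) is fine; the trouble starts only when ownership passes through a `std::map*`.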

Of course, this can be seen as yet another childish BD00 nitpick. But hey, what else is BD00 gonna do with his time? Solve world poverty? Occupy the org?

Quality is in all the freakin’ details, and when you see stuff like this in product code, it makes you wonder how many other risky, idiom-busting code frags are lurking within the beast. But alas, where does one draw the line and let things like this slide?

As a final note, beware of disclosing turds like this to their authors, especially in mercenary cultures where everyone is implicitly “expected to” project an image of infallibility in order to “advance” their kuh-rearends. If you can excise turds like this yourself without getting caught, then please do so.

Levels Of Testing

Using three equivalent UML class diagrams, the graphic below conveys how to represent a system design using the “C” terminology of the aerospace and defense industry: CSCI (Computer Software Configuration Item), CSC (Computer Software Component), and CSU (Computer Software Unit). On the left, the system “contains” CSCIs, CSCIs contain CSCs, etc. In the middle, the system “has” CSCIs. On the right, CSCIs “reside within” the system.
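For readers who think in code, here is a hypothetical C++ rendering of the left-hand “contains” view (terminology that comes from DOD-STD-2167A), with each level holding a vector of the level below. It simplifies the real convention (for example, CSCs can be nested inside other CSCs), and the struct and field names are assumptions.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical "contains" view: System -> CSCIs -> CSCs -> CSUs.
struct Csu  { std::string name; };                              // Computer Software Unit
struct Csc  { std::string name; std::vector<Csu> units; };      // Computer Software Component
struct Csci { std::string name; std::vector<Csc> components; }; // Computer Software Configuration Item
struct System { std::string name; std::vector<Csci> items; };

// Count the leaf-level CSUs: the most numerous candidates for unit-level testing.
inline std::size_t countUnits(const System& system) {
    std::size_t count = 0;
    for (const auto& csci : system.items)
        for (const auto& csc : csci.components)
            count += csc.units.size();
    return count;
}
```

`countUnits` also hints at why the number of items under test shrinks as you climb the levels: a single system fans out into many CSUs.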

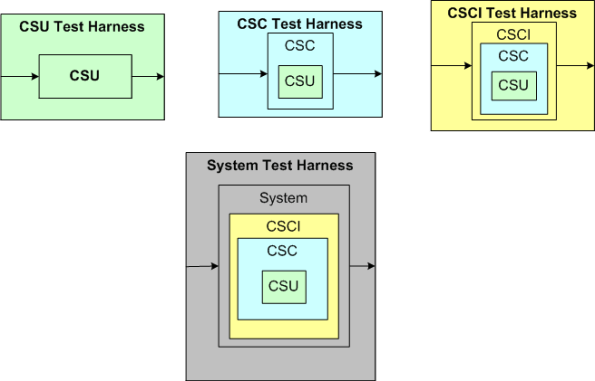

Leaving aside the question of “what the hell’s a unit”, let’s explore four “levels of testing” with the aid of the multi-graphic below that uses the “reside within” model.

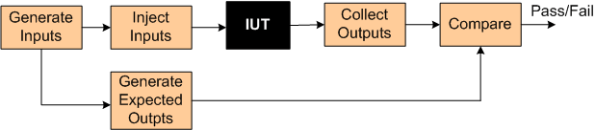

In order to test at any given level, some sort of test harness is required for that level. The test harness “system” is required to:

- generate inputs (for a CSU, CSC, CSCI, or the system),

- inject the inputs into the “Item Under Test” (IUT): the CSU, CSC, CSCI, or system,

- collect the actual outputs from the IUT,

- compare the actual outputs with the expected outputs, and

- declare success or failure for each test.
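The harness duties above can be sketched generically, assuming (hypothetically) that an item under test can be driven as a plain function from input to output; real CSCIs and systems usually need message-based or hardware-in-the-loop harnesses instead. Input generation is left to whoever builds the `cases` vector, and `TestCase`, `Verdict`, and `runHarness` are illustrative names.

```cpp
#include <string>
#include <vector>

// One test: a generated input plus the output we expect the item under test to produce.
template <typename In, typename Out>
struct TestCase {
    std::string name;
    In input;
    Out expected;
};

// Declared outcome of one test.
struct Verdict {
    std::string name;
    bool passed;
};

// Hypothetical generic harness: the IUT is any callable taking In and returning Out.
template <typename Iut, typename In, typename Out>
std::vector<Verdict> runHarness(Iut iut, const std::vector<TestCase<In, Out>>& cases) {
    std::vector<Verdict> verdicts;
    verdicts.reserve(cases.size());
    for (const auto& tc : cases) {
        const Out actual = iut(tc.input);                      // inject input, collect actual output
        verdicts.push_back({tc.name, actual == tc.expected});  // compare, then declare pass/fail
    }
    return verdicts;
}
```

The same template serves every level; what changes from CSU to system is how expensive it is to build a faithful `iut` stand-in and a meaningful set of `cases`.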

When planning a project up front, it’s easy to issue the mandate: “we’ll formally test and document at all four levels, progressing from the bottom CSU level up to the system level”. However, during project execution, it’s tough to follow “the plan” when the schedule starts to, uh, expand, and pressure mounts to “just ship it, damn it!”.

Because:

- the number of IUTs in a level decreases as one moves up the ladder of abstraction (there’s only a single IUT at the top – the system),

- the (larger and fewer) IUTs at a given level are richer in complex behavior than the (smaller and more plentiful) IUTs at the next lower level,

- it is expensive to develop high-fidelity, manual and/or automated test harnesses for each and every level,

- others?

there’s often a legitimate ROI-based reason to eschew all levels of formal testing except at the system level. That can be OK as long as it’s understood that defects found during final system-level testing will be more difficult and time-consuming ($$$) to localize and repair than if lower levels of isolated testing had been performed prior to system testing.

To mitigate the risk of increased time to localize/repair from skipping levels of testing, developers can build in test visibility points that can be turned on/off during operation.

The tradeoff here is that designing in an extensive set of runtime controllable test points adds complexity, code, and additional CPU/memory loading to both the system and test harness software. To top it off, test point activation is often needed most when the system is being stressed with capacity test scenarios where the nastiest of bugs tend to surface – but the additional CPU and memory load imposed on the system/harness may itself cause tests to fail and mutate bugs into looking like they are located where they are not. D’oh! Ain’t life a be-otch?
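A minimal sketch of one runtime-controllable test point, assuming a simple log of string samples; `TestPoint` and its members are hypothetical. Disabled, it costs one relaxed atomic load per call; enabled, the mutex and the growing sample vector are exactly the kind of extra CPU and memory load that can shift timing during a capacity test.

```cpp
#include <atomic>
#include <mutex>
#include <string>
#include <utility>
#include <vector>

// Hypothetical runtime-controllable test point that records samples when enabled.
class TestPoint {
public:
    explicit TestPoint(std::string name) : name_(std::move(name)) {}

    const std::string& name() const { return name_; }

    // Flip the probe on or off while the system runs (e.g., from a maintenance command).
    void enable(bool on) { enabled_.store(on, std::memory_order_relaxed); }

    void record(const std::string& sample) {
        if (!enabled_.load(std::memory_order_relaxed)) return;  // one atomic load when off
        std::lock_guard<std::mutex> lock(mutex_);                // added CPU cost when on
        samples_.push_back(sample);                              // added memory cost when on
    }

    std::vector<std::string> samples() const {
        std::lock_guard<std::mutex> lock(mutex_);
        return samples_;
    }

private:
    std::string name_;
    std::atomic<bool> enabled_{false};
    mutable std::mutex mutex_;
    std::vector<std::string> samples_;
};
```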

Biased At Initialization

In “Thinking, Fast and Slow”, Daniel Kahneman asserts:

The normal state of your mind is that you have intuitive feelings and opinions about almost everything that comes your way. You like or dislike people long before you know much about them; you trust or distrust strangers without knowing why; you feel that an enterprise is bound to succeed without analyzing it. Whether you state them or not, you often have answers to questions that you do not completely understand, relying on evidence that you can neither explain nor defend.

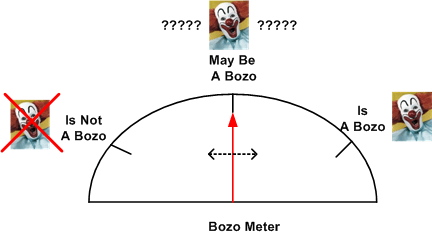

Sheesh. So much for initializing the “Bozo Bit” to the unjudgmental MBAB state and then consciously flippin’ it to one state or the other as evidence mounts over time. According to Dr. Dan, because of the lizard-like, “fast thinking” subsystem in our (bozo) brains, the Bozo Bit is biased at initialization to either IAB or INAB.

Frag City

As the accumulation of knowledge in a disciplinary domain advances, the knowledge base naturally starts fragmenting into sub-disciplines and turf battles break out all over: “my sub-discipline is more reflective of reality than yours, damn it!”.

In a bit of irony, the systems thinking discipline, which is all about holism, has followed the well-worn yellow brick road that leads to frag city. For example, compare the disparate structures of two (terrific) books on systems thinking:

Thank Allah there is at least some overlap, but alas, it’s a natural law: with growth, comes a commensurate increase in complexity. Welcome to frag city…

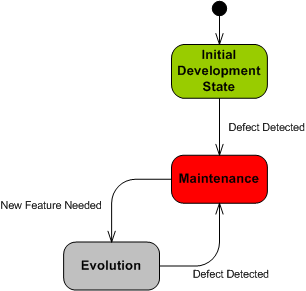

The Hidden Preservation State

In the software industry, “maintenance” (like “system”) is an overloaded and overused catchall word. Nevertheless, in “Object-Oriented Analysis and Design with Applications”, Grady Booch valiantly tries to resolve the open-endedness of the term:

It is maintenance when we correct errors; it is evolution when we respond to changing requirements; it is preservation when we continue to use extraordinary means to keep an ancient and decaying piece of software in operation (lol! – BD00) – Grady Booch et al

Based on Mr. Booch’s distinctions, the UML state machine diagram below attempts to model the dynamic status of a software-intensive system during its lifetime.

The trouble with a boatload of orgs is that their mental model and operating behavior can be represented thus:

What’s missing from this crippled state machine? Why, it’s the “Preservation” state, silly. In reality, it’s lurking ominously behind the scenes as the elephant in the room, but since it’s hidden from the unaware org’s view, it only comes to the fore when a crisis occurs and it can’t be denied any longer. D’oh! Call in the firefighters.
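One hypothetical way to encode the difference in code: the states come from Booch’s three categories plus assumed `Development` and `Retired` states, and the events and transitions are assumptions rather than a transcription of the diagrams. Passing `modelPreservation = false` gives the crippled machine, which simply ignores decay.

```cpp
// Hypothetical lifecycle states and events; transitions are illustrative assumptions.
enum class State { Development, Maintenance, Evolution, Preservation, Retired };
enum class Event { Delivered, RequirementsChanged, ChangeDone, Decayed, Decommissioned };

// Next state for a given event. With modelPreservation == false this becomes the
// "crippled" machine: decay is silently ignored, so on paper the system never
// leaves Maintenance/Evolution.
constexpr State nextState(State s, Event e, bool modelPreservation = true) {
    if (s == State::Development && e == Event::Delivered) return State::Maintenance;
    if (s == State::Maintenance && e == Event::RequirementsChanged) return State::Evolution;
    if (s == State::Evolution && e == Event::ChangeDone) return State::Maintenance;
    if ((s == State::Maintenance || s == State::Evolution) && e == Event::Decayed)
        return modelPreservation ? State::Preservation : s;
    if (e == Event::Decommissioned) return State::Retired;
    return s;  // any other event leaves the state unchanged
}
```

In the crippled model, `nextState(State::Maintenance, Event::Decayed, false)` stays in `Maintenance`: the org carries on as if nothing has changed until the crisis arrives.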

Maybe that’s why Mr. “Happy Dance” Booch also sez:

… reality suggests that an inordinate percentage of software development resources are spent on software preservation.

For orgs that operate in accordance with the crippled state machine, “Preservation” is an expensive, time-consuming, resource-sucking sink. I hate when that happens.

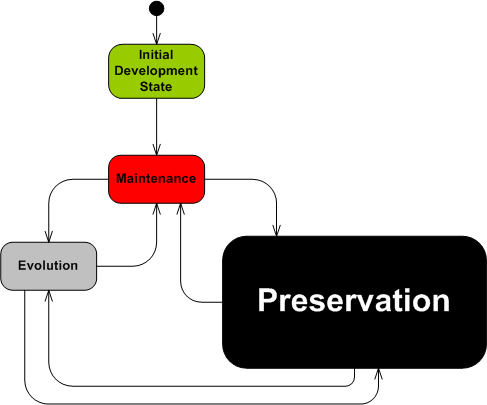

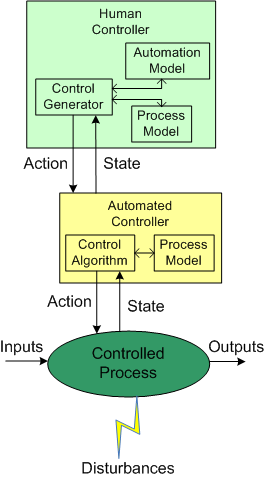

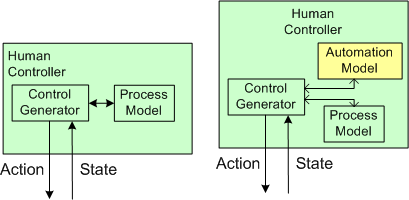

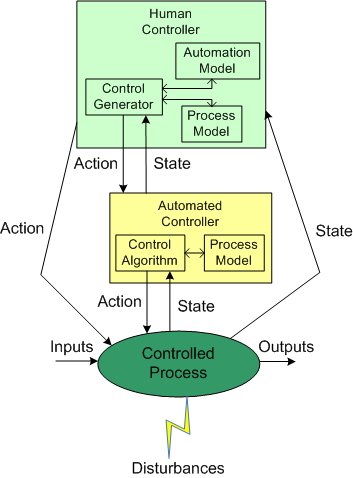

Human And Automated Controllers

Note: The figures that follow were adapted from Nancy Leveson’s “Engineering a Safer World”.

In the good ole days, before the integration of fast (but dumbass) computers into controlled-process systems, humans had no choice but to exercise direct control over processes that produced some kind of needed/wanted results. During operation, one or more human controllers would keep the “controlled process” on track via the following monitor-decide-execute cycle:

- monitor the values of key state variables (via gauges, meters, speakers, etc.)

- decide what actions, if any, to take to maintain the system in a productive state

- execute those actions (open/close valves, turn cranks, press buttons, flip switches, etc.)
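The cycle above can be sketched as a single control step, assuming a hypothetical tank whose level drifts upward unless a drain valve is open; `Tank`, `controlStep`, and the 40–60 percent band are illustrative assumptions. The band stands in for the controller’s mental model of what a “productive state” means.

```cpp
#include <algorithm>

// Hypothetical controlled process: a tank that fills unless its drain valve is open.
struct Tank {
    double level = 50.0;     // state variable shown on the "gauge" (percent full)
    bool drainOpen = false;  // actuator the controller can operate

    void tick() {            // the process keeps running whatever the controller does
        level += drainOpen ? -3.0 : 2.0;
        level = std::clamp(level, 0.0, 100.0);
    }
};

// One pass of the monitor-decide-execute cycle using a simple 40-60% band.
inline void controlStep(Tank& tank, double low = 40.0, double high = 60.0) {
    const double observed = tank.level;     // monitor: read the gauge
    bool open = tank.drainOpen;             // decide: by default keep doing what we were doing
    if (observed > high) open = true;       //   too full: open the drain
    else if (observed < low) open = false;  //   too low: close the drain
    tank.drainOpen = open;                  // execute: turn the valve
}
```

Calling `tank.tick()` followed by `controlStep(tank)` in a loop keeps the level oscillating around the band instead of running away.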

As the figure below shows, in order to generate effective control actions, the human controller had to maintain an understanding of the process goals and operation in a mental model stored in his/her head.

With the advent of computers, the complexity of systems that could be, were, and continue to be built has skyrocketed. Because of the rising cognitive burden imposed on the humans who must effectively control these newfangled systems, computers were inserted into the control loop to (supposedly) reduce cognitive demands on the human controller, increase the speed of taking action, and reduce errors in control judgment.

The figure below shows the insertion of a computer into the control loop. Notice that the human is now one step removed from the value producing process.

Also note that the human overseer must now cognitively maintain two mental models of operation in his/her head: one for the physical process and one for the (supposedly) subservient automated controller:

If the automated controller relieves the human controller of many mundane and high-speed monitoring/control functions, then the reduction in overall complexity of the human’s mental process model may more than offset the added requirement to maintain and understand a second mental model of how the automated controller works.

Since computers are nothing more than fast idiots with fixed control algorithms designed by fallible human experts (who nonetheless often think they’re infallible in their domain), they can’t issue effective control actions in disturbance situations that were unforeseen during design. Also, due to design flaws in the hardware or software, automated controllers may present an inaccurate picture of the process state, or fail outright while the controlled process keeps merrily chugging along producing results.

To compensate for these potentially dangerous shortfalls, the safest system designs provide backup state monitoring sensors and control actuators that give the human controller the option to override the “fast idiot”. The human controller relies primarily on the interface provided by the computer for monitoring/control, and secondarily on the direct interface couplings to the process.
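A hypothetical sketch of the override arrangement: the automated command is used by default, a human override always wins, and an automated controller flagged as unhealthy (say, by a cross-check against the backup sensors) falls back to an assumed safe `Hold` command. `ValveCmd`, `ControlInputs`, and `arbitrate` are illustrative names, and what counts as “safe” is entirely domain-specific.

```cpp
#include <optional>

// Hypothetical command set for a single valve.
enum class ValveCmd { Open, Close, Hold };

struct ControlInputs {
    ValveCmd automated;                     // primary path: the computer's command
    std::optional<ValveCmd> humanOverride;  // backup path: direct human action, if any
    bool automationHealthy = true;          // e.g., cross-checked against backup sensors
};

// Decide which command reaches the actuator.
inline ValveCmd arbitrate(const ControlInputs& in) {
    if (in.humanOverride) return *in.humanOverride;    // the human has final authority
    if (!in.automationHealthy) return ValveCmd::Hold;  // assumed safe default
    return in.automated;                               // normal case: trust the "fast idiot"
}
```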

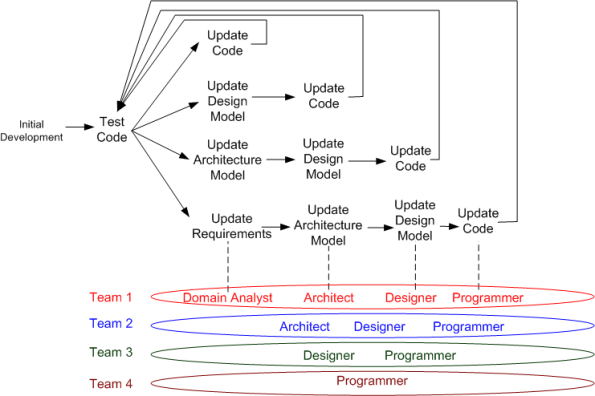

Maintenance Cycles And Teams

The figure below highlights the unglamorous maintenance cycle of a typical “develop-deliver-maintain” software product development process. Depending on the breadth of impact of a discovered defect or product enhancement, one of four feedback loops “should” be traversed.

In the simplest defect/enhancement case, the code is the only product artifact that must be updated and tested. In the most complex case, the requirements, architecture, design, and code artifacts all “should” be updated.
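Here is a sketch of the four loops as a lookup from breadth of impact to the artifacts that “should” be updated, assuming (as the text implies) that each wider loop adds the next artifact up the requirements, architecture, design, code chain; `Impact` and `artifactsToUpdate` are hypothetical names.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical breadth-of-impact categories, one per feedback loop.
enum class Impact { CodeOnly = 0, Design = 1, Architecture = 2, Requirements = 3 };

// Artifacts that "should" be updated (and re-tested), from the highest affected one down to code.
inline std::vector<std::string> artifactsToUpdate(Impact impact) {
    static const std::vector<std::string> chain{"requirements", "architecture", "design", "code"};
    const auto extraLevels = static_cast<std::ptrdiff_t>(impact);  // levels above the code
    return std::vector<std::string>(chain.end() - 1 - extraLevels, chain.end());
}
```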

Of course, if all you have is code, or code plus bloated, superficial, write-once-read-never documents, then the choice is simple – update only the code. In the first case, since you have no docs, you can’t update them. In the second case, since your docs suck, why waste time and money updating them?

After the super-glorious business acquisition phase and during the mini-glorious “initial development” phase, the team is usually (but not always – especially in DYSCOs and CLORGs) staffed with the roles of domain analyst(s), architect(s), designer(s), and programmer(s). Once the product transitions into the yukky maintenance phase, the team may be scaled back and roles reassigned to other projects to cut costs. In the best case, all roles are retained at some level of budgeting – even if the total number of people is decreased. In the worst case, only the programmer(s) are kept on board. In the suicidal case, all roles but the programmer(s) are reassigned, but multiple manager type roles are added. (D’oh!)

Note that there does not have to be a one-to-one correspondence between a role and a person; one person can assume multiple roles. Unfortunately, the staff allocation, employee development, and reward systems in most orgs aren’t “designed” to catalyze and develop the added value of multi-role-capable people. That’s called the “employee-in-a-box” syndrome.

A Thing In Context

Discrete, Not Continuous?

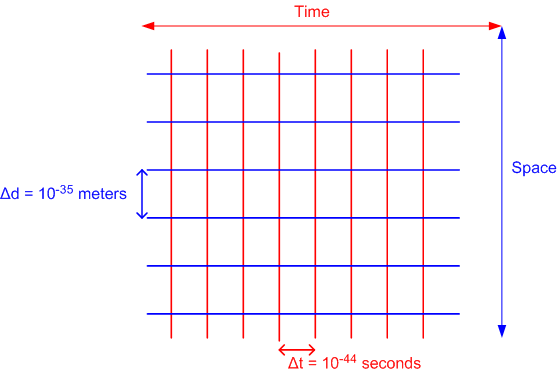

I’m in the process of reading my seventh layman’s book on quantum physics. It’s written by quantum physicist William Bray and its long-winded title is “Quantum Physics, Near Death Experiences, Eternal Consciousness, Religion, and the Human Soul”.

Mr. Bray writes something fascinating about space and time that I don’t recall seeing in any of the other QP books:

On a Planck scale, each moment exists in an isolated region (we call a Planck interval) of space-time existing only in the past or future to another point, and never in the present. No two things anywhere in space-time share a common present, no matter how close they are to one another, all the way down to 10⁻³⁵ meters, or 10⁻⁴⁴ seconds, apart. – William Bray (p. 31). Kindle Edition

In other words, Mr. Bray asserts that space and time are not continuous dimensions, they’re discrete. The universe is not analog, it’s digital. The movement of energy and matter in time is herky-jerky, jump-to-jump, like a motion picture. However, it “looks” smooth and continuous because of the lack of resolution and sensitivity of our woefully inadequate senses and sense-extending tools. D’oh!

Here’s what Wikipedia says about Planck time:

Theoretically, this is the smallest time measurement that will ever be possible. As of May 2010, the smallest time interval that was directly measured was on the order of 12 attoseconds (12 × 10⁻¹⁸ seconds)…. All scientific experiments and human experiences happen over billions of billions of billions of Planck times, making any events happening at the Planck scale hard to detect.

My interpretation is that we will never be able to mechanistically detect what happens between two adjacent Planck time points.
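For scale, here is the back-of-the-envelope arithmetic, using the standard definition and commonly quoted value of the Planck time rather than any figure from Bray’s book. Even the 12-attosecond record in the quoted passage spans on the order of 10²⁶ Planck times:

```latex
t_P = \sqrt{\frac{\hbar G}{c^5}} \approx 5.39 \times 10^{-44}\ \text{s},
\qquad
\frac{12 \times 10^{-18}\ \text{s}}{5.39 \times 10^{-44}\ \text{s}} \approx 2.2 \times 10^{26}
```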

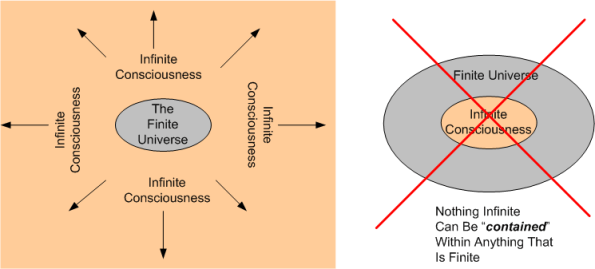

So, what do you think exists between two adjacent, discrete Planck time points? Could it be that bits of infinite consciousness leak into the finite universe?

After doing some superficial research on Mr. Bray, I’ve become quite skeptical:

- There are no recommendations/testimonials from peers on the inside or backside covers of the book.

- Googling on “William Joseph Bray” doesn’t reveal that much.

- He’s got a Facebook page, but I’m not a member so I didn’t get to see it.

- I discovered his web site, and his credentials seem to be too extensive to be believable.

Nevertheless, the book is a fascinatingly refreshing and novel read. In a nutshell, his main theme is that consciousness is not caused by bio-chemical processes in the brain, it’s quite the opposite. Infinite consciousness lies outside of the finite physical universe and it thus paints the universe into being by “collapsing the wavefunction”. Consciousness is the ultimate observer and it creates you and me and everything else in the universe.

Don’t forget, BD00 is a self-proclaimed L’artiste, so don’t believe a word he sez. What do you think?