Archive

Environmental Influence

In “Engineering A Safer World“, Nancy Leveson states:

Human behavior is always influenced by the environment in which it takes place. Changing that environment will be much more effective in changing operator error than the usual behaviorist approach of using reward and punishment. Without changing the environment, human error cannot be reduced for long. We design systems in which operator error is inevitable and then blame the operator and not the system design.

So why is that? Could it be because the system designers and environment caretakers are also the same people who have the power to assign blame – and it’s much easier to blame than to change the environment?

Human And Automated Controllers

Note: The figures that follow were adapted from Nancy Leveson’s “Engineering A Safer World”.

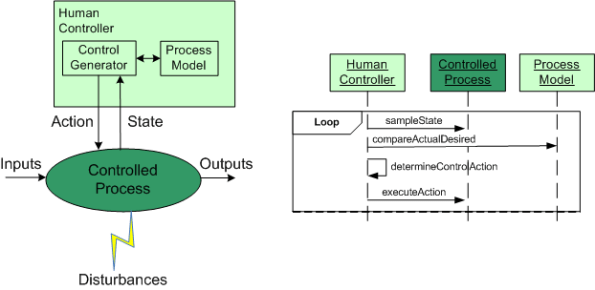

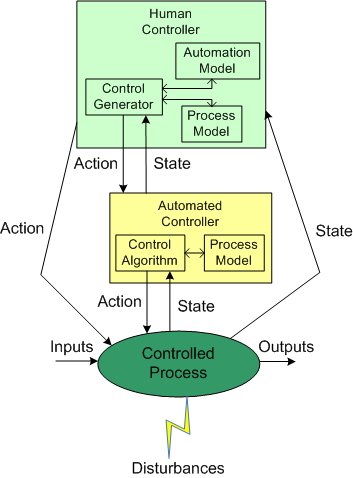

In the good ole days, before the integration of fast (but dumbass) computers into controlled-process systems, humans had no choice but to exercise direct control over processes that produced some kind of needed/wanted results. During operation, one or more human controllers would keep the “controlled process” on track via the following monitor-decide-execute cycle:

- monitor the values of key state variables (via gauges, meters, speakers, etc)

- decide what actions, if any, to take to maintain the system in a productive state

- execute those actions (open/close valves, turn cranks, press buttons, flip switches, etc)
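The monitor-decide-execute cycle above can be sketched in a few lines of Python. This is only an illustration under made-up assumptions: the `Tank` process, its fill and drain rates, and the 40/60 thresholds are all hypothetical and do not come from Leveson's book.

```python
from dataclasses import dataclass


@dataclass
class Tank:
    """Hypothetical controlled process with a single state variable."""
    level: float              # current fill level, arbitrary units
    inflow: float = 2.0       # assumed constant fill per tick
    valve_open: bool = False  # drain valve position

    def step(self) -> None:
        # The process evolves on its own; an open drain valve removes 5 units per tick.
        drain = 5.0 if self.valve_open else 0.0
        self.level += self.inflow - drain


def monitor(tank: Tank) -> float:
    """Read the key state variable (the 'gauge')."""
    return tank.level


def decide(level: float, valve_open: bool, low: float = 40.0, high: float = 60.0) -> bool:
    """Return the desired valve position, using the controller's model of the process."""
    if level > high:
        return True       # too full: open the drain
    if level < low:
        return False      # too empty: close the drain
    return valve_open     # inside the band: leave the valve alone


def execute(tank: Tank, open_valve: bool) -> None:
    """Apply the control action (the 'turn the crank' step)."""
    tank.valve_open = open_valve


def run(tank: Tank, ticks: int) -> list[float]:
    history = []
    for _ in range(ticks):
        level = monitor(tank)
        action = decide(level, tank.valve_open)
        execute(tank, action)
        tank.step()
        history.append(tank.level)
    return history


if __name__ == "__main__":
    print(run(Tank(level=55.0), 20))
```

The thresholds inside `decide` stand in for the controller's mental model of the process: if that model is wrong, the loop still runs, but it steers the process to the wrong place.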

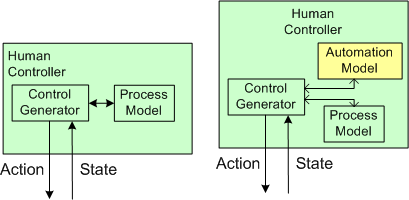

As the figure below shows, in order to generate effective control actions, the human controller had to maintain an understanding of the process goals and operation in a mental model stored in his/her head.

With the advent of computers, the complexity of systems that could be, were, and continue to be built has skyrocketed. Because of the rise in the cognitive burden imposed on humans to effectively control these newfangled systems, computers were inserted into the control loop to: (supposedly) reduce cognitive demands on the human controller, increase the speed of taking action, and reduce errors in control judgment.

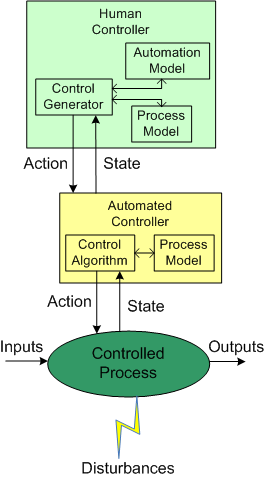

The figure below shows the insertion of a computer into the control loop. Notice that the human is now one step removed from the value producing process.

Also note that the human overseer must now cognitively maintain two mental models of operation in his/her head: one for the physical process and one for the (supposedly) subservient automated controller.

Assuming that the automated controller unburdens the human controller from many mundane and high speed monitoring/control functions, then the reduction in overall complexity of the human’s mental process model may more than offset the addition of the requirement to maintain and understand the second mental model of how the automated controller works.

Since computers are nothing more than fast idiots with fixed control algorithms designed by fallible human experts (who nonetheless often think they’re infallible in their domain), they can’t issue effective control actions in disturbance situations that were unforeseen during design. Also, due to design flaws in the hardware or software, automated controllers may present an inaccurate picture of the process state, or fail outright while the controlled process keeps merrily chugging along producing results.

To compensate for these potentially dangerous shortfalls, the safest system designs provide backup state monitoring sensors and control actuators that give the human controller the option to override the “fast idiot“. The human controller relies primarily on the interface provided by the computer for monitoring/control, and secondarily on the direct interface couplings to the process.
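One way to picture the override option is as a cross-check between what the computer reports and what the backup sensor reads directly. The sketch below is a hypothetical illustration, not a design from the book: the function name, the single-number process state, and the tolerance are all assumptions.

```python
from typing import Optional


def choose_control_path(reported: Optional[float], direct: float,
                        tolerance: float = 5.0) -> str:
    """Pick which interface the human controller should trust.

    reported:  process state as presented by the automated controller,
               or None if the controller has failed outright (assumption).
    direct:    reading from the backup sensor coupled directly to the process.
    tolerance: largest disagreement still treated as "the computer is telling the truth".
    """
    if reported is None:
        return "manual override"   # the fast idiot is down; the process keeps running
    if abs(reported - direct) > tolerance:
        return "manual override"   # the computer's picture of the process looks wrong
    return "automation"            # primary path: rely on the computer's interface


if __name__ == "__main__":
    print(choose_control_path(50.0, 52.0))
    print(choose_control_path(50.0, 80.0))
    print(choose_control_path(None, 52.0))
```

Note that this check only works if the backup sensor is independent of the computer; if both readings flow through the same flawed software, they will agree with each other and still be wrong.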

PPPP Invention

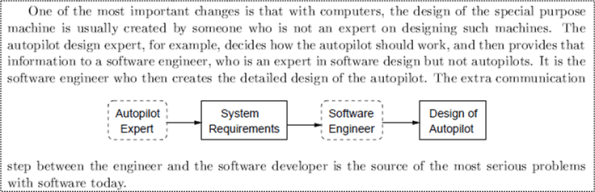

In “Engineering A Safer World“, Nancy Leveson uses the autopilot example below to illustrate the extra step of complexity that was introduced into the product development process with the integration of computers and software into electro-mechanical system designs.

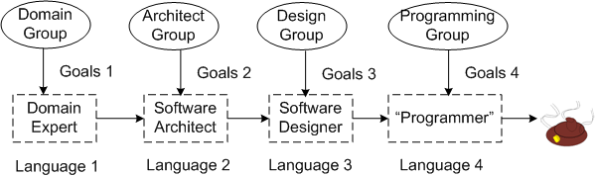

In the worst case, as illustrated below, the inter-role communication problem can be exacerbated. Although the “gap of misunderstanding” from language mismatch is always greatest between the Domain Expert and the Software Architect, as more roles get added to the project, more errors/defects are injected into the process.

But wait! It can get worse, or should I say, “more challenging“. Each person in the chain of cacophony can be a member of a potentially different group with conflicting goals.

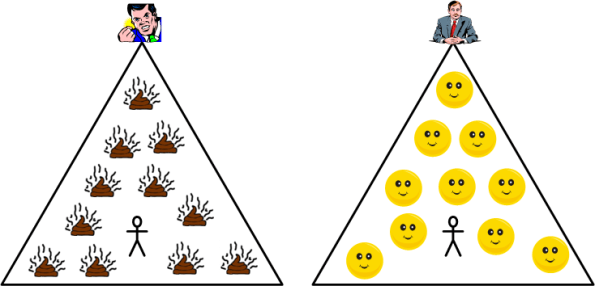

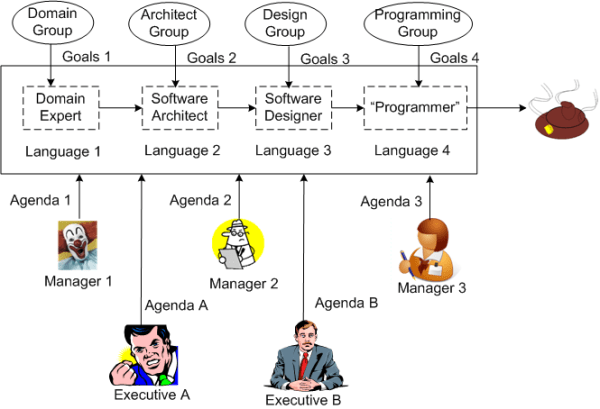

But wait, it can get worser! By consciously or unconsciously adding multiple managers and executives to the mix, you can put the finishing touch on your very own Proprietary Poop Producing Process (PPPP).

So, how can you try to inhibit inventing your very own PPPP? Two techniques come to mind:

- Frequent, sustained, proactive, inter-language education (to close the gaps of misunderstanding).

- Minimization of the number of meddling managers (and especially, pseudo-manager wannabes) allowed anywhere near the project.

Got any other ideas?

B and S == BS

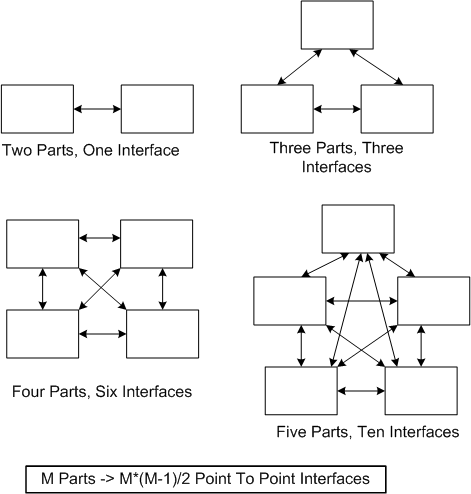

About a year ago, after a recommendation from management guru Tom Peters, I read Sidney Dekker’s “Just Culture“. I mention this because Nancy Leveson dedicates a chapter to the concept of a “just culture” in her upcoming book “Engineering A Safer World“.

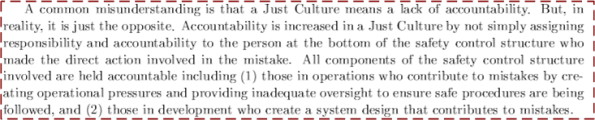

The figure below shows a simple view of the elements and relationships in an example 4 level “safety control structure“. In unjust cultures, when a costly accident occurs, the actions of the low elements on the totem pole, the operator(s) and the physical system, are analyzed to death and the “causes” of the accident are determined.

After the accident investigation is “done“, the following sequence of actions usually occurs:

- Blame and Shame (BS!) are showered upon the operator(s).

- Recommendations for “change” are made to operator training, operational procedures, and the physical system design.

- Business goes back to usual

- Rinse and repeat

Note that the level 2 and level 3 elements usually go uninvestigated – even though they are integral, influential forces that affect system operation. So, why do you think that is? Could it be that when an accident occurs, the level 2 and/or level 3 participants have the power to, and do, assume the role of investigator? Could it be that the level 2 and/or level 3 participants, when they don’t/can’t assume the role of investigator, become the “sugar daddies” to a hired band of independent, external investigators?

Asynchronous Evolution

In “Engineering A Safer World”, Nancy Leveson asserts that “asynchronous evolution” is a major contributor to costly accidents in socio-technical systems. Asynchronous evolution occurs when one or more parts in a system evolve faster than other parts – causing internal functional and interface mismatches between the parts. As an example, in a friendly fire accident where a pair of F-15 fighters shot down a pair of Black Hawk helicopters, the copter and fighter pilots didn’t communicate by voice because they had different radio technologies on board.

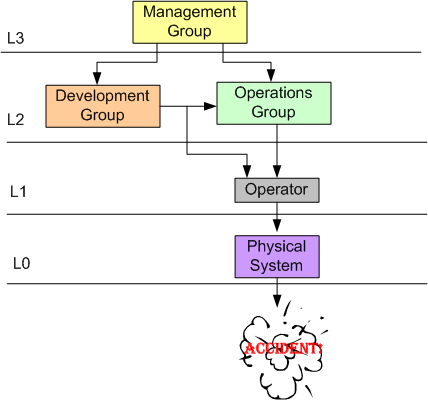

As another example, consider the graphic below. It shows a project team composed of domain analysts and software developers, along with two possible paths of evolution.

Happenstance asynchronous evolution is corrosive to product excellence and org productivity. It underpins much misunderstanding, ambiguity, error, and needless rework. Org controllers that diligently ensure synchronous evolution of the tools/techniques/processes amongst the disciplines that create and build their revenue-generating products own a competitive advantage over those that don’t, no?

Dysfunctional Interactions

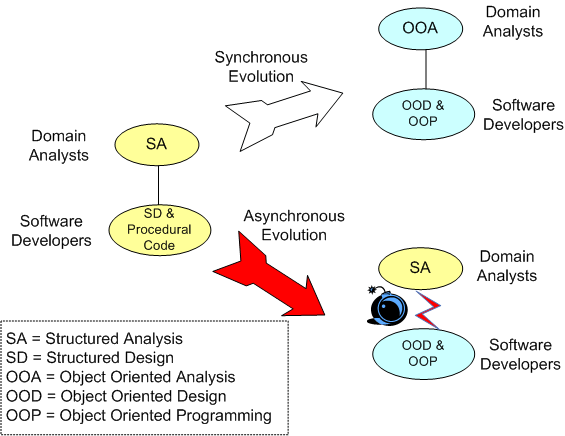

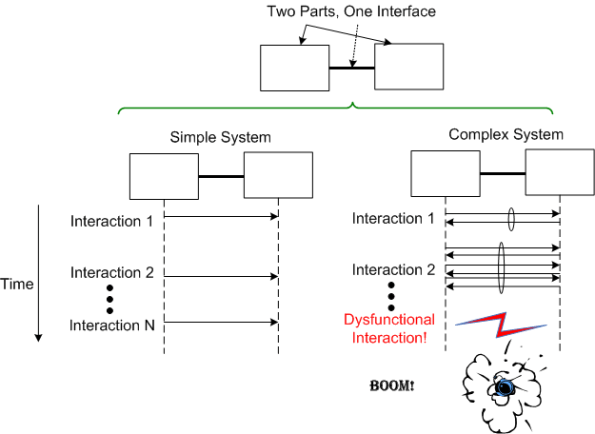

In “Engineering A Safer World“, Nancy Leveson states that dysfunctional interactions between system parts play a bigger role in accidents than individual part failures. Relative to yesterday’s systems, today’s systems contain many more parts. But because of manufacturing advances, each part is much more reliable than it used to be.

A consequence of adding more parts to a system is that the number of potential connections and interactions between parts starts exploding fast. Hence, there’s a greater chance of one dysfunctional interaction crashing the whole system – even whilst the individual parts and communication links continue to operate reliably.
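To put rough numbers on that explosion, assume the worst case, where any part may interact with any other part. The count of potential pairwise connections is then n(n-1)/2 for n parts. That "every pair can connect" assumption is mine, used as an upper bound; real designs usually wire up far fewer pairs.

```python
from math import comb


def potential_connections(parts: int) -> int:
    """Upper bound on pairwise connections: choose 2 of `parts`, i.e. n*(n-1)/2."""
    return comb(parts, 2)


if __name__ == "__main__":
    for n in (2, 5, 10, 50, 100):
        print(f"{n:>3} parts -> {potential_connections(n):>4} potential connections")
```

Going from 10 parts to 100 parts multiplies the part count by 10 but the potential connections by 110 (45 to 4950), which is why the interactions, not the parts, end up dominating the analysis.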

Even with a “simple” two-part system, if its designed-in purpose requires many rich and interdependent interactions to be performed over the single interface, watch out. A single dysfunctional interaction can cause the system to seize up and stop producing the emergent behavior it was designed to provide.

So, what’s the lesson here for system designers? It’s two-fold. Minimize the number of interfaces in your design and, more importantly, limit the number and types of exchanges over each interface to only those that are required to fulfill the system’s purpose. Of course, if no one knows what’s required (which is the number one cause of unsuccessful systems), then you’re hosed no matter what. D’oh!

Out With The Old And In With The New

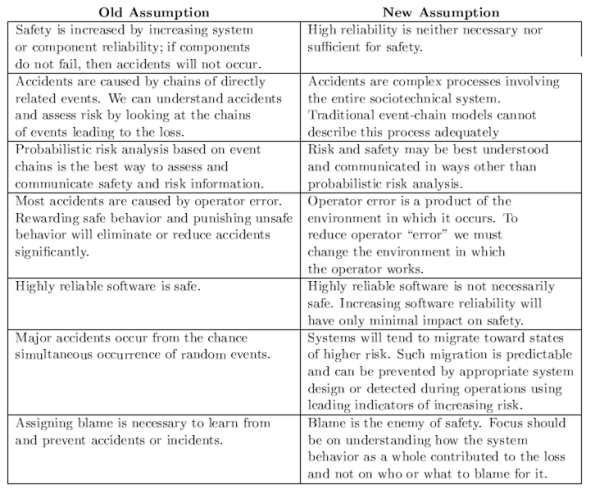

Nancy Leveson has a new book coming out that’s titled “Engineering A Safer World” (the full draft of the book is available here: EASW). In the beginning of the book, Ms. Leveson asserts that the conventional assumptions, theory, and techniques (FMEA == Failure Modes and Effects Analysis, FTA == Fault Tree Analysis, PRA == Probabilistic Risk Assessment) for analyzing accidents and building safe systems are antiquated and obsolete.

The expert, old-guard mindset in the field of safety engineering is still stuck on the 20th century notion that systems are aggregations of relatively simple, electro-mechanical parts and interfaces. Hence, the steadfast fixation on FMEA, FTA, and PRA. On the contrary, most of the 21st century safety-critical systems are now designed as massive, distributed, software-intensive systems.

As a result of this emerging, brave new world, Ms. Leveson starts off her book by challenging the flat-earth assumptions of yesteryear:

Note that Ms. Leveson tears down the former truth of reliability == system_safety. After proposing her set of new assumptions, Ms. Leveson goes on to develop a new model, theory, and set of techniques for accident analysis and hazard prevention.

Since the subject of safety-critical systems interests me greatly, I plan to write more about her novel approach as I continue to progress through the book. I hope you’ll join me on this new learning adventure.

Hindsight Bias

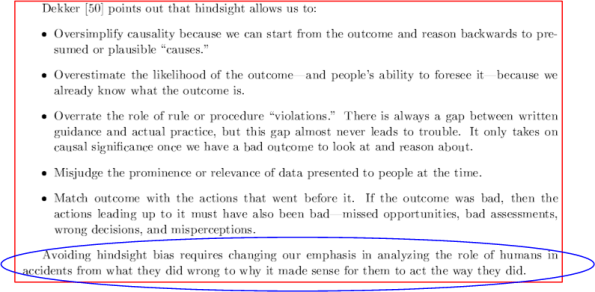

In Nancy Leveson’s forthcoming book, “Engineering A Safer World”, she provides the following attributes of Hindsight Bias (a.k.a. “hindsight is always 20-20”):

So THAT’s why Bulldozer00 is always 100% right!

I find that the text in the blue ellipse is very insightful. If one switches his/her analysis slant from “what they did wrong” to “why it made sense at the time“, one’s emotional reaction will soften toward “those who are responsible for the mess“.

BD00’s attitude toward FUBAR situations, which used to be anger and frustration, has changed to wonder and curiosity. At some serendipitous point along the line, BD00 finally came to the realization that since borgs exhibit emergent behavior that can’t be traced back to a single source, tis better to laugh than cry.

Organizations often behave worse than any member would – Fred Brooks