Archive

Misapplication Of Partially Mastered Ideas

Because the time investment required to become proficient with a new, complex, and powerful technology tool can be quite large, the decision to design C++ as a superset of C was not only a boon to the language’s uptake, but a boon to commercial companies too – most of which developed their product software in C at the time of C++’s introduction. Bjarne Stroustrup’s decision killed those two birds with one stone because C++ allowed a gradual transition from the well-known C procedural style of programming to three new, up-and-coming styles at the time: object-oriented programming, generic programming, and data abstraction. As Mr. Stroustrup says in D&E:

Companies simply can’t afford to have significant numbers of programmers unproductive while they are learning a new language. Nor can they afford projects that fail because programmers over enthusiastically misapply partially mastered new ideas.

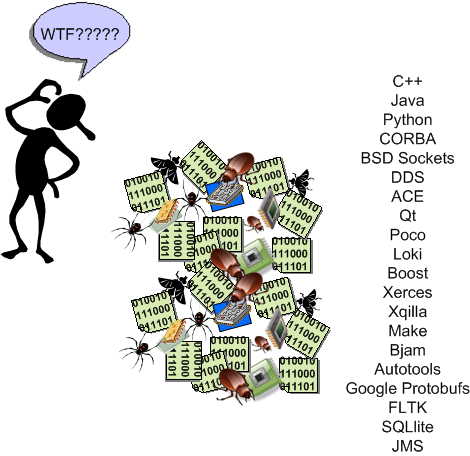

That last sentence in Bjarne’s quote doesn’t just apply to programming languages, but to big and powerful libraries of functionality available for a language too. It’s one challenge to understand and master a language’s technical details and idioms, but another to learn network programming APIs (CORBA, DDS, JMS, etc), XML APIs, SQL APIs, GUI APIs, concurrency APIs, security APIs, etc. Thus, the investment dilemma continues:

I can’t afford to continuously train my programming workforce, but if I don’t, they’ll unwittingly implement features as mini booby traps in half-learned technologies that will cause my maintenance costs to skyrocket.

BD00 maintains that most companies aren’t even aware of this ongoing dilemma – which gets worse as the complexity and diversity of their product portfolio rises. Because of this innocent, but real, ignorance:

- they don’t design and implement continuous training plans for targeted technologies,

- they don’t actively control which technologies get introduced “through the back door” and get baked into their products’ infrastructure, so they end up with a cacophony of duplicated ways of implementing the same feature in different code bases,

- their software maintenance costs keep rising and they have no idea why, or they attribute the rise to insignificant causes and “fix” the wrong problems.

I hate when that happens. Don’t you?

Highly Available Systems == Scalable Systems

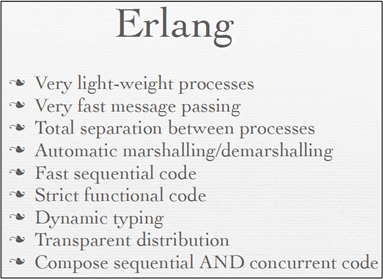

In this QCon talk, “Building Highly Available Systems In Erlang”, Erlang programming language creator and highly entertaining speaker Joe Armstrong asserts that if you build a highly available software system, then scalability comes along for “free” with that system. Say what? At first, I wanted to ask Joe what he was smoking, but after reflecting on his assertion and his supporting evidence, I think he’s right.

In his inimitable presentation, Joe postulates that there are 6 properties of Highly Available (HA) systems:

- Isolation (of modules from each other – a module crash can’t crash other modules).

- Concurrency (need at least two computers in the system so that when one crashes, you can fix it while the redundant one keeps on truckin’).

- Failure Detection (in order to fix a failure at its point of origin, you gotta be able to detect it first).

- Fault Identification (need post-failure info that allows you to zero in on the cause and fix it quickly).

- Live Code Upgrade (for zero downtime, need to be able to hot-swap in code for either evolution or bug fixes).

- Stable Storage (multiple copies of data; distribution to avoid a single point of failure)

By design, all 6 HA rules are directly supported within the Erlang language. No, not in external libraries/frameworks/toolkits, but in the language itself:

- Isolation: Erlang processes are isolated from each other by the VM (Virtual Machine); one process cannot damage another and processes have no shared memory (look, no locks mom!).

- Concurrency: All spawned Erlang processes run in parallel – either virtually on one CPU, or really, on multiple cores and processor nodes.

- Failure Detection: An Erlang process can tell the VM that it wants to detect failures in the processes it spawns. When a parent process spawns a child process, in one line of code it can “link” to the child and be auto-notified by the VM of a crash.

- Fault Identification: In Erlang, out-of-band error signals containing error descriptors are propagated to linked parent processes at runtime.

- Live Code Upgrade: Erlang application code can be modified in real-time as it runs (no, it’s not magic!)

- Stable Storage: Erlang contains a highly configurable, comically named database called “Mnesia”.

The punch line is that systems composed of entities that are isolated (property 1) and concurrently runnable (property 2) are automatically scalable. Agree?
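If you don’t speak Erlang, here is a rough analogy for the Isolation and Failure Detection properties using plain OS processes in C++. It is only a sketch and it assumes a POSIX system: it is not Erlang, it is not Joe’s code, and OS processes are far heavier than Erlang’s. The names `run_isolated` and `ChildOutcome` are made up for illustration.

```cpp
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

#include <cassert>
#include <cstdlib>
#include <functional>
#include <string>

// What the supervising (parent) process learns about one child.
struct ChildOutcome {
    bool crashed;        // true if the child died from a signal or exited non-zero
    std::string reason;  // coarse fault description for the supervisor
};

// Hypothetical helper: runs `work` in a separate OS process and waits for it.
// The child gets its own copy of the address space, so a crash in `work`
// cannot corrupt the parent (isolation); the parent learns about the crash
// from the exit status reported by waitpid (failure detection).
ChildOutcome run_isolated(const std::function<int()>& work) {
    assert(work && "work must be a callable target");

    const pid_t pid = fork();
    if (pid < 0) {
        return {true, "fork failed"};
    }
    if (pid == 0) {
        // Child process: never return into the parent's call stack.
        try {
            _exit(work());
        } catch (...) {
            _exit(EXIT_FAILURE);
        }
    }

    // Parent process: block until the child terminates, then classify how.
    int status = 0;
    if (waitpid(pid, &status, 0) < 0) {
        return {true, "waitpid failed"};
    }
    if (WIFSIGNALED(status)) {
        return {true, "killed by signal " + std::to_string(WTERMSIG(status))};
    }
    if (WIFEXITED(status) && WEXITSTATUS(status) != 0) {
        return {true, "exit code " + std::to_string(WEXITSTATUS(status))};
    }
    return {false, "normal exit"};
}
```

Calling `run_isolated([] { std::abort(); return 0; })` leaves the caller alive and hands back an outcome with `crashed` set. That is the same shape as a linked parent getting notified of a child crash, minus everything else the Erlang VM does for you.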

Find The Bug

The part of the conclusion in the green box below for 32-bit Linux/GCC (g++) is wrong. A “long long” type is 64 bits wide on that platform combo. If you can read the small and blurry text in the dang thing, can you find the simple logical bug in the five-line program that caused the erroneous declaration? And yes, it was the infallible BD00 who made this mess.
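The buggy program itself lives only in the picture, so the snippet below does not reproduce it or give away the answer. It is just a minimal sketch of one way to check a type’s width, with the helper names `bits_in` and `describe` invented for illustration.

```cpp
#include <cassert>
#include <climits>
#include <cstddef>
#include <string>

// Width of T in bits: sizeof counts bytes, CHAR_BIT is the number of bits per byte.
template <typename T>
constexpr std::size_t bits_in() {
    return sizeof(T) * CHAR_BIT;
}

// Since C++11 the standard requires long long to hold at least 64 bits,
// so this compiles on any conforming platform, 32-bit Linux/g++ included.
static_assert(bits_in<long long>() >= 64, "long long must be at least 64 bits");

// Builds a report line of the form "<name>: <N> bits" for type T.
template <typename T>
std::string describe(const char* name) {
    assert(name != nullptr);
    return std::string(name) + ": " + std::to_string(bits_in<T>()) + " bits";
}
```

Printing `describe<long long>("long long")` and `describe<long>("long")` on the platform in question is a quick way to confirm what the compiler really thinks, rather than trusting a blurry green box.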

Aligned On A Misalignment

After recently tweeting this:

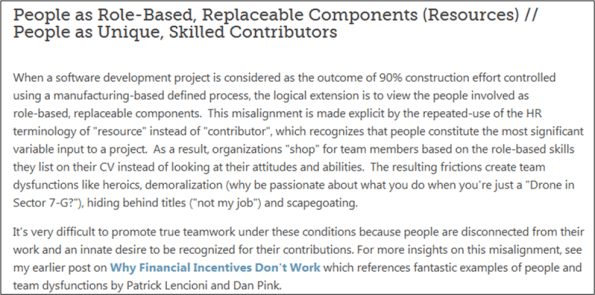

Chris Chapman tweeted this link to me: “Understanding Misalignments in Software Development Projects”. Lo and behold, the third “misalignment” on the page reads:

Chris and I seem to be aligned on this misalignment.

Nickels And Dimes

In mediocre 20th century orgs, some ambitious managers are always trying to get something out of their DICs for nothing so that their personal project performance metrics “look good” to the chieftains in the head shed. Nickel-and-diming “human resources” by:

- calling pre-work, lunchtime, or post-work meetings,

- texting for status on nights/weekends,

- adding work in the middle of a project without extending schedule or budget,

- expecting sustained, long term overtime without offering to pay for it,

- not acknowledging overtime hours,

- “stopping” by often to see “how you’re doing” without asking if they can help

does not go unnoticed. Well, it doesn’t go unnoticed by the supposed dumbos in the DICforce, but it does conveniently go unnoticed and unquestioned by the dudes in the head shed.

What other “nickel and dime practices” for getting something for nothing can you conjure up?

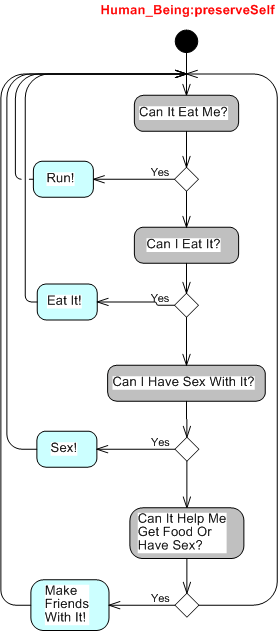

Human_Being:preserveSelf

As the UML sequence diagram below shows, an “unnamed” Nature object with an infinite lifeline asynchronously creates and, uh, kills Human_Being objects at will. Sorry about that.

So, what’s this preserveSelf routine that we loop on until nature kicks our bucket? I’m glad you asked:

Have a nice day! 🙂

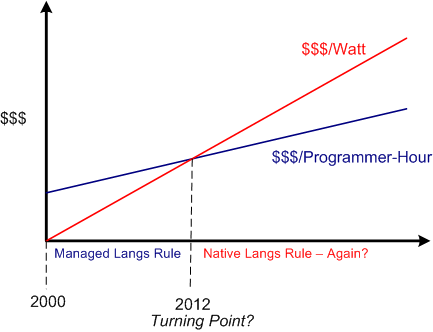

Performance Per Watt

Recently, I concocted a blog post on Herb Sutter’s assertion that native languages are making a comeback due to power costs usurping programming labor costs as the dominant financial drain in software development. The writer of this InfoWorld post seems to agree:

But now that Intel has decided to focus on performance per watt, as opposed to pure computational performance, it’s a very different ball game. – Bill Snyder

Since hardware developers like Intel have shifted their development focus towards performance per watt, do you think software development orgs will follow by shifting from managed languages (where the minimization of labor costs is king) to native languages (where the minimization of CPU and memory usage is king)?

Hell, I heard Facebook chief research scientist Andrei Alexandrescu (admittedly a native language advocate for C++ and D) mention the never-used-before “users per watt” metric in a recent interview. So, maybe some companies are already onboard with this “paradigm shift”?

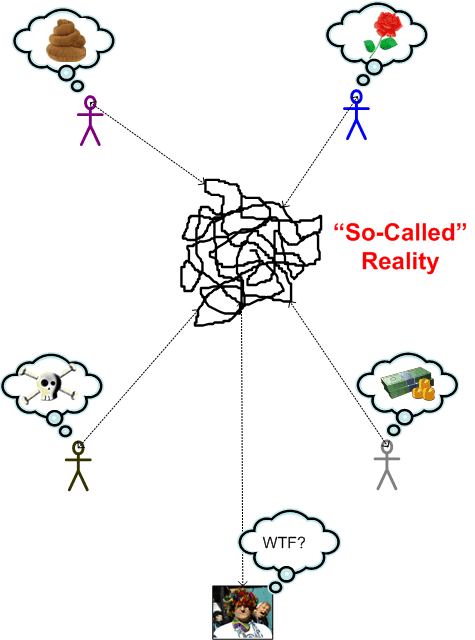

So-Called Reality

Your Fork, Sir

To that dumbass BD00 simpleton, it’s simple and clear cut. People don’t like to be told how to do their work by people who’ve never done the work themselves and, thus, don’t understand what it takes. Orgs that insist on maintaining groups whose sole purpose is to insert extra tasks/processes/meetings/forms/checklists of dubious “added-value” into the workpath foster mistrust, grudging compliance, blown schedules, and unnecessary cost incursion. It certainly doesn’t bring out the best in their people, dontcha think?

You would think that presenting “certified” obstacle-inserters with real industry-based data implicating the cost-inefficiency of their imposed requirements on value-creation teams might cause them to pause and rethink their position, right? Fuggedaboud it. All it does, no matter how gently you break the news, is cause them to dig in their heels, because it threatens the perceived importance of their livelihood.

Of course, this post, like all others on this bogus blawg, is a delusional distortion of so-called reality. No?