
Open, Closed, Inquiring

“Every man, wherever he goes, is encompassed by a cloud of comforting convictions, which move with him like flies on a summer day.” – Bertrand Russell

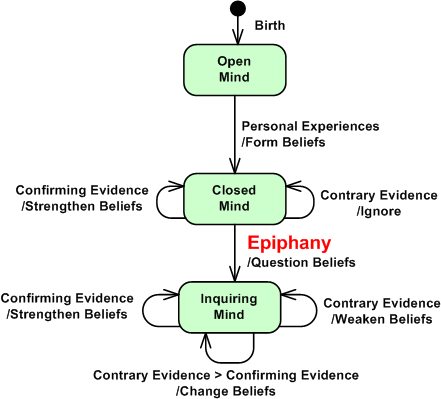

Out of the chute, so to speak, we’re all born with open minds. As we age and accumulate one experience after another, we naturally start forming beliefs based on those experiences. The experiences of other individuals (like our friends and parents) and institutions (like our schools, our corpocracies, and our government) are also impressed upon us. The more our new experiences resemble our previous ones, the more attached we become to our beliefs. Without knowing it, we’ve started to construct our own very personal “unshakable cognitive burden” (UCB) from the ground up.

As our attachment to (at least some of) those beliefs hardens through exposure to more and more confirming evidence, our minds close up and we start suffering more and more. To preserve our hard-earned sense of safety and security, we tend to conveniently ignore, or violently reject, disconfirming evidence. Each subsequent experience causes a nearly instantaneous transition out of, and back into, the closed mind state. Once a core belief (the earth is flat, the sun revolves around the earth, “they” are always right, “—-ism” is infallible) has hardened, intellectual and spiritual growth stops. Stasis sets in. Bummer.

So, how does one break the infinite loop of self-transitions out of, and then back into, the closed UCB mind state? Does another, more flexible state exist? I think one may exist: the “Inquiring Mind” state. But I don’t have a clue how to make the jump to get there. In this state, beliefs still exist but our attachment to them is not absolute. Our level of attachment is fluid and ever-changing. As a consequence, our suffering, and more importantly, the suffering of those around us, decreases. The world becomes a kinder and gentler place to live in. We start to recognize our connectedness to all “things” and we empathize with people who still hold fast to their core beliefs.

The state machine below shows one speculative way out of the closed mind state and into the inquiring mind state – the experience of an instantaneous, life-changing epiphany. It’s speculation on my part because I don’t know squat and it’s just a belief that is a brick in my UCB.
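For the programmers in the audience, here is a minimal Python sketch of that state machine. The three state names come from the post; the event names (`CONFIRMING_EXPERIENCE`, `DISCONFIRMING_EXPERIENCE`, `EPIPHANY`) and the transition table are my own hypothetical reading of the idea, not a transcription of the figure.

```python
from enum import Enum, auto


class Mind(Enum):
    OPEN = auto()
    CLOSED = auto()
    INQUIRING = auto()


class Event(Enum):
    # Hypothetical event names; the post only names the states.
    CONFIRMING_EXPERIENCE = auto()
    DISCONFIRMING_EXPERIENCE = auto()
    EPIPHANY = auto()


# (current state, event) -> next state.
# Any pair not listed is a self-transition: out of the state and right back in.
TRANSITIONS = {
    (Mind.OPEN, Event.CONFIRMING_EXPERIENCE): Mind.CLOSED,
    (Mind.CLOSED, Event.EPIPHANY): Mind.INQUIRING,
}


def step(state: Mind, event: Event) -> Mind:
    """Return the next state; unlisted (state, event) pairs loop back to `state`."""
    return TRANSITIONS.get((state, event), state)


def run(events: list[Event], start: Mind = Mind.OPEN) -> Mind:
    """Feed a sequence of experiences through the machine and return the final state."""
    state = start
    for event in events:
        state = step(state, event)
    return state
```

Keeping the transitions in a dictionary makes the single speculative escape hatch (`CLOSED` + `EPIPHANY`) easy to spot; everything else in the closed state is a self-loop, which is the whole point of the post.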

Initiative Initiation

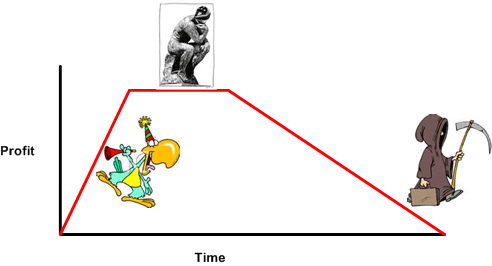

Assume that the graph below describes the rise and fall of a hypothetical CCH (Command and Control Hierarchical) business. During the party-time phase of increasing profits (whoo hoo!), the CCH corpocrats in charge pat themselves on the back, stuff their pockets, and slowly inflate their heads with bravado and delusions of infallibility.

In order to extend the increasing profit trajectory, an undetectable status-quo-preserving mindset slowly but surely kicks in. Hell, if it ain’t broke, don’t touch the damn thang. Since what the CCH (so-called) leadership is doing is working, any individual or group from within or without the cathedral walls who tries to deviate significantly from the norm is swiftly “dealt with” by the corpocrats in charge. Everything needs to get approved by a gauntlet of “important” people. However, while the shackles are being tightened and the ability to scale for success is being snuffed, the external environment keeps changing relentlessly in accordance with the second law of thermodynamics. Profit starts eroding and tension starts ratcheting upwards. Out of fear of annihilation, the cuffs are tightened further and the death dive has begun. Bummer.

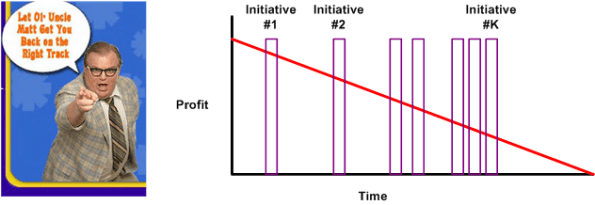

During the free fall to obscurity, the now brain-dead and immobile corpocrats in charge start “taking aggressive action” to stem the flow of red ink. Platitudes and Matt Foley-like motivational speeches are foisted upon the DICs (Dweebs In the Cellar) in frantic attempts to self-medicate away the pain of stasis and failure. Initiatives with cute and inspiring names are started but never finished (because it takes real hands-on leadership, sweat, and work to follow through). As corposclerosis accelerates, silver-bullet-bearing consultants are brought in and the frequency of initiative initiation increases. Calls to hold “them” accountable pervade the corpocracy from the top down and from the bottom up.

After being hammered by pleas to “improve performance” and being pounded by the endless tsunamis of hollow initiatives, the DICs disconnect and distance themselves from the lunacy being doled out by the omnipotent dudes in the politburo. Since the DICs expect the corpocrats to effect the “turnaround” and the corpocrats expect the DICs to strap on their Nikes and “just do it”, no one takes ownership and nothing of substance changes. As you might surmise, it’s a Shakespearean tragedy with no happy ending. Bummer squared.

Leaderless CCHs deserve what they get: a fearful, disconnected workforce and a roller coaster ride to oblivion.

Targeted Ire

“What we need is more people to lose their temper in public.” – Watts Wacker

When you’re dissatisfied with a stagnant, risk-averse, and status-quo-loving bureaucratic group, how do you blow off steam without alienating or intimidating the few people who help you do your job better and the people you are committed to helping do their jobs better? One approach, which doesn’t work but is incredibly hard to abandon, is “targeted ire”.

When I perceive smug, fat-headed executives and managers (of all types) talking up a storm, sucking more out of the org than they put in, and doing nothing of substance to improve everyone’s performance, it’s hard for me to “act professionally” (lol!) and keep my freakin’ mouth shut. In the back of my tortured mind, I often hear a faint and fearful voice saying “STFU you idiot”. Sadly, I find it incredibly hard, if not impossible, to follow that advice. Besides the ever-present “fear of excommunication”, I think the fact that I don’t aspire to become a self-important, meeting-loving, and game-playing corpocrat drives my self-destructive behavior. Bummer… or not?

“Never apologize for showing feeling. When you do so, you apologize for the truth.” – Benjamin Disraeli

So, how do you express your dissatisfaction with a stationary and fading organization when the world is crying out for movement and emergence? Do you do anything about it? Do you assume that you’re powerless, ignore your passion, and force yourself to STFU? Do you put on “the mask of political correctness” such that your potentially sacred-cow-busting ideas and thoughts get obscured by all the sugar that you coat them with? Are you paralyzed by fear? What do you advise?

Scalability

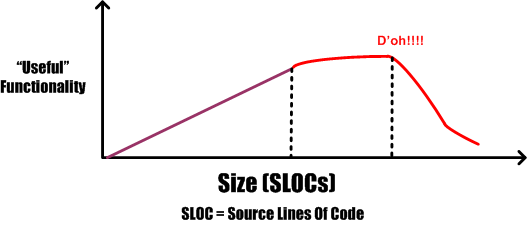

The other day, a friend suggested plotting “functionality versus size” as a potentially meaningful and actionable measure of software development process prowess. The figure below is an unscientific attempt to generically expand on his idea.

Assume that the graph represents the efficiency of three different and unknown companies (note: since I don’t know squat and I am known for “making stuff up”, take the implications of the graph with a grain of salt). Because it’s well known by industry experts that the complexity of a software-intensive product increases at a much faster rate than size, one would expect the “law of diminishing returns” to kick in at some point. Now, assume that the inflection point where the law snaps into action is represented by the intersection of the three traces in the graph. The red company’s performance clearly shows the deterioration in efficiency due to the law kicking in. However, the other two companies seem to be defying the law.
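One common, admittedly crude way to see why complexity can outrun size is to count the potential pairwise interconnections among n components, which is n(n-1)/2. The sketch below assumes that this count is a fair stand-in for complexity; it only illustrates quadratic growth and is not a model of the three companies in the graph.

```python
def potential_interconnections(n_components: int) -> int:
    """Number of distinct component pairs that could interact: n(n-1)/2."""
    if n_components < 0:
        raise ValueError("n_components must be non-negative")
    return n_components * (n_components - 1) // 2


def growth_table(sizes: list[int]) -> list[tuple[int, int, float]]:
    """For each size, return (size, potential links, potential links per component)."""
    rows = []
    for n in sizes:
        links = potential_interconnections(n)
        per_component = links / n if n else 0.0
        rows.append((n, links, per_component))
    return rows


if __name__ == "__main__":
    for n, links, ratio in growth_table([10, 100, 1000]):
        print(f"{n:>5} components -> {links:>7} potential links ({ratio:.1f} per component)")
```

Ten times more components means roughly a hundred times more potential links, so any process that has to “touch” each link (reviews, approvals, integration tests) gets heavier much faster than the product gets bigger.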

How can a supposedly natural law, which is unsentimental and totally indifferent to those under its influence, be violated? In a word, it’s “scalability”. The purple and green companies have developed the practices, skills, and abilities to continuously improve their software development processes in order to keep up with the difficulty of creating larger and more complex products. Unlike the red company, their processes are minimal and flexible so that they can be easily changed as bigger and bigger products are built.

Either quantitatively or qualitatively, all growing companies that employ unscalable development processes eventually detect that they’ve crossed the inflection point – after the fact. Most of these post-crossing discoverers panic and do the exact opposite of what they need to do to make their processes scalable. They pile on more practices, procedures, forms-for-approval, status meetings, and oversight (a.k.a. managers) in a misguided attempt to reverse deteriorating performance. These ironic “process improvement” actions solidify and instill rigidity into the process. They handcuff and demoralize development teams at best, and trigger a second inflection point at worst:

“More meetings plus more documentation plus more management does not equal more success.” – NASA SEL

Is your process scalable? If so, what specific attributes make it scalable? If not, are the results that you’re getting crying out for scalability?

Percent Complete

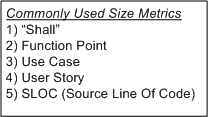

In order to communicate progress to someone who requires a quantitative number attached to it, some sort of consistent metric of accomplishment is needed. The table below lists some of the commonly used size metrics in the software development world.

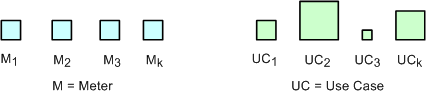

All of these metrics suffer to some extent from a “consistency” problem. The problem (as exemplified in the figure below) is that, unlike a standard unit such as the “meter”, the size and meaning of each unit differ from unit to unit within an application, and across applications. Of all the metrics in the list, the definition of what constitutes a “Function Point” unit seems to be the most rigorous, but it still suffers from a second, “translation” problem. The translation problem manifests when an analyst attempts to convert messy and ambiguous verbal/written user needs into neat and tidy requirement metrics using one of the units in the list.

Nevertheless, numerically trained MBA and PMI-certified managers and their higher-up executive bosses still obsessively cling to progress reports based on these illusory metrics. These STSJs (Status Takers and Schedule Jockeys) love to waste corpo time passing around status reports built on quicksand, like the “percent done” example below.

The problems with using graphs like this to “direct” a project are legion. First, it is assumed that the TNFP (Total Number of Function Points) is known with high accuracy at t=0 and, more erroneously, that its value stays constant throughout the project. A second problem with this “best practice” is that many, if not all, non-trivial software development projects do not progress linearly with the passage of time. The green trace in the graph is an example of a non-linearly progressing project.

Since most managers are sequential, mechanistic, left-brain-trained thinkers, they falsely conclude that all projects progress linearly. These bozelteens also operate under the meta-assumption that no initial assumptions are violated during project execution (regardless of what items they initially deposited in their “risk register” at t=0). They mistakenly arrive at conclusions like: “If it took you two weeks to get to 50% done, you will be expected to be done in two more weeks.” Bummer.
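To make the two hidden assumptions visible (a fixed TNFP and a constant completion rate), here is a minimal Python sketch of the STSJ arithmetic. The function names and the numbers are hypothetical, chosen only to mirror the “two weeks to 50%” example.

```python
def percent_complete(done_fp: float, tnfp: float) -> float:
    """Percent done, assuming the TNFP (total function points) is known and fixed."""
    return 100.0 * done_fp / tnfp


def naive_weeks_remaining(elapsed_weeks: float, done_fp: float, tnfp: float) -> float:
    """Linear extrapolation: assumes the rate observed so far holds until the end."""
    rate = done_fp / elapsed_weeks  # function points completed per week so far
    return (tnfp - done_fp) / rate


# The manager's view: 50 of 100 FP done after two weeks -> two more weeks.
print(percent_complete(50, 100), naive_weeks_remaining(2, 50, 100))

# Same team, same pace, but the TNFP turns out to be 160 once the requirements
# are better understood, so "50% done" was really 31.25% done.
print(percent_complete(50, 160), naive_weeks_remaining(2, 50, 160))
```

Even this toy ignores the second problem in the post: the rate itself rarely stays constant, so the real answer can be worse than the corrected one.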

Even after trashing the “percent complete” earned-value-management method in the previous paragraphs, I think there is a chance to acquire a long-term benefit by tracking progress this way. The benefit can accrue IF AND ONLY IF the method is not taken too seriously and it’s not used to impose undue stress upon the software creators and builders who are trying their best to balance time, cost, and quality. Performing the “percent complete” method over a bunch of projects and averaging the results can yield decent, but never 100% accurate, metrics that can be used to more effectively estimate future project performance. What do you think?
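One hypothetical way to read “averaging the results” is to record, for each finished project, how far the naive linear forecast missed, and then use the average miss as a correction factor for the next forecast. This is my interpretation, not an established method from the post, and the project history below is made up purely for illustration.

```python
from statistics import mean


def forecast_ratio(actual_weeks: float, naive_forecast_weeks: float) -> float:
    """How many times longer the project really took than the naive forecast said."""
    return actual_weeks / naive_forecast_weeks


def calibrated_forecast(naive_weeks: float, history: list[tuple[float, float]]) -> float:
    """Scale a naive forecast by the mean miss ratio of past (actual, forecast) pairs."""
    if not history:
        return naive_weeks  # no history yet: nothing to calibrate against
    correction = mean(forecast_ratio(actual, forecast) for actual, forecast in history)
    return naive_weeks * correction


# Hypothetical history: (actual total weeks, naive total-weeks forecast at the 50% mark).
past_projects = [(6.0, 4.0), (9.0, 6.0), (10.0, 5.0)]
print(calibrated_forecast(4.0, past_projects))
```

It is still an average over messy, inconsistent units, so it narrows the error band rather than eliminating it, and it is only as honest as the history fed into it.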

Pay As You Go

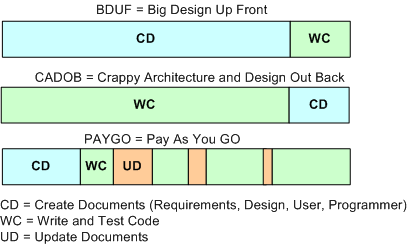

An age-old and recurring source of contention in software-intensive system development is the issue of deciding how much time to spend coding and how much time to spend writing documentation artifacts. The figure below shows three patterns of development: BDUF, CADOB, and PAYGO.

Prior to the agile “revolution”, most orgs spent a lot of time generating software documentation during the front end of a project. The thinking was that if you diligently mapped out and physically recorded your design beforehand, the subsequent coding, integration, and test phases would proceed smoothly and without a hitch. Bzzzt! BDUF (bee-duff) didn’t work out so well. Religiously following the BDUF method (a.k.a. the waterfall method) often led to massive schedule and cost overruns along with crappy and bug-infested software. Bummer.

In search of higher-quality and lower-cost results, a well-meaning group of experts conceived of the idea of “agile” software development. These agile proponents, and the legions of programmers soon to follow, pointed to the publicly visible crappy BDUF results and started evangelizing minimal documentation up front. However, since the vast majority of programmers aren’t good at writing anything but code, these legions of programmers internalized the agile advice to the extreme, turning the dials to “10”, as Kent Beck would say. Citing the agile luminaries, massive numbers of programmers recoiled at any request for up-front documentation. They happily started coding away, often leading to an unmaintainable shish-CADOB (Crappy Architecture and Design Out Back). Bozo managers, exclusively measured on schedule and cost performance by equally unenlightened corpocratic executives, jumped on this new silver bullet train. Bzzzt! Extreme agility hasn’t worked very well either. The extremist wing of the agilista party has in effect regressed back to the dark ages of hack-and-fix programming, hatching impressive disasters on par with the BDUF crews. In extreme agile projects where documentation is still required by customers, a set of hastily prepared, incorrect, and unusable design/user/maintenance artifacts (a.k.a. camouflage) is often produced at the tail end of the project. Boo hoo, and WAAAAGH!

As the previously presented figure illustrates, a third, hybrid pattern of software-intensive system development can be called PAYGO. In the PAYGO method, the coding/test and artifact-creation activities are interlaced and closely coupled throughout the development process. If done correctly, progressively less project time is spent “updating” the document set and more time is spent coding, integrating, and testing. More importantly, the code and documentation are diligently kept in sync with each other.

An important key to success in the PAYGO method is to keep the content of the document artifact set at a high enough level of abstraction “above” the source code so that it doesn’t need to be annoyingly changed with every little code change. A second key enabler to PAYGO success is the ability and (more importantly) the will to write usable technical documentation. Sadly, because the barriers to adoption are so high, I can’t imagine the PAYGO method being embraced now or in the future. Personally, I try to do it covertly, under the radar. But hey, don’t listen to me because I don’t have any credentials, I like to make stuff up, and I’ve been told by infallible and important people that I’m not fit to lead 🙂

The only way to learn how to write is by wrote.
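A small illustration of the PAYGO idea of keeping documentation at a “high enough level of abstraction” above the code, under my own assumptions: the documented part states what a component promises (its contract), not how it does it, so swapping the implementation does not force a document change. `MessageQueue`, `ListQueue`, `DequeQueue`, and `drain` are hypothetical names made up for this sketch.

```python
from collections import deque
from typing import Protocol


class MessageQueue(Protocol):
    """Documented contract (the kind of content worth keeping in the document set).

    - put() appends a message.
    - get() returns messages in first-in, first-out order.
    - get() raises IndexError when the queue is empty.

    Nothing here mentions lists, deques, or node layouts, so the text stays
    true when the implementation underneath it changes.
    """

    def put(self, msg: str) -> None: ...

    def get(self) -> str: ...


class ListQueue:
    """First implementation: a plain list (popping from the front is O(n))."""

    def __init__(self) -> None:
        self._items: list[str] = []

    def put(self, msg: str) -> None:
        self._items.append(msg)

    def get(self) -> str:
        if not self._items:
            raise IndexError("queue is empty")
        return self._items.pop(0)


class DequeQueue:
    """Refactored implementation: collections.deque (popping from the front is O(1))."""

    def __init__(self) -> None:
        self._items: deque[str] = deque()

    def put(self, msg: str) -> None:
        self._items.append(msg)

    def get(self) -> str:
        if not self._items:
            raise IndexError("queue is empty")
        return self._items.popleft()


def drain(queue: MessageQueue) -> list[str]:
    """Uses only the documented contract, so it works with either implementation."""
    messages = []
    while True:
        try:
            messages.append(queue.get())
        except IndexError:
            return messages
```

Swapping `ListQueue` for `DequeQueue` is a pure code change; the contract text does not move. Had the document said “messages are stored in a Python list”, every such refactoring would also have meant an annoying document update.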

Structure And The “ilities”

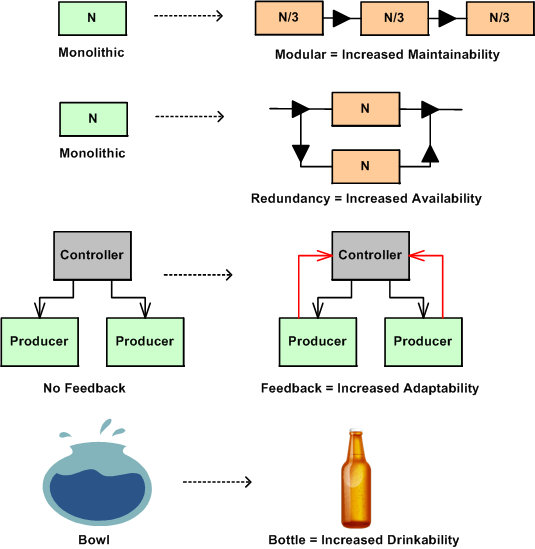

In nature, structure is an enabler or disabler of functional behavior. No hands – no grasping, no legs – no walking, no lungs – no living. Adding new functional components to a system enables new behavior and subtracting components disables behavior. Changing the arrangement of an existing system’s components and how they interconnect can also trade off qualities of behavior, affectionately called the “ilities”. Thus, changes in structure effect changes in behavior.

The figure below shows a few examples of a change to an “ility” due to a change in structure. Given the structure on the left, the refactored structure on the right leads to an increase in the “ility” listed under the new structure. However, in moving from left to right, a trade-off has been made for the gain in the desired “ility”. For the monolithic->modular case, a decrease in end-to-end response-ability due to added box-to-box delay has been traded off. For the monolithic->redundant case, a decrease in buyability due to the added purchase cost of the duplicate component has been introduced. For the no feedback->feedback case, an increase in complexity has been effected due to the added interfaces. For the bowl->bottle example, a decrease in fill-ability has occurred because of the decreased diameter of the fill interface port.
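Two of those trade-offs can be put in rough numbers. The sketch below assumes independent component failures and a fixed delay per box-to-box hop; the availability, cost, and delay values are hypothetical, picked only to show the direction of each trade.

```python
def redundant_availability(a: float, copies: int = 2) -> float:
    """Availability of `copies` independent components where any one of them suffices."""
    return 1.0 - (1.0 - a) ** copies


def modular_latency(processing_ms: float, modules: int, hop_ms: float) -> float:
    """End-to-end latency when work is split across a chain of modules.

    Assumes the total processing time is unchanged by the split and each
    box-to-box hop adds a fixed delay.
    """
    hops = max(modules - 1, 0)
    return processing_ms + hops * hop_ms


unit_cost, a = 1000.0, 0.99  # hypothetical cost and availability of one component

# Monolithic -> redundant: availability goes up, buyability (cost) goes down.
print(redundant_availability(a, 1), unit_cost)
print(redundant_availability(a, 2), 2 * unit_cost)

# Monolithic -> modular: response-ability goes down by one hop delay per interface.
print(modular_latency(10.0, 1, 0.5), modular_latency(10.0, 4, 0.5))
```

Going from “two nines” to “four nines” of availability sounds like a can’t-lose change until the purchase order doubles; likewise, the modular split buys changeability with a latency tax on every added interface.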

The plea of this story is: “to increase your aware-ability of the law of unintended consequences”. What you don’t know CAN hurt you. When you are bound and determined to institute what you think is a “can’t lose” change to a system that you own and/or control, make an effort to discover and uncover the ilities that will be sacrificed for those that you are attempting to instill in the system. This is especially true for socio-technical systems (do you know of any system that isn’t a socio-technical system?), where the influence of the technical components on system behavior is always dwarfed by the influence of the components composed of groups of diverse individuals.

Islands Of Sanity

Via InformIT’s Safari Books Online (The C++ Programming Language, Special Edition, ISBN 0201700735), I snipped this quote from Bjarne Stroustrup, the creator of the C++ programming language:

“AT&T Bell Laboratories made a major contribution to this by allowing me to share drafts of revised versions of the C++ reference manual with implementers and users. Because many of these people work for companies that could be seen as competing with AT&T, the significance of this contribution should not be underestimated. A less enlightened company could have caused major problems of language fragmentation simply by doing nothing.”

There are always islands of sanity in the massive sea of corpocratic insanity. AT&T’s behavior during the early development of C++ showed that it was one of those islands. Is AT&T still one of those rare anomalies today? I don’t have a clue.

Another Bjarne quote from the book is no less intriguing:

“In the early years, there was no C++ paper design; design, documentation, and implementation went on simultaneously. There was no ‘C++ project’ either, or a ‘C++ design committee.’ Throughout, C++ evolved to cope with problems encountered by users and as a result of discussions between my friends, my colleagues, and me.”

WTF? Direct communication with users? And how can it be possible that no PMI-trained generic project manager or big cheese executive was involved to lead Mr. Stroustrup to success? Bjarne should’ve been fired for not following the infallible, proven, repeatable, and continuously improving corpo product development process. No?

Dynamic “To Do” List

While making an iterative pass over the pages in my wiki space, I stumbled upon the goals for 2009 that I recorded on my “Dynamic To-Do List” page:

- Increase my question-to-statement ratio.

- Don’t comment on stuff that I don’t know anything about!

- Don’t give unsolicited opinions as much; especially when I know it will piss off someone with a higher rank.

- Listen without immediately formulating refutations and alternative views: resist the urge to “auto-reject”. Listen to understand, not to criticize.

- Ask “How can I help?” more often, especially to younger folks.

Stacks And Icebergs

The picture below attempts to communicate the explosive growth in software infrastructure complexity that has taken place over the past few decades. The growth in the “stack” has been driven by the need for bigger, more capable, and more complex software-intensive systems to solve social problems of commensurately growing complexity.

In the beginning there was relatively simple, “fixed” function hardware circuitry. Then, along came the programmable CPU (Central Processing Unit). Next, the need to program these CPUs to do “good things” led to the creation of “application software”. Moving forward, “operating system software” entered the picture to separate the arcane details and complexity of controlling the hardware from the application-specific problem solving software. Next, in order to keep up with the growing size of application software, the capability for application designers to spawn application tasks (same address space) and processes (separate address spaces) was designed into the operating system software. As the need to support geographically dispersed, distributed, and heterogeneous systems appeared, “communication middleware software” was developed. On and on we go as the arrow of time lurches forward.
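The task/process distinction in that paragraph is easy to see from Python, as a sketch: a thread (roughly, a “task” in the same address space) mutates the parent’s data, while a forked child process mutates only its own copy. This assumes a POSIX system, because it explicitly requests the `fork` start method, which is not available on Windows.

```python
import multiprocessing as mp
import threading

# Module-level state: lives in the address space of whichever process runs this code.
shared = {"count": 0}


def bump() -> None:
    shared["count"] += 1


def count_after_thread() -> int:
    """Run bump() in a thread: same address space, so the parent sees the change."""
    shared["count"] = 0
    worker = threading.Thread(target=bump)
    worker.start()
    worker.join()
    return shared["count"]


def count_after_process() -> int:
    """Run bump() in a forked child: separate address space, parent's copy untouched."""
    shared["count"] = 0
    ctx = mp.get_context("fork")  # POSIX-only start method
    child = ctx.Process(target=bump)
    child.start()
    child.join()
    return shared["count"]


if __name__ == "__main__":
    print("thread:", count_after_thread())    # the thread's increment is visible
    print("process:", count_after_process())  # the child's increment is not
```

Each layer of the stack adds a distinction like this one that someone, somewhere, has to actually understand.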

As hardware complexity and capability continue to grow rapidly, the complexity and size of the software stack also grow rapidly in an attempt to keep pace. The ability of the human mind (which takes eons to evolve compared to the rate of technology change) to comprehend and manage this complexity has been overwhelmed by the pace of growth in hardware and software infrastructure technology.

Thus, in order to appear “infallibly in control” and to avoid the hard work of “understanding” (which requires diligent study and knowledge acquisition), bozo managers tend to trivialize the development of software-intensive systems. To self-medicate against the pain of personal growth and development, these jokers tend to think of computer systems as simple black boxes. They camouflage their incompetence by pretending to have a “high level” of understanding. Because of this aversion to real work, these dudes have no problem committing their corpocracies to ridiculous and unattainable schedules in order to win “the business”. Have you ever heard the phrase “aggressive schedule”?

“You have to know a lot to be of help. It’s slow and tedious. You don’t have to know much to cause harm. It’s fast and instinctive.” – Rudolph Starkermann