Archive

Oppose A Thing

“Men often oppose a thing merely because they have had no agency in planning it, or because it may have been planned by those whom they dislike.” – Alexander Hamilton

If you buy into Hamilton’s quote, then you’ll realize that it explains all kinds of irrational behavior by those in charge at work. Another ditty that explains counterproductive behavior and ludicrous decision-making in mediocracies is:

“It’s not what you say, it’s how you say it.”

When someone is disliked by, or is brutally honest with, those in power, even the best ideas offered up by the perceived villain will be rejected. It doesn’t matter if an idea could potentially save the corpocracy tons of money or bring in new business; the idea will be killed in the cradle. Of course, many kinds of clever camouflage and pseudo-rational reasons will be given for the rejection, but the underlying truth is what Mr. Hamilton stated more than two hundred years ago.

Who says that business isn’t personal?

Continuous Husbandry

One definition of a system is “a collection of interacting elements designed to fulfill a purpose.” A well-known rule of thumb for designing robust and efficient social, technical, and socio-technical systems is:

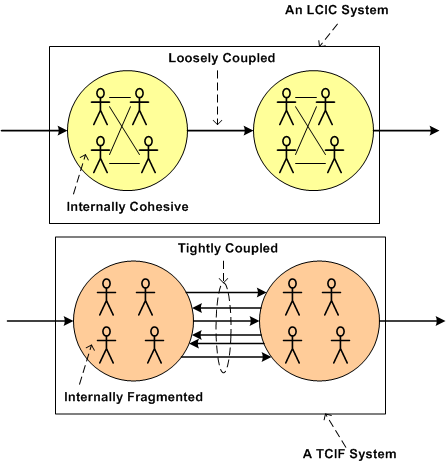

Keep your system elements Loosely Coupled and Internally Cohesive (LCIC)

The opposite of this golden rule is to design a system that has Tightly Coupled and Internally Fragmented (TCIF) elements. TCIF systems are rigid, inflexible, and tough to troubleshoot when the system malfunctions.
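To make the LCIC rule concrete for a technical system, here is a minimal C++ sketch, assuming a toy sensor-processing pipeline. The class names `SampleSource`, `FixedSource`, and `BlockAverager` are hypothetical, not taken from any real code base. Each class does exactly one job (internal cohesion), and the consumer knows its producer only through a one-function interface (loose coupling).

```cpp
#include <cassert>
#include <memory>
#include <numeric>
#include <utility>
#include <vector>

// Narrow interface: the only thing a consumer knows about a producer.
// Keeping this small is what keeps the elements loosely coupled.
class SampleSource {
public:
    virtual ~SampleSource() = default;
    virtual std::vector<double> nextBlock() = 0;
};

// Cohesive element #1: supplies a fixed block of samples and nothing else
// (a stand-in for a real sensor reader or a file replayer).
class FixedSource : public SampleSource {
public:
    explicit FixedSource(std::vector<double> data) : data_(std::move(data)) {}
    std::vector<double> nextBlock() override { return data_; }

private:
    std::vector<double> data_;
};

// Cohesive element #2: averages one block of samples and nothing else.
// It depends only on SampleSource, never on a concrete producer.
class BlockAverager {
public:
    explicit BlockAverager(std::unique_ptr<SampleSource> source)
        : source_(std::move(source)) {
        assert(source_ != nullptr);
    }

    double average() {
        const std::vector<double> block = source_->nextBlock();
        if (block.empty()) return 0.0;
        const double sum = std::accumulate(block.begin(), block.end(), 0.0);
        return sum / static_cast<double>(block.size());
    }

private:
    std::unique_ptr<SampleSource> source_;
};
```

Swapping `FixedSource` for a real sensor reader would require no change to `BlockAverager`. In a TCIF version, the averager would construct and poke at a concrete reader directly, so every change to the reader would ripple into it.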

Designing, building, testing, and deploying LCIC systems is not enough to ensure that the system’s purpose will be fulfilled over long periods of time. Because of the relentless increase in entropy dictated by the second law of thermodynamics, continuous husbandry (as my friend W. L. Livingston often says) is required to arrest the growth in entropy. Without husbandry, LCIC systems (like startup companies) morph into TCIF systems (like corpocracies). The transformation can be so slooow that it is undetectable – until it’s too late. In subtle LCIC-to-TCIF transformations, it takes a crisis to shake the system architect(s) into reality. In a sudden jolt of awareness, they realize that their cute and lovable baby has turned into an uncontrollable ogre capable of massive stakeholder destruction. Bummer.

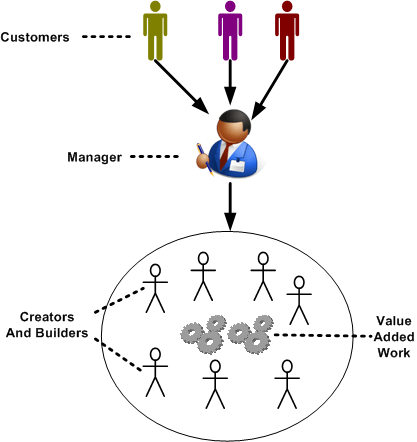

Layers Of Value Streams

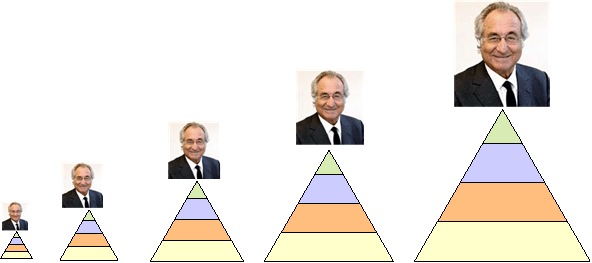

An organization of people assembled for a purpose runs on all cylinders when every layer in its control structure creates a value stream that puts more into the org than it takes out. The higher you go up the pyramid of privilege, the less visible the added value, but the greater its impact. In dysfunctional orgs, the upper layers don’t create any value and their impact is damaging to the whole. Like leeches, they suck the blood out of the org without contributing anything of substance.

It’s (or it should be) the responsibility of each upper layer to sample the value stream produced by the lower layer(s) to ensure continuous excellence and improvement. Every layer except the DICforce (at rock bottom, of course) can sample and measure any or all of the value streams below it.

In screwed-up and inefficient corpocracies where the upper layers are too lazy or incompetent to sample the lower layer value streams, the only value stream samplers are the customers. This means that if crap makes it out the door, they’re the ones who discover and report it. At worst, they don’t report it and silently blow off the org: they never buy anything from it again, and they tell all their peers to stay away from the crap factory. Meanwhile, everyone back at doo-doo ranch is asking each other: “Lucy, whuh hah-penned?”

Buffer Gone Awry

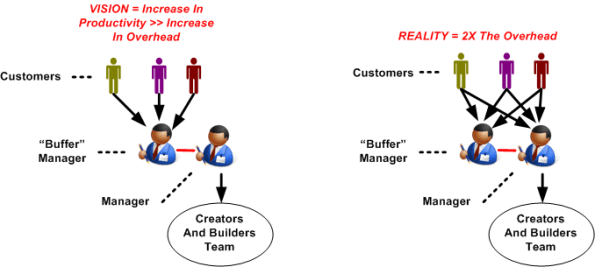

Assume that you’re a manager caught in the predicament shown in the figure. On a daily basis, you’re trying to coordinate bug fixes and new feature additions to your product while simultaneously getting hammered by internal and external customers with problem reports and new feature requests.

To reduce your workload and increase productivity, your meta-manager decides to add a “buffer” manager to filter and smooth out the customer-facing side of your job. As the left side of the figure shows, the hope is that the team’s increased productivity will offset the doubling of overhead costs associated with adding a second manager to the mix. However, when your customers find out that they now have two managers to whom they can voice their problems and needs, the situation on the right develops: your workload remains the same; you now have an additional interface with the buffer manager (who has less of a workload than you); and the overhead cost to the org doubles. Bummer.

Appliability

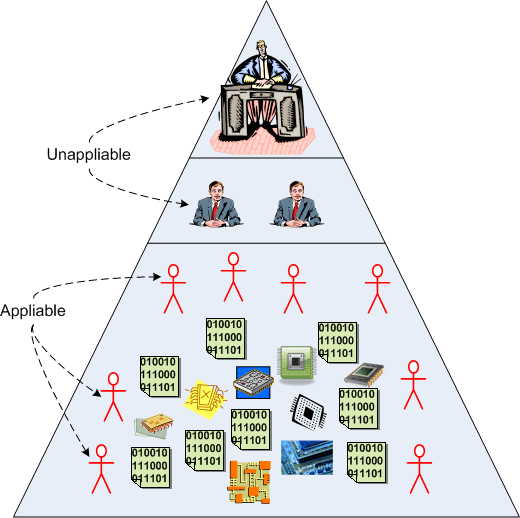

In the “good ole’ days”, products, along with the development and production processes used to create them, were much simpler. Accordingly, many front-line and second-tier managers were skilled enough to man the production lines when workers went on strike. Modern-day knowledge-intensive products, however, require both deep and broad know-what and know-how to be successful in the marketplace. In knowledge-intensive industries like software development, managers can no longer fill in for the workers, even if they were engineers just prior to promotion. That’s especially true in today’s fast-moving environment, where skills become obsolete as soon as they’re mastered. D’oh!

Another way of expressing the idea above is in the corpo lingo of “appliability”. For the most part, managers no longer have the skills to be appliable. They’re a pure overhead expense to the orgs they work for. Thus, unless they’re PHORs, they’re a drain on profits. The next time a non-PHOR manager tells you that “you’re expensive to employ”, retort “at least I’m appliable” – if you dare.

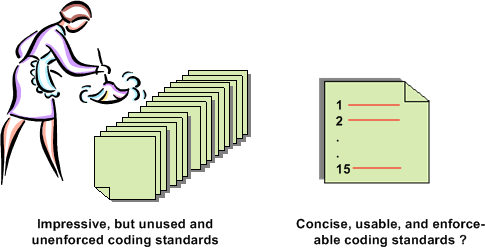

Project-Specific Coding Guidelines

I’m about to embark on the development of a distributed, scalable, data-centric, real-time sensor system. Since some technical risks, especially meeting CPU loading and latency requirements, are disturbingly high at this point, a team of three of us (two software engineers and one system engineer) is going to prototype several CPU-intensive system functions.

Assuming that our prototyping effort proves that our proposed application-layer functional architecture is feasible, a much larger team will be applied to the effort in an attempt to meet the schedule. To promote consistency across the code base, ease the on-boarding of new team members, and lower long-term maintenance costs, I’m proposing the following 15 design and C++ coding guidelines:

- Minimize macro usage.

- Use STL containers instead of homegrown ones.

- No nonessential third-party libraries, with the exception of Boost.

- Strive for a clear and intuitive namespace-to-directory mapping.

- Use a consistent, uniform communication scheme between application processes. Deviations must be justified.

- Use the same threading library (Boost) when multi-threading is needed within a process.

- Design a little, code a little, unit test a little, integrate a little, document a little. Repeat.

- Avoid casting. When casting is unavoidable, use the C++ cast operators so that the cast hacks stick out like a sore thumb.

- No naked pointers when heap memory is required. Use C++ auto_ptr and Boost smart pointers.

- Strive for pure layering. Document all non-adjacent layer interface breaches and wrap all forays into OS-specific functionality.

- Strive for < 100 lines of code per member function.

- Strive for < 4 levels of if-then-else nesting and of inheritance tree depth.

- Minimize “using” directives; liberally employ “using” declarations to keep verbosity low.

- Run the code through a static code analyzer frequently.

- Strive for zero compiler warnings.
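Several of these guidelines can be shown together in one small sketch. It assumes a hypothetical `sensor::track` namespace (which, per the namespace-to-directory guideline, would live under `sensor/track/`) and a hypothetical `TrackStore` class, collapsed into one file for brevity. One deviation from the list: `std::auto_ptr` was deprecated in C++11 and removed in C++17, so the sketch uses `std::shared_ptr`, the standardized descendant of `boost::shared_ptr`.

```cpp
// Hypothetical sensor/track/track_store.cpp, collapsed into one file.
// Namespace sensor::track maps to directory sensor/track/.
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <map>
#include <memory>

namespace sensor {
namespace track {

// "using" declarations pull in only the names needed, instead of a
// blanket "using namespace std;" directive.
using std::map;
using std::shared_ptr;

// A typed, scoped constant instead of a #define macro.
constexpr std::size_t kMaxTracks = 1000;

struct Track {
    std::uint32_t id;
    double rangeMeters;
};

// Tracks are held by smart pointers in an STL container:
// no homegrown containers and no naked new/delete.
class TrackStore {
public:
    // Early returns keep the if-then-else nesting flat.
    bool add(std::uint32_t id, double rangeMeters) {
        if (tracks_.size() >= kMaxTracks) return false;  // store is full
        if (rangeMeters < 0.0) return false;             // invalid range
        if (tracks_.count(id) != 0) return false;        // duplicate id
        tracks_.emplace(id, std::make_shared<Track>(Track{id, rangeMeters}));
        return true;
    }

    shared_ptr<const Track> find(std::uint32_t id) const {
        const auto it = tracks_.find(id);
        if (it == tracks_.end()) return nullptr;
        return it->second;
    }

    // When a conversion is truly needed, the C++ cast operator makes
    // the "cast hack" easy to spot (and to grep for).
    int rangeKilometers(std::uint32_t id) const {
        const auto found = find(id);
        assert(found != nullptr);
        return static_cast<int>(found->rangeMeters / 1000.0);
    }

    std::size_t size() const { return tracks_.size(); }

private:
    map<std::uint32_t, shared_ptr<Track>> tracks_;
};

}  // namespace track
}  // namespace sensor
```

The three guard clauses in `add()` would otherwise be three nested if-then-else levels, which is exactly what the nesting guideline tries to head off.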

Notice that the list is short, (maybe) memorizable, and (maybe) enforceable. It’s an attempt to avoid imposing a 100-page tome of rules that nobody will read, let alone use or enforce. What do you think? What is YOUR list of 15?

Dude, Read It The Freakin’ First Time

Recently, Joe Manager asked me to estimate the resources (people and time) that I thought would be consumed in developing a large, computationally intensive, software-centric system. I dutifully did what was asked of me, posted a coarse, first-cut estimate on the company wiki for all to scrutinize, and sent Joe Manager a link to the page. Unsurprisingly (to me, at least), at the next “planning” meeting Joe asked me again, on-the-spot and in real-time, what it would take to do the job if I were to lead the project. Since my pre-meeting intuition had told me to bring a hard copy of my estimate to the meeting, I presented it to Mr. Manager and gently reminded him that I had forwarded it to him earlier, when he asked for it the (freakin’) first time.

Apparently, besides being forgetful, poor Joe wasn’t told of the not-so-secret policy that I’m not allowed to lead anyone, let alone a team on a large and costly software development project that could be pretty important to the company’s future. Plus, since Joe’s last name is “Manager”, isn’t he supposed to at least consider planning and leading the effort his own freakin’ self? Or is he just envisioning someone else struggling to get it done while he periodically samples status, rides the schedule, and watches from the grassy knoll?

In a bacon and eggs breakfast, the chicken is involved, but the pig is committed – Ken Schwaber

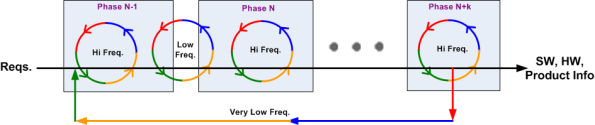

Iteration Frequency

Obviously, even the most iterative agile development process marches forward in time. Thus, at least to a small extent, every process exhibits some properties of the classic and much-maligned waterfall metaphor. On big projects, the schedule is necessarily partitioned into sequential time phases. The figure below (Reqs. = requirements, Freq. = frequency, HW = hardware, SW = software) attempts to model both forward progress and iteration as a function of time.

If the phases are designed to be internally cohesive and externally loosely coupled, and the project is managed correctly, the frequency of iteration triggered by the natural process of human learning and fixing mistakes is:

- high within a given project phase

- low between adjacent project phases

- very low between non-adjacent project phases
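The three iteration-frequency rules can be written down as a tiny classifier. This is a sketch under assumptions: phases are numbered in schedule order, and `loops[i][j]` is a hypothetical tally of rework loops observed between phase `i` and phase `j`. The second function checks whether such a tally shows the healthy pattern, where the average count per phase pair drops from within-phase to adjacent to non-adjacent.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <vector>

// Expected rework-loop frequency between two phases.
enum class IterationFreq { High = 0, Low = 1, VeryLow = 2 };

// Phases are numbered in schedule order (e.g. 0 = Reqs., 1 = design, ...).
IterationFreq expectedFrequency(int fromPhase, int toPhase) {
    assert(fromPhase >= 0 && toPhase >= 0);
    const int distance = std::abs(fromPhase - toPhase);
    if (distance == 0) return IterationFreq::High;  // within a phase
    if (distance == 1) return IterationFreq::Low;   // adjacent phases
    return IterationFreq::VeryLow;                  // non-adjacent phases
}

// loops[i][j] = rework loops observed from phase i back into phase j.
// Returns true if the average count per phase pair falls off with distance:
// within-phase > adjacent > non-adjacent.
bool followsExpectedPattern(const std::vector<std::vector<int>>& loops) {
    const std::size_t n = loops.size();
    assert(n >= 3);  // need at least one non-adjacent pair of phases
    double sum[3] = {0.0, 0.0, 0.0};
    int pairs[3] = {0, 0, 0};
    for (std::size_t i = 0; i < n; ++i) {
        assert(loops[i].size() == n);  // square phase-by-phase matrix
        for (std::size_t j = 0; j < n; ++j) {
            const auto k = static_cast<std::size_t>(expectedFrequency(
                static_cast<int>(i), static_cast<int>(j)));
            sum[k] += loops[i][j];
            ++pairs[k];
        }
    }
    const double within = sum[0] / pairs[0];
    const double adjacent = sum[1] / pairs[1];
    const double distant = sum[2] / pairs[2];
    return within > adjacent && adjacent > distant;
}
```

A tally dominated by off-diagonal entries (lots of late rework between distant phases) fails the check, which is the signature of the stupidly managed case described next.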

Of course, if the project is managed stupidly by explicitly or implicitly “disallowing” iterative learning loops in order to meet equally stupid and “aggressive” schedules handed down from the heavens, errors and mistakes will accumulate and weave themselves undetected into the product fabric – until the customer starts using the contraption. D’oh!

Keep A Tally

Starting today, keep a tally of the number of times that managers in your org say the words “schedule” and “quality” over the next month. At the end of the month, compute the ratio of schedule-to-quality pronouncements that you recorded: the SQR. I’ll bet you that at the end of the month, SQR >> 1.
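If your tally lives in meeting notes rather than on a scratch pad, the SQR arithmetic can be automated. A minimal sketch, assuming the notes are plain text and that only the exact words “schedule” and “quality” count (plural forms such as “schedules” are not counted); the function names are hypothetical.

```cpp
#include <cassert>
#include <cctype>
#include <optional>
#include <sstream>
#include <string>

// Counts whole-word, case-insensitive occurrences of `word` (given in
// lowercase) in `text`. Anything that is not a letter acts as a separator.
int countWord(const std::string& text, const std::string& word) {
    assert(!word.empty());
    std::string cleaned;
    cleaned.reserve(text.size());
    for (char c : text) {
        const auto uc = static_cast<unsigned char>(c);
        cleaned += std::isalpha(uc) ? static_cast<char>(std::tolower(uc)) : ' ';
    }
    std::istringstream in(cleaned);
    std::string token;
    int count = 0;
    while (in >> token) {
        if (token == word) ++count;
    }
    return count;
}

// SQR = "schedule" count / "quality" count.
// Empty result when "quality" never came up, since the ratio is undefined.
std::optional<double> scheduleToQualityRatio(const std::string& notes) {
    const int schedule = countWord(notes, "schedule");
    const int quality = countWord(notes, "quality");
    if (quality == 0) return std::nullopt;
    return static_cast<double>(schedule) / quality;
}
```

For example, notes reading “Schedule! The schedule is king. Quality later; schedule, schedule.” give an SQR of 4. An empty result while “schedule” did come up is the SQR >> 1 bet in its purest form.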

Ponerology’s Six Percent

From Wikipedia:

Ponerology is the name given by Polish psychiatrist Andrzej Łobaczewski to an interdisciplinary study of the causes of periods of social injustice. This discipline makes use of data from psychology, psychopathology, sociology, philosophy, and history to account for such phenomena as aggressive war, ethnic cleansing, genocide, and police states.

A like-minded friend recently pointed Łobaczewski’s book out to me. I read the preface, the foreword, and the first chapter for free on Google Books. In the first chapter, Andrzej claims that since the dawn of mankind, 6% of humanity has consisted of suave, clever, law-abiding psychopaths who have zero conscience – none, nada, zilch. The “no conscience” advantage that these people have had over the other 94% of the populace has allowed the wretches to commit the greatest number of man-made atrocities over the entire course of history. Andrzej also states that this advantage allows them to perpetually rise, undiscovered, to the highest levels of government and industry. Scary stuff, no?

Here are a couple of my favorite passages from the book:

Psychology is the only science in which the observer and the observed belong to the same species, even the same person in an act of introspection. That is why his natural world view of humans can be neither sufficiently universal nor completely true.

Whenever a society has become enslaved to others or to the rule of an overly-privileged class, psychology is the first discipline to suffer from censorship and incursions on the part of an administrative body which starts claiming the last word as to what represents scientific truth.