Archive

Reinventing The Wheel

In Federico Biancuzzi’s “Masterminds Of Programming”, UML co-creator Jim Rumbaugh states:

The computing field has a lot of people that think very well of themselves and seem to forget that there is any past to build upon. A lot of people keep reinventing things that have already been discovered. – Jim Rumbaugh

LOL! I understand what Jim’s saying, but there can be another reason for reinventing the wheel too. How many slightly different API versions of virtually identical “reusable” libraries are littered around your software development org? How many of them have you written and rewritten yourself?

If a library/module/component is poorly or “un” documented in terms of its design, external dependencies, and most importantly, usage examples, it t’aint gonna be reused. Even if the thang IS miraculously well documented, if the info is not integrated, organized, and easily accessible, it ain’t gonna be reused either. Both of these shortcomings, which are highly likely since most programmers don’t “do documentation” and managers don’t want to pay for non-camouflage documentation, guarantee reinventing the wheel over and over again.

Assume that you truly do want to reuse someone else’s code to save time, but all you have is the source code. You’re gonna have to pore through the mess to figure out what it offers, how it works, and what other components and libraries it depends on before you can consider using it “as is”. The larger and denser the component, the deeper and wider the inheritance tree, the more external dependencies, the more frustrated you’ll become and the less likely you’ll apply your brainpower and time to the task at hand. When that happens, Ta Dah, it’s time to roll your own – yet again. Hell, if you don’t document your own stuff, you might not even be able to eat your own dog food downstream. D’oh! I hate when that happens. And yes, it happens to me.

Read, Read, Read

To put it mildly, I’m not too fond of software project and “functional” software managers that don’t read code. Even worse, wanna-be-manager tweeners and lofty software “architects” who don’t read code are the pits. Note that I’m not demanding that these exalted ones write code, just actually RTFC (Read The F#@^&*! Code). Why? I thought you’d never ask…..

You see, I’m a believer in the “trust but verify” motto popularized by Ronald Reagan during the Cold War. The only pseudo-objective way to truly assess progress, consistency, and quality on a software project is to sample the product – you know, the code. If you know of a better way, then I’m all ears.

It’s not that I don’t trust people to try their best, it’s just that most hierarchical cultures are toxic by unintentional design, and that forces people to innocently cover up or camouflage a lack of progress when they fill out their “weekly status sheets” or verbally report progress at useless CYA (Cover Your Arse) meetings. Sadly, once DICs get appointed into elite manager and architect titles, they tend to leave their code reading and (especially) writing days behind. Of course, they’ve arrived (Hallelujah!) and they no longer have to do any “janitorial” work that can be done by fungible DORKs.

How about you? Do you think people in the roles of software project manager, software functional manager, and software architect should actively read code as part of their jobs?

In many companies, enterprise architects sit in an ivory tower without doing anything useful. – Ivar Jacobson

An Epic Heavyweight Battle

In this corner, we have Bjarne “No Yarn” Stroustrup, the father of C++. In the other corner, we have James “The Goose” Gosling, the father of Java. Ding, ding…… let’s get ready to ruuuumble!

“No Yarn” comes out swinging and throws the first haymaker:

Well, the Java designers—and probably the Java marketers even more so—emphasized OO to the point where it became absurd. When Java first appeared, claiming purity and simplicity, I predicted that if it succeeded Java would grow significantly in size and complexity. It did. For example, using casts to convert from Object when getting a value out of a container (e.g., (Apple)c.get(i)) is an absurd consequence of not being able to state what type the objects in the container is supposed have. It’s verbose and inefficient. Now Java has generics, so it’s just a bit slow. Other examples of increased language complexity (helping the programmer) are enumerations, reflection, and inner classes. The simple fact is that complexity will emerge somewhere, if not in the language definition, then in thousands of applications and libraries. Similarly, Java’s obsession with putting every algorithm (operation) into a class leads to absurdities like classes with no data consisting exclusively of static functions. There are reasons why math uses f(x) and f(x,y) rather than x.f(), x.f(y), and (x,y).f()—the latter is an attempt to express the idea of a “truly object-oriented method” of two arguments and to avoid the inherent asymmetry of x.f(y).

In an agile counter move, “The Goose” launches a monstrous left hook:

These days we’re beating the really good C and C++ compilers pretty much always. When you go to the dynamic compiler, you get two advantages when the compiler’s running right at the last moment. One is you know exactly what chipset you’re running on. So many times when people are compiling a piece of C code, they have to compile it to run on kind of the generic x86 architecture. Almost none of the binaries you get are particularly well tuned for any of them. When HotSpot runs, it knows exactly what chipset you’re running on. It knows exactly how the cache works. It knows exactly how the memory hierarchy works. It knows exactly how all the pipeline interlocks work in the CPU. It knows what instruction set extensions this chip has got. It optimizes for precisely what machine you’re on. Then the other half of it is that it actually sees the application as it’s running. It’s able to have statistics that know which things are important. It’s able to inline things that a C compiler could never do. The kind of stuff that gets inlined in the Java world is pretty amazing. Then you tack onto that the way the storage management works with the modern garbage collectors. With a modern garbage collector, storage allocation is extremely fast.

On the age old debate about naked pointers, “The Goose” unleashes a crippling blow to the left kidney:

Pointers in C++ are a disaster. They are just an invitation to errors. It’s not so much the implementation of pointers directly, but it’s the fact that you have to manually take care of garbage, and most importantly that you can cast between pointers and integers—and the way many APIs are set up, you have to!

Enraged, “No Yarn” returns the favor with a rapid jab, jab, jab, uppercut combo:

Well, of course Java has pointers. In fact, just about everything in Java is implicitly a pointer. They just call them references. There are advantages to having pointers implicit as well as disadvantages. Separately, there are advantages to having true local objects (as in C++) as well as disadvantages. C++’s choice to support stack-allocated local variables and true member variables of every type gives nice uniform semantics, supports the notion of value semantics well, gives compact layout and minimal access costs, and is the basis for C++’s support for general resource management. That’s major, and Java’s pervasive and implicit use of pointers (aka references) closes the door to all that.

The “dark side” of having pointers (and C-style arrays) is of course the potential for misuse: buffer overruns, pointers into deleted memory, uninitialized pointers, etc. However, in well-written C++ that is not a major problem. You simply don’t get those problems with pointers and arrays used within abstractions (such as vector, string, map, etc.). Scoped resource management takes care of most needs; smart pointers and specialized handles can be used to deal with most of the rest. People whose experience is primarily C or old-style C++ find this hard to believe, but scope-based resource management is an immensely powerful tool and user-defined types with suitable operations can address classical problems with less code than the old insecure hacks.

Stunned silly, “The Goose” steps back, regroups, and charges back into the fray:

One of the most problematic (situations) over the years in C++ has been multithreading. Multithreading is very tightly designed into the code of Java and the consequence is that Java can deal with multicore machines very, very well.

“No Yarn” stands his ground and attempts to weather the ferocious onslaught:

The very first C++ library (really the very first C with classes) library, provided a lightweight form of concurrency and over the years, hundreds of libraries and frameworks for concurrent, parallel, and distributed computing have been built in C++. C++0x will provide a set of facilities and guarantees that saves programmers from the lowest-level details by providing a “contract” between machine architects and compiler writers—a “machine model.” It will also provide a threads library providing a basic mapping of code to processors. On this basis, other models can be provided by libraries. I would have liked to see some simpler-to-use, higher-level concurrency models supported in the C++0x standard library, but that now appears unlikely. Later—hopefully, soon after C++0x—we will get more libraries specified in a technical report: thread pools and futures, and a library for I/O streams over wide area networks (e.g., TCP/IP). These libraries exist, but not everyone considers them well enough specified for the standard.

And the winner is………?

So, do you think that I’ve served well as an impartial referee for this epic heavyweight battle? Hell, I hope not. I’m strongly biased toward C++ because it’s taken me years of study and diligent practice to become an intermediate-to-advanced C++ programmer (but still well below the skill level of the masters). I think that most Java programmers who religiously trash C++ do so out of fear of its breadth+depth, its suitability for application at all layers of the stack, and the option to “get dangerously close to the machine”. On the other hand, C++ programmers who trash Java do so out of a sense of elitism and a disdain for object-oriented purity.

Note: The snippets in this blarticle were copied and pasted from the delightful and engrossing “Masterminds Of Programming”. The book’s author, Federico Biancuzzi, not only picked the best possible people to interview, but also asked insightful and deeply thought-provoking questions.

Communication Layer Performance Benchmarking

Along with two outstanding and dedicated peers, I’m currently designing and writing (in C++) a large, distributed, multi-process, multi-threaded, scalable, real-time, sensor software system. Phew, that’s a lot of “see how smart I am” techno-jargon, no?

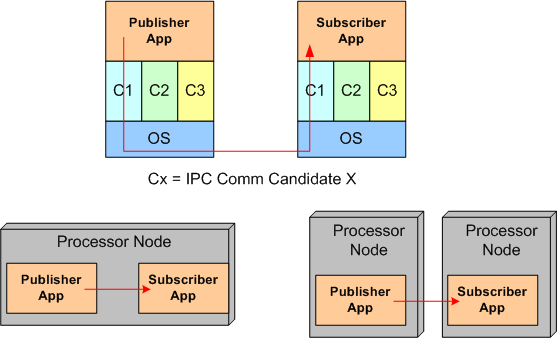

Since the performance and reliability of the underlying Inter-Process Communication (IPC) layer is critical to meeting our customer’s end-to-end system latency and throughput requirements, we decided to measure the performance of three different IPC candidates:

- Real Time Innovations Inc.’s implementation of the Object Management Group’s Data Distribution Service (DDS) standard

- The Apache Software Foundation’s ActiveMQ implementation of the Java Message Service (JMS) standard

- A homegrown brew built on top of the Boost Organization’s Asio (Asynchronous input output) portable C++ library.

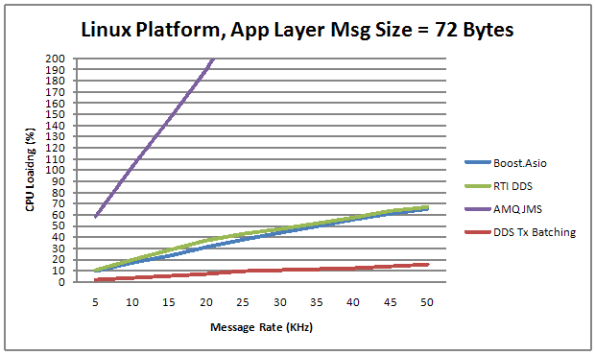

The figure below shows the average CPU load vs throughput performance of the three distributed system messaging communication candidates. Notice that the centralized broker-based JMS approach yielded horrendous relative results.

Transmit batching, one of a whole bevy of “free” (to application layer programmers) tunable features in RTI’s DDS, aggregates a bunch of application layer messages into one network packet to increase throughput (at the expense of increased latency). Since batching isn’t available in AMQ JMS or our “homegrown” Boost.Asio comm layer candidate, only the DDS performance increase is shown on the graph.
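To make the batching idea concrete, here is a minimal Python sketch. It is not RTI’s API or wire format; the function names, the 4-byte length prefix, and the packet size limit are all assumptions picked for illustration. It simply packs as many length-prefixed messages as will fit into each packet, which is why throughput goes up while early messages wait longer to leave.

```python
from typing import Iterable, Iterator, List

PREFIX_BYTES = 4  # hypothetical framing: 4-byte big-endian length before each message


def batch_messages(messages: Iterable[bytes], max_packet_bytes: int) -> Iterator[bytes]:
    """Greedily pack length-prefixed messages into packets of at most max_packet_bytes."""
    packet = bytearray()
    for msg in messages:
        framed = len(msg).to_bytes(PREFIX_BYTES, "big") + msg
        if len(framed) > max_packet_bytes:
            raise ValueError("message does not fit in a single packet")
        # Flush the current packet when the next message would overflow it.
        if packet and len(packet) + len(framed) > max_packet_bytes:
            yield bytes(packet)
            packet = bytearray()
        packet += framed
    if packet:
        yield bytes(packet)


def unbatch(packet: bytes) -> List[bytes]:
    """Split one packet back into the application messages it carries."""
    messages = []
    offset = 0
    while offset < len(packet):
        size = int.from_bytes(packet[offset:offset + PREFIX_BYTES], "big")
        offset += PREFIX_BYTES
        messages.append(packet[offset:offset + size])
        offset += size
    return messages
```

With five 10-byte messages and a 32-byte packet limit, this sketch sends three packets instead of five, and the first message in each packet waits until the packet fills (or the stream ends) before going out.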

Measurement Approach

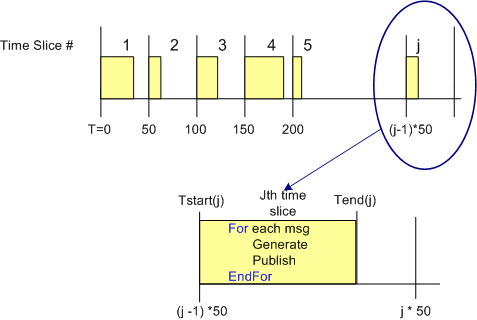

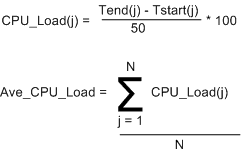

One way to measure the CPU load imposed on a processor node by an IPC layer candidate in a data streaming, real-time system is to quantize time into discrete slices and measure the per-slice processing time that it takes to send a fixed number of messages out via the comm software stack. Since other non-deterministic OS runtime functionality shares the CPU with the application processes and the comm software stack, measuring and averaging the normalized CPU time across a large number of slices can give some quantitative feel for the load imposed on the processor.

The figure below shows the approach that was taken to measure the CPU load versus throughput performance of the three communication layer candidates. To implement this strategy, I wrote a small C++ test application that is designed to operate in a time sliced mode, where the time slice size (default = 50 msecs) is user selectable via the command line.

During runtime, the test app generates and publishes a stream of “canned” messages at a user-specified rate and for a user-defined test run duration. Upon the start of each time slice, the current time is “grabbed” and stored for later use. At the end of each tight, K-message, generate-and-publish loop, the end time is retrieved from the OS and then the percent CPU load for the slice is calculated in accordance with the simple equations below. At the end of the test run, the first 1000 sample points are averaged, and the result, along with the max and min loads measured during the run, is printed to the console and a date-stamped log file.
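The time-sliced loop can be sketched in a few lines of Python (the real test app was C++ and is not reproduced here). The sketch assumes the per-slice load is simply the time spent in the K-message publish loop divided by the slice length, times 100; the `publish` callback, function names, and parameters are hypothetical stand-ins for whichever comm layer is under test.

```python
import time
from statistics import mean
from typing import Callable, List, Tuple


def slice_load_percent(busy_secs: float, slice_secs: float) -> float:
    """Assumed load equation: percent of the slice spent publishing."""
    return 100.0 * busy_secs / slice_secs


def run_slices(publish: Callable[[bytes], None], msgs_per_slice: int,
               slice_secs: float, num_slices: int) -> List[float]:
    """Publish msgs_per_slice canned messages per slice and record each slice's load."""
    loads = []
    for _ in range(num_slices):
        start = time.perf_counter()           # "grab" the slice start time
        for _ in range(msgs_per_slice):       # tight K-message publish loop
            publish(b"canned message")
        busy = time.perf_counter() - start    # end time minus start time
        loads.append(slice_load_percent(busy, slice_secs))
        if busy < slice_secs:                 # idle out the rest of the slice
            time.sleep(slice_secs - busy)
    return loads


def summarize(loads: List[float], max_samples: int = 1000) -> Tuple[float, float, float]:
    """Average the first max_samples points; also report max and min over the run."""
    return mean(loads[:max_samples]), max(loads), min(loads)
```

Note that this measures elapsed time inside the loop, so anything else the OS schedules during the loop inflates the number, which is exactly why averaging over many slices matters.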

Of course, to ensure that the comm layer candidate wasn’t dropping or corrupting application messages during test runs, I wrote a subscriber app to provide a “resistor load” on the performance measuring publisher app process. By comparing the number and integrity of messages received to the number and integrity of those transmitted, the measurements were given higher credibility. The figure below shows the test fixtures that I ran the performance tests on. For the AMQ JMS candidate, a broker process was running alongside the app processes, but this single-point-of-failure component is not shown in the diagram.
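One plausible way to do the count-and-integrity comparison is to stamp each message with a sequence number and a checksum. The Python sketch below assumes exactly that; the header layout, the CRC-32 choice, and the function names are illustrative assumptions, not a description of the actual subscriber app. It also assumes no duplicate deliveries and no frames shorter than the header.

```python
import struct
import zlib
from typing import Iterable, Tuple

# Hypothetical header: 32-bit sequence number, then CRC-32 of the payload.
HEADER = struct.Struct("!II")


def encode(seq: int, payload: bytes) -> bytes:
    """Publisher side: prepend the sequence number and payload checksum."""
    return HEADER.pack(seq, zlib.crc32(payload)) + payload


def check_stream(received: Iterable[bytes], sent_count: int) -> Tuple[int, int, int]:
    """Subscriber side: return (intact, corrupted, lost) message counts."""
    intact_seqs = set()
    corrupted = 0
    for frame in received:
        seq, crc = HEADER.unpack_from(frame)
        payload = frame[HEADER.size:]
        if zlib.crc32(payload) == crc:
            intact_seqs.add(seq)
        else:
            corrupted += 1
    intact = len(intact_seqs)
    lost = sent_count - intact - corrupted
    return intact, corrupted, lost
```

A clean run reports `(sent_count, 0, 0)`; anything else means the comm layer dropped or mangled something and the load numbers for that run shouldn’t be trusted.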

Quantification Of The Qualitative

Because he bucked the waterfall herd and advocated “agile” software development processes before the agile movement got started, I really like Tom Gilb. Via a recent Gilb tweet, I downloaded and read the notes from his “What’s Wrong With Requirements” keynote speech at the 2nd International Workshop on Requirements Analysis. My interpretation of his major point is that the lack of quantification of software qualities (you know, the “ilities”) is the major cause of requirements screwups, cost overruns, and schedule failures.

Here are some snippets from his notes that resonated with me (and hopefully you too):

- Far too much attention is paid to what the system must do (function) and far too little attention to how well it should do it (qualities) – in spite of the fact that quality improvements tend to be the major drivers for new projects.

- There is far too little systematic work and specification about the related levels of requirements. If you look at some methods and processes, all requirements are ‘at the same level’. We need to clearly document the level and the relationships between requirements.

- The problem is not that managers and software people cannot and do not quantify. They do. It is the lack of ‘quantification of the qualitative’ that is the problem.

- Most software professionals when they say ‘quality’ are only thinking of bugs (logical defects) and little else.

- There is a persistent bad habit in requirements methods and practices. We seem to specify the ‘requirement itself’, and we are finished with that specification. I think our requirement specification job might be less than 10% done with the ‘requirement itself’.

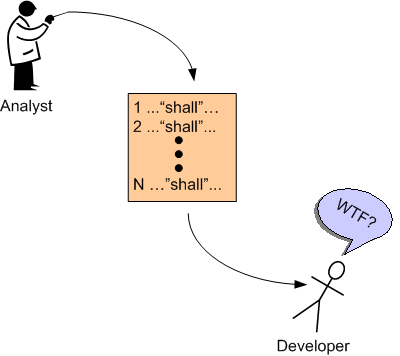

I can really relate to the second and fifth items. Expensive and revered domain specialists often do little more than linearly list requirements in the form of text “shalls”, with little supporting background information to help builders and testers clearly understand the “what” and “why” of the requirements. My cynical take on this pervasive, dysfunctional practice is that the analysts themselves often don’t understand the requirements and hence, they pursue the path of least resistance – which is to mechanically list the requirements in disconnected and incomprehensible fragments. D’oh!

Software Debt

If it hasn’t taken place already, be prepared for the latest buzz-concept in the software development world to go viral – “Software Debt”. I think that Ward Cunningham (who I love because he invented the Wiki) is the originator of the term “technical debt”, from which “Software Debt” is, no doubt, derived.

Voila, here’s the first book that I’ve seen so far with “Software Debt” in its title. Expect all kinds of seminars and videos and professional “Software Bankers” (who will certainly help you keep your debt low and prevent foreclosure by your customers) to sprout up all over like fungi in a dark, stanky, and moist environment. After all, the well worn and tired “agile” buzzword needs to be replaced by something just as exciting, no?

In my twisted mind, “Software Debt” is no different from, but sounds a lot kooler than, the bland “Software Maintainability”. Designing, coding, and artifacting to manage “Software Debt” is no different than doing the same for “Software Maintenance”. What do you think?

“Hi, I’m a software banker and I can help you consolidate and pay off all your software debt. Trust me, I will solve all your maintenance, oops, I mean debt, problems in no time flat. Plus, my fee is reasonable.” – Bulldozer00

An Estimation Example

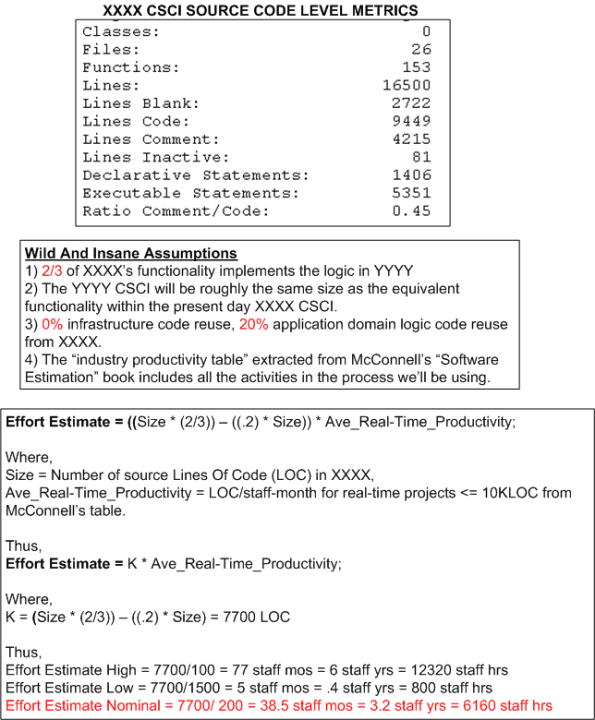

The figure below shows the derivation of an estimate of work in staff-hours to design/develop/test a Computer Software Configuration Item (CSCI) named YYYY. The estimate is based on the size of an existing CSCI named XXXX and the productivity numbers assigned to the “Real Time” category of software from the productivity chart in Steve McConnell’s “Software Estimation: Demystifying the Black Art”.

Of course, the simple equation used to compute effort and all of the variables in it can be challenged, but would it improve the accuracy of the range of estimates?
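For readers without the figure, here is a hedged Python sketch of what a size-over-productivity estimate of this kind looks like. The equation form (effort = size / productivity, converted to staff-hours), the 160 hours per staff-month, and the function name are assumptions, and the productivity numbers in the usage note are placeholders with a 15-to-1 spread, not values quoted from McConnell’s chart.

```python
from typing import Tuple


def effort_range_staff_hours(size_loc: float,
                             low_loc_per_staff_month: float,
                             high_loc_per_staff_month: float,
                             hours_per_staff_month: float = 160.0) -> Tuple[float, float]:
    """Return (best_case, worst_case) effort in staff-hours.

    Assumed equation: effort = size / productivity, converted to hours.
    High productivity gives the best case; low productivity the worst case.
    """
    if not 0 < low_loc_per_staff_month <= high_loc_per_staff_month:
        raise ValueError("expected 0 < low productivity <= high productivity")
    best = size_loc / high_loc_per_staff_month * hours_per_staff_month
    worst = size_loc / low_loc_per_staff_month * hours_per_staff_month
    return best, worst
```

For example, a hypothetical 30,000 LOC CSCI with a placeholder productivity range of 40 to 600 LOC per staff-month yields anywhere from 8,000 to 120,000 staff-hours. The spread of the answer is the spread of the productivity input, no matter how the equation is dressed up.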

Estimation Deflation

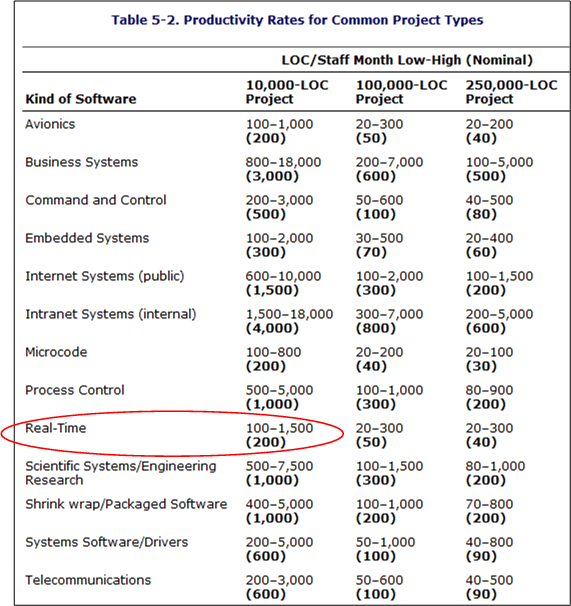

The best book I’ve read to date on the topic of software effort and schedule estimation is Steve McConnell’s “Software Estimation: Demystifying the Black Art”. According to Mr. McConnell, two large influences on the amount of work required to develop a non-trivial piece of software are “size” and “kind”. Regardless of the units of measure (use cases, user stories, function points, Lines Of Code, etc.), the greater the “size”, the greater the amount of work required to build the thang. Similarly, the harder “kinds” are associated with lower productivity than the simpler “kinds”.

In his book, McConnell provides the following handy, industry-data-backed, “kinds” vs “productivity” table that’s parameterized by “size” (in Lines Of Code (LOC)). Note that the “kinds” are sort of arbitrary and by no means an industry standard.

The Real-Time, 10K-100K LOC entry is circled because that’s the type and typical size of software that I specify/design/write. Note the huge 15-to-1 range of productivity for the type. Also note that the table contains large ranges of productivity for all the kind-size entries. Hint, hint: estimating is hard.

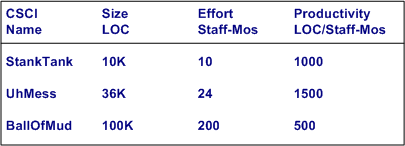

Ideally, for pseudo-accurate planning purposes, a software development org maintains its own table (see bogus example below) with real, measured numbers for the sizes of the CSCIs (Computer Software Configuration Items) that its DICs have created.

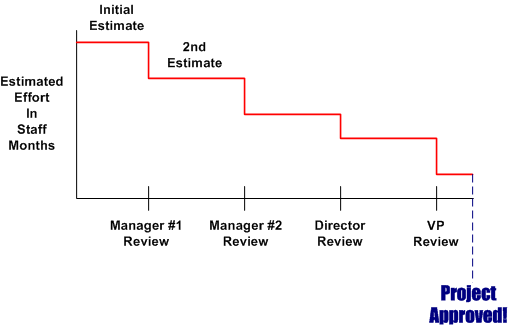
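Building such a table takes very little machinery once the numbers are recorded. A minimal Python sketch, assuming each past CSCI is logged as (name, kind, size in LOC, effort in staff-months); the record layout and function name are made up for illustration:

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical record: (CSCI name, kind, size in LOC, effort in staff-months)
Record = Tuple[str, str, float, float]


def productivity_by_kind(records: Iterable[Record]) -> Dict[str, Tuple[float, float]]:
    """Return {kind: (min, max)} measured productivity in LOC per staff-month."""
    per_kind = defaultdict(list)
    for _name, kind, size_loc, effort_staff_months in records:
        per_kind[kind].append(size_loc / effort_staff_months)
    return {kind: (min(vals), max(vals)) for kind, vals in per_kind.items()}
```

Feeding the resulting min and max for a kind back into the next estimate replaces industry-wide ranges with the org’s own measured ones.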

Of course, for a variety of cultural, competence, and social reasons, a lot of orgs don’t measure or maintain a custom productivity table. Thus, estimators are forced to pull numbers out of their arses and anyone’s productivity estimate is as bad as anyone else’s. Everyone who wasn’t born yesterday knows that the pressure to use ridiculously high productivity numbers in work estimates pervades the ether in most orgs. Even when some FAI bucks the trend and withstands the looks and sound bites of disdain for conjuring up a work estimate that is perceived by the management chain as “too high”, the final estimates that show up on “approved” schedules are magically deflated to what is wanted by some clueless BM, SCOL, or CGH.

How’s Your GoF Swing?

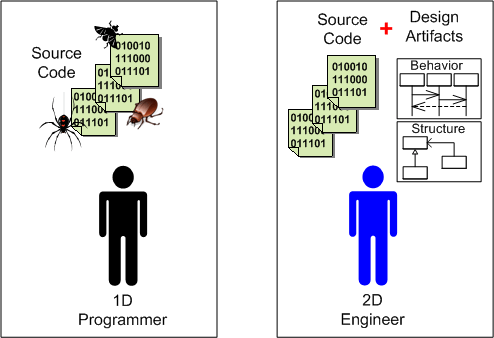

I don’t think many software professionals would disagree with the assertion that one of the greatest and most innovative software design books of all time is “Design Patterns” by the Gang of Four (GoF). According to these PGA GoFfers, one-dimensional software developers who only cut code and are “above” documenting behavioral and structural views of their designs do everyone a great disservice, especially themselves. Here’s why:

An object-oriented program’s run-time structure often bears little resemblance to its code structure. The code structure is frozen at compile-time; it consists of classes in fixed inheritance relationships. A program’s run-time structure consists of rapidly changing networks of communicating objects. In fact, the two structures are largely independent. Trying to understand one from the other is like trying to understand the dynamism of living ecosystems from the static taxonomy of plants and animals, and vice versa. With such disparity between a program’s run-time and compile-time structures, it’s clear that code won’t reveal everything about how a system will work. – Design Patterns, GoF.

Here’s the double whammy from UML co-creator Grady Booch.

The (source) code is the truth, but not the whole truth. – Grady Booch

I interpret these quotes to mean that without supporting “artifacts” (I use the less offensive “a” word here because “documentation” to most programmers is the equivalent of a four-letter word) to aid in understanding, maintenance developers, new team members, and even the original coders are hosed. Of course, it goes without saying that their organizations and customers are hosed too. The hosing may come later rather than sooner, but the hosing will take place.

“The bitterness of poor system performance remains long after the sweetness of low prices and prompt delivery are forgotten.” – Jerry Lim

When one-dimensional programmers are combined with one-dimensional, schedule-is-the-only-thing-that-matters BMs who don’t care to know squat about software other than that the code is “done”, a toxic and self-reinforcing 2 X 1D brew of inefficiency and endless downstream rework is guaranteed. No superficial org restructurings, process improvement initiatives, excellence committees, or executive orders can solve deeply rooted quality problems like this. Bummer.

So what’s the advice that goes with this typical Bulldozer00 rant? Learn UML (on your own time; see the quote below) and develop your software from end-to-end with a process that interlaces coding and “artifacting” similar to PAYGO.

“I hold great hopes for UML, which seems to offer a way to build products that integrates hardware and software, and that is an intrinsic part of development from design to implementation. But UML will fail if management won’t pay for quite extensive training, or toss the approach when panic reigns.” – Jack Ganssle

My version of Jack’s quote replaces the “if” with “when”.

Reuse Based Estimation

“It’s called estimation, not exactimation” – Scott Ambler

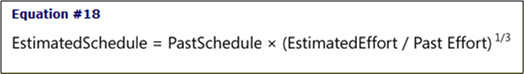

One of the pragmatically simple, down to earth equations in Steve McConnell’s terrific “Software Estimation” defines the schedule for a new software development project in terms of past performance as:

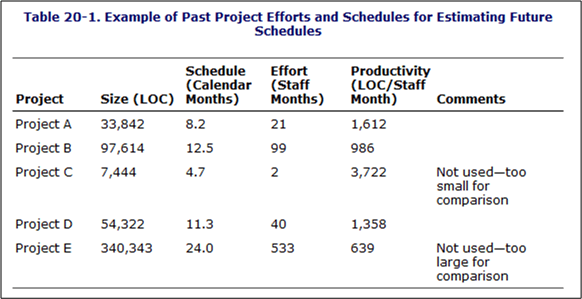

Of course, in order to use the equation to compute a guesstimate, as the table below shows, you must have tracked and recorded past efforts along with the calendar times it took to get those jobs completed.
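Since the equation image isn’t reproduced here, the Python sketch below assumes it is the cube-root, compare-to-a-past-project form (new schedule = past schedule × (estimated effort / past effort)^(1/3)). Treat that form, the function name, and the units as assumptions and check them against the book before leaning on the numbers.

```python
def schedule_months(past_schedule_months: float,
                    past_effort_staff_months: float,
                    estimated_effort_staff_months: float) -> float:
    """Scale a past project's calendar time by the cube root of the effort ratio.

    Assumed equation: past_schedule * (estimated_effort / past_effort) ** (1/3).
    """
    if past_effort_staff_months <= 0 or estimated_effort_staff_months <= 0:
        raise ValueError("efforts must be positive")
    ratio = estimated_effort_staff_months / past_effort_staff_months
    return past_schedule_months * ratio ** (1.0 / 3.0)
```

Under this assumed form, a job with eight times the effort of a 12-month past project comes out at roughly 24 months, not 96: schedule stretches far more slowly than effort grows.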

Of course, not many orgs keep a running tab of past projects in an integrated, simple to use, easily accessible form like the above table, or do they? The info may actually be available someplace in the corpo data dungeon, but it’s likely fragmented, scattered, and buried within all kinds of different and incompatible financial forms and Microsoft Project files. Why is this the case? Because it’s a management task and thus, no one’s responsible for doing it. In elegant corpo-speak, managers are responsible for “getting work done through others”. The catch phrase used to be “getting work done”, but to remove all ambiguity and increase clarity, the “through others” was cleverly or unconsciously tacked on.

How about you? How do you guesstimate effort and schedule?