Archive

“As-Built” Vs “Build-To”

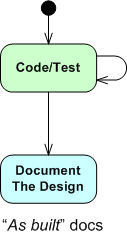

The figure below shows one (way too common) approach for developing computer programs. From an “understanding” in your head, you just dive into the coding state and stay there until the program is “done”. When it finally is “done”: 1) you load the code into a reverse engineering tool, 2) press a button, and voila, 3) your program “As-Built” documentation is generated.

For trivial programs where you can hold the entire design in your head, this technique can be efficient and it can work quite well. However, for non-trivial programs, it can easily lead to unmaintainable BBoMs. The problem is that the design is “buried” in the code until after the fact – when it is finally exposed for scrutiny via the auto-generated “as-built” documentation.

With a dumb-ass reverse engineering tool that doesn’t “understand” context or what the pain points in a design are, the auto-generated documentation is often overly detailed, unintelligible camouflage in which a reviewer/maintainer can’t see the forest for the trees. But hey, you can happily tick off the documentation item on your process checklist.

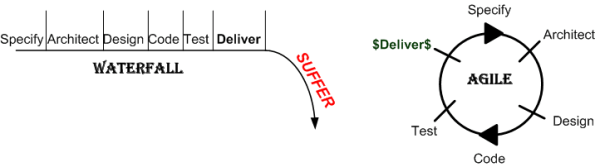

Two alternative, paygo types of development approaches are shown below. During development, the “build-to” design documentation and the code are cohesively produced manually. Only the important design constructs are recorded so that they aren’t buried in a mass of detail and they can be scrutinized/reviewed/error-corrected in real-time during development – not just after the fact.

I find that I learn more from the act of doing the documentation than from pushing an “auto-generate” button after the fact. During the effort, the documentation often speaks to me – “there’s something wrong with the design here, fix me”.

Design is an intimate act of communication between the creator and the created – Unknown

Of course, for developers, especially one dimensional extreme agilista types who have no desire to “do documentation” or learn UML, the emergence of reverse engineering tools has been a Godsend. Bummer for the programmer, the org he/she works for, the customer, and the code.

Deliverance

These Guys “Get It”

In the freely downloadable National Academies book, “Critical Code: Software Producibility for Defense”, the dudes who wrote the book “get it”. Check out this rather long snippet and pay close attention to the bolded sentences. If you dare, pay closer attention to the snarky Bulldozer00 commentary highlighted in RED.

An additional challenge to the DoD is that the split between technical and management roles will result (has already resulted) in leaders who, on moving into management, face the prospect of losing technical excellence and currency over time. This means that their qualifications to lead in architectural decision making (and schedule making) may diminish unless they can couple project management with ongoing architectural leadership and technical engagement. The DoD does not (and legions of private enterprises don’t) have strong technical career paths that build on and advance software expertise with the exception of the service labs. Upward career progression trends leading closer to senior management-focused roles and further away from technical involvement tend to stress general management rather than technical management experience (well, duh! That’s the way status-centric command and control hierarchies are designed.). This is not necessarily the case in technology-intensive roles in industry (not necessarily, but still pervasively). Many (but not nearly enough) of the most senior leaders in the technology industry have technical backgrounds and continue to exercise technical roles and be engaged in technology strategy. Nonetheless, certain DoD software needs remain sufficiently complex and unique and are not covered by the commercial world, and therefore call for internal DoD software expertise. In the DoD, however, as software personnel take on more management responsibility, they have less opportunity and incentive to stay technically current (<- this “feature” is baked into command and control hierarchies where, of course, caste and who-reports-to-who is king – to hell with excellence and what sustains an enterprise’s health and profitability). At the same time, there is an increasing need for an acquisition workforce that has a strong understanding of the challenges in systems engineering and software-intensive systems development.
It is particularly critical to have program managers who understand modern software development and systems (If that’s the case, then the DoD and most private enterprises are hosed. D’oh!).

Could it be that unelected, anointed “managers” in DoD and technology industry CLORGs and DYSCOs are still stuck in the 20th century FOSTMA mindset? You know, the UCB where they “feel” they are entitled to higher compensation and stature than the lower caste knowledge workers (architects, designers, programmers, testers, etc.) just because they occupy a higher slot in an anachronistic, and no longer applicable, way of life – no matter what the cost to the whole org’s viability.

In command and control hierarchies, almost everybody is a wanna-be:

“I wanna rise up to the next level so I’ll: make more money, have more freedom, be perceived as more important, and rule over the hapless dudes in my former level”. Nah, that’s not true. BD00 has been drinkin’ too many dirty, really really dirty, martinis.

Telltale Signs Of A Failed Software Project

Like everyone else who’s worked on a slew of team-based software development projects, uber C++ blogger Danny Kalev has his own thoughts on why projects fail. In “Telltale Signs of a Failed Software Project, Part I”, Danny ominously describes “The Emperor’s New Clothes Syndrome” as follows:

The first ominous sign is the emperor’s new clothes syndrome — as a new recruit, you try to study the project. You’re reading the specifications, perusing those lovely Data Flow Diagrams (DFDs) or UML charts and you still can’t get the hang of it. “What on earth were they thinking? It simply doesn’t add up!” you’re muttering silently. At some point you realize that it’s not you — it’s the project itself. What you’ve been reading is simply a collection of smoke and mirror effects meant to appease the high management. Little by little you get the picture: your colleagues know that it’s not working but they won’t admit it in public. If you dare exposing the truth you’ll be denounced as an ignorant, misfit traitor. You have two choices: quit silently, or join the rest, pretending all is well.

Hmm, I think Mr. Kalev may have missed the point that his second-to-last sentence, “exposing the truth and being denounced as an ignorant, misfit traitor”, is also a choice – albeit one that is auto-discarded by all sane persons. Ya see, if you’re not perceived to be a cross-eyed Columbo, a court-jester, or an innocent (but naive) child when you state your concern, you’re sure to get hosed down by the powers that be.

Have you ever tried to call-it-like-ya-see-it in front of the papal infallibles? If so, which Halloween costume did you don? Columbo, Court-Jester, Innocent Child, or “other”? Surely, you’ve done it at least once, right? If not, why not? If so, then what kinda blowback did you receive – and did it force you into “quiet desperation” mode? Come onnnnnnnnn, don’t be shy – share your story with BD00 and the two other regular readers of this blog.

Complicated != Complex

For the non-geeks reading this post, the “!=” symbol is the C++ programming language token for “not equal”.
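For the curious, here’s a minimal C++ sketch of the token at work. The `differs` helper is a made-up name for illustration only; it isn’t from any library.

```cpp
#include <string>

// Hypothetical helper for illustration: reports whether two words differ.
bool differs(const std::string& a, const std::string& b) {
    return a != b;  // "!=" evaluates to true when the operands are NOT equal
}
```

So `differs("complicated", "complex")` evaluates to true, while `differs("complex", "complex")` evaluates to false.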

It seems like a lot of people think that classifying something as “complex” is the same as calling it “complicated”, and vice-versa. That conclusion can be, and often is, true, but it can also be false. I associate “complicated” with “not-understandable” – except to a select few experts. I think of “complex” as the equivalent of something like “intricately elegant” and understandable to far more people than just experts.

Let’s take an example to illuminate my viewpoint. Assume that the black box system below functions delightfully. It’s reliable, responsive, easy to learn, and does what its users want without frustrating them in the slightest.

Now, in terms of complicated and complex, consider what the system may look like under the covers:

Of course, most users don’t give a shite what goes on under the covers, but the designing org and its people better well know what does – unless they luckily don’t have any competition to deal with, and hence, have their customers in a vice grip.

You see, at some point in time, the users will want improvements to the system as their needs evolve. If the original team of builders of implementation #1 are the only people who know the (so-called) design well enough to change it without breaking any existing capabilities, then the development org is hosed if those people leave. In effect, the org is held hostage by a small cadre of people. D’oh!

In the complex-complex implementation on the far right, even if the original builders leave the development org, the (relatively) elegant and well thought out design structure facilitates easy on-boarding of replacement builders. As an added bonus, the effort needed to add features and enhancements to the product is way less costly and risky than the other jaggedly complicated implementations.

So, given the portfolio of products in your org, how would you assess them in terms of the complex and complicated attributes? If, and it’s probably a big IF, you could publicly communicate your assessment without fear of marginalization, or worse, how many people in your org do you think would publicly agree with your assessment? Uh, how about privately? Would the number of public “agreers” match the number of private “agreers”?

Seven Unsurprising Findings

In the National Academies Press’s “Summary of a Workshop for Software-Intensive Systems and Uncertainty at Scale”, the Committee on Advancing Software-Intensive Systems Producibility lists 7 findings from a review of 40 DoD programs.

- Software requirements are not well defined, traceable, and testable.

- Immature architectures; integration of commercial-off-the-shelf (COTS) products; interoperability; and obsolescence (the need to refresh electronics and hardware).

- Software development processes that are not institutionalized, have missing or incomplete planning documents, and inconsistent reuse strategies.

- Software testing and evaluation that lacks rigor and breadth.

- Lack of realism in compressed or overlapping schedules.

- Lessons learned are not incorporated into successive builds—they are not cumulative.

- Software risks and metrics are not well defined or well managed.

Well gee, do ya think they missed anything? What I’d like to know is what, if anything, they found right with those 40 programs. Anything? Maybe that would help more than ragging on the same issues that have been ragged on for 40 years.

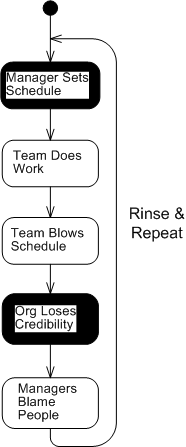

My fave is number five (with number one a close second). When schedules concocted by non-technical managers without any historical backing or input from the people who will be doing the work are publicly promised to customers, how can anyone in their right mind assert that they’re “realistic”? The funny thing is, it happens all the time with nary a blink – until the fit hits the shan, of course. D’oh!

Meeting schedules based on historically tracked data and input from team members is challenging enough, but casting an unsubstantiated schedule in stone without an explicit policy of periodically reassessing it on the basis of newly acquired knowledge and learning as a project progresses is pure insanity. Same old, same old.
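To make the “periodically reassess” idea concrete, here’s a rough sketch in C++, assuming the team tracks how much work it actually finishes each week. The function names, the unit of work, and the simple averaging rule are all my assumptions for illustration, not a prescribed method.

```cpp
#include <numeric>
#include <stdexcept>
#include <vector>

// Hypothetical helper: forecast the weeks still needed, given the work
// remaining and the work actually completed in each past week.
double forecastRemainingWeeks(double remainingWork,
                              const std::vector<double>& completedPerWeek) {
    if (completedPerWeek.empty()) {
        throw std::invalid_argument("no tracked history to forecast from");
    }
    const double total = std::accumulate(completedPerWeek.begin(),
                                         completedPerWeek.end(), 0.0);
    const double averageRate =
        total / static_cast<double>(completedPerWeek.size());
    if (averageRate <= 0.0) {
        throw std::invalid_argument("no measurable progress in the history");
    }
    return remainingWork / averageRate;  // weeks left at the observed pace
}

// Hypothetical check: true when the forecast exceeds the weeks still
// available under the promised schedule.
bool scheduleNeedsRevisiting(double promisedWeeksLeft, double forecastWeeks) {
    return forecastWeeks > promisedWeeksLeft;
}
```

For example, with 20 units of work left and a tracked history of 4, 6, and 5 units per week, the forecast is 4 weeks. If only 3 weeks remain on the promised schedule, it’s time to revisit the promise rather than pretend.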

I love deadlines. I like the whooshing sound they make as they fly by. – Douglas Adams

Pegged

Peg, It will come back to you – Steely Dan

Assume that as a sub-task of redesigning a BBoM chunk of legacy software for scalability (single thread to multiple threads), you have to “do something” with a subset of high performance, but computationally dense and tricky, C procedural code. Let’s call the functionality implemented by this code “peg”.

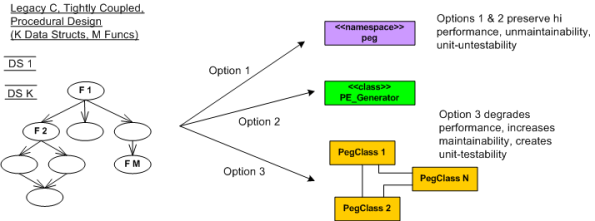

In the figure below, I model the procedural mess that is “peg” on the left as an M-function call tree in which many of the functions perform CRUD accesses on a set of K interrelated global data structures. Trust me when I say that K and M are non-trivial, double-digit numbers. D’oh!

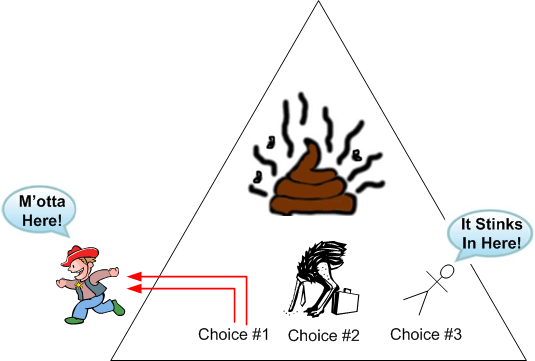

The way I see it, I have three choices for attacking the monolith:

Options 1 and 2 are slightly different instances of the ultra-conservative “sarcophagus” pattern (remember Chernobyl?). Option 3, which is technically riskier, higher latency, and higher development cost in the short term, will definitely pay off in terms of lower maintenance costs and lower developer anxiety in the long term – if done correctly!
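As a rough illustration of the sarcophagus idea, here’s one way it might look in C++, assuming it means leaving the legacy code untouched and funneling every call through a single lock so that multiple threads can use it safely. The `peg_accumulate` function and the two globals are tiny hypothetical stand-ins for the real M functions and K data structures, and `PegSarcophagus` is a made-up name.

```cpp
#include <mutex>

// Hypothetical stand-ins for the legacy C code (not the real "peg").
static int g_total = 0;  // stands in for one of the K global data structures
static int g_calls = 0;  // stands in for another one

static void peg_accumulate(int sample) {  // stands in for one of the M functions
    g_total += sample;
    ++g_calls;
}

// The "sarcophagus": the legacy code is entombed as-is, and every access
// from the new multi-threaded world goes through one shared lock.
class PegSarcophagus {
public:
    // Feeds one sample to the legacy code and returns the running total.
    int accumulate(int sample) {
        std::lock_guard<std::mutex> lock(legacyMutex());
        peg_accumulate(sample);
        return g_total;
    }

    // Returns how many times the legacy function has been called.
    int calls() {
        std::lock_guard<std::mutex> lock(legacyMutex());
        return g_calls;
    }

private:
    // One mutex shared by all wrapper instances, because the globals
    // it protects are shared by all of them too.
    static std::mutex& legacyMutex() {
        static std::mutex m;
        return m;
    }
};
```

The entombed code stays as tangled as ever. The wrapper only keeps threads from colliding inside it, and every caller queues up behind the one lock.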

If it was my decision alone (and it should be since I’ll be doing all the coding/testing/documenting/defending/“owning”, no?), I’d choose option 3 without blinking an eye. I wouldn’t blink an eye because I know that “peg” will continue to need to be extended and enhanced for many years into the future – and maintenance costs far exceed initial development costs in all software product life cycles. But alas, I’m just a dumb engineer with no business sense.

Requirements Stability

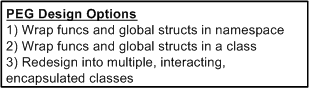

Over the years, I’ve been assigned to the roles of specifier, designer, documenter, writer, and maintainer of source code for radar sensor systems that are used in safety-critical applications. These sensors get deployed in noisy, interference-infested environments and they must perform at high levels of availability and with great fidelity.

The figure below shows a generic sensor system context diagram along with some typical non-functional requirements (with made-up values) that are critical for customer acceptance. My experience has indicated that once these black-box level requirements are specified, they rarely change. Thus, the agile war cry to continuously “embrace requirements change” may not fully apply to the development of this class of systems, no?

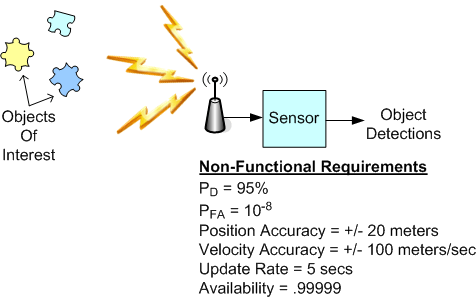

The point I’m trying to make here is to be wary of morphing into a lap-dog zealot for any technique, process, method, or practice – which includes the hallowed “agile” brand. For a long time, my motto (thanks to the work of W. L. Livingston and John Warfield) has been: Context, Content, and then Process (CCP). Synthesize an understanding of the problem context, design the content of the solution (structure and behavior), and only then design the solution construction process – tailored to the context and content. Of course, since mistakes and errors will be made during the journey, backtracking and iterative convergence are expected. Thus, “embrace mistakes, errors, backtracking, and iteration” is my war cry. What’s yours? What’s your org’s?

Snap Judgments And Ineffective Decisions

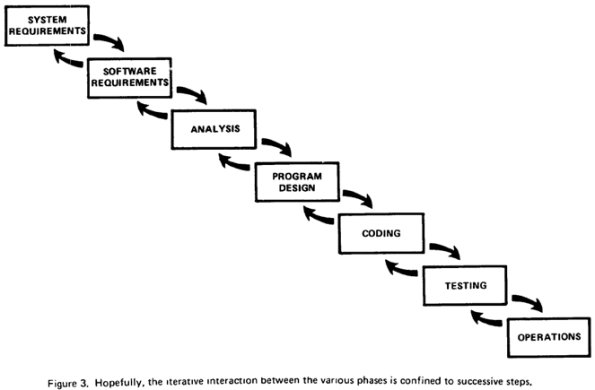

In the software industry, virtually all people agree that Winston Royce’s classic paper titled “Managing The Development Of Large Software Systems” was the first widely publicized work to describe the linear, sequential, “waterfall” method of building big systems. He didn’t coin the term “waterfall”; he called it a “grandiose process”.

Here’s one of the pics from Mr. Royce’s paper (note that he shows stage N to stage N-1 feedback loops in the diagram and note the “hopefully” word in the figure’s title):

What seems strange to me is that most professionals that I’ve conversed with think that Mr. Royce was an advocate of this “grandiose process”. However, if you read his 11-page paper, he wasn’t:

The problem is…. The testing phase which occurs at the end of the (waterfall) development cycle is the first event for which timing, storage, input/output transfers, etc., are experienced as distinguished from analyzed. They are not precisely analyzable. They are not the solutions to the standard partial differential equations of mathematical physics for instance. – W. W. Royce

This lack of due diligence to dig deeper into Mr. Royce’s stance reminds me of bad managers who make snap judgments and ineffective decisions. They do this because, in hierarchical command & control CLORG cultures, they’re “supposed to look like” they know and understand what’s going on at all times. After all, the unquestioned assumption in hierarchies is that the best and brightest bubble up to the top. But, as Rudy sez…..

“You have to know a lot to be of help. It’s slow and tedious. You don’t have to know much to cause harm. It’s fast and instinctive.” – Rudolph Starkermann

Of course, all human beings suffer from the same “snap judgments and ineffective decisions” malady to some extent, but the guild of management-by-hierarchy, fueled by its ADHD obsession to jam fit as much attention/planning/work into as little time as possible, seems to have taken it to an extreme.

Tell Me About The Failures

As the world’s rising population becomes more and more dependent on software to preserve and enhance quality of life, efficiently solving software development problems has become big business. Thus, from the myriad of “agile” flavors to PSP/TSP, there are all kinds of light and heavyweight techniques, methods, and processes available to choose from.

Of course, the people who acquire fame and fortune selling these development process “solutions” seem to only publicize success stories. In each book, case study, lecture, or general article they create to promote their solution, they always cite one or two concrete examples of wild success that “validate” their assertions.

Besides the success stories, I, as a potential consumer of their product, want to hear about the failures. No large scale software development system is perfect and there are always failures, no? But why don’t the promoters expose the failures and the improvements made as a result of the lessons learned from those failures? Humbly providing this information could serve as a competitive differentiator, couldn’t it?

The difference between a methodologist and a terrorist is that you can negotiate with a terrorist – Martin Fowler