Archive

Visualizing And Reasoning About

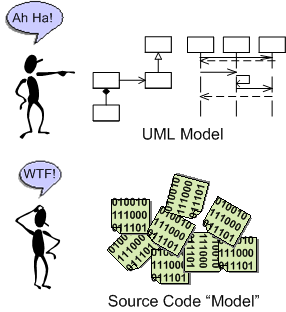

I recently read an interview with Grady Booch in which the interviewer asked him what his proudest technical achievement was. Grady stated that it was his involvement in the creation of the Unified Modeling Language (UML). Mr. Booch said it allowed for a standardized way (vs. ad-hoc) of “visualizing and reasoning about software” before, during, and/or after its development.

All Forked Up!

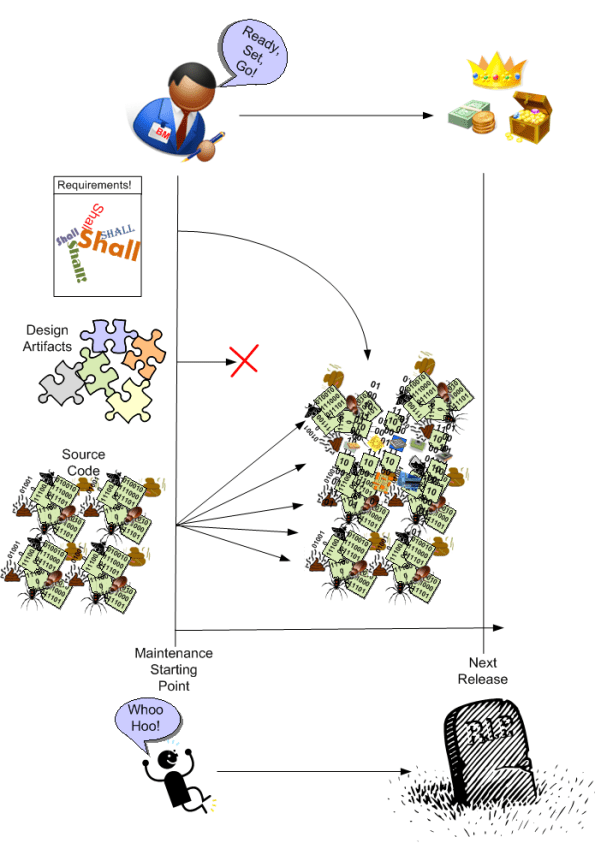

BD00 posits that many software development orgs start out with good business intentions to build and share a domain-specific “platform” (a.k.a. infrastructure) layer of software amongst a portfolio of closely related, but slightly different instantiations of revenue generating applications. However, as your intuition may be hinting at, the vast majority of these poor souls unintentionally, but surely, fork it all up. D’oh!

The example timeline below exposes just one way in which these colossal “fork ups” manifest. At T0, the platform team starts building the infrastructure code (common functionality such as inter-component communication protocols, event logging, data recording, system fault detection/handling, etc.) in cohabitation with the team of the first revenue generating app. It’s important to have two loosely-coupled teams in action so that the platform stays generic and doesn’t get fused/baked together with the initial app product.

At T1, a new development effort starts on App2. The freshly formed App2 team saves a bunch of development cost and time upfront by reusing, as-is, the “general” platform code that’s being co-evolved with App1.

Everything moves along in parallel, hunky dory fashion until something strange happens. At T2, the App2 product team notices that each successive platform update breaks their code. They also notice that their feature requests and bug reports are taking a back seat to the App1 team’s needs. Because of this lack of “service”, at T3 the frustrated App2 team says “FORK IT!” – and they literally do it. They “clone and own” the so-called common platform code base and start evolving their “forked up” version themselves. Since the App2 team now has to evolve both their App and their newly born platform layer, their schedule starts slipping more than usual and their prescriptive “plan” gets more disconnected from reality than it normally does. To add insult to injury, the App2 team finds that there is no usable platform API documentation, no tutorial/example code, and they must pore through 1000s of lines of code to figure out how to use, debug, and add features to the dang thing. Development of the platform starts taking more time than the development of their App and… yada, yada, yada. You can write the rest of the story, no?

So, assume that you’ve been burned once (and hopefully only once) by the ubiquitous and pervasive “forked up” pattern of reuse. How do you prevent history from repeating itself (yet again)? Do you issue coercive threats to conform to the mission? Do you swap out individuals or whole teams? Do you send your whole org to a 3 day Scrum certification class? Will continuous exhortations from the heavens work to change “mindsets“? Do you start measuring/collecting/evaluating some new metrics? Do you change the structure and behaviors of the enclosing social system? Is this solely a social problem; solely a technical problem? Do you not think about it and hope for the best – the next time around?

Ready, Set, Go!

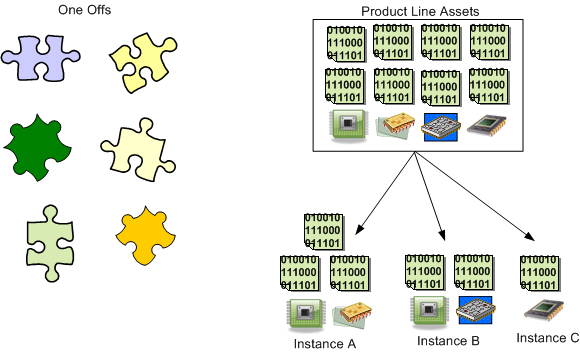

Product Line Blueprint

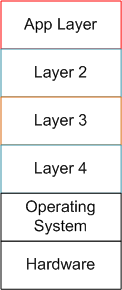

Here it is, the blueprint (patent pending) you’ve been waiting for:

Need a little less abstraction? Well, how about this refinement:

Piece of cake, no? It’s easy to “figure out“:

- the number of layers needed in the platform,

- the functionality and connectivity within and between each of the layers in the stack

- the granularity of the peer entities that go into each layer and which separates the layers

- the peer-to-peer communication protocols and dependencies within each layer

- the interfaces provided by, and required by, each layer in the stack

- what your horizontally integrable App component set should be for specific product instantiations

- how much time, how much money, and how many people it will take to stand up the stack

- how many different revenue-generating product variants will initially be needed for economic viability

- how to secure all the approvals needed

- how to manage the inevitable decrease in conceptual integrity and increase in entropy of the product factory stack over time – the maintenance problem

Perhaps easiest of all is the last bullet; the continuous, real-time management of the core asset base IF the product factory stack is actually built and placed into operation. After all, it’s not like trying to herd cats, right?

Please feel free to use this open source (LOL!) product line template to instantiate, build, and exploit your own industry and domain specific product line(s). Enough intellectualizing and “strategizing” about doing it in useless committees, task forces, special councils, tiger teams, and blue ribbon panels. There’s money to be made, joy to be distributed, and toes to be stepped on; so just freakin’ do it.

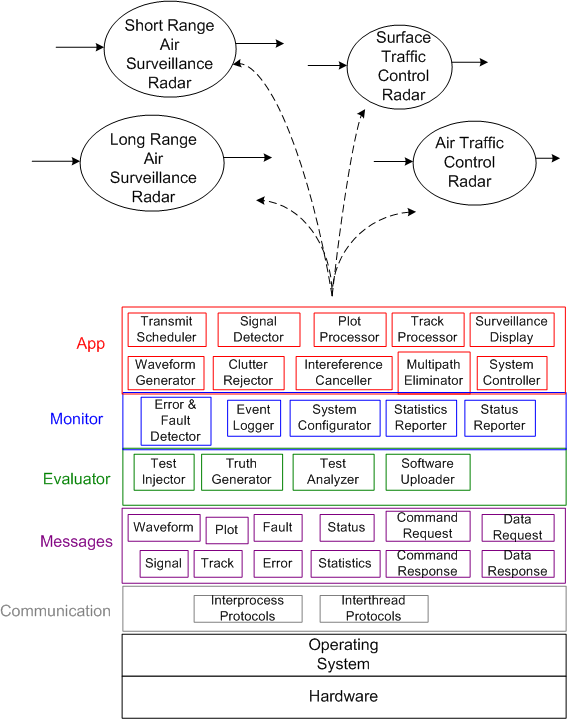

Is this post still too abstract to be of any use? Let’s release some more helium from our balloon and descend from the sky just a wee bit more so that we can get a glimpse of what is below us. Try out “revision 0” of this blueprint instantiation for a hypothetical producer of radar systems:

Did you notice the increase in tyranny of detail and complexity as we descended through the 3 levels of abstraction in this post? Well, it gets worse if we continue on cuz we don’t yet have enough information, knowledge, or understanding to start cutting code, building, testing, and standing up the stack – not nearly enough. Thus, let’s just stop right here so we can retain a modicum of sanity. D’oh! Too late!

Fellow Tribe Members

Being a somewhat skeptical evaluator of conventional wisdom myself, I always enjoy promoting heretical ideas shared by unknown members of my “tribe“. Doug Rosenberg and Matt Stephens are two such tribe members.

Waaaay back, when the agile process revolution against linear, waterfall process thinking was ignited via the signing of the agile manifesto, the eXtreme Programming (XP) agile process burst onto the scene as the latest overhyped silver bullet in the software “engineering” community. While a religious cult that idolized the infallible XP process was growing exponentially in the wake of its introduction, Doug and Matt hatched “Extreme Programming Refactored: The Case Against XP“. The book was a deliciously caustic critique of the beloved process. Of course, Matt and Doug were showered with scorn and hate by the XP priesthood as soon as the book rolled off the presses.

Well, Doug and Matt are back for their second act with the delightful “Design Driven Testing: Test Smarter, Not Harder”. This time, the duo from hell pokes holes in the revered TDD (Test-Driven Development) approach to software design – which yet again triggered the rise of another new religion in the software community; or should I say “commune”.

BD00’s hat goes off to you guys. Keep up the good work! Maybe your next work should be titled “Lowerarchy Design: The Case Against Hierarchy“.

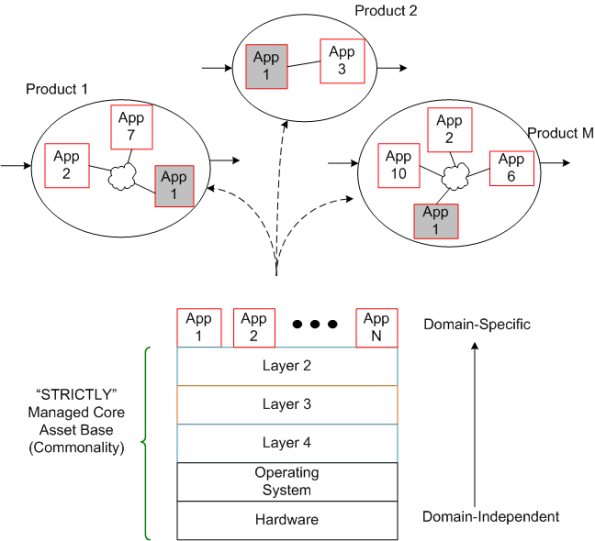

Dream, Mess, Catastrophe

To build high quality, successful, long-lived, “Big” software, you must design it in terms of layers (that’s why the ISO OSI model for network architecture has 7 crisply defined layers). If you don’t leverage the tool of layering (and its close cousin – leveling) in an attempt to manage complexity, then: your baby won’t have much conceptual integrity; you’ll go insane; and you’ll be the unproud owner of a big ball of mud that sucks down maintenance funds like a Dyson and may crumble to pieces at the slightest provocation. D’oh!

The figure below shows a reference model for a layered application. Note that even though we have a neat stack, we can’t tell if we have a winner on our hands.

By adding the inter-layer dependencies to the reference architecture, the true character of our software system will be revealed:

In the “Maintenance Dream”, the inter-layer APIs are crisply defined and empathetically exposed in the form of well-documented interfaces, abstractions, and code examples. The programmer(s) of a given layer only have to know what they have to provide to the users above them and what the next layer below lovingly provides to them. Ah, life is good.
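For the code-minded, here is a minimal C++ sketch of what the “Dream” could look like. Every name in it (`Transport`, `Messaging`, `TrackReporter`, `RecordingTransport`) is made up for illustration and is not taken from any real platform. The point is only that each layer sees the interface directly below it and nothing else:

```cpp
#include <string>
#include <vector>

// Hypothetical bottom layer: the only contract the layer above may rely on.
class Transport {
public:
    virtual ~Transport() = default;
    virtual void send(const std::string& bytes) = 0;
};

// Hypothetical middle layer: provides publish() upward, requires Transport downward.
class Messaging {
public:
    explicit Messaging(Transport& transport) : transport_(transport) {}

    // Frames a topic and payload as "topic:payload" and hands it to the layer below.
    void publish(const std::string& topic, const std::string& payload) {
        transport_.send(topic + ":" + payload);
    }

private:
    Transport& transport_;
};

// Hypothetical app layer: knows Messaging only, never Transport.
class TrackReporter {
public:
    explicit TrackReporter(Messaging& messaging) : messaging_(messaging) {}

    void report(int trackId) {
        messaging_.publish("track", std::to_string(trackId));
    }

private:
    Messaging& messaging_;
};

// Stand-in bottom layer that records what it was asked to send.
class RecordingTransport : public Transport {
public:
    void send(const std::string& bytes) override { sent.push_back(bytes); }
    std::vector<std::string> sent;
};
```

Wire it up bottom to top (`RecordingTransport t; Messaging m(t); TrackReporter r(m); r.report(7);`) and the app programmer never has to learn a thing about `Transport`. Swap in a different transport and the app layer doesn’t even get recompiled against a new header.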

Next, shuffle on over to the “Maintenance Mess”. Here, we have crisply defined layers, but the allocation of functionality to the layers has been hosed up (a violation of the principle of “leveling”) and there’s a beast in the making. Thus, in order for App Layer programmers to be productive, they have to stuff their heads with knowledge/understanding of all the sub-layer APIs to get their jobs done. Hopefully, their heads don’t explode and they don’t run for the exits.

Finally, skip on over to the (shhh!) “Maintenance Catastrophe”. Here, we have both a leveling mess and an incoherent set of incomprehensible (to mere mortals) inter-layer APIs. In the worst case: the layers aren’t discernible from one another; it takes “forever” to on-board new project members; it takes forever to fix bugs; it takes forever to add features; and it takes a heroic effort to keep the abomination alive and kicking. Double D’oh!

Forever == Lots Of Cash

Orgs that have only ever created “Maintenance Messes and Catastrophes” have never experienced a “Maintenance Dream”, so they think that high maintenance costs, busted schedules, and buggy releases are the norm. How do you explain the color green to someone who’s spent his/her whole life immersed in a world of red?

World Class Help

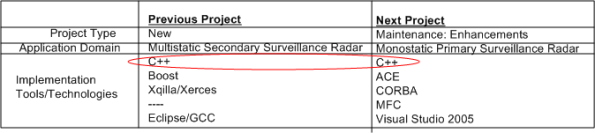

I’m currently transitioning from one software project to another. After two years of working on a product from the ground up, I will be adding enhancements to a legacy system for an existing customer.

The table below shows the software technologies embedded within each of the products. Note that the only common attribute in the table is C++, which, thank god, I’m very proficient at. Since ACE, CORBA, and MFC have big, complicated, “funky” APIs with steep learning curves, it’s a good thing that “training” time is covered in the schedule as required by our people-centric process. 🙂

I’m not too thrilled or motivated at having to spin up and learn ACE and CORBA, which (IMHO) have had their 15 minutes of fame and have faded into history, but hey, all businesses require maintenance of old technologies until product replacement or retirement.

I am, however, delighted to have limited e-access to LinkedIn connection Steve Vinoski. Steve is a world class expert in CORBA know-how who’s co-authored (with Michi Henning) the most popular C++ CORBA programming book on the planet:

Even though Steve has moved on (C++ -> Erlang, CORBA -> REST), he’s been gracious enough to answer some basic beginner CORBA questions from me without requiring a consulting contract 🙂 Thanks for your generosity Steve!

Sandwich Dilemma

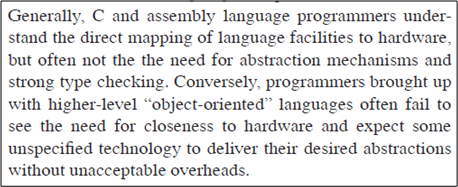

In this dated, but still relevant paper, “Evolving a language in and for the real world”, Bjarne Stroustrup laments one of the adoption problems that (still) faces C++:

Since the overarching theme of C++ is, and always has been, “efficient abstraction“, it’s not surprising that long time efficiency zealots and abstraction aficionados would be extremely skeptical of the value proposition served up by C++. I personally know this because I arrived at the C++ camp from the C world of “void *ptr” and bit twiddling. When I first started studying C++, its breadth of coverage, feature set, and sometimes funky syntax scared me into thinking that it wasn’t worth the investment of my time to “go there“.
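To make “efficient abstraction” a little more concrete, here’s a small sketch of my own (the function names `compare_ints`, `sorted_c_style`, and `sorted_cpp_style` are invented for this post) that sorts the same five integers two ways: the C way via `std::qsort` and untyped pointers, and the C++ way via `std::sort`:

```cpp
#include <algorithm>
#include <array>
#include <cstdlib>

// C style: the comparator receives untyped pointers and must cast them back.
inline int compare_ints(const void* a, const void* b) {
    const int x = *static_cast<const int*>(a);
    const int y = *static_cast<const int*>(b);
    return (x > y) - (x < y);
}

// Sorts a copy with qsort; element size and comparator are supplied by hand.
inline std::array<int, 5> sorted_c_style(std::array<int, 5> values) {
    std::qsort(values.data(), values.size(), sizeof(int), compare_ints);
    return values;
}

// Sorts a copy with std::sort; the element type is known at compile time.
inline std::array<int, 5> sorted_cpp_style(std::array<int, 5> values) {
    std::sort(values.begin(), values.end());
    return values;
}
```

Both return the same sorted array. The difference is that the `std::sort` version has no casts to get wrong, and because the compiler knows the element type it is often able to inline the comparison instead of calling through a function pointer. Whether that shows up as a measurable speedup depends on your compiler and data, so measure before you brag.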

I think it’s easier to get C programmers to make the transition to C++ than it is to get VM-based and interpreter-based programmers to make the transition. The education, more disciplined thinking style, and types of apps written (non-business, non-web) by “close to the metal” programmers map onto the C++ mindset more naturally.

What do you think? Is C++ the best of both worlds, or the worst of both worlds?

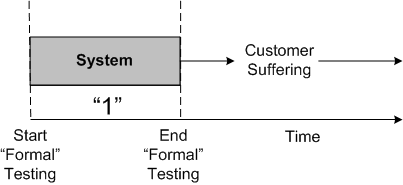

Customer Suffering

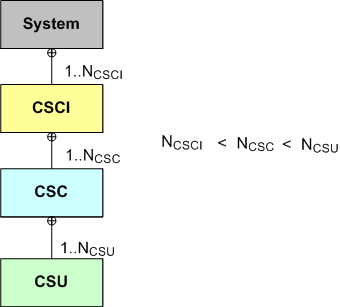

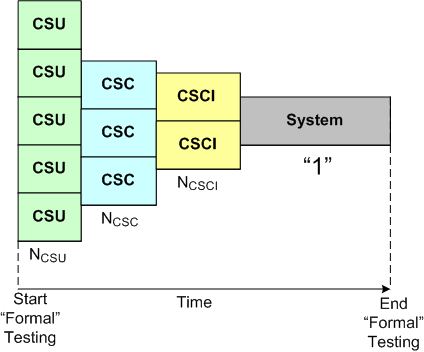

For some context, assume that your software-intensive system can actually be modeled in terms of “identifiable C”s:

Given this decomposition of structure, the ideal but pragmatically unattainable test plan that “may” lead to success is given by:

On the opposite end of the spectrum, the test plan that virtually guarantees downstream failure is given by:

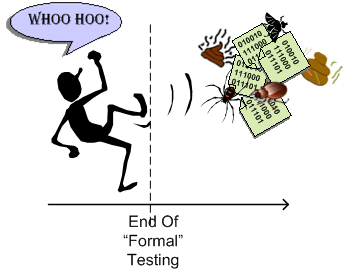

In practice, no program/project/product/software leader in their right mind skips testing at all the “C” levels of granularity. Instead, many are forced (by the ubiquitous “system” they’re ensconced in) to “fake it” because by the time the project progresses to the “Start Formal Testing” point, the schedule and budget have been blown to bits and punting the quagmire out the door becomes the top priority.

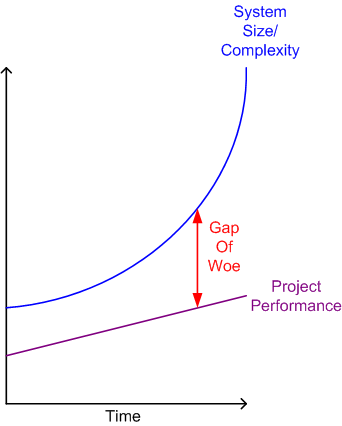

The Gap Of Woe

In “Why Software Fails”, the most common factors that contribute to software project failure are enumerated as:

- Unrealistic or unarticulated project goals

- Inaccurate estimates of needed resources

- Badly defined system requirements

- Poor reporting of the project’s status

- Unmanaged risks

- Poor communication among customers, developers, and users

- Use of immature technology

- Inability to handle the project’s complexity

- Sloppy development practices

- Poor project management

- Stakeholder politics

- Commercial pressures

Yawn. These failure factors have remained the same for forty years and there are no silver bullet(s) in sight. Oh sure, tools and practices and methodologies have “slightly” improved project performance over the decades, but the increase in size/complexity of the software systems we develop is outpacing performance improvement efforts by a large margin.