Archive

No Lessons Learned

Because I’m fascinated by the causes and ubiquity of socio-technical project explosions, I try to follow technical press reports on the status of big government contracts. Here’s a recent article detailing the demise of the DoD’s Joint Tactical Radio System (JTRS): “How to blow $6 billion on a tech project”.

Even though the reasons for big, software-intensive, multi-technology project failures have been well known for decades, disasters continue to be hatched and cancelled daily by both public and private institutions around the world – except yours, of course.

What follows are some snippets from the Ars Technica article and the JTRS Wikipedia entry. The well-known, well-documented, contributory causes to the JTRS project’s demise are highlighted in bold type.

When JTRS and GMR launched, the services broke out huge wish lists when they drafted their initial requests for proposals on individual JTRS programs. While they narrowed some of these requirements as the programs were consolidated, requirements were constantly revised before, during, and after the design process.

In hindsight, the military badly underestimated the challenges before it.

First and foremost was the software development problem. When JTRS started, software-defined radio (SDR) was still in its infancy. The project’s SCA architecture allowed software to manipulate field-programmable gate arrays (FPGAs) in the radio hardware to reconfigure how its electronics functioned, exposing those FPGAs as CORBA objects. But when development began, hardware implementations of CORBA for FPGAs didn’t really exist in any standard form.

Moving code for a waveform from one set of radio hardware to another didn’t just mean a recompile – it often meant significant rewrites to make it compatible with whatever FPGAs were used in the target radio, then further tweaking to produce an acceptable level of performance. The result: the challenge of core development tasks for each of the initial designs was often grossly underestimated. Some of those issues have been addressed by specialized CORBA middleware, such as PrismTech’s OpenFusion, but the software tools have been long in coming.

When JTRS began, there was no WiFi, no 3G or no 4G wireless, and commercial radio communications was relatively expensive. But the consumer industry didn’t even look at SDR as a way to keep its products relevant in the future. Now, ASIC-based digital signal processors are cheap, and new products also tend to include faster chips and new hardware features; people prefer buying a new $100 WiFi router when some future 802.11z protocol appears instead of buying a $3,000 wireless router today that is “future proofed” (and you can’t really call anything based on CORBA “future proofed”).

“If JTRS had focused on rapid releases and taken a more modular approach, and tested and deployed early, the Army could have had at least 80 percent of what it wanted out of GMR today, instead of what it has now—a certified radio that it will never deploy.”

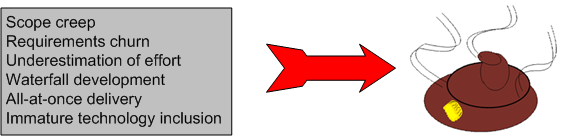

Having an undefined technical problem is bad enough, but it gets even worse when serious “scope creep” sets in during a 15-year project.

Each of the five sub-programs within JTRS aimed not at an incremental goal, but at delivering everything at once. That was a recipe for disaster.

By 2007 (10 years after start) the JTRS program as a whole had spent billions and billions—without any radios fielded.

In the fall of 2011, after 13 years of toil and $6B of our money wasted, the monster was put out of its misery. It was cancelled in October 2011 by the United States Undersecretary of Defense:

Our assessment is that it is unlikely that products resulting from the JTRS GMR development program will affordably meet Service requirements, and may not meet some requirements at all. Therefore termination is necessary.

And here’s what we, the taxpayers, have to show for the massive investment:

After 13 years in the pipeline, what those users saw was a radio that weighed as much as a drill sergeant, took too long to set up, failed frequently, and didn’t have enough range. (D’oh! and WTF!)

Misapplication Of Partially Mastered Ideas

Because the time investment required to become proficient with a new, complex, and powerful technology tool can be quite large, the decision to design C++ as a superset of C was not only a boon to the language’s uptake, but a boon to commercial companies too – most of whom developed their product software in C at the time of C++’s introduction. Bjarne Stroustrup’s decision killed those two birds with one stone because C++ allowed a gradual transition from the well known C procedural style of programming to three new, up-and-coming mainstream styles at the time: object-oriented, generic, and abstract data types. As Mr. Stroustrup says in D&E:

Companies simply can’t afford to have significant numbers of programmers unproductive while they are learning a new language. Nor can they afford projects that fail because programmers over enthusiastically misapply partially mastered new ideas.

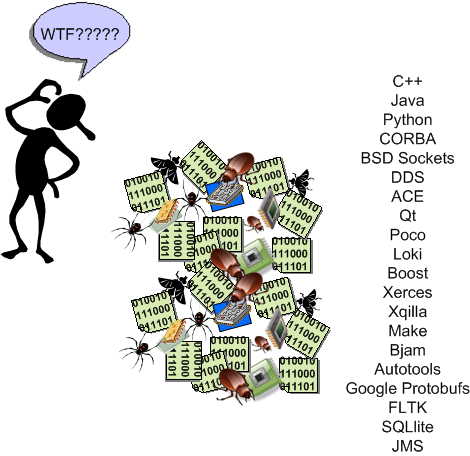
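To make the "gradual transition" concrete, here is a minimal sketch of my own (not from D&E). It shows C-style code that a C++ compiler accepts largely as-is, then wrapped first in an abstract data type and then used through a generic function. The names `Buffer`, `buffer_set`, `Message`, and `size_of` are made up for illustration.

```cpp
#include <cstddef>
#include <cstring>
#include <string>

// Step 0: existing C-style code. Apart from the std:: qualifiers and
// <cstring> header, this is what a C shop would already have.
struct Buffer {
    char data[32];
    std::size_t len;
};

void buffer_set(Buffer* b, const char* text) {
    // Copy at most 31 characters and always null-terminate.
    std::strncpy(b->data, text, sizeof(b->data) - 1);
    b->data[sizeof(b->data) - 1] = '\0';
    b->len = std::strlen(b->data);
}

// Step 1: wrap the same C struct in an abstract data type.
// The old function keeps working; callers just stop touching raw fields.
class Message {
public:
    explicit Message(const char* text) { buffer_set(&buf_, text); }
    std::size_t size() const { return buf_.len; }
    std::string str() const { return std::string(buf_.data, buf_.len); }
private:
    Buffer buf_;
};

// Step 2: a generic function that works with any type exposing size().
template <typename T>
std::size_t size_of(const T& item) {
    return item.size();
}
```

A team could stop at any of these steps and still ship, which is the point: `size_of(Message("hello"))` and `size_of(std::string("hello"))` both compile, while the original `buffer_set` call sites stay untouched.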

That last sentence in Bjarne’s quote doesn’t just apply to programming languages, but to big and powerful libraries of functionality available for a language too. It’s one challenge to understand and master a language’s technical details and idioms, but another to learn network programming APIs (CORBA, DDS, JMS, etc), XML APIs, SQL APIs, GUI APIs, concurrency APIs, security APIs, etc. Thus, the investment dilemma continues:

I can’t afford to continuously train my programming workforce, but if I don’t, they’ll unwittingly implement features as mini booby traps in half-learned technologies that will cause my maintenance costs to skyrocket.

BD00 maintains that most companies aren’t even aware of this ongoing dilemma – which gets worse as the complexity and diversity of their product portfolio rises. Because of this innocent, but real, ignorance:

- they don’t design and implement continuous training plans for targeted technologies,

- they don’t actively control which technologies get introduced “through the back door” and get baked into their products’ infrastructure, receiving in return a cacophony of duplicated ways of implementing the same feature in different code bases,

- their software maintenance costs keep rising and they have no idea why; or they attribute the rise to insignificant causes and “fix” the wrong problems.

I hate when that happens. Don’t you?

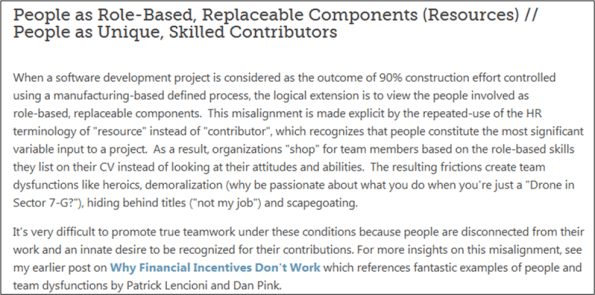

Aligned On A Misalignment

After recently tweeting this:

Chris Chapman tweeted this link to me: “Understanding Misalignments in Software Development Projects”. Lo and behold, the third “misalignment” on the page reads:

Chris and I seem to be aligned on this misalignment.

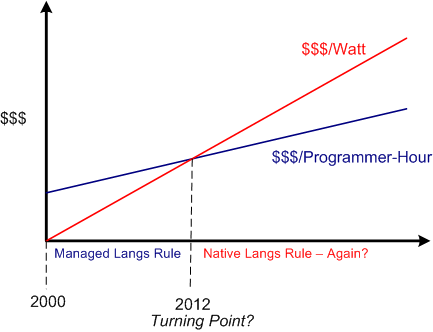

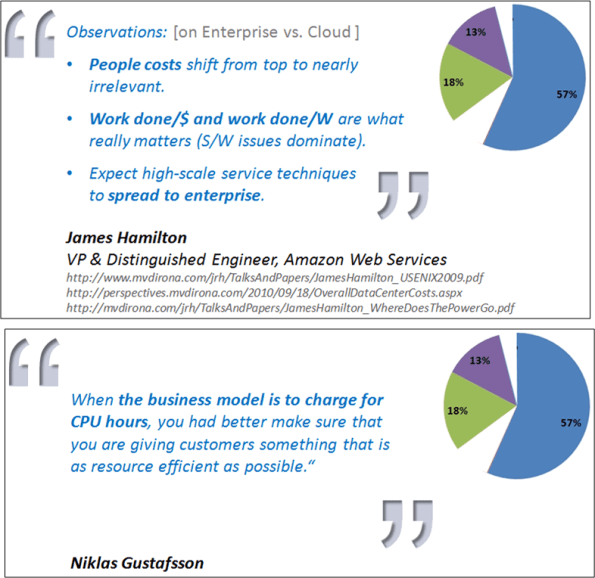

Performance Per Watt

Recently, I concocted a blog post on Herb Sutter’s assertion that native languages are making a comeback due to power costs usurping programming labor costs as the dominant financial drain in software development. The writer of this InfoWorld post seems to agree:

But now that Intel has decided to focus on performance per watt, as opposed to pure computational performance, it’s a very different ball game. – Bill Snyder

Since hardware developers like Intel have shifted their development focus towards performance per watt, do you think software development orgs will follow by shifting from managed languages (where the minimization of labor costs is king) to native languages (where the minimization of CPU and memory usage is king)?

Hell, I heard Facebook chief research scientist Andrei Alexandrescu (admittedly a native language advocate (C++ and D)) mention the never-used-before “users per watt” metric in a recent interview. So, maybe some companies are already onboard with this “paradigm shift”?

Your Fork, Sir

To that dumbass BD00 simpleton, it’s simple and clear cut. People don’t like to be told how to do their work by people who’ve never done the work themselves and, thus, don’t understand what it takes. Orgs that insist on maintaining groups whose sole purpose is to insert extra tasks/processes/meetings/forms/checklists of dubious “added-value” into the workpath foster mistrust, grudging compliance, blown schedules, and unnecessary cost incursion. It certainly doesn’t bring out the best in their people, dontcha think?

You would think that presenting “certified” obstacle-inserters with real industry-based data implicating the cost-inefficiency of their imposed requirements on value-creation teams might cause them to pause and rethink their position, right? Fuggedaboud it. All it does, no matter how gently you break the news, is cause them to dig in their heels; because it threatens the perceived importance of their livelihood.

Of course, this post, like all others on this bogus blawg, is a delusional distortion of so-called reality. No?

New Native Languages

The editor of Dr. Dobb’s Journal, Andrew Binstock, has put together a nice little slideshow summary of four “modern” native (native = no virtual machine running underneath the code) programming languages here: New Native Languages. As the figure below shows, these relatively new languages are D, Go, Vala, and Rust.

According to Andy, “older” native languages like C11 and C++11 “can have the feel of a past era onto which contemporary elements have been grafted”. I don’t agree with his “grafted” assertion, but I have to begrudgingly agree with him when he says:

The upshot is that to be truly expert in C++ requires far more education and far more effort than comparable mainstream OO languages (notably, Java and C#). – Andrew Binstock

Since I have much admiration for “D” creator Walter Bright and co-evolver Andrei Alexandrescu, I’ve been following the evolution of their language from afar. These two guys really know C++ inside and out; warts and all. Thus, unlike the 1995 Sun marketing proclamation that “Java is what C++ should have been”, Walter and Andrei are truly evolving D into “what C++ should be” – which is not just an OO language. Plus, they are being very gracious about it.

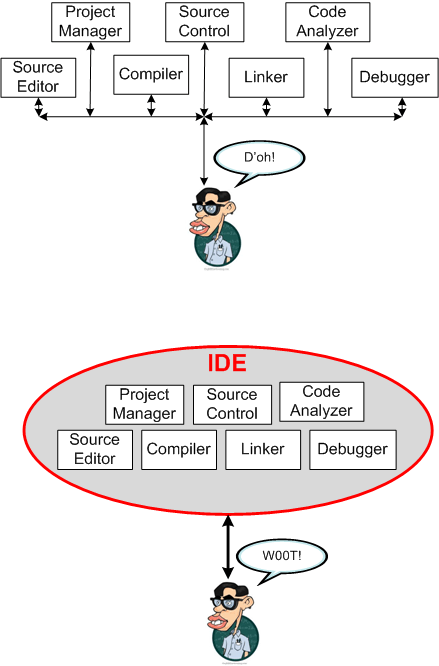

The IDEs Of March

I’m an Integrated Development Environment (IDE) man. I like the way IDEs (Eclipse, Visual Studio) provide an overarching dashboard overview and uniform control over the tool set needed to build software. Plus, I’m horrible at remembering commands; and as I get older, it gets worse.

How about you? Are you an IDE user or a command line person?

Relentless Assault

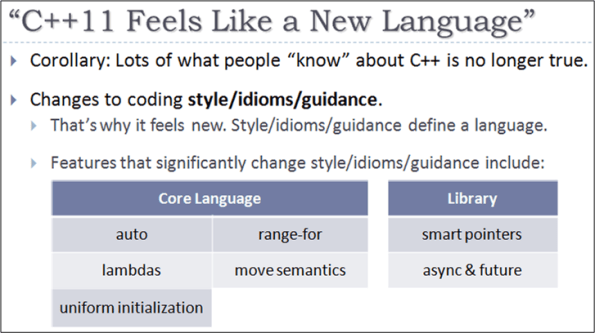

Microsoft’s Herb Sutter is a whirling dervish. Since the ISO approval of the C++11 standard last fall, he’s been on a crusade to promote C++ via several comparative assaults on Java and Microsoft’s own C#.

Herb’s latest blitzkrieg occurred at the lang.next 2012 conference (video link, slides link). In this slide, Herb summarizes some of the C++11 features that close the productivity and safety gap relative to C# and Java.
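The slide itself isn't reproduced here. As a rough sketch of the kind of C++11 features usually cited in this context (`auto`, range-based `for`, lambdas, smart pointers, brace initialization), here is a small example. The selection is my own pick, not necessarily Herb's list, and `Widget`, `make_widgets`, and `heavy_names` are hypothetical names.

```cpp
#include <algorithm>
#include <memory>
#include <string>
#include <vector>

struct Widget {
    std::string name;
    int weight;
};

// Smart pointers + brace initialization: no new/delete anywhere.
std::vector<std::shared_ptr<Widget>> make_widgets() {
    return {
        std::make_shared<Widget>(Widget{"gear", 5}),
        std::make_shared<Widget>(Widget{"bolt", 1}),
        std::make_shared<Widget>(Widget{"axle", 9}),
    };
}

// Returns the names of widgets heavier than `limit`, sorted alphabetically.
std::vector<std::string> heavy_names(
        const std::vector<std::shared_ptr<Widget>>& widgets, int limit) {
    std::vector<std::string> names;
    for (const auto& w : widgets) {  // range-based for + auto
        if (w->weight > limit) {
            names.push_back(w->name);
        }
    }
    // Lambda as an inline comparator.
    std::sort(names.begin(), names.end(),
              [](const std::string& a, const std::string& b) { return a < b; });
    return names;
}
```

The widgets are freed automatically when the last `shared_ptr` goes away, which is the "safety" half of the argument; the loop and lambda are the "productivity" half.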

In this next slide, Herb contrasts C++11’s adherence to its more open “trust the programmer” philosophy against the more closed “we know what’s good for you” C#/Java philosophy.

Unless the large movement of business applications to the cloud is the latest fad du jour, the following two slides show that the largest software development and maintenance costs will shift from labor to compute efficiency. Thus, it doesn’t matter how programmer friendly your language is and how low the salaries you pay are, you might find that your costs are going up because of power drain in massive data centers and time spent frantically optimizing VM-based code that’s relatively slower and bigger.

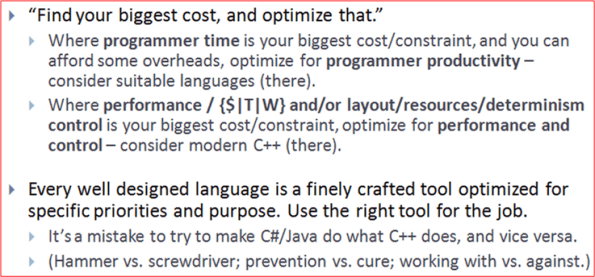

In closing, Herb, the nice guy that he is, backs off a bit with this “the right tool for the right job” slide:

Is Herb stretching the truth and exaggerating the improvements offered up by C++11? The fact that I wrote this post obviously means that I don’t think so. What about you, dear reader? What say you?

Starting Point

Unless you’re an extremely lucky programmer or you work in academia, you’ll spend most of your career maintaining pre-existing, revenue generating software for your company. If your company has multiple products and you don’t choose to stay with one for your whole career, then you’ll also be hopping from one product to another (which is a good thing for broadening your perspective and experience base – so do it).

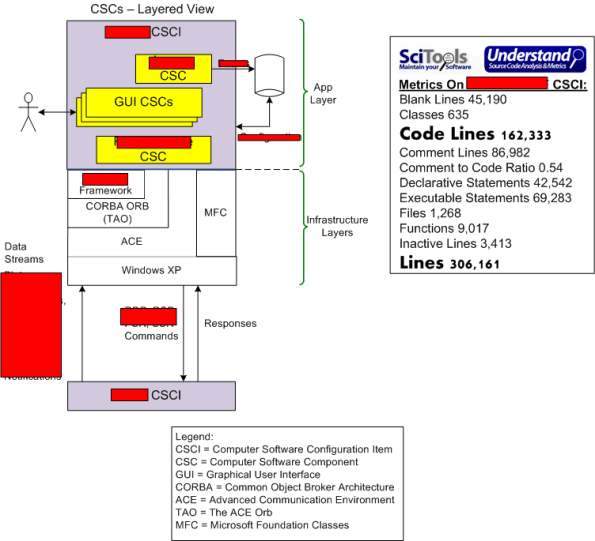

For your viewing pleasure, I’ve sketched out the structure and provided some code-level metrics on the software-intensive product that I recently started working on. Of course, certain portions of the graphic have been redacted for what I hope are obvious reasons.

How about you? What does your latest project look like?

Mistake Recognition

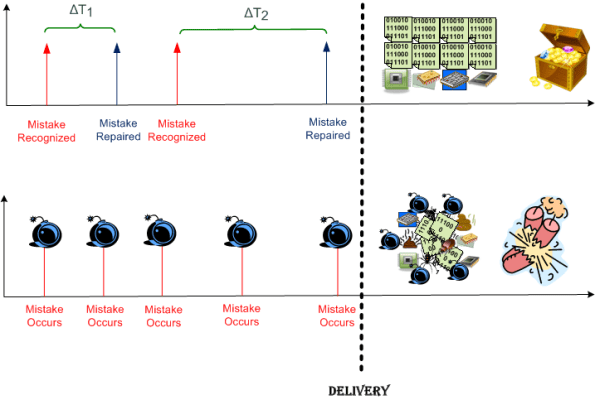

First question: Is “mistake recognition” allowed in your organization? Second question, if, and only if, the answer to the first question is “yes”: How many different “enabler” groups are required by your process to “have a say” in the path from “recognized” to “repaired”?

If the answer to the first question is a cultural “no”, then as the lower trace in the dorky diagram shows, out the door your cannonballs go!