Archive

Pay As You Go

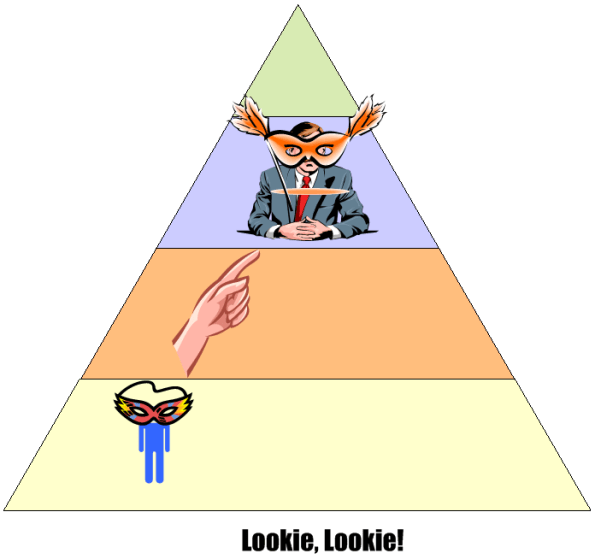

An age-old and recurring source of contention in software-intensive system development is the issue of deciding how much time to spend coding and how much time to spend writing documentation artifacts. The figure below shows three patterns of development: BDUF, CADOB, and PAYGO.

Prior to the agile “revolution”, most orgs spent a lot of time generating software documentation during the front end of a project. The thinking was that if you diligently mapped out and physically recorded your design beforehand, the subsequent coding, integration, and test phases would proceed smoothly and without a hitch. Bzzzt! BDUF (bee-duff) didn’t work out so well. Religiously following the BDUF method (a.k.a. the waterfall method) often led to massive schedule and cost overruns along with crappy and bug-infested software. Bummer.

In search of higher quality and lower cost results, a well-meaning group of experts conceived of the idea of “agile” software development. These agile proponents, and the legions of programmers soon to follow, pointed to the publicly visible crappy BDUF results and started evangelizing minimal documentation up front. However, since the vast majority of programmers aren’t good at writing anything but code, these legions of programmers internalized the agile advice to the extreme; turning the dials to “10”, as Kent Beck would say. Citing the agile luminaries, massive numbers of programmers recoiled at any request for up front documentation. They happily started coding away, often leading to an unmaintainable shish-CADOB (Crappy Architecture and Design Out Back). Bozo managers, exclusively measured on schedule and cost performance by equally unenlightened corpocratic executives, jumped on this new silver bullet train. Bzzzt! Extreme agility hasn’t worked very well either. The extremist wing of the agilista party has in effect regressed back to the dark ages of hack-and-fix programming, hatching impressive disasters on par with the BDUF crews. In extreme agile projects where documentation is still required by customers, a set of hastily prepared, incorrect, and unusable design/user/maintenance artifacts (a.k.a. camouflage) is often produced at the tail end of the project. Boo hoo, and WAAAAGH!

As the previously presented figure illustrates, a third, hybrid pattern of software-intensive system development can be called PAYGO. In the PAYGO method, the coding/test and artifact-creation activities are interlaced and closely coupled throughout the development process. If done correctly, progressively less project time is spent “updating” the document set and more time is spent coding, integrating, and testing. More importantly, the code and documentation are diligently kept in synch with each other.

An important key to success in the PAYGO method is to keep the content of the document artifact set at a high enough level of abstraction “above” the source code so that it doesn’t need to be annoyingly changed with every little code change. A second key enabler to PAYGO success is the ability and (more importantly) the will to write usable technical documentation. Sadly, because the barriers to adoption are so high, I can’t imagine the PAYGO method being embraced now or in the future. Personally, I try to do it covertly, under the radar. But hey, don’t listen to me because I don’t have any credentials, I like to make stuff up, and I’ve been told by infallible and important people that I’m not fit to lead 🙂

The only way to learn how to write is by wrote.

Stacks And Icebergs

The picture below attempts to communicate the explosive growth in software infrastructure complexity that has taken place over the past few decades. The growth in the “stack” has been driven by the need for bigger, more capable, and more complex software-intensive systems required to solve commensurately growing complex social problems.

In the beginning there was relatively simple, “fixed” function hardware circuitry. Then, along came the programmable CPU (Central Processing Unit). Next, the need to program these CPUs to do “good things” led to the creation of “application software”. Moving forward, “operating system software” entered the picture to separate the arcane details and complexity of controlling the hardware from the application-specific problem solving software. Next, in order to keep up with the pace of growing application software size, the capability for application designers to spawn application tasks (same address space) and processes (separate address spaces) was designed into the operating system software. As the need to support geographically dispersed, distributed, and heterogeneous systems appeared, “communication middleware software” was developed. On and on we go as the arrow of time lurches forward.

As hardware complexity and capability continue to grow rapidly, the complexity and size of the software stack also grow rapidly in an attempt to keep pace. The ability of the human mind (which takes eons to evolve compared to the rate of technology change) to comprehend and manage this complexity has been overwhelmed by the pace of advancement in hardware and software infrastructure technology.

Thus, in order to appear “infallibly in control” and to avoid the hard work of “understanding” (which requires diligent study and knowledge acquisition), bozo managers tend to trivialize the development of software-intensive systems. To self-medicate against the pain of personal growth and development, these jokers tend to think of computer systems as simple black boxes. They camouflage their incompetence by pretending to have a “high level” of understanding. Because of this aversion to real work, these dudes have no problem committing their corpocracies to ridiculous and unattainable schedules in order to win “the business”. Have you ever heard the phrase “aggressive schedule”?

“You have to know a lot to be of help. It’s slow and tedious. You don’t have to know much to cause harm. It’s fast and instinctive.” – Rudolph Starkermann

A Professional Failure

I’m a professional failure. Why? Because I’m pretty sure that I’ve never satisfied any unreasonable schedule that I was ever “given” to meet. Since almost all schedules are unreasonable, then, by definition, I’m a professional failure. Hell, it didn’t even matter if I was the one who created the unreasonable schedule in the first place; I still failed. Bummer.

Looking back, I think that I’ve figured out why I underperformed (<– that’s management-speak for “failed”). It’s simply that the problem solving projects that I’ve worked on have been grossly underestimated. Why is that? Because they all required learning something new and acquiring new knowledge in the problem area of pecuniary interest.

So, how can you know if a given schedule is unreasonable, and does it matter if you conclude that meeting the schedule is a lost cause? You most likely can’t, and no, it doesn’t matter. Assume that, based on personal experience and a deep “knowing” of what’s involved in a project, you actually can determine that the schedule is a laughable, but innocent, lie. There’s nothing you can do about it. If you speak up, at best, you’ll be ignored. At worst, you’ll receive multiple peek-a-boo visits from one or more STSJs (Status Taker and Schedule Jockey) who don’t have to do any of the project work themselves.

How about you, have you been a perpetual failure like me? Of course not. Your resume says here that you have been 100% successful on every project you’ve worked on; and that implies that you’ve met every schedule. But wait, every other resume in my stack says the same thing. Damn! How am I gonna decide among all of these perfect people who gets the job?

90 Percent Done

In order for those in charge (and those who are in charge of those who are in charge ad infinitum) to track and control a project, someone has to estimate when the project will be 100% complete. For any software development project of non-trivial complexity, it doesn’t matter who conjures up the estimate, or what drugs they were on when they verbalized it, the odds are huge that the project will be underestimated. That’s because in most corpo command and control hierarchies, there is always implicit pressure to underestimate the effort needed to “get it done”. After all, time is money and everyone wants to minimize the cost to “get it done”. Even though everybody smells the silent-but-deadly stank in the air and knows that’s how the game is played, everybody pretends otherwise.

The graph below shows a made-up example (like Jon Lovitz, I’m a pathological liar who makes everything up, so don’t believe a word I say) of a project timeline. On day zero, the obviously infallible project manager (if you browse linkedin.com, no manager has ever missed a due date) plots a nice and tidy straight (blue) line to the 100% done date. During the course of executing the project, regular status is taken and plotted as the “actual” progress (red) line so that everybody who is important in the company can know what’s going on.

For the example project modeled by the graph, the actual progress starts deviating from the planned progress on day one. Of course, since the vast majority of project (and product and program) managers are klueless and don’t have the expertise to fix the deficit, the gap widens over time. On really dorked up projects, the red line starts above the blue line and the project is ahead of schedule – whoopee!

At around the 90-95% scheduled-to-be-done time, something strange (well, not really strange) happens. Each successive status report gets stuck at 90% done. Those in charge (and those who are in charge of those who are in charge ad infinitum) say “WTF?” and then some sort of idiotic and ineffective action, like applying more pressure or requiring daily status meetings or throwing more DICs (Dweebs In the Cellar) on the project, is taken. In rare cases, the project (or product or program) manager is replaced. It’s rare because project (and product and program) managers and those who appoint them are infallible, remember?

So, is “continuous replanning”, where new scheduled-to-be-done dates are estimated as the project progresses, the answer? It can certainly help by reducing the chance of a major “WTF” discontinuity at the 90% done point. However, it’s not a cure-all. As long as the vast majority of project (and product and program) managers maintain their attitude of infallibility and eschew maintaining some minimum level of technical competence in order to sniff out the real problems, help the team, and make a difference, it’ll remain the same-old same-old forever. Actually, it will get worse because as the inherent complexity of the projects that a company undertakes skyrockets, this lack of leadership excellence will trigger larger performance shortfalls. Bummer.
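For what it’s worth, the simplest form of continuous replanning is a straight-line extrapolation from the progress observed so far. The sketch below is made up for illustration: the function name `replanned_finish_day` and the sample numbers are my assumptions, not data from any real project. It also assumes that the reported percent-done figures are truthful, which is a big if.

```python
def replanned_finish_day(day: int, percent_done: float) -> float:
    """Re-estimate the 100% done day by linear extrapolation.

    Hypothetical helper: assumes progress continues at the average
    rate observed between day zero and the current day.
    """
    if day <= 0 or not 0 < percent_done <= 100:
        raise ValueError("need a positive day and 0 < percent_done <= 100")
    return day * 100.0 / percent_done


# A project planned for 100 days that is only 40% done on day 50
# is on course to finish around day 125, not day 100.
print(replanned_finish_day(50, 40))  # 125.0

# The same project reporting 60% done on day 90 now points at day 150.
print(replanned_finish_day(90, 60))  # 150.0
```

Re-running the estimate at every status point surfaces the slip early, instead of letting it pile up into one big surprise near the original due date. It does nothing, of course, to fix whatever is causing the slip.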

Learn A Little, Do A Lot

There is no “learn” in “do” – manager yoda

Assume that you have a basic skill set, some expertise, and some experience in a domain where a task needs to be performed to solve a problem. Now assume that your boss assigns that problem to you and, out of curiosity, you decide to track how you go about solving the problem.

The figure below shows the likely result of tracking your problem solving effort. You probably converged on the solution via a series of continuous Learning-Doing iterations. On your first iteration, you gathered a bunch of information and spent a considerable amount of time immersing yourself in the problem area to “learn” both context and content. Then you “did” a little, producing some type of work output – which was wrong. Next, you spent some more time “learning” by analyzing your output for errors/mistakes and correlating your work against the information pile that you amassed. Then you repeated the cycle, doing more while having to learn less on each subsequent iteration until voila, the problem was solved!

So, how can this natural problem solving process get hosed and low quality, shoddy work outputs be produced? Here are three possible reasons:

- Lack of availability of, or accessibility to, applicable information.

- Low quality, inconsistent, and ambiguous information about the problem.

- Explicit or implicit pressure to abandon the natural and iterative Learn-then-Do problem solving process.

IMHO, it should be a manager’s top priority to remove these obstacles to success. If a manager ignores, or can’t fulfill, this critical responsibility and he/she is just an obsessive, textbook-trained “status taker and schedule jockey”, then his/her team will transform into a group of low quality performers. More importantly, he/she will lose the respect of those team members who deeply care about quality.

The Vault Of No Return

In big system development projects, continuous iteration and high speed error removal are critical to the creation of high quality products. Thus, it’s essential to install flexible and responsive Configuration Management and Quality Assurance (CMQA) support systems that provide easy access to intermediate work products that (most definitely) will require rework as a result of ongoing learning and new knowledge acquisition.

As opposed to virtually all methodologies that exhort early involvement of the CMQA folks in projects, I (but who the hell am I?) advise you to consider otherwise. If you have the power (and sadly, most people don’t), then keep the corpo CMQA orgs out of your knickers until the project enters the production phase. Why? Because I assert that most big company CMQA orgs innocently think they are the ends, and not a means. Thus, in order to project an illusion of importance, the org creates and enforces Draconian, Rube Goldberg-like, high latency, low value-added, schedule-busting procedures for storing work products in the vault of no return. Once project work products are locked in the vault, the amount of effort and time to retrieve them for error correction and disambiguation is so demoralizing and frustrating that most well-meaning information creators just give up. Sadly, instead of change management, most CMQA orgs unconsciously practice change prevention.

The figure below contrasts two different product developments in terms of when a CMQA org gets intertwined with the value creation project pipeline. The top half shows early coupling and the bottom half shows late coupling. Since upstream work products are used by downstream workgroups to produce the next stage’s outputs, the downstreamers will discover all kinds of errors of commission and (worse) omission. However, since the project info has been locked in the vault of no return, if the culture isn’t one of infallible machismo, upstream producers and downstream consumers will circumvent the “system” and collaborate behind the scenes to fix mistakes and clarify ambiguities to increase quality. If and when that does happen, the low quality, vault-locked information gets out of synch with the informal and high quality information in the pipeline. Even though that situation is better than having both the vault and project pipeline filled with error-infested information, post-delivery product maintenance teams will encounter outdated and incorrect blueprints when they retrieve the “formal” blueprints from the vault. Bummer.

Distributed Vs. Centralized Control

The figure below models two different configurations of a globally controlled, purposeful system of components. In the top half of the figure, the system controller keeps the producers aligned with the goal of producing high quality value stream outputs by periodically sampling status and issuing individualized, producer-specific commands. This type of system configuration may work fine as long as:

- the producer status reports are truthful

- the controller understands what the status reports mean so that effective command guidance can be issued when problems manifest.

If the producer status reports aren’t truthful (politics, culture of fear, etc.), then the command guidance issued by the controller will not be effective. If the controller is clueless, then it doesn’t matter if the status reports are truthful. The system will become “hosed”, because the inevitable production problems that arise over time won’t get solved. As you might guess, when the status reports aren’t truthful and the controller is clueless, all is lost. Bummer.

The system configuration in the bottom half of the figure is designed to implement the “trust but verify” policy. In this design, the global controller directly receives samples of the value streams in addition to the producer status reports. The integration of value stream samples into the information cache available to the controller takes care of the “untruthful status report” risk. Again, if the controller is clueless, the system will get hosed. In fact, there is no system configuration that will work when the controller is incompetent.
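The difference between the two configurations can be sketched in a few lines of Python. This is only a toy model under my own assumptions: the `Producer` class, the two controller functions, the quality scores, and the `threshold` and `tolerance` values are all hypothetical and don’t come from the figure. The status-only controller sees what producers say; the “trust but verify” controller also samples what they actually produce.

```python
from dataclasses import dataclass


@dataclass
class Producer:
    name: str
    true_quality: float      # actual quality of the value stream output (0.0 to 1.0)
    reported_quality: float  # what the producer claims in its status report

    def status_report(self) -> float:
        return self.reported_quality

    def sample_output(self) -> float:
        return self.true_quality


def status_only_controller(producers, threshold=0.8):
    """Top-half configuration: flag producers based on status reports alone."""
    return [p.name for p in producers if p.status_report() < threshold]


def trust_but_verify_controller(producers, threshold=0.8, tolerance=0.1):
    """Bottom-half configuration: also sample the value stream itself."""
    flagged = []
    for p in producers:
        sampled = p.sample_output()
        misreported = abs(p.status_report() - sampled) > tolerance
        if sampled < threshold or misreported:
            flagged.append(p.name)
    return flagged


producers = [
    Producer("A", true_quality=0.9, reported_quality=0.9),   # healthy and honest
    Producer("B", true_quality=0.5, reported_quality=0.95),  # struggling, reports green
    Producer("C", true_quality=0.6, reported_quality=0.6),   # struggling, reports honestly
]

print(status_only_controller(producers))       # ['C']
print(trust_but_verify_controller(producers))  # ['B', 'C']
```

Producer B sails past the status-only controller and gets caught only when the value stream is sampled. Note that neither function models a clueless controller: flagging a problem is the easy part, and knowing what command guidance to issue next is where competence comes in.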

How many system controllers do you know that actually sample and evaluate value stream outputs? For those that don’t, why do you think they don’t?

The system design below says “sayonara dude” to the global omnipotent and omniscient controller. Each producer cell has its own local, closely coupled, and knowledgeable controller. Each local controller has a much smaller scope and workload than the previous two monolithic global controller designs. In addition, a single clueless local controller may be compensated for if the collective controller group has put into place a well-defined, fair, and transparent set of criteria for replacement.

What types of systems does your organization have in place? Centrally controlled types, distributed control types, a mixture of both, hybrids? Which ones work well? How do you see yourself in your org? Are you a producer, a local controller, both a local controller and a producer, an overconfident global controller, a narcissistic controller of global controllers, a supreme controller of controllers who control other controllers who control yet other controllers? Do you sample and evaluate the value stream?

SYSMOD Process Overview

Besides the systemic underestimation of cost and schedule, I believe that most project overruns are caused by shoddy front end system engineering (but that doesn’t happen in your org, right?). Thus, I’ve always been interested and curious about various system engineering processes and methods (I’ve even developed one myself – which was ignored of course 🙂 ). Tim Weilkiens, author of Systems Engineering with SysML/UML, has developed a very pragmatic and relatively lightweight system engineering process called SYSMOD. He uses SYSMOD as a framework to teach SysML in his book.

The figure below shows a summary of Mr. Weilkiens’s SYSMOD process in a two-level table of activities. All of SYSMOD’s output artifacts are captured and recorded in a set of SysML diagrams, of course. Understandably, the SYSMOD process terminates at the end of the system design phase, after which the software and hardware design phases start. Like any good process, SYSMOD promotes an iterative development philosophy where the work at any downstream point in the process can trigger a revisit to previous activities in order to fix mistakes and errors uncovered through learning and new knowledge discovery.

The attributes that I like most about SYSMOD are that it:

- Seamlessly blends the best features of both object-oriented and structured analysis/design techniques together.

- Highlights data/object/item “flows” – which are usually relegated to the background as second class citizens in pure object-oriented methods.

- Starts with an outside-in approach based on the development of a comprehensive system context diagram – as opposed to just diving right into the creation of use cases.

- Develops a system glossary to serve as a common language and “root” of shared understanding.

How does the SYSMOD process compare to the ambiguous, inconsistent, bloated, one-way (no iterative loopbacks allowed), and high latency system engineering process that you use in your organization 🙂 ?

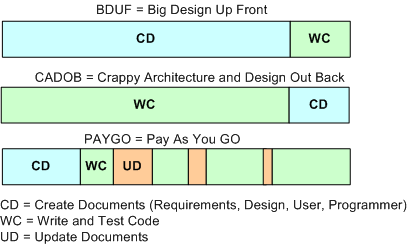

Right, Right, Right.

Great leaders get the right info to the right people at the right time. They don’t hide behind the “it’s not my job” cliche. They don’t just “delegate this” and “delegate that” like a card dealer at a casino. They don’t just sit back on their thrones, get manicures, and “review and approve”. They don’t just passively collect “status and schedule” information. They don’t set ambiguous and indecipherable direction, and then change it at will whenever it suits their personal agenda. They don’t mandate the latest management “technique” after they read about it in a two-page Harvard Business Review article.

If getting the right info to the right people at the right time requires a leader to generate some of the information him/herself, then they do it.

Delegating only works when the delegator works too. – Robert Half

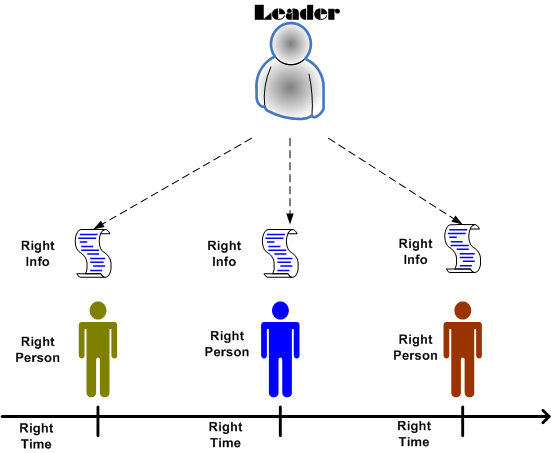

Who’s That Masked Man?

I’m very skeptical of management consultants, but the dudes at VitalSmarts are really good. They are responsible for the wonderful “crucial” pair of books, Crucial Conversations and Crucial Confrontations.

I’ve read both of these along with Influencer. They’re all very “down to earth” and highly accessible tomes that detail what works and what doesn’t work in terms of leading organizations of people. Their simple and “executable” advice is backed by academic research and, most importantly, their direct experiences from interacting with lots and lots (thousands) of real people in working organizations around the globe.

The following snippet from their latest e-newsletter caught my eye:

“People are excellent at masking ability problems.”

Man, ain’t that the truth! Along with you, I’ve put the “mask” on many times, both willingly and unwillingly. The question is: “what would cause people to do this?”

I think the main reason why people try to feign expertise is because they are stuck working in archaic corpo CCHs (Command & Control Hierarchies). All CCH orgs unquestioningly assume that everyone within the pyramid walls is supremely competent, regardless of whether they are or not. In a CCH, anyone who dares to persistently point out “ability” problems is excommunicated, regardless of how much evidence is presented to prove the case so that a beneficial change can be made. Heaven forbid the case where a lower level masked associate points to the huge masks being worn by one or more of the obviously infallible managers entrenched in an upper echelon. Retribution is swift and unambiguous.