Archive

Dependable Mission Critical Software

In this post, Embedded.com – Software for dependable systems, Jack Ganssle introduced me to the book: Software for Dependable Systems–Sufficient Evidence?. It was written by the “Committee on Certifiably Dependable Software Systems” and it’s available as a free PDF download.

Despite being written by a committee (blech!), and despite the bland title (yawn), I agree with Jack in that it’s a riveting geek read. It’s understandable to field-hardened practitioners and it’s filled with streetwise wisdom about building dependability into large, mission critical software systems that can kill people or cause massive financial loss if they collapse under stress. Essentially, it says that all the bloated, costly, high-falutin safety and security and certification processes in existence today don’t guarantee squat – except jobs for self-important bureaucrats and wanna-be-engineers. They don’t say it THAT way of course, but that’s my warped and unprofessional interpretation of their message.

Here are a few gems from the 149 page pdf:

As is well known to software engineers, by far the largest class of problems arises from errors made in eliciting, recording, and analysis of requirements.

Undependable software suffers from an absence of a coherent and well articulated conceptual model.

Today’s certification regimes and consensus standards have a mixed record. Some are largely ineffective, and some are counterproductive. (<- This one is mind blowing to me)

The goal of certifiably dependable software cannot be achieved by mandating particular processes and approaches regardless of their effectiveness in certain situations.

In addition to lampooning the “way things are currently done” for certifying software-centric dependability, the committee dudes actually make some recommendations for improving the so-called state of the art. Stunningly, they don’t prescribe yet another costly, heavyweight process of dubious effectiveness. They recommend any process composed of best practices, as long as there is scrutable connectivity from phase to phase and from start to end to “preserve the chain of evidence” for a claim of dependability that vendors of such software should be required to make. Where there is a gap between links in the chain of scrutability, they recommend rigorous analysis to fill it.

To make the transition to the new mindset of scrutable connectivity, they say that these skills, which are rare today and difficult to acquire, will be required in the future:

- True systems thinking (not just specialized, localized, algorithmic thinking that’s erroneously praised as systems thinking by corpocracies) of the properties of the system as a whole and the interactions among its components.

- The art of simplifying complex concepts, which is difficult to appreciate since the awareness of the need for simplification usually only comes (if it DOES come at all) with bitter experience and the humility gained from years of practice.

Drum roll please, because my absolute favorite entry in the book, which tugs at my heart, is as follows:

To achieve high levels of dependability in the foreseeable future, striving for simplicity is likely to be by far the most cost-effective of all interventions. Simplicity is not easy or cheap but its rewards far outweigh its costs.

That passage resonates deeply with me because, even though I’m not good at it, that’s what my primary professional goal has been for 20+ years. Clueless companies that put complexifying and obfuscating experts that nobody can understand up on a pedestal, deserve what they get:

- incomprehensible, unmaintainable, and undependable products

- a disconnected and apathetic workforce

- low (if any) profit margins.

As my Irish friend would say, they are all fecked up. They’re innocent and ignorant, but still fecked up.

Two Hundred And Fifty To Zero

Whoo Hoo! Since March of 2009, I’ve posted just over 250 BS blog entries. However, my labor of love has led to zero supplemental income. As they say (whoever the ubiquitous they are), “don’t quit your day job”. That’s OK, because I love my day job. Since the vast majority of the people that I work with, and for, are good and decent people that give their best every day, I’m a happy camper.

My Velocity

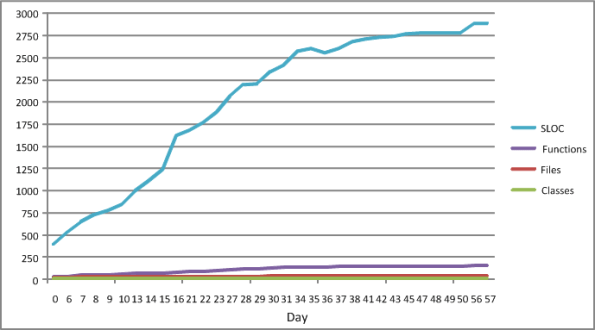

The figure below shows some source code level metrics that I collected on my last C++ programming project. I only collected them because the process was low ceremony, simple, and unobtrusive. I ran the source code tree through an easy to use metrics tool on a daily basis. The plots in the figure show the sequential growth in:

- The number of Source Lines Of Code (SLOC)

- The number of classes

- The number of class methods (functions)

- The number of source code files

So Whoopee. I kept track of metrics during the 60 day construction phase of this project. The question is: “How can a graph like this help me improve my personal software development process?”.

The slope of the SLOC curve, which measured my velocity throughout the duration, doesn’t tell me anything my intuition can’t deduce. For the first 30 days, my velocity was relatively constant as I coded, unit tested, and integrated my way toward the finished program. Whoopee. During the last 30 days, my velocity essentially went to zero as I ran end-to-end system tests (which were designed and documented before the construction phase, BTW) and refactored my way to the end game. Whoopee. Did I need a plot to tell me this?
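For illustration only, here is a minimal sketch of how "velocity as the slope of the SLOC curve" could be computed from daily samples. It is not the metrics tool mentioned above; the function name `velocity`, the list `daily_sloc`, and every number in it are hypothetical and merely mimic the shape described (steady growth for 30 days, then flat for 30 days).

```python
def velocity(sloc_by_day, start, end):
    """Average SLOC added per day between two day indexes (end must be > start)."""
    if end <= start:
        raise ValueError("end must be greater than start")
    return (sloc_by_day[end] - sloc_by_day[start]) / (end - start)


# Hypothetical samples for days 0..60: 100 SLOC/day for 30 days, then no growth.
daily_sloc = [100 * day for day in range(31)] + [3000] * 30

coding_velocity = velocity(daily_sloc, 0, 30)    # construction: code, unit test, integrate
testing_velocity = velocity(daily_sloc, 30, 60)  # system test and refactoring

print(coding_velocity, testing_velocity)
```

Comparing the two slopes gives the same "constant, then roughly zero" picture as the plot, just as two numbers instead of a curve.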

I’ll assert that the pattern in the plot will be unspectacularly similar for each project I undertake in the future. Depending on the nature/complexity/size of the application functionality that will need to be implemented, only the “tilt” and the time length will be different. Nevertheless, I can foresee a historical collection of these graphs being used to produce better future cost estimates, but not being used much to help me improve my personal “process”.

What’s not represented in the graph is a metric that captures the first 60 days of problem analysis and high level design effort that I did during the front end. OMG! Did I use the dreaded waterfall methodology? Shame on me.

iSpeed OPOS

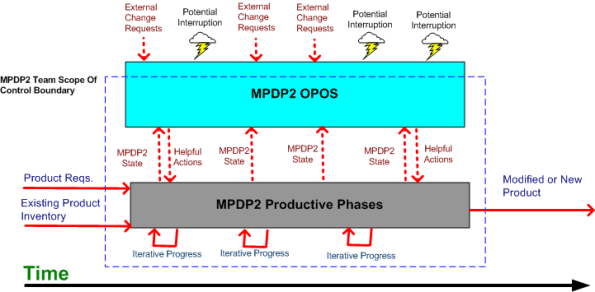

A couple of years ago, I designed a “big” system development process blandly called MPDP2 = Modified Product Development Process version 2. It’s version 2 because I screwed up version 1 badly. Privately, I named it iSpeed to signify both quality (the Apple-esque “i”) and speed but didn’t promote it as such because it didn’t sound nerdy enough. Plus, I was too chicken to introduce the moniker into a conservative engineering culture that innocently but surely suppresses individuality.

One of the MPDP2 activities, which stretches across and runs in parallel to the time sequenced development phases, is called OPOS = Ongoing Planning, Ongoing Steering. The figure below shows the OPOS activity gaz-intaz and gaz-outaz.

In the iSpeed process, the top priority of the project leader (no self-serving BMs allowed) is to buffer and shield the engineering team from external demands and distractions. Other lower priority OPOS tasks are to periodically “sample the value stream”, assess the project state, steer progress, and provide helpful actions to the multi-disciplined product development team. What do you think? Good, bad, fugly? Missing something?

Plumbers And Electricians

Putting an expert in one technical domain “in charge” of a big risky project that requires deep expertise in a different technical domain is like putting plumbers in charge of a team of electricians on a massive skyscraper electrical job – or vice versa. Putting a generic PMI or MBA trained STSJ in charge of a complex, mixed-discipline engineering product development project is even worse. When they don’t know anything about WTF they’re managing, how can innocently ignorant project “leaders”:

- Understand what needs to be done

- Know what information artifacts need to be generated along the way for downstream project participants

- Estimate and plan the work with reasonable accuracy

- Correctly interpret ongoing status so that they have an idea where the project is located in terms of cost, schedule, and quality

- Make effective mid-course corrections when things go awry and ambiguity reigns

- See through any bullshit used to camouflage shoddy work or to cut corners

- Stop small but important problems from falling through the cracks and growing into ominously huge obstacles

- Perform the “verify” part of “trust but verify”.

Well, they can’t – no matter how many impressive and glossy process templates are stored in the standard corpo database to guide them. Thus, these poor dudes spend most of their time spinning out massive, impressive Excel spreadsheets and Microsoft Project schedules so fine grained that they’re obsolete before they’re showcased to the equally clueless suits upstairs. But hey, everything looks good on the surface for a long stretch of time. Uh, until the fit hits the shan.

Charles (on the) Rocks

Charles Bukowski is on my long list of “to read” authors. What drew me to him is this awesome quote:

There was nothing really as glorious as a good beer shit—I mean after drinking twenty or twenty-five beers the night before. The odor of a beer shit like that spread all around and stayed for a good hour-and-a-half. It made you realize that you were really alive.

If you don’t bust a gut at that quote and this is your first foray into this ridiculous time wasting blog, please don’t ever come back here. No offense, but your time is too precious and there’s so much to explore/discover in this wonderful world that you shouldn’t be wasting it snorting my verbal diarrhea. Dude, wipe your nose cuz there’s a dingleberry hanging from it.

Some people never go crazy. What truly horrible lives they must live. – Charles Bukowski

Maker’s Schedule, Manager’s Schedule

I read this Paul Graham essay, Maker’s Schedule, Manager’s Schedule, quite a while ago, and I’ve been wanting to blog about it ever since. Recently, it was referred to me by others at least twice, so the time has come to add my 2 cents.

In his aptly titled essay, Paul says this about a manager’s schedule:

The manager’s schedule is for bosses. It’s embodied in the traditional appointment book, with each day cut into one hour intervals. You can block off several hours for a single task if you need to, but by default you change what you’re doing every hour.

Regarding the maker’s schedule, he writes:

But there’s another way of using time that’s common among people who make things, like programmers and writers. They generally prefer to use time in units of half a day at least. You can’t write or program well in units of an hour. That’s barely enough time to get started. When you’re operating on the maker’s schedule, meetings are a disaster.

When managers graduate from being makers and morph into bozos (of which there are legions), they develop severe cases of ADHD and, incredibly, they “forget” the implications of the maker-manager schedule difference. For these self-important dudes, it’s status and reporting meetings galore so that they can “stay on top” of things and lead their teams to victory. While telling their people that they need to become more efficient and productive to stay competitive, they shite in their own beds by constantly interrupting the makers to monitor status and determine schedule compliance. The sad thing is that when the unreasonable schedules they pull out of their asses inevitably slip, the only technique they know how to employ to get back on track is the ratcheting up of pressure to “meet schedule”. They’re bozos, so how can anyone expect anything different – like asking how they could personally help out or what obstacles they can help the makers overcome. Bummer.

Byzantine Labyrinth

During the birth and growth of dysfunctional CCHs, here is what happens:

- silos form and harden,

- a bewildering array of narrow specialist roles gets continuously defined,

- layers of self-important and entitled RAPPERS emerge, and

- a byzantine labyrinth of processes and procedures is created by those in charge.

This not only happens under the “watchful eyes” of the head shed, but incredibly, those inside the shed-of-privilege are the chief proponents and instigators of all the added crap that slows down and frustrates the DICforce. Peter Drucker nailed it when he opined:

Ninety percent of what we call ‘management’ consists of making it difficult for people to get things done – Peter Drucker

On the other hand, T.S. Eliot also pegged it with:

“Half of the harm that is done in this world is due to people who want to feel important. They do not mean to do harm… They are absorbed in the endless struggle to think well of themselves.” – T. S. Eliot

Scalability: The Next Buzzword

Since I’m currently in the process of trying to help a team re-design an existing software-intensive system to be more scalable, the title of this book caught my eye:

It’s just my opinion (and you know what “they” say about opinions) but stay away from this stinker. All the authors want to do is to jump on the latest buzzword of “scalability” and make tons of money without helping anybody but themselves.

Check this self-serving advertisement out:

“The Art of Scalability addresses these issues by arming executives, engineering managers, engineers, and architects with models and approaches to scale their technology architectures, fix their processes, and structure their organizations to keep “scaling” problems from affecting their business and to fix these problems rapidly should they occur.”

Notice how they try to cover the entire hierarchical corpo gamut from bozo managers at the peak of the pyramid down to the dweebs in the cellar to maximize the return on their investment. Blech and hawk-tooey.

BTW, I actually did waste my time by reading the first BS chapter. The rest of the book may be a Godsend, but I’m not about to invest my time trying to figure out if it is.
