Archive

Construction Sequence

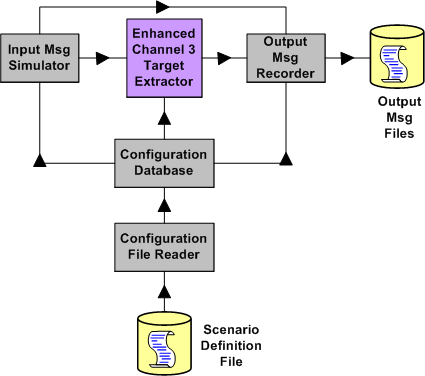

The figure below depicts a SysML model (with a static, structural bent) of a small but non-trivial software program that I recently finished writing and testing. It’s a simulator harness that’s used to explore/test/improve candidate “Target Extractor” algorithms for inclusion into a revenue-generating product.

On program launch, a user-created scenario definition file is read, parsed, error-checked, and then stored in an in-memory database. Subsequent to populating the database, the program automatically:

- Generates a simulated stream of target message fragments characterized by the scenario definition that was pre-specified by the user

- Injects the message stream into the target extractor algorithm under test

- Processes the message stream in accordance with the plot extraction state machine algorithm specification

- Records the target extractor output response message stream to disk
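The launch sequence above can be sketched in a few lines of Python. This is only a toy illustration, not the actual harness: the JSON scenario format, the `Scenario` fields, and the `ToyExtractor` class are all assumptions made up for the example, and the output is collected in a list where the real program records it to disk.

```python
import json
import random
from dataclasses import dataclass


@dataclass
class Scenario:
    """Hypothetical scenario definition: target count and fragments per target."""
    num_targets: int
    fragments_per_target: int
    seed: int = 0


def parse_scenario(text: str) -> Scenario:
    """Read, parse, and error-check a scenario definition (JSON is an assumption)."""
    scenario = Scenario(**json.loads(text))
    if scenario.num_targets < 1 or scenario.fragments_per_target < 1:
        raise ValueError("scenario needs at least one target and one fragment")
    return scenario


def generate_fragments(scenario: Scenario):
    """Generate a simulated, shuffled stream of (target_id, fragment_index) pairs."""
    stream = [(t, f)
              for t in range(scenario.num_targets)
              for f in range(scenario.fragments_per_target)]
    random.Random(scenario.seed).shuffle(stream)
    return stream


class ToyExtractor:
    """Stand-in for the algorithm under test: reports a target once all of its
    fragments have arrived."""

    def __init__(self, fragments_per_target: int):
        self.needed = fragments_per_target
        self.seen = {}

    def process(self, fragment):
        target_id, _ = fragment
        self.seen[target_id] = self.seen.get(target_id, 0) + 1
        if self.seen[target_id] == self.needed:
            return {"target": target_id}
        return None


def run_harness(scenario_text: str):
    """Parse the scenario, inject the stream, and collect the output messages.
    A real harness would write the outputs to disk instead of returning them."""
    scenario = parse_scenario(scenario_text)
    extractor = ToyExtractor(scenario.fragments_per_target)
    outputs = []
    for fragment in generate_fragments(scenario):
        message = extractor.process(fragment)
        if message is not None:
            outputs.append(message)
    return outputs
```

Calling `run_harness('{"num_targets": 3, "fragments_per_target": 4}')` returns one output message per target, in whatever order the shuffled stream happens to complete them.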

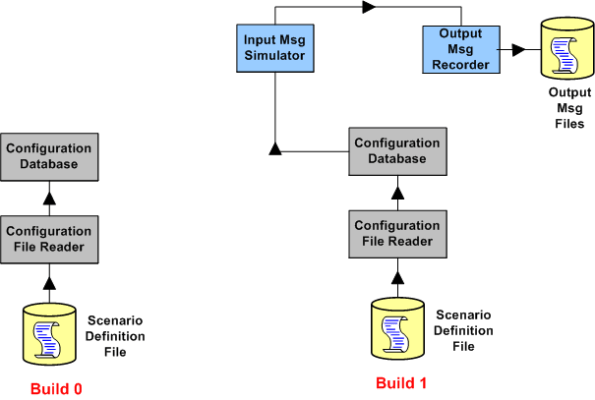

The figure above is a model that represents the finished product – which ended up being the third build in a series of incremental builds. The figure below shows the functionality in the first two builds of the trio.

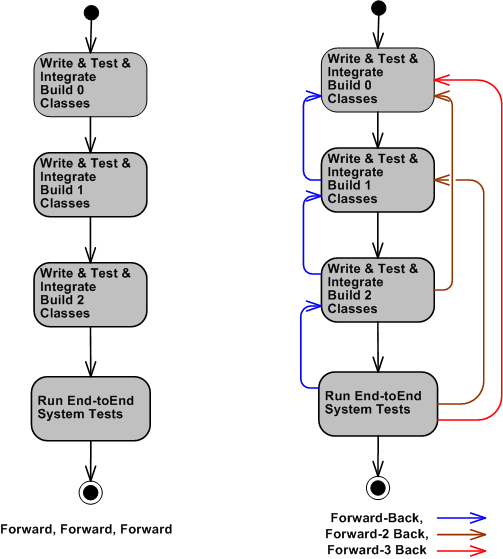

Even though the construction process that I used appears to have progressed in a nice and tidy linear and sequential flow (like the left portion of the figure below depicts), it naturally didn’t work out that way. The workflow progressed in accordance with the right portion of the figure below, with lots of high frequency single-step feedback loops and (thankfully) only a few two-step and three-step feedback loops.

In a perfect world, the software construction process proceeds in a linear and sequential fashion. In the messy real world, mistakes and errors are always made, and stepping backward is the only way that these mistakes and errors can be corrected.

In standard textbook CCH orgs where an endless sea of linear and sequential thinking BMs (Bozo Managers) rule the roost, going backwards is “unacceptable” because “you should have done it right the first time”. In these types of irrational macho cultures, fear of punishment reigns supreme – and nobody dares to discuss it. Fearful development teams either don’t go backwards to correct mistakes, or they try to fix mistakes covertly below the corpo radar. What type of org do you work for?

Shallmeisters, Get Over It.

If you pick up any reference article or book on requirements engineering, I think you’ll find that most “experts” in the field don’t obsess over “shalls”. They know that there’s much more to communicating requirements than writing down tidy, useless, fragmented, one line “shall” statements. If you don’t believe me, then come out of your warm little cocoon and explore for yourself. Here are just a few references:

http://www.amazon.com/Process-System-Architecture-Requirements-Engineering/dp/0932633412/ref=sr_1_3?ie=UTF8&s=books&qid=1257672507&sr=1-3

With the growing complexity of software-intensive systems that need to be developed for an org to remain sustainable and relevant, the so-called venerable “shall” is becoming more and more dinosaurish. Obviously, there will always be a place for “shalls” in the development process, but only at the most superficial and highest level of “requirements specification”, which is virtually useless to the hardware, software, network, and test engineers who have to build the system (while you watch from the sidelines and “wait” until the wrong monstrosity gets built so you can look good criticizing it for being wrong).

So, what are some alternatives to pulling useless one-dimensional “shalls” out of your arse? How about mixing and matching some communication tools from this diversified, two-dimensional menu:

- UML Class diagrams

- UML Use Case diagrams

- UML Deployment diagrams

- UML Activity diagrams

- UML State Machine diagrams

- UML Sequence diagrams

- Use Case Descriptions

- User Stories

- IDEF0 diagrams

- Data Flow Diagrams

- Control Flow Diagrams

- Entity-Relationship diagrams

- SysML Block Definition diagrams

- SysML Internal Block diagrams

- SysML Requirements diagrams

- SysML Parametric diagrams

Get over it, add a second dimension to your view, move into this century, and learn something new. If not for your company, then for yourself. As the saying goes, “what worked well in the past might not work well in the future”.

I’m Finished

I just finished (100% of course <-LOL!) my latest software development project. The purpose of this post is to describe what I had to do, what outputs I produced during the effort, and to obtain your feedback – good or bad.

The figure below shows a simple high-level design view of an existing real-time, software-intensive, revenue-generating product that comprises hundreds of thousands of lines of source code. Due to evolving customer requirements, a major redesign and enhancement of the application layer functionality that resides in the Channel 3 Target Extractor is required.

The figure below shows the high level static structure of the “Enhanced Channel 3 Target Extractor” test harness that was designed and developed to test and verify that the enhanced channel 3 target extractor works correctly. Note that the number of high level conceptual test infrastructure classes is 4 compared to the lone, single product class whose functionality will be migrated into the product code base.

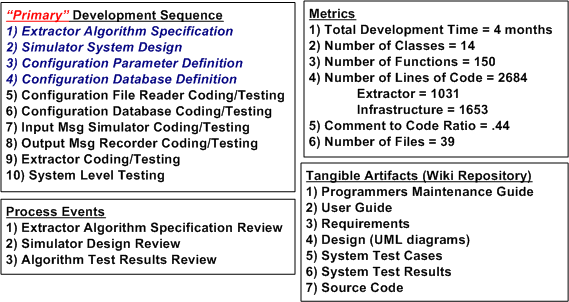

The figure below shows a post-project summary in terms of: the development process I used, the process reviews I held, the metrics I collected, and the output artifacts that I produced. Summarizing my project performance via the simple-minded metric that old-school managers love to use, lines of code per day, yields the paltry number of 22.

Since my average “velocity” was a measly 22 lines of code per day, do you think I underperformed on this project? What should that number be? Do you summarize your software development projects similar to this? Do you just produce source code and unit tests as your tangible output? Do you have any idea what your performance was on the last project you completed? What do you think I did wrong? Should I have just produced source code as my output and none of the other 6 “document” outputs? Should I have skipped steps 1 through 4 in my development process because they are non-agile “documentation” steps? Do you think I followed a pure waterfall process? What say you?

Orchestrated Reviews

If you think your design is perfect, it means you haven’t shown it to anyone yet – Harry Hillaker

Open, frequent, and well-engineered reviews and demonstrations are great ways to uncover and fix mistakes and errors before they grow into downstream money and time sucking abominations. In spite of this, some project cultures innocently but surely thwart effective reviews.

Out of fear of criticism, designers in dysfunctional cultures take precautions against “looking bad”. Camouflage is generated in the form of too much or too little detail. Subject matter experts are left off the list of reviewers in order to uphold a false image of infallibility.

Another survival tactic is to pre-load the reviewer list with friends and cream puffs who won’t point out errors and ambiguities for fear of losing their status as nice people and good team players. In really fearful cultures, tough reviewers who consistently point out nasty and potential budget-busting errors are tarred and feathered so that they never provide substantive input again. In the worst cases, reviews and demonstrations aren’t even performed at all. Bummer.

What The Hell’s A Unit?

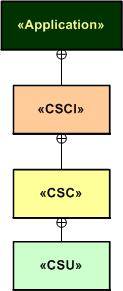

- CSCI = Computer Software Configuration Item

- CSC = Computer Software Component

- CSU = Computer Software Unit

In my industry (aerospace and defense), we use the abstract, programming-language-independent terms CSCI, CSC, and CSU as a means for organizing and conversing about software architectures and designs. The terms go way back, and I think (but am not sure) that someone in the Department Of Defense originally conjured them up.

The SysML diagram below models the semantic relationships between these “formal” terms. An application “contains” one or more CSCIs, each of which contains one or more CSCs, each of which contains one or more CSUs. If we wanted to go one level higher, we could say that a “system” contains one or more Applications.

In my experience, the CSCI-CSC-CSU tree is almost never defined and recorded for downstream reference at project start. Nor is it evolved or built up as the project progresses. The lack of explicit definition of the CSCs, and especially the CSUs, has often been a continuous source of ambiguity, confusion, and miscommunication within and between product development teams.

“The biggest problem in communication is the illusion that it has taken place.” – George Bernard Shaw.

A consequence of not classifying an application down to the CSU level is the classic “what the hell’s a unit?” problem. If your system is defined as just a collection of CSCIs comprised of hundreds of thousands of lines of source code and the identification of CSCs and CSUs is left to chance, then a whole CSCI can be literally considered a “unit” and you only have one unit test per CSCI to run (LOL!)

In preparation for an idea that follows, check out the language-specific taxonomies that I made up (I like to make stuff up so people can rip it to shreds) for complex C++ and Java applications below. If your app is comprised of a single, simple process without any threads or tasks (like they teach in school and intro-programming books), mentally remove the process and thread levels from the diagram. Then just plop the Application level right on top of the C++ namespace and/or the Java package levels.

To solve, or at least ameliorate the “what the hell’s a unit?” problem, I gently propose the consideration of the following concrete-to-abstract mappings for programs written in C++ and Java. In both languages, each process in an application “is a” CSCI and each thread within a process “is a” CSC. A CSU “is a” namespace (in C++) or a package (in Java).
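To make the proposed mapping concrete, here is a minimal sketch of the containment tree as plain Python data classes. The class names mirror the CSCI/CSC/CSU terms; everything else (the `unit_names` helper and the example process, thread, and namespace names) is made up for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CSU:
    """Computer Software Unit: a C++ namespace or a Java package in this mapping."""
    name: str


@dataclass
class CSC:
    """Computer Software Component: a thread within a process in this mapping."""
    name: str
    units: List[CSU] = field(default_factory=list)


@dataclass
class CSCI:
    """Computer Software Configuration Item: a process in this mapping."""
    name: str
    components: List[CSC] = field(default_factory=list)


@dataclass
class Application:
    """An application contains one or more CSCIs."""
    name: str
    items: List[CSCI] = field(default_factory=list)

    def unit_names(self) -> List[str]:
        """Flatten the tree into 'CSCI/CSC/CSU' paths, one per unit."""
        return [f"{item.name}/{comp.name}/{unit.name}"
                for item in self.items
                for comp in item.components
                for unit in comp.units]


# Hypothetical example: one process, two threads, three namespaces.
app = Application("sensor_app", [
    CSCI("extractor_process", [
        CSC("rx_thread", [CSU("net"), CSU("decode")]),
        CSC("track_thread", [CSU("tracking")]),
    ]),
])
```

With a tree like this written down at project start, `app.unit_names()` gives every team the same answer to "what are the units?": `extractor_process/rx_thread/net`, `extractor_process/rx_thread/decode`, and `extractor_process/track_thread/tracking`.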

I think that adopting a map such as this to use as a standard communication tool would lead to fewer mis-communications between and among development team members and, more importantly, between developer orgs and customer orgs that require design artifacts to employ the CSCI/CSC/CSU terminology.

As just stated, the BD00 proposal maps a C++ namespace or a Java package into the lowest level element of abstract organization – the CSU. If that level of granularity is too coarse, then a class, or even a class member function (method in Java), can be designated as a CSU (as shown below). The point is that each company’s software development organization should pick one definition and use it consistently on all their projects. Then everyone would have a chance of speaking a common language and no one would be asking, “what the hell’s a freakin’ unit?”.

So, “What the hell’s a unit?” in your org? A member function? A class? A namespace? A thread? A process? An application? A system?

Useless Cases

Despite the blasphemous title of this blarticle, I think that “use cases” are a terrific tool for capturing a system’s functional requirements out of the ether, for the right class of applications. Nevertheless, I agree with requirements “expert” Karl Wiegers, who states the following in “More About Software Requirements: Thorny Issues And Practical Advice”:

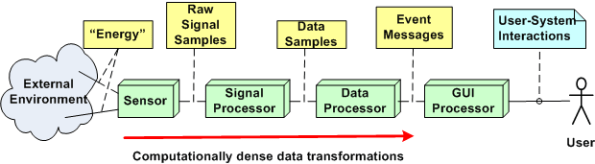

However, use cases are less valuable for projects involving data warehouses, batch processes, hardware products with embedded control software, and computationally intensive applications. In these sorts of systems, the deep complexity doesn’t lie in the user-system interactions. It might be worthwhile to identify use cases for such a product, but use case analysis will fall short as a technique for defining all the system’s behavior.

I help to define, specify, design, code, and test embedded (but relatively “big”) software-intensive sensor systems for the people-transportation industry. The figure below shows a generic, pseudo-UML diagram of one of these systems. Every component in the string is software-intensive. In this class of systems, like Karl says, “the deep complexity doesn’t lie in the user-system interactions”. As you can see, there’s a lot of special and magical “stuff” going on behind the GUI that the user doesn’t know about, and doesn’t care to know about. He/she just cares that the objects he/she wants to monitor show up on the screen and that the surveillance picture dutifully provided by the system is an accurate representation of what’s going on in the real world outside.

A list of typical functions for a product in this class may look like this:

- Display targets

- Configure system

- Monitor system operation

- Tag target

- Control system operation

- Perform RF signal filtering

- Perform signal demodulation

- Perform signal detection

- Perform false signal (e.g. noise) rejection

- Perform bit detection, extraction, and message generation

- Perform signal attribute (e.g. position, velocity) estimation

- Perform attribute tracking

Notice that only the top five functions involve direct user interaction with the product. Thus, I think that employing use cases to capture the functions required to provide those capabilities is a good idea. All the dirty and nasty “perform” stuff requires vertical, deep mathematical expertise and specification by sensor domain experts (some of whom, being “expert specialists”, think they are Gods). Thus, I think that the classical “old and unglamorous” Software Requirements Specification (SRS) method of defining the inputs/processing/output sequences (via UML activity diagrams and state machine diagrams) blows written use case descriptions out of the water in terms of Return On Investment (ROI) and value transferred to software developers.

Clueless Bozo Managers (BMs) and senior wannabe-a-BM developers who jump on the “use cases for everything” bandwagon (but may have never written a single use case description themselves) waste company time and money trying to bully “others” into ramming a square peg into a round hole. But they look hip, on the ball, and up to date doing it. And of course, they call it leadership.

Scaleability

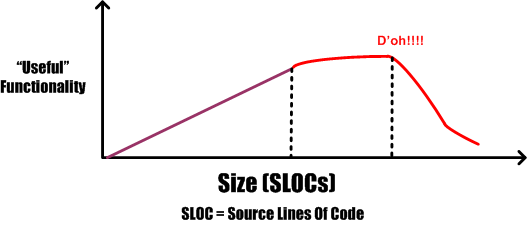

The other day, a friend suggested plotting “functionality versus size” as a potentially meaningful and actionable measure of software development process prowess. The figure below is an unscientific attempt to generically expand on his idea.

Assume that the graph represents the efficiency of three different and unknown companies (note: since I don’t know squat and I am known for “making stuff up”, take the implications of the graph with a grain of salt). Because it’s well known by industry experts that the complexity of a software-intensive product increases at a much faster rate than size, one would expect the “law of diminishing returns” to kick in at some point. Now, assume that the inflection point where the law snaps into action is represented by the intersection of the three traces in the graph. The red company’s performance clearly shows the deterioration in efficiency due to the law kicking in. However, the other two companies seem to be defying the law.

How can a supposedly natural law, which is unsentimental and totally indifferent to those under its influence, be violated? In a word, it’s “scaleability”. The purple and green companies have developed the practices, skills, and abilities to continuously improve their software development processes in order to keep up with the difficulty of creating larger and more complex products. Unlike the red company, their processes are minimal and flexible so that they can be easily changed as bigger and bigger products are built.

Either quantitatively or qualitatively, all growing companies that employ unscaleable development processes eventually detect that they’ve crossed the inflection point – after the fact. Most of these post-crossing discoverers panic and do the exact opposite of what they need to do to make their processes scaleable. They pile on more practices, procedures, forms-for-approval, status meetings, and oversight (a.k.a. managers) in a misguided attempt to reverse deteriorating performance. These ironic “process improvement” actions solidify and instill rigidity into the process. They handcuff and demoralize development teams at best, and trigger a second inflection point at worst:

More meetings plus more documentation plus more management does not equal more success. – NASA SEL

Is your process scaleable? If so, what specific attributes make it scaleable? If not, are the results that you’re getting crying out for scaleability?

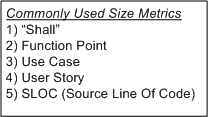

Percent Complete

In order to communicate progress to someone who requires a quantitative number attached to it, some sort of consistent metric of accomplishment is needed. The table below lists some of the commonly used size metrics in the software development world.

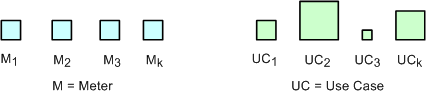

All of these metrics suffer to some extent from a “consistency” problem. The problem (as exemplified in the figure below) is that, unlike a standard metric such as the “meter”, the size and meaning of each unit is different from unit to unit within an application, and across applications. Out of all the metrics in the list, the definition of what comprises a “Function Point” unit seems to be the most rigorous, but it still suffers from a second, “translation” problem. The translation problem manifests when an analyst attempts to convert messy and ambiguous verbal/written user needs into neat and tidy requirement metrics using one of the units in the list.

Nevertheless, numerically trained MBA- and PMI-certified managers and their higher-up executive bosses still obsessively cling to progress reports based on these illusory metrics. These STSJs (Status Takers and Schedule Jockeys) love to waste corpo time passing around status reports built on quicksand like the “percent done” example below.

The problems with using graphs like this to “direct” a project are legion. First, it is assumed that the TNFP is known with high accuracy at t=0 and, more erroneously, that its value stays constant throughout the duration. A second problem with this “best practice” is that many, if not all, non-trivial software development projects do not progress linearly with the passage of time. The green trace in the graph is an example of a non-linearly progressing project.

Since most managers are sequential, mechanistic, left-brain-trained thinkers, they falsely conclude that all projects progress linearly. These bozelteens also operate under the meta-assumption that no initial assumptions are violated during project execution (regardless of what items they initially deposited in their “risk register” at t=0). They mistakenly arrive at conclusions like: “if it took you two weeks to get to 50% done, you will be expected to be done in two more weeks”. Bummer.
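The linear fallacy is easy to show with a little arithmetic. The sketch below is illustrative only: the function names are invented and the week-by-week progress numbers are made-up data for a hypothetical project whose progress slows down after the halfway mark.

```python
def naive_finish_estimate(elapsed_weeks: float, fraction_done: float) -> float:
    """Linear extrapolation: total duration = elapsed time / fraction done."""
    if not 0 < fraction_done <= 1:
        raise ValueError("fraction_done must be in (0, 1]")
    return elapsed_weeks / fraction_done


def weeks_to_reach(progress_by_week, target=1.0):
    """Return the first week index at which cumulative progress reaches target,
    or None if it never does."""
    for week, fraction in enumerate(progress_by_week):
        if fraction >= target:
            return week
    return None


# Made-up cumulative "percent done" per week: fast start, long tail.
progress = [0.0, 0.3, 0.5, 0.6, 0.68, 0.75, 0.82, 0.9, 0.96, 1.0]

predicted = naive_finish_estimate(elapsed_weeks=2, fraction_done=progress[2])
actual = weeks_to_reach(progress)
```

For this invented data set, the week-2 status report predicts a 4-week project, while the project actually reaches 100% in week 9.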

Even after trashing the “percent complete” earned-value-management method in the previous paragraphs, I think there is a chance to acquire a long term benefit by tracking progress this way. The benefit can accrue IF AND ONLY IF the method is not taken too seriously and it’s not used to impose undue stress upon the software creators and builders who are trying their best to balance time, cost, and quality. Performing the “percent complete” method over a bunch of projects and averaging the results can yield decent, but never 100% accurate, metrics that can be used to more effectively estimate future project performance. What do you think?
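One way to read "averaging the results" is as a calibration factor: for each finished project, compare the actual duration with a mid-project linear estimate (elapsed time divided by fraction done), then average those ratios and apply the average to the next estimate. The sketch below assumes that interpretation; the function names and the three past projects are invented for illustration.

```python
from statistics import mean


def calibration_factor(history):
    """Average ratio of actual duration to the linear mid-project estimate.
    history is a list of (estimated_weeks, actual_weeks) pairs."""
    return mean(actual / estimated for estimated, actual in history)


def calibrated_estimate(estimated_weeks, history):
    """Scale a new linear estimate by the historical average overrun."""
    return estimated_weeks * calibration_factor(history)


# Invented history: (linear estimate in weeks, actual duration in weeks).
past_projects = [(4, 9), (10, 14), (6, 9)]

factor = calibration_factor(past_projects)
next_estimate = calibrated_estimate(8, past_projects)
```

With these made-up numbers the factor comes out a bit above 1.7, so an 8-week linear estimate becomes roughly 13.7 weeks. It is still a guess, just one informed by how past guesses turned out.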

Pay As You Go

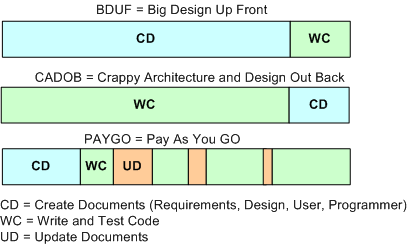

An age old and recurring source of contention in software-intensive system development is the issue of deciding how much time to spend coding and how much time to spend writing documentation artifacts. The figure below shows three patterns of development: BDUF, CADOB, and PAYGO.

Prior to the agile “revolution”, most orgs spent a lot of time generating software documentation during the front end of a project. The thinking was that if you diligently mapped out and physically recorded your design beforehand, the subsequent coding, integration, and test phases would proceed smoothly and without a hitch. Bzzzt! BDUF (bee-duff) didn’t work out so well. Religiously following the BDUF method (a.k.a. the waterfall method) often led to massive schedule and cost overruns along with crappy and bug-infested software. Bummer.

In search of higher quality and lower cost results, a well-meaning group of experts conceived of the idea of “agile” software development. These agile proponents, and the legions of programmers soon to follow, pointed to the publicly visible crappy BDUF results and started evangelizing minimal documentation up front. However, since the vast majority of programmers aren’t good at writing anything but code, these legions of programmers took the agile advice to the extreme, turning the dials to “10”, as Kent Beck would say. Citing the agile luminaries, massive numbers of programmers recoiled at any request for up front documentation. They happily started coding away, often leading to an unmaintainable shish-CADOB (Crappy Architecture and Design Out Back). Bozo managers, exclusively measured on schedule and cost performance by equally unenlightened corpocratic executives, jumped on this new silver bullet train. Bzzzt! Extreme agility hasn’t worked very well either. The extremist wing of the agilista party has in effect regressed back to the dark ages of hack and fix programming, hatching impressive disasters on par with the BDUF crews. In extreme agile projects where documentation is still required by customers, a set of hastily prepared, incorrect, and unusable design/user/maintenance artifacts (a.k.a. camouflage) is often produced at the tail end of the project. Boo hoo, and WAAAAGH!

As the previously presented figure illustrates, a third, hybrid pattern of software-intensive system development can be called PAYGO. In the PAYGO method, the coding/test and artifact-creation activities are interlaced and closely coupled throughout the development process. If done correctly, progressively less project time is spent “updating” the document set and more time is spent coding, integrating, and testing. More importantly, the code and documentation are diligently kept in synch with each other.

An important key to success in the PAYGO method is to keep the content of the document artifact set at a high enough level of abstraction “above” the source code so that it doesn’t need to be annoyingly changed with every little code change. A second key enabler to PAYGO success is the ability and (more importantly) the will to write usable technical documentation. Sadly, because the barriers to adoption are so high, I can’t imagine the PAYGO method being embraced now or in the future. Personally, I try to do it covertly, under the radar. But hey, don’t listen to me because I don’t have any credentials, I like to make stuff up, and I’ve been told by infallible and important people that I’m not fit to lead 🙂

The only way to learn how to write is by wrote.

Structure And The “ilities”

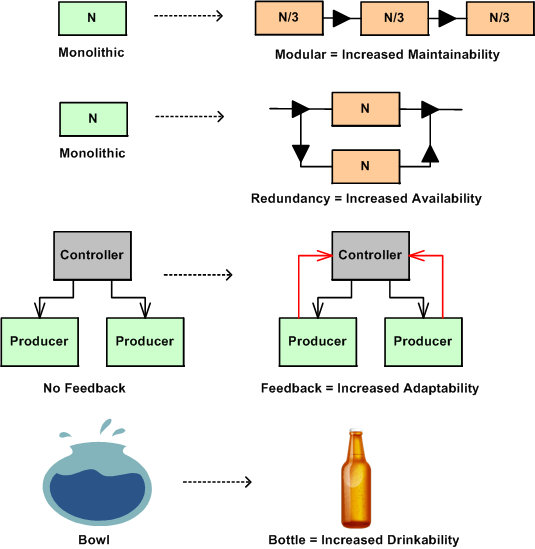

In nature, structure is an enabler or disabler of functional behavior. No hands – no grasping, no legs – no walking, no lungs – no living. Adding new functional components to a system enables new behavior and subtracting components disables behavior. Changing the arrangement of an existing system’s components and how they interconnect can also trade off qualities of behavior, affectionately called the “ilities”. Thus, changes in structure effect changes in behavior.

The figure below shows a few examples of a change to an “ility” due to a change in structure. Given the structure on the left, the refactored structure on the right leads to an increase in the “ility” listed under the new structure. However, in moving from left to right, a trade-off has been made for the gain in the desired “ility”. For the monolithic->modular case, a decrease in end-to-end response-ability due to added box-to-box delay has been traded off. For the monolithic->redundant case, a decrease in buyability due to the added purchase cost of the duplicate component has been introduced. For the no feedback->feedback case, an increase in complexity has been effected due to the added interfaces. For the bowl->bottle example, a decrease in fill-ability has occurred because of the decreased diameter of the fill interface port.

The plea of this story is: “to increase your aware-ability of the law of unintended consequences”. What you don’t know CAN hurt you. When you are bound and determined to institute what you think is a “can’t lose” change to a system that you own and/or control, make an effort to discover and uncover the ilities that will be sacrificed for those that you are attempting to instill in the system. This is especially true for socio-technical systems (do you know of any system that isn’t a socio-technical system?) where the influence on system behavior by the technical components is always dwarfed by the influence of the components that are comprised of groups of diverse individuals.