Archive

Plan, Plan, Plan…. Blah, Blah, Blah.

Preface

People followed Martin Luther King of their own free will because he had a dream, not a plan. – Simon Sinek

On the other hand, know-it-all business school trained weenies will profess (in a patriarchal and condescending tone):

Failing to plan is planning to fail – Unknown

Irrelevant Intro

When I started this relatively loooong blarticle, I had no freakin’ idea where it would go but I followed where it led me. Led by the unknown into the unknown – it was the keystone cops leading the three (nyuk nyuk nyuk) stooges (whoo, whoo, whoo, boink, plunk, pssst!).

As usual, I didn’t have a meticulously well formed plan for this time-waster ( <- for you, heh, heh) in my fallible cranium and I made many mid-course corrections as I crab-walked like a drunken sailor toward the finish line. Hell, I didn’t even know where the freakin’ finish line was. I stopped iteratively writing/drawing when I subjectively concluded that….. tada, “I’ve arrived!”. Such is the nature of exploration, discovery, and exposition, no? If you disagree, why?

Pristine Profile – Full Steam Ahead!

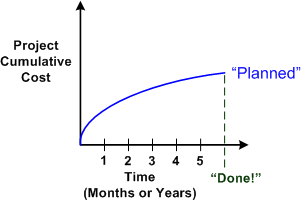

The figure below shows the shape of a pristine, planned, cost vs time profile at “project start” for a long term, resource-intensive, project to do something “big and grand for the world”. Someone or some group has consulted their crystal ball and concocted a cost vs schedule curve based on vague, subjective criteria, and bolstered by a set of ridiculously optimistic assumptions and a bogus risk register “required for signoff”. To cover up the impending calamity, the schedule has been enunciated to the troops as “aggressive”. BTW, have you ever heard of a non-aggressively scheduled big project?

It’s interesting to note that the dudes/dudettes who “craft” cost profiles for big quagmire projects are never the ones who’ll roll up their sleeves, get dirty, and actually do the downstream work. Even if the esteemed planners are smart enough to actually humbly ask for estimates from those who will do the work, they automatically chop them down to size based on whim, fancy, and political correctness. <- LOL!

Typical Profile – Bummer

The figure below shows (in hindsight) the actual vs planned cost curve for a hypothetical “bummer” project. The project started out overestimated (yes, I actually said overestimated), and then, as the cost encroached into uncomfortable territory, the plan became, uh, optimistic. Since it was underestimated for “political reasons” (what other reason is there?), but no one had a clue as to whether the plan was sane, no acknowledgement of the mistake was made during the entire execution and no replanning was done. The loss accumulated and accumulated until end game – whatever that means.

Crisis Profile – We’re Vucked!

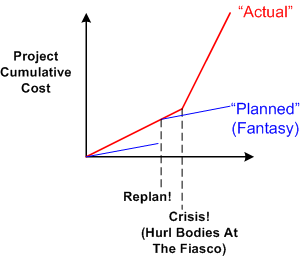

The figure below shows (in hindsight) the actual vs planned cost curve for a hypothetical “vucked” project. Cost-wise, the project started out OK, but because it was discovered that technical progress wasn’t really, uh, technical progress, bodies were thrown onto the bonfire. Again, the financial loss accumulated and accumulated until end game – whatever that means.

Replan Profile – Fantasy Revisited

The figure below shows (in hindsight) the actual vs planned cost curve for a hypothetical “fantasy revisited” project. Cost-wise, the project started out OK (snore, snore, Zzzzz), but because it was discovered that technical progress wasn’t really, uh, technical progress, bodies were thrown on the bonfire. But this time, someone with a conscience actually fessed up (yeah, some people are like that, believe it or not) and the project was replanned in real-time, during execution. Alas, this is not Hollywood and the financial loss accumulated and accumulated until end game – whatever that means.

Iterative, Incremental Profile – No Freakin’ Way

Alright, alright. As everyone knows, and this especially includes you, it’s easy to rag about everyone and everything – “everything sux and everyone’s an a-hole; blah, blah, blah…. aargh!”. What about an alternative, Mr. Smarty Pants? Even though I have no idea if it’ll work, try this one on for size (and it’s definitely not original).

The figure below shows (in hindsight) the actual vs planned cost curve for a hypothetical “no freakin’ way” project. But wait a minute, you cry. There’s only one curve! Shouldn’t there be two curves you freakin’ bozeltine? There’s only one because the actual IS the planned. This can be the case because if the planning increments are small enough, they can almost equate to the actual expenditures. At each release and re-evaluation point, the real thing, which is the product or service that is being provided (product and service are unknown concepts to bureaucrats and executive fatheads), is both objectively and subjectively evaluated by the people who will be using the damn thing in the future. If they say “This thing sux!”, it’s fini, kaput, end game before scheduled end game. If they say “Good job so far! I can envision this thing helping me do my job better with a few tweaks and these added features”, then it’s onward. The chances are high that with this type of rapid and dynamic learning SCRBF system in place, projects that should be killed will be killed, and projects that should continue will continue. Agree, or disagree? What say you?

This hypothetical project is called “No Freakin’ Way” because there is “no freakin’ way” that the system of co-dependent failure designed and kept in place by hierarchs in both contractor and contractee institutions will ever embrace it. What do you think?

Monolithic Redesign

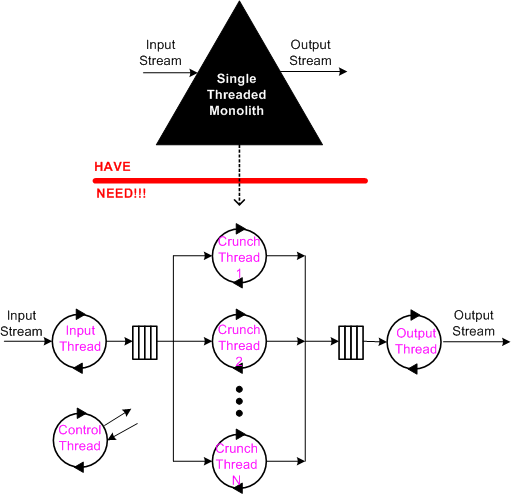

On my latest assignment, I have to reverse engineer and understand a large monolithic block of computationally intense, single-threaded product code that’s been feature-enhanced and bug-fixed many times, by many people, over many years (sound familiar?). In addition to the piled on new features, a boatload of nested if-else structures has been injected into the multi-K SLOC code base over the years to handle special and weird cases observed and reported in from the field.

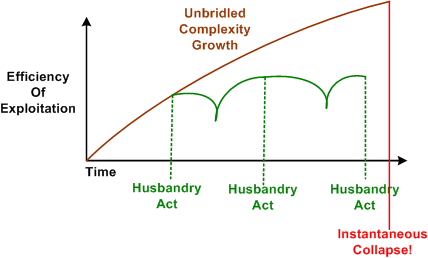

As you can guess, it’s no one’s fault that the code is a tangled mess. It’s because the second law of thermodynamics has been in action doing its dirty work destroying the system without being periodically harnessed by scheduled acts of husbandry. A handful of maintenance developers imbued with a sense of personal responsibility and ownership have tried their best to refactor the beast into submission; under the radar.

Because the code is CPU intensive and single threaded, it’s not scalable to higher input data rates and its viability has hit “the wall”. Thus, besides refactoring the existing functionality into a more maintainable design, I have to simultaneously morph the mess into a multi-threaded structure that can transparently leverage the increased CPU power supplied by multicore hardware.

Note that redesigning for distributed flexibility and higher throughput doesn’t come for free. Essential complexity is added and additional latency is incurred because each input sample must traverse the 3 thread pipeline.
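To make the trade-off concrete, here is a minimal sketch of a 3 thread pipeline in C++17. It is not the product code; the `Channel` class, the `run_pipeline` function, and the three arithmetic stage functions are all hypothetical placeholders. Each sample has to cross two queue hand-offs before it comes out the other end, which is where the extra latency and the extra (essential) complexity come from.

```cpp
#include <cassert>
#include <condition_variable>
#include <mutex>
#include <optional>
#include <queue>
#include <thread>
#include <utility>
#include <vector>

// Hypothetical blocking queue that connects two pipeline stages.
template <typename T>
class Channel {
public:
    void push(T value) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            items_.push(std::move(value));
        }
        ready_.notify_one();
    }

    // Signals that no more items will arrive.
    void close() {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            closed_ = true;
        }
        ready_.notify_all();
    }

    // Blocks until an item is available; returns nullopt once closed and drained.
    std::optional<T> pop() {
        std::unique_lock<std::mutex> lock(mutex_);
        ready_.wait(lock, [this] { return !items_.empty() || closed_; });
        if (items_.empty()) return std::nullopt;
        T value = std::move(items_.front());
        items_.pop();
        return value;
    }

private:
    std::mutex mutex_;
    std::condition_variable ready_;
    std::queue<T> items_;
    bool closed_ = false;
};

// Placeholder stage computations (assumptions, standing in for the real work).
inline int stage_one(int sample) { return sample + 1; }
inline int stage_two(int sample) { return sample * 2; }
inline int stage_three(int sample) { return sample - 3; }

// Pushes every sample through three threads connected by channels.
std::vector<int> run_pipeline(const std::vector<int>& samples) {
    Channel<int> input, one_to_two, two_to_three;
    std::vector<int> results;  // written only by the third thread, read after join

    std::thread first([&] {
        while (auto s = input.pop()) one_to_two.push(stage_one(*s));
        one_to_two.close();
    });
    std::thread second([&] {
        while (auto s = one_to_two.pop()) two_to_three.push(stage_two(*s));
        two_to_three.close();
    });
    std::thread third([&] {
        while (auto s = two_to_three.pop()) results.push_back(stage_three(*s));
    });

    for (int s : samples) input.push(s);
    input.close();

    first.join();
    second.join();
    third.join();
    return results;
}
```

Because each stage is a single thread reading from a FIFO queue, output order matches input order. Calling `run_pipeline({1, 2, 3})` with these placeholder stages yields `{1, 3, 5}`.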

Piece of cake, no? Since lowly “programmers” are interchangeable, anyone could do it, right? I love this job and I’m having a blast!

Close Spatial Proximity

Even on software development projects with agreed-to coding rules, it’s hard to (and often painful to all parties) “enforce” the rules. This is especially true if the rules cover superficial items like indenting, brace alignment, comment frequency/formatting, variable/method name letter capitalization/underscoring. IMHO, programmers are smart enough to not get obstructed from doing their jobs when trivial, finely grained rules like those are violated. It (rightly) pisses them off if they are forced to waste time on minutiae dictated by software “leads” that don’t write any code and (especially) former programmers who’ve been promoted to bureaucratic stooges.

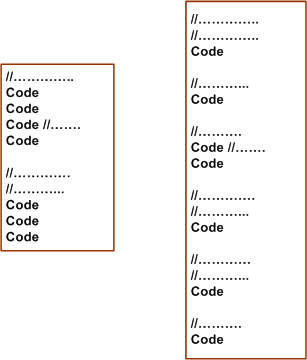

Take the example below. The segment on the right reflects (almost) correct conformance to the commenting rule “every line in a class declaration shall be prefaced with one or more comment lines”. A stricter version of the rule may be “every line in a class declaration shall be prefaced with one or more Doxygenated comment lines”.

Obviously, the code on the left violates the example commenting rule – but is it less understandable and maintainable than the code on the right? The result of diligently applying the “rule” can be interpreted as fragmenting/dispersing the code and rendering it less understandable than the sparser commented code on the left. Personally, I like to see necessarily cohesive code lines in close spatial proximity to each other. It’s simply easier for me to understand the local picture and the essence of what makes the code cohesive.
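As a hypothetical illustration of the same contrast (this is not the code from the figure; the `RangeSparse` and `RangeVerbose` classes are made up for the purpose), here is one small C++ class written twice. The first version keeps its cohesive lines together under a single summary comment; the second obeys the “every line shall be prefaced with a Doxygenated comment” rule.

```cpp
#include <cassert>

// Sparse style: closed interval [low, high] of sample values.
class RangeSparse {
public:
    RangeSparse(int low, int high) : low_(low), high_(high) {}
    bool contains(int value) const { return low_ <= value && value <= high_; }
    int width() const { return high_ - low_; }
private:
    int low_;
    int high_;
};

// Rule-conforming style: identical behavior, one comment block per line of code.
class RangeVerbose {
public:
    /// Constructs a range.
    /// @param low  the lower bound
    /// @param high the upper bound
    RangeVerbose(int low, int high) : low_(low), high_(high) {}

    /// Checks whether a value is in the range.
    /// @param value the value to check
    /// @return true if the value is in the range
    bool contains(int value) const { return low_ <= value && value <= high_; }

    /// Gets the width.
    /// @return the width of the range
    int width() const { return high_ - low_; }

private:
    /// The lower bound.
    int low_;

    /// The upper bound.
    int high_;
};
```

Both classes compile to the same behavior. The second takes roughly twice the vertical space, and most of its comments restate what the declaration already says.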

Even if you insert an automated tool into your process that explicitly nags about coding rule violations, forcing a programmer to conform to standards that he/she thinks are a waste of time can cause the counterproductive results of subversive, passive-aggressive behavior to appear in other, more important, areas of the project. So, if you’re a software lead crafting some coding rules to dump on your “team”, beware of the level of granularity at which you specify your coding rules. Better yet, don’t call them rules. Call them guidelines to show that you respect and trust your team mates.

If you’re a software lead that doesn’t write code anymore because it’s “beneath” you or a bureaucrat who doesn’t write code but who does write corpo level coding rules, this post probably went right over your head.

Note: For an example of a minimal set of C++ coding guidelines (except in rare cases, I don’t like to use the word “rule”) that I personally try to stick to, check this post out: project-specific coding guidelines.

Positive Or Negative, Meaning Or No Meaning?

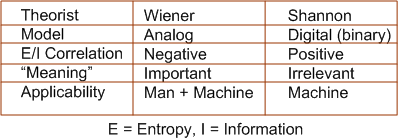

In Claude Shannon‘s book, “The Mathematical Theory Of Communication“, Mr. Shannon positively correlates information with entropy:

Information = f(Entropy)

When I read that several years ago, it was unsettling. Even though I’m a layman, it didn’t make sense. After all, doesn’t information represent order and entropy represent its opposite, chaos? Shouldn’t a minus sign connect the two? Norbert Wiener, whom Claude bounced ideas off of (and vice-versa) thought it did. His entropy-information connection included the minus sign.
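For the programmers in the audience, here is a minimal sketch of the quantity on the right-hand side: Shannon’s entropy of a discrete source, H = -Σ p·log2(p), measured in bits. The function name `shannon_entropy_bits` is my own, and the code assumes it is handed a valid probability distribution. The minus sign inside the formula is there because the logarithm of a probability is never positive, so H comes out non-negative.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Shannon entropy, in bits, of a discrete probability distribution.
// Assumes the probabilities are non-negative and sum to 1 (not checked here).
double shannon_entropy_bits(const std::vector<double>& probabilities) {
    double entropy = 0.0;
    for (double p : probabilities) {
        if (p > 0.0) {  // a zero-probability outcome contributes nothing
            entropy -= p * std::log2(p);
        }
    }
    return entropy;
}
```

A fair coin (`{0.5, 0.5}`) scores 1 bit, while a source that always emits the same symbol (`{1.0}`) scores 0 bits: the less predictable the source, the higher the entropy.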

In addition, Shannon’s theory stripped “meaning”, which is person-specific and unmodel-able in scrutable equations, from information. He treats information as a string of bland ‘0’ and ‘1’ bits that get transported from one location to another via a matched, but insentient, transmitter-receiver pair. Wiener kept the “meaning” in information and he kept his feedback loop-centric equations analog. This enabled his cybernetic theory to remain applicable to both man and the machine and make assertions like: “those who can control the means of communication in a system will rule the roost“.

Like most of my posts, this one points nowhere. I just thought I’d share it because I think others might find the Shannon-Wiener differences/likenesses as interesting and mysterious as I do.

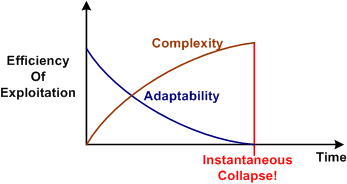

The Commencement Of Husbandry

The figure below was copied over from yesterday’s post. Derived from Joseph Tainter’s “The Collapse of Complex Societies”, it simply illustrates that as the complexity of a social organizational structure necessarily grows to support the group’s own growth and survival needs, the adaptability of the structure decreases. The flat and loosely coupled institutional structures originally created by the group’s elites (with the willing consent of the commoners) start hierarchically rising and coalescing into a rigid, gridlocked monolith incapable of change. At the unknown future point in time where an external unwanted disturbance exceeds the group’s ability to handle it with its existing complex problem solving structures and intellectual wizardry, the whole tower of Babel comes tumbling down since the monolith is incapable of the alternative – adapting to the disturbance via change. Poof!

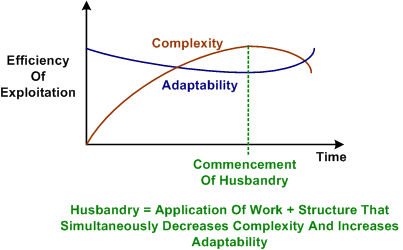

According to Tainter, once the process has started, it is irreversible. But is it? Check out the figure below. In this example, the group leadership not only awakens to the doomsday scenario, it commences the process of husbandry to reverse the process by:

- Re-structuring (not just tinkering and rearranging the chairs) for increased adaptability – by simplifying.

- Scouring the system for, and delicately removing useless, appendix-like substructures.

- Discovering the pockets of fat that keep the system immobile and trimming them away.

- Loosening dependencies between substructures and streamlining the interactions between those substructures by jettisoning bogus processes and procedures.

- Installing effective, low lag time, internal feedback loops and external sensors that allow the system to keep moving forward and probing for harmful external disturbances.

If the execution of husbandry is boldly done right (and it’s a big IF for humongous institutions with a voracious appetite for resources), an effectively self-controlled and adaptable production system will emerge. Over time, and with sustained periodic acts of husbandry to reduce complexity, the system can prosper for the long haul as shown in the figure below.

D4P Has Been Hatched

Friend and long time mentor Bill Livingston has finished his latest book, “Design For Prevention” (D4P). I mildly helped Bill in his endeavor by providing feedback over the last year or so in the form of idiotic commentary, and mostly, typo exposure.

Bill, being a staunch promoter of SCRBF feedback and its natural power of convergence to excellence, continuously asked for feedback and contributory ideas throughout the book writing process. Being a blabbermouth and having great respect for the man because of the profound influence he’s had on my worldview for 20+ years, I truly wanted to contribute some ideas of substance. However, I struggled mightily to try and conjure up some worthy input because even though I understood the essence of this original work and it resonated deeply with me, I couldn’t quite form (and still can’t) a decent and coherent picture of the whole work in my mind.

D4P is a socio-technical process for designing a solution to a big hairy problem (in the face of powerful institutional resistance) that dissolves the problem without causing massive downstream stakeholder damage. Paradoxically, the book is a loosely connected, but also dense, artistic tapestry of seemingly unrelated topics and concepts such as:

- Alan Turing’s thesis of infallibility vs. intelligence

- Leveraging nature’s physical laws, with a special emphasis on suspending the 2nd law of thermodynamics and entropy growth

- W. Ross Ashby‘s cybernetic law of requisite variety

- Thorstein Veblen’s theory of the leisure class

- Nash Equilibriums in game playing

- Rudolf Starkermann‘s mathematical analysis of social group system behavior

Bill does a masterful and unprecedented job at connecting the dots. The book will set you back, uh, $250 beaners on Amazon.com, but wait….. there’s a reason for that astronomical price. He doesn’t really care if he sells it. He wants to give it away to people who are seriously interested in “Designing For Prevention”. Posers need not apply. If you’re intrigued and interested in trying to coerce Bill into sending you a copy, you can introduce yourself and make your case at vitalith “at” att “dot” net.

Update 12/29/12:

The D4P book is available for free download at designforprevention.com. The second edition is on its way shortly.

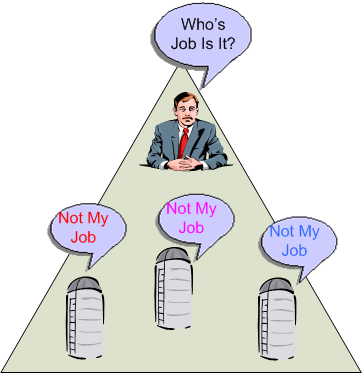

Supported By, Not Partitioned Among

“A design flows from a chief designer, supported by a design team, not partitioned among one.” – Fred Brooks

partitioning == silos == fragmented scopes of responsibility == it’s someone else’s job == uncontrolled increase in entropy == a mess == pissed off customers == pissed off managers == disengaged workers

“Who advocates … for the product itself—its conceptual integrity, its efficiency, its economy, its robustness? Often, no one.” – Fred Brooks

Note for non-programmers: “==” is the C++ programming language’s equality operator. If the operator’s left operand equates to its right operand, then the expression is true. For example, the expression “1 == 1” (hopefully) evaluates to true.

Knowing this, do you think the compound expression sandwiched between the two Brooks quotes evaluates to true? If not, where does the chain break?
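For programmers tempted to take the chain literally, a small caveat in code form. The helper names `chained_equal` and `all_equal` are hypothetical. In real C++, `a == b == c` groups left to right as `(a == b) == c`, so the boolean result of the first comparison gets compared with `c`. To ask whether every link in a chain holds, each link has to be compared separately.

```cpp
#include <cassert>

// How C++ parses `a == b == c`: left to right, as `(a == b) == c`.
// The bool result of the first comparison is converted to 0 or 1
// and then compared with c.
inline bool chained_equal(int a, int b, int c) { return (a == b) == c; }

// What a chain written in prose intends: every link holds.
inline bool all_equal(int a, int b, int c) { return a == b && b == c; }
```

With these helpers, `all_equal(2, 2, 2)` is true but `chained_equal(2, 2, 2)` is false, because `(2 == 2)` becomes 1 and `1 == 2` fails. Going the other way, `chained_equal(0, 5, 0)` is true even though the three values are not all equal.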

Dark Hero

In Dark Hero of the Information Age: In Search of Norbert Wiener The Father of Cybernetics, authors Flo Conway and Jim Siegelman trace the life of Mr. Wiener from child prodigy to his creation of the interdisciplinary science of cybernetics. As a student of the weak (very weak) connection between academic and spiritual intelligence, I found the following book excerpt fascinating:

Since his youth, Wiener was mindful that his best ideas originated in a place beneath his awareness, “at a level of consciousness so low that much of it happens in my sleep.” He described the process by which ideas would come to him in sudden flashes of insight and dreamlike, hypnoid states:

Very often these moments seem to arise on waking up; but probably this really means that sometime during the night I have undergone the process of deconfusion which is necessary to establish my ideas…. It is probably more usual for it to take place in the so-called hypnoidal state in which one is awaiting sleep, and it is closely associated with those hypnagogic images which have some of the sensory solidity of hallucinations. The subterranean process convinced him that “when I think, my ideas are my masters rather than my servants.”

Barbara corroborated her father’s observation. “He frequently did not know how he came by his answers. They would sneak up on him in the middle of the night or descend out of a cloud,” she said. Yet, because Wiener’s mental processes were elusive even to him, “he lived in fear that ideas would lose interest in him and wander off to present themselves to somebody else.”

This description of how and when ideas instantaneously appear out of the void of nothingness aligns closely with those people who say their best ideas strike them: in the shower, on vacation, out in nature, during meditation, while driving to work, exercising, or doing something they love. In situations like these, the mind is relaxed, humming along at a low rpm rate, and naturally prepared for fresh ideas. Every person is capable of receiving great ideas because it’s an innate ability – a gift from god, so to speak. Most people just don’t realize it.

I haven’t heard many stories of a great idea being birthed in a drab, corpo-supplied, cubicular environment under the watchful eyes of a manager. Have you?

Note: The picture above is wrong. Except for “what’s your status?”, BMs don’t ask DICs for anything. Since they know everything, they just tell DICs what to do.


Leverage Point

In this terrific systems article pointed out to me by Byron Davies, Donella Meadows states:

Physical structure is crucial in a system, but the leverage point is in proper design in the first place. After the structure is built, the leverage is in understanding its limitations and bottlenecks and refraining from fluctuations or expansions that strain its capacity.

The first sentence doesn’t tell me anything new, but the second one does. Many systems, especially big software systems foisted upon maintenance teams after they’re hatched to the customer, are not thoroughly understood by many, if any, of the original members of the development team. Upon release, the system “works” (and it may be stable). Hurray!

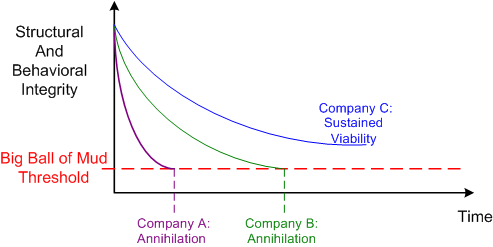

In the post delivery phase, as the (always) unheralded maintenance team starts adding new features without understanding the system’s limitations and bottlenecks, the structural and behavioral integrity of the beast surely starts degrading over time. Scarily, the rate of degradation is not constant; it’s more akin to an exponential trajectory. It doesn’t matter how pristine the original design is; it will undoubtedly start its march toward becoming an unlovable “big ball of mud”.

So, how can one slow the rate of degradation in the integrity of a big system that will continuously be modified throughout its future lifetime? The answer is nothing profound and doesn’t require highly skilled specialists or consultants. It’s called PAYGO.

In the PAYGO process, a set of lightweight but understandable and useful multi-level information artifacts that record the essence of the system are developed and co-evolved with the system software. They must be lightweight so that they are easily constructable, navigable, and accessible. They must be useful or post-delivery builders won’t employ them as guidance and they’ll plow ahead without understanding the global ramifications of their local changes. They must be multi-level so that different stakeholder group types, not just builders, can understand them. They must be co-evolved so that they stay in synch with the real system and they don’t devolve into an incorrect and useless heap of misguidance. Challenging, no?

Of course, if builders, and especially front line managers, don’t know how to, or don’t care to, follow a PAYGO-like process, then they deserve what they get. D’oh!

Conceptual Integrity

Like in his previous work, “The Mythical Man Month“, in “The Design Of Design“, Fred Brooks remains steadfast to the assertion that creating and maintaining “conceptual integrity” is the key to successful and enduring designs. Being a long time believer in this tenet, I’ve always been baffled by the success of Linux under the free-for-all open source model of software development. With thousands of people making changes and additions, even under Linus Torvalds’ benevolent dictatorship, how in the world could the product’s conceptual integrity defy the second law of thermodynamics under the onslaught of such a chaotic development process?

Fred comes through with the answers:

- A unifying functional specification is in place: UNIX.

- An overall design structure, initially created by Torvalds, exists.

- The builders are also the users – there are no middlemen to screw up requirements interpretation between the users and builders.

If you extend the reasoning of the third point, it aligns with why most of the open source successes are tools used by software developers and not applications used by the average person. Some applications have achieved moderate success, but not on the scale of Linux and other application development tools.