Archive

New DDS Vendors

Since I’m a fan of the DDS (Data Distribution Service) inter-process communication infrastructure for distributed systems, I try to keep up with developments in the DDS space. Via an Angelo Corsaro SlideShare presentation, I discovered two relatively new commercial vendors of the high-performance, low-latency, OMG! messaging standard: Twin Oaks Computing and Gallium Visual Systems. I don’t know how long the newcomers have been pitching their DDS implementations or how mature their products are, but I’ll be learning more about them in the weeks to come.

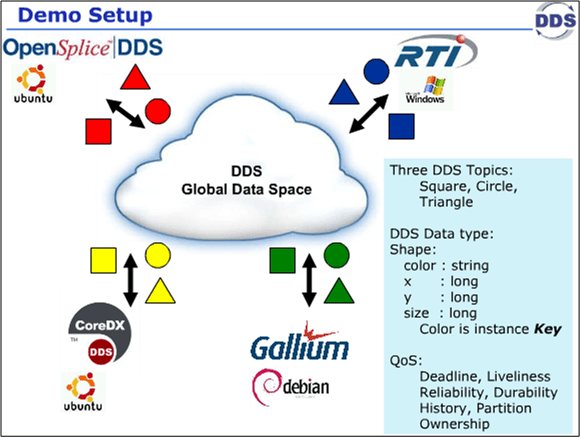

Last week, the four DDS vendors got together at the OMG DDS meeting in CA and they collaboratively executed 9 distributed system test scenarios to highlight the interoperability of the vendors’ products. The Angelo Corsaro slide snippets below show the conceptual context and the goals of the event.

The VP of marketing at RTI, Dave Barnett, published a short video showing that the demo was a success: DDS Interoperability Demo. Since I’m a distributed, real-time software developer geek, this stuff makes me giddy with enthusiasm. Sad, no?

Bugs Or Cash

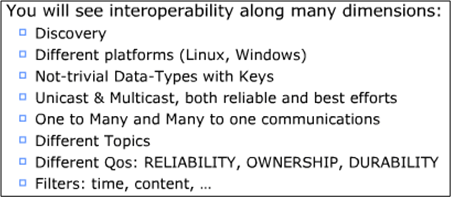

Assume that you have a system to build and a qualified team available to do the job. How can you increase the chance that you’ll build an elegant and durable money maker and not a money sink that may put you out of business?

The figure below shows one way to fail. It’s the well-worn and oft-repeated ready-fire-aim strategy. You blast the team at the project and hope for the best via the buzz phrase of the day – “emergent design”.

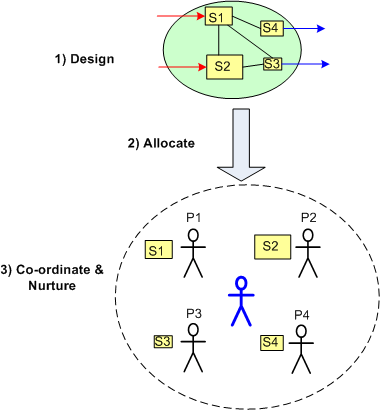

On the other hand, the figure below shows a necessary but not sufficient approach to success: design-allocate-coordinate. BTW, the blue stick dude at the center, not the top, of the project is you.

Small, Loose, Big, Tight

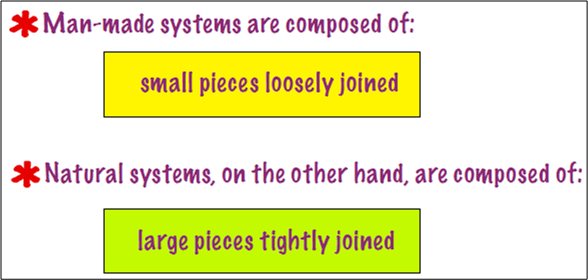

This Tom DeMarco slide from his pitch at the Software Executive Summit caused me to stop and think (Uh oh!):

I find it ironic (and true) that when man-made systems are composed of “large pieces tightly joined”, they, unlike natural systems of the same ilk, are brittle and fault-intolerant. Look at the man-made financial system and what happened during the financial meltdown. Since the large institutional components were tightly coupled, the system collapsed like dominoes when a problem arose. Injecting the system with capital has ameliorated the problem, but only the future will tell if the problem was dissolved. I suspect not. Since the structure, the players, and the behavior of the monolithic system have remained intact, it’s back to business as usual.

Similarly, as experienced professionals will confirm, man-made software systems composed of “large pieces tightly joined” are fragile and fault-intolerant too. These contraptions often disintegrate before being placed into operation. The time is gone, the money is gone, and the damn thing don’t work. I hate when that happens.

On the other hand, look at the glorious human body composed of “large pieces tightly joined”. Its natural, built-in robustness and tolerance to faults is unmatched by anything man-made. You can survive and still prosper with the loss of an eye, a kidney, a leg, and a host of other (but not all) parts. IMHO, the difference is that in natural systems, the purposes of the parts and the whole are in continuous, cooperative alignment.

When the individual purposes of a system’s parts become unaligned with each other, let alone unaligned with the purpose of the whole, as often happens in man-made socio-technical systems when everyone is out for themselves, it’s just a matter of time before an internal or external disturbance brings the monstrosity down to its knees. D’oh!

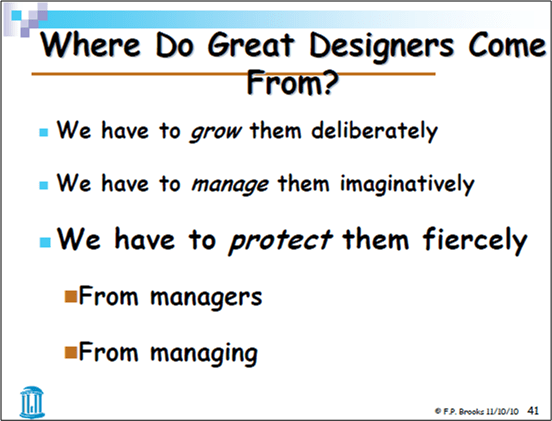

Fierce Protection

Delicious, just delicious. Pitches from Fred Brooks, Scott Berkun, Tom DeMarco, Tim Lister, and Steve McConnell all in one place: the Construx (McConnell’s company) Software Executive Summit. You can download them from here: Summit Materials.

Here’s a snapshot of one of Fred Brooks’s slides that struck me as paradoxical:

So…. who’s the “we” that Fred is addressing here and what’s the paradox? I’m pretty sure that Fred is addressing managers, right? The paradox is that he’s admonishing managers to protect great designers from…… managers. WTF?

But wait, I think I get it now. Fred is telling PHOR managers to “fiercely” protect designers from Bozo Managers (but in a non-offensive and politically correct way, of course). Alas, the fact that this slide appears at all in Fred’s deck implies that PHORs are rare and BMs are plentiful, no?

How do you interpret this slide?

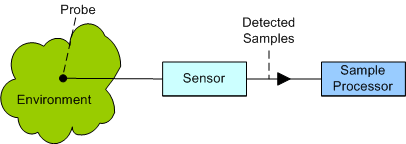

Fixed Vs Variable Sleep Times

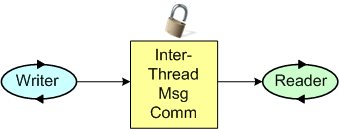

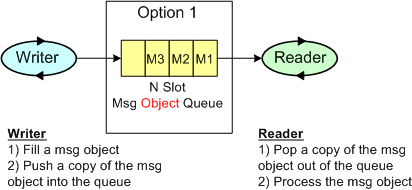

Consider the back end of a sensor system as shown below. Now, assume that you’re tasked with building the Sample Processor and you need some way of testing it. Thus, you need to simulate the continuous high speed sample stream that the Sensor will produce during operation in the real physical world.

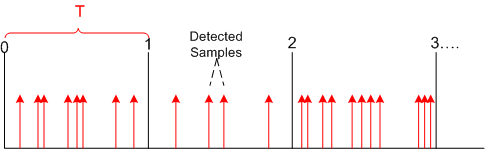

Since the analog real world is wonderfully messy and non-deterministic, the sensor/probe combo will produce data sample detections in bursty clumps as modeled below.

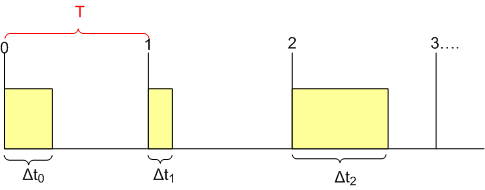

However, since you don’t need the high fidelity and fine-grained controllability in your sensor simulator implied by the figure, you simplify your approach by modeling the sensor output as a deterministically time-sliced (slice size = T) and “batched” device as shown by the yellow boxes in the diagram below.

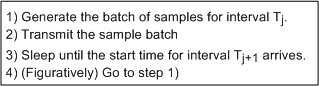

After thinking about it, you sketch out a simple core sensor simulation algorithm as:

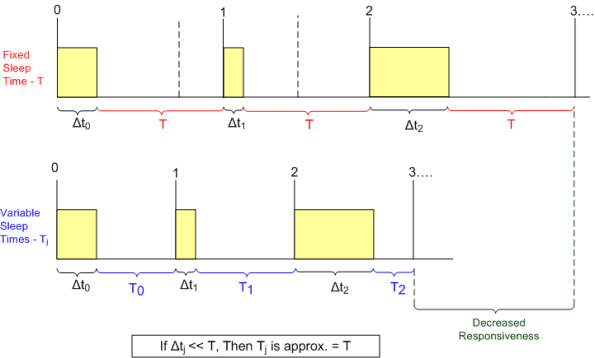

As the figure below shows, there are two options for determining how long to “sleep” after each yellow sample batch has been generated and transmitted: fixed and variable. In the simpler approach, the code sleeps for a fixed time duration “T” after every batch – regardless of how long it took to generate and transmit the batch of sensor samples. In the higher fidelity variable sleep approach, the time to sleep is calculated on the fly during runtime as the slice time “T” minus the time it took to generate and send the current batch. As the batch processing time approaches the time slice period T, the software sleeps less in order to maintain true real-time operation.
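Here is a minimal Python sketch of the two sleep policies. It is an illustration under stated assumptions, not the code from the actual project: `run_simulator`, its parameters, and the `make_and_send_batch` callback (a stand-in for generating and transmitting one batch) are all made-up names. The clock and sleep functions are injectable only so the loop can be exercised without really waiting.

```python
import time


def run_simulator(num_batches, slice_s, make_and_send_batch,
                  variable_sleep=True,
                  clock=time.monotonic, sleep=time.sleep):
    """Emit num_batches batches, nominally one per slice of slice_s seconds.

    Returns the total elapsed time as measured by `clock`.
    """
    start = clock()
    for _ in range(num_batches):
        batch_start = clock()
        make_and_send_batch()  # hypothetical: build and transmit one batch
        if variable_sleep:
            # Variable policy: sleep only for what is left of this slice.
            # If the batch overran the slice, don't sleep at all.
            busy = clock() - batch_start
            sleep(max(0.0, slice_s - busy))
        else:
            # Fixed policy: always sleep a full slice, no matter how long
            # the batch took to generate and send.
            sleep(slice_s)
    return clock() - start
```

One caveat on the variable policy as sketched: real sleep calls tend to overshoot a little, and computing the remainder per slice lets that overshoot pile up. A common refinement is to sleep until an absolute deadline (start time plus k times T) instead.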

As the figure shows, implementing the sensor simulator with variable sleep time logic results in truer real-time behavior. In the fixed sleep design, the simulator starts lagging behind real-time immediately and the longer the sensor simulator runs, the further its behavior deviates from the real-time ideal. However, for short simulator runs and/or large relative time slice periods (see the box above), the simpler fixed sleep time approach tracks real-time just about as well.
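To put rough numbers on the drift, here is a back-of-the-envelope sketch. It assumes (a simplification, not a measurement) that every batch takes the same time $p$ to generate and send, that $p \le T$, and that sleeps are exact. After $N$ batches:

$$
\begin{aligned}
t_{\text{ideal}}(N) &= N\,T \\
t_{\text{fixed}}(N) &= N\,(p + T) &&\Rightarrow\ \text{lag} = t_{\text{fixed}} - t_{\text{ideal}} = N\,p \\
t_{\text{variable}}(N) &= N\,\bigl(p + (T - p)\bigr) = N\,T &&\Rightarrow\ \text{lag} = 0
\end{aligned}
$$

So the fixed sleep design falls behind by $p$ on every slice, a relative error of $p/T$. With illustrative values of $T = 100$ ms and $p = 2$ ms, that's 2%: after $N = 6000$ batches (10 minutes of ideal time) the simulator is 12 seconds late. Stretch the slice to $T = 1$ s with the same $p$ and the error drops to 0.2%, or about 1.2 seconds over the same 10 minutes, which is why the simpler approach holds up for short runs and large slices.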

For the project I’m currently working on, I’ve coded up a sensor simulator and sample processor pair that can be configured either way. I just thought I’d share the analysis/design thought process that I went through just in case it might interest or help anybody who’s working on something similar.

Where Elitism Is Proper?

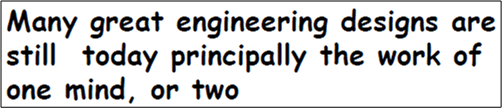

Ever since I stumbled upon Fred Brooks’s metaphysical idea of “Conceptual Integrity” in his classic book, “The Mythical Man-Month”, I’ve strived mightily to achieve that elusive quality in the software work that I do. Over twenty years ago, Mr. Brooks stated that the greatest conceptually integral designs were the product of one, or at most two, human minds. Fred asserts that his “one or two minds” principle still holds true today:

My fictional alter ego, Bulldozer aught aught, would’ve re-worded the beginning of the statement to “Most, if not all… “, but Fred’s message still rings loud and true.

According to Fred, in today’s world of exponentially growing complexity and team sizes, conceptual integrity is more difficult to achieve than ever:

Why the increased difficulty? Because as a team grows larger, more minds will collide with each other to express and manifest their incompatible design ideas. Big projects can, and usually do, devolve into “design by committee” fiascos where monstrously over-complicated contraptions get created and foisted upon the world.

Ok, Ok, you say. Enough disclosure of the pervasive problem – we get it, Yoda. What’s the solution, bozo? Here it is:

Even though it sounds simple to enact this policy, it’s not. The role of “Chief Designer” in a group of highly educated, independent thinking people is fraught with peril. It requires a dose of discipline imposition that can be perceived as “meanness” to external observers. Too much perceived meanness can cause a supporting team to morph into an unsupporting team and lead to the ejection and ostracism of the chief designer. Too little meanness and the possibility of achieving conceptual integrity goes right out the window – it’s Rube Goldberg city. Bummer.

Does your org explicitly recognize and implement the “Chief Designer” role – which is not the same as the softer, less technical, more politically correct, and more administrative “software lead”, “project manager”, “product manager”, and…….. “software rocket-tect” roles? If your org does formally implement the “Chief Designer” role, are your chief designers kept on a tight leash by higher ranking BUTTS, BMs, BOOGLs, SCOLs or CGHs that have no idea what “conceptual integrity” means? Worse, do your “Chief Designers” (again, if you have any) handcuff themselves in order to increase their promotability?

I’m not into corpo caste systems or stratified command-control hierarchies and I struggle endlessly to fight the instinct to play the “I’m smarter than you” game, but I agree with Mr. Brooks when he asserts that world class product design is one of those rare situations…..

How about you? Where do you stand…… or sit?

Note: The snippets in this blarticle were copied and pasted from Fred Brooks’s “The Design Of Design” pitch at the Construx Software Executive Summit. You can download and study it in its entirety from here: Summit Materials.

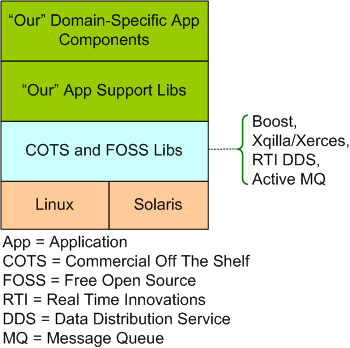

Our Stack

The model below shows “our stack”. It’s a layered representation of the real-time, distributed software system that two peers and I are building for our next product line. Of course, I’ve intentionally abstracted away a boatload of details so as not to give away the keys to the store.

The code that we’ve written so far (the stuff in the funky green layers) is up to 50K SLOC of C++ code and we guesstimate that the system will stabilize at around 250K SLOC. In addition to this computationally intensive back end, our system also includes a Java/PHP/HTML display front end, but I haven’t been involved in that area of development. We’ve been continuously refactoring our “App Support Libs” layer as we’ve found turds in it via testing the multi-threaded, domain-specific app components that use them.

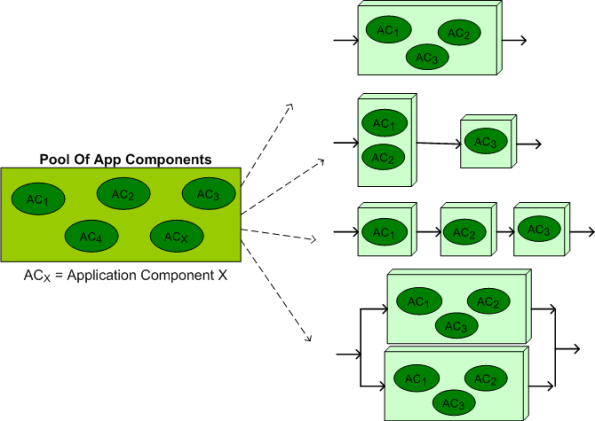

The figure below illustrates the flexibility and scalability of our architecture. From our growing pool of application layer components, a large set of product instantiations can be created and deployed on multiple hardware configurations. In the ideal case, to minimize end-to-end latency, all of the functionality would be allocated to a single processor node like the top instantiation in the figure. However, at customer sites where processing loads can exceed the computing capacity of a single node, voila, a multi-node configuration can be seamlessly instantiated without any source code changes – just XML file configuration parameter changes. In addition, as the bottom instantiation shows, a fault tolerant, auto-failover, dual channel version of the system can be deployed for high availability, safety-critical applications.
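To make the “configuration, not code” idea concrete, here is a small hedged sketch in Python. Everything in it is hypothetical: the XML element and attribute names, the host names, and the component names are invented for illustration and say nothing about the real system’s file format. It only shows the general shape of the idea, where the same pool of components gets mapped onto one node or several by editing a file.

```python
import xml.etree.ElementTree as ET

# Hypothetical deployment descriptions: same components, different allocation.
SINGLE_NODE = """
<deployment>
  <node host="node-a">
    <component name="ComponentA"/>
    <component name="ComponentB"/>
  </node>
</deployment>
"""

MULTI_NODE = """
<deployment>
  <node host="node-a">
    <component name="ComponentA"/>
  </node>
  <node host="node-b">
    <component name="ComponentB"/>
  </node>
</deployment>
"""


def load_allocation(xml_text):
    """Return {host: [component names]} from a deployment description."""
    root = ET.fromstring(xml_text.strip())
    return {
        node.get("host"): [c.get("name") for c in node.findall("component")]
        for node in root.findall("node")
    }


def deployed_components(allocation):
    """Return the sorted list of all components, regardless of host."""
    return sorted(name for names in allocation.values() for name in names)
```

In this toy version, `deployed_components` returns the same list for both descriptions; only the host mapping differs, and no source code changes between the two.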

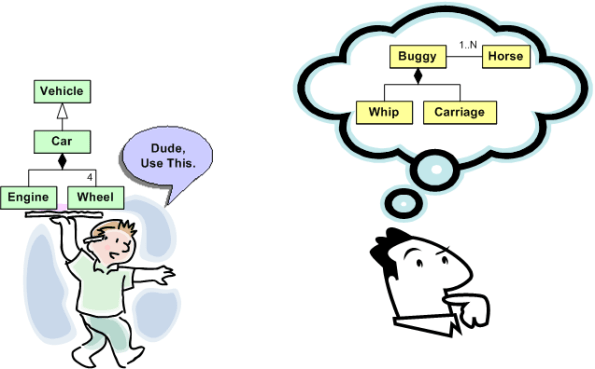

Reinventing The Wheel

In Federico Biancuzzi’s “Masterminds Of Programming”, UML co-creator Jim Rumbaugh states:

The computing field has a lot of people that think very well of themselves and seem to forget that there is any past to build upon. A lot of people keep reinventing things that have already been discovered. – Jim Rumbaugh

LOL! I understand what Jim’s saying, but there can be another reason for reinventing the wheel too. How many slightly different API versions of virtually similar “reusable” libraries are littered around your software development org? How many of them have you written and rewritten yourself?

If a library/module/component is poorly or “un” documented in terms of its design, external dependencies, and most importantly, usage examples, it t’aint gonna be reused. Even if the thang IS miraculously well documented, if the info is not integrated, organized, and easily accessible, it ain’t gonna be reused either. Both of these shortcomings, which are highly likely since most programmers don’t “do documentation” and managers don’t want to pay for non-camouflage documentation, guarantee reinventing the wheel over and over again.

Assume that you truly do want to reuse someone else’s code to save time, but all you have is the source code. You’re gonna have to pore through the mess to figure out what it offers, how it works, and what other components and libraries it depends on before you can consider using it “as is”. The larger and denser the component, the deeper and wider the inheritance tree, the more external dependencies, the more frustrated you’ll become and the less likely you’ll apply your brainpower and time to the task at hand. When that happens, Ta Dah, it’s time to roll your own – yet again. Hell, if you don’t document your own stuff, you might not even be able to eat your own dog food downstream. D’oh! I hate when that happens. And yes, it happens to me.

Read, Read, Read

To put it mildly, I’m not too fond of software project and “functional” software managers that don’t read code. Even worse, wanna-be-manager tweeners and lofty software “architects” who don’t read code are the pits. Note that I’m not demanding that these exalted ones write code, just actually RTFC (Read The F#@^&*! Code). Why? I thought you’d never ask…..

You see, I’m a believer in the “trust but verify” motto popularized by Ronald Reagan during the cold war. The only pseudo-objective way to truly assess progress, consistency, and quality on a software project is to sample the product – you know, the code. If you know of a better way, then I’m all ears.

It’s not that I don’t trust people to try their best, it’s just that most hierarchical cultures are toxic by unintentional design, and that forces people to innocently cover up or camouflage a lack of progress when they fill out their “weekly status sheets” or verbally report progress at useless CYA (Cover Your Arse) meetings. Sadly, once DICs get appointed into elite manager and architect titles, they tend to leave their code reading and (especially) writing days behind. Of course, they’ve arrived (Hallelujah!) and they no longer have to do any “janitorial” work that can be done by fungible DORKs.

How about you? Do you think people in the roles of software project manager, software functional manager, and software architect should actively read code as part of their jobs?

In many companies, enterprise architects sit in an ivory tower without doing anything useful. – Ivar Jacobson