Archive

Tested And Untested

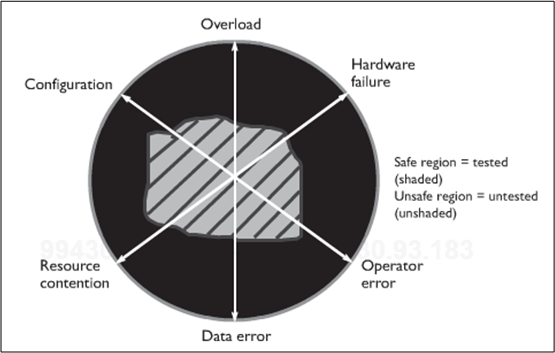

Being the e-klepto that I am, I stole this kool graphic from Humphrey and Over’s book “Leadership, Teamwork, And Trust”:

It represents the tested-to-untested (TU) region ratio of a generic, big software system. Humphrey/Over use it to prove that the ubiquitous, test-centric approach to quality doesn’t work very well and that by focusing on defect prevention via upfront review/inspection before testing, one can dramatically decrease the risk for any given TU ratio. Hard to argue with that, right?

The point I’d like to make, which is different than the one Humphrey/Over focus on in their book (to sell their heavyweight PSP/TSP methodology, of course), is that when a software system is released into the wild, it most likely hasn’t been tested in the wacky configurations and states that can cause massive financial loss and/or personal injury. You know, those “stressful” system states on the edge of chaos where transient input overloads occur, or hardware failures occur, or corrupted data enters the system. I think building the test infrastructure that can duplicate these doomsday scenarios, and then actually testing how the system responds in these rare but possible environments, is far more effective than just adding political dog-and-pony reviews/inspections to one’s approach to quality. Instead of decreasing the risk for a fixed TU ratio, which reviews/inspections can achieve if (and it’s a big if) they’re done right, this kind of testing increases the TU ratio itself. However, since system-specific test tools are unglamorous and perceived as unnecessary costs by scrooge managers more concerned about their image than other stakeholders, scarily untested software is foisted upon the populace at an increasing rate. D’oh!
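To make the "doomsday test infrastructure" idea a bit more concrete, here is a minimal Python sketch of a fault-injecting test double. Everything in it is hypothetical and invented for illustration: `FlakySensor`, `SensorFault`, and `average_reading` don't come from Humphrey/Over or from any real system. It only shows the general shape of deliberately feeding hardware failures and corrupted data into the code under test.

```python
class SensorFault(Exception):
    """Raised by the hypothetical sensor when the simulated hardware fails."""


class FlakySensor:
    """Hypothetical test double that injects faults into a stream of readings."""

    def __init__(self, readings, fail_at=None, corrupt_at=None):
        self.readings = list(readings)
        self.fail_at = fail_at        # index at which to simulate a hardware failure
        self.corrupt_at = corrupt_at  # index at which to return corrupted data
        self.index = 0

    def read(self):
        i = self.index
        self.index += 1
        if i == self.fail_at:
            raise SensorFault("simulated hardware failure")
        if i == self.corrupt_at:
            return float("nan")       # corrupted data enters the system
        return self.readings[i]


def average_reading(sensor, count):
    """Hypothetical system under test: average the valid readings, skip the bad ones."""
    valid = []
    for _ in range(count):
        try:
            value = sensor.read()
        except SensorFault:
            continue                  # degrade gracefully on a hardware failure
        if value != value:            # NaN is the only float not equal to itself
            continue                  # reject corrupted data
        valid.append(value)
    if not valid:
        raise ValueError("no valid readings")
    return sum(valid) / len(valid)


# Exercise a "stressful" state: one hardware failure and one corrupted reading.
sensor = FlakySensor([1.0, 2.0, 3.0, 4.0], fail_at=1, corrupt_at=2)
result = average_reading(sensor, 4)   # averages only the readings at index 0 and 3
```

A real harness would also need to reproduce overload and timing conditions, which is exactly the unglamorous, system-specific work that tends to get cut.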

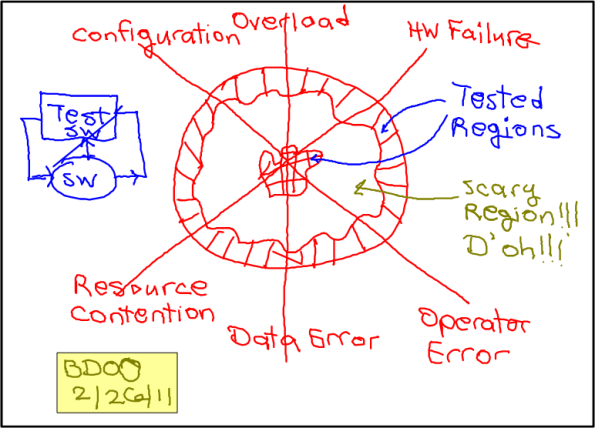

Note: The dorky handmade drawing above is the first one that I created with the $14.99 “Paper Tablet” app for my Livescribe Echo smartpen. “Paper Tablet” turns a notebook page into a surrogate computer tablet. When I run an “ink aware” app like Microsoft Visio, and then physically draw on a notebook page, the output goes directly to the computer app and it shows up on the screen – in addition to being stored in the pen. Thus, as I was drawing this putrid picture with my pen, it was being simultaneously regenerated on a Visio page in real-time (see clip below). Kool, eh? Maybe with some practice……

Firing Up The Eclipse Erlide Plugin

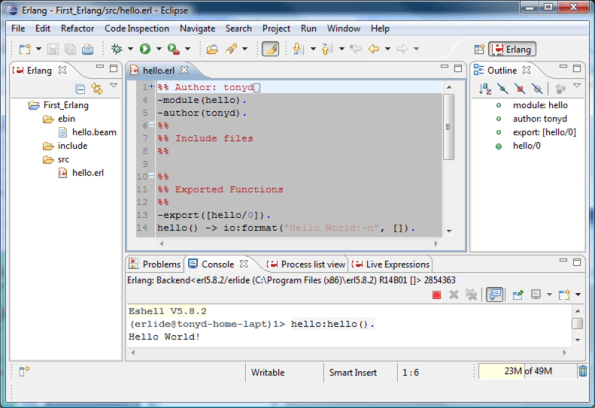

To help me learn and practice writing source code in Erlang, I downloaded and installed the “Erlide” plugin for the Eclipse-Helios IDE. The figure below displays a snapshot of Eclipse with the default Erlang perspective opened up. The set of Erlang-specific views displayed within the default perspective is: the editor, navigator, process list, and live expressions views. Each Erlang-specific view is annotated with this kool little Erlide logo:

As you can see, the editor is showing the content of the “hello.erl” source code file, which contains the definition of the “hello/0” function. The console view at the bottom of the screen shows the result of manually typing in “hello:hello().”, which runs the program on the version 5.8.2 Erlang virtual machine (VM). Upon completion, the VM did what it was told. It printed, duh: “Hello World!”.

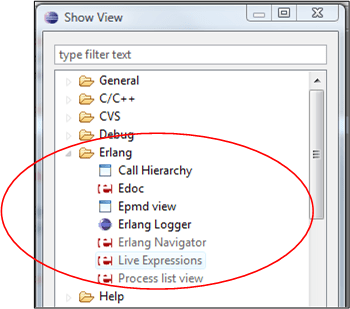

The complete list of Erlide views available to aspiring Erlang programmers is shown below. Since I’m a newbie to the land of Erlang, I have no freakin’ idea what they do yet.

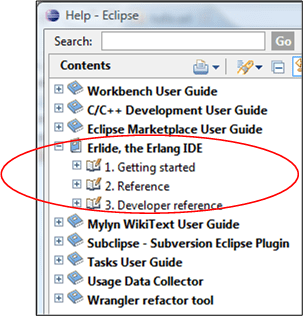

With the aid of the supplied Eclipse Erlide help module (see below), I was easily able to configure and link the IDE to my previously downloaded and installed distribution of the Erlang VM.

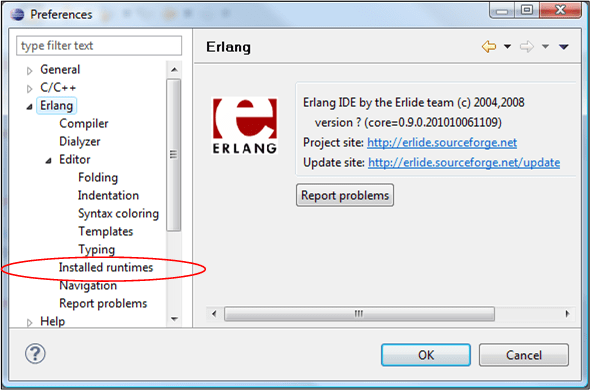

The snapshot below shows the configurability options offered up by Erlide via the Eclipse “Preferences” window. I won’t go into the details here, but the “Installed runtimes” option is where you connect up Erlide with your installed Erlang VM(s).

So, C++ programmers, what are you waiting for? Download the latest Erlang distro, the Erlide Eclipse plugin (you do use Eclipse, right?), buy a good Erlang book, and start exploring this powerful and relatively weird programming language.

Oh, and thanks to the great programmers who designed, wrote, and tested the Erlide Eclipse plugin – most likely on their own time. You guys and gals rock!

My Erlang Learning Status

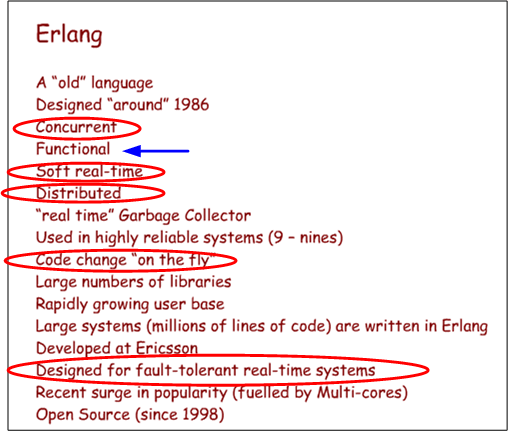

Check out this slide from Erlang language co-designer Joe Armstrong’s InfoQ lecture: “Erlang – software for a concurrent world”. I’ve circled the features that have drawn me to Erlang because I’m currently developing product software where those qualities are hugely important to my customers. Despite their importance to success, they’re almost always given second-class status by programmers and managers because they’re not domain-specific, “glamorous” features.

The blue arrow is my sore spot. You see, I’ve been programming imperatively in FORTRAN, then C, and then C++, since Jesus was born. Thus, learning the stateless, recursive, functional programming mindset that Erlang is founded upon is a huge hurdle for me to overcome. Nevertheless, as I’ve stated before, I’m half-assedly trying to learn OMOT how to program in Erlang with the aid of this very good book:

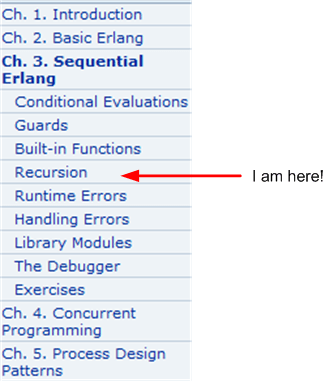

Here’s my learning “status” in terms of the book’s table of contents:

I don’t have an ironclad, micro-scheduled plan or BS risk register pinned on the wall in my war room, so I have no idea when I’ll be 100% done. But who knows, if I don’t abandon the effort:

Note: OMOT = On My Own Time

The Wevo Approach

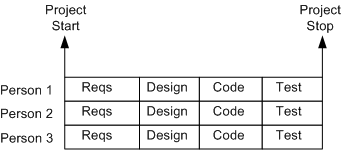

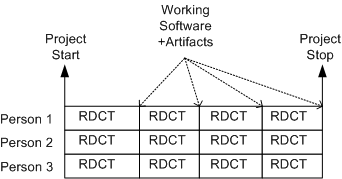

The figure below shows an example of a one-size-fits-all, waterfall schedule template that’s prevalent at many old-school software companies. It sure looks nice, squeaky clean, and controllable, but as everyone knows, it’s always wrong. Out of fear or apathy, almost no one speaks out against this “best practice”, but those who do are quickly slapped down by the anointed controllers and meta-controllers of the project.

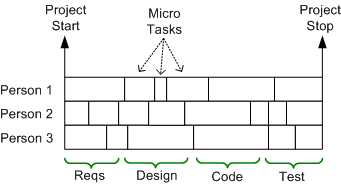

A more insidious, micro-grained, version of this waterboarding fiasco is shown below. It’s a self-medicating attempt to amplify the illusion of control that’s envisioned to take place throughout the execution of the project. Since schedules are concocted before an architecture or design has been reasonably sketched out and no one can possibly know up front what all the micro tasks are, let alone how long they’ll take (unless the project is to dig ditches), it’s monstrously wrong too. But shush, don’t say a word.

Once a monstrosity like this is baked into a huge Microsoft Project file or company proprietary scheduling document, those who conjured up the camouflage auto-become loath to modify it, even as the situation dynamically changes during the death march. Once the project starts churning, new unforeseen “popup” tasks emerge and some pre-planned micro-tasks become obsolete. These events disconnect the schedule from reality quicker than you can say “WTF?”.

Moving on to a sunnier disposition, the template below shows a more “sane“, but not infallible, method of scheduling. It’s a model of the incremental “evo” strategy that I first stumbled upon from Tom Gilb – a bazillion years before the agile movement rose to prominence. In the evo(lutionary) approach, stable working software becomes visible early with each RDCT cycle and it grows and matures as the messy (it’s always messy) project lurches forward.

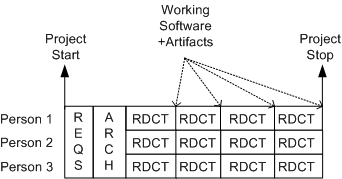

The figure below shows a tweaked version of the evo model. It’s a hybrid concoction of the waterboard and evolutionary development approaches – the “wevo”. Some upfront requirements and architecture exploration/definition/specification is performed by the elected team technical leaders before staffing up for the battle against the possibility of building a BBoM. The purpose of the upfront requirements and architecture efforts is to address major cross-cutting concerns and establish contextual boundaries – before letting the dogs loose.

Of course, the wevo approach is not enough. Another necessary but insufficient requirement is that the team leaders dive into the muck with the “coders” after the cross-cutting requirements and architecture definition activities have produced a stable, understandable blueprint. No jargon spewing software “rocketects” or “pure” software project leads allowed – everyone gets dirty – and for the duration.

Recursive Interpretation

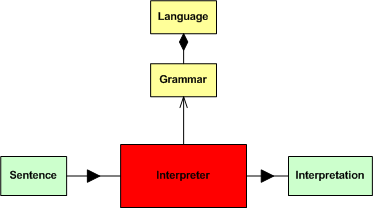

In their classic book, Design Patterns, the GoF defines the intent of the Interpreter pattern as:

Given a language, define a representation for its grammar along with an interpreter that uses the representation to interpret sentences in the language.

For your viewing pleasure, and because it’s what I like to do, I’ve translated (hopefully successfully) the wise words above into the SysML block diagram below.

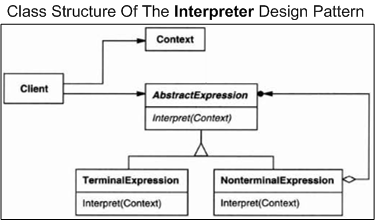

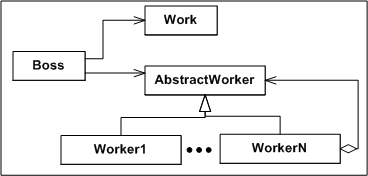

Moving on, observe the copied-and-pasted pseudo-UML class diagram of the GoF Interpreter design pattern below. In a nutshell, the “client” object builds the sentence to be interpreted as a syntax tree, initializes the context, and invokes the interpret() operation on the root of the tree. A reference to the context is passed down into the concrete “xxxExpression” interpreter objects in a recursive descent so that they can do their piece of the interpretation work and then propagate the results back up the stack.

In their writeup of the Interpreter design pattern, the GoF state:

Terminal nodes generally don’t store information about their position in the abstract syntax tree. Parent nodes pass them whatever context they need during interpretation. Hence there is a distinction between shared (intrinsic) state and passed-in (extrinsic) state.
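For readers who prefer code to class diagrams, here is a minimal Python sketch of the recursive descent described above. It is not the GoF's own example: the tiny arithmetic language and the class names (`Number`, `Variable`, `Add`, `Multiply`) are my own assumptions, chosen only to show terminal and nonterminal expressions receiving the context from their parents and passing results back up the stack.

```python
from abc import ABC, abstractmethod


class Expression(ABC):
    """Abstract expression: every node in the syntax tree can interpret itself."""

    @abstractmethod
    def interpret(self, context):
        ...


class Number(Expression):
    """Terminal expression: its value is intrinsic state, so the context is ignored."""

    def __init__(self, value):
        self.value = value

    def interpret(self, context):
        return self.value


class Variable(Expression):
    """Terminal expression: looks its value up in the passed-in (extrinsic) context."""

    def __init__(self, name):
        self.name = name

    def interpret(self, context):
        return context[self.name]


class Add(Expression):
    """Nonterminal expression: hands the context down, then combines the results."""

    def __init__(self, left, right):
        self.left = left
        self.right = right

    def interpret(self, context):
        return self.left.interpret(context) + self.right.interpret(context)


class Multiply(Expression):
    """Nonterminal expression, same recursive shape as Add."""

    def __init__(self, left, right):
        self.left = left
        self.right = right

    def interpret(self, context):
        return self.left.interpret(context) * self.right.interpret(context)


# The "client": build the sentence x + 2 * y as a syntax tree, set up the
# context, and invoke interpret() on the root.
tree = Add(Variable("x"), Multiply(Number(2), Variable("y")))
context = {"x": 3, "y": 4}
result = tree.interpret(context)  # 3 + 2 * 4
```

Note that none of the nodes knows where it sits in the tree; each one works purely from its own state plus whatever context its parent hands it.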

Since the classes in the Interpreter design pattern aren’t thinking, feeling, scheming humans concerned about status and looking good, the collection of collaborating classes do their jobs effectively and efficiently, just like mindless machines should. However, if you try to implement the Interpreter pattern on a project team with human “objects”, fuggedabout it. To start with, the “client” object (i.e. the boss) at the top of the recursion sequence won’t know how to create a coherent sentence or context. Even if the boss does do it, he/she may intentionally or unintentionally withhold the context from the recursion chain and invoke the interpret() operation of the first “xxxExpression” object (i.e. worker) with garbage. When the final interpretation of the garbled sentence is returned to the boss, it’s a new form of useless garbage.

On its way down the stack, at any step in the recursive descent, the propagated context can be distorted or trashed on purpose by self-serving intermediate managers and workers. Unlike a software system composed of mindless objects working in lock-step to solve a problem, the chances that the work will be done right, or even defined right, are minuscule in a DYSCO.

Remotivated, At Least For Now

After watching former C++ and CORBA guru Steve Vinoski rave about Erlang in this InfoQ video, I’ve been re-motivated to try and learn a dynamically typed (yikes!), functional programming language. After starting to learn CLisp with the “Land Of Lisp” serving as my training reference, I’ve switched gears. I’ve downloaded the Erlang distribution, I’ve downloaded the Erlang Eclipse plugin “Erlide”, and I’m using “Erlang Programming” as my first training book.

The book’s first chapter describes an impressive list of built-in features that have drawn me to Erlang like a moth to a flame:

- Erlang was developed to solve the “time-to-market” requirements of distributed, fault-tolerant, massively concurrent, soft real-time systems.

- Erlang concurrency is fast and scalable. Its processes are lightweight in that the Erlang virtual machine does not create an OS thread for every created process.

- Erlang processes communicate with each other through message passing.

- Erlang has distribution incorporated into the language’s syntax and semantics, allowing systems to be built with location transparency in mind. The default distribution mode is based on TCP/IP, allowing a node (or Erlang runtime system) on a heterogeneous network to connect to any other node running on any operating system.

- Global variables don’t exist.
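Since I’m not fluent in Erlang yet, here is a rough Python analogy of the message passing bullet above. Treat it as a sketch under heavy assumptions: `echo_worker` and the `"stop"` message are names I made up, and Python threads are OS threads, so this says nothing about Erlang’s lightweight processes or its distribution features. It only shows the style of talking to a worker through a mailbox instead of through shared variables.

```python
import queue
import threading


def echo_worker(mailbox, reply_to):
    """Receive messages from the mailbox until told to stop; reply in upper case."""
    while True:
        message = mailbox.get()       # block until a message arrives
        if message == "stop":
            break
        reply_to.put(message.upper())


mailbox = queue.Queue()               # the worker's inbox
replies = queue.Queue()               # where the worker sends its answers
worker = threading.Thread(target=echo_worker, args=(mailbox, replies))
worker.start()

# The sender never touches the worker's internals; it only sends messages.
mailbox.put("hello")
mailbox.put("world")
mailbox.put("stop")
worker.join()

received = [replies.get(), replies.get()]
```

With a single worker draining a FIFO mailbox, the replies come back in the order the messages were sent.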

As of now, I’m planning to occasionally blog about my Erlang learning experience as I inch forward into the weird and wacky world of functional programming. But since I’m doing this on my own time, I’m a slooow learner, I love working in C++, we don’t use Erlang where I work, and my intrinsic motivation may vanish, I may abandon the effort. If I do decide to “bag it”, be sure to call me a quitter.

Where’s The Bug?

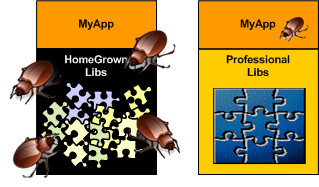

When you’re designing and happily coding away in the application layer and you discover a nasty bug, don’t you hate it when you find that the chances are high that the critter may not be hiding in your code, but in one of the cavernous homegrown libraries that prop your junk up? I hate when that happens because it forces me to do a mental context switch from the value-added application layer down into the support layer(s) – sometimes for days on end (ka-ching, ka-ching; tic-toc, tic-toc).

Compared to writing code on top of an undocumented, wobbly, homegrown BBoM, writing code on top of a professionally built infrastructure with great tutorials and API artifacts is a joy. When you do find a bug in the code base, the chances are astronomically high that it will be in your code and not down in the infrastructure. Unsurprisingly, preferring the professional over the amateur saves time, money, and frustration.

For the same strange reason (hint: ego) that command and control hierarchy is accepted without question as the “it just has to be this way” way of structuring a company for “success”, software developers love to cobble together their own BBoM middleware infrastructure. To reinforce this dysfunctional approach, managers are loath to spend money on battle-tested middleware built by world-class experts in the field. Yes, these are the same managers who’ll spend $100K on a logic analyzer that gets used twice a year by the two hardware designer dudes that cohabitate with the hundreds of software weenies and elite BMs inside the borg. C’est la vie.

Avoiding The Big Ball Of Mud

Like other industries, the software industry is rife with funny and quirky language terms. One of my favorites is “Big Ball of Mud”, or BBoM. A BBoM is a mess of a software system that starts off soft and malleable but turns brittle and unstable over time. Inevitably, it hardens like a ball of mud baking in the sun, ready to crumble and wreak havoc on the stakeholder community when disturbed. D’oh!

“And they looked upon the SW and saw that it was good. But they just had to add one more feature…”

Joseph Yoder, one of the co-creators of “BBoM” along with Brian Foote, recently gave a talk titled “Big Balls of Mud in Agile Development: Can We Avoid Them?”. Out of curiosity and the desire to learn more, I watched a video of the talk at InfoQ. In his talk, Mr. Yoder listed these agile tenets as “possible” BBoM promoters:

- Lack of upfront design (i.e. no BDUF)

- Embrace late changes to requirements

- Continuously evolving architecture

- Piecemeal growth

- Focus on (agile) process instead of architecture

- Working code is the one true measure of success

For big software systems, steadfastly adhering to these process principles can hatch a BBoM just as skillfully as following a sequential and prescriptive waterfall process. It’ll just get you to the state of poverty that always accompanies a BBoM much quicker.

Unlike application layer code, infrastructure code should not be expected to change often. Even small architectural changes can have far reaching negative effects on all the value-added application code that relies on the structure and general functionality provided under the covers. If you look hard at Joe’s list, any one of his bullets can insidiously steer a team off the success profile below – and lead the team straight to BBoM hell.

Happressed

Ray (the “singularity” dude) Kurzweil’s web site has summarized Science magazine’s breakthrough of the year: the world’s first quantum machine. The gizmo is a tiny, but visible to the naked eye, metal “paddle” whose vibrational frequency can be controlled.

No big thing right? Well, here’s what some rocket scientists from UC Santa Barbara achieved:

First, they cooled the paddle until it reached its “ground state,” or the lowest energy state permitted by the laws of quantum mechanics (a goal long-sought by physicists). Then they raised the widget’s energy by a single quantum to produce a purely quantum-mechanical state of motion. They even managed to put the gadget in both states at once, so that it literally vibrated a little and a lot at the same time—a bizarre phenomenon allowed by the weird rules of quantum mechanics.

Wild and exciting stuff, no? A macro object composed of a collection of atoms (not just some singular, invisible, sub-atomic particle) operating in two supposedly mutually exclusive states at once. Just think of any point of reference on the paddle. The scientists proved (<- this is a key word as you’ll see below) that they could force it to appear in two places at once. It’s sort of like being both ecstatically happy and depressed at the same time?

When I read about this breakthrough, I frantically searched the web for a video of the event. Hell, I want to actually see and experience what it looks like. Sadly, I was disappointed. I found this clip from Gizmodo that explains the absence of any visual evidence:

“It’s important to realize that they didn’t actually observe it in a superposition. All they said was that the paddle is large enough that it could be observed by the naked eye, but not while it is in a superposition state.”

D’oh! Upon reflection, this makes sense to me based on my layman’s knowledge of quantum physics. Prior to observation by, uh, an observer, so-called reality exists as a superposition of an infinite set of continuous matter waves that can be modeled by Erwin Schrödinger’s unassailable wave equation. At the moment of observation, poof!, an observer “collapses” the wavefunction into a singularity – in effect creating his/her own reality. The mysterious question to me is: “Why do most people seem to see essentially the same reality when they observe an object at the same point in space and time?”. After all, what are the chances that my collapsed wavefunction will coincide with yours?

The Curiously Recurring Scramble Pattern

It’s funny to watch software development teams hack away for months building a just barely working patch-quilt monster that they can hardly understand themselves – and then scramble at the last minute generating design documents for some big upcoming management design review or “independent” auditor dog and pony show (woof woof!).

In this Curiously Recurring Scramble Pattern (CRSP), a successful attempt to avoid the labor of thinking is made as developers frantically sprinkle Doxygen annotations throughout the code and/or load the beast into a reverse engineering tool that mechanistically generates UML diagrams to model the as-built mess. It goes without saying that the tool’s “verbose” mode is selected in order to obscure meaning and promote the illusion of high falutin’ sophistication. Of course, all of this is a waste of time (= $$$$) because the dudes doing the reviewing (self-important managers and bureaucratic auditors) don’t want to understand a thing.

When the review or audit does take place, a couple of cream puff questions and comments are bandied about, check boxes are ticked off, a couple of superficial “action items” are generated, and the whole lovefest is rubber-stamped as a great success. Whoo Hoo, we rock!

Without a doubt, you and I have never been culturally forced to participate in an instantiation of the CRSP. We are above that nonsense, right? We do something like this.

“A meeting is a refuge from the dreariness of labor and the loneliness of thought.” – Bernard Baruch