Archive

Formal Review Chain

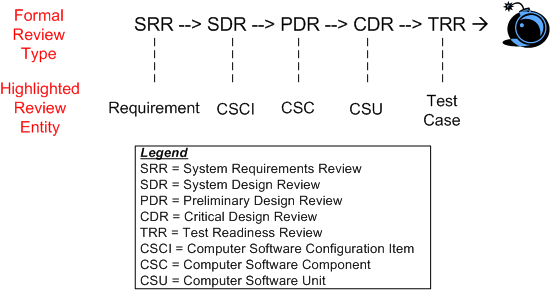

On big, multi-year, multi-million-dollar software development projects, a series of “high-ceremony” formal reviews is almost always required by contract. The figure below shows the typical, sequential, waterfall review lineup for a behemoth project.

The entity below each high ceremony review milestone is “supposedly” the big star of the review. For example, at SDR, the structure and behavior of the system in terms of the set of CSCIs that comprise the “whole” are “supposedly” reviewed and approved by the attendees (most of whom are there for R&R and social schmoozing). It’s been empirically proven that the ratio of those “involved” to those “responsible” at reviews in big projects is typically 5 to 1 and the ratio of those who understand the technical content to those who don’t is 1 to 10. Hasn’t that been the case in your experience?

The figure below shows a more focused view of the growth in system artifacts as the project supposedly progresses forward in the fantasy world of behemoth waterfall disasters, uh, I mean projects. Of course, in psychedelic waterfall-land, the artifacts of any given stage are rigorously traceable back to those that were “designed” in the previous stage. Hasn’t that been the case in your experience?

In big waterfall projects that are planned and executed according to the standard waterfall framework outlined in this post, the outcome of each dog-and-pony review is always deemed a great success by both the contractee and contractor. Backs are patted, high fives are exchanged, and congratulatory e-mails are broadcast across the land. Hasn’t that been the case in your experience?

No Man’s Land

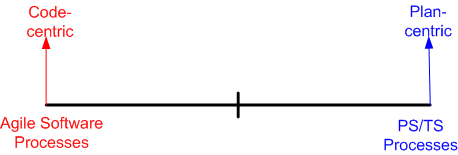

Having just read my umpteenth Watts Humphrey book on his PSP/TSP (Personal Software Process/Team Software Process) methodology, I find myself struggling, yet again, to reconcile his right wing thinking with the left wing “Agile” software methodologies that have sprouted up over the last 10 years as a backlash against waterfall-like methodologies. This diagram models the situational tension in my hollow head:

It’s not hard to deduce that prescriptive, plan-centric processes are favored by managers (at least those who understand the business of software) and demonstrative, code-centric processes are favored by developers. Duh!

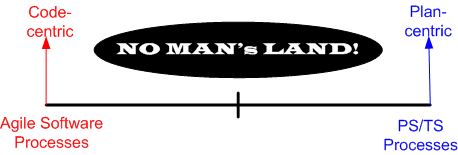

Advocates of both the right and the left have documented ample evidence that their approach is successful, but neither side (unsurprisingly) willingly publicizes its failures much. When the subject of failure is surfaced, both sides always attribute messes to bad “implementations” – which of course is true. IMHO, given a crack team of developers, project managers, testers, and financial sponsors, ANY disciplined methodology can be successful – those on the left, those on the right, or those toiling in NO MAN’S LAND.

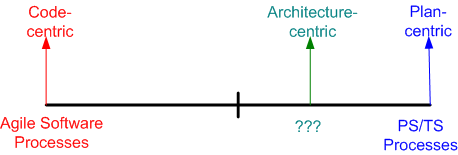

I’ve been the anointed software “lead” on two software teams of fewer than 10 people in the past. Both as a lead and an induhvidual contributor, the approach I’ve always intuitively taken toward software development can be classified as falling into “no man’s land”. It’s basically an informal but well-known Brooksian, architecture-centric strategy that I’d characterize as slightly right-leaning:

As far as I know, there’s no funky, consensus-backed, label like “semi-agile” or “lean planning” to capture the essence of architecture-centric development. There certainly aren’t any “certification” training courses or famous promoters of the approach. This book, which I discovered and read after I’d been designing/developing in this mundane way for years, sort of covers the process that I repeatedly use.

In my tortured mind (and you definitely don’t want to go there!), architecture-centricity simply means “centered on high level blueprints“. Early on, before the horses are let out of the barn and massive, fragmented, project effort starts churning under continuous pressure from management for “status“, a frantic iterative/sketching/bounding/synthesis activity takes place. With a visible “rev X” architecture in hand (one that enumerates the structural elements, their connectivity, and the macro behavior that the system must manifest) for guidance, people can then be assigned to the sparsely defined, but bounded, system elements so that they can create reasonable “Rev 0” estimates and plans. The keys are that only one or two or three people record the lay of the land. Subsequently, the element assignees produce their own “Rev 0” estimates – prior to igniting the frenetic project activity that is sure to follow.

In a nutshell, what I just described is the front-end of the architecture-centric approach as I practice it; either overtly or covertly. The subsequent construction activities that take place after a reasonably solid, lightweight, “rev X”, architecture (or equivalent design artifact for smaller scale projects) has been recorded and disseminated are just details. Of course, I’m just joking in that last sentence, but unless the macro front end is secured and repeatedly used as the “go to bible” shortly after T==start, all is lost – regardless of the micro-detailed practices (TDD, automated unit tests, continuous integration, continuous delivery, yada yada yada) that will follow. But hey, the content of this post is just Bulldozer00’s uncredentialed and non-expert opinion, so don’t believe a word of it.

Mangled Mess

“Too much documentation can be just as bad as no documentation” – Unknown

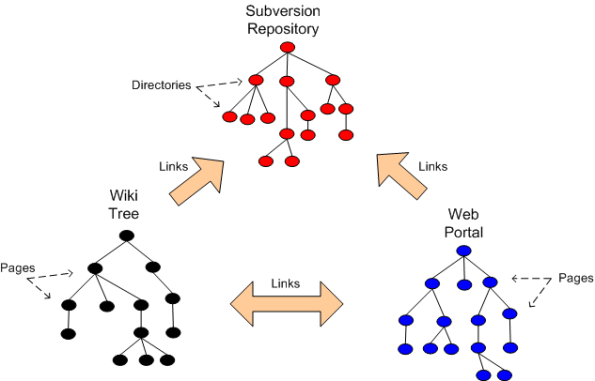

Assume that a big software project has chosen to use the three types of databases below to store and maintain technical information about a product under development: planning artifacts, trade studies, requirements artifacts, design artifacts, test cases & results, source code, installation instructions, developer guidance, user guidance.

Unless one is careful in defining and disseminating to the team the “what goes where” criteria, a fragmented and ambiguously duplicative mass of confusion can emerge quicker than you can say “WTF?”.

Which One, And When?

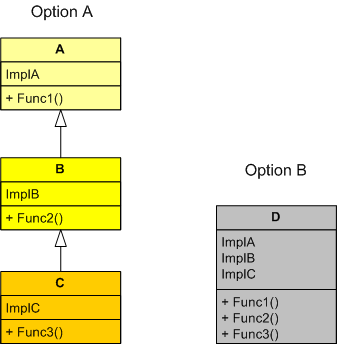

Option A and Option B in the UML figure below show two different ways of presenting the same 3-function interface to “other” code users. Under which conditions would you choose one design over the other?

Because I prefer simplicity over complexity and local dependency over distant dependency, I’d prefer option B over option A if I was reasonably sure that classes A and B wouldn’t be useful in another inheritance tree or useful as leaf classes. Even if I chose wrongly, because all the functionality is encapsulated within one class in option B, it wouldn’t be a huge risk for a user to extract out B or C class sub-functionality from D and customize it for his/her use by placing it in his/her own separate E class. In this case, no other existing clients of class D would be affected, but the trade off is the introduction of duplicate code into the code base. If I chose the option A inheritance tree, this wouldn’t be the case. In option A, if a user was allowed to directly change A and/or B in-situ, then the duplicate code “wart” wouldn’t manifest, but the risk of breaking the existing code of other users of the A, B, and/or C classes would be high compared to the alternative. Do you see any holes in this decision logic?
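Since the UML figure isn't reproduced here, the following is only a minimal sketch of the trade-off, under an assumed shape for each option: option A as a chain of classes A, B and C that each contribute one function, and option B as a single class D holding all three. The function names `f1`, `f2`, `f3` and the monkey-patch that stands in for an "in-situ" edit are my own illustrative inventions, not taken from the figure.

```python
# Option A (assumed shape): a three-level inheritance chain; clients use C.
class A:
    def f1(self):
        return "f1"


class B(A):
    def f2(self):
        return "f2"


class C(B):
    def f3(self):
        return "f3"


# Option B (assumed shape): one class carries all three functions.
class D:
    def f1(self):
        return "f1"

    def f2(self):
        return "f2"

    def f3(self):
        return "f3"


# Customizing under option B: copy the piece you need into your own class E.
# D and its existing clients are untouched; the price is duplicated code.
class E:
    def f2(self):
        return "custom f2"


# Customizing under option A by editing a base class in place.
# Re-binding A.f1 stands in for that edit: no duplication, but every
# user of A, B or C now sees the changed behavior.
def patched_f1(self):
    return "patched f1"


A.f1 = patched_f1

option_a_after_edit = C().f1()   # inherited from the edited A
option_b_untouched = D().f2()    # D is unaffected by E
user_customization = E().f2()
```

The sketch only illustrates the ripple effect the paragraph describes; whether it matches the real figure is a guess.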

Increased Cost And Increased Time

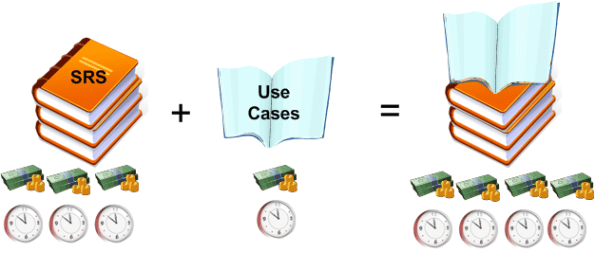

Before the invention of the formal “Use Case”, and the less formal “User Story”, the classic way of integrating, structuring, and recording requirements was via the super-formal Software Requirements Specification (SRS). Just as “agile” was a backlash against “waterfall”, the lightweight “Use Case” was a major diss against the heavyweight “SRS”.

However, instead of replacing SRSs with Use Cases, I surmise that many companies have shot themselves in the foot by requiring the expensive and time consuming generation and maintenance of both types of artifacts. Instead of decreasing the cost/time and increasing the quality of the requirements engineering process, they most likely have done the opposite – losing ground to smarter competitors who do one or the other effectively. D’oh! Is your company one of them?

No Obvious Deficiencies

While perusing my favorite quotes page for blog post seedlings, this one spoke to me:

“There are two ways of constructing a software design: One way is to make it so simple that there are obviously no deficiencies, and the other way is to make it so complicated that there are no obvious deficiencies. The first method is far more difficult.” – C.A.R. Hoare.

Being the mangler, distorter, and exaggerator that I am, here’s my version:

“There are two ways of camouflaging crappy work: One way is to present it so simply that there are no obvious deficiencies, and the other way is to make the presentation so complicated that there are no obvious deficiencies. Both ways are equally effective.” – C.A.R. Hoare + Bulldozer00.

Ambivalence

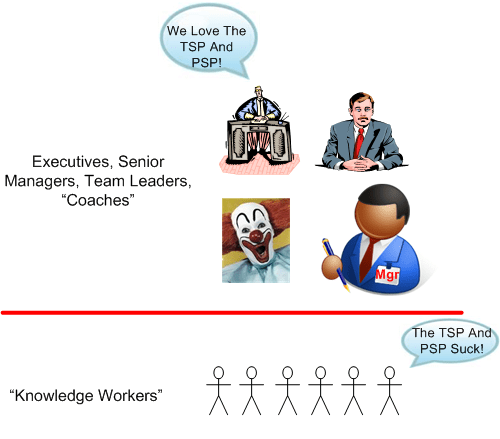

Prominent and presidentially decorated software process guru Watts Humphrey passed away last year. Over the years, I’ve read a lot of his work and I’ve always been ambivalent towards his ideas and methods. On the one hand, I think he’s got it right when he says Peter-Drucker-derived things like:

Since managers don’t and can’t know what knowledge workers do, they can’t manage knowledge workers. Thus, knowledge workers must manage themselves.

On the other hand, I’m turned off when he starts promoting his arcane and overly-pedantic TSP–PSP methodology. To me, his heavy, right wing measurement and prescriptive planning methods are an accountant’s dream and an undue burden on left leaning software development teams. Also, in at least his final two books, he targets his expert TSP-PSP way at “executives, senior managers, coaches and team leaders” while implying that knowledge workers are “them” in the familiar, dysfunctional “us vs. them” binary mindset (that I suffer from too).

I really want to like Watts and his CMMI, TSP-PSP babies, but I just can’t – at least at the moment. How about you? It would be kool if I received a bunch of answers from a mixture of managers and “knowledge workers”. However, since this blog is read by about 10 people and I have no idea whether they’re knowledge workers or managers or if they’ve even heard of TSP-PSP or Watts Humphrey, I most likely won’t get any. 🙂

Defect Diaries

Once again, I’ve stolen a graphic from Watts Humphrey and James Over’s book, “Leadership, Teamwork, and Trust“:

According to this performance chart, the best software developers (those in the first quartile of the distribution) “injected” on average fewer than 50 bugs (Watts calls them defects) per 1000 lines of code over the entire development effort and fewer than 10 per 1000 lines of code during testing. Assuming that the numbers objectively and fairly represent “reality”, the difference in quality between the top 25% and bottom 25% of this specific developer sample is approximately a factor of 250/50 = 5.

What I’d like to know, and Humphrey/Over don’t explicitly say in the book (unless I missed it), is how these numbers were measured and reported, and how disruptive the measuring was to the development process and team. I’d also like to know what the results were used for; what decisions were made by management based on the data. Let’s speculate…

I picture developers working away, designing/coding/compiling/testing against some requirements and architecture artifacts that they may or may not have had a hand in producing. Upon encountering each compiler, linker and runtime error, each developer logs the nature of the error in his/her personal “demerit diary” and fixes it. During independent testing: the testers log and report each error they find; the development team localizes the bug to a module; and the specific developer who injected the defect fixes it and records it in his/her demerit diary.
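To make the speculation concrete, here is a minimal sketch of what such a diary might boil down to. Everything in it is hypothetical: the `DefectDiary` class, the phase names, and the sample counts are invented for illustration and are not taken from Humphrey and Over's book or from any PSP/TSP tooling.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class DefectDiary:
    """Hypothetical per-developer log of defects, keyed by the phase that caught them."""
    developer: str
    lines_written: int
    defects: Counter = field(default_factory=Counter)

    def log(self, phase, count=1):
        # Record defects found in a phase such as "compile" or "independent_test".
        self.defects[phase] += count

    def per_kloc(self, phase=None):
        # Defects per 1000 lines of code, for one phase or for all phases combined.
        if self.lines_written <= 0:
            raise ValueError("lines_written must be positive")
        total = self.defects[phase] if phase else sum(self.defects.values())
        return 1000 * total / self.lines_written


# Invented sample numbers, chosen only to show the arithmetic.
diary = DefectDiary("dev_a", lines_written=2000)
diary.log("compile", 30)
diary.log("unit_test", 50)
diary.log("independent_test", 10)

overall_rate = diary.per_kloc()                    # 90 defects over 2 KLOC
test_rate = diary.per_kloc("independent_test")     # 10 defects over 2 KLOC
```

Even in this toy form, the numbers are only as honest as the person typing them in, which is the point of the question that follows.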

What’s wrong with this picture? Could it be that developers wouldn’t be the slightest bit motivated to measure and record their “bug injection rates” in personal demerit diaries – and then top it off by periodically submitting their report cards lovingly to their trustworthy management “superiors”? Don’t laugh, because there’s quite a body of evidence that shows that Mr. Humphrey’s PSP/TSP process, which requires institutionalization of this “defect injection rate recording and reporting” practice, is in operation at several (many?) financially successful institutions. If you’re currently working in one of these PSP/TSP institutions, please share your experience with me. I’m curious to hear personal stories directly from PSP/TSP developers – not just from Mr. Humphrey and the CMU SEI.

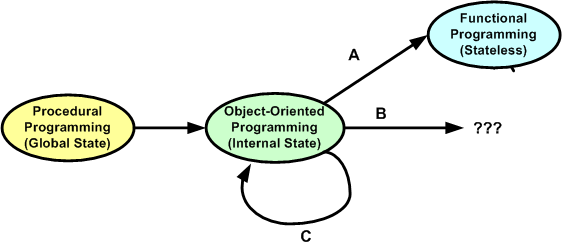

Procedural, Object-Oriented, Functional

In this interesting blog post, Dr Dobbs – Whither F#?, Andrew Binstock explores why he thinks “functional” programming hasn’t displaced “object-oriented” programming in the same way that object-oriented programming started slowly displacing “procedural” programming in the nineties. In a nutshell, Andrew thinks that the Return On Investment (ROI) may not be worth the climb:

“F# intrigued a few programmers who kicked the tires and then went back to their regular work. This is rather like what Haskell did a year earlier, when it was the dernier cri on Reddit and other programming community boards before sinking back into its previous status as an unusual curio. The year before that, Erlang underwent much the same cycle.”

“functional programming is just too much of a divergence from regular programming. ”

“it’s the lack of demonstrable benefit for business solutions — which is where most of us live and work. Erlang, which is probably the closest to showing business benefits across a wide swath of business domains, is still a mostly server-side solution. And most server-side developers know and understand the problems they face.”

So, what do you think? What will eventually usurp the object-oriented way of thinking for programmers and designers in the future? The universe is constantly in flux and nothing stays the same, so the status quo loop modeled by option C below will be broken sometime, somewhere, and somehow. No?

Movin’ On Up

For some unknown reason, I recently found myself reflecting back on how I’ve progressed as a software engineer over the years. After being semi-patient and allowing the fragmented thoughts to congeal, I neatly summed up the quagmire thus:

- Single Node – Single Process – Single Threaded (SST) programming

- Single Node – Single Process – Multi-Threaded (SSM) programming

- Single Node – Multi-Process – Multi-Threaded (SMM) programming

- Multi-Node – Multi-Process – Multi-Threaded (MMM) programming
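For a feel of the first rung on that ladder, here is a minimal sketch of the same job done SST-style and then SSM-style. The `checksum` work function and the sample chunks are hypothetical stand-ins of my own; the SMM and MMM rungs (multiple processes, then multiple machines) are not shown.

```python
from concurrent.futures import ThreadPoolExecutor


def checksum(chunk):
    """Hypothetical unit of work: sum the byte values of one chunk."""
    return sum(chunk)


def run_sst(chunks):
    # SST: one process, one thread, chunks handled one after another.
    return [checksum(c) for c in chunks]


def run_ssm(chunks, workers=4):
    # SSM: one process, several threads; map() returns results in input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(checksum, chunks))


chunks = [b"abc", b"de", b""]
sst_result = run_sst(chunks)
ssm_result = run_ssm(chunks)
```

Both functions return the same answers; what changes from rung to rung is how much coordination the programmer has to reason about.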

It’s interesting to note that my progression “up the stack” of abstraction and complexity did not come about from the execution of some pre-planned, grand master strategy. I feel that I was “tugged” by some unknown force into pursuing the knowledge and skills that have gotten me to a semi-proficient state of expertise in the design and programming of MMM systems.

Being a graphical type of dude, here’s a pictorial representation of how I “moved on up“.

How about you? Do you have a master plan for movin’ on up? Wherever you are in relation to this concocted stack, are you content to stay there – in the womb so-to-speak? If you want to adopt something like it as a roadmap for professional development, are you currently immersed in the type of environment that would allow you to do so?