The Loop Of Woe

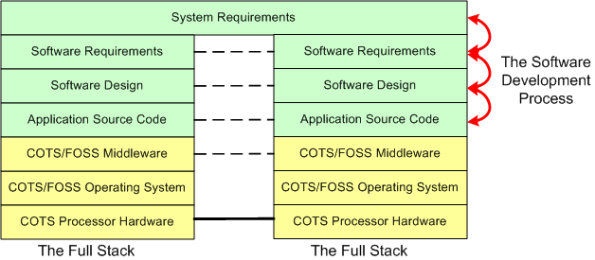

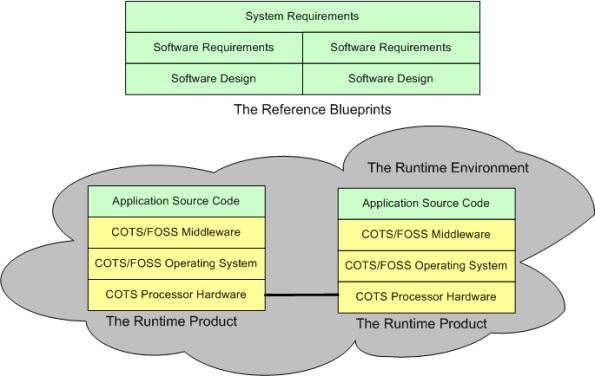

When a “side view” of a distributed software architecture is communicated, it’s sometimes presented as a specific instantiation of something like this four-layer drawing, where COTS = Commercial Off The Shelf and FOSS = Free Open Source Software:

I think that neglecting the artifacts that capture the thinking and rationale in the more abstract higher layers of the stack is a recipe for high downstream maintenance costs, competitive disadvantage, and all around stakeholder damage. For “big” systems, trying to find/fix bugs, or determining where new feature source code must be inserted among hundreds of thousands of lines of code, is a huge cost sink when a coherent full stack of artifacts is not available to steer the hunt. The artifacts don’t have to be high ceremony, heavyweight boat anchors; they just have to be useful. Simple, but not simplistic.

For safety-critical systems, besides being a boon to maintenance, another increasingly important reason for treating the upper layers with respect is certification. All certification agencies require an auditable and scrutably connected path from requirements down through the source code. The classic end run around the certification obstacle when the content of the upper layers is non-existent or resembles Swiss cheese is to get the system classified as “advisory”.

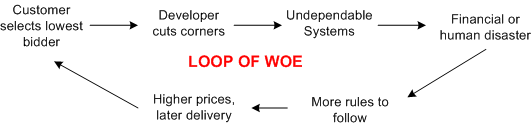

Frenetic, clock-watching managers and illiterate software developers are the obvious culprits of upper layer neglect but, ironically, the biggest contributors to undependable and uncertifiable systems are customers themselves. By consistently selecting the lowest bidder during acquisition, customers unconsciously encourage corner-cutting and apathy towards safety.

Got any ideas for breaking the loop of woe? I wish I did, but I don’t.

TAO (Pronounced “DOW”, As In The Dow Jones Industrials)

Relax… Sit back and take three deep breaths, concentrating on the miraculous transitions between your in-breath and your out-breath along with the transitions between your out-breath and your in-breath (what force causes that to happen and keep you alive?). Next, please try to make sense of the following Newtonian cause and effect chain of events:

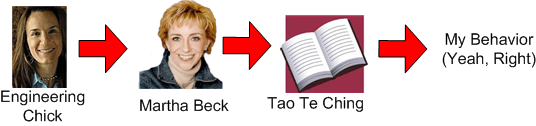

- I discovered Martha Beck through my colleague, the engineering chick.

- Through Martha Beck, I discovered the acronym TAO = Transparent, Authentic, Open.

- For many years, I’ve loved the eternal message conveyed in the 2600 year old Tao Te Ching.

Because of all of the above, I’ve decided to make a (half-assed, of course) commitment to try to live and behave in accordance with the spirit of Ms. Beck’s TAO. Sadly, it may lead to a life of loneliness and living on the streets begging for pennies, but I’m gonna give it a go.

On second thought, fuggedaboud this post cuz I’m too chicken to do it.

Ya Can’t Put The Cat Back In The Bag

Check out this snippet from “Can Larger Companies Still be Passionate and Quirky?”:

Writing for The New York Times, Adam Bryant conducted an interview with Tony Hsieh, the chief executive of Zappos.com. Part of the interview that intrigued me was Hsieh’s explanation of why he and his roommate sold their company LinkExchange to Microsoft in 1998.

Part of it was the money, he admits. But, mostly, it was because the passion and excitement that permeated the company in the beginning was gone, and he’d grown to dislike its culture:

“When it was starting out, when it was just 5 or 10 of us, it was like your typical dot-com. We were all really excited, working around the clock, sleeping under our desks, had no idea what day of the week it was. But we didn’t know any better and didn’t pay attention to company culture. By the time we got to 100 people, even though we hired people with the right skill sets and experiences, I just dreaded getting out of bed in the morning and was hitting that snooze button over and over again.”

To avoid this happening with Zappos, Hsieh says he formalized the definition of the Zappos culture into 10 core values; core values that they would be willing to hire and fire people based on. Read the interview with Hsieh for details on how they went about this.

With LinkExchange, Tony was wise enough to know that it was fruitless to try and restore the company’s original esprit de corps culture. Once the cat gets out of the bag, it’s pretty much a done deal that you won’t get it back in.

What’s mind boggling to me is that leaders of startups that grow and “mature” over time don’t even have a clue that the vibrant culture of community/camaraderie that they originally created has petered out. They get disconnected and buffered from the day to day culture by adding layer upon layer of pyramidal stratification and they delude themselves into thinking the culture has been maintained “for free” over the duration. Those dudes deserve what they get: a transformation from a communal meritocracy into a corpo mediocracy just like the rest of the moo-herd.

Background Daemons

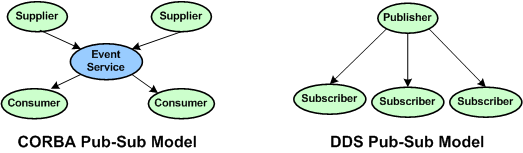

Assume that you have to build a distributed real-time system where your continuously communicating application components must run 24×7 on multiple processor nodes netted together over a local area network. In order to save development time and shield your programmers from the arcane coding details of setting up and monitoring many inter-component communication channels, you decide to investigate pre-written communication packages for inclusion into your product. After all, why would you want your programmers wasting company dollars developing non-application layer software that experts with decades of battle-hardened experience have already created?

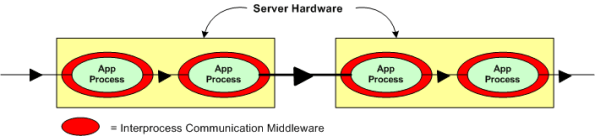

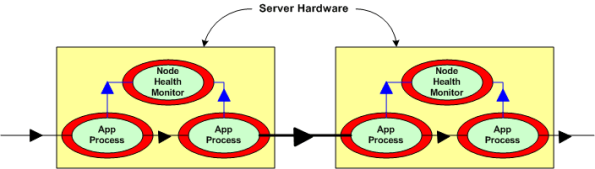

Now, assume that the figure below represents a two node portion of your many-node product where a distributed architecture middleware package has been linked (statically or dynamically) into each of your application components. By distributed architecture, I mean that the middleware doesn’t require any single point-of-failure daemons running in the background on each node.

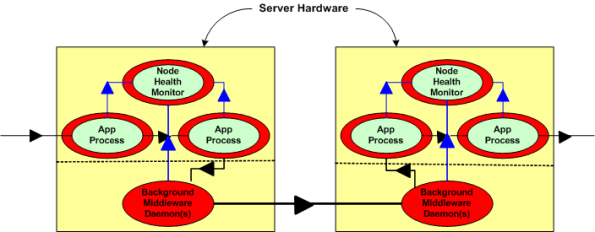

Next, assume that to increase reliability, your system design requires an application layer health monitor component running on each node as in the figure below. Since there are no daemons in the middleware architecture that can crash a node even when all the application components on that node are running flawlessly, the overall system architecture is more reliable than a daemon-based one; dontcha think? In both distributed and daemon-based architectures, a single application process crash may or may not bring down the system; the effect of failure is application-specific and not related to the middleware architecture.
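To make the per-node health monitor a little more concrete, here’s a minimal sketch of one way it could work, assuming each application component periodically reports a heartbeat. The class name, the timeout value, and the component names are all hypothetical; nothing here comes from a real middleware package.

```python
import time
from typing import Callable, Dict, List


class NodeHealthMonitor:
    """Tracks the last heartbeat seen from each application component on a node."""

    def __init__(self, timeout_s: float, clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s  # hypothetical silence threshold, in seconds
        self.clock = clock          # injectable so the logic can be exercised without sleeping
        self.last_seen: Dict[str, float] = {}

    def heartbeat(self, component: str) -> None:
        """Record that a component reported in just now."""
        self.last_seen[component] = self.clock()

    def unhealthy(self) -> List[str]:
        """Return the components whose last heartbeat is older than the timeout."""
        now = self.clock()
        return sorted(
            name for name, seen in self.last_seen.items()
            if now - seen > self.timeout_s
        )


# Illustrative use with made-up component names.
monitor = NodeHealthMonitor(timeout_s=2.0)
monitor.heartbeat("tracker")
monitor.heartbeat("display")
print(monitor.unhealthy())
```

What the monitor does when a component goes quiet (restart it, fail over, raise an alarm) is application-specific, so the sketch stops at detection.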

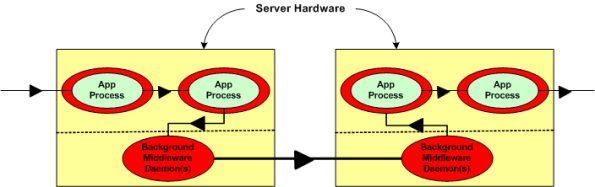

The two figures below represent a daemon-based alternative to the truly distributed design previously discussed. Note the added latency in the communication path between nodes introduced by the required insertion of two daemons between the application layer communication endpoints. Also note in the second figure that each “Node Health Monitor” now has to include “daemon aware” functionality that monitors daemon state in addition to the co-resident application components. All other things being equal (which they rarely are), which architecture would you choose for a system with high availability and low latency requirements? Can you see any benefits of choosing the daemon-based middleware package over a truly distributed alternative?
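For a rough feel of the tradeoff, here’s a back-of-the-envelope sketch. It assumes failures are independent, so the availability of a communication path is the product of the availabilities of every part that must be up, and it treats one-way latency as the sum of the hops. Every number is made up purely for illustration.

```python
from math import prod
from typing import Sequence


def series_availability(parts: Sequence[float]) -> float:
    """Availability of a chain in which every part must be up (independent failures assumed)."""
    return prod(parts)


def path_latency_us(hop_latencies_us: Sequence[float]) -> float:
    """One-way latency as the sum of the per-hop latencies."""
    return sum(hop_latencies_us)


# Hypothetical numbers, chosen only for illustration.
APP = 0.999      # availability of one application component
DAEMON = 0.999   # availability of one middleware daemon
NET_US = 100.0   # network hop latency, in microseconds
IPC_US = 20.0    # local app <-> daemon hop latency, in microseconds

# Truly distributed: app -> network -> app
distributed_avail = series_availability([APP, APP])
distributed_latency = path_latency_us([NET_US])

# Daemon-based: app -> daemon -> network -> daemon -> app
daemon_avail = series_availability([APP, DAEMON, DAEMON, APP])
daemon_latency = path_latency_us([IPC_US, NET_US, IPC_US])

print(f"distributed:  availability={distributed_avail:.6f}, latency={distributed_latency:.0f} us")
print(f"daemon-based: availability={daemon_avail:.6f}, latency={daemon_latency:.0f} us")
```

Whatever numbers you plug in, each extra must-be-up part multiplies availability by a factor below one and each extra hop adds latency. The model is crude, but it points the same way as the figures.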

The most reliable part in a system is the one that is not there – because it isn’t needed.

Dependable Mission Critical Software

In this post, Embedded.com – Software for dependable systems, Jack Ganssle introduced me to the book: Software for Dependable Systems–Sufficient Evidence?. It was written by the “Committee on Certifiably Dependable Software Systems” and it’s available for free PDF download.

Despite being written by a committee (blech!), and despite the bland title (yawn), I agree with Jack that it’s a riveting geek read. It’s understandable to field-hardened practitioners and it’s filled with streetwise wisdom about building dependability into large, mission critical software systems that can kill people or cause massive financial loss if they collapse under stress. Essentially, it says that all the bloated, costly, high-falutin safety and security and certification processes in existence today don’t guarantee squat – except jobs for self-important bureaucrats and wanna-be-engineers. They don’t say it THAT way of course, but that’s my warped and unprofessional interpretation of their message.

Here are a few gems from the 149-page PDF:

As is well known to software engineers, by far the largest class of problems arises from errors made in eliciting, recording, and analysis of requirements.

Undependable software suffers from an absence of a coherent and well articulated conceptual model.

Today’s certification regimes and consensus standards have a mixed record. Some are largely ineffective, and some are counterproductive. (<- This one is mind blowing to me)

The goal of certifiably dependable software cannot be achieved by mandating particular processes and approaches regardless of their effectiveness in certain situations.

In addition to lampooning the “way things are currently done” for certifying software-centric dependability, the committee dudes actually make some recommendations for improving the so-called state of the art. Stunningly, they don’t prescribe yet another costly, heavyweight process of dubious effectiveness. They recommend any process composed of best practices, as long as there is scrutable connectivity from phase to phase and from start to end to “preserve the chain of evidence” for a claim of dependability that vendors of such software should be required to make. Where there is a gap between links in the chain of scrutability, they recommend rigorous analysis to fill it.
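Here’s one way to picture “scrutable connectivity” in code. This is my own minimal sketch, not anything the committee prescribes: it assumes artifacts are tagged with a phase, that trace links point downstream, and that a requirement’s chain counts as intact if some path of links reaches a test artifact. The phase names and artifact IDs are hypothetical, and the sketch checks reachability only, not strict phase-by-phase ordering.

```python
from typing import Dict, List, Set

# Hypothetical phase order; the report does not mandate these particular phases.
PHASES = ["requirement", "design", "code", "test"]


def broken_chains(
    artifacts: Dict[str, str],     # artifact id -> phase name
    links: Dict[str, List[str]],   # artifact id -> ids it traces down to
) -> List[str]:
    """Return requirement ids with no unbroken path to an artifact in the last phase."""
    last = PHASES[-1]

    def reaches_end(node: str, seen: Set[str]) -> bool:
        # Depth-first walk along the trace links, skipping nodes already visited.
        if artifacts.get(node) == last:
            return True
        seen.add(node)
        return any(
            reaches_end(child, seen)
            for child in links.get(node, [])
            if child not in seen
        )

    return sorted(
        art_id for art_id, phase in artifacts.items()
        if phase == PHASES[0] and not reaches_end(art_id, set())
    )


# Made-up example: REQ-2 is designed but never coded or tested.
artifacts = {
    "REQ-1": "requirement", "REQ-2": "requirement",
    "DES-1": "design", "DES-2": "design",
    "SRC-1": "code", "TST-1": "test",
}
links = {
    "REQ-1": ["DES-1"], "DES-1": ["SRC-1"], "SRC-1": ["TST-1"],
    "REQ-2": ["DES-2"],
}
print(broken_chains(artifacts, links))
```

In this example the check flags REQ-2, which is the kind of gap the committee would want filled with rigorous analysis.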

To make the transition to the new mindset of scrutable connectivity, they say that these skills, which are rare today and difficult to acquire, will be required in the future:

- True systems thinking (not just specialized, localized, algorithmic thinking that’s erroneously praised as systems thinking by corpocracies) of the properties of the system as a whole and the interactions among its components.

- The art of simplifying complex concepts, which is difficult to appreciate since the awareness of the need for simplification usually only comes (if it DOES come at all) with bitter experience and the humility gained from years of practice.

Drum roll please, because my absolute favorite entry in the book, which tugs at my heart, is as follows:

To achieve high levels of dependability in the foreseeable future, striving for simplicity is likely to be by far the most cost-effective of all interventions. Simplicity is not easy or cheap but its rewards far outweigh its costs.

That passage resonates deeply with me because, even though I’m not good at it, that’s what my primary professional goal has been for 20+ years. Clueless companies that put complexifying and obfuscating experts that nobody can understand up on a pedestal deserve what they get:

- incomprehensible, unmaintainable, and undependable products

- a disconnected and apathetic workforce

- low (if any) profit margins.

As my Irish friend would say, they are all fecked up. They’re innocent and ignorant, but still fecked up.

Two Hundred And Fifty To Zero

Whoo Hoo! Since March of 2009, I’ve posted just over 250 BS blog entries. However, my labor of love has led to zero supplemental income. As they say (whoever the ubiquitous they are), “don’t quit your day job”. That’s OK, because I love my day job. Since the vast majority of the people that I work with, and for, are good and decent people that give their best every day, I’m a happy camper.

My Velocity

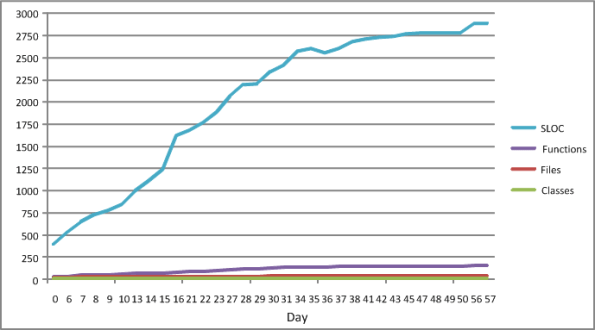

The figure below shows some source code level metrics that I collected on my last C++ programming project. I only collected them because the process was low ceremony, simple, and unobtrusive. I ran the source code tree through an easy to use metrics tool on a daily basis. The plots in the figure show the sequential growth in:

- The number of Source Lines Of Code (SLOC)

- The number of classes

- The number of class methods (functions)

- The number of source code files

So Whoopee. I kept track of metrics during the 60 day construction phase of this project. The question is: “How can a graph like this help me improve my personal software development process?”.

The slope of the SLOC curve, which measured my velocity throughout the duration, doesn’t tell me anything my intuition can’t deduce. For the first 30 days, my velocity was relatively constant as I coded, unit tested, and integrated my way toward the finished program. Whoopee. During the last 30 days, my velocity essentially went to zero as I ran end-to-end system tests (which were designed and documented before the construction phase, BTW) and refactored my way to the end game. Whoopee. Did I need a plot to tell me this?
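For what it’s worth, turning daily SLOC samples into a velocity number takes only a few lines. The sketch below assumes one SLOC count per day, and the sample values are invented rather than taken from my project; the function names are my own.

```python
from typing import List, Sequence


def daily_velocity(sloc: Sequence[int]) -> List[int]:
    """SLOC added (or removed) between consecutive daily samples."""
    return [later - earlier for earlier, later in zip(sloc, sloc[1:])]


def mean_velocity(sloc: Sequence[int]) -> float:
    """Average SLOC/day over the sampled period, i.e. the overall slope of the curve."""
    if len(sloc) < 2:
        raise ValueError("need at least two daily samples")
    return (sloc[-1] - sloc[0]) / (len(sloc) - 1)


# Invented samples: steady growth, then a plateau during system test and refactoring.
samples = [0, 200, 400, 600, 600, 610]
print(daily_velocity(samples))
print(mean_velocity(samples[:4]))   # construction stretch
print(mean_velocity(samples[3:]))   # test-and-refactor stretch
```

Splitting the series at the point where the curve flattens gives one slope per stretch, which is all the plot really shows.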

I’ll assert that the pattern in the plot will be unspectacularly similar for each project I undertake in the future. Depending on the nature/complexity/size of the application functionality that will need to be implemented, only the “tilt” and the time length will be different. Nevertheless, I can foresee a historical collection of these graphs being used to produce better future cost estimates, but not being used much to help me improve my personal “process”.

What’s not represented in the graph is a metric that captures the first 60 days of problem analysis and high level design effort that I did during the front end. OMG! Did I use the dreaded waterfall methodology? Shame on me.

iSpeed OPOS

A couple of years ago, I designed a “big” system development process blandly called MPDP2 = Modified Product Development Process version 2. It’s version 2 because I screwed up version 1 badly. Privately, I named it iSpeed to signify both quality (the Apple-esque “i”) and speed but didn’t promote it as such because it didn’t sound nerdy enough. Plus, I was too chicken to introduce the moniker into a conservative engineering culture that innocently but surely suppresses individuality.

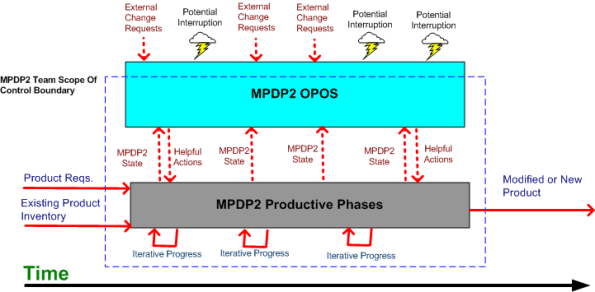

One of the MPDP2 activities, which stretches across and runs in parallel to the time sequenced development phases, is called OPOS = Ongoing Planning, Ongoing Steering. The figure below shows the OPOS activity gaz-intaz and gaz-outaz.

In the iSpeed process, the top priority of the project leader (no self-serving BMs allowed) is to buffer and shield the engineering team from external demands and distractions. Other lower priority OPOS tasks are to periodically “sample the value stream”, assess the project state, steer progress, and provide helpful actions to the multi-disciplined product development team. What do you think? Good, bad, fugly? Missing something?

Plumbers And Electricians

Putting an expert in one technical domain “in charge” of a big risky project that requires deep expertise in a different technical domain is like putting plumbers in charge of a team of electricians on a massive skyscraper electrical job – or vice versa. Putting a generic PMI or MBA trained STSJ in charge of a complex, mixed-discipline engineering product development project is even worse. When they don’t know anything about WTF they’re managing, how can innocently ignorant project “leaders”:

- Understand what needs to be done

- Know what information artifacts need to be generated along the way for downstream project participants

- Estimate and plan the work with reasonable accuracy

- Correctly interpret ongoing status so that they have an idea where the project is located in terms of cost, schedule, and quality

- Make effective mid-course corrections when things go awry and ambiguity reigns

- See through any bullshit used to camouflage shoddy work or to cut corners

- Stop small but important problems from falling through the cracks and growing into ominously huge obstacles

- Perform the “verify” part of “trust but verify”.

Well, they can’t – no matter how many impressive and glossy process templates are stored in the standard corpo database to guide them. Thus, these poor dudes spend most of their time spinning out massive, impressive Excel spreadsheets and Microsoft Project schedules so fine grained that they’re obsolete before they’re showcased to the equally clueless suits upstairs. But hey, everything looks good on the surface for a long stretch of time. Uh, until the fit hits the shan.