
Freakin’ Oops!

With great power there must also come… great responsibility – Stan Lee

C++, being as sprawling and powerful and flexible as it is, rightly gets dinged for its propensity to bite you in the ass (if you don’t fully understand a language feature you’re trying to use). Thus, many C++ programming book authors point out common “gotchas” while teaching the language to their readers.

The graphic below depicts some “watchout!” snippets from four different, popular C++ programming books. As you might surmise from the picture, my favorite word in a C++ programming book is (freakin’) “Oops!”.

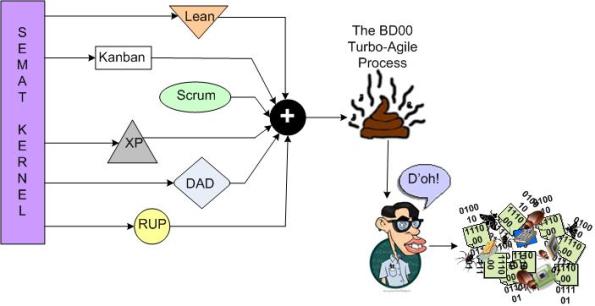

Going Turbo-Agile

I’m planning on using the state-of-the-art SEMAT kernel to cherry-pick a few “best practices” and concoct a new, proprietary, turbo-agile software development process. The BD00 Inc. profit deluge will come from teaching one-hour certification courses all over the world for $2000 a pop. To fund the endeavor, I’m gonna launch a Kickstarter project.

What do you think of my slam dunk plan? See any holes in it?

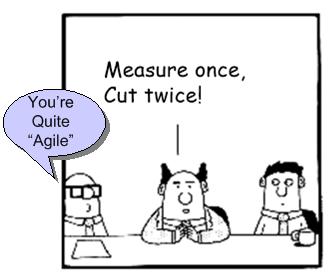

Alternative Considerations

Before you unquestioningly accept the gospel of the “evolutionary architecture” and “emergent design” priesthood, please at least pause to consider these admonitions:

Give me six hours to chop down a tree and I will spend the first four sharpening the axe – Abe Lincoln

Measure twice, cut once – Unknown

If I had an hour to save the world, I would spend 59 minutes defining the problem and one minute finding solutions – Albert Einstein

100% test coverage is insufficient. 35% of the faults are missing logic paths – Robert Glass

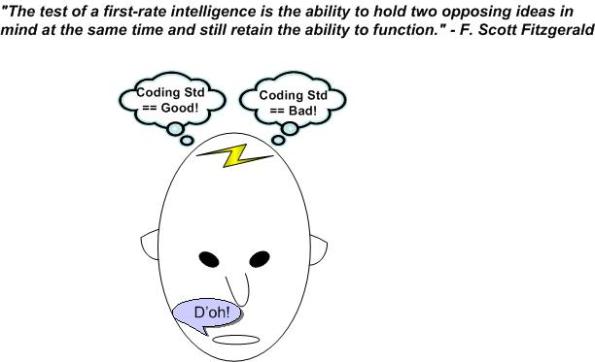

The Ability To Function

While writing the “Rule-Based Safety” post of a few days ago, this quote kept interfering with my thoughts:

Whenever I end up simultaneously holding two opposing ideas in my head, most of the time one of them automatically wins the battle quickly and boots out the loser. Phew, the victory relieves the mental tension. On the down-side, the winner is much too effective at preventing the opposition from ever entering the contemplation chamber again. I hate when that happens.

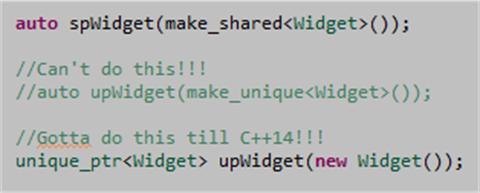

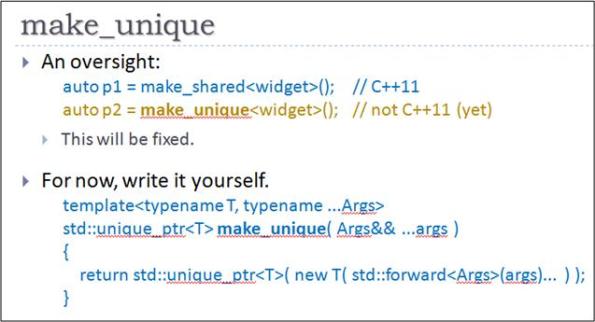

make_unique

On my current project, we’re joyfully using C++11 to write our computationally-dense target processing software. We’ve found that std::shared_ptr and std::unique_ptr are extremely useful classes for avoiding dreaded memory leaks. However, I find it mildly irritating that there is no std::make_unique to complement std::make_shared. It’s great that std::make_unique will be included in the C++14 standard, but whenever we use a std::unique_ptr we gotta include a fugly “new” in our C++11 code until then:

But wait! I stumbled across this helpful Herb Sutter slide:

A variadic function template that uses perfect forwarding. It’s outta my league, but… Whoo Hoo! I’m gonna add this sucker to our platform library and start using it ASAP.
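For readers who can't see the slide, here is a minimal sketch of what a C++11 make_unique along those lines commonly looks like. It is not guaranteed to match the slide line for line. The `platform` namespace, the `Target` struct, and `make_demo_target` are made-up names for illustration only, and this version handles single objects, not arrays.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <utility>

namespace platform {  // hypothetical namespace; avoids clashing with std::make_unique once C++14 arrives

// Variadic template: accepts any number of constructor arguments.
// Perfect forwarding: std::forward preserves each argument's lvalue/rvalue-ness.
// Assumption: single-object form only; array types (T[]) are not handled here.
template <typename T, typename... Args>
std::unique_ptr<T> make_unique(Args&&... args) {
    return std::unique_ptr<T>(new T(std::forward<Args>(args)...));
}

}  // namespace platform

// Hypothetical type, used only to show a call site.
struct Target {
    Target(int id_in, std::string name_in) : id(id_in), name(std::move(name_in)) {}
    int id;
    std::string name;
};

// Before: std::unique_ptr<Target> t(new Target(7, "bogey"));
// After:  no naked "new" at the call site.
inline std::unique_ptr<Target> make_demo_target() {
    auto t = platform::make_unique<Target>(7, "bogey");
    assert(t);  // new either succeeded or threw std::bad_alloc
    return t;
}
```

The payoff is at the call site: the “new” is tucked away inside one small function instead of being sprinkled all over the code base.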

Rule-Based Safety

In this interesting 2006 slide deck, “C++ in safety-critical applications: the JSF++ coding standard”, Bjarne Stroustrup and Kevin Carroll provide the rationale for selecting C++ as the programming language for the JSF (Joint Strike Fighter) jet project:

First, on the language selection:

- “Did not want to translate OO design into language that does not support OO capabilities.”

- “Prospective engineers expressed very little interest in Ada. Ada tool chains were in decline.”

- “C++ satisfied language selection criteria as well as staffing concerns.”

They also articulated the design philosophy behind the set of rules as:

- “Provide “safer” alternatives to known “unsafe” facilities.”

- “Craft rule-set to specifically address undefined behavior.”

- “Ban features with behaviors that are not 100% predictable (from a performance perspective).”

Note that because of the last bullet, post-initialization dynamic memory allocation (using new/delete) and exception handling (using throw/try/catch) were verboten.

Interestingly, Bjarne and Kevin also flipped the coin and exposed the weaknesses of language subsetting:

What they didn’t discuss in the slide deck was whether the strengths of imposing a large coding standard on a development team outweigh the nasty weaknesses above. I suspect it was because the decision to impose a coding standard was already a done deal.

Much as we don’t want to admit it, it all comes down to economics. How much is the lowering of the risk of loss of life worth? No rule set can ever guarantee 100% safety. Like trying to move from 8 nines of availability to 9 nines, the financial and schedule costs of trying to achieve a Utopian “certainty” of safety start exploding exponentially. To add insult to injury, there is always tremendous business pressure to deliver ASAP and, thus, to unconsciously cut corners, like jettisoning corner-case system-level testing and leaving hundreds of “annoying” rule violations unfixed.

Does anyone have any data on whether imposing a strict coding standard actually increases the safety of a system? Better yet, is there any data that indicates imposing a standard actually decreases the safety of a system? I doubt that either of these questions can be answered with any unbiased data. We’ll just continue on auto-believing that the answer to the first question is yes because it’s supposed to be self-evident.

By Default

Much has been written about the differences between, and similarities across, management and leadership. But unsurprisingly, most managers equate the word “manager” with the word “leader” by default. After all, they’ve been appointed by other “leaders”. Thus, by (their) definition, managers are leaders.

On the other hand, most raw employees equate the word “manager” with “manager” by default. Err, on second thought, since (as usual) he has no supporting “data”, this BD00 post is prolly full of BS00:

Our old arrogant, egotistical nature (continuously) seeks out sustaining agreement with itself and its distorted opinions. – William Samuel

A Danger To Themselves And Others

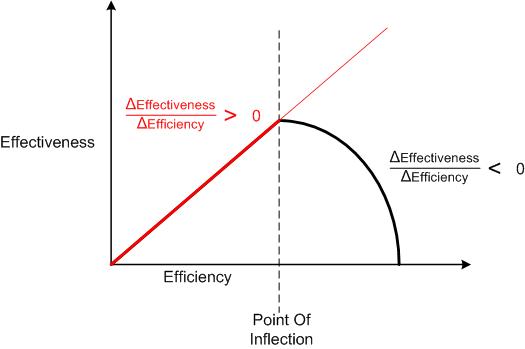

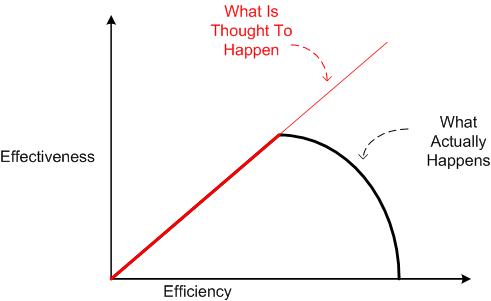

“Efficient systems are dangerous to themselves and others” – John Gall

A new system is always established with the goal of outright solving, or at least mitigating, a newly perceived problem that can’t be addressed with an existing system. As long as the nature of the problem doesn’t change, continuously optimizing the system for increased efficiency also joyfully increases its effectiveness.

However, the universe being as it is, the nature of the problem is guaranteed to change and there comes a time when the joy starts morphing into sorrow. That’s because the more efficient a system becomes over time, the more rigid its structure and behavior become and the less open to change it becomes. And the more resistant to change it becomes, the more ineffective it becomes at achieving its original goal – which may no longer even be the right goal to strive for!

In the manic drive to make a system more efficient (so that more money can be made with less effort), it’s difficult to detect when the inevitable joy-to-sorrow inflection point manifests. Most managers, being cost-reduction obsessed, never see it coming – and never see that it has swooshed by. Instead of changing the structure and/or behavior of the system to fit the new reality, they continue to tweak the original structure and fine-tune the existing behaviors of the system’s elements to minimize the delay from input to output. Then they are confounded when (if?) they detect the decreased effectiveness of their actions. D’oh! I hate when that happens.

Revolution, Or Malarkey?

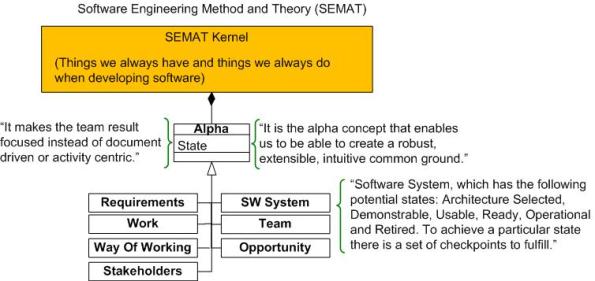

BD00 has been following the development of Ivar Jacobson et al.’s SEMAT (Software Engineering Method And Theory) work for a while now. He hasn’t decided whether it’s a revolutionary way of thinking about software development or a bunch of pseudo-academic malarkey designed to add funds to the pecuniary coffers of its creators (like the late Watts Humphrey’s SEI-backed PSP/TSP?).

To give you (and BD00!) an introductory understanding of SEMAT basics, he’s decided to write about it in this post. The description that follows is an extracted interpretation of SEMAT from Scott Ambler’s interview of Ivar: “The Essence of Software Engineering: An Interview with Ivar Jacobson”.

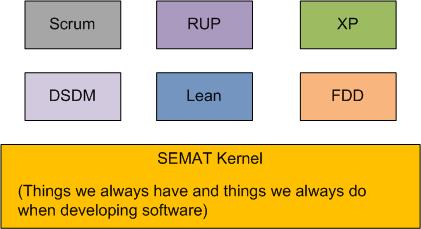

As the figure below shows, the “kernel” is the key concept upon which SEMAT is founded (note that all the boasts, uh, BD00 means, sentences, in the graphic are from Ivar himself).

In its current incarnation, the SEMAT kernel comprises seven fundamental, widely agreed-on “alphas”. Each alpha has a measurable “state” (determined by checklist) at any time during a development endeavor.

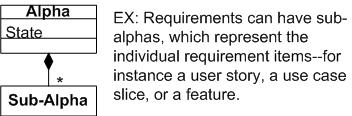

At the next lower level of detail, SEMAT alphas are decomposed into stateful sub-alphas as necessary:

As the diagram below attempts to illustrate, the SEMAT kernel and its seven alphas were derived from the common methods available within many existing methodologies (only a few representative methods are shown).

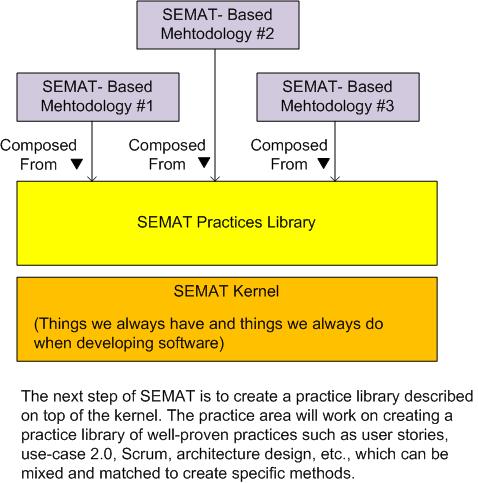

In the eyes of a SEMATian, the vision for the future of software development is that customized methods will be derived from the standardized (via the OMG!) kernel’s alphas, sub-alphas, and a library of modular “practices”. Everyone will evolve to speak the SEMAT lingo and everything will be peachy keen: we’ll get more high-quality software developed on time and under budget.

OK, now that he’s done writing about it, BD00 has made an initial assessment of SEMAT: it is a bunch of well-intended malarkey that smacks of Utopian PSP/TSP bravado. SEMAT has some good ideas and it may enjoy a temporary rise in popularity, but it will fall out of favor when the next big silver bullet surfaces – because it won’t deliver what it promises on a grand scale. Of course, like other methodology proponents, SEMAT’s advocates will tout its successes and remain silent about its failures. “If you’re not succeeding, then you’re doing it wrong and you need to hire me/us to help you out.”

But wait! BD00 has been wrong so many times before that he can’t remember the last time he was right. So, do your own research, form an opinion, and please report it back here. What do you think the future holds for SEMAT?

Should, Or Could?

There’s quite a difference between thinking and behaving as if “the world should be better aligned with my wishes!” and “the world could be better aligned with my wishes”. If your psychic disposition is toward the former, you’ll most likely be walking around frustrated and bitchy most of the time. If it’s toward the latter, you’ll most likely be more accepting and graceful.

BD00 seems to think that he’s been experiencing a slow shift over the years from thinking in terms of “should!” to thinking in terms of “could”. But of course, it may be just another one of those self-delusions that are packed wall to wall inside of his crippled mind.