Archive

Incremental Chunked Construction

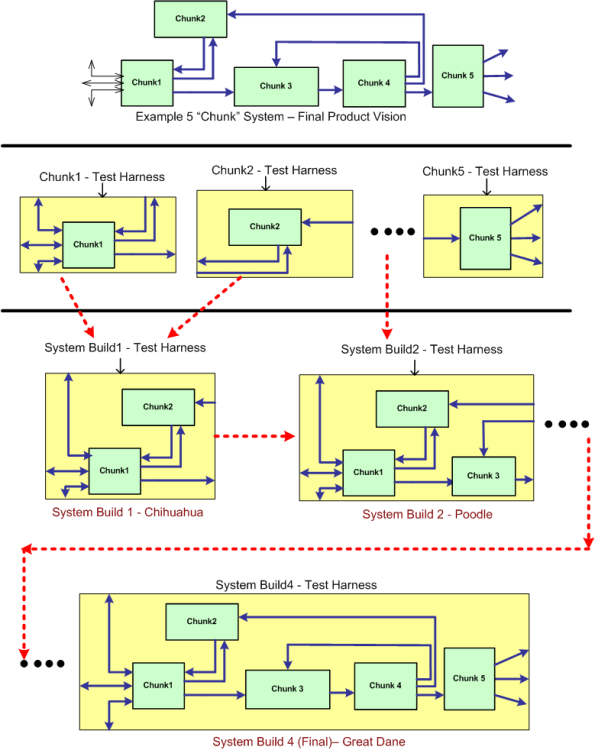

Assume that the green monster at the top of the figure below represents a stratospheric vision of a pipelined, data-centric, software-intensive system that needs to be developed and maintained over a long lifecycle. By data-centric, I mean that all the connectors, both internal and external, represent 24 X 7 real-time flows of streaming data – not “client requests” for data or transactional “services”. If the Herculean development is successful, the product will both solve a customer’s problem and make money for the developer org. Solving a problem and making money at the same time – what a concept, eh?

One disciplined way to build the system is what can be called “incremental chunked construction”. The system entities are called “chunks” to reinforce the thought that their granularity is much larger than a fine-grained “unit” – which everybody in the agile, enterprise IT, transaction-centric, software systems world seems to be fixated on these days.

Follow the progression in the non-standard, ad-hoc diagram downward to better understand the process of incremental chunked development. It’s not much different than the classic “unit testing and continuous integration” concept. The real difference is in the size, granularity, complexity and automation-ability of the individual chunk and multi-chunk integration test harnesses that need to be co-developed. Often, these harnesses are as large and complex as the product’s chunks and subsystems themselves. Sadly, mostly due to pressure from STSJ management (most of whom have no software background, mysteriously forget repeated past schedule/cost performance shortfalls, and don’t have to get their hands dirty spending months building the contraption themselves), the effort to develop these test support entities is often underestimated as much as, if not more than, the product code. Bummer.

A Costly Mistake?

Assume the following:

- Your flagship software-intensive product has had a long and successful 10 year run in the marketplace. The revenue it has generated has fueled your company’s continued growth over that time span.

- In order to expand your market penetration and keep up with new customer demands, you have no choice but to re-architect the hundreds of thousands of lines of source code in your application layer to increase the product’s scalability.

- Since you have to make a large leap anyway, you decide to replace your homegrown, non-portable, non-value adding but essential, middleware layer.

- You’ve diligently tracked your maintenance costs on the legacy system and you know that it currently costs close to $2M per year to maintain (bug fixes, new feature additions) the product.

- Since your old and tired homegrown middleware has been through the wringer over the 10 year run, most of your yearly maintenance cost is consumed in the application layer.

The figure below illustrates one “view” of the situation described above.

Now, assume that the picture below models where you want to be in a reasonable amount of time (not too “aggressive”) lest you kludge together a less maintainable beast than the old veteran you have now.

Cost- and time-wise, the graph below shows your target date, T1, and your maintenance cost savings bogey, $75K per month. For the example below, if the development of the new product incarnation takes 2 years and $2.25M, the savings won’t pay back that investment until 2.5 years after the “switchover” date T1 ($2.25M ÷ $75K per month = 30 months); only then do net savings start accruing.
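
The payback arithmetic can be sketched in a few lines of C++. This is a minimal sketch, assuming a constant monthly saving and ignoring interest and inflation; the function name `monthsToBreakEven` is hypothetical and only there for illustration.

```cpp
#include <stdexcept>

// Hypothetical helper: months after switchover until cumulative maintenance
// savings equal the development investment.
// Assumes a constant monthly saving and no interest or inflation.
double monthsToBreakEven(double developmentCost, double monthlySavings) {
    if (monthlySavings <= 0.0) {
        throw std::invalid_argument("monthly savings must be positive");
    }
    return developmentCost / monthlySavings;
}
```

Calling `monthsToBreakEven(2250000.0, 75000.0)` returns 30 months, which is the 2.5 years quoted above. If the new middleware pushes maintenance costs up instead of down, the monthly saving shrinks or goes negative and the payback date recedes accordingly.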

Now comes the fun part of this essay. Assume that:

- Some other product development group in your company is 2 years into the development of a new middleware “candidate” that may or may not satisfy all of your top four prioritized goals (as listed in the second figure up the page).

- This new middleware layer is larger than your current middleware layer and complicated with many new (yet at the same time old) technologies with relatively steep learning curves.

- Even after two years of consumed resources, the middleware is (surprise!) poorly documented.

- Except for a handful of fragmented and scattered PowerPoint files, programming and design artifacts are non-existent – showing a lack of empathy for those who would want to consider leveraging the 2 year company investment.

- The development process that the middleware team is using is fairly unstructured and unsupervised – as evidenced by the lack of project and technical documentation.

- Since they’re heavily invested in their baby, the members of the development team tend to get defensive when others attempt to probe into the depths of the middleware to determine if the solution is the right fit for your impending product upgrade.

How would you mitigate the risk that your maintenance costs would go up instead of down if you switched over to the new middleware solution? Would you take the middleware development team’s word for it? What if someone proposed prototyping and exploring an alternative solution that he/she thinks would better satisfy your product upgrade goals? In summary, how would you decrease the chance of making a costly mistake?

Application Infrastructure

The most recent C++ application that I wrote is named “ADSBsim”. The figure below shows some source code level metrics that characterize the program. The metrics are presented in two categories: global and infrastructure. Infrastructure code includes all of the low level, non-application layer logic. For this application, the ratio of infrastructure code to total code is 1725/2784*100 = 62%. Thus, over half of the application is composed of unglamorous infrastructure scaffolding.

Unlike the application layer logic, which doesn’t get neglected up front, the amount of infrastructure code to be developed is hardly ever known to any degree of certainty at the beginning of a new project. Thus, in addition to the crappy guesstimates that you usually give for the application layer, you should add an equivalent amount of effort to cover the well-hidden infrastructure logic. Instead of multiplying your guesstimate by the classic factor of “2” (rule of thumb) to accommodate uncertainty, you should consider multiplying it by “4” to get a half-way reasonable result. If your org managers mandate schedules from above and always ignore your input, then never mind the advice in this post. You’re hosed no matter WTF you do :^)

BTW, I initially estimated 2 months to complete the ADSBsim project. It ended up taking 4 months instead of the 8 recommended by the technique above. One could interpret this as successfully finishing the project well under budget and within schedule. On the other hand, if one “thought” that it should’ve only taken two months to complete, then my performance can be interpreted as being horrendously below par.
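
The two calculations in this post can be written out as a small C++ sketch. The function names are hypothetical, and splitting the factor of 4 into "2 for uncertainty times 2 for hidden infrastructure" is my reading of the rule of thumb above, not a formal method.

```cpp
// Share of the code base that is infrastructure, as a percentage.
// Assumes totalLines > 0.
constexpr double infrastructurePercent(int infrastructureLines, int totalLines) {
    return 100.0 * infrastructureLines / totalLines;
}

// Hypothetical padding rule: x2 for the classic uncertainty fudge factor,
// x2 again to cover the well-hidden infrastructure code.
constexpr double paddedEstimateMonths(double rawGuessMonths) {
    constexpr double uncertaintyFactor = 2.0;
    constexpr double infrastructureFactor = 2.0;
    return rawGuessMonths * uncertaintyFactor * infrastructureFactor;
}
```

For ADSBsim, `infrastructurePercent(1725, 2784)` comes out at roughly 62, and `paddedEstimateMonths(2.0)` gives the 8 months mentioned above.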

Abstraction

Jeff Atwood, of “Coding Horror” fame, once said something like “If our code didn’t use abstractions, it would be a convoluted mess“. As software projects get larger and larger, using more and more abstraction technologies is the key to creating robust and maintainable code.

Using C++ as an example language, the figure below shows the advances in abstraction technologies that have taken place over the years. Each step up the chain was designed to make large scale, domain-specific application development easier and more manageable.

The relentless advances in software technology designed to keep complexity in check are a double-edged sword. Unless one learns and practices using the new abstraction techniques in a sandbox, haphazardly incorporating them into the code can do more damage than good.
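
As a small sandbox-sized illustration (my own example, not one taken from the figure), here is the same computation written at two abstraction levels in C++. Both function names are hypothetical.

```cpp
#include <cstddef>
#include <numeric>
#include <vector>

// Older style: manual indexing over a raw array. The caller has to pass the
// correct length, and the loop mechanics obscure the intent.
int sumRaw(const int* values, std::size_t count) {
    int total = 0;
    for (std::size_t i = 0; i < count; ++i) {
        total += values[i];
    }
    return total;
}

// Newer style: a standard container plus a standard algorithm. The container
// knows its own size and the algorithm name states the intent.
int sumWithAlgorithm(const std::vector<int>& values) {
    return std::accumulate(values.begin(), values.end(), 0);
}
```

Both functions return the same result for the same data; the difference is how much bookkeeping the reader has to wade through to see what the code is for.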

One issue is that when young developers are hired into a growing company to maintain legacy code that doesn’t incorporate the newer complexity-busting language features, they become accustomed to the old and unmaintainable style that is encrusted in the code. Because of schedule pressure and no company time allocated to experiment with and learn new language features, they shoehorn in changes without employing any of the features that would reduce the technical debt incurred over years of growing the software without any periodic refactoring. The problem is exacerbated by not having a set of regression tests in place to ensure that nothing gets broken by any major refactoring effort. Bummer.

Requirements Before, Design After

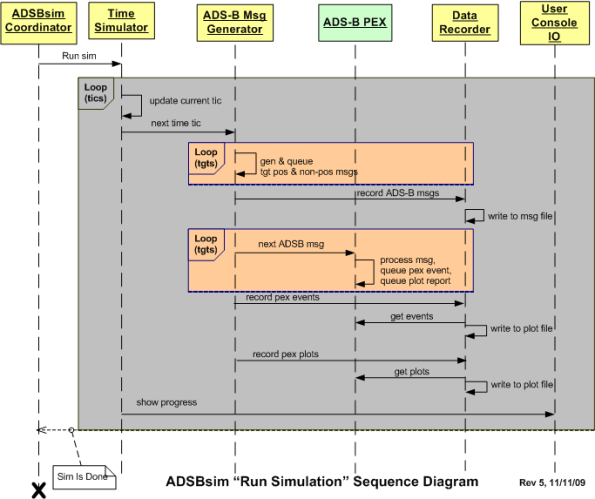

The figure below depicts a UML sequence diagram of the behavior of a simulator during the execution of a user defined scenario. Before the code has been written and tested, one can interpret this diagram as a set of interrelated behavioral requirements imposed on the software. After the code has been written, it can be considered a design artifact that reflects what the code does at a higher level of abstraction than the code itself.

Interpretations like this give credence to Alan Davis’s brilliant quote:

One man’s requirement is another man’s design

Here’s a question. Do you think that specifying the behavior requirements in the diagram would have been best conveyed via a user story or a use case description?

Black And White Binary Worlds

In this interview with the legendary Grady Booch (InformIT: Grady Booch on Design Patterns, OOP, and Coffee), Larry O’Brien had this exchange with one of the original pioneers of object oriented design and the UML:

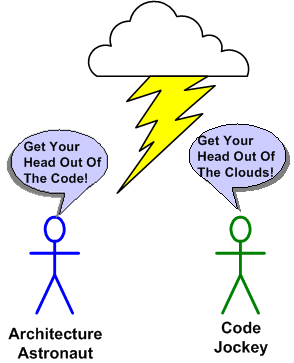

Larry: Joel Spolsky said:

“Sometimes smart thinkers just don’t know when to stop, and they create these absurd, all-encompassing, high-level pictures of the universe that are all good and fine, but don’t actually mean anything at all. These are the people I call Architecture Astronauts. It’s very hard to get them to write code or design programs, because they won’t stop thinking about Architecture.”

He also said:

“Sometimes, you’re on a team, and you’re busy banging out the code, and somebody comes up to your desk, coffee mug in hand, and starts rattling on…And your eyes are swimming, and you have no friggin’ idea what this frigtard is talking about,….and it’s going to crash like crazy and you’re going to get paged at night to come in and try to figure it out because he’ll be at some goddamn “Design Patterns” meetup.”

Spolsky seems to represent a real constituency that is not just dismissive but outright hostile to software development approaches that are not code-centric. What do you say to people who are skeptical about the value of work products that don’t compile?

Grady: You may be surprised to hear that I’m firmly in Joel’s camp. The most important artifact any development team produces is raw, running, naked code. Everything else is secondary or tertiary. However, that is not to say that these other things are inconsequential. Rather, our models, our processes, our design patterns help one to build the right thing at the right time for the right stakeholders.

I think that Grady’s spot on in that both the code-centric camp and the architecture-centric camp tend to throw out what’s good from the other camp. It’s classic binary extremism where things are either black or white to the participants and the color grey doesn’t exist in their minds. Once a rigid and unwavering mindset is firmly established, the blinders are put on and all learning stops. I try to keep this in mind all the time, but the formation of a black/white mindset is hard to detect. It creeps up on you.

Construction Sequence

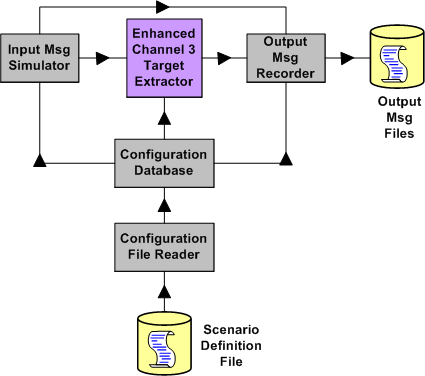

The figure below depicts a SysML model, with a static structural bent, of a small but non-trivial software program that I recently finished writing and testing. It’s a simulator harness that’s used to explore/test/improve candidate “Target Extractor” algorithms for inclusion into a revenue generating product.

On program launch, a user-created scenario definition file is read, parsed, error-checked, and then stored in an in-memory database. Subsequent to populating the database, the program automatically:

- Generates a simulated stream of target message fragments characterized by the scenario definition that was pre-specified by the user

- Injects the message stream into the target extractor algorithm under test

- Processes the message stream in accordance with the plot extraction state machine algorithm specification

- Records the target extractor output response message stream to disk
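
The steps above can be sketched as a small C++ pipeline. This is only a sketch under stated assumptions: every type and function name here (`Scenario`, `Message`, `Extractor`, `generateFragments`, `runScenario`) is a hypothetical stand-in rather than the real program's code, the scenario is reduced to two counters, and the output is recorded in memory instead of on disk.

```cpp
#include <functional>
#include <string>
#include <vector>

// Hypothetical, heavily simplified scenario definition.
struct Scenario {
    int targetCount = 0;         // number of simulated targets
    int fragmentsPerTarget = 0;  // message fragments generated per target
};

using Message = std::string;

// The algorithm under test: consumes fragments, emits output messages.
using Extractor =
    std::function<std::vector<Message>(const std::vector<Message>&)>;

// Generate the simulated fragment stream described by the scenario.
std::vector<Message> generateFragments(const Scenario& scenario) {
    std::vector<Message> fragments;
    for (int t = 0; t < scenario.targetCount; ++t) {
        for (int f = 0; f < scenario.fragmentsPerTarget; ++f) {
            fragments.push_back("target" + std::to_string(t) +
                                ":frag" + std::to_string(f));
        }
    }
    return fragments;
}

// Inject the stream into the extractor under test and return its output.
// A real harness would write the output to disk; this sketch keeps it in
// memory so the result is easy to inspect.
std::vector<Message> runScenario(const Scenario& scenario,
                                 const Extractor& extractor) {
    const std::vector<Message> fragments = generateFragments(scenario);
    return extractor(fragments);
}
```

Passing the extractor in as a `std::function` is one way to let the harness swap candidate algorithms without touching the generation and recording code.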

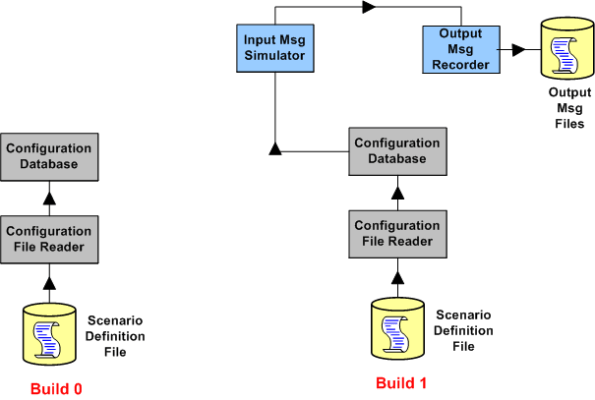

The figure above is a model that represents the finished product – which ended up being the third build in a series of incremental builds. The figure below shows the functionality in the first two builds of the trio.

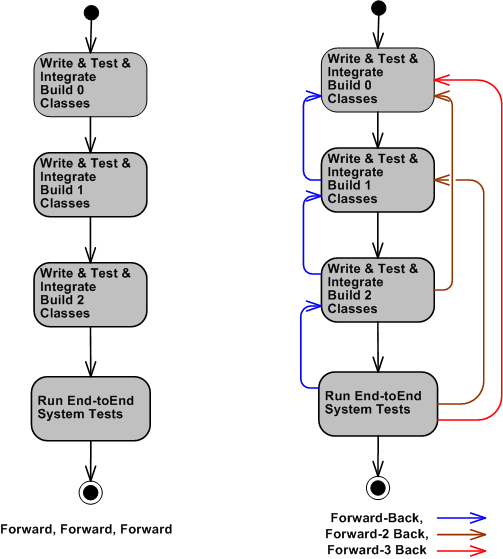

Even though the construction process that I used appears to have progressed in a nice and tidy linear and sequential flow (like the left portion of the figure below depicts), it naturally didn’t work out that way. The workflow progressed in accordance with the right portion of the figure below, with lots of high frequency single-step feedback loops and (thankfully) only a few two-step and three-step feedback loops.

In a perfect world, the software construction process proceeds in a linear and sequential fashion. In the messy real world, mistakes and errors are always made, and stepping backward is the only way that these mistakes and errors can be corrected.

In standard textbook CCH (Command and Control Hierarchy) orgs where an endless sea of linear and sequential thinking BMs rule the roost, going backwards is “unacceptable” because “you should have done it right the first time“. In these types of irrational macho cultures, fear of punishment reigns supreme – and nobody dares to discuss it. Fearful development teams either don’t go backwards to correct mistakes, or they try to fix mistakes covertly below the corpo radar. What type of org do you work for?

Process Nazis

Unlike most enterprise software development orgs where “quality assurance” is equated to testing, government contractors usually have both a quality assurance group and a test engineering group. Why is that? It’s because big and bloated government customers “expect” all of their contractors to have both. It’s the way it is, and it’s the way it’s always been.

It doesn’t matter if members of the QA group have never specified, designed, or written a line of software in their lives; these checklist process nazis walk around wielding the process compliance axe like they are the kings of the land: “Did you fill out this unit test form?“, “Do you have a project plan written according to our template?“, “Did you write a software development plan for us to approve?“, “Did you submit this review form?“, “Did you submit this software version definition form?“, “Do you have a test readiness form?“, “If you don’t do this, we’ll tell on you and you’ll get punished“. Yada, yada, yada. It’s one interruption, roadblock, and distraction after another. On one side, you’ve got these obstacle inserters, and on the other side you’ve got nervous, time-obsessed managers looking over your shoulder. WTF?

Since following a mechanistic process supposedly “proven to deliver results” doesn’t guarantee anything but a consumption of time, I don’t care much about formal processes. I care about getting the right information to the right people at the right time. By “right“, I mean accurate, unambiguous, complete, and most importantly – freakin’ useful. For system engineers, the right information is requirements, for software architects it’s blueprints, for programmers it’s designs and source code, for testers it’s developer-tested software. How about you, what do you care about?

The Factory And The Widgets

The process to assemble and construct the factory is much more challenging than the process to assemble and construct the widgets that the factory repetitively stamps out. In the software industry, everything’s a factory, but most managers think everything’s a widget in order to delude themselves into thinking that they’re in control. Amazingly, this is true even if the manager used to write software him/herself.

When a developer gets “promoted” to manager, a switch flips and he/she forgets the factory versus widget dichotomy. This stunning and instantaneous about-face occurs because pressure from the next higher layer in the dysfunctional CCH (Command and Control Hierarchy) causes the shift in mindset and all common sense goes out the window. Predictability and exploitation replace uncertainty and exploration in all situations that demand the latter; and software creation always demands the latter. Conversation topics flip from talking about technical and CCH org roadblocks to obsessing about schedule and budget conservation because, of course, managers equate writing software with secretarial typing. The problem is that neglecting the former leads to poor performance of the latter.

I’m Finished

I just finished (100% of course <-LOL!) my latest software development project. The purpose of this post is to describe what I had to do, what outputs I produced during the effort, and to obtain your feedback – good or bad.

The figure below shows a simple high level design view of an existing real-time, software-intensive, revenue generating product that is comprised of hundreds of thousands of lines of source code. Due to evolving customer requirements, a major redesign and enhancement of the application layer functionality that resides in the Channel 3 Target Extractor is required.

The figure below shows the high level static structure of the “Enhanced Channel 3 Target Extractor” test harness that was designed and developed to test and verify that the enhanced channel 3 target extractor works correctly. Note that the number of high level conceptual test infrastructure classes is 4 compared to the lone, single product class whose functionality will be migrated into the product code base.

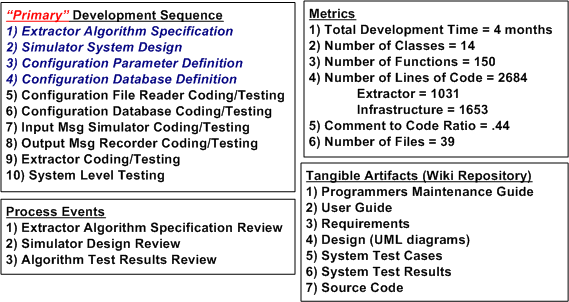

The figure below shows a post-project summary in terms of: the development process I used, the process reviews I held, the metrics I collected, and the output artifacts that I produced. Summarizing my project performance via the often used, simple-minded metric that old school managers love to use, lines of code per day, yields the paltry number of 22.

Since my average “velocity” was a measly 22 lines of code per day, do you think I underperformed on this project? What should that number be? Do you summarize your software development projects similar to this? Do you just produce source code and unit tests as your tangible output? Do you have any idea what your performance was on the last project you completed? What do you think I did wrong? Should I have just produced source code as my output and none of the other 6 “document” outputs? Should I have skipped steps 1 through 4 in my development process because they are non-agile “documentation” steps? Do you think I followed a pure waterfall process? What say you?