Archive

PPPP Invention

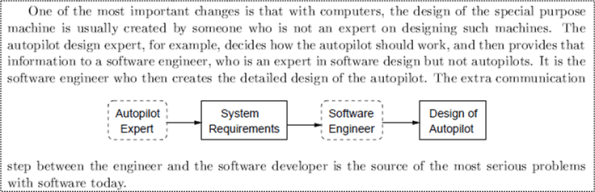

In “Engineering A Safer World”, Nancy Leveson uses the autopilot example below to illustrate the extra step of complexity that was introduced into the product development process with the integration of computers and software into electro-mechanical system designs.

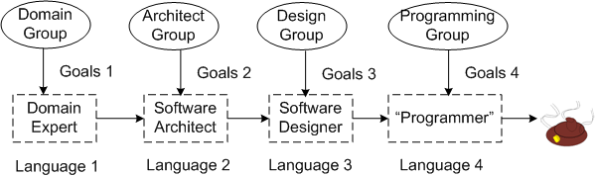

In the worst case, as illustrated below, the inter-role communication problem can be exacerbated. Although the “gap of misunderstanding” from language mismatch is always greatest between the Domain Expert and the Software Architect, as more roles get added to the project, more errors/defects are injected into the process.

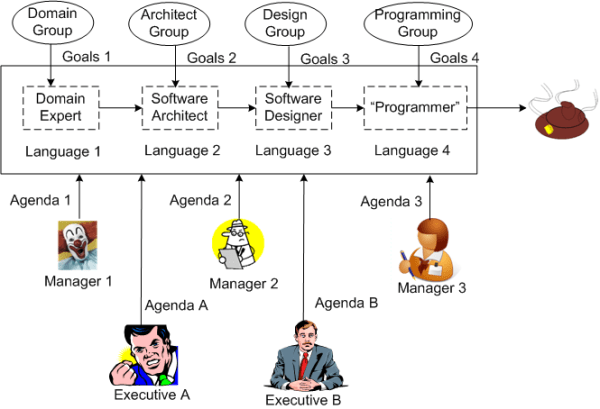

But wait! It can get worse, or should I say, “more challenging”. Each person in the chain of cacophony can be a member of a potentially different group with conflicting goals.

But wait, it can get worser! By consciously or unconsciously adding multiple managers and executives to the mix, you can put the finishing touch on your very own Proprietary Poop Producing Process (PPPP).

So, how can you try to inhibit inventing your very own PPPP? Two techniques come to mind:

- Frequent, sustained, proactive, inter-language education (to close the gaps of misunderstanding).

- Minimization of the number of meddling managers (and especially, pseudo-manager wannabes) allowed anywhere near the project.

Got any other ideas?

Conscious Degradation Of Style

One (of many) of my pet peeves can be called “Conscious Degradation Of Style” (CDOS). CDOS is what happens when a programmer purposely disregards the style in an existing code base while making changes/additions to it. It’s even more annoying when the style mismatch is pointed out to the perpetrator and he/she continues to pee all over the code to mark his/her territory. Sheesh.

When I have to make “local” changes to an existing code base, if I can discern some kind of consistent style within the code (which may be a challenge in itself!), I consciously and mightily try to preserve that style when I make the changes – no matter how much my ego may “disagree” with the naming, formatting, idiomatic, and implementation patterns in the code. That freakin’ makes (dollars and) sense, no?

What about you, what do you do?

James YAGNI

In the software world, YAGNI is one of many cutely named heuristics from the agile community that stands for “don’t waste time/money putting that feature/functionality in the code base NOW cuz odds are that You Ain’t Gonna Need It!”. It’s good advice, but it’s often violated innocently, or when the programmer doesn’t agree with the YAGNI admonisher for legitimate or “political” reasons.

When a team finds out downstream and (more importantly) admits that there’s lots of stuff in the code base that can be removed because of YAGNI violations, there are two options to choose from:

- Spend the time/money and incur the risk of breakage by extricating and removing the YAGNI features.

- Leave the unused code in there to save time/money and eschew making the code base slimmer and, thus, easier to maintain.

It’s not often perfectly clear what option should be taken, but BD00 speculates (he always speculates) that the second option is selected way more often than the first. What’s your opinion?

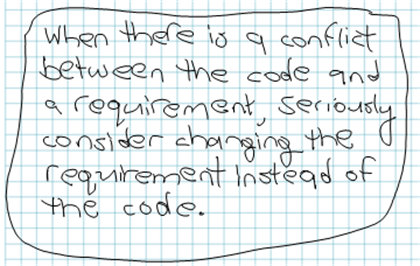

Software Development Cost Saving Tip

It’s easier (read as lower cost) to retest existing code with an “updated” requirement that was wrong or impossible to satisfy than it is to change and retest code in order to match an “unquestioned” requirement.

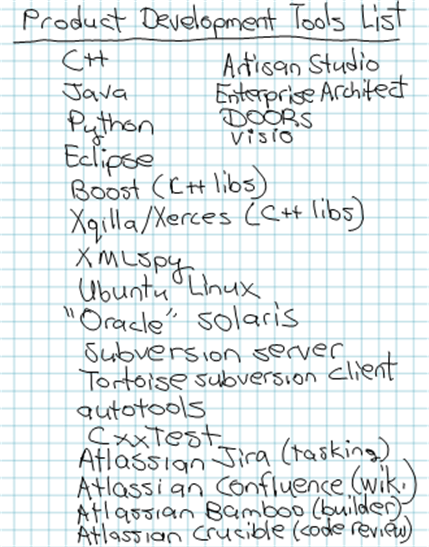

Tools List

Out of the blue, I tried to list all of the tools that we are currently using on our distributed software system development project:

What do you think? Too many tools? Too few tools? Redundant tools? Missing tools? What tools do you use?

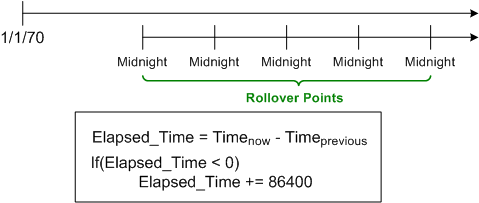

An Epoch Mistake

Let’s start this hypothetical story off with some framing assumptions:

Assume (for a mysterious historical reason nobody knows or cares to explore) that timestamps in a legacy system are always measured in “seconds relative to midnight” instead of “seconds relative to the Unix epoch of 1/1/1970”.

Assume that the system computes many time differences at a six-figure Hz rate during operation to fulfill its mission. Because “seconds relative to midnight” rolls over from 86399 to 0 every 24 hours, the time difference logic has to detect (via a disruptive “if” statement) and compensate for this rollover, lest its output be logically “wrong” once a day.
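To make the rollover compensation concrete, here is a minimal sketch of what such a time difference routine might look like. The function name and the assumption that two samples are never more than 24 hours apart are mine, not taken from any real system.

```python
SECONDS_PER_DAY = 86_400


def elapsed_since_midnight(earlier, later):
    """Return later - earlier for two seconds-since-midnight timestamps.

    Assumes (hypothetically) that the samples are less than 24 hours apart,
    so at most one midnight rollover can sit between them.
    """
    delta = later - earlier
    if delta < 0:
        # 'later' wrapped past midnight: this is the disruptive "if".
        delta += SECONDS_PER_DAY
    return delta
```

Without the branch, the pair (86399, 0) would yield -86399 instead of 1.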

Assume that the “seconds relative to the Unix epoch of 1/1/1970” library (e.g. Boost.Date_Time) satisfies the system’s dynamic range and precision requirements.

Assume that the design of a next generation system is underway and all the time fields in the messages exchanged between the system components are still mysteriously specified as “seconds since midnight” – even though it’s known that the added CPU cycles and annoyance of rollover checking could be avoided with a stroke of the pen.

Assume that the component developers, knowing that they can dispense with the silly rollover checking:

- convert each incoming time field into “seconds since the Unix epoch”,

- use the converted values to perform their internal time difference computations without having to check/compensate for midnight rollover,

- convert back to “seconds since midnight” on output as required.
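The three steps above could be sketched roughly as follows. This assumes UTC midnights, whole seconds, and that the component somehow knows the epoch time of the midnight each incoming field refers to; the helper names and the sample day are hypothetical.

```python
SECONDS_PER_DAY = 86_400


def to_epoch(seconds_since_midnight, midnight_epoch):
    """Input conversion: anchor a midnight-relative field to the Unix epoch."""
    return midnight_epoch + seconds_since_midnight


def to_seconds_since_midnight(epoch_seconds):
    """Output conversion: back to the midnight-relative form the spec demands."""
    return epoch_seconds % SECONDS_PER_DAY


# An arbitrary UTC midnight, chosen only for illustration.
day = 19_676 * SECONDS_PER_DAY

t1 = to_epoch(86_399, day)                   # one second before midnight
t2 = to_epoch(0, day + SECONDS_PER_DAY)      # midnight of the next day
elapsed = t2 - t1                            # plain subtraction, no rollover check
```

Note that the input conversion still has to work out which day a field belongs to, which is exactly the information a midnight-relative field does not carry.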

Assume that you know what the next two logical steps are: 1) change the specification of all the time fields in the messages exchanged between the system components from the midnight reference origin to the Unix epoch origin, and 2) remove the unessential input/output conversions.

Suffice it to say, in orgs where the culture forbids the admission of mistakes (which implicates most orgs?) because the mistake-maker(s) may “look fallible”, next steps like this are rarely taken. That’s one of the reasons why old product warts are propagated forward and new warts are allowed to sprout up in next generation products.

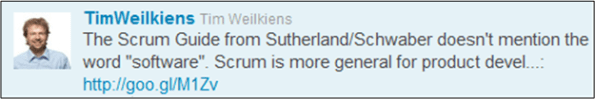

Scrumming For Dollars

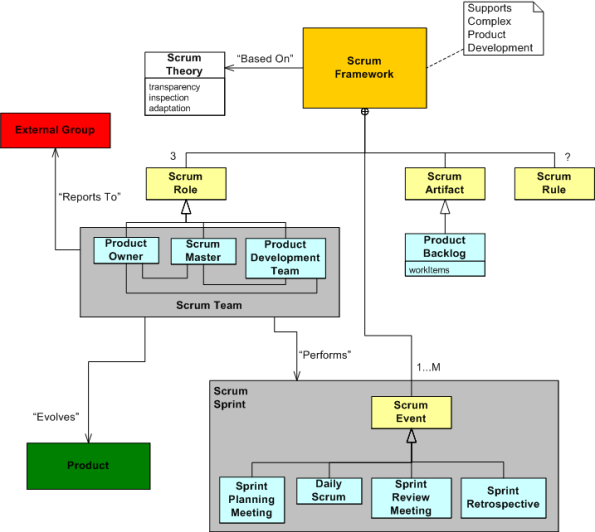

“Systems Engineering with SysML/UML” author Tim Weilkiens recently tweeted:

Tim’s right. Check it out yourself: Scrum Guide – 2011.

Before Tim’s tweet, I didn’t know that “software” wasn’t mentioned in the guide. Initially, I was surprised, but upon further reflection, the tactic makes sense. Scrum creators Ken Schwaber and Jeff Sutherland intentionally left it out because they want to maximize the market for their consulting and training services. Good for them.

As a byproduct of synthesizing this post, I hacked together a UML class diagram of the Scrum system and I wanted to share it. Whatcha think? A useful model? Errors, omissions? Does it look like Scrum can be applied to the development of non-software products?

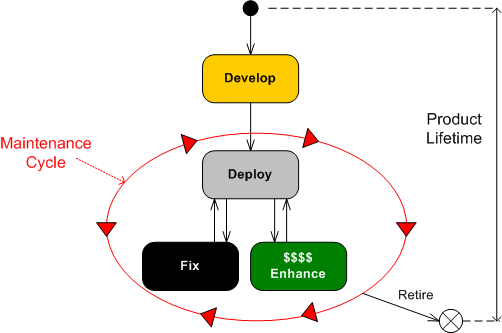

Product Lifetime

The UML state transition diagram below depicts the growth, maturity, and retirement of a large, software-intensive product. There are a bazillion known and unknown factors that influence the longevity and profitability of a system, but the greatest determinant is how effectively the work in the upfront “Develop” state is executed.

If the “Develop” state is executed poorly (underfunded, undermanned, mis-manned, rushed, “pens down”, etc.), then you better hope you’ve got the customer by the balls. If not, you’re gonna spend most of your time transitioning into, and dwelling within, the resource-sucking “Fix” state. If you do have cojones in hand, then you can make the customer(s) pay for the fixes. If you don’t, then you’re hosed. (I hate when that happens.)

If you’ve done a great job in the “Develop” state, then you’re gonna spend most of your time transitioning into and out of the “Enhance” state – keeping your customer delighted and enjoying a steady stream of legitimate revenue. (I love when that happens.)

The challenge is: While you’re in the “Develop” state, how the freak do you know you’re doing a great job of forging a joyous and profitable future? Does being “on schedule” and “under budget” tell you this? Do “checked off requirements/design/code/test reviews” tell you this? Does tracking “earned value” metrics tell you this? What does tell you this? Is it quantifiably measurable?

Ironic

It’s like ten thousand spoons when all you need is a knife – Alanis Morissette

I find it curiously ironic that despite what may be espoused, software developers are often placed on one of the lowest rungs of the ladder of stature and importance (but alas, the poor test engineers often rank lowest) in many corpricracies whose revenue is dominated by software-centric products. Yet, it seems that many front-line software project managers, software “leads”, and software “rocketects” are terrified of joining the fray by designing and writing a little code here and there to lead by example and occasionally help out. In mediocre corpo cultures, it’s considered a step “backward” for titled ones to cut some code.

Fuggedaboud writing some code; a lot of the self-pseudo-elite dudes are afraid of even reading code for quality. Hence, to justify their existence, they focus on being meticulous process, schedule, and status-taking wonks – which of course unquestionably requires greater skill, talent, and dedicated effort than designing/coding/testing/integrating revenue generating code.

TDD Overhype

There is much to like about unit-level testing and its extreme cousin, Test Driven Design (TDD). However, as with any tool/technique/methodology, beware of overhyping by snake oil salesmen.

Cédric Beust is the author of the book, “Next Generation Java Testing”. He is also the founder and lead developer of TestNG, the most widely used Java testing framework outside of JUnit. In a Dr. Dobb’s guest post titled “Breaking Away From The Unit Test Group Think”, I found it refreshing that a renowned unit testing expert, Mr. Beust, wrote about the down side of the current obsession with unit testing:

- Unit tests are a luxury. Sometimes, you can afford a luxury; and sometimes, you should just wait for a better time and focus on something more essential — such as writing a functional test.

- There are two specific excesses regarding code coverage: focusing on an arbitrary percentage and adding useless tests.

- TDD code also tends to focus on the very small picture: relentlessly testing individual methods instead of thinking in terms of classes and how they interact with each other. This goal is further crippled by another precept called YAGNI, (You Aren’t Going to Need It), which suggests not preparing code for features that you don’t immediately need.

- Obsessing over numbers in the absence of context leads developers to write tests for trivial pieces of their code in order to increase this percentage with very little benefit to the end user (for example, testing getters).

Being a designer and developer of real-time, multi-threaded code that runs 24 X 7, I’ve found that unit testing is not nearly as cost effective as functional testing. As soon as I can, I get a skeletal instance of the (non-client-server) system that I’m writing up and running and I pump deterministic, canned data streams through the system from end-to-end to find out how the beast is behaving. As I add functionality to the code base, I rerun the data streams through the system again and again and I:

- try to verify that the new functionality inserted into the threading structure works as expected

- try to ensure that threads don’t die

- try to verify that there are no deadlocks or data races in the quagmire
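For what it's worth, the replay loop described above might be sketched like this. It is a toy under stated assumptions, not my actual harness: the two-stage pipeline, the transforms, and every name in it are hypothetical. A timeout on the output queue surfaces a hang or a dead thread as a failure, but it can only catch the symptoms of deadlocks and data races, not prove their absence.

```python
import queue
import threading

SENTINEL = None  # marks the end of the canned stream (samples must not be None)


def stage(transform, inbox, outbox):
    """Worker loop: apply transform to each item until the sentinel arrives."""
    while True:
        item = inbox.get()
        if item is SENTINEL:
            outbox.put(SENTINEL)  # pass the end marker downstream, then exit
            return
        outbox.put(transform(item))


def run_canned_stream(samples, timeout=2.0):
    """Pump samples through a two-stage threaded pipeline, end to end."""
    q_in, q_mid, q_out = queue.Queue(), queue.Queue(), queue.Queue()
    workers = [
        threading.Thread(target=stage, args=(lambda x: x * 2, q_in, q_mid), daemon=True),
        threading.Thread(target=stage, args=(lambda x: x + 1, q_mid, q_out), daemon=True),
    ]
    for w in workers:
        w.start()
    for s in samples:
        q_in.put(s)
    q_in.put(SENTINEL)

    results = []
    while True:
        # queue.Empty raised here suggests a stalled or dead stage.
        item = q_out.get(timeout=timeout)
        if item is SENTINEL:
            break
        results.append(item)

    for w in workers:
        w.join(timeout)
    all_exited = all(not w.is_alive() for w in workers)
    return results, all_exited
```

Because the input is deterministic, the collected output can be compared against a stored expected stream after every change to the code base.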

If someone can show me how unit testing helps with these issues, I’m all ears. No blow hard pontificating, please.