Archive

HW And SW ICs

Because labor costs for software-intensive system development are so high, the holy grail of cost reduction is “reusability” at all levels of the stack: reusable requirements, reusable architectures, reusable designs, reusable source code. If you can compose a system from a set of pre-built components instead of writing them from scratch (over and over again), then you’ll own a huge competitive advantage over your rivals who keep reinventing the wheel.

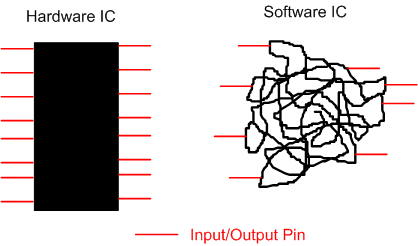

One of the beefs I’ve heard over the years is “why can’t you software weenies be like the hardware guys?” They mastered “reusability” via integrated circuits (ICs) a looong time ago.

One difference between a hardware IC and a software IC is that the number of input/output (IO) pins on a physical chip is FIXED. Because of the malleability and ephemeral nature of software, the number of IO “pins” is in constant flux – usually increasing over time as the software is created.

Even when a software IC is considered “finished and ready for reuse” (LOL), unlike a hardware IC, the documentation on how to use the dang thing is almost always lacking and users have to pore through 1000s of lines of code to figure out if and how they can integrate the contraption into their own quagmire.

Alas, the software guys can master “reusability” like the hardware guys, but the discipline and time required to do so just isn’t there. With the increasing size of software systems being built today, the likelihood of software guys and their managers overcoming the discipline-time double whammy can be summarized as “not very”.

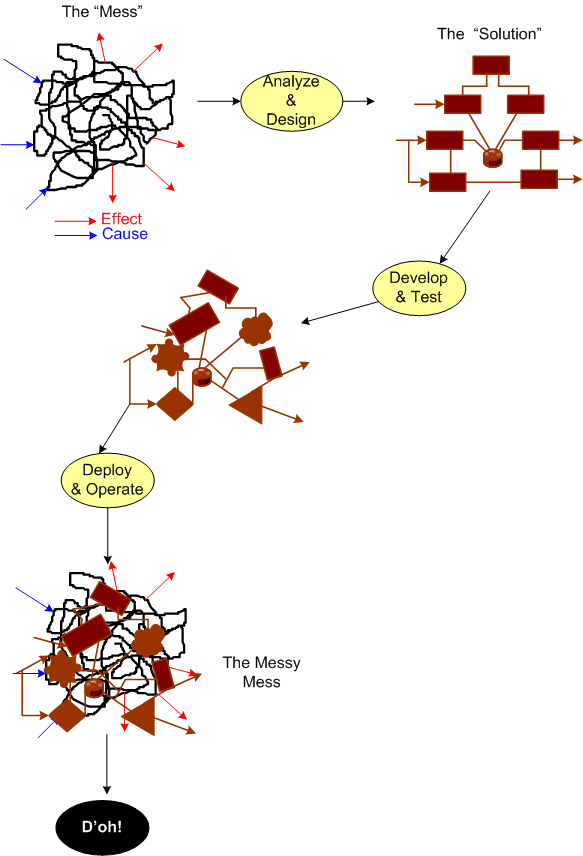

Messy Mess

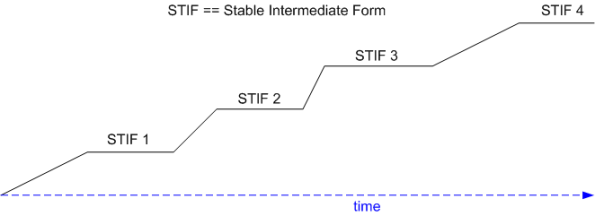

A Bunch Of STIFs

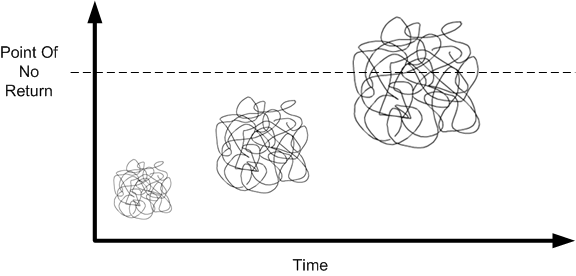

Grady Booch states that good software architectures evolve incrementally via a progressive sequence of STable Intermediate Forms (STIFs). At each point of equilibrium, the “released” STIF is exercised by an independent group of external customers and/or internal testers. Defects, unneeded functionality, missing functionality, and unmet “ilities” are ferreted out and corrected early and often.

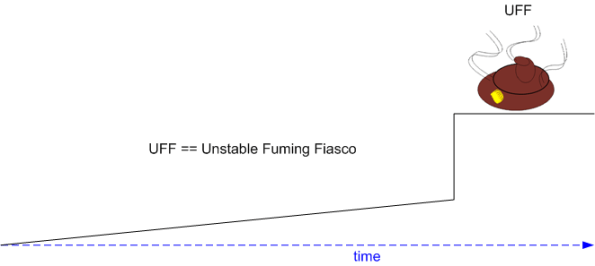

The alternative to a series of STIFs is the ubiquitous, one-shot, Unstable Fuming Fiasco (UFF):

Note: After I converted this draft post into a publishable one and queued it up, I experienced a sense of deja-vu. As a consequence, I searched back through my draft (91) and published (987) post lists. I found this one. D’oh! and LOL! I’m sure I’ve repeated myself many times during my blogging “career”, but hey, a steady drumbeat is more effective than a single cymbal crash. No?

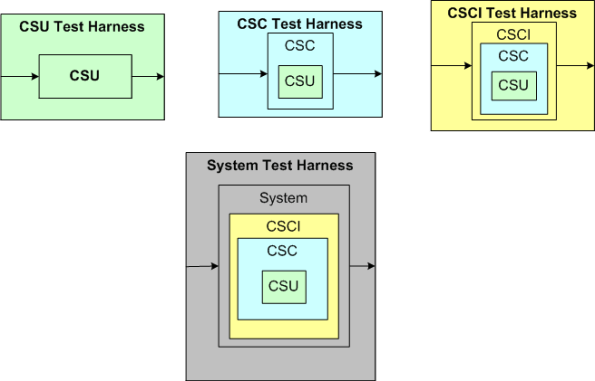

Levels Of Testing

Using three equivalent UML class diagrams, the graphic below conveys how to represent a system design using the “C” terminology of the aerospace and defense industry. On the left, the system “contains” CSCIs and CSCIs contain CSCs, etc. In the middle, the system “has” CSCIs. On the right, CSCIs “reside within” the system.

Leaving aside the question of “what the hell’s a unit?”, let’s explore four “levels of testing” with the aid of the multi-graphic below that uses the “reside within” model.

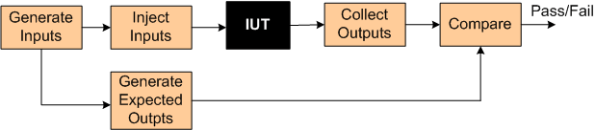

In order to test at any given level, some sort of test harness is required for that level. The test harness “system” is required to:

- generate inputs (for a CSU, CSC, CSCI, or the system),

- inject the inputs into the “Item Under Test” (IUT: a CSU, CSC, CSCI, or the system),

- collect the actual outputs from the IUT,

- compare the actual outputs with expected outputs,

- declare success or failure for each test.
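The five harness duties listed above can be sketched in a few lines of Python. This is only a minimal illustration, not a real aerospace-grade harness: the names `Case`, `run_harness`, and the toy item under test `saturating_add` are all hypothetical, and the inputs are hand-written rather than generated.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Iterable, Tuple


@dataclass
class Case:
    """One test: a name, the inputs to inject, and the expected output."""
    name: str
    inputs: Tuple[Any, ...]
    expected: Any


def run_harness(iut: Callable[..., Any], cases: Iterable[Case]) -> Dict[str, bool]:
    """Inject each case into the IUT, collect the output, compare, and declare a verdict."""
    verdicts: Dict[str, bool] = {}
    for case in cases:
        try:
            actual = iut(*case.inputs)               # inject inputs, collect actual output
        except Exception:
            verdicts[case.name] = False              # a crash counts as a failure
            continue
        verdicts[case.name] = actual == case.expected  # compare, then declare
    return verdicts


# Hypothetical CSU-level item under test (an assumption for this sketch).
def saturating_add(a: int, b: int, limit: int = 255) -> int:
    return min(a + b, limit)


# "Generate inputs": hand-written here; a real harness might synthesize them.
cases = [
    Case("in_range", (100, 50), 150),
    Case("saturates", (200, 100), 255),
]
results = run_harness(saturating_add, cases)
```

The same `run_harness` shape applies at every level; what changes as you climb from CSU to system is how hard it becomes to produce `inputs` and `expected` values with enough fidelity.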

When planning a project up front, it’s easy to issue the mandate: “we’ll formally test and document at all four levels; progressing from the bottom CSU level up to the system level”. However, during project execution, it’s tough to follow “the plan” when the schedule starts to, uh, expand, and pressure mounts to “just ship it, damn it!”.

Because:

- the number of IUTs in a level decreases as one moves up the ladder of abstraction (there’s only a single IUT at the top – the system),

- the (larger and fewer) IUTs at a given level are richer in complex behavior than the (smaller and more plentiful) IUTs at the next lower level,

- it is expensive to develop high fidelity, manual and/or automated test harnesses for each and every level,

- others?

there’s often a legitimate ROI-based reason to eschew all levels of formal testing except at the system level. That can be OK as long as it’s understood that defects found during final system level testing will be more difficult and time-consuming ($$$) to localize and repair than if lower levels of isolated testing had been performed prior to system testing.

To mitigate the risk of increased time to localize/repair from skipping levels of testing, developers can build in test visibility points that can be turned on/off during operation.

The tradeoff here is that designing in an extensive set of runtime controllable test points adds complexity, code, and additional CPU/memory loading to both the system and test harness software. To top it off, test point activation is often needed most when the system is being stressed with capacity test scenarios where the nastiest of bugs tend to surface – but the additional CPU and memory load imposed on the system/harness may itself cause tests to fail and disguise bugs so they look like they are located where they are not. D’oh! Ain’t life a be-otch?
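Here is one way a runtime-switchable test point might look, as a rough Python sketch. The `ProbeRegistry` class, the `filter_stage` function, and the `"filter.out"` point name are all made up for illustration. The value to record is passed as a callable so that a disabled point costs little more than a dictionary lookup, which softens (but does not eliminate) the loading problem described above.

```python
from typing import Any, Callable, Dict, List, Tuple


class ProbeRegistry:
    """Named test visibility points that can be switched on/off during operation."""

    def __init__(self) -> None:
        self._enabled: Dict[str, bool] = {}
        self.records: List[Tuple[str, Any]] = []

    def enable(self, name: str) -> None:
        self._enabled[name] = True

    def disable(self, name: str) -> None:
        self._enabled[name] = False

    def probe(self, name: str, make_value: Callable[[], Any]) -> None:
        # When the point is off, make_value is never called, so no snapshot is built.
        if self._enabled.get(name, False):
            self.records.append((name, make_value()))


points = ProbeRegistry()


def filter_stage(samples: List[int]) -> List[int]:
    """Hypothetical processing stage with one built-in test point on its output."""
    kept = [s for s in samples if s >= 0]
    points.probe("filter.out", lambda: list(kept))
    return kept


filter_stage([1, -2, 3])        # test point off: nothing is recorded
points.enable("filter.out")
filter_stage([4, -5])           # test point on: the stage output is captured
points.disable("filter.out")
filter_stage([6])               # off again: nothing more is recorded
```

Note that once a point is enabled, the snapshot copy and the growing `records` list are exactly the kind of extra CPU and memory load that can perturb a capacity test.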

The Hidden Preservation State

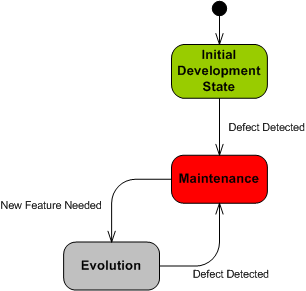

In the software industry, “maintenance” (like “system”) is an overloaded and overused catchall word. Nevertheless, in “Object-Oriented Analysis and Design with Applications”, Grady Booch valiantly tries to resolve the open-endedness of the term:

It is maintenance when we correct errors; it is evolution when we respond to changing requirements; it is preservation when we continue to use extraordinary means to keep an ancient and decaying piece of software in operation (lol! – BD00) – Grady Booch et al

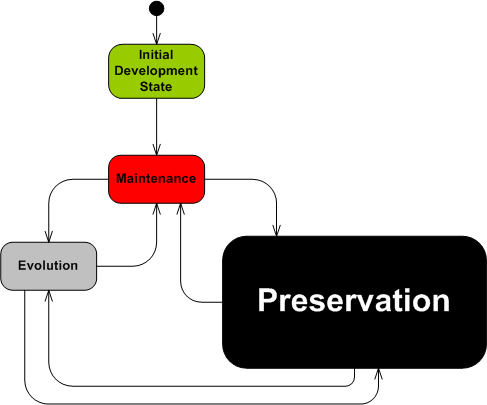

Based on Mr. Booch’s distinctions, the UML state machine diagram below attempts to model the dynamic status of a software-intensive system during its lifetime.

The trouble with a boatload of orgs is that their mental model and operating behavior can be represented thus:

What’s missing from this crippled state machine? Why, it’s the “Preservation” state, silly. In reality, it’s lurking ominously behind the scenes as the elephant in the room, but since it’s hidden from view to the unaware org, it only comes to the fore when a crisis occurs and it can’t be denied any longer. D’oh! Call in the firefighters.

Maybe that’s why Mr. “Happy Dance” Booch also sez:

… reality suggests that an inordinate percentage of software development resources are spent on software preservation.

For orgs that operate in accordance with the crippled state machine, “Preservation” is an expensive, time-consuming, resource-sucking sink. I hate when that happens.
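The gap between the two mental models can be sketched as a pair of transition tables. Only the three status names come from Booch’s quote; the event names (`requirements_changed`, `error_reported`, `decay_crisis`) and the transitions themselves are assumptions made up for this illustration and are not read off the diagrams.

```python
from enum import Enum, auto
from typing import Dict, Optional, Tuple


class Status(Enum):
    MAINTENANCE = auto()    # correcting errors
    EVOLUTION = auto()      # responding to changing requirements
    PRESERVATION = auto()   # extraordinary means to keep decaying software alive


Transitions = Dict[Tuple[Status, str], Status]

# Hypothetical events and transitions, for illustration only.
FULL_MODEL: Transitions = {
    (Status.MAINTENANCE, "requirements_changed"): Status.EVOLUTION,
    (Status.EVOLUTION, "error_reported"): Status.MAINTENANCE,
    (Status.MAINTENANCE, "decay_crisis"): Status.PRESERVATION,
    (Status.EVOLUTION, "decay_crisis"): Status.PRESERVATION,
}

# The crippled model: the same machine with every path into Preservation removed.
CRIPPLED_MODEL: Transitions = {
    key: target
    for key, target in FULL_MODEL.items()
    if target is not Status.PRESERVATION
}


def next_status(model: Transitions, current: Status, event: str) -> Optional[Status]:
    """Return the next status, or None when the model has no answer for the event."""
    return model.get((current, event))
```

With these tables, `next_status(CRIPPLED_MODEL, Status.MAINTENANCE, "decay_crisis")` returns `None`: the org’s model simply has no answer when the crisis arrives, while the full model lands in `Status.PRESERVATION`.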

Butt The Schedule

Did you ever work on a project where you thought (or knew) the schedule was pulled out of someone’s golden butt? Unlucky you, cuz Bulldozer00 never has.

Maintenance Cycles And Teams

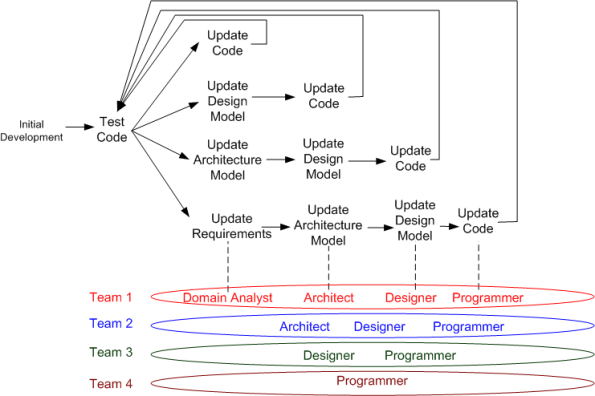

The figure below highlights the unglamorous maintenance cycle of a typical “develop-deliver-maintain” software product development process. Depending on the breadth of impact of a discovered defect or product enhancement, one of 4 feedback loops “should” be traversed.

In the simplest defect/enhancement case, the code is the only product artifact that must be updated and tested. In the most complex case, the requirements, architecture, design, and code artifacts all “should” be updated.
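As a rough sketch, the four loops can be modeled as “update the most upstream impacted artifact plus everything downstream of it”. The two end cases (code only, and all four artifacts) come from the text above; the two middle loops and the helper name `artifacts_to_update` are assumptions made for illustration.

```python
from typing import Dict, List

# Artifacts ordered from most upstream to most downstream.
ARTIFACTS: List[str] = ["requirements", "architecture", "design", "code"]


def artifacts_to_update(most_upstream_impacted: str) -> List[str]:
    """Return the impacted artifact plus every artifact downstream of it."""
    start = ARTIFACTS.index(most_upstream_impacted)  # raises ValueError if unknown
    return ARTIFACTS[start:]


# One feedback loop per possible "most upstream impacted" artifact: four in total.
loops: Dict[str, List[str]] = {name: artifacts_to_update(name) for name in ARTIFACTS}
```

For example, `loops["code"]` is the shortest loop (code only), while `loops["requirements"]` walks the whole chain.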

Of course, if all you have is code, or code plus bloated, superficial, write-once-read-never documents, then the choice is simple – update only the code. In the first case, since you have no docs, you can’t update them. In the second case, since your docs suck, why waste time and money updating them?

After the super-glorious business acquisition phase and during the mini-glorious “initial development” phase, the team is usually (but not always – especially in DYSCOs and CLORGs) staffed with the roles of domain analyst(s), architect(s), designer(s), and programmer(s). Once the product transitions into the yukky maintenance phase, the team may be scaled back and roles reassigned to other projects to cut costs. In the best case, all roles are retained at some level of budgeting – even if the total number of people is decreased. In the worst case, only the programmer(s) are kept on board. In the suicidal case, all roles but the programmer(s) are reassigned, but multiple manager type roles are added. (D’oh!)

Note that there does not have to be a one-to-one correspondence between a role and a person; one person can assume multiple roles. Unfortunately, the staff allocation, employee development, and reward systems in most orgs aren’t “designed” to catalyze and develop the added value of multi-role-capable people. That’s called the “employee-in-a-box” syndrome.

Well Known And Well Practiced

It’s always instructive to reflect on project failures so that the same costly mistakes don’t get repeated in the future. But because of ego-defense, it’s difficult to step back and semi-objectively analyze one’s own mistakes. Hell, it’s hard to even acknowledge them, let alone reflect on and learn from them. Learning from one’s own mistakes is effortful, and it often hurts. It’s much easier to glean knowledge and learning from the faux pas of others.

With that in mind, let’s look at one of the “others”: Hewlett-Packard. HP’s 2010 $1.2B purchase of Palm Inc. to seize control of its crown jewel WebOS mobile operating system turned out to be a huge technical, financial, and social debacle. As chronicled in this New York Times article, “H.P.’s TouchPad, Some Say, Was Built on Flawed Software”, here are some of the reasons given (by a sampling of inside sources) for the biggest technology flop of 2011:

- The attempted productization of cutting edge, but immature (freeze ups, random reboots) and slooow technology (WebKit).

- Underestimation of the effort to fix the known technical problems with the OS.

- The failure to get 3rd party application developers (surrogate customers) excited about the OS.

- The failure to build a holistic platform infused with conceptual integrity (lack of a benevolent dictator or unapologetic aristocrat).

- The loss of talent in the acquisition and the imposition of the wrong people in the wrong positions.

Hmm, on second thought, maybe there is nothing much to learn here. These failure factors have been known and publicized for decades, yet they continue to be well practiced across the software-intensive systems industry.

Obviously, people don’t embark on ambitious software development projects in order to fail downstream. It’s just that even while performing continuous due diligence during the effort, it’s still extremely hard to detect these interdependent project killers until it’s too late to nip them in the bud. Adding salt to the wound, history has inarguably and repeatedly shown that in most orgs, those who actually do detect and report these types of problematiques are either ignored (the boy who cried wolf syndrome) or ostracized into submission (the threat of excommunication syndrome). Note that several sources in the HP article wanted to “remain anonymous”.

What Would You Do?

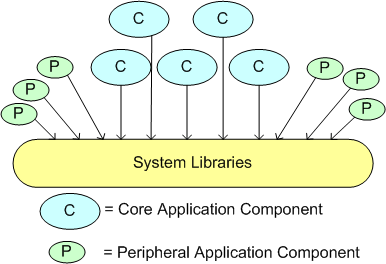

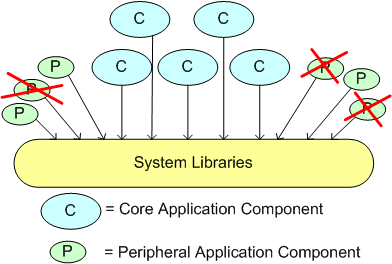

Assume that you’re working on a software system composed of a set of core and peripheral application components. Also assume that these components rest on top of a layered set of common system libraries.

Next, assume that you remove some functionality from a system library that you thought none of the app components were using. Finally, assume that your change broke multiple peripheral apps, and, after finding out, the apps’ writer asked you nicely to please restore the functionality.

What would you do?

Capers And Salmon

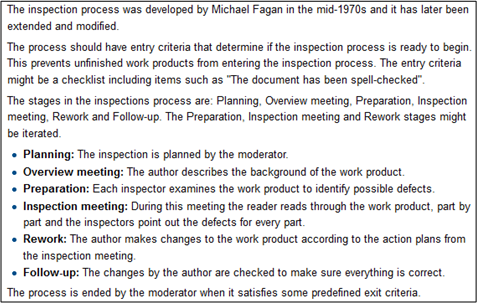

I like capers with my salmon. In general, I also like the work of longtime software quality guru Capers Jones. In this Dr. Dobb’s article, “Do You Inspect?”, the caped one extols the virtues of formal inspection. He (rightly) states that formal, Fagan-type inspections can catch defects early in the product development process – before they bury and camouflage themselves deep inside the product until they spring forth way downstream in the customer’s hands. (I hate when that happens!)

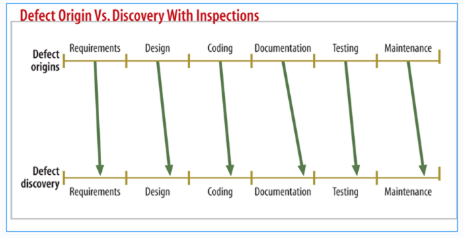

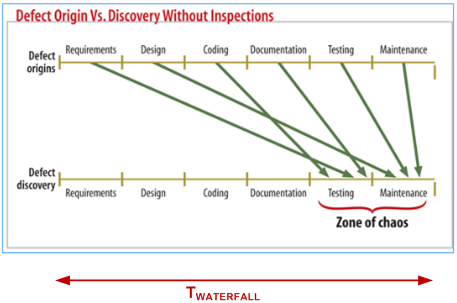

The pair of figures below (snipped from the article) graphically show what Capers means. Note that the timeline implies a long, sequential, one-shot, waterfall development process (D’oh!).

That’s all well and dandy, but as usual with mechanistic, waterfall-oriented thinking, the human aspects of doing formal inspections “wrong” aren’t addressed. Because formal inspections are labor-intensive (read as costly), doing artifact and code inspections “wrong” causes internal team strife, late delivery, and unnecessary budget drain. (I hate when that happens!)

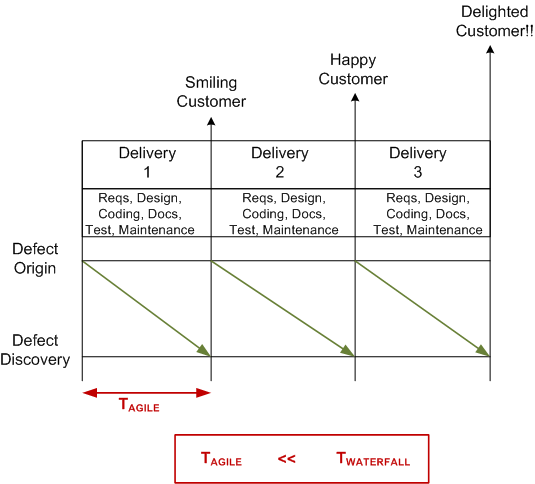

An agile-oriented alternative to boring and morale-busting “Fagan-inspections-done-wrong” is shown below. The short, incremental delivery time frames get the product into the hands of internal and external non-developers early and often. As the system grows in functionality and value, users and independent testers can bang on the system, acquire knowledge/experience/insight, and expose bugs before they intertwine themselves deep into the organs of the product. Working hands-on with a product is more exhilarating and motivating than paging through documents and PowerPoint slides in zombie or contentious meetings, no?

Of course, it doesn’t have to be all or nothing. A hybrid approach can be embraced: “targeted, lightweight inspections plus incremental deliveries with hands-on usage”.