Archive

Ultimately And Unsurprisingly

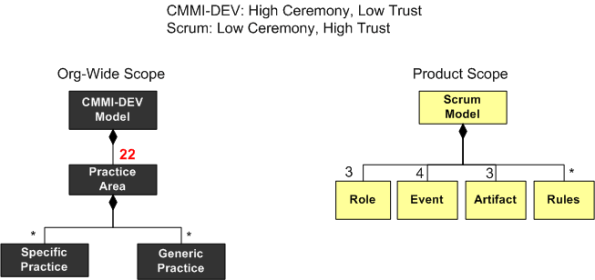

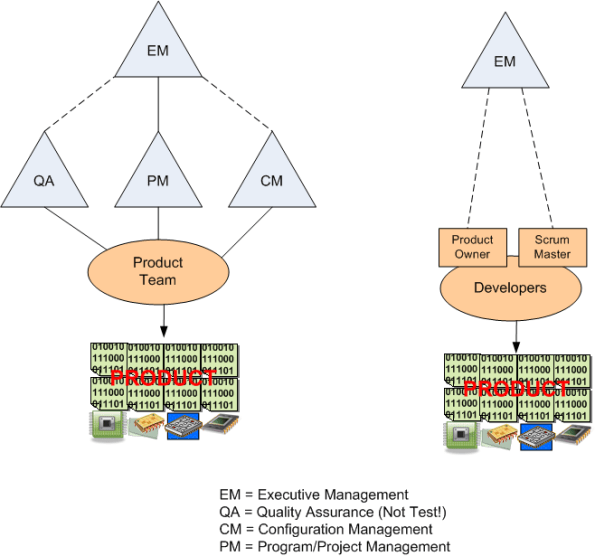

Take a look at the latest, dorky, BD00 diagram below. The process model on the left is derived from the meaty, 482-page CMMI-DEV SEI Technical Report. The model on the right is derived from the lean, 16-page Scrum Guide.

Comparing CMMI-DEV and Scrum may seem like comparing apples to oranges, but it’s my blog and I can write (almost) whatever I want on it, no?

Don’t write what you know, write what you want to know – Jerry Weinberg

The overarching purpose of both process frameworks is to help orgs develop and sustain complex products. As you can deduce, the two approaches for achieving that purpose appear to be radically different.

The CMMI-DEV model is composed of 22 process areas, each of which has a number of specific and generic practices. Goodly or badly, note that the word “practice” dominates the CMMI-DEV model.

Unlike the “practice” dominated CMMI-DEV model, the Scrum model elements are diverse. Scrum’s first class citizens are roles (people!), events, artifacts, and the rules of the game that integrate these elements into a coherent socio-technical system. In Scrum, as long as the rules of the game are satisfied, no practice is off limits for inclusion into the framework. However, the genius inclusion of time limits for each of Scrum’s 4 event types implicitly discourages heavyweight practices from being adopted by Scrum implementers and practitioners.

Of course, following either model or some hybrid combo can lead to product quality/time/budget success or failure. Aficionados on both sides of the fence publicly tout their successes and either downplay their failures (“they didn’t understand or really follow the process!”) or they ignore them outright. As everyone knows, there are just too many freakin’ metaphysical factors involved in a complex product development effort. Ultimately and unsurprisingly, success or failure comes down to the quality of the people participating in the game – and a lot of luck. Yawn.

Hurry! Hurry!

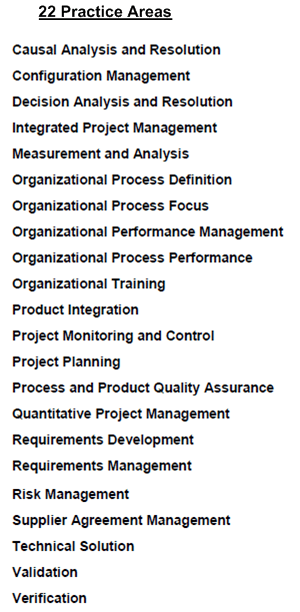

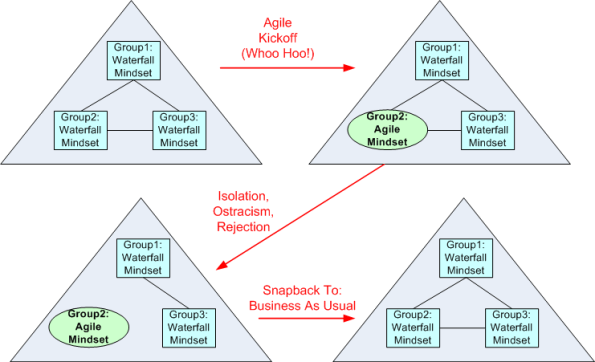

Lots of smart and sincere software development folks like Ron Jeffries, Jim Coplien, Scott Ambler, Bob Marshall, Adam Yuret, etc. have recently been lamenting the dumbing-down and commercialization of the “agile” brand. Since I get e-mails like the one below on a regular basis, I can deeply relate to their misery.

Hurry! Hurry! After just 2 days of effort and a measly 1300 beaners of “investment”, you’ll be fully prepared to lead your next software development project into the promised land of “under budget, on schedule, exceeds expectations”.

Whoo Hoo! My new SCRUM Master certificate is here! My new SCRUM Master certificate is here!

Marginalizing The Middle

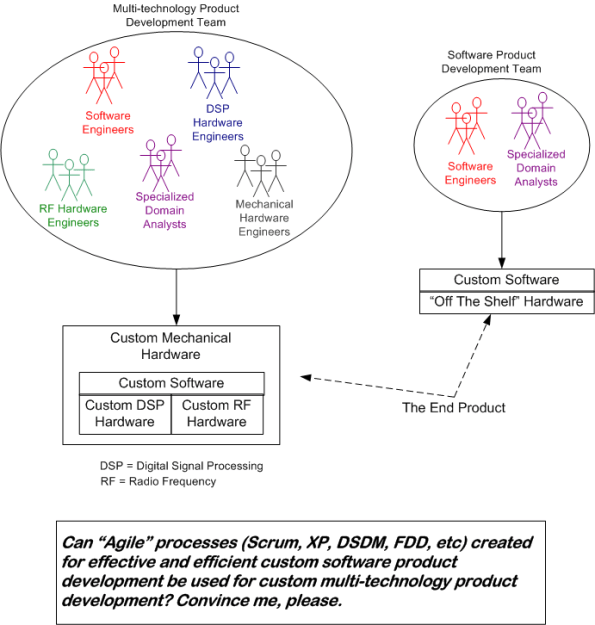

Because they unshackle development teams from heavyweight, risk-averse, plan-drenched, control-obsessed processes promoted by little PWCE Hitlers and they increase the degrees of freedom available to development teams, agile methods and mindsets are clearly appealing to the nerds in the trenches. However, in product domains that require the development of safety-critical, real-time systems composed of custom software AND custom hardware components, the risk of agile failure is much greater than in traditional IT system development – from which “agile” was born. Thus, a boatload of questions come to mind and my head starts to hurt when I think of the org-wide social issues associated with attempting to apply agile methods in this foreign context:

Will the Quality Assurance and Configuration Management specialty groups, whose whole identity is invested in approving a myriad of documents through complicated submittal protocols and policing compliance to existing heavyweight policies/processes/procedures become fearful obstructionists because of their reduced importance?

Will penny-watching, untrusting executives who are used to scrutinizing planned-vs-actual schedules and costs in massive Microsoft Project and Excel files via EVM (Earned Value Management) feel a loss of importance and control?

Will rigorously trained, PMI-indoctrinated project managers feel marginalized by new, radically different roles like “Scrum Master”?

Note: I have not read the oxymoronically titled “Integrating CMMI and Agile Development” book yet. If anyone has, does it address these ever so important, deep-seated, social issues? Besides successes, does it present any case studies in failure?

… there is nothing more difficult to carry out, nor more doubtful of success, nor more dangerous to handle, than to initiate a new order of things. For the reformer makes enemies of all those who profit by the old order, and only lukewarm defenders in all those who would profit by the new order… – Niccolo Machiavelli

Citizen CANES

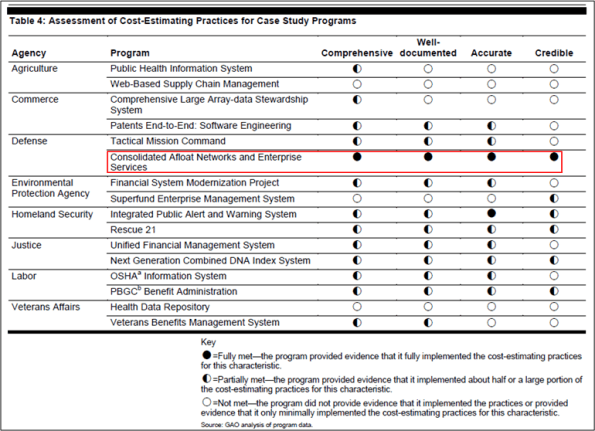

On the same day that the US Government Accountability Office (GAO) released its “Software Development: Effective Practices And Federal Challenges In Applying Agile Methods” report, it also released a report titled “INFORMATION TECHNOLOGY COST ESTIMATION: Agencies Need to Address Significant Weaknesses in Policies and Practices”. In this report, the GAO compared cost estimation policies and procedures to best practices at eight agencies. It also reviewed the documentation supporting cost estimates for 16 major investments at those eight agencies, representing about $51.5 billion of the planned IT spending for fiscal year 2012. The table below summarizes the GAO findings.

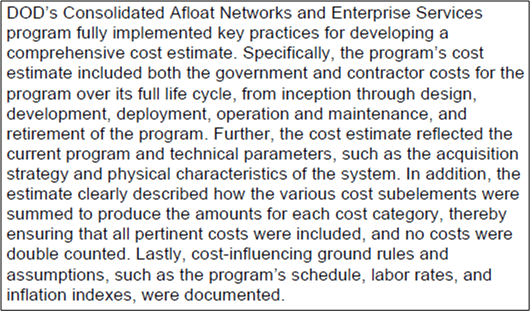

As you can see, only one out of sixteen programs fully met the mighty GAO criteria for “effective” cost estimation: the Navy’s “Consolidated Afloat Networks and Enterprise Services” (CANES) investment. Here’s the GAO’s glowing, bureaucratic-speak assessment of the citizen CANES cost estimation performance as of July 2012:

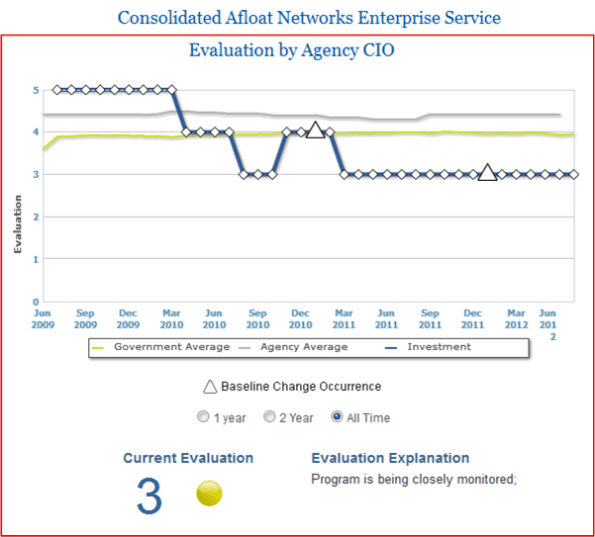

Out of curiosity, I googled the exemplar CANES program. Here’s what I found on the US government’s “IT Dashboard” website:

Note that after debuting with a rating of 5 (Low Risk) in 2009, CANES is currently rated at 3 (medium risk) and is being “closely monitored” by some higher-ups in the infallible chain of command.

Of course, no one, not even that omniscient and omnipresent devil BD00, can tell what will happen to CANES in the future. The point of this post is that spending lots of money and time on meticulous cost estimation to satisfy some authority’s arbitrary and subjective criteria (comprehensive, well-documented, accurate, credible) doesn’t guarantee squat about the future. It does, however, provide a temporary and comfortable illusion of control that “official watchers” crave. We can call it the Linus-blanket effect. Maybe coarser and less comprehensive estimation techniques can work just as well or better?

Comprehensiveness is the enemy of comprehensibility – Martin Fowler

Snapback To “Business As Usual”

Over the years, I’ve read quite a few terrific and insightful reports from the Government Accountability Office (GAO) on the state of several big, software-intensive, government programs. The GAO is the audit, evaluation, and investigative arm of the US Congress. Its mission is to:

help improve the performance and accountability of the federal government for the American people. The GAO examines the use of public funds; evaluates federal programs and policies; and provides analyses, recommendations, and other assistance to help Congress make informed oversight, policy, and funding decisions.

In a newly released report titled “Software Development: Effective Practices And Federal Challenges In Applying Agile Methods”, the GAO communicated the results of a study it performed on the success of using “agile” software methods in five agencies (a.k.a. bureaucracies): the Departments of Commerce, Defense, and Veterans Affairs, the Internal Revenue Service, and the National Aeronautics and Space Administration.

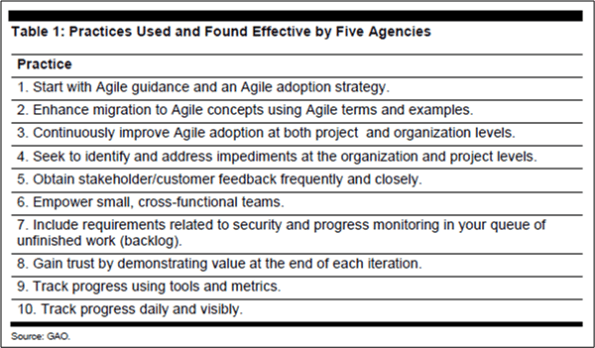

The GAO report deems these 10 best practices as effective for taking an agile approach:

Yawn. Every time I read a high-falutin’ list like this, I’m hauntingly reminded of what Chris Argyris essentially says:

Most advice given by “gurus” today is so abstract as to be un-actionable.

The GAO report also found more than a dozen challenges with the agile approach for federal agencies:

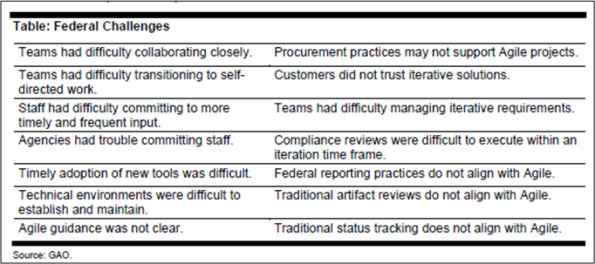

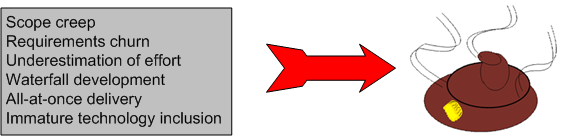

Again, yawn. These are not only federal challenges…. they’re HUGE commercial challenges as well. When the whole borg infrastructure, its policies, its protocols, its (planning, execution, reporting) procedures, and most importantly, its sub-group mindsets are steadfastly waterfall-dominated, here’s what usually happens when “agile” is attempted by a courageous borg sub-group:

D’oh! I hate when that happens.

OMITTED ACTIVITIES!

The best book I’ve read (so far) on software estimation is Steve McConnell’s “Software Estimation: Demystifying the Black Art“. Steve is one of the most pragmatic technical authors I know. His whole portfolio of books is worth delving into.

Prior to describing many practical and “doable” estimation practices, Steve presents a dauntingly depressive list of estimation error sources:

- Unstable requirements

- Unfounded optimism

- Subjectivity and bias

- Unfamiliar application domain area

- Unfamiliar technology area

- Incorrect conversion from estimated time to project time (for example, assuming the project team will focus on the project eight hours per day, five days per week)

- Misunderstanding of statistical concepts (especially adding together a set of “best case” estimates or a set of “worst case” estimates)

- Budgeting processes that undermine effective estimation (especially those that require final budget approval in the wide part of the Cone of Uncertainty)

- Having an accurate size estimate, but introducing errors when converting the size estimate to an effort estimate

- Having accurate size and effort estimates, but introducing errors when converting those to a schedule estimate

- Overstated savings from new development tools or methods

- Simplification of the estimate as it’s reported up layers of management, fed into the budgeting process, and so on

- OMITTED ACTIVITIES!

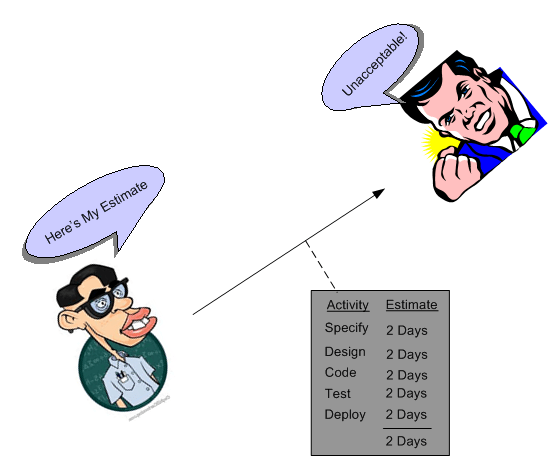
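To put the “misunderstanding of statistical concepts” bullet in numbers, here is a minimal sketch in Python. Everything in it is an assumption made up for illustration: ten identical tasks, hypothetical best/most likely/worst estimates of 4/5/12 days, and a triangular distribution for each task. It is not McConnell’s method, just a quick simulation of how rarely a project lands on the sum of its best cases (or the sum of its worst cases).

```python
import random

# Hypothetical three-point estimates in days: (best case, most likely, worst case).
TASKS = [(4, 5, 12)] * 10


def simulate_totals(tasks, runs=20_000, seed=42):
    """Return the sorted simulated project totals, one total per run."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    totals = []
    for _ in range(runs):
        # random.triangular takes its arguments as (low, high, mode).
        totals.append(sum(rng.triangular(best, worst, likely)
                          for best, likely, worst in tasks))
    return sorted(totals)


def chance_of_finishing_within(totals, budget):
    """Fraction of simulated runs whose total is at or under the budget."""
    return sum(1 for t in totals if t <= budget) / len(totals)


totals = simulate_totals(TASKS)
sum_best = sum(best for best, _, _ in TASKS)        # 40 days
sum_likely = sum(likely for _, likely, _ in TASKS)  # 50 days
sum_worst = sum(worst for _, _, worst in TASKS)     # 120 days
median = totals[len(totals) // 2]

for label, budget in [("best cases", sum_best),
                      ("most likely cases", sum_likely),
                      ("worst cases", sum_worst)]:
    share = chance_of_finishing_within(totals, budget)
    print(f"sum of {label}: {budget} days, met in {share:.1%} of runs")
print(f"median simulated total: {median:.1f} days")
```

Under these assumptions, even the sum of the “most likely” values is rarely met, because each task’s estimate is skewed toward overruns. The sum of the worst cases errs just as badly in the other direction.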

But wait! We’re not done. That last screaming bullet, OMITTED ACTIVITIES!, needs some elaboration:

- Glue code needed to use third-party or open-source software

- Ramp-up time for new team members

- Mentoring of new team members

- Management coordination/manager meetings

- Requirements clarifications

- Maintaining the scripts required to run the daily build

- Participation in technical reviews

- Integration work

- Processing change requests

- Attendance at change-control/triage meetings

- Maintenance work on previous systems during the project

- Performance tuning

- Administrative work related to defect tracking

- Learning new development tools

- Answering questions from testers

- Input to user documentation and review of user documentation

- Review of technical documentation

- Reviewing plans, estimates, architecture, detailed designs, stage plans, code, test cases

- Vacations

- Company meetings

- Holidays

- Sick days

- Weekends

- Troubleshooting hardware and software problems
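A rough feel for how much the omitted activities and the “eight hours per day, five days per week” assumption can distort a schedule comes from a few lines of arithmetic. The sketch below is Python with made-up numbers: a 1000-hour task, 5 focused hours per day, and 30 days off per year are all hypothetical, and the function name `calendar_weeks` is mine, not McConnell’s.

```python
WORKDAYS_PER_YEAR = 52 * 5  # 260 weekdays, before any time off


def calendar_weeks(effort_hours, focus_hours_per_day, days_off_per_year=0):
    """Convert ideal effort (hours, one person) into elapsed calendar weeks."""
    available_days = WORKDAYS_PER_YEAR - days_off_per_year
    # Average focused hours per calendar week, spread over the whole year.
    focus_hours_per_week = focus_hours_per_day * available_days / 52
    return effort_hours / focus_hours_per_week


# Naive plan: every weekday of the year, eight fully focused hours.
naive = calendar_weeks(1000, focus_hours_per_day=8)

# Hypothetical reality: meetings, reviews, and questions leave 5 focused
# hours per day; vacations, holidays, and sick days remove 30 days per year.
realistic = calendar_weeks(1000, focus_hours_per_day=5, days_off_per_year=30)

print(f"naive:     {naive:.1f} weeks")
print(f"realistic: {realistic:.1f} weeks ({realistic / naive:.2f}x the naive figure)")
```

With these particular numbers the same 1000 hours of work stretches from 25 weeks to roughly 45, and that is before a single requirement changes.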

It’s no freakin’ wonder that the vast majority of software-intensive projects are underestimated, no? To add insult to injury, the unspoken pressure from the “upper layers” to underestimate the activities that ARE actually included in a project plan seals the deal for “perceived” future failure, no? It’s also no wonder that after a few years, good technical people who feel that hands-on creative work is their true calling start agonizing over whether to get the hell out of such a failure-inducing system and make the move on up into the world of politics, one-upmanship, feigned collaboration, dubious accomplishment, and strategic self-censorship. Bummer for those people and the orgs they dwell in. Bummer for “the whole”.

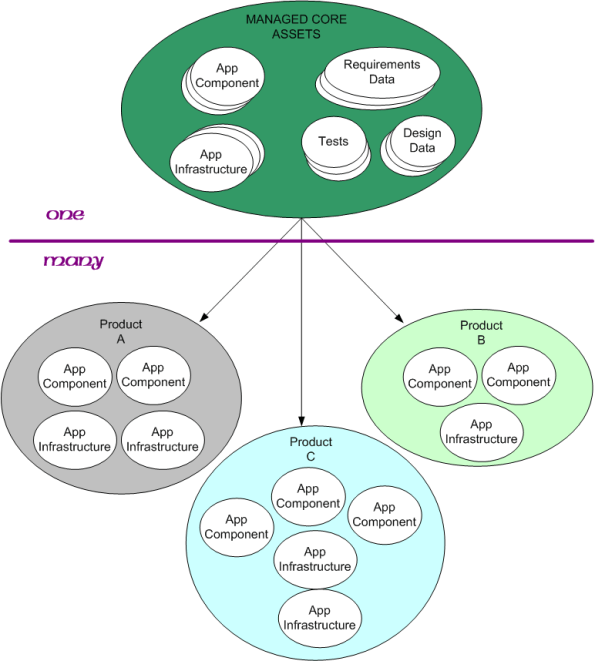

Out Of One, Many

A Software Product Line (SPL) can be defined as “a set of software-intensive systems that share a common, managed set of features satisfying the specific needs of a particular market segment or mission and that are developed from a common set of core assets in a prescribed way”.

The keys to developing and exploiting the value of an SPL are:

- specifying the interfaces and protocols between app components and infrastructure components,

- the granularity of the software components: 10-20K lines of code,

- the product instantiation and test process,

- the disciplined management of changes to the app and infrastructure components,

- managing obsolescence of open source components/libs used in the architecture,

- keeping the requirements and design data in synch with the code base.

Any others?
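As a rough illustration of the first and third keys (explicit interfaces between app and infrastructure components, and product instantiation from shared core assets), here is a minimal Python sketch. All of the names in it (`Transport`, `InMemoryTransport`, `TrackReporter`, `HealthMonitor`, `CORE_ASSETS`, `instantiate_product`) are hypothetical and invented for this example; a real product line would be far bigger and would have its own instantiation tooling.

```python
from typing import Protocol


class Transport(Protocol):
    """Infrastructure interface that every app component codes against."""

    def send(self, topic: str, payload: str) -> None: ...


class InMemoryTransport:
    """Infrastructure core asset: records messages instead of using a network."""

    def __init__(self) -> None:
        self.sent = []

    def send(self, topic: str, payload: str) -> None:
        self.sent.append((topic, payload))


class TrackReporter:
    """App core asset: knows only the Transport interface."""

    def __init__(self, transport: Transport) -> None:
        self._transport = transport

    def run(self) -> None:
        self._transport.send("tracks", "track report")


class HealthMonitor:
    """Another app core asset, optional per product."""

    def __init__(self, transport: Transport) -> None:
        self._transport = transport

    def run(self) -> None:
        self._transport.send("health", "all systems nominal")


# The shared catalog of app components that products are assembled from.
CORE_ASSETS = {"track_reporter": TrackReporter, "health_monitor": HealthMonitor}


def instantiate_product(feature_names, transport):
    """Build one product as a selection of core assets wired to one transport."""
    return [CORE_ASSETS[name](transport) for name in feature_names]


# Two products from the same assets: a basic one and a deluxe one.
basic_bus, deluxe_bus = InMemoryTransport(), InMemoryTransport()
basic = instantiate_product(["track_reporter"], basic_bus)
deluxe = instantiate_product(["track_reporter", "health_monitor"], deluxe_bus)
for component in basic + deluxe:
    component.run()
print(basic_bus.sent)
print(deluxe_bus.sent)
```

Because the app components depend only on the `Transport` interface, the infrastructure underneath can be swapped or upgraded without touching them, which is where the disciplined change management in the list earns its keep.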

Not Applicable?

No Lessons Learned II

Since my post on the JTRS fiasco generated more blog traffic than usual, this post is based on the same theme – the failure of a big, multi-technology, socio-technical project. Today’s topic is the termination of the Army’s massive Future Combat Systems (FCS) program in 2009 after 6 years of development and gobs of spent taxpayer money. Actually, some face-saving was achieved on this boondoggle since the monolithic FCS program was replaced by several smaller, fragmented programs.

From a slew of pages I bookmarked on Delicious.com over the years, I pieced together the following timeline of events for the FCS program.

1) The FCS program is formally kicked off in 2003, with much fanfare, of course.

2) In August 2005, the program met 100% of the criteria in its most important milestone to date, Systems of Systems Functional Review. (Whoo Hoo, the “paper” docs were perfect!)

3) January 24, 2008. Congressional investigators express “concern” that the lines of code have nearly doubled since development began in 2003. And they question the Army’s oversight of a far-flung project involving more than 2,000 developers and dozens of contractors working across the nation. The Government Accountability Office, Congress’s watchdog, says the Army underestimated the undertaking. When the software project began, investigators say the Army estimated it needed 33.7 million lines of code; it’s now 63.8 million — about three times the number for the Joint Strike Fighter aircraft program. The software program “started prematurely. They didn’t have a solid knowledge base,” said Bill Graveline, a GAO official involved in the government’s ongoing review. “They didn’t really understand the requirements.”

4) Mar 18, 2008. Setbacks in the Army’s development of its software requirements for FCS due to the immaturity of the program and the aggressive pace of the Army’s development schedule, however, have led to delays, errors and omissions in the development of essential software packages for the program, while flaws in those packages have in turn delayed or threatened other development efforts, GAO said. Developers for five major software packages, for example, said that the high-level requirements they received from the Army were poorly defined, late or missing during the development process, GAO said.

5) June 13, 2008. Possible budget cuts, a change of administration and the Pentagon’s focus on supporting operations in Iraq and Afghanistan have ratcheted up pressure on the program just when it is showing tangible signs of progress after five years of work and almost $15 billion in taxpayer money invested.

6) Mar 02, 2009. The systems integrators heading the Army’s Future Combat Systems program have confirmed that development of the hardware and software required for the program’s vehicles and weapons systems is proceeding as planned. (Boeing Co. and Science Applications International Corp. are the lead systems integrators for the $87 billion FCS program.)

7) June 23, 2009. The memorandum issued confirms the recommendations made earlier this year by Defense Secretary Robert Gates to replace the single, giant program with a number of smaller modernization efforts.

FCS, particularly the manned combat vehicle portion, did not reflect the anti-insurgency lessons learned in Iraq and Afghanistan. – Robert Gates

So, let’s see what went wrong: ambiguous and inconsistent and misunderstood requirements, gross underestimation of effort, immature technologies, “aggressive” schedules. Sound familiar? Yawn. Same old, same old.

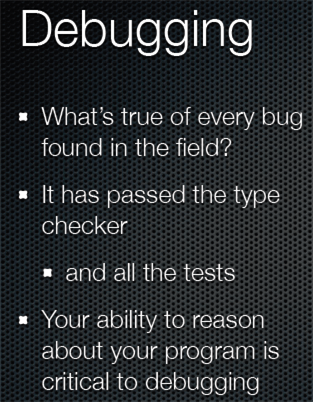

Reasonable Debugging

In Rich Hickey’s QCon talk, “Simple Made Easy”, he hoisted this slide:

So, what can enhance one’s ability to “reason about” a program, especially a big, multi-threaded, multi-processing beast that maps onto a heterogeneous hodge-podge network of hardware and operating systems? Obviously, a stellar memory helps, but come on, how many human beings can remember enough detail in a >100K line code base to be able to debug field turds effectively and efficiently?

How about simplicity of design structure (whatever that means)? How about the deliberate and intentional use of a small set of nested, recurring patterns of interaction – both of the GoF kind and/or application specific ones? Or, shhhh, don’t say it too loudly, how about a set of layered blueprints that allow you and others to mentally “fly” over the software quickly at different levels of detail and from different aspect angles, without having to slog through reams of “flat” code?

Do you, your managers, and/or your colleagues value and celebrate: simplicity of design structure; use of a small set of patterns of interaction; use of a set of blueprints? Do you and they walk the talk? If not, then why not? If so, then good for you, your org, your colleagues, your customers, and your shareholders.