Bugs Or Cash

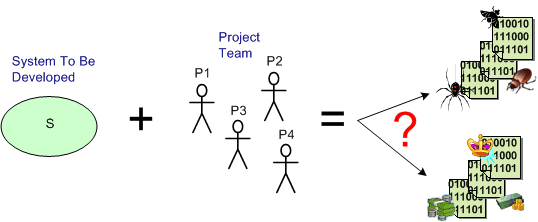

Assume that you have a system to build and a qualified team available to do the job. How can you increase the chance that you’ll build an elegant and durable money maker and not a money sink that may put you out of business?

The figure below shows one way to fail. It’s the well-worn and oft-repeated ready-fire-aim strategy: you blast the team at the project and hope for the best under the buzz phrase of the day, “emergent design”.

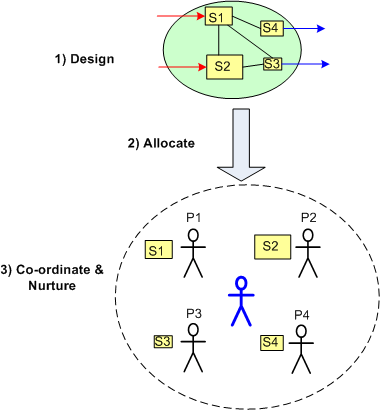

On the other hand, the figure below shows a necessary but not sufficient approach to success: design-allocate-coordinate. BTW, the blue stick dude at the center, not the top, of the project is you.

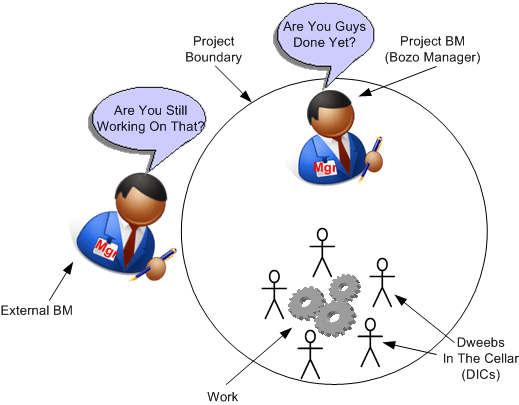

Are You Still Working On That?

It’s funny enough when you work for a one-dimensional manager (one dimension = schedule), but it’s even funnier when another 1D manager who has nothing to do with your project stops by to chit-chat and inevitably asks you:

Are you still working on that?

LOL! Coming from a 1D manager who has no idea what it takes (or should take) to finish a project, the question can be interpreted as: Since you’re not done, you’re lazy or you’re screwing up.

When the question pops up, try this Judo move:

Should I be done? How long should it have taken?

Or, you can be really nasty and retort with:

Yes, I am still working on it. Sorry, but it’s not a shallow and superficial management task like signing off on a document I haven’t read or attending an agenda-less meeting that I could check off on my TODO list.

Come on, I dare you.

Aggressive Substitution

One incredibly overused word heard repeatedly like a metronome across the vast corpo wasteland is “aggressive”. We will “aggressively pursue new opportunities”, “aggressively cut costs”, yada, yada, yada. It’s most commonly used by anointed BMs everywhere in long-winded inspirational sentences that contain its most beloved twin: “schedule”. Here’s what my corpo jargon decoder ring tells me it means:

Aggressive Schedule (AS) = Work your ass off at least 12 hours a day for months on end without receiving any overtime pay, and expect the same 1% yearly raise as the rest of the DICforce, who smartly exit the psychic prison after five to seven hours of work per day. Oh, and I’ll get a bonus if you meet schedule.

The AS(s) phrase is always wielded by someone with no real skin in the game except for the possibility of fewer stock options if the “S” isn’t met. Since chronic overuse of any word takes the sting out of it, how about creatively mixing it up from time to time with a synonym replacement:

- Barbaric schedule

- Contentious schedule

- Destructive schedule

- Disruptive schedule

- Disturbing schedule

- Intrusive schedule

- Pugnacious schedule

- Rapacious schedule

I can’t decide on my favorite surrogate replacement. It’s either “pugnacious” or “rapacious”. What’s yours?

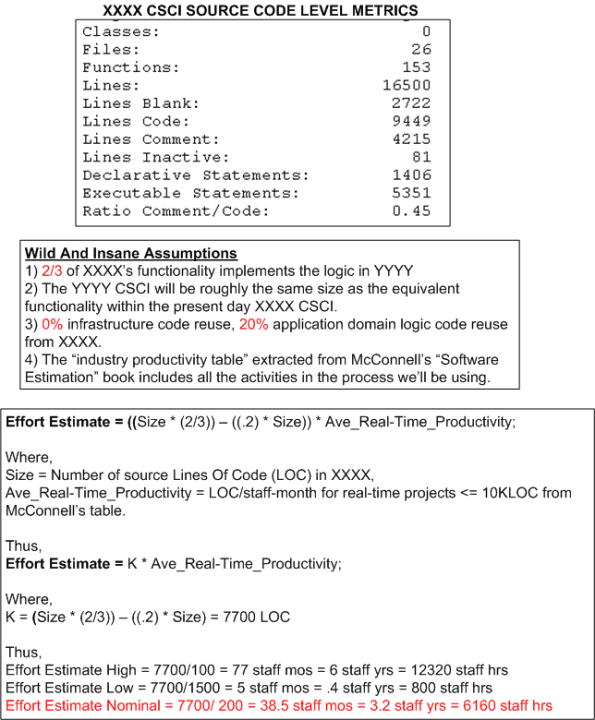

An Estimation Example

The figure below shows the derivation of an estimate of work in staff-hours to design/develop/test a Computer Software Configuration Item (CSCI) named YYYY. The estimate is based on the size of an existing CSCI named XXXX and the productivity numbers assigned to the “Real Time” category of software from the productivity chart in Steve McConnell’s “Software Estimation: Demystifying the Black Art”.

Of course, the simple equation used to compute effort, and every variable in it, can be challenged, but would doing so improve the accuracy of the range of estimates?
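To make the arithmetic concrete, here’s a minimal Python sketch of a size-over-productivity calculation that produces a best/worst range. The 30,000 LOC size, the 100 to 1,000 LOC per staff-month productivity range, and the 132 hours per staff-month conversion are all hypothetical placeholders, not the figure’s values and not McConnell’s chart.

```python
# Sketch: effort range = size / productivity range, converted to staff-hours.
# All numbers here are hypothetical placeholders, not values from the figure
# or from McConnell's productivity chart.

HOURS_PER_STAFF_MONTH = 132  # hypothetical conversion factor; orgs differ


def effort_range_staff_hours(size_loc, prod_low, prod_high,
                             hours_per_staff_month=HOURS_PER_STAFF_MONTH):
    """Return (best_case, worst_case) effort in staff-hours.

    size_loc  -- estimated size of the new CSCI (e.g., borrowed from an existing one)
    prod_low  -- pessimistic productivity, LOC per staff-month
    prod_high -- optimistic productivity, LOC per staff-month
    """
    if not 0 < prod_low <= prod_high:
        raise ValueError("need 0 < prod_low <= prod_high")
    best = size_loc / prod_high * hours_per_staff_month   # high productivity, fewer hours
    worst = size_loc / prod_low * hours_per_staff_month   # low productivity, more hours
    return best, worst


if __name__ == "__main__":
    # Hypothetical: assume YYYY is about the size of XXXX, 30,000 LOC
    best, worst = effort_range_staff_hours(30_000, prod_low=100, prod_high=1_000)
    print(f"YYYY effort: {best:,.0f} to {worst:,.0f} staff-hours")
```

Note how a 10x spread in productivity becomes a 10x spread in effort; no amount of fiddling with the equation shrinks that.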

Reuse Based Estimation

“It’s called estimation, not exactimation” – Scott Ambler

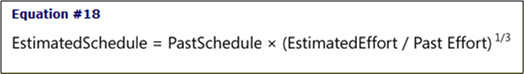

One of the pragmatically simple, down-to-earth equations in Steve McConnell’s terrific “Software Estimation” defines the schedule for a new software development project in terms of past performance as:

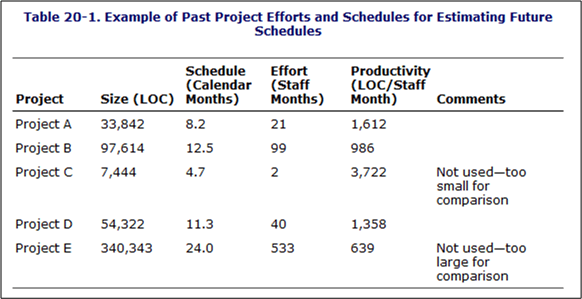

Of course, to use the equation to compute a guesstimate, you must have tracked and recorded past efforts along with the calendar times it took to complete those jobs, as the table below shows.
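Here’s a hedged Python sketch of that kind of history-based schedule estimate. It assumes the commonly cited cube-root form, new schedule = past schedule × (new effort / past effort)^(1/3), which may differ in detail from the figure, and the three past projects are invented. Running the equation against every past project yields a range rather than a single number.

```python
# Sketch: scale past projects' schedules to a new project's estimated effort.
# ASSUMPTION: cube-root form, which may not match the figure exactly:
#     new_schedule = past_schedule * (new_effort / past_effort) ** (1/3)
from statistics import mean


def schedule_from_past(past_schedule_months, past_effort, new_effort, exponent=1 / 3):
    """Scale one past project's calendar time by the ratio of efforts."""
    return past_schedule_months * (new_effort / past_effort) ** exponent


def schedule_range(history, new_effort, exponent=1 / 3):
    """Apply the equation to every past project; return (low, mean, high)."""
    estimates = [schedule_from_past(months, effort, new_effort, exponent)
                 for effort, months in history]
    return min(estimates), mean(estimates), max(estimates)


if __name__ == "__main__":
    # Hypothetical history: (effort in staff-months, calendar months)
    history = [(80, 10), (120, 11), (200, 15)]
    low, avg, high = schedule_range(history, new_effort=160)
    print(f"new schedule: {low:.1f} to {high:.1f} months (mean {avg:.1f})")
```

The whole thing hinges on the `history` list existing in the first place, which is exactly the problem.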

Not many orgs keep a running tab of past projects in an integrated, simple to use, easily accessible form like the above table, or do they? The info may actually be available someplace in the corpo data dungeon, but it’s likely fragmented, scattered, and buried within all kinds of different and incompatible financial forms and Microsoft Project files. Why is this the case? Because it’s a management task and thus no one’s responsible for doing it. In elegant corpo-speak, managers are responsible for “getting work done through others”. The catch phrase used to be “getting work done”, but to remove all ambiguity and increase clarity, the “through others” was cleverly or unconsciously tacked on.

How about you? How do you guesstimate effort and schedule?

Add Managers And Hope

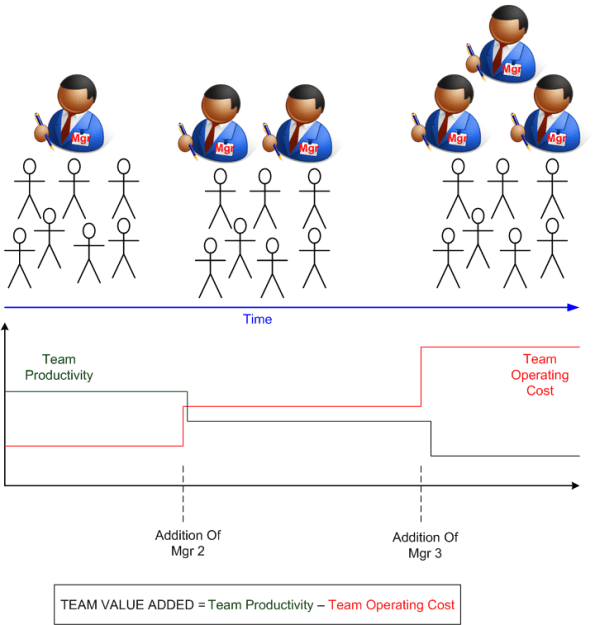

The figure below shows the result of two attempts to increase the productivity of a hypothetical DICteam. In this totally concocted and fictional example, the nervous dudes in the penthouse (not shown in the figure) keep adding specialized managers to the team to fill voids that they perceive are keeping performance from improving. However, since the SCOLs never baseline the TEAM_VALUE_ADDED metric before each brilliant move, or track its increase or decrease with time, they have no idea whether they have achieved their goal. Because SCOLsters think they’re infallible, they just auto-assume that their brilliant moves work out as expected.

Of course, it often turns out that SCOLster decisions and actions do more harm than good. As the graph in the figure for this bogus example shows, not only did the team operating cost rise with the addition of two new manager salaries, but team productivity also dropped because of the additional communication and coordination delays inserted into the system. Add a “hidden” operating cost due to the high likelihood of jockeying and infighting among the three BMs (to gain favor with the penthouse dudes), and system performance deteriorates further. Bummer.
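For the numerically inclined, here’s a toy Python version of the bogus example. The salaries, the team of eight DICs, and the use of pairwise communication channels, n(n-1)/2, as a stand-in for coordination overhead are all my assumptions, not data from the figure. It also does the one thing the SCOLs skip: it records a baseline before the “improvement”.

```python
# Toy "add managers and hope" arithmetic.
# ASSUMPTIONS (hypothetical, not from the figure): salaries, team size, and
# pairwise channels n*(n-1)/2 as a rough proxy for coordination overhead.


def channels(n):
    """Number of distinct pairwise communication paths among n people."""
    return n * (n - 1) // 2


def team_snapshot(devs, managers, dev_cost, mgr_cost):
    """Return (headcount, yearly operating cost, pairwise channels)."""
    n = devs + managers
    return n, devs * dev_cost + managers * mgr_cost, channels(n)


if __name__ == "__main__":
    DEVS, DEV_COST, MGR_COST = 8, 120_000, 150_000  # made-up numbers
    # Baseline first: one coordinator, then three BMs after two "brilliant moves"
    for label, mgrs in (("baseline", 1), ("after two moves", 3)):
        n, cost, paths = team_snapshot(DEVS, mgrs, DEV_COST, MGR_COST)
        print(f"{label:>16}: {n} people, ${cost:,}/yr, {paths} channels")
```

Two extra heads add 2 salaries but 19 extra communication paths, and none of them writes a line of product code.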

So how can SCOLs increase team performance without throwing more useless overhead BM bodies at the problem? For starters, they can clearly communicate the gaps they “see” to the single team coordinator and help him/her rise to the challenge by providing mentorship and advice. They also can replace the BM with a competent, non-BM (fat chance). They can also (heaven forbid) invest in better tools, infrastructure, and training for the one coordinator and team of DICmates – instead of investing that money in more BM specialists. Got any more performance increasing alternatives to the standard “add managers and hope” tactic?

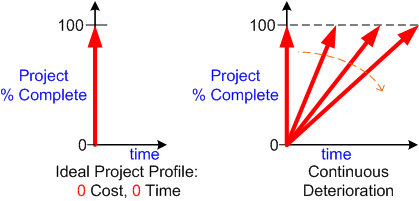

Zero Time, Zero Cost

In “The Politics Of Projects”, Robert Block states that orgs “don’t want projects, they want products”. Thus, the left side of the graph below shows the ideal project profile: zero cost and zero time. A twitch of Samantha Stevens’s nose and, voila, a marketable product appears out of thin air and the revenue stream starts flowin’ into the corpo coffers.

Based on a first-order linear approximation, every earthly product development org gets one of the performance lines on the right side of the figure. There are so many variables involved in the messy and chaotic journey from viable idea to product that predicting the slope and time-to-100-percent-complete end point of the performance line is often a crapshoot:

- Experience of the project team

- Cohesiveness of the project team

- Enthusiasm of the project team

- Clarity of roles and responsibilities of each team member

- Expertise in the product application domain

- Efficacy of the development tool set

- Quality of information available to, and exchanged between, project members

- Amount and frequency of meddling from external, non-project groups and individuals

- <Add your own performance influencing variable here>
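Under the first-order model, % complete is just slope × time, so time-to-100-percent is 100 / slope. A minimal Python sketch with made-up slopes shows how a modest uncertainty in slope, driven by the variables above, stretches into a big uncertainty in end date:

```python
# First-order (linear) progress model: % complete = slope * t.
# ASSUMPTION: the slope values below are hypothetical.


def linear_percent_complete(t, slope):
    """Percent complete after t weeks, capped at 100."""
    return min(100.0, slope * t)


def time_to_complete(slope):
    """Weeks to reach 100% under the linear model."""
    if slope <= 0:
        raise ValueError("slope must be positive")
    return 100.0 / slope


if __name__ == "__main__":
    # A 2.5x spread in slope (2 to 5 %/week) yields a 2.5x spread in end date
    for slope in (2.0, 3.0, 5.0):
        print(f"slope {slope} %/wk -> done in {time_to_complete(slope):.0f} weeks")
```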

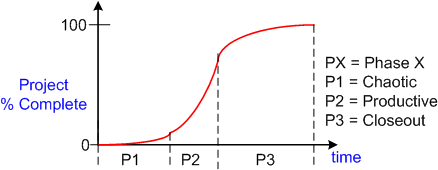

To a second-order approximation, the S-curve below shows real-world project performance as a function of time. The slope of the performance trajectory (% progress per unit time) is not constant, contrary to what the previous first-order linear model implies. It starts out small during the chaotic phase, increases during the productive stage, then decreases during the closeout phase. The objective is to minimize the time spent in phases P1, P2, and P3 without sacrificing quality or burning out the project team via overwork.
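One way to sketch such an S-curve is with a logistic function, whose slope is small at the start, peaks in the middle, and tapers off at the end. The logistic form and the rate and midpoint values below are my assumptions for illustration; the post doesn’t commit to any formula. Because a logistic never quite reaches 100%, the sketch treats 99% as “done”.

```python
# S-curve progress model using a logistic function.
# ASSUMPTIONS: the logistic shape, rate k, and midpoint t0 are illustrative.
import math


def s_curve(t, k, t0):
    """Percent complete at time t: slow start, fast middle, slow finish."""
    return 100.0 / (1.0 + math.exp(-k * (t - t0)))


def slope(t, k, t0):
    """Progress rate (% per unit time), the derivative of s_curve."""
    p = s_curve(t, k, t0) / 100.0
    return 100.0 * k * p * (1.0 - p)


def time_to_reach(percent, k, t0):
    """Invert the logistic: time at which progress hits percent (0 < percent < 100)."""
    return t0 - math.log(100.0 / percent - 1.0) / k


if __name__ == "__main__":
    K, T0 = 0.3, 20.0  # hypothetical rate and midpoint, in weeks
    for t in (5, 20, 35):  # chaotic, productive, closeout phases
        print(f"t={t:>2}: {s_curve(t, K, T0):5.1f}% done, "
              f"{slope(t, K, T0):4.2f} %/wk")
    print(f"99% complete at t={time_to_reach(99, K, T0):.1f} weeks")
```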

Assume (and it’s a bad assumption) that there’s an objective and accurate way of measuring “% complete” at any given time for a project. Now, assume that you’ve diligently tracked and accumulated a set of performance curves for a variety of large and small projects and a variety of teams over the years. Armed with this data and given a new project with a specific assigned team, do you think you could accurately estimate the time-to-completion of the new project? Why or why not?

Wishful And Realistic

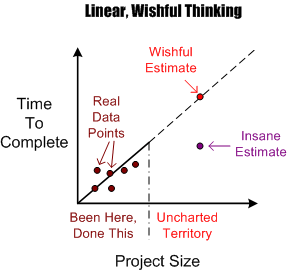

As software development orgs grow, they necessarily take on larger and larger projects to fill the revenue coffers required to sustain the growth. Naturally, before embarking on a new project, somebody’s gotta estimate how much time it will take and how many people will be needed to get it done in that guesstimated time.

The figure below shows an example of the dumbass linear projection technique of guesstimation. Given a set of past performance time-size data points, a wishful estimate for a new and bigger project is linearly extrapolated forward via a neat and tidy, mechanistic, textbook approach. Of course, BMs, DICs, and customers all know from bitter personal experience that this method is bogus. Everyone knows that software projects don’t scale linearly, but (naturally) no one speaks up out of fear of gettin’ their psychological ass kicked by the pope du jour. Everyone wants to be perceived as a “team” player, so each individual keeps their trap shut to avoid the ostracism, isolation, and pariah-dom that come with breaking from clanthink unanimity. Plus, even though everyone knows that the wishful estimate is a hallucination, no one has a clue what it will really take to get the job done. Hell, no one even knows how to define and articulate what done means. D’oh! (Notice the little purple point in the lower right portion of the graph. I won’t even explain its presence because you can easily figure out why it’s there.)
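For the record, here’s the wishful technique in a few lines of Python: an ordinary least-squares line through past (size, time) points, extrapolated to a project five times larger than anything in the history. The data points are invented. The math itself is fine; the bogus part is the assumption that time scales linearly with size.

```python
# The "wishful" linear projection: fit time = a + b * size to past projects,
# then extrapolate to a much bigger one.
# ASSUMPTION: data points are invented; size units (KLOC) are arbitrary.


def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (intercept, slope)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return my - slope * mx, slope


def wishful_estimate(past_sizes, past_months, new_size):
    """Linearly extrapolate calendar time for a new project size."""
    a, b = fit_line(past_sizes, past_months)
    return a + b * new_size


if __name__ == "__main__":
    sizes = [10, 20, 30, 40]   # KLOC, hypothetical
    months = [4, 7, 10, 13]    # calendar months, hypothetical
    # Extrapolating 5x beyond the largest past project: the risky part
    print(f"wishful: {wishful_estimate(sizes, months, 200):.0f} months")
```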

OK, you say, so what works better Mr. Smarty-Pants? Since no one knows with any degree of certainty what it will take to “just get it done” (<- tough management speak – lol!) nothing really works in the absolute sense, but there are some techniques that work better than the standard wishful/insane projection technique. But of course, deviation from the norm is unacceptable, so you may as well stop reading here and go back about your b’ness.

One such better, but forbidden, way to estimate how much time is needed to complete a large hairball software development project is shown below. A more realistic estimate can be obtained by assuming an exponential growth in complexity and associated time-to-complete with increasing project size. The trick is in conjuring up values for the constant K and exponent M. Hell, it takes trickery just to come up with an accurate estimate of the project’s size, be it function points, lines of code, number of requirements, or any other academically derived metric.
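If the figure’s equation has the form TTC = K × Size^M (my reading of “constant K and exponent M”; the figure may differ), then one way to conjure K and M from past projects is a straight-line fit in log-log space, since log(TTC) = log(K) + M × log(Size). A hedged Python sketch with invented data:

```python
# Fit TTC = K * size**M by least squares on log-transformed data.
# ASSUMPTIONS: the power-law form is a reading of "constant K and exponent M";
# the data points are invented.
import math


def fit_power_law(sizes, times):
    """Return (K, M) from a straight-line fit of log(time) vs log(size)."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(t) for t in times]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    m = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
         / sum((x - mx) ** 2 for x in xs))
    k = math.exp(my - m * mx)  # intercept in log space is log(K)
    return k, m


def power_law_estimate(k, m, size):
    """Time-to-complete for a given size under the fitted power law."""
    return k * size ** m


if __name__ == "__main__":
    sizes = [10, 20, 30, 40]          # KLOC, hypothetical
    months = [3.0, 7.0, 11.5, 16.0]   # hypothetical, growing faster than size
    K, M = fit_power_law(sizes, months)
    print(f"K={K:.3f}, M={M:.2f}, "
          f"estimate for 200 KLOC: {power_law_estimate(K, M, 200):.0f} months")
```

An M greater than 1 means time grows faster than size. Of course, the fit is only as good as the size estimates and history you feed it.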

An even more effective way to get an accurate TTC estimate is to leverage the dynamic learning (gasp!) that takes place the minute the project execution clock starts tickin’. Learning? Leverage learning? No mechanistic equations based on unquantifiable variables? WTF is he talkin’ bout? He’s kiddin’ right?

Double Whammy

Five Principles

Watts Humphrey is perhaps the most decorated and credentialed member of the software engineering community. Even though his project management philosophy is a tad too rigidly disciplined for me, the dude is 83 years young and he has obtained eons of experience developing all kinds of big, scary, software-intensive systems. Thus, he’s got a lot of wisdom to share and he’s definitely worth listening to.

In “Why Can’t We Manage Large Projects?”, Watts lists the following principles as absolutely necessary for the prevention of major cost and time overruns on knowledge-intensive projects – big and small.

Since nobody’s perfect (except for me, and you?), all tidy packages of advice contain both fluff and substance. The 5-point list above is no different. Numbers 1, 4, and 5, for example, are real motherhood and apple pie yawners – no? However, numbers 2 and 3 contain some substance.

Trustworthy Teams

Number 2 is intriguing to me because it moves the screwup spotlight away from the usual suspects (BMs of course), and onto the DICforce. Watts (rightly) says that DIC-teams must be willing to manage themselves. Later in his article, Watts states:

To truly manage themselves, the knowledge workers… must be held responsible for producing their own plans, negotiating their own commitments, and meeting these commitments with quality products.

Now, here’s the killer double whammy:

Knowledge worker DIC-types don’t want to do management work (planning, measuring, watching, controlling, evaluating), and BMs don’t want to give it up to them. – Bulldozer00

Besides disliking the nature of the work, the members of the DICforce know they won’t be rewarded or given higher status in the hierarchy for taking on the extra workload of planning, measuring, and status taking. Rubbing salt in the wound, BMs won’t give up their PWCE “job” responsibilities because then it would (rightfully) look like they’re worthless to their bosses in the new scheme of things. Bummer, no?

Facts And Data

As long as orgs remain structured as stratified hierarchies (which for all practical purposes will be forever unless an act of god occurs), Watts’s noble number 3 may never take hold. Ignoring facts/data and relying on seniority/status to make decisions is baked into the design of CCHs, and it always will be.

It’s the structure, stupid! – Bulldozer00

Push Back

Besides being volatile, unpredictable, and passionate, I “push back” against ridiculous schedules. While most fellow DICs passively accept hand-me-down schedules like good little children and then miss them by a mile, I rage against them and miss them by a mile. Duh, stupid me.

How about you? What do you do, and why?