Archive

Fly On The Wall

Michael “Rands In Repose” Lopp has been one of my heroes for a long time. Here’s one reason why: rands tumbles – Friday Management Therapy. I would have loved to be a fly on the wall at that workshop, wouldn’t you?

BTW, does anyone know what the “Buzz Kills” attribute means? If you don’t know what I’m talkin’ bout, then you didn’t click on the link and read the list. Shame on you 🙂

Push And Pull Message Retrieval

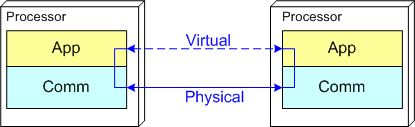

The figure below models a two-layer distributed system. Information is exchanged between application components residing on different processor nodes via a cleanly separated, underlying communication “layer”. App-to-App communication takes place “virtually”, with the arcane, physical, over-the-wire details being handled under the covers by the unheralded Comm layer.

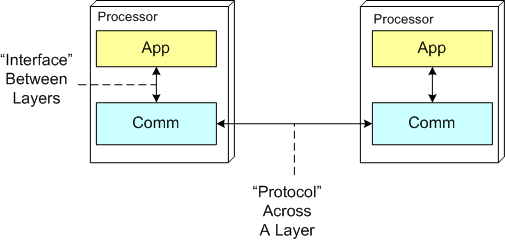

In the ISO OSI reference model for inter-machine communication, the vertical linkage between two layers in a software stack is referred to as an “interface” and the horizontal linkage between two instances of a layer running on different machines is called a “protocol“. This interface/protocol distinction is important because solving flow-control and error-control issues between machines is much more involved than handling them within the sheltered confines of a single machine.

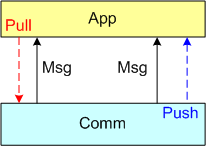

In this post, I’m going to focus on the receiving end of a peer-to-peer information transfer. Specifically, I’m going to explore the two methods by which an App component can retrieve messages from the Comm layer: Pull and Push. In the “Pull” approach, message transfer from the Comm layer to the App layer is initiated and controlled by the App component via polling. In the “Push” method, inversion of control is employed and the Comm layer initiates/controls the transfer by invoking a callback function installed by the App component on initialization. Any professional Comm subsystem worth its salt will make both methods of retrieval available to App component developers.
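To make the two retrieval styles concrete, here is a minimal Python sketch of the receive side of a Comm layer that offers both. The class and method names (`CommLayer`, `poll`, `install_callback`, `on_wire_receive`) are made up for illustration and don't belong to any real messaging library; the "wire" is simulated by calling `on_wire_receive` directly.

```python
from collections import deque
from typing import Callable, Optional


class CommLayer:
    """Toy receive side of a comm layer offering both retrieval styles."""

    def __init__(self) -> None:
        self._queue: deque = deque()  # holds messages until the App pulls them
        self._callback: Optional[Callable[[str], None]] = None

    def install_callback(self, cb: Callable[[str], None]) -> None:
        """Push style: the App hands over a function at initialization."""
        self._callback = cb

    def poll(self) -> Optional[str]:
        """Pull style: the App asks for the next message (None if empty)."""
        return self._queue.popleft() if self._queue else None

    def on_wire_receive(self, msg: str) -> None:
        """Hypothetical hook, called when a message arrives off the wire."""
        if self._callback is not None:
            self._callback(msg)       # the Comm layer drives the transfer
        else:
            self._queue.append(msg)   # wait for the App to come and get it


# Pull: the App initiates and controls the transfer.
pull_comm = CommLayer()
pull_comm.on_wire_receive("m1")
pull_comm.on_wire_receive("m2")
pulled = []
while (msg := pull_comm.poll()) is not None:
    pulled.append(msg)

# Push: the Comm layer initiates the transfer through the installed callback.
pushed = []
push_comm = CommLayer()
push_comm.install_callback(pushed.append)
push_comm.on_wire_receive("m1")
push_comm.on_wire_receive("m2")
```

Both paths end up with the same messages in the same order; the only difference is which layer decides when the hand-off happens.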

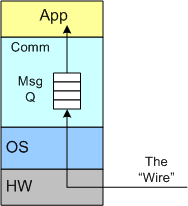

The figure below shows a model of a comm subsystem that supplies a message queue between the application layer and the “wire“. The purpose of this queue is to prevent high rate, bursty, asynchronous message senders from temporarily overwhelming slow receivers. By serving as a flow rate smoother, the queue gives a receiver App component a finite amount of time to “catch up” with bursts of messages. Without this temporary holding tank, or if the queue is not deep enough to accommodate the worst case burst size, some messages will be “dropped on the floor“. Of course, if the average send rate is greater than the average processing rate in the receiving App, messages will be consistently lost when the queue eventually overflows from the rate mismatch – bummer.
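The queue's smoothing behavior can be played with in a small tick-based simulation. This is only a sketch under simplifying assumptions: time advances in discrete ticks, the receiver removes a fixed number of messages per tick, and the function name `simulate` and its parameters are invented for the example.

```python
from collections import deque


def simulate(arrivals, depth, drain_per_tick):
    """Return (delivered, dropped) for a bounded queue.

    arrivals[i] is the number of messages coming off the wire in tick i.
    The receiver removes at most drain_per_tick messages per tick.
    """
    queue = deque()
    delivered = dropped = 0
    for burst in arrivals:
        for _ in range(burst):
            if len(queue) < depth:
                queue.append(1)
            else:
                dropped += 1          # "dropped on the floor"
        for _ in range(min(drain_per_tick, len(queue))):
            queue.popleft()
            delivered += 1
    delivered += len(queue)           # receiver finishes whatever is still queued
    return delivered, dropped


deep_enough = simulate([8, 0, 0, 0], depth=8, drain_per_tick=2)
too_shallow = simulate([8, 0, 0, 0], depth=4, drain_per_tick=2)
rate_mismatch = simulate([3] * 10, depth=8, drain_per_tick=2)
```

The first call absorbs the whole burst, the second loses half of it because the queue is too shallow, and the third starts losing messages once the sustained send rate has filled the queue.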

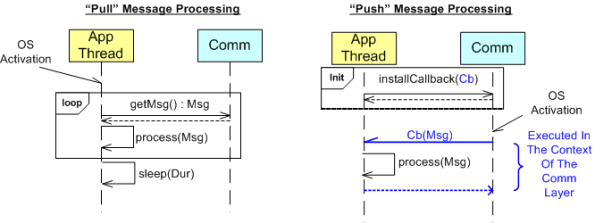

The UML sequence diagram below zeroes in on the interactions between an App component thread of execution and the Comm layer for both the “Push” and “Pull” methods of message retrieval. When the “Pull” approach is implemented, the OS periodically activates the App thread. On each activation, the App sucks the Comm layer queue dry; performing application-specific processing on each message as it is pulled out of the Comm layer. A nice feature of the “Pull” method, which the “Push” method doesn’t provide, is that the polling rate can be tuned via the sleep “Dur(ation)” parameter. For low data rate message streams, “Dur” can be set to a long time between polls so that the CPU can be voluntarily yielded for other processing tasks. Of course, the trade-off for long poll times is increased latency – the time from when a message becomes available within the Comm layer to the time it is actually pulled into the App layer.
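A pull loop along these lines might look like the sketch below. It uses Python's standard `queue.Queue` as a stand-in for the Comm layer queue; `pull_loop`, `dur`, and `cycles` are hypothetical names, and a real App thread would loop until shutdown rather than for a fixed number of activations.

```python
import queue
import time


def pull_loop(comm_queue, process, dur, cycles):
    """Wake up `cycles` times; on each activation drain the queue, then sleep."""
    for _ in range(cycles):
        while True:
            try:
                msg = comm_queue.get_nowait()
            except queue.Empty:
                break                 # queue sucked dry; go back to sleep
            process(msg)              # app-specific work runs in the App thread
        time.sleep(dur)               # yield the CPU; a larger dur means more latency


inbox = queue.Queue()
for m in ("a", "b", "c"):
    inbox.put(m)

handled = []
pull_loop(inbox, handled.append, dur=0.01, cycles=2)
```

A message that arrives just after a drain waits roughly `dur` seconds before the App sees it, which is the latency trade-off in a nutshell.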

In the “Push” method of message retrieval, during runtime the Comm layer activates the App thread by invoking the previously installed App callback function, Cb(Msg), for each newly received message. Since the App’s process(Msg) method executes in the context of a Comm layer thread, it can bog down the comm subsystem and cause it to miss high rate messages coming in over the wire if it takes too long to execute. On the other hand, the “Push” method can be more responsive (lower latency) than the “Pull” method if the polling “Dur” is set to a long time between polls.
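The "executes in the context of a Comm layer thread" point is easy to see in a small sketch. Here a thread named `comm-rx` plays the Comm layer's receive thread; that name, `comm_receive_loop`, and `cb` are assumptions made for the example.

```python
import threading

seen = []                             # (message, thread name) pairs


def cb(msg):
    """App callback; records which thread it actually runs on."""
    seen.append((msg, threading.current_thread().name))


def comm_receive_loop(wire_messages, callback):
    """Stand-in for the Comm layer's receive thread."""
    for msg in wire_messages:
        callback(msg)                 # a slow callback stalls this loop


comm_thread = threading.Thread(
    target=comm_receive_loop, args=(["m1", "m2"], cb), name="comm-rx"
)
comm_thread.start()
comm_thread.join()
```

Every entry in `seen` carries the Comm thread's name, not the App's. Any time spent inside `cb` is time the receive loop is not servicing the wire.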

So, which method is “better“? Of course, it depends on what the Application is required to do, but I lean toward the “Pull” Method in high rate streaming sensor applications for these reasons:

- In applications like sensor stream processing that require a lot of number crunching and/or data associations to be performed on each incoming message, the fact that the App-specific processing logic is performed within the context of the App thread in the “Pull” method (instead of the Comm layer) means that the Comm layer performance is not dependent on the App-specific performance. The layers are more loosely coupled.

- The “Pull” approach is simpler to code up.

- The “Pull” approach is tunable via the sleep “Dur” parameter.

How about you? Which do you prefer, and why?

The Boundary

Mr. Watts Humphrey‘s final book, titled “Leadership, Teamwork, and Trust: Building a Competitive Software Capability” was recently released and I’ve been reading it online. Since I’m in the front end of the book, before the TSP–PSP crap, I mean “stuff“, is placed into the limelight for sale, I’m enjoying what Watts and co-author James W. Over have written about the 21st century “management of knowledge workers problem“. Knowledge workers manipulate knowledge in the confines of their heads to create new knowledge. Physical laborers manipulate material objects to create new objects. Since, unlike physical work, knowledge work is invisible, Humphrey and Over (rightly) assert that knowledge work can’t be managed by traditional, early 20th century, management methods. In their own words:

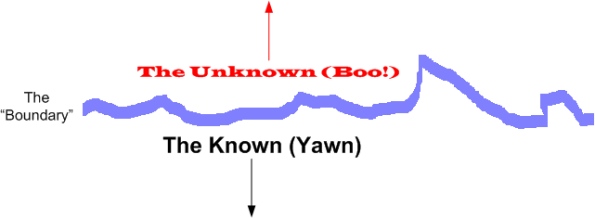

Knowledge workers take what is known, and after modifying and extending it, they combine it with other related knowledge to actually create new knowledge. This means they are working at the boundary between what is known and what is unknown. They are extending our total storehouse of knowledge, and in doing so, they are creating economic value. – Watts Humphrey & James W. Over

But Watts and Over seem inconsistent to me (and it’s probably just me). They talk about the boundary ‘tween the known and the unknown, yet they advocate the heavyweight pre-planning of tasks down to the 10 hour level of granularity. When you know in advance that you’ll be spending a large portion of your time exploring and fumbling around in unknown territory, it’s delusional for others who don’t have to do the work themselves to expect you to chunk and pre-plan your tasks in 10 hour increments, no?

Nothing is impossible for the man who doesn’t have to do it himself. – A. H. Weiler

Mangled Model

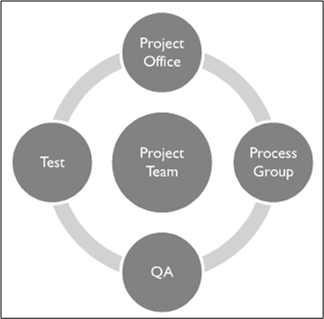

In their book, “Leadership, Teamwork, and Trust: Building a Competitive Software Capability“, Watts Humphrey and James Over model a typical software project system via the diagram below (note that they have separate Quality Assurance and Test groups and they put the “project office” on top).

Bulldozer00 would have modeled the typical project system like this:

Of course, the immature and childish BD00 model would be “inappropriate” for inclusion into a serious book that assumes impeccable, business-like behavior and maturity emanating from each sub-group. Oh, and the book wouldn’t sell many copies to the deep pocketed audience that it targets. D’oh!

When The Spigot Runs Dry

It was recently hinted to me that, for a legitimate business reason, the fun and exciting distributed systems IR&D (Internal Research and Development) software project that I’m working on might get canned. I sadly agree that if there are no customers for a product, and day-to-day fires are burning all over the place, it’s most definitely a legitimate business reason to turn off the financial spigot.

In addition to the “hint“, several important technical people have been reassigned to other maintenance projects. Bummer, but shite happens.

A Free Pass

In a culture of blame, and its Siamese twin, fear, any non-manager group member who consistently asks tough questions and points out shoddy, incomplete, ambiguous work becomes a group target for retribution. This defensive peer group behavior is a natural response to redirect attention away from the stank and to squelch criticism. The funny thing is, managers in CCHs are given a free pass to ask tough questions and criticize without fear of retribution. It helps that managers don’t produce any work products that can be scrutinized by DICsters – if they wanted to. Even if managers did pitch in by leading by example, most DICkies wouldn’t point out flaws because of……. fear of downstream retribution.

Ironically, because of the hierarchical mindset ingrained into all members of a DYSCO, and even though bad managers don’t have to worry about being tarred and feathered by the DICforce, most managers at the workface are incapable of asking the tough questions. Watts Humphrey summarizes this managerial shortcoming nicely:

However, as (Peter) Drucker pointed out, managers can’t manage knowledge work. This means that they cannot plan knowledge work, they cannot monitor and track such work, and they cannot determine and report on job status. – Watts Humphrey & James Over

Cultures of blame and fear of retribution go hand in hand with command and control hierarchies like peas and carrots, Jenny and Forrest. To expect otherwise is to be delusional.

Apples And Oranges

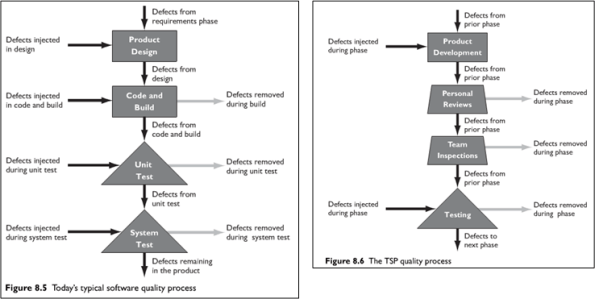

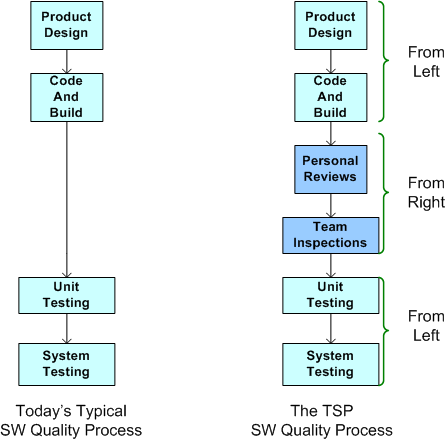

In “Leadership, Teamwork, and Trust“, Watts Humphrey and James Over build a case against the classic “Test To Remove Defects” mindset (see the left portion of the figure below). They assert that testing alone is not enough to ensure quality – especially as software systems grow larger and commensurately more complex. Their solution to the problem (shown on the right of the figure below) calls for more reviews and inspections, but I’m confused as to when they’re supposed to occur: before, after, or interwoven with design/coding?

If you compare the left- and right-hand sides of the figure, you may come to the same conclusion I did: it seems like an apples-to-oranges comparison. The left portion seems more “concrete” than the right portion, no? Since they’re not enumerated on the right, are the “concrete” design and code/build tasks shown on the left subsumed within the more generic “Product Development” box on the right?

In order to un-confuse myself, I generated the equivalent of the Humphrey/Over TSP (Team Software Process) quality process diagram on the right using the diagram on the left as the starting reference point. Here’s the resulting apples-to-apples comparison:

If this is what Humphrey and Over meant, then I’m no longer confused. Their TSP approach to software quality is to supplement unit and system testing with reviews and inspections before testing occurs. I wish they had said that in the first place. Maybe they did, but I missed it.

Inchies

So, what’s an Inchie? It’s an “INfallibility CHIp“. An Inchie is an invisible, but paradoxically real corpo currency that is the opposite of a demerit. An increasing Inchie stash is required to move up in a corpo caste system. The higher up you are in a CLORG, the more Inchies you have been awarded, and the more infallible you’ve become.

At level 0 down in the basement, you begin the game with 0 Inchies and you start making your moves – climbing the ladder Inchie by Inchie. Be careful and keep watch over your Inchie stash though, cuz your peers will try to steal your Inchies when you’re not looking.

Alas, even though you now know that Inchies exist, don’t get your hopes up. You see, the criteria managers at level N (where N > 0) use for disbursing Inchies down to the less infallible people at level N-1 are random. Even among managers within a given level, the award criteria are arbitrarily different. Plus, to make the game more difficult, the dudes who awarded you your Inchies can take them back whenever they feel the need to “scratch your Inchie” – especially if you piss them off with career ending moves. D’oh!

Once you make it to the top of the pyramid with your big bag o’ Inchies, not only have you amassed the most Inchies in the DYSCO, but you’re given the keys to the Inchie minting machine. This gives you the opportunity to fabricate an unlimited number of Inchies to add to your display case and to sprinkle upon your sycophant crew as you please. You’ve become a 100% infallible god in the DYSCO microcosm. Whoo Hoo and Kuh-nInchie-wah!

Mismatch

Assume that you’re tasked to create a two component, distributed software system as shown in the figure below. The nature of the application is such that during runtime, component 1 will continuously transmit a “bursty“, asynchronous stream of messages to component 2. During evolution of the system in the future, you know that more and more stages will be tacked on to the “pipeline“, with each stage adding value to a growing customer base (if you don’t screw it up and hatch a BBoM).

Note that the relationship between application components is peer-to-peer and not client-server like this:

One question is this: “Why on earth would anyone choose a client-server messaging system (with peer-to-peer capability tacked on) over a peer-to-peer messaging system for this class of application?“. The question especially applies to product organizations that strive to develop distinctly elegant and innovative solutions – which hopefully includes yours. A second question is: “What would technologically savvy customers think?“. Of course, if you think your customers are dumb-asses (and you won’t be in business for long if you do) and can’t tell the difference, then the situation is a “don’t care“, no?

Flouting Convention

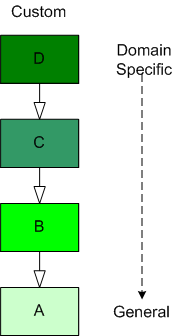

As software-centric systems get more complex, one of the most effective tools for preventing the creation of monstrous BBoMs downstream is “layering”. The figure below shows a generic model of the layering concept.

When you use layering, you partition your system into a vertical stack with the most “exciting” application-specific functions and objects at the top of the stack and the more mundane and boring functionality down in the basement. In a pure layered system, the higher layers depend on the services provided by the lower levels and there are no dependencies the other way. The cleaner and crisper your inter-layer boundaries, the lower your maintenance cost and frustration.
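The "no dependencies the other way" rule is simple enough to check mechanically. The sketch below assumes a made-up four-layer stack and a hand-written dependency map; the layer names and the `upward_dependencies` helper are purely illustrative.

```python
# Layers listed top (most application-specific) to bottom (most generic).
LAYERS = ["app", "services", "comm", "os"]


def upward_dependencies(deps):
    """Return the (user, used) pairs where a lower layer depends on a higher one."""
    rank = {name: i for i, name in enumerate(LAYERS)}
    return [
        (user, used)
        for user, used_list in deps.items()
        for used in used_list
        if rank[used] < rank[user]    # pointing up the stack breaks pure layering
    ]


clean = {"app": ["services", "comm"], "services": ["comm"], "comm": ["os"], "os": []}
leaky = {"app": ["services"], "services": ["comm"], "comm": ["app"], "os": []}

clean_violations = upward_dependencies(clean)
leaky_violations = upward_dependencies(leaky)
```

The clean stack reports no violations, while the leaky one flags the comm layer reaching up into the app layer. That is exactly the kind of coupling that blurs inter-layer boundaries.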

The figure below shows the conventional approach of representing an inheritance hierarchy in an object oriented design. What’s wrong with this picture? Relative to the layered model, it’s “upside down“. The most general class is on top and the most domain-specific class is at the bottom. WTF and D’oh!

Since “layering” has been around much longer than object-orientation, Bulldozer00 thinks that a layered, object-oriented software system should always be presented to stakeholders like this:

This method of representation aligns cleanly with the layered “view” of the system and is thus less confusing and disorienting to all audiences, dontcha think? To hell with convention – at least in this situation.