Archive

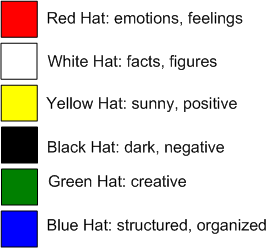

Colored Thinking

In his short and pithy “Six Thinking Hats” book, Edward De Bono describes his structured, but diverse, problem solving method for groups of people who are wrestling with an issue. The picture below summarizes Edward’s six thinking hat colors and the modes of thinking that they represent.

Armed with an understanding of the six thinking hats method, the idea is that a group led by a blue hat facilitator could collectively switch colors and express aligned thinking to explore all aspects of an issue/problem/decision under discussion. The good thing about Mr. De Bono’s method is that it is down-to-earth; it’s easily and quickly learned. It’s not anchored in the latest management jargon du jour and you don’t have to attend a 40 hour elitist university MBA course to absorb the subject matter.

The figure below models a typical six thinking hats use case. The group gathering is framed by “blue hat” thinking book ends. At the start of the discussion, the blue hat wearer (usually the meeting instigator) frames and bounds the gathering with answers to the “why we are here” and “what we’re trying to do” questions. Next, under the fluid direction of the blue hat wearing dude, the group iterates on the issue by collectively switching modes of thinking when deemed necessary. Finally, the blue hat director ends the gathering with the answer to the “what we accomplished here” question – which may or may not be nothing. Simple and doable, no?

By applying the six hats thinking method, the hope is that the mold will be broken on the same-old, same-old, rudderless, alpha-dominated, black-hat-only, egofestive modus operandi that takes place everywhere in command and control hierarchies across the land:

So, what do you think? Substance or snake oil? If substance, would you try to promote the six thinking hats method in your org? If you think the method has potential but you won’t step up to champion it………. why not?

Successful Dictatorship

I’m intrigued by, and respectful of, enigmatic guys like Steve Jobs. Despite reports of being an explosive control freak and a micro-manager, he continuously inspires his troops to greater heights. John Sculley, the CEO at Apple Inc. before Jobs seized the reins, gives a fascinating interview about his time at Apple and working with Mr. Jobs in this blarticle: “John Sculley On Steve Jobs“.

On the dogmatic “the customer is always right” theme:

He (Jobs) said, “How can I possibly ask somebody what a graphics-based computer ought to be when they have no idea what a graphic based computer is? No one has ever seen one before.”

On bucking the traditional advice to avoid micro-managing your people:

“He (Jobs) was a person of huge vision. But he was also a person that believed in the precise detail of every step. He was methodical and careful about everything — a perfectionist to the end.”

On leadership skills:

“He (Jobs) was extremely charismatic and extremely compelling in getting people to join up with him and he got people to believe in his visions even before the products existed.”

On the “bozo” (lol) issue:

“The other thing about Steve was that he did not respect large organizations. He felt that they were bureaucratic and ineffective. He would basically call them “bozos.””

On the dogmatic “leaders should remain cool, composed and unemotional at all times (to feign an image of complete self-control)”:

“Steve would shift between being highly charismatic and motivating and getting them excited to feel like they are part of something insanely great. And on the other hand he would be almost merciless in terms of rejecting their work until he felt it had reached the level of perfection that was good enough to go into – in this case, the Macintosh.”

On the natural entropy-driven deterioration of once vibrant orgs into corpocracies:

And you can see today the tremendous problem Sony has had for at least the last 15 years as the digital consumer electronics industry has emerged. They have been totally stove-piped in their organization. The software people don’t talk to the hardware people, who don’t talk to the component people, who don’t talk to the design people. They argue between their organizations and they are big and bureaucratic.

On the “power of less” and beating complexity into submission with simplicity:

He’s a minimalist and constantly reducing things to their simplest level. It’s not simplistic. It’s simplified. Steve is a systems designer. He simplifies complexity.

Being a biased and incorrigibly self-serving bozeltine myself, I cherry-picked this Sculley quote for last to promote my real agenda:

Engineers are far more important than managers at Apple — and designers are at the top of the hierarchy.

What We Look for in Founders

In “What We Look for in Founders“, Y Combinator principal Paul Graham lists the top 5 traits that his vulture capital investment company looks for in a would-be entrepreneur:

- Determination

- Flexibility

- Imagination

- Naughtiness

- Friendship

While writing about “determination”, Mr. Graham says:

“We thought when we started Y Combinator that the most important quality would be intelligence. That’s the myth in the Valley.”

Note that the following traits that pervade toxic corpocracies everywhere are not on the list:

- infallibility

- arrogance

- entitlement

- image

- bloodline

Communication Layer Performance Benchmarking

Along with two outstanding and dedicated peers, I’m currently designing and writing (in C++) a large, distributed, multi-process, multi-threaded, scalable, real-time, sensor software system. Phew, that’s a lot of “see how smart I am” techno-jargon, no?

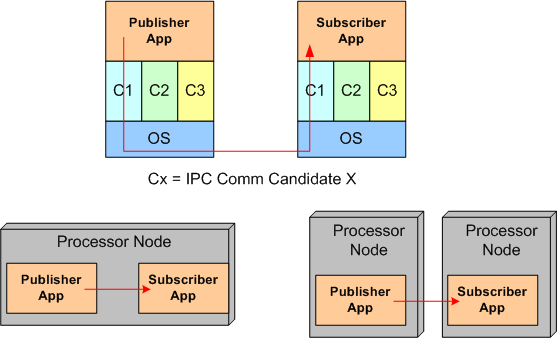

Since the performance and reliability of the underlying Inter-Process Communication (IPC) layer is critical to meeting our customer’s end-to-end system latency and throughput requirements, we decided to measure the performance of three different IPC candidates:

- Real Time Innovations Inc.’s implementation of the Object Management Group’s Data Distribution Service (DDS) standard

- The Apache Software Foundation’s ActiveMQ implementation of the Java Message Service (JMS) standard

- A homegrown brew built on top of the Boost organization’s Asio (Asynchronous input/output) portable C++ library.

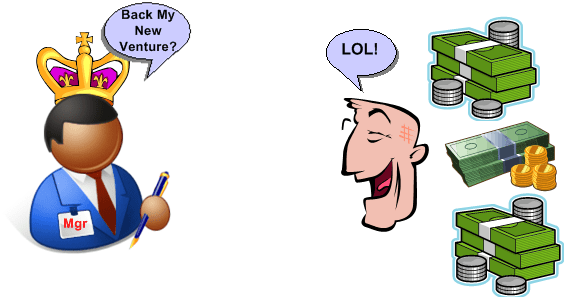

The figure below shows the average CPU load vs throughput performance of the three distributed system messaging communication candidates. Notice that the centralized broker-based JMS approach yielded horrendous relative results.

Transmit batching, one of a whole bevy of “free” (to application layer programmers) tunable features in RTI’s DDS, aggregates a bunch of application layer messages into one network packet to increase throughput (at the expense of increased latency). Since batching isn’t available in AMQ JMS or our “homegrown” Boost.Asio comm layer candidate, only the DDS performance increase is shown on the graph.

Measurement Approach

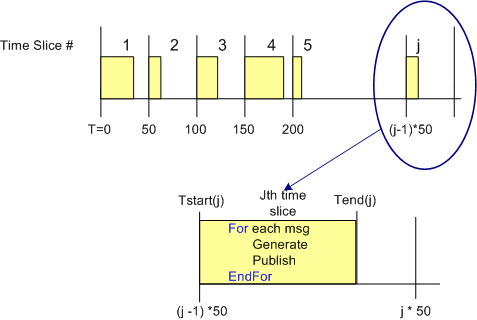

One way to measure the CPU load imposed on a processor node by an IPC layer candidate in a data-streaming, real-time system is to quantize time into discrete slices and measure the per-slice processing time that it takes to send a fixed number of messages out via the comm software stack. Since other non-deterministic OS runtime functionality shares the CPU with the application processes and the comm software stack, measuring and averaging the normalized CPU time across a large number of slices can give some quantitative feel for the load imposed on the processor.

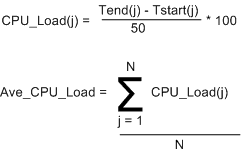

The figure below shows the approach that was taken to measure the CPU load versus throughput performance of the three communication layer candidates. To implement this strategy, I wrote a small C++ test application that is designed to operate in a time sliced mode, where the time slice size (default = 50 msecs) is user selectable via the command line.

During runtime, the test app generates and publishes a stream of “canned” messages at a user specified rate and for a user-defined test run duration. Upon the start of each time slice, the current time is “grabbed” and stored for later use. At the end of each tight, K-message, generate-and-publish loop, the end time is retrieved from the OS and then the percent CPU load for the slice is calculated in accordance with the simple equations below. At the end of the test run, the first 1000 sample points are averaged, and the result, along with the max and min loads measured during the run are printed to the console and a date stamped log file.

Of course, to ensure that the comm layer candidate wasn’t dropping or corrupting application messages during test runs, I wrote a subscriber app to provide a “resistor load” on the performance measuring publisher app process. By comparing the number and integrity of messages received to the number and integrity of those transmitted, the measurements were given higher credibility. The figure below shows the test fixtures that I ran the performance tests on. For the AMQ JMS candidate, a broker process was running alongside the app processes, but this single-point-of-failure component is not shown in the diagram.

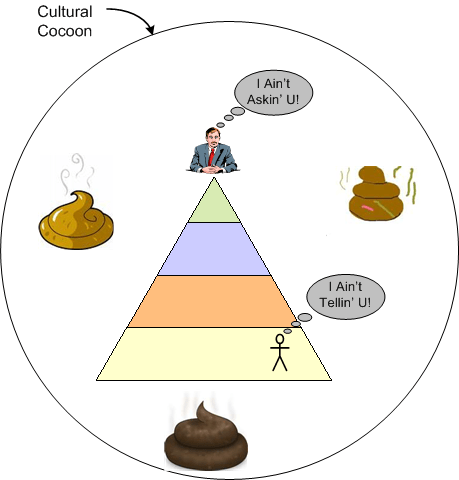

Don’t Ask, Don’t Tell

The corpocratic version of the “don’t ask don’t tell” policy is “SCOLs don’t ask what’s wrong, DICs don’t tell what’s wrong“. SCOLs, CGHs, BUTTs, and BOOGLs ensconced in self-images of infallibility don’t need to ask because they always know:

- what’s wrong,

- when it went wrong,

- who’s “responsible” for the wrong.

Likewise, the aforementioned management team always knows how to fix what’s wrong by launching initiatives that “get the job done”. Of course, when you don’t ever establish a baseline or periodically evaluate post-launch progress, initiatives will always be successful and reinforce the image of infallibility, no? The funny thing is, if SCOLs always know the what, why, and who of dysfunction, how come they didn’t prevent the dysfunction in the first place?

Implicit Type Conversion

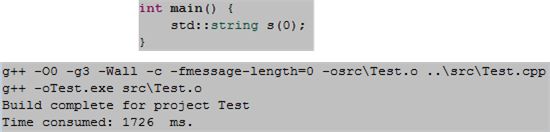

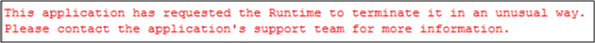

Check out this bit of C++ code and its unexpected, successful compile:

Next, observe the runtime result from the Win Visual Studio 8.0 IDE:

Thirdly, check out this bit of C++ code and its expected, unsuccessful compile:

What’s up wit’ dat? The reason I brought this up is because we’ve discovered that we have this type of bug in our growing 5 figure code base. However, we haven’t been able to locate and kill it – yet.

Note: A kind and sharing member of a LinkedIn.com C++ group enlightened me as to the reason for the successful compile. Since one of the constructors of std::basic_string (the class template behind std::string) takes a const char*, the 0 in the s(0) definition is implicitly converted from int(0) to the null pointer, (const char*)0. In the non-zero case, the compiler rejects the attempt to convert int(1) into a const char*. As this example shows, implicit type conversion, a C++ language feature needed to remain backward compatible with C, can be a tricky and subtle source of bugs.

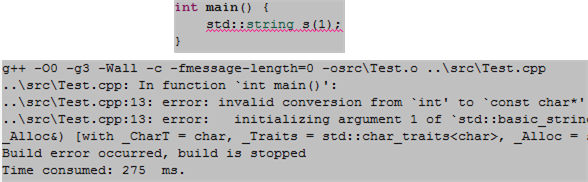

Five Levels

According to Russell Ackoff, there are five types of conceptual content. In order of increasing scarcity, they are Data, Information, Knowledge, Understanding, and Wisdom.

Data and information answer “what something is” questions. Knowledge answers “how something works” questions. Understanding answers “why something is the way it is” questions. Wisdom, the rarest form of conceptual content, is altogether a different beast. It answers all questions.

The acts of probing, sensing, and measuring produce raw data. The filtering, integration and association of data fragments creates information. The mistake-prone application of information and learning from errors leads to knowledge. The application of holistic, systems thinking to knowledge creates understanding.

Unlike the other four types of content, which integrate up and progress sequentially from each other, wisdom may not. Wisdom may appear instantaneously on its own by the grace of some higher power. It has to be that way. If it wasn’t, then only highly educated and experienced intellectuals would be capable of acquiring wisdom – and we know that isn’t true, don’t we? Wisdom is accessible to all human beings regardless of race, age, culture, wealth, or any other trait. The trouble is that society, especially western societies, wants us to think otherwise. No?

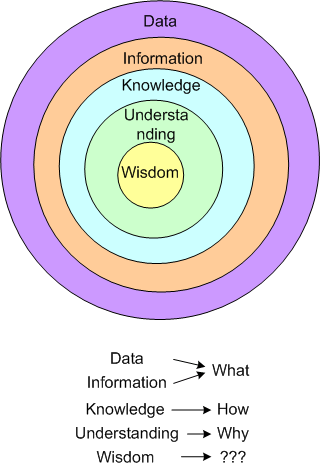

Domain, Infrastructure, And Source

Via a simple SysML diagram that solely uses the “contains” relationship icon (the circled crosshairs thingy), here’s Bulldozer00’s latest attempt to make sense of the relationships between various levels of abstraction in the world of software as he knows it today. Notice that in Bulldozer00’s world, where the sky is purple, the architecture is at the center of the containment hierarchy.

Nested Monarchies

Once again, I’m verklempt, so tawk amongst yourselves. I’ll give you a topic: “nested monarchies”.

Quantification Of The Qualitative

Because he bucked the waterfall herd and advocated “agile” software development processes before the agile movement got started, I really like Tom Gilb. Via a recent Gilb tweet, I downloaded and read the notes from his “What’s Wrong With Requirements” keynote speech at the 2nd International Workshop on Requirements Analysis. My interpretation of his major point is that the lack of quantification of software qualities (you know, the “ilities”) is the major cause of requirements screwups, cost overruns, and schedule failures.

Here are some snippets from his notes that resonated with me (and hopefully you too):

- Far too much attention is paid to what the system must do (function) and far too little attention to how well it should do it (qualities) – in spite of the fact that quality improvements tend to be the major drivers for new projects.

- There is far too little systematic work and specification about the related levels of requirements. If you look at some methods and processes, all requirements are ‘at the same level’. We need to clearly document the level and the relationships between requirements.

- The problem is not that managers and software people cannot and do not quantify. They do. It is the lack of ‘quantification of the qualitative’ that is the problem.

- Most software professionals when they say ‘quality’ are only thinking of bugs (logical defects) and little else.

- There is a persistent bad habit in requirements methods and practices. We seem to specify the ‘requirement itself’, and we are finished with that specification. I think our requirement specification job might be less than 10% done with the ‘requirement itself’.

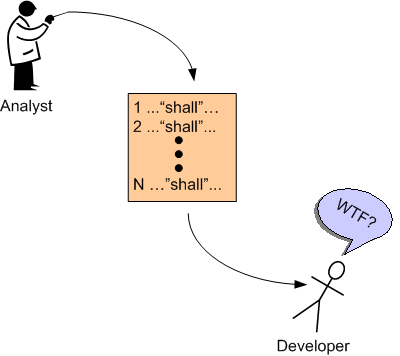

I can really relate to items 2 and 5. Expensive and revered domain specialists often do little more than linearly list requirements in the form of text “shalls”, with little supporting background information to help builders and testers clearly understand the “what” and “why” of the requirements. My cynical take on this pervasive, dysfunctional practice is that the analysts themselves often don’t understand the requirements and hence, they pursue the path of least resistance – which is to mechanically list the requirements in disconnected and incomprehensible fragments. D’oh!