Monitoring And Learning

Courtesy of this Scott Berkun retweet,

I latched onto this Harvard Business School paper abstract:

Even though the paper is laced with impeccable math and densely “irrefutable” logic, the conclusion of “looser monitoring -> more learning -> more creativity & innovation” seems intuitively obvious, doesn’t it?

Assume that the top leaders in your org embrace the idea and sincerely want to put it into action to detach the group from the status quo and propel it toward excellence. Well, fuggedaboutit. The scores of mediocre middle managers within the institution who do the monitoring will instantaneously switch into passive-aggressive mode and thwart any attempt to institute the policy. They’ll do this because it will most likely expose the fact that they are not only suppressing creativity and innovation where the rubber hits the road, but they are not adding much value to the operation themselves. How do I know this? Because that’s what I’d feel culturally forced to do. But not you, right?

New DDS Vendors

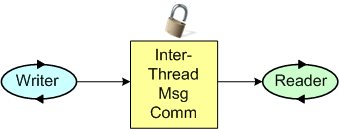

Since I’m a fan of the DDS (Data Distribution Service) inter-process communication infrastructure for distributed systems, I try to keep up with developments in the DDS space. Via an Angelo Corsaro slideshare presentation, I discovered two relatively new commercial vendors of the high performance, low latency, OMG! messaging standard: Twin Oaks Computing and Gallium Visual Systems. I don’t know how long the newcomers have been pitching their DDS implementations or how mature their products are, but I’ll be learning more about them in the weeks to come.

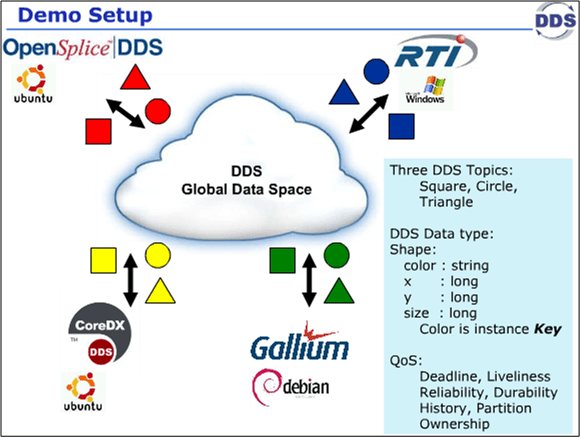

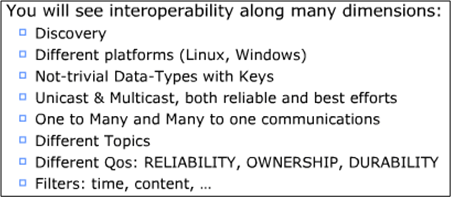

Last week, the four DDS vendors got together at the OMG DDS meeting in CA and they collaboratively executed 9 distributed system test scenarios to highlight the interoperability of the vendors’ products. The Angelo Corsaro slide snippets below show the conceptual context and the goals of the event.

The VP of marketing at RTI, Dave Barnett, published a short video showing that the demo was a success: DDS Interoperability Demo. Since I’m a distributed, real-time software developer geek, this stuff makes me giddy with enthusiasm. Sad, no?

Bugs Or Cash

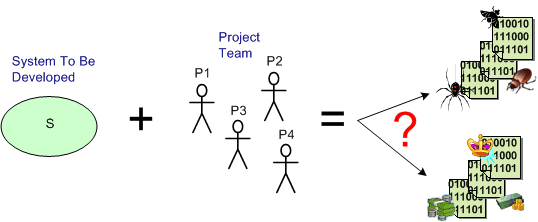

Assume that you have a system to build and a qualified team available to do the job. How can you increase the chance that you’ll build an elegant and durable money maker and not a money sink that may put you out of business?

The figure below shows one way to fail. It’s the well-worn and oft-repeated ready-fire-aim strategy. You blast the team at the project and hope for the best via the buzz phrase of the day – “emergent design”.

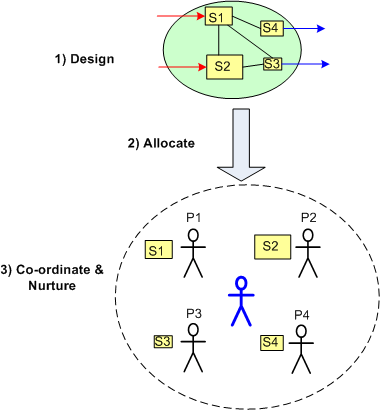

On the other hand, the figure below shows a necessary but not sufficient approach to success: design-allocate-coordinate. BTW, the blue stick dude at the center, not the top, of the project is you.

Small, Loose, Big, Tight

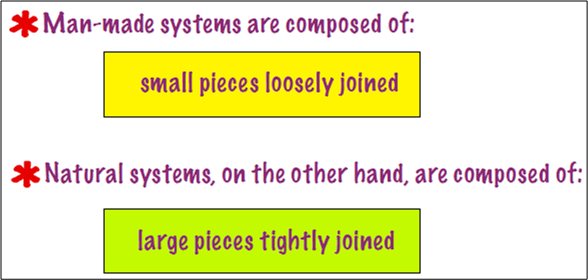

This Tom DeMarco slide from his pitch at the Software Executive Summit caused me to stop and think (Uh oh!):

I find it ironic (and true) that when man-made systems are composed of “large pieces tightly joined”, they, unlike natural systems of the same ilk, are brittle and fault-intolerant. Look at the man-made financial system and what happened during the financial meltdown. Since the large institutional components were tightly coupled, the system collapsed like dominoes when a problem arose. Injecting the system with capital has ameliorated the problem, but only the future will tell if the problem was dissolved. I suspect not. Since the structure, the players, and the behavior of the monolithic system have remained intact, it’s back to business as usual.

Similarly, as experienced professionals will confirm, man-made software systems composed of “large pieces tightly joined” are fragile and fault-intolerant too. These contraptions often disintegrate before being placed into operation. The time is gone, the money is gone, and the damn thing don’t work. I hate when that happens.

On the other hand, look at the glorious human body composed of “large pieces tightly joined”. Its natural, built-in robustness and tolerance to faults is unmatched by anything man-made. You can survive and still prosper with the loss of an eye, a kidney, a leg, and a host of other (but not all) parts. IMHO, the difference is that in natural systems, the purposes of the parts and the whole are in continuous, cooperative alignment.

When the individual purposes of a system’s parts become unaligned with each other, let alone unaligned with the purpose of the whole, as often happens in man-made socio-technical systems when everyone is out for themselves, it’s just a matter of time before an internal or external disturbance brings the monstrosity down to its knees. D’oh!

Don’t You Wish….

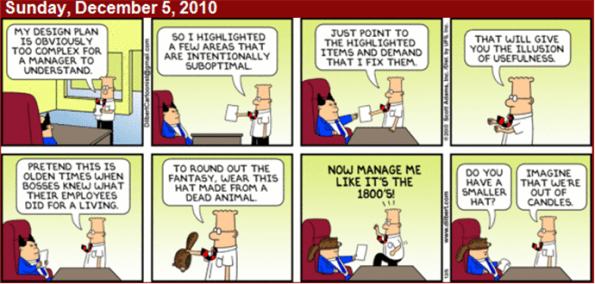

…you could have a Dilbertonian conversation with a BM (past or present) like the one below without getting fired? Of course, the elegant genius of Dilbert is that former cubicle-dweller-turned-gazillionaire Scott Adams makes you want to laugh and cry simultaneously.

Recursive Behavior

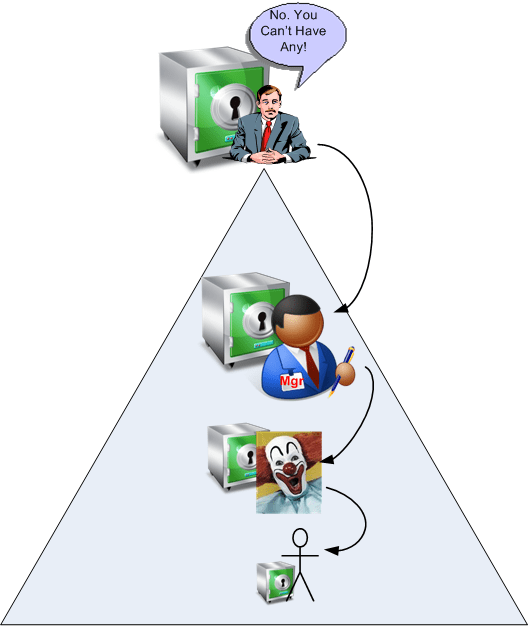

Information hoarding by individuals and orgs used to lead to success, but today information sharing is one necessary but insufficient key to success.

In this century, if the dudes in the penthouse at the top of the pyramid keep all the good stuff locked up in the unspoken name of mistrust, it’s highly likely that this anti-collaborative behavior will be recursively reproduced down the chain of command. Hell, if that behavior led to success for the corpo SCOLs and CGHs, then it will work for the DIC-force too, no?

“Trust is the bandwidth of communication.” – Karl-Erik Sveiby

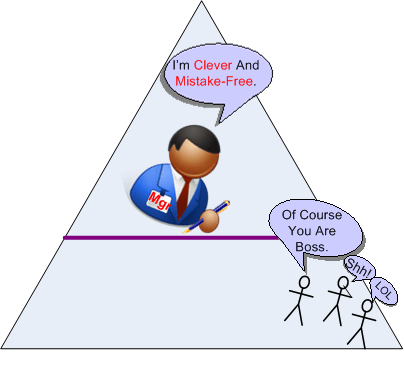

Clever And Mistake-Free

In “The 3 rules of mindsets”, Daniel Pink provides these examples in order to contrast fixed-mindset thinkers with growth-mindset thinkers:

- Fixed mindset: Look clever at all costs.

- Growth mindset: Learn, learn, learn.

- Fixed mindset: It should come naturally.

- Growth mindset: Work hard, effort is key.

- Fixed mindset: Hide your mistakes and conceal your deficiencies.

- Growth mindset: Capitalize on your mistakes and confront your deficiencies.

I don’t know about you, but I’m constantly struggling against the “Look clever at all costs” and “Hide your mistakes..” fixed mindset maladies. It’s easy to criticize the “environment” for these shortcomings, but ultimately it’s a personal ego battle, no?

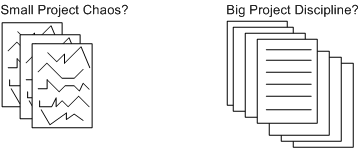

Start Big?

While I was browsing through the “C++ FAQs”, this particular FAQ caught my eye:

The authors’ “No” answer was rather surprising to me at first because I had previously thought the answer was an obvious “Yes”. However, the rationale behind their collective “No” was compelling. Rather than butcher and fragment their answer with a cut and paste summary, I present their elegant and lucid prose as is:

Small projects, whose intellectual content can be understood by one intelligent person, build exactly the wrong skills and attitudes for success on large projects…..The experience of the industry has been that small projects succeed most often when there are a few highly intelligent people involved who use a minimum of process and are willing to rip things apart and start over when a design flaw is discovered. A small program can be desk-checked by senior people to discover many of the errors, and static type checking and const correctness on a small project can be more grief than they are worth. Bad inheritance can be fixed in many ways, including changing all the code that relied on the base class to reflect the new derived class. Breaking interfaces is not the end of the world because there aren’t that many interconnections to start with. Finally, source code control systems and formalized build procedures can slow down progress.

On the other hand, big projects require more people, which implies that the average skill level will be lower because there are only so many geniuses to start with, and they usually don’t get along with each other that well, anyway. Since the volume of code is too large for any one person to comprehend, it is imperative that processes be used to formalize and communicate the work effort and that the project be decomposed into manageable chunks. Big programs need automated help to catch programming errors, and this is where the payback for static type checking and const correctness can be significant. There is usually so much code based on the promises of base classes that there is no alternative to following proper inheritance for all the derived classes; the cost of changing everything that relied on the base class promises could be prohibitive. Breaking an interface is a major undertaking, because there are so many possible ripple effects. Source code control systems and formalized build processes are necessary to avoid the confusion that arises otherwise.

So the issue is not just that big projects are different. The approaches and attitudes to small and large projects are so diametrically opposed that success with small projects breeds habits that do not scale and can lead to failure of large projects.

After reading this, I initially changed my previously un-investigated opinion. However, upon further reflection, a queasy feeling arose in my stomach because the implication of the authors is that the code bases on big projects aren’t as messy and undisciplined as smaller projects. Plus, it seems as though they imply that disciplined use of processes and tools has a strong correlation with a clean code base and that developers, knowing that the system will be large, will somehow change their behavior. My intuition and personal experience tell me that this may not be true, especially for large code bases that have been around for a long time and have been heavily hacked by lots of programmers (both novice and expert) under schedule pressure.

Small projects may set you up to drown later on, but big projects may start to drown you immediately. What are your thoughts, start small or big?

Static Vs Auto Performance

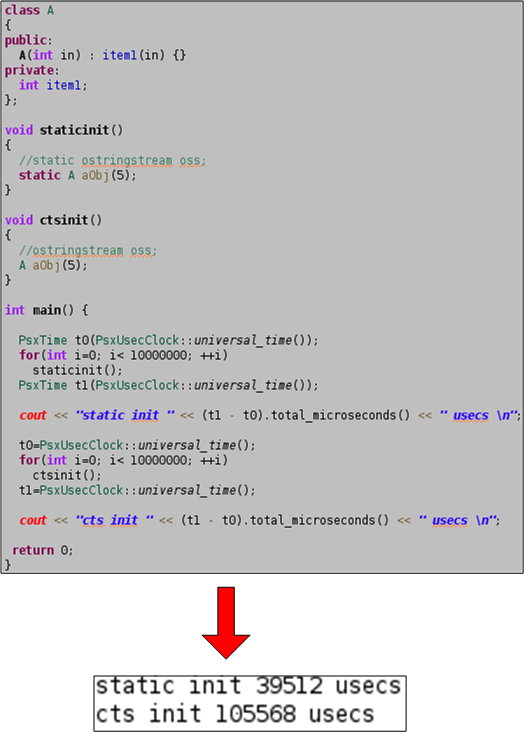

Assume that you’re writing a function that’s called every 5 milliseconds (200 Hz) in a real-time application and that thread-safety for this function is not a factor (it will be called only within one thread of control). Next, assume that you need to use one or more temporary objects to implement the logic in your function. Should you instantiate your objects as static or as auto on the stack?

Unless I’ve made a mental mistake, the source code and results below confirm that using static objects is much more efficient than using auto objects. The code measures the time it takes to instantiate 10 million static and auto objects of type std::ostringstream. The huge performance difference makes sense since the static object is only constructed during the first function call while the auto object is instantiated 10 million times.

It’s likely that std::ostringstream objects are large, but what about small, simple user types? The code below attempts to answer the question by showing that the performance difference between static and auto is still substantial for a trivial object like an instance of class A. I’ve been using this technique for years, but I’ve never quantitatively investigated the static vs auto performance difference until now.

I was motivated to perform this experiment after reading the splendid “Efficient C++”. In the book, authors Bulka and Mayhew show the results of little experiments like these for various C++ programming techniques and idioms. Unlike most classic C++ books, which sprinkle comments and insights about performance tradeoffs throughout the text, the entire book is dedicated to the topic. I highly recommend it for fledgling and intermediate C++ programmers.