Archive

CORKA, The Killer Whale

In case you were wondering, CORKA stands for CORpo Kiss-Ass. In DYSCOs (frequent disclaimer: not all companies are DYSCOs), the CORKA density is a function of the level one operates in within a corpricracy, no?

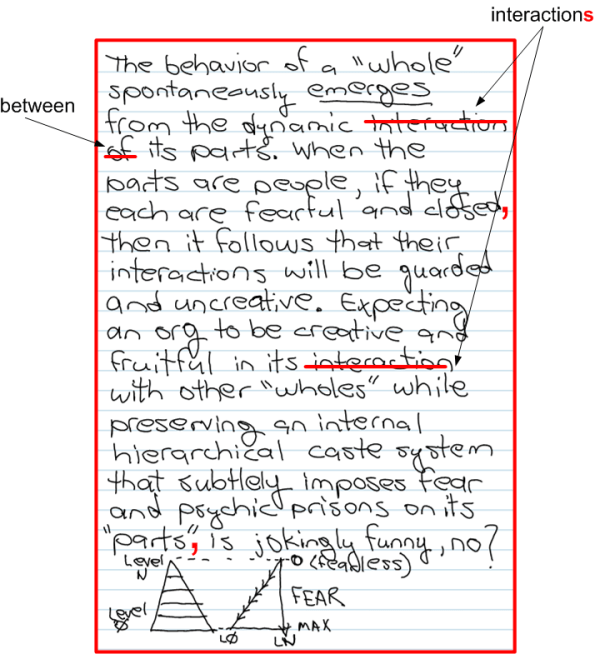

Jokingly Funny

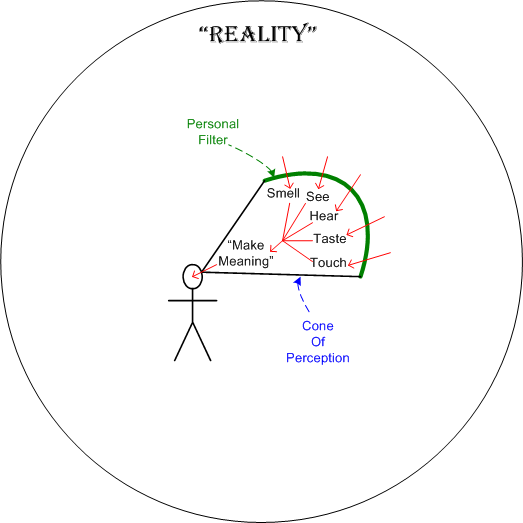

Make Meaning

Can you make meaning out of this freakin’ sketch? I can’t. As I drew it, I struggled to come up with some profound (lol!) words to express my thoughts on the thick and impenetrable “personal filter” (a.k.a. UCB) that prevents us from experiencing what’s truly real – which is nothing, er, “no thing”?

21st Century Assembler

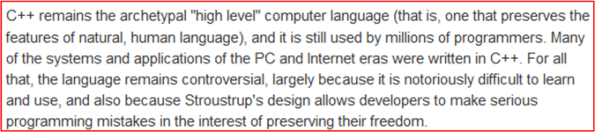

I love working in the malleable medium of C++, but I wonder if it is becoming, or has already become, the “assembly language” of the 21st century – powerful and fast, but hard to learn and prone to hard-to-diagnose mistakes. I recently stumbled upon this five-year-old interview with C++ creator Bjarne Stroustrup. The interviewer opened with:

Mr. Stroustrup, the honest soul that he is, essentially validated the opening, but with a trailing caveat:

C++ has indeed become too “expert friendly” at a time where the degree of effective formal education of the average software developer has declined. – Bjarne Stroustrup

Since “experts” tend to be more narrow-minded than the average Joe, they tend to look down upon newbies and non-experts in their area of expertise. And so it is with many a C++ programmer towards new age programmers who sidestep C++ for one of the newer, easier to learn, specialized programming languages. In the classic tit-for-tat response, new age programmers belittle C++ users as old timers who are out of touch with the 21st century and still clinging to the horse-drawn carriage in the era of the Lamborghini.

So, who’s “right“? And if you do share your opinion with me, what language do you work with daily?

Defect Diaries

Once again, I’ve stolen a graphic from Watts Humphrey and James Over’s book, “Leadership, Teamwork, and Trust“:

According to this performance chart, the best software developers (those in the first quartile of the distribution) “injected” on average less than 50 bugs (Watts calls them defects) per 1000 lines of code over the entire development effort and less than 10 per 1000 lines of code during testing. Assuming that the numbers objectively and fairly represent “reality“, the difference in quality between the top 25% and bottom 25% of this specific developer sample is approximately a factor of 250/50 = 5.

What I’d like to know, and what Humphrey/Over don’t explicitly say in the book (unless I missed it), is how these numbers were measured and reported, and how disruptive the measurement was to the development process and team. I’d also like to know what the results were used for: what decisions did management make based on the data? Let’s speculate…

I picture developers working away, designing/coding/compiling/testing against some requirements and architecture artifacts that they may or may not have had a hand in producing. Upon encountering each compiler, linker, and runtime error, each developer logs the nature of the error in his/her personal “demerit diary” and fixes it. During independent testing, the testers log and report each error they find; the development team localizes the bug to a module; and the specific developer who injected the defect fixes it and records it in his/her demerit diary.

What’s wrong with this picture? Could it be that developers wouldn’t be the slightest bit motivated to measure and record their “bug injection rates” in personal demerit diaries – and then top it off by periodically submitting their report cards lovingly to their trustworthy management “superiors”? Don’t laugh, because there’s quite a body of evidence that shows that Mr. Humphrey’s PSP/TSP process, which requires institutionalization of this “defect injection rate recording and reporting” practice, is in operation at several (many?) financially successful institutions. If you’re currently working in one of these PSP/TSP institutions, please share your experience with me. I’m curious to hear personal stories directly from PSP/TSP developers – not just from Mr. Humphrey and the CMU SEI.

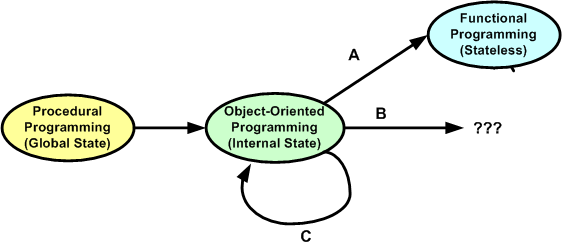

Procedural, Object-Oriented, Functional

In this interesting blog post, Dr Dobbs – Whither F#?, Andrew Binstock explores why he thinks “functional” programming hasn’t displaced “object-oriented” programming in the same way that object-oriented programming started slowly displacing “procedural” programming in the nineties. In a nutshell, Andrew thinks that the Return On Investment (ROI) may not be worth the climb:

“F# intrigued a few programmers who kicked the tires and then went back to their regular work. This is rather like what Haskell did a year earlier, when it was the dernier cri on Reddit and other programming community boards before sinking back into its previous status as an unusual curio. The year before that, Erlang underwent much the same cycle.”

“functional programming is just too much of a divergence from regular programming. ”

“it’s the lack of demonstrable benefit for business solutions — which is where most of us live and work. Erlang, which is probably the closest to showing business benefits across a wide swath of business domains, is still a mostly server-side solution. And most server-side developers know and understand the problems they face.”

So, what do you think? What will eventually usurp the object-oriented way of thinking for programmers and designers in the future? The universe is constantly in flux and nothing stays the same, so the status quo loop modeled by option C below will be broken sometime, somewhere, and somehow. No?

Movin’ On Up

For some unknown reason, I recently found myself reflecting back on how I’ve progressed as a software engineer over the years. After being semi-patient and allowing the fragmented thoughts to congeal, I neatly summed up the quagmire thus:

- Single Node – Single Process – Single Threaded (SST) programming

- Single Node – Single Process – Multi-Threaded (SSM) programming

- Single Node – Multi-Process – Multi-Threaded (SMM) programming

- Multi-Node – Multi-Process – Multi-Threaded (MMM) programming

It’s interesting to note that my progression “up the stack” of abstraction and complexity did not come about from the execution of some pre-planned, grand master strategy. I feel that I was “tugged” by some unknown force into pursuing the knowledge and skills that have gotten me to a semi-proficient state of expertise in the design and programming of MMM systems.

Being a graphical type of dude, here’s a pictorial representation of how I “moved on up“.

How about you? Do you have a master plan for movin’ on up? Wherever you are in relation to this concocted stack, are you content to stay there – in the womb so-to-speak? If you want to adopt something like it as a roadmap for professional development, are you currently immersed in the type of environment that would allow you to do so?

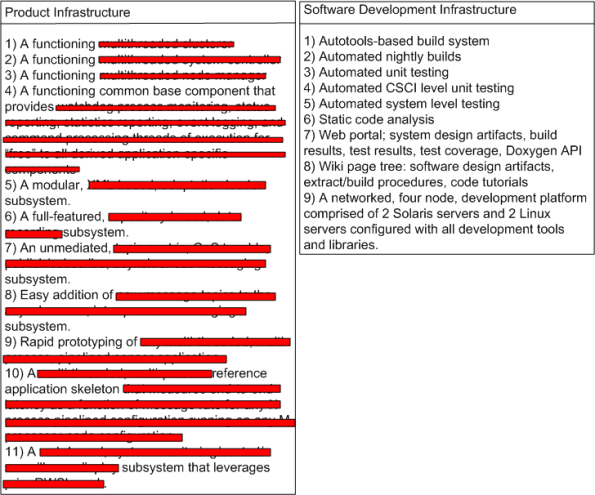

Taking Stock

Every once in a while, it’s good to step back and take stock of your team’s accomplishments over a finite stretch of time. I did this recently, and here’s the marketing hype that I concocted:

I like to think of myself as a pretty open dude, but I don’t think I’m (that) stupid. The product infrastructure features have been redacted just in case one of the three regular readers of this blasphemous blog happens to be an evil competitor who is intent on raping and pillaging my company.

The team that I’m very grateful to be working on did all this “stuff” in X months – starting virtually from scratch. Over the course of those X months, we had an average of M developers working full time on the project. At an average cost of C dollars per developer-month, it cost roughly X * M * C = $ to arrive at this waypoint. Of course, I (roughly) know what X, M, C, and $ are, but as further evidence that I’m not too much of a frigtard (<- kudos to Joel Spolsky for adding this term of endearment to my vocabulary!), I ain’t tellin’.

So, what about you? What has your team accomplished over a finite period of time? What did it cost? If you don’t know, then why not? Does anyone in your group/org know?

Fly On The Wall

Michael “Rands In Repose” Lopp has been one of my heroes for a long time. Here’s one reason why: rands tumbles – Friday Management Therapy. I would have loved to be a fly on the wall at that workshop, wouldn’t you?

BTW, does anyone know what the “Buzz Kills” attribute means? If you don’t know what I’m talkin’ bout, then you didn’t click on the link and read the list. Shame on you 🙂

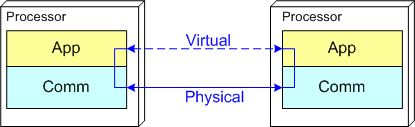

Push And Pull Message Retrieval

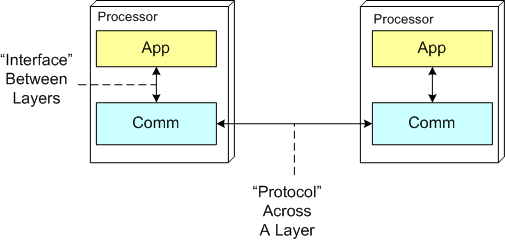

The figure below models a two layer distributed system. Information is exchanged between application components residing on different processor nodes via a cleanly separated, underlying communication “layer“. App-to-App communication takes place “virtually“, with the arcane, physical, over-the-wire, details being handled under the covers by the unheralded Comm layer.

In the ISO OSI reference model for inter-machine communication, the vertical linkage between two layers in a software stack is referred to as an “interface” and the horizontal linkage between two instances of a layer running on different machines is called a “protocol“. This interface/protocol distinction is important because solving flow-control and error-control issues between machines is much more involved than handling them within the sheltered confines of a single machine.

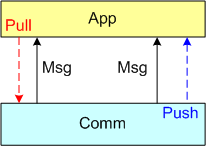

In this post, I’m going to focus on the receiving end of a peer-to-peer information transfer. Specifically, I’m going to explore the two methods by which an App component can retrieve messages from the Comm layer: Pull and Push. In the “Pull” approach, message transfer from the Comm layer to the App layer is initiated and controlled by the App component via polling. In the “Push” method, inversion of control is employed and the Comm layer initiates/controls the transfer by invoking a callback function installed by the App component on initialization. Any professional Comm subsystem worth its salt will make both methods of retrieval available to App component developers.

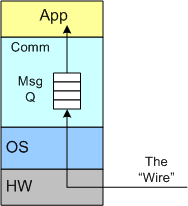

The figure below shows a model of a comm subsystem that supplies a message queue between the application layer and the “wire“. The purpose of this queue is to prevent high rate, bursty, asynchronous message senders from temporarily overwhelming slow receivers. By serving as a flow rate smoother, the queue gives a receiver App component a finite amount of time to “catch up” with bursts of messages. Without this temporary holding tank, or if the queue is not deep enough to accommodate the worst case burst size, some messages will be “dropped on the floor“. Of course, if the average send rate is greater than the average processing rate in the receiving App, messages will be consistently lost when the queue eventually overflows from the rate mismatch – bummer.
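The holding tank described above can be sketched in a few lines of C++. This is only an illustration under stated assumptions, not the queue of any real Comm subsystem: the names `Msg`, `BoundedMsgQueue`, `push`, `tryPop`, and `dropped` are all made up for the example, and the drop policy (discard the newest message when full) is just one of several possible choices.

```cpp
#include <cstddef>
#include <deque>
#include <mutex>
#include <optional>
#include <string>
#include <utility>

// Hypothetical message type; the post does not define one.
struct Msg {
    std::string payload;
};

// Fixed-depth queue sitting between the "wire" and the App layer.
class BoundedMsgQueue {
public:
    explicit BoundedMsgQueue(std::size_t depth) : depth_(depth) {}

    // Called on the Comm side for each message off the wire.
    // Returns false when the queue is full and the message is dropped.
    bool push(Msg msg) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (queue_.size() >= depth_) {
            ++dropped_;  // "dropped on the floor"
            return false;
        }
        queue_.push_back(std::move(msg));
        return true;
    }

    // Called on the App side. Returns std::nullopt when the queue is empty.
    std::optional<Msg> tryPop() {
        std::lock_guard<std::mutex> lock(mutex_);
        if (queue_.empty()) {
            return std::nullopt;
        }
        Msg msg = std::move(queue_.front());
        queue_.pop_front();
        return msg;
    }

    // Number of messages discarded because the queue was full.
    std::size_t dropped() const {
        std::lock_guard<std::mutex> lock(mutex_);
        return dropped_;
    }

private:
    const std::size_t depth_;
    mutable std::mutex mutex_;
    std::deque<Msg> queue_;
    std::size_t dropped_ = 0;
};
```

With a depth of 2, a burst of three back-to-back `push` calls would keep the first two messages and count the third as dropped; once the App pops one, there is room again.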

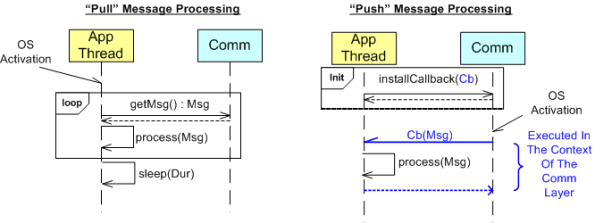

The UML sequence diagram below zeroes in on the interactions between an App component thread of execution and the Comm layer for both the “Push” and “Pull” methods of message retrieval. When the “Pull” approach is implemented, the OS periodically activates the App thread. On each activation, the App sucks the Comm layer queue dry; performing application-specific processing on each message as it is pulled out of the Comm layer. A nice feature of the “Pull” method, which the “Push” method doesn’t provide, is that the polling rate can be tuned via the sleep “Dur(ation)” parameter. For low data rate message streams, “Dur” can be set to a long time between polls so that the CPU can be voluntarily yielded for other processing tasks. Of course, the trade-off for long poll times is increased latency – the time from when a message becomes available within the Comm layer to the time it is actually pulled into the App layer.
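As a rough C++ sketch of the “Pull” side of that sequence diagram: the App thread wakes up, sucks the queue dry, then sleeps for “Dur”. Everything named here (`CommLayer`, `deliver`, `tryReceive`, `drain`, `pollLoop`) is a hypothetical stand-in invented for the example, and `std::this_thread::sleep_for` is assumed as the way the thread yields the CPU between polls.

```cpp
#include <atomic>
#include <chrono>
#include <cstddef>
#include <deque>
#include <functional>
#include <mutex>
#include <optional>
#include <string>
#include <thread>
#include <utility>

// Hypothetical message type; the post does not define one.
struct Msg {
    std::string payload;
};

// Minimal stand-in for the Comm layer's receive queue (assumed API).
class CommLayer {
public:
    // Would be called by the Comm layer when a message arrives off the wire.
    void deliver(Msg msg) {
        std::lock_guard<std::mutex> lock(mutex_);
        queue_.push_back(std::move(msg));
    }

    // Non-blocking receive: std::nullopt when nothing is queued.
    std::optional<Msg> tryReceive() {
        std::lock_guard<std::mutex> lock(mutex_);
        if (queue_.empty()) {
            return std::nullopt;
        }
        Msg msg = std::move(queue_.front());
        queue_.pop_front();
        return msg;
    }

private:
    std::mutex mutex_;
    std::deque<Msg> queue_;
};

// One activation of the App thread: pull until the queue is empty,
// processing each message as it comes out. Returns the number handled.
std::size_t drain(CommLayer& comm,
                  const std::function<void(const Msg&)>& process) {
    std::size_t handled = 0;
    while (auto msg = comm.tryReceive()) {
        process(*msg);
        ++handled;
    }
    return handled;
}

// The polling loop: drain, then sleep for "Dur" before the next poll.
// A longer dur frees up CPU but raises worst-case latency.
void pollLoop(CommLayer& comm,
              std::chrono::milliseconds dur,
              const std::atomic<bool>& running,
              const std::function<void(const Msg&)>& process) {
    while (running.load()) {
        drain(comm, process);
        std::this_thread::sleep_for(dur);
    }
}
```

In a real App, `pollLoop` would run on its own thread and `running` would be cleared at shutdown. Note that a message arriving just after a drain waits up to roughly `dur` before it is seen, which is the latency trade-off described above.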

In the “Push” method of message retrieval, during runtime the Comm layer activates the App thread by invoking the previously installed App callback function, Cb(Msg), for each newly received message. Since the App’s process(Msg) method executes in the context of a Comm layer thread, it can bog down the Comm subsystem and cause it to miss high rate messages coming in over the wire if it takes too long to execute. On the other hand, the “Push” method can be more responsive (lower latency) than the “Pull” method if the polling “Dur” is set to a long time between polls.
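Here is a minimal C++ sketch of the “Push” arrangement, assuming a `std::function` callback. The names `PushCommLayer`, `installCallback`, and `onWireMessage` are hypothetical, and discarding messages that arrive before a callback is installed is an assumption of this example rather than anything the post specifies.

```cpp
#include <functional>
#include <string>
#include <utility>

// Hypothetical message type; the post does not define one.
struct Msg {
    std::string payload;
};

// Minimal stand-in for a Comm layer that supports "Push" retrieval.
class PushCommLayer {
public:
    using Callback = std::function<void(const Msg&)>;

    // The App installs its callback (the post's Cb(Msg)) at initialization.
    void installCallback(Callback cb) { cb_ = std::move(cb); }

    // Would be invoked by the Comm layer's receive thread for each message
    // off the wire. Returns false if no callback is installed yet, in which
    // case this sketch simply discards the message.
    bool onWireMessage(const Msg& msg) {
        if (!cb_) {
            return false;
        }
        // App code runs right here, in the Comm layer's context. If it is
        // slow, the next message off the wire has to wait.
        cb_(msg);
        return true;
    }

private:
    Callback cb_;
};
```

Because the callback body executes inside `onWireMessage`, any heavy processing placed there directly delays the Comm layer, which is the coupling the paragraph above warns about.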

So, which method is “better”? Of course, it depends on what the Application is required to do, but I lean toward the “Pull” method in high rate streaming sensor applications for these reasons:

- In applications like sensor stream processing, which require a lot of number crunching and/or data association on each incoming message, the “Pull” method runs the App-specific processing logic in the context of the App thread rather than a Comm layer thread. Comm layer performance therefore does not depend on App-specific performance, and the layers are more loosely coupled.

- The “Pull” approach is simpler to code up.

- The “Pull” approach is tunable via the sleep “Dur” parameter.

How about you? Which do you prefer, and why?