Slice By Slice Over Layer By Layer

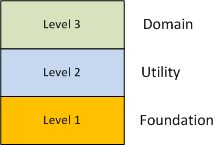

Assume that your team was tasked with developing a large software system in an application domain of your choice. Also, assume that in order to manage the functional complexity of the system, your team iteratively applied the “separation of concerns” heuristic during the design process and settled on a cleanly layered system like this:

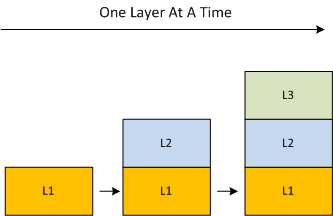

So, how are you gonna manifest your elegant paper design as a working system running on real, tangible hardware? Should you build it from the bottom up like you make a cake, one layer at a time?

Or, should you build it like you eat a cake, one slice at a time?

The problem with growing the system layer-by-layer is that you can end up developing functionality in a lower layer that may not ever be needed in the higher layers (an error of commission). You may also miss coding up some lower layer functionality that is indeed required by higher layers because you didn’t know it was needed during the upfront design phase (an error of omission). By employing the incremental slice-by-slice method, you’ll mitigate these commission/omission errors and you’ll have a partially working system at the end of each development step – instead of waiting until layers 1 and 2 are solid enough to start adding layer 3 domain functionality into the mix.
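Here's a minimal sketch of what slice-by-slice growth can look like in code. The three layers and the order-handling features are hypothetical, invented purely for illustration. Each slice adds only the lower-layer methods that the slice itself needs, so nothing is guessed at up front:

```python
# A hypothetical three-layer system grown one slice at a time.
# Slice 1 = "place an order", slice 2 = "show an order total".

class Storage:                       # layer 1 (lowest)
    def __init__(self):
        self._rows = {}

    def put(self, key, value):       # added for slice 1
        self._rows[key] = value

    def get(self, key):              # added for slice 2, when first needed
        return self._rows[key]


class OrderService:                  # layer 2
    def __init__(self, storage):
        self._storage = storage

    def save(self, order_id, prices):    # added for slice 1
        self._storage.put(order_id, list(prices))

    def load(self, order_id):            # added for slice 2
        return self._storage.get(order_id)


class OrderApp:                      # layer 3 (domain functionality)
    def __init__(self, service):
        self._service = service

    def place_order(self, order_id, prices):   # slice 1
        self._service.save(order_id, prices)

    def order_total(self, order_id):            # slice 2
        return sum(self._service.load(order_id))


app = OrderApp(OrderService(Storage()))
app.place_order("A-1", [5, 7])
print(app.order_total("A-1"))
```

After slice 1 alone, the system already runs end to end (orders can be placed and stored), even though layers 1 and 2 are nowhere near "complete".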

In the context of organizational growth, Russell Ackoff once stated something like: “it is better to grow horizontally than vertically”. Applying Russ’s wisdom to the growth of a large software system:

It’s better to grow a software system horizontally, one slice at a time, than vertically, one layer at a time.

The above quote is not some profound, original, BD00 quote. It’s been stated over and over again by multitudes of smart people over the years. BD00 just put his own spin on it.

Fragmented, Half-baked, Thoughts

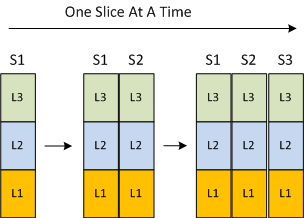

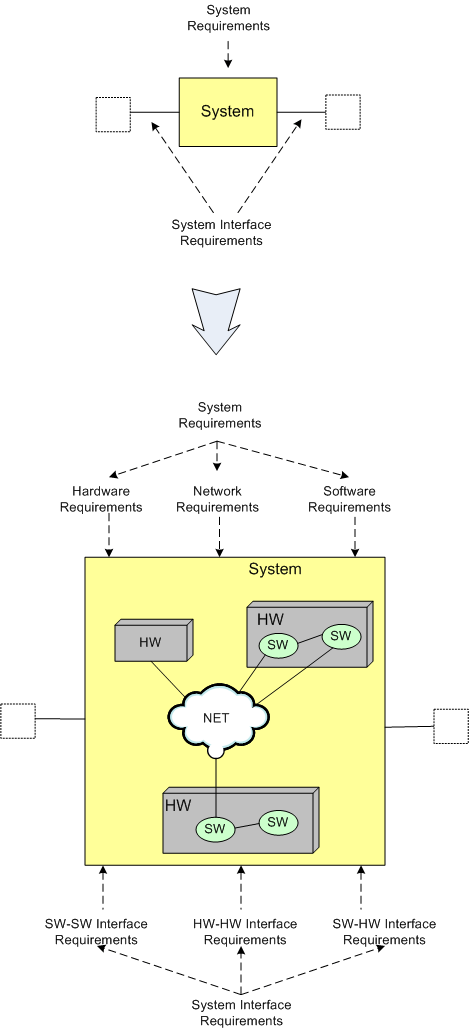

It took me approximately 20 minutes of iterative e-sketching to whip up the following figure:

During the process of construction, I experienced a slew of fragmented, half-baked thoughts on how to present the intent and meaning of the diagram with some accompanying text. After I deemed the diagram “done”, I uploaded it to this page and reflected on it for 10 more minutes – trying to stitch the thought fragments together into some kind of coherent story that would interest you. I know the overarching theme has something to do with “matched system design”, but that’s all. I failed; and it’s time to move on.

Meet RAID And DIRA

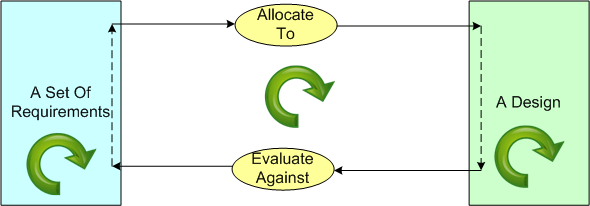

If you’re a C++ programmer, you’ve surely written code in accordance with the RAII (Resource Acquisition Is Initialization) idiom. Inspired by the RAII acronym, BD00 presents the RAID idiom: Requirements Allocation Is Design…

In order to allocate requirements to a design, you must have a design in mind that you think satisfies those requirements. Circularly speaking, in order to create a design (like the one above), you must have a set of requirements in mind to fuel your design process. Thus, RAID == DIRA (Design Is Requirements Allocation).
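One way to see the circularity is to try writing an allocation table down. In the sketch below, the requirement IDs and component names are made up for illustration; the point is only that you can't fill in the table without naming design components, and you can't justify a component without pointing at a requirement:

```python
# Hypothetical requirements and design components, for illustration only.
requirements = {
    "R1": "detect targets",
    "R2": "track targets",
    "R3": "display tracks",
}
components = {"SignalProcessor", "Tracker", "Display", "Recorder"}

# The allocation itself: which components satisfy which requirement.
allocation = {
    "R1": ["SignalProcessor"],
    "R2": ["Tracker"],
    "R3": ["Tracker", "Display"],
}


def unallocated(requirements, allocation):
    """Requirements that no component has been asked to satisfy."""
    return sorted(r for r in requirements if not allocation.get(r))


def unjustified(components, allocation):
    """Components that no requirement calls for."""
    used = {c for comps in allocation.values() for c in comps}
    return sorted(components - used)


print(unallocated(requirements, allocation))
print(unjustified(components, allocation))
```

An empty first list means every requirement has a home in the design; a non-empty second list flags design elements that nobody asked for.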

The Goldilocks Dilemma

With increasing product complexity comes the necessity for technical specialization. For example, I help build multi-million dollar air defense and air traffic control radars that require the integration of:

- RF microwave antenna design skills,

- electro-mechanical design skills,

- physical materials design skills,

- analog RF/IF transmitter and receiver design skills,

- digital signal processing hardware design skills,

- secure internet design skills,

- mathematical radar waveform and tracker design skills,

- real-time embedded software design skills,

- web/GUI software design skills,

- database design skills.

Unless you’re lucky enough to be blessed with a team of Einsteins, it’s impractical, to the point of insanity, to expect people to become proficient in more than one (perhaps two is doable, but rare) of these deep, time-consuming-to-acquire engineering skill sets.

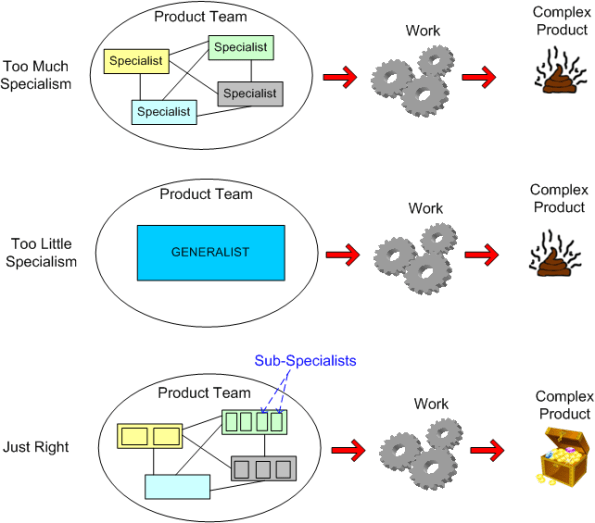

As the figure below illustrates, one of the biggest challenges in complex product development is the Goldilocks dilemma: deciding how much specialism is “just right” for your product development team.

Too much specialism leads to a rapid, roughly quadratic, increase in the number of inter-specialist communication links/languages that must be managed effectively. Too little specialism leads to the aforementioned “team of Einsteins” syndrome or, in the worst case, the “too many eggs in one basket” risk.
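To put a rough number on the communication-link problem: assuming, purely for illustration, that every specialty may need to talk directly to every other one, n specialties imply n(n-1)/2 pairwise links. The function name below is made up for this sketch:

```python
def pairwise_links(n):
    """Number of distinct pairs among n specialist groups: n * (n - 1) / 2."""
    return n * (n - 1) // 2


# 10 is the number of skill sets in the radar list above.
for n in (2, 5, 10):
    print(n, "specialties ->", pairwise_links(n), "links")
```

Under that assumption, the ten radar skill sets listed earlier would already imply 45 potential specialist-to-specialist interfaces.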

So, is there some magic, plug & play formula that you can crank through to determine the optimal level of specialism required in your product development team? I suspect not, but hey, if you develop one from first principles, lemme know and we’ll start a new consulting LLP to milk that puppy. Hell, even if you pull one out of your ass that people with lots o’ money will buy into, still gimme a call.

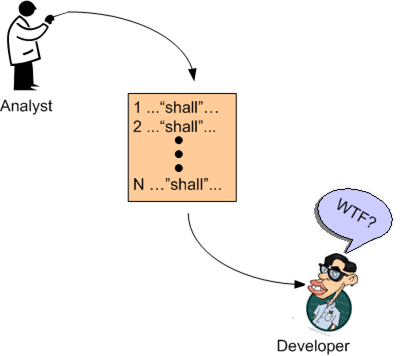

There “Shall” Be A Niche

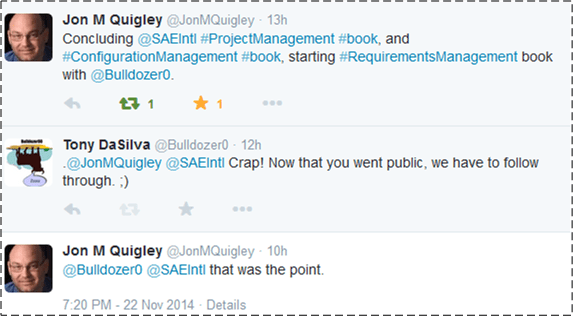

Someone (famous?) once said that a good strategy to employ to ensure that you get something done is to publicize what you’re going to do for all to see:

As you can see, my newfound friend, multi-book author Jon M. Quigley (check out his books at Value Transformation LLC), proposed, and I accepted, a collaborative effort to write a book on the topic of product requirements. D’oh!

Why the “D’oh!”? As you might guess, there are a bazillion “requirements” books already out there in the wild. Here is just a sampling of some of those that I have access to via my safaribooksonline.com account:

Of course, I haven’t read them all, but I have read both of Mr. Wiegers’s books and the Hatley/Hruschka book – all very well done. I’ve also read two great requirements books (not on the above list) by my favorite software author of all time, Mr. Gerry Weinberg: “Exploring Requirements” and “Are Your Lights On?”.

Jon and I would love to differentiate our book from the current crop – some of which are timeless classics. It’s not that we expect to eclipse the excellence of Mr. Weinberg or Mr. Wiegers; we’re simply looking for a niche. Perhaps a “Head First” or “Dummies” approach may satisfy our niche “requirement” :). Got any ideas?

The biggest obstacle, and it is indeed huge, in front of me is simply that:

“My ambition is handicapped by laziness” – Charles Bukowski

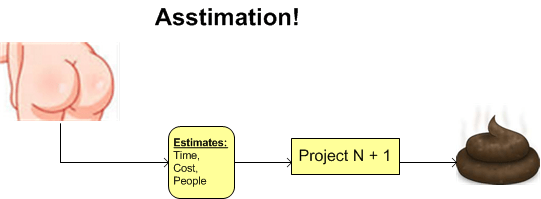

Asstimation!

Here’s one way of incrementally learning how to generate better estimates:

Like most skills, learning to estimate well is simple in theory but difficult in practice. For each project, you measure and record the actuals (time, cost, number/experience/skill-types of people) you’ve invested in your project. You then use your historical metrics database to estimate what it should take to execute your next project. You can even supplement/compare your empirical, company-specific metrics with industry-wide metrics available from reputable research firms.


It should be obvious, but good estimates are useful for org-wide executive budgeting/allocating of limited capital and human resources. They’re also useful for aiding a slew of other org-wide decisions (do we need to hire/fire, take out a loan, restrict expenses in the short term, etc.). Nevertheless, just like any other tool/technique used to develop non-trivial software systems, the process of estimation is fraught with risks of “doing it badly”. It requires discipline and perseverance to continuously track and record project “actuals”. Perhaps hardest of all is the ongoing development and maintenance of a system where historical actuals can be easily categorized and painlessly accessed to compose estimates for important impending projects.
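The record-then-reuse loop described above can be sketched in a few lines. Everything here is an assumption made for illustration: the project categories, the numbers, and the choice of a median overrun ratio as the calibration rule. A real historical database would track far more (cost, head count, skill types):

```python
from statistics import median

# Hypothetical historical records: (category, estimated_weeks, actual_weeks).
history = [
    ("gui", 10, 14),
    ("gui", 8, 12),
    ("embedded", 20, 30),
    ("embedded", 12, 15),
    ("gui", 6, 9),
]


def overrun_ratio(history, category):
    """Median actual/estimate ratio for past projects in one category."""
    ratios = [actual / est for cat, est, actual in history if cat == category]
    if not ratios:
        raise ValueError(f"no actuals recorded for {category!r}")
    return median(ratios)


def calibrated_estimate(history, category, raw_estimate):
    """Scale a gut-feel estimate by how far off similar projects ran."""
    return raw_estimate * overrun_ratio(history, category)


print(calibrated_estimate(history, "gui", 10))
```

The sketch also shows why categorization and easy access matter: with no recorded actuals for a category, there is nothing to calibrate against and the function can only raise an error.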

In the worst cases of estimation dysfunction, the actuals aren’t tracked/recorded at all, or they’re hopelessly inaccessible, or both. Forgoing the thoughtful design, installation, and maintenance of a historical database of actuals (rightfully) fuels the radical #noestimates Twitter community and leads to the well-known, well-trodden practice of:

Rollercoaster

Wanna go on a wildly fun rollercoaster ride? Then watch Erik Meijer’s “One Hacker Way” rant. Right out of the gate, I guesstimate that he alienated at least half of his audience with his opening “if you haven’t checked in code in the last week, what are you doing at a developer’s conference?” question.

I didn’t agree with all of what Erik said (I doubt anyone did), but I give him full credit for sticking his neck out and attacking as many sacred cows as he could: Agile, Scrum, 2 day certification, TDD “waste”, non-hackers, planning poker, the myth of self-organizing teams, etc. Mmmm, sacred cows make the best tasting hamburgers.

Uncle Bob Martin, the self-smug pope who arrogantly proclaimed “If you don’t do TDD, you’re unprofessional”, tried to make light of Erik’s creative rant with this lame blog post: “One Hacker Way!”. Nice try Bob, but we know you’re seething inside because many of your sacredly held beliefs were put on the stand. You seem to enjoy hacking the sacred cows of the reviled “traditional” way of developing software, but it’s a different story when your own cutlets are at steak.

Mr. Meijer pointed out what I, and no doubt many others, have thought for years: agile, particularly Scrum, is a subtle, insidious form of control. At least with explicit, transparent control, one knows the situation and where one stands. With Scrum, the koolaid-guzzling flock is duped into believing they’re in control; all the while being micro-managed with daily standups, burndown charts, velocity tracking, and cutesy terminology. No wonder it’s amassed huge fame and success – managers love Scrum because it makes their job easier and anesthetizes the coders.

My fave laugh-out-loud moment in Erik’s talk was when he presented Jeff Sutherland as the perceived messiah of software development:

I’ve found that the best books and talks are those in which I find some of the ideas enlightening and some revolting. Erik Meijer’s talk is certainly one of those brain-busting gems.

The test of a first-rate intelligence is the ability to hold two opposing ideas in mind at the same time and still retain the ability to function. – F. Scott Fitzgerald

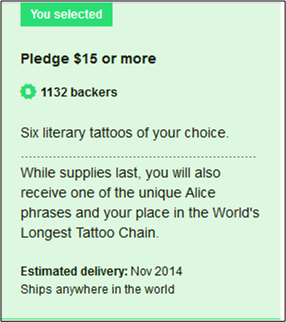

Nice Tatt!

It’s official! I’m the 4,829th member of the “Alice In Wonderland Tattoo Chain”.

Several months ago, I signed up for a kool Kickstarter project whose goal is to create and photograph the world’s largest tattoo chain. The completed project, brilliantly conceived of by Litograph’s Danny Fein, will be composed of over 5,000 temporary tattoos. Each tattoo is one sentence from Lewis Carroll’s classic book “Alice’s Adventures in Wonderland”.

I received and applied my tattoo last week. I then uploaded a picture of my tatt to the collection site:

After one week of distribution, over 500 tatt pics have been uploaded. When all 5,000+ tattoos (over 55,000 words) have been uploaded, anyone will be able to read the entire book as one long sequence of online tattoos. Pretty creative, huh?


At Litograph.com, besides tattoos, you can buy T-shirts and other items with the entire text of one of many classic works of literature delicately imprinted on them. I purchased the most appropriate T-shirt I could find for BD00, Machiavelli’s “The Prince” 🙂

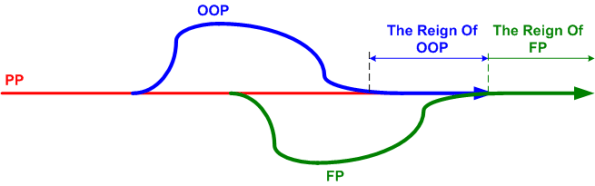

Regime Change

Revolution is glamorous and jolting; evolution is mundane and plodding. Nevertheless, evolution is sticky and long-lived whereas revolution is slippery and fleeting.

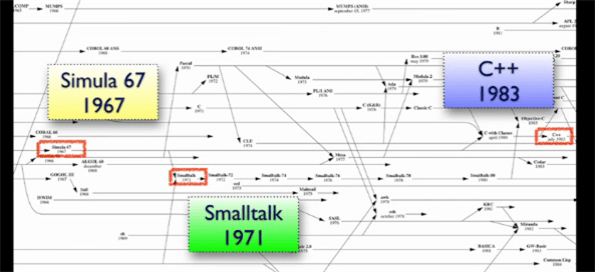

As the figure below from Neal Ford’s OSCON “Functional Thinking” talk reveals, it took a glacial 16 years for Object-Oriented Programming (OOP) to firmly supplant Procedural Programming (PP) as the mainstream programming style of choice. There was no revolution.

Starting with, arguably, the first OOP language, Simula, the subsequent appearance of Smalltalk nudged the acceptance of OOP forward. The inclusion of object-oriented features in C++ further accelerated the adoption of OOP. Finally, the emergence of Java in the late 90’s firmly dislodged PP from the throne in an evolutionary change of regime.

I suspect that the main reason behind the dethroning of PP was the ability of OOP to more gracefully accommodate the increasing complexity of software systems through the partitioning and encapsulation of state.

Mr. Ford asserts, and I tend to agree with him, that functional programming is on track to inherit the throne and relegate OOP to the bench – right next to PP. The main force responsible for the ascent of FP is the proliferation of multicore processors. PP scatters state, OOP encapsulates state, and FP eschews state. Thus, the FP approach maps more naturally onto independently running cores – minimizing the need for performance-killing synchronization points where one or more cores wait for a peer core to finish accessing shared memory.

The mainstream-ization of FP can easily be seen by the inclusion of functional features into C++ (lambdas, tasks, futures) and the former bastion of pure OOP, Java (parallel streams). Rather than cede the throne to pure functional languages like the venerable Erlang, these older heavyweights are joining the community of the future. Bow to the king, long live the king.

Cry Babies

On Oct. 6, the US Air Force awarded the Raytheon/Saab team a phase-one, four-year, $19M, fixed-price contract to deliver 3 long-range ground-to-air surveillance radars by 2018. The other two contractors vying for the award were Lockheed Martin (LM) and Northrop Grumman (NG).

The contract award was expected in early September, but it was pushed back until Oct. 6. That could have been to ensure everything was handled properly to head off a potential protest from either Lockheed Martin or Northrop Grumman.

The total 3DELRR (3 Dimension Expeditionary Long Range Radar) contract value is estimated at $71M, and it requires the Raytheon-led team to produce an additional 3 radars. In the long run, the Air Force plans to procure 29 more radars for a grand total of 35 sensor systems. The total contract effort could span decades and possibly net $1B for the team. In addition, since “exportability” features were baked into the design UP FRONT, Raytheon will have the opportunity to sell many more radars to US allies all over the world without having to be concerned about US foreign technology transfer restrictions.

Since we’re not talkin’ chump change here, you can infer that the losing competitors were not at all happy with the Air Force’s decision – despite the delay to ensure a fair evaluation. And of course, as is standard practice with big government contracts, both losers filed formal protests of the award with the US government’s GAO (Government Accountability Office) after they were formally debriefed on why they lost. NG did not publicly specify the grounds for its protest, but LM proclaimed that their offering was “the most affordable and capable solution.”

The need to allow for contract award protests is obvious: to ensure that no hanky-panky occurred behind the scenes during the decision-making process. However, since the chances of being successful are small and the protest process is normally a huge waste of time and money for all involved parties, you would think that controls would be in place to prevent every single big-ticket award from being protested willy-nilly. But alas, no matter what controls are in effect, a desperate loser can always find a cadre of clever lawyers to skirt the rules.