
Functional Allocation I

Some system engineering process descriptions talk about “Functional Allocation” as being one of the activities that is performed during product development. So, what is “Functional Allocation”? Is it the allocation of a set of interrelated functions to a set of “something else”? Is it the allocation of a set of “something else” to a set of function(s)? Is it both? Is it done once, or is it done multiple times, progressing down a ladder of decreasing abstraction until the final allocation iteration is from something abstract to something concrete and physically measurable?

I hate the word “allocation”. I prefer the word “design” because that’s what the activity really is. Given a specific set of items at one level of abstraction, the “allocator” must create a new set of items at the next lower level of abstraction. That seems like design to me, doesn’t it? Depending on the nature and complexity of the product under development, conjuring up the next lower level set of items may be very difficult. The “allocator” has an infinite set of items to consciously choose from and purposefully interconnect. “Allocation” implies a bland, mechanistic, and deterministic procedure of apportioning one set of given items to another different set of given items. However, in real life only one set of items is “given” and the other set must be concocted out of nowhere.

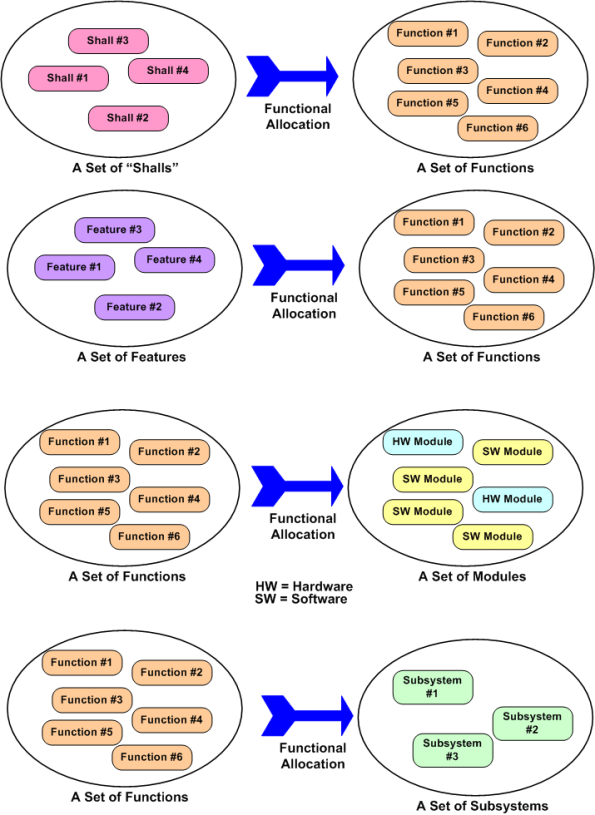

The figure below shows four different types of functional allocations: shalls-to-functions, features-to-functions, functions-to-modules, and functions-to-subsystems. Each allocation example has a set of functions involved. In the first two examples, the set of functions has been allocated “to”, and in the last two examples, the set of functions has been allocated “from”.
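To make the "to" and "from" directions concrete, here is a minimal sketch of a functions-to-modules allocation captured as a plain mapping, with a bookkeeping check on top. Everything in it (the `check_allocation` helper and the function and module names) is hypothetical and made up for illustration. It only audits an allocation that a human has already designed; it does not conjure up the lower-level items.

```python
from typing import Dict, List, Set


def check_allocation(
    sources: Set[str],
    targets: Set[str],
    allocation: Dict[str, Set[str]],
) -> Dict[str, List[str]]:
    """Audit an allocation of source items (e.g. functions) to target items (e.g. modules)."""
    # Source items that were never allocated to anything.
    unallocated = sorted(s for s in sources if not allocation.get(s))
    # Every target item that at least one source item points at.
    used = set().union(*allocation.values())
    # Target items that exist but received no allocation.
    unused = sorted(targets - used)
    # Allocations that point at targets nobody declared.
    unknown = sorted(used - targets)
    return {"unallocated": unallocated, "unused": unused, "unknown": unknown}


if __name__ == "__main__":
    # Hypothetical example data, not taken from any real project.
    functions = {"detect", "track", "display"}
    modules = {"SignalProc", "Tracker", "HMI"}
    allocation = {"detect": {"SignalProc"}, "track": {"Tracker"}}
    print(check_allocation(functions, modules, allocation))
```

The same check works for shalls-to-functions or features-to-functions by swapping what plays the role of source and target.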

So, again I ask, what is functional allocation? And a question for managers who love to remove people from the loop and automate every activity they possibly can to reduce costs: can human beings ever be removed from the process of functional allocation? If you said no, then what can you do to help make the process of allocation more efficient?

Archeosclerosis

Archeosclerosis is sclerosis of the software architecture. It is a common malady that afflicts organizations that don’t properly maintain and take care of their software products throughout the lifecycle.

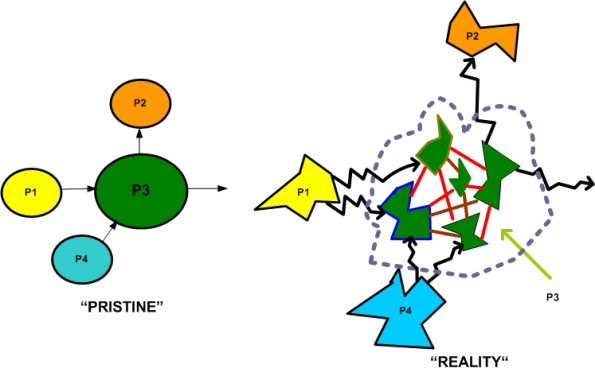

The time-lapse figure below shows the devastation caused by the failure to diligently keep entropy growth in check over the lifetime of a product. On the left, we have a four-process application program that satisfies a customer need. On the right, we have a snapshot of the same program after archeosclerosis has set in.

Usually, but not always, the initial effort to develop the software yields a clean design that’s easy to maintain and upgrade. Over time, as new people come on board and the original developers move on to other assignments, the structure starts turning brittle. Bug fixes and new feature additions become swashbuckling adventures into the unknown and lessons in futility.

Fueled by schedule pressure and the failure of management to allocate time for periodic refactoring, developers shoehorn in new interfaces and modules. Pre-existing components get modified and lengthened. The original program structure fragments and the whole shebang morphs into a jagged quagmire.

Of course, the program’s design blueprints and testing infrastructure suffer from neglect too. Since they don’t directly generate revenue, money, time, and people aren’t allocated to keep them in sync with the growing software hairball. Besides, since all resources are needed to keep the house of cards from collapsing, no resources can be allocated to these or any other peripheral activities.

As long as the developer org has a virtual monopoly in their market, and the barriers to entry for new competitors are high, the company can stay in business, even though they are constantly skirting the edge of disaster.

Bozo Planning

Why is it that big government and big industry continue to cling to an archaic planning method that clearly doesn’t work? History has repeatedly shown that the Big, One Time Planning (BOTP) method is rapidly becoming more obsolete as the pace of change accelerates. The top half of the figure below shows what has been, and continues to be, done for Big, Complex, Multi-technology, Product (BCMP) development jobs.

By definition, BCMPs are hairballs that no one fully understands at the outset. As time ticks forward and the effort progresses, learning naturally occurs and new knowledge is discovered and accumulated. This newfound knowledge validates the invalidity of the golden BOTP plan. Strangely but surely, as THEE plan becomes more disconnected from reality, no one says or does anything until the mismatch gets in your face. Driven by fear, no one wants to be the first one to step up and announce that the emperor needs a new wardrobe. Eventually, shoddy work and buggy, failure-prone components become visible and impossible to ignore. Finger pointing and defensiveness take over. It’s sick city until the crap gets delivered or the whole shebang is canceled in a highly publicized hatefest.

The bottom of the figure shows an alternative to the toxic BOTP method. It’s called: Planning To Continuously Replan (PTCR). In this method, everyone develops a shared understanding at the outset that PTCR will be used to increase (but not guarantee) the chance of project success. In PTCR, replanning is done at necessary points in the effort. How does a project manager know when one of these necessary points in time has occurred? By rolling up his/her sleeves, getting close to the team and, most importantly, personally monitoring the intermediate work products that are being created in real-time. Trust but verify. There’s no distancing and disconnecting from the project like the bozo executors of the BOTP technique do.

It’s my guess that BOTP will continue to be used by vendors and purchasers of BCMPs in the future. Because of the fear of change and the importance of maintaining a (false) image of infallibility, the comfort of the same-old same-old is always preferred to the discomfort of the new. This, in spite of the ineffectiveness of the same-old same-old. As Mark Twain said: “I love progress, it’s change that I hate”.

Tasks, Threads, And Processes

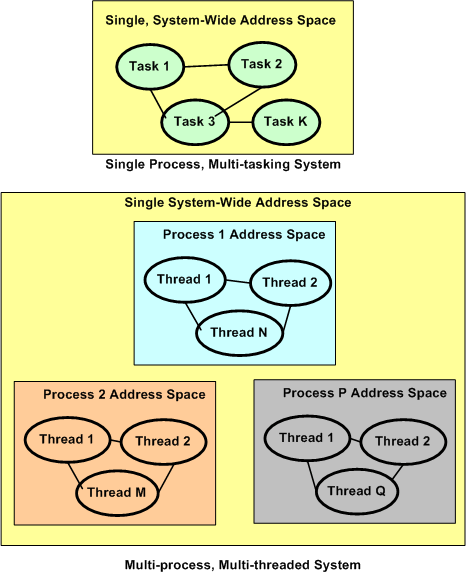

Tasks, threads, and processes. What’s the difference between them? Most, if not all, Real-Time Operating Systems (RTOSes) are intended to run stand-alone applications that require small memory footprints and low-latency responsiveness to external events. They’re designed around the concept of a single, unified, system-wide memory address space that is shared among a set of prioritized application “tasks” that execute under the control of the RTOS. Context switching between tasks is fast in that no Memory Management Unit (MMU) hardware control registers need to be saved and retrieved to implement each switch. The trade-off for this increased speed is that when a single task crashes (divide by zero, or bus error, or illegal instruction, anyone?), the whole application gets hosed. It’s reboot city.

Modern desktop and server operating systems are intended for running multiple, totally independent, applications for multiple users. Thus, they divide the system memory space into separate “process” spaces so that when one process crashes, the remaining processes are unaffected. Hardware support in the form of an MMU is required to pull off process isolation and independence. The trade-off for this increased reliability is slower context switching times and responsiveness.

The figure below graphically summarizes what the text descriptions above have attempted to communicate. All modern, multi-process operating systems (e.g. Linux, Unix, Solaris, Windows) also support multi-threading within a process. A thread is the equivalent of an RTOS task in that if a thread crashes, the application process within which it is running can come tumbling down. Threads provide the option for increased modularity and separation of concerns within a process at the expense of another layer of context switching (beyond the layer of process-to-process context switching) and further decreased responsiveness.

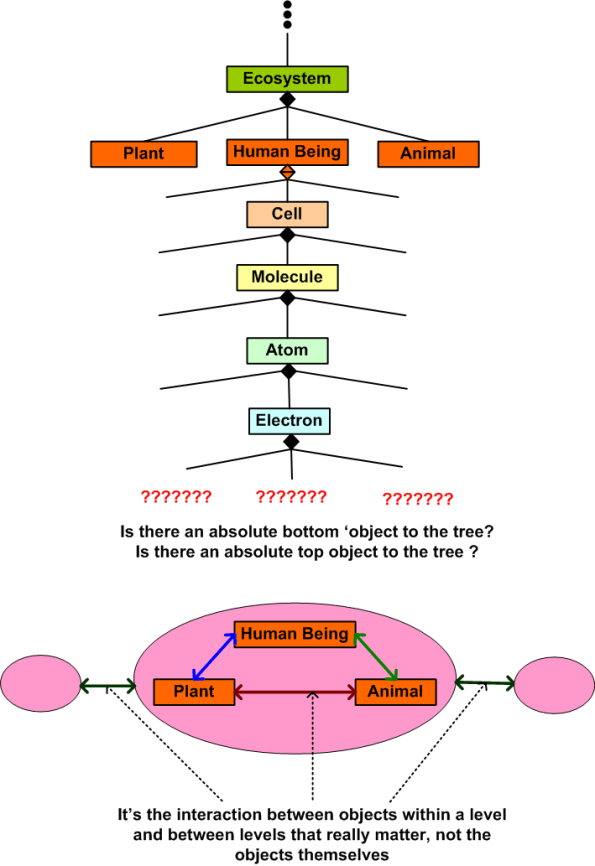
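Here is a minimal Python sketch of the two properties described above: a thread shares its parent's address space, while a crashed process leaves its parent standing. The function names are made up for illustration, and `os.abort()` is used as a stand-in for a hard fault like a bus error. One caveat: an uncaught Python exception inside a thread only ends that thread, so this sketch shows the shared memory side of threading and not the "come tumbling down" side, which takes a real hardware-level fault.

```python
import subprocess
import sys
import threading


def run_thread_demo() -> int:
    """A thread mutates data owned by its parent: same address space."""
    shared = {"counter": 0}

    def bump() -> None:
        # No copying or message passing: this is the parent's dict.
        shared["counter"] += 1

    worker = threading.Thread(target=bump)
    worker.start()
    worker.join()
    return shared["counter"]


def run_process_demo() -> int:
    """A child process dies abruptly; the parent only sees an exit status."""
    # The child runs in its own address space. os.abort() kills it on the
    # spot, which stands in for a divide by zero or illegal instruction.
    child_code = "import os; os.abort()"
    result = subprocess.run(
        [sys.executable, "-c", child_code],
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    return result.returncode


if __name__ == "__main__":
    print("counter after thread ran:", run_thread_demo())
    print("child exit status:", run_process_demo())
    print("parent is still running")
```

The exact exit status of the aborted child depends on the operating system, so the sketch only reports it and does not assume a particular value.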

Trees

The tree below is my personal creation. Your tree would likely be different than my tree. Nature creates perfect trees. Man tends to destroy nature’s trees and to create arbitrary artificial trees to suit his needs. Man must create, either consciously or unconsciously, conceptual trees to make sense of the world. How attached are you to your trees? Are your trees THE right trees and are my trees wrong? Are trees created by ‘experts’ the trees that all should unquestionably embrace? Who are the ‘experts’?

Creating the vertical aspect of the tree is called leveling. Creating the horizontal aspect of the tree is called balancing. Leveling and balancing, along with scoping and bounding, are powerful systems analysis and synthesis tools.

Unnecessarily Complex, or Sophisticated?

I recently stumbled upon the following quote:

“A lot of people mistake unnecessary complexity for sophistication.” – Dan Ward

Likewise, these two quotes from maestro da Vinci resonate with me:

“Simplicity is the ultimate sophistication.” – Leonardo da Vinci

“In nature’s designs, nothing goes wanting, and nothing is superfluous.” – Leonardo da Vinci

When designing and developing a large software-intensive system, over-designing it (i.e. adding too much unessential complexity to the system’s innate essential complexity) can lead to disastrously expensive downstream maintenance costs. The question I have is, “how do you know if you have enough expertise to confidently judge whether an existing complex system is over-designed or not?”. Do you just blindly trust the subjective experts that designed the system when they say “believe me, all the complexity in the system is essential”? If you’re a true layman, then there is probably no choice – ya gotta believe. But what if you’re a tweener? Between a layman and an expert? It’s dilemma city.

The figure below depicts a simple model of a generic multi-sensor system. The individual sensors may probe, detect, and measure any one or more of a plethora of physical attributes in the external environment to which they are “attached”. For example, the raw sensor samples may represent pressure, temperature and/or wind speed measurements. They also may represent the presence of objects in the external environment, their positions, and/or kinematic movements.

The fusion processor produces an integrated surveillance picture from the inputs it receives via all of the individual sensors. This fused picture is then propagated further downstream for:

- display to human users,

- additional value-added processing,

- automatically issuing control actions to actuators (e.g. gates, lights, valves, motors) embedded in the external environment.

Now assume that you are given a model of a multi-sensor system as shown in the figure below. Is the feedback interface from the fusion processor back to one (and only one) sensor evidence of an over-designed system and/or unnecessary added complexity? Well, it depends.

If the feedback interface was purposely designed into the system in order to increase the native functionality or performance of the individual sensor processor that utilizes the data, then the system may not be over-designed and the added complexity introduced by designing in the interface may be essential. If the feedback loop only serves to “push back” into the sensor processor some functionality that the fusion processor can easily do itself, then the interface may be interpreted as adding some unessential complexity to the system.
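As a toy illustration of the "essential" case, the sketch below wires a feedback path from a fusion processor back to exactly one sensor, which uses the fused picture to cancel its own bias. All names (`Sensor`, `FusionProcessor`, `accept_feedback`), the averaging fusion rule, and the numbers are assumptions invented for this example; they are not taken from any real system.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional


@dataclass
class Sensor:
    name: str
    bias: float = 0.0        # hypothetical fixed measurement error
    correction: float = 0.0  # only ever changed through the feedback interface

    def measure(self, truth: float) -> float:
        return truth + self.bias - self.correction

    def accept_feedback(self, fused: float, own_reading: float) -> None:
        # Treat the gap between our reading and the fused picture as bias.
        self.correction += own_reading - fused


class FusionProcessor:
    def __init__(self, sensors: List[Sensor], feedback_index: Optional[int] = None):
        self.sensors = sensors
        self.feedback_index = feedback_index  # None means no feedback interface

    def step(self, truth: float) -> float:
        readings = [s.measure(truth) for s in self.sensors]
        fused = mean(readings)  # simplest possible fusion: a plain average
        if self.feedback_index is not None:
            i = self.feedback_index
            self.sensors[i].accept_feedback(fused, readings[i])
        return fused


def run(feedback_index: Optional[int], steps: int = 10) -> float:
    """Return the fused value after `steps` cycles with the truth held at 10.0."""
    sensors = [Sensor("A"), Sensor("B"), Sensor("C", bias=3.0)]
    fusion = FusionProcessor(sensors, feedback_index)
    fused = 0.0
    for _ in range(steps):
        fused = fusion.step(truth=10.0)
    return fused


if __name__ == "__main__":
    print("without feedback:", run(None))
    print("with feedback to sensor C:", run(2))
```

In this setup the feedback improves what the biased sensor itself reports, so the interface earns its keep. If the fusion processor could simply subtract the bias on its own side, the same loop would be a candidate for unessential complexity.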

In any system design or system analysis/evaluation process, effectively judging what is essential and what is unessential can be a difficult undertaking. The more innately complex a system is, the more difficult it is to ferret out the necessary from the unnecessary.

Product Development Systems

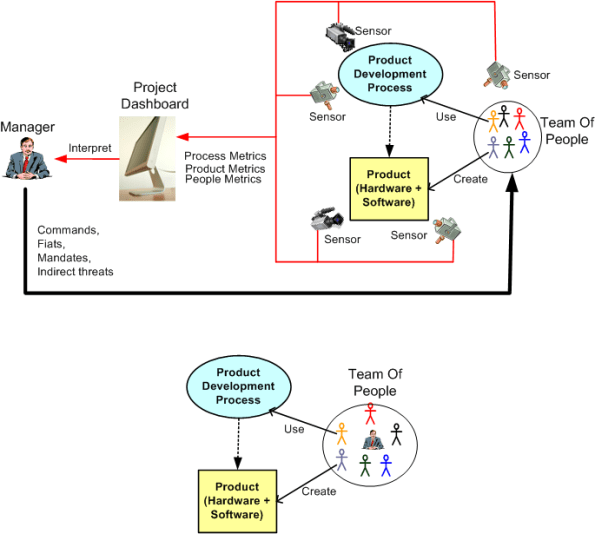

The figure below shows two (out of a possibly infinite number of) product development systems. Which one will produce the higher quality, lower cost product in the shortest time? Would a hybrid system be better?

Analysis Vs. Synthesis

Everybody’s an expert analyzer. We slice, we dice, we evaluate and judge everything and everyone around us. “That’s good, that’s bad, you should’ve done it this way”, yada, yada, yada. Analysis is easy, but how many of us are synthesizers and/or integrators, CREATORS?

The problem is that we’re taught to analyze and critique from day one. We start with our parents and we are continually reinforced over time by our education system until we’re rigid adults who know how “things should be”. Over and over, we’re presented with something that already IS (like a circuit drawing, a business case, an a-posteriori historic event), and we are taught how to criticize it and how to vocalize that “it should have been done this way”.

That’s too bad, because we are all born with the innate ability to be synthesizers, integrators, or in summary, CREATORS. We were born of creation, and thus we are creators. Sadly, we lose touch with that simple fact of life because of our inculcated obsessive infatuation with academic intelligence and the ego-driven desire to know more than “others”. Our western societies reward and glorify left brain “experts” and they shun, or even ridicule, wild-ass innovators and creators (at least until after they’ve died). Creators and innovators are often ostracized by the herd because they shatter the status quo and disorient us from the comfort of our lazy Barca-loungers.

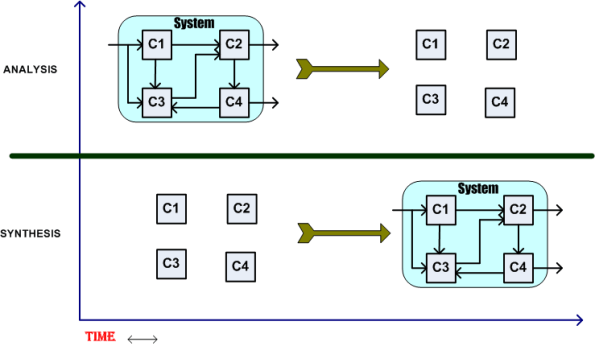

The figure below shows the difference between analysis and synthesis (Cx = component x). In the analysis scenario, we are given something that exists and then we are asked to evaluate it, to determine what’s good/bad about it, and to develop ideas on how to improve upon it. In school, we are taught all kinds of discipline-specific techniques and methods for decomposing and breaking things down into parts so that we can improve upon the existing design. But analyzing the parts separated from the whole doesn’t get us anywhere because the whole includes and transcends the individual parts. By breaking the system apart we obliterate the essence of the system, which is the magic that exists in the interactions between its parts. Blech! Meh! Hawk-tooey!

The synthesis scenario is downright scary. We are given a novel problem, a bounded set of parts (if we’re lucky), and told to “come up with a solution” by the boss. Dooh! Uh, I don’t know how to do that cuz I wasn’t taught that in school. I think I’ll bury my head in the sand and hope that someone else will solve the problem.

Synthesis transcends and includes analysis. You can’t be a good synthesizer without being a good analyzer. You synthesize an initial solution out of intellectual “parts” known to YOU and then you analyze the result. If you aren’t familiar with the problem domain, then you have no “parts” in your repository and you can’t come up with any initial solution candidates. End of story. If you do have experience and knowledge in the problem domain, then you can synthesize an initial solution. You can then iterate upon it, replace bad parts with better parts, and converge on a “good enough” solution without falling into an endless analysis-paralysis loop. During the process of synthesis, you dynamically rearrange structures, envision system behavior, and iterate again until it “intuitively feels right”. All this activity occurs naturally, chaotically, at the speed of thought, and to the chagrin of the masses of Harvard trained MBAs, in no premeditated or preplanned manner.

Ultimately, in order to share your creative results with others, you must expose your baby for “analysis”. Now THAT, is the hard part. For most people, it’s so hard to expose their creations to ridicule and humiliation that they keep their creations to themselves for fear of annihilation by the herd of analyzers standing on the sideline ready to attack and criticize. It sux to be a synthesizer, and that’s why the vast majority of people, even though every one of them is innately capable of synthesizing, fail to live fulfilling lives. Bummer!

The UML And The SysML

Introduction

The Unified Modeling Language (UML) and Systems Modeling Language (SysML) are two industry standard tools (not methodologies) that are used to visually express and communicate system structure and behavior.

The UML was designed by SW-weenies for SW-weenies. Miraculously, three well-known SW methodologists (affectionately called the three amigos) came together in koom-bah-yah fashion and synthesized the UML from their own separate pet modeling notations. Just as surprising is the fact that the three amigos subsequently donated their UML work to the Object Management Group (OMG) for standardization and evolution. Unlike other OMG technologies that were designed by large committees of politicians, the UML was originally created by three well-meaning and dedicated people.

After “seeing the light” and recognizing the power of the UML for specifying/designing large, complex, software-electro-mechanical systems, the system engineering community embraced the UML. But they found some features lacking or missing altogether. Thus, the SysML was born. Being smart enough not to throw the baby out with the bathwater, the system engineering powers-that-be modified and extended the UML in order to create the SysML. The hope was that broad, across-the-board adoption of the SysML-UML toolset pair would enable companies to more efficiently develop large complex multi-technology products.

The MLs are graphics intensive and the “diagram” is the fundamental building block of them both. The most widely used graphics primitives are simple enough to use for quick whiteboard sketching, but they’re also defined rigorously enough (hundreds of pages of detailed OMG specifications) to be “compiled” and checked for syntax and semantic errors – if required and wanted.

The Diagrams

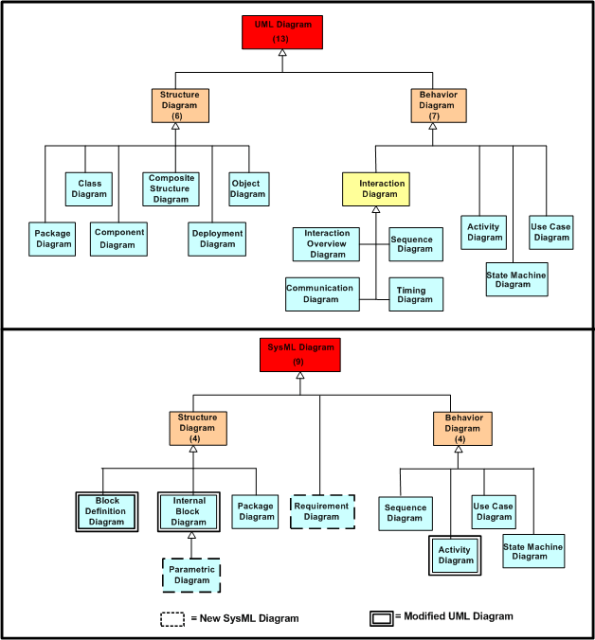

The stacked UML class diagram below shows the structural relationships among the diagrams in the UML and SysML, respectively. The subsequent table lists all the diagrams in the two MLs and it also maps which diagram belongs to which ML.

In the table, the blue, orange and green marked diagrams are closely related but not equal. For instance, the SysML block definition diagram is equivalent to, but replaces, the UML class diagram.

Diagrams are created by placing graphics primitives onto a paper or electronic canvas and connecting entities together with associations. Text is also used in the construction of ML diagrams but it plays second fiddle to graphics. Both MLs have subsets of diagrams designed to clearly communicate the required structure(s) of a system and the required behavior(s) of the system.

The main individual star performers in the UML and the SysML are the “class” and (the more generic) “block”, respectively. Closely coupled class or block clusters can be aggregated and abstracted “upwards” into components, packages, and sub-systems, or they can be de-abstracted downwards into sub-classes, parts, and atomic types. A rich palette of line-based connectors can be employed to model various types of static and dynamic associations and relationships between classes and blocks.

A conundrum?

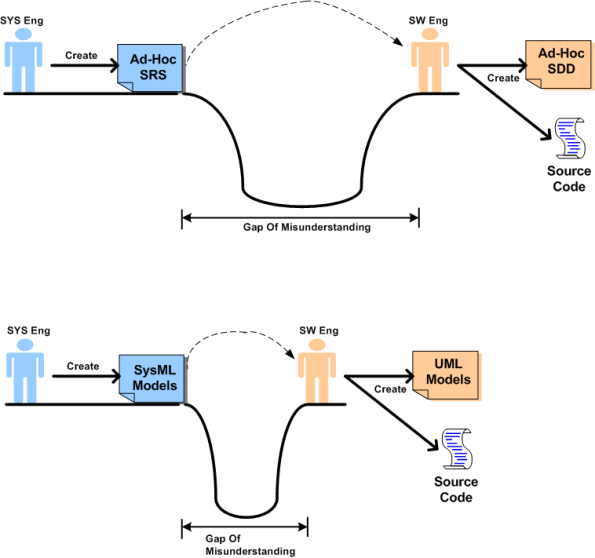

In the pre-ML days of large system specification and design, ad-hoc text and structured analysis/design tools were predominantly used to communicate requirements, architectures, and designs between engineering disciplines. The use of these ad-hoc tools often created large gaps in understanding between the engineering disciplines, especially between systems and software engineers. These misunderstandings led to inefficient and error-prone development. The figure below shows how the SysML and UML toolset pair is intended to close this “gap of misunderstanding”.

Minimization of the gap-of-misunderstanding leads to shorter *and* higher quality product development projects. Since the languages are large and feature rich, learning to apply the MLs effectively is not a trivial endeavor. You can’t absorb all the details and learn them overnight.

Cheapskate organizations who trivialize the learning process and don’t invest in their frontline engineering employees will get what they deserve if they mandate ML usage but don’t pay for extensive institution-wide training. Instead of closing the gap-of-misunderstanding, the gap may get larger if the MLs aren’t applied effectively. One could end up with lots of indecipherable documentation that slows development and makes maintenance more of a nightmare than if the MLs were not used at all (the cure is worse than the disease!). Just because plenty of documentation gets created, and it looks pretty, doesn’t guarantee that the initial product development and subsequent maintenance processes will occur seamlessly. Also, as always in any complex product development, good foundational technical documentation cannot replace the value and importance of face-to-face interaction. Having a minimal, but necessary and sufficient document set augments and supplements the efficient exchange of face-to-face tacit communication.

Closing Note: The “gap-of-misunderstanding” is a derivative of W. L. Livingston’s “gulf-of-human-intellect” (GOHI) which can be explored in Livingston’s book “Have Fun At Work”.

How And What

“Never tell people how to do things. Tell them what to do and they will surprise you with their ingenuity.” – George S. Patton

One of my pet peeves is when a bozo manager dictates the how, but has no clue of the what – which he/she is supposed to define:

“Here’s how you’re gonna create the what (whatever the what ends up being): You will coordinate Fagan inspections of your code, write code using Test First Design, comply with the corpo coding standards, use the corpo UML tool, run the code through a static analyzer, write design and implementation documents using the corpo templates, yada-yada-yada. I don’t know or care what the software is supposed to do, what type of software it is, or how big it will end up being, but you shall do all these things by this XXX date because, uh, because uh, be-be-because that’s the way we always do it. We’re not Burger King, so you can’t have it your way.”

Focusing on the means regardless of what the ends are envisioned to be is like setting a million monkeys up with typewriters and hoping they will produce a Shakespearean masterpiece. It’s a failure of leadership. On the other hand, allowing the ends to be pursued without some constraints on the means can lead to unethical behavior. In both cases, means-first or ends-first, a crappy outcome may result.

On the projects where I was lucky to be anointed the software lead in spite of not measuring up to the standard cookie cutter corpo leadership profile, I leaned heavily toward the ends-first strategy, but I tried to loosely constrain the means so as not to suffocate my team: “eliminate all compiler warnings, code against the IDD, be consistent with your coding style, do some kind of demonstrable unit and integration testing and take notes on how you did it.”