Archive

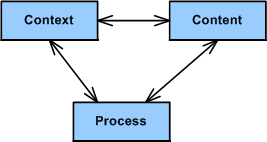

CCP

Relax right wing meanies, it’s not CCCP. It’s CCP, and it stands for Context, Content, and Process. Context is a clear but not necessarily immutable definition of what’s in and what’s out of the problem space. Content is the intentionally designed static structure and dynamic behavior of the socio-technical solution(s) to be applied in an attempt to solve the problem. Process is the set of development activities, tasks, and toolboxes that will be used to pre-test (simulate or emulate), construct, integrate, post-test, and carefully introduce the solution into the problem space. Like the other well-known trio, schedule-cost-quality, the three CCP elements are intimately coupled and inseparable. Myopically focusing on the optimization of one element and refusing to pay homage to the others degrades the performance of the whole.

I first discovered the holy trinity of CCP many years ago by probing, sensing, and interpreting the systems work of John Warfield via my friend, William Livingston. I’ve been applying the CCP strategy for years to technical problems that I’ve been tasked to solve.

You can start using the CCP problem solving process by diving into any of the three pillars of guidance. It’s not a neat, sequential, step-by-step process like those documented in your corpo standards database (that nobody follows but lots of experts are constantly wasting money/time to “improve”). It’s a messy, iterative, jagged, mistake discovering and correcting intellectual endeavor.

I usually start using CCP by spending a fair amount of time struggling to define the context; bounding, iterating and sketching fuzzy lines around what I think is in and what is out of scope. Next, I dive into the content sub-process; using the context info to conjure up solution candidates and simulate them in my head at the speed of thought. The first details of the process that should be employed to bring the solution out of my head and into the material world usually trickle out naturally from the info generated during the content definition sub-process. Herky-jerky, iterative jumping between CCP sub-processes, mental simulation, looping, recursion, and sketching are key activities that I perform during the execution of CCP.

What’s your take on CCP? Do you think it’s generic enough to cover a large swath of socio-technical problem categories/classes? What general problem solving process(es) do you use?

A Costly Mistake?

Assume the following:

- Your flagship software-intensive product has had a long and successful 10 year run in the marketplace. The revenue it has generated has fueled your company’s continued growth over that time span.

- In order to expand your market penetration and keep up with new customer demands, you have no choice but to re-architect the hundreds of thousands of lines of source code in your application layer to increase the product’s scalability.

- Since you have to make a large leap anyway, you decide to replace your homegrown, non-portable, non-value adding but essential, middleware layer.

- You’ve diligently tracked your maintenance costs on the legacy system and you know that it currently costs close to $2M per year to maintain (bug fixes, new feature additions) the product.

- Since your old and tired home grown middleware has been through the wringer over the 10 year run, most of your yearly maintenance cost is consumed in the application layer.

The figure below illustrates one “view” of the situation described above.

Now, assume that the picture below models where you want to be in a reasonable amount of time (not too “aggressive”) lest you kludge together a less maintainable beast than the old veteran you have now.

Cost and time-wise, the graph below shows your target date, T1, and your maintenance cost savings bogey, $75K per month. For the example below, if the development of the new product incarnation takes 2 years and $2.25M, your net savings will start accruing 2.5 years after the “switchover” date T1, once the development investment has been recouped.
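The arithmetic behind that 2.5 year figure can be sketched in a few lines. This is only a rough sketch: it assumes the full $75K per month savings kicks in immediately at T1 and stays constant, and the function name is made up for illustration.

```python
def months_to_break_even(development_cost, monthly_savings):
    """Months after switchover until cumulative savings repay the development cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive")
    return development_cost / monthly_savings


# Figures from the example: $2.25M development cost, $75K/month savings bogey.
months = months_to_break_even(2_250_000, 75_000)
print(f"Break-even: {months:.0f} months ({months / 12:.1f} years) after T1")
```

If the new middleware pushes the monthly savings below the bogey (or makes it negative), the break-even point slides out accordingly, which is exactly the risk the rest of this post is about.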

Now comes the fun part of this essay. Assume that:

- Some other product development group in your company is 2 years into the development of a new middleware “candidate” that may or may not satisfy all of your top four prioritized goals (as listed in the second figure up the page).

- This new middleware layer is larger than your current middleware layer and complicated with many new (yet at the same time old) technologies with relatively steep learning curves.

- Even after two years of consumed resources, the middleware is (surprise!) poorly documented.

- Except for a handful of fragmented and scattered powerpoint files, programming and design artifacts are non-existent – showing a lack of empathy for those who would want to consider leveraging the 2 year company investment.

- The development process that the middleware team is using is fairly unstructured and unsupervised – as evidenced by the lack of project and technical documentation.

- Since they’re heavily invested in their baby, the members of the development team tend to get defensive when others attempt to probe into the depths of the middleware to determine if the solution is the right fit for your impending product upgrade.

How would you mitigate the risk that your maintenance costs would go up instead of down if you switched over to the new middleware solution? Would you take the middleware development team’s word for it? What if someone proposed prototyping and exploring an alternative solution that he/she thinks would better satisfy your product upgrade goals? In summary, how would you decrease the chance of making a costly mistake?

My OSEE Experience

Intro

A colleague at work recently pointed out the existence of the Eclipse org’s Open System Engineering Environment (OSEE) project to me. Since I love and use the Eclipse IDE regularly for C++ software development, I decided to explore what the project has to offer and what state it is in.

The OSEE is in the “incubation” stage of development, which means that it is not very mature and it may require a lot more work before it has a chance of being accepted by a critical mass of users. On the project’s main page, the following sentences briefly describe what the OSEE is:

The Open System Engineering Environment (OSEE) project provides a tightly integrated environment supporting lean principles across a product’s full life-cycle in the context of an overall systems engineering approach. The system captures project data into a common user-defined data model providing bidirectional traceability, project health reporting, status, and metrics which seamlessly combine to form a coherent, accurate view of a project in real-time.

The feature list is as follows:

- End-to-end traceability

- Variant configuration management

- Integrated workflows and processes

- A comprehensive issue tracking system

- Deliverable document generation

- Real-time project tracking and reporting

- Validation and verification of mission software

I don’t know about you, but the OSEE sounds more like an integrated project management tool than a system engineering toolset that facilitates requirements development and system design. Perhaps the product is promoted ambiguously on purpose, to draw in both system engineers and program managers?

The OSEE is not a design-by-committee, fragmented quagmire; it’s a derivation of a real system engineering environment employed for many years by Boeing during the development of a military helicopter for the US government. Like IBM was to the Eclipse framework, Boeing is to the OSEE.

“Standardization without experience is abhorrent.” – Bjarne Stroustrup

Download, Install, Use

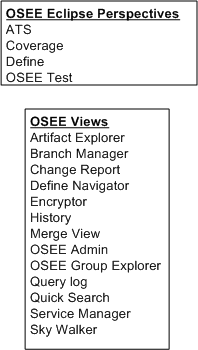

The figure below shows a simple model of the OSEE architecture. The first thing I did was download and install the (19) Eclipse OSEE plugins and I had no problem with that. Next, I tried to install and configure the required PostgreSQL database and the OSEE application and arbitration servers. After multiple frustrating tries, and several re-reads of the crappy install documentation, I said WTF! and gave up. I did, however, open and explore various OSEE related Eclipse perspectives and views to try and get a better feel for what the product can do.

As shown in the figure below, the OSEE currently renders four user-selectable Eclipse perspectives and thirteen views. Of course, whenever I opened a perspective (or a view within a perspective) I was greeted with all kinds of errors because the OSEE back end kludge was not installed correctly. Thus, I couldn’t create or manipulate any hypothetical “system engineering” artifacts to store in the project database.

Conclusion

As you’ve probably deduced, I didn’t get much out of my experience of trying to play around with the OSEE. Since it’s still in the “incubation” stage of development and it’s free, I shouldn’t be too harsh on it. I may revisit it in the future, but after looking at the OSEE perspective/view names above and speculating about their purposes, I’ve pre-judged the OSEE to be a heavyweight bureaucrat’s dream and not really useful to a team of engineers. Bummer.

Exploring Processor Loading

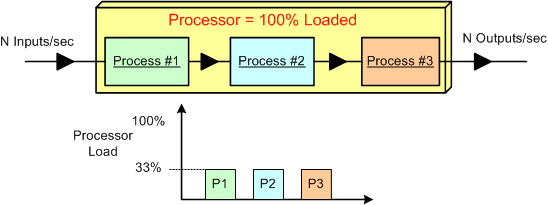

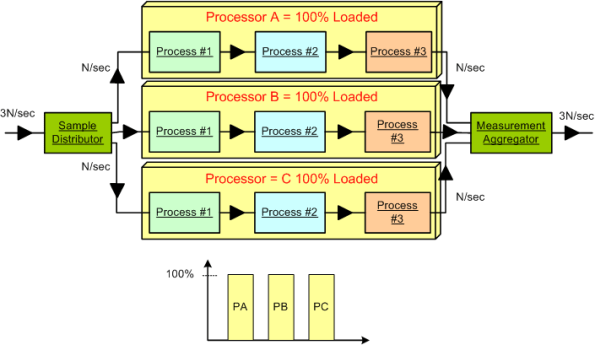

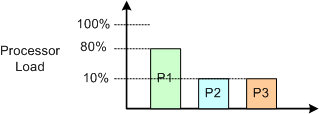

Assume that we have a data-centric, real-time product that: sucks in N raw samples/sec, does some fancy proprietary processing on the input stream, and outputs N value-added measurements/sec. Also assume that for N, the processor is 100% loaded and the load is equally consumed (33.3%) by three interconnected pipeline processes that crunch the data stream.

Next, assume that a new, emerging market demands a system that can handle 3*N input samples per second. The obvious solution is to employ a processor that is 3 times as fast as the legacy processor. Alternatively (if the nature of the application allows it to be done), the input data stream can be split into thirds, the pipeline can be cloned into three parallel channels allocated to 3 processors, and the output streams can be aggregated together before final output. Both the distributor and the aggregator can be allocated to a fourth system processor or their own processors. The hardware costs would roughly quadruple, the system configuration and control logic would increase in complexity, but the product would theoretically solve the market’s problem and produce a new revenue stream for the org. Instead of four separate processor boxes, a single multi-core (>= 4 CPUs) box may do the trick.
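Here’s a back-of-the-envelope sketch of the load arithmetic for the cloned-pipeline option. It assumes that load scales linearly with the input rate and it ignores the distributor/aggregator overhead entirely; both are simplifying assumptions of mine, and the function name is hypothetical.

```python
def per_cpu_load(stage_loads, rate_multiplier, channels):
    """Load (percent of one legacy-speed CPU) on each channel's processor.

    stage_loads: load of each pipeline stage at the baseline rate N, in percent.
    rate_multiplier: new input rate as a multiple of N.
    channels: number of parallel pipeline clones, one processor per clone.
    """
    total_at_new_rate = sum(stage_loads) * rate_multiplier
    return total_at_new_rate / channels


baseline = [100 / 3] * 3  # three stages, each eating a third of the CPU at rate N

# One legacy CPU at 3*N is hopelessly overloaded (roughly 300%).
print(round(per_cpu_load(baseline, rate_multiplier=3, channels=1)))

# Three cloned channels bring each CPU back to roughly 100%.
print(round(per_cpu_load(baseline, rate_multiplier=3, channels=3)))
```

Note that three channels only get each processor back to where the legacy system already was: fully loaded, with no headroom.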

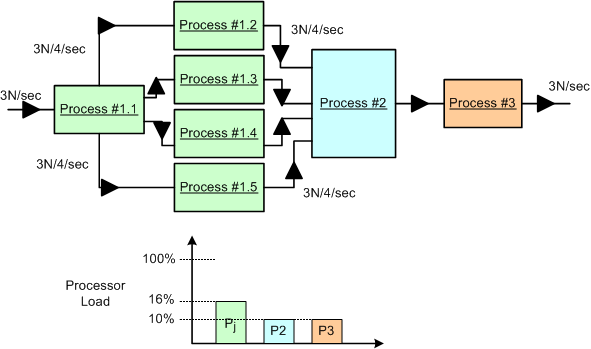

We’re not done yet. Now assume that in the current system, process #1 consumes 80% of the processor load and, because of input sample interdependence, the input stream cannot be split into 3 parallel streams. D’oh! What do we do now?

One approach is to dive into the algorithmic details of the P1 CPU hog and explore parallelization options for the beast. Assume that we are lucky and we discover that we are able to divide and conquer the P1 oinker into 5 equi-hungry sub-algorithms as shown below. In this case, assuming that we can allocate each process to its own CPU (multi-core or separate boxes), then we may be done solving the problem at the application layer. No?
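The same kind of rough arithmetic can be applied to the divide-and-conquer case. In this sketch the 80% load for P1 comes from the text, but the even 10%/10% split of the remainder between P2 and P3 is my assumption, as is linear scaling with the input rate. Communication overhead between the sub-algorithms is ignored, and the function name is hypothetical.

```python
def loads_after_split(stage_loads, rate_multiplier, split_stage, parts):
    """Per-CPU loads when one stage is divided into equal sub-algorithms.

    Every stage (and every sub-algorithm of the split stage) gets its own CPU.
    """
    loads = []
    for index, load in enumerate(stage_loads):
        scaled = load * rate_multiplier
        if index == split_stage:
            # Spread the hog evenly over `parts` CPUs.
            loads.extend([scaled / parts] * parts)
        else:
            loads.append(scaled)
    return loads


# P1 = 80% (from the text); P2 = P3 = 10% (assumed).
loads = loads_after_split([80, 10, 10], rate_multiplier=3, split_stage=0, parts=5)
print(loads)              # [48.0, 48.0, 48.0, 48.0, 48.0, 30, 30]
print(max(loads) <= 100)  # True
```

Under those assumptions every one of the seven CPUs stays under 100%, but the numbers say nothing about data dependencies or the cost of shuttling samples between the processes.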

Do you detect any major conceptual holes in this blarticle?

Application Infrastructure

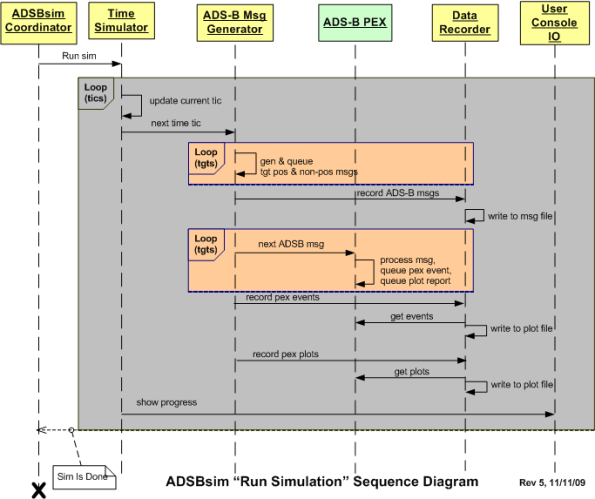

The most recent C++ application that I wrote is named “ADSBsim”. The figure below shows some source code level metrics that characterize the program. The metrics are presented in two categories: global and infrastructure. Infrastructure code includes all of the low level, non-application layer logic. For this application, the ratio of infrastructure code to total code is 1725/2784*100 = 62%. Thus, over half of the application is comprised of unglamorous infrastructure scaffolding.

Unlike the application layer logic, which doesn’t get neglected up front, the amount of infrastructure code to be developed is hardly ever known to any degree of certainty at the beginning of a new project. Thus, in addition to the crappy guesstimates that you usually give for the application layer, you should add an equivalent amount of effort to cover the well-hidden infrastructure logic. Instead of multiplying your guesstimate by the classic factor of “2” (rule of thumb) to accommodate uncertainty, you should consider multiplying it by “4” to get a half-way reasonable result. If your org managers mandate schedules from above and always ignore your input, then never mind the advice in this post. You’re hosed no matter WTF you do :^)

BTW, I initially estimated 2 months to complete the ADSBsim project. It ended up taking 4 months instead of the 8 recommended by the technique above. One could interpret this as successfully finishing the project well under budget and within schedule. On the other hand, if one “thought” that it should’ve only taken two months to complete, then my performance can be interpreted as being horrendously below par.
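For the record, the numbers in this post boil down to the following. This is just a sketch of the rule of thumb, not a real estimation method; the function names are made up.

```python
def infrastructure_ratio(infra_lines, total_lines):
    """Percentage of the code base that is infrastructure scaffolding."""
    return 100 * infra_lines / total_lines


def padded_estimate(raw_estimate_months, factor=4):
    """Multiply the gut-feel guesstimate by the suggested fudge factor."""
    return raw_estimate_months * factor


print(round(infrastructure_ratio(1725, 2784)))  # 62
print(padded_estimate(2))                       # 8 (months); ADSBsim actually took 4
```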

Machine Age Thinking, Systems Age Thinking

In Ackoff’s Best, Mr. Russell Ackoff states the following:

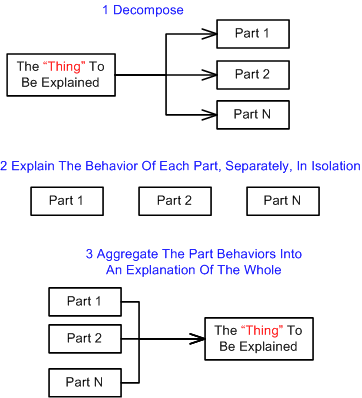

…Machine-Age thinking: (1) decomposition of that which is to be explained, (2) explanation of the behavior or properties of the parts taken separately, and (3) aggregating these explanations into an explanation of the whole. This third step, of course, is synthesis.

The figure below models the classical machine age, mechanistic thinking process described by Ackoff. The problem with this antiquated method of yesteryear is that it doesn’t work very well for systems of any appreciable complexity – especially large socio-technical systems (every one of which is mind-bogglingly complex). During the decomposition phase, the interactions between the parts that animate the “thing to be explained” are lost in the freakin’ ether. Even more importantly, the external environment in which the “thing to be explained” lives and interacts is nowhere to be found. This is a huge mistake because the containing environment always has a profound effect on the behavior of the system as a whole.

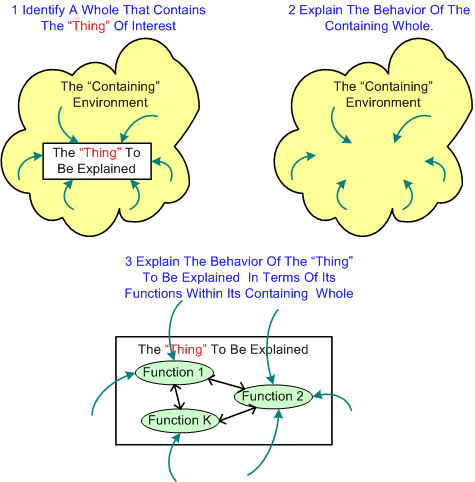

Mr. Ackoff professes that the antidote to mechanistic thinking is… systems thinking (duh!):

In the systems approach there are also three steps:

1. Identify a containing whole (system) of which the thing to be explained is a part.

2. Explain the behavior or properties of the containing whole.

3. Then explain the behavior or properties of the thing to be explained in terms of its role(s) or function(s) within its containing whole.

Note that in this sequence, synthesis precedes analysis.

The figure below graphically depicts the systems thinking process. Note that the relationships between the “thing to be explained” and its containing whole are first class citizens in this mode of thinking.

One of the primary reasons why we seek to understand systems is so that we can diagnose and solve problems that arise within established systems; or to design new systems to solve problems that need to be controlled or ameliorated. By applying the wrong thinking style to a system problem, the cure often ends up being worse than the disease. D’oh!

Abstraction

Jeff Atwood, of “Coding Horror” fame, once said something like “If our code didn’t use abstractions, it would be a convoluted mess.” As software projects get larger and larger, using more and more abstraction technologies is the key to creating robust and maintainable code.

Using C++ as an example language, the figure below shows the advances in abstraction technologies that have taken place over the years. Each step up the chain was designed to make large scale, domain-specific application development easier and more manageable.

The relentless advances in software technology designed to keep complexity in check are a double-edged sword. Unless one learns and practices using the new abstraction techniques in a sandbox, haphazardly incorporating them into the code can do more damage than good.

One issue is that when young developers are hired into a growing company to maintain legacy code that doesn’t incorporate the newer complexity-busting language features, they become accustomed to the old and unmaintainable style that is encrusted in the code. Because of schedule pressure and no company time allocated to experiment with and learn new language features, they shoehorn in changes without employing any of the features that would reduce the technical debt incurred over years of growing the software without any periodic refactoring. The problem is exacerbated by not having a set of regression tests in place to ensure that nothing gets broken by any major refactoring effort. Bummer.

Requirements Before, Design After

The figure below depicts a UML sequence diagram of the behavior of a simulator during the execution of a user defined scenario. Before the code has been written and tested, one can interpret this diagram as a set of interrelated behavioral requirements imposed on the software. After the code has been written, it can be considered a design artifact that reflects what the code does at a higher level of abstraction than the code itself.

Interpretations like this give credence to Alan Davis’s brilliant quote:

One man’s requirement is another man’s design

Here’s a question. Do you think that specifying the behavior requirements in the diagram would have been best conveyed via a user story or a use case description?

The Requirements Landscape

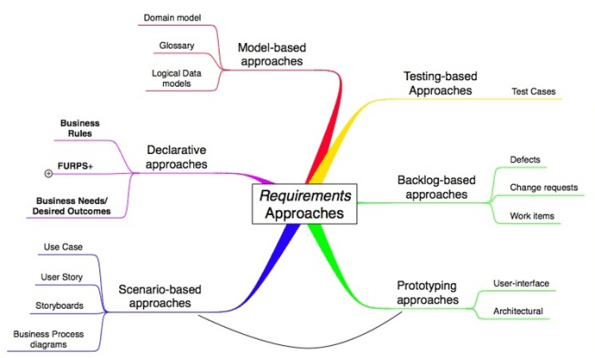

Kurt Bittner, of Ivar Jacobson International, has written a terrific white paper on the various approaches to capturing requirements. The mind map below was copied and pasted from Kurt’s white paper.

In his paper, Bittner discusses the pluses and minuses of each of his defined approaches. For the text-based “declarative” approaches, he states the pluses as: “they are familiar” and “little specialized training” is needed to write them. Bittner states the minuses as:

- They are “poor at specifying flow behavior”

- It’s “hard to connect related requirements”

IMHO, as systems get more and more complex, these shortcomings lead to bigger and bigger schedule, cost, and quality shortfalls. Yet, despite the advances in requirements specification methodologies nicely depicted in Bittner’s mind map, defense/aerospace contractors and their bureaucratic government customers seem to be forever married to the text-based “shall” declarative approach of yesteryear. Dinosaur mindsets, the lack of will to invest in corpo-wide training, and expensive past investments in obsolete and entrenched text-based requirements tools have prevented the newer techniques from gaining much traction. Do you think this encrusted way of specifying requirements will change anytime soon?

Black And White Binary Worlds

In this interview with the legendary Grady Booch (InformIT: Grady Booch on Design Patterns, OOP, and Coffee), Larry O’Brien had this exchange with one of the original pioneers of object oriented design and the UML:

Larry: Joel Spolsky said:

“Sometimes smart thinkers just don’t know when to stop, and they create these absurd, all-encompassing, high-level pictures of the universe that are all good and fine, but don’t actually mean anything at all. These are the people I call Architecture Astronauts. It’s very hard to get them to write code or design programs, because they won’t stop thinking about Architecture.”

He also said:

“Sometimes, you’re on a team, and you’re busy banging out the code, and somebody comes up to your desk, coffee mug in hand, and starts rattling on…And your eyes are swimming, and you have no friggin’ idea what this frigtard is talking about,….and it’s going to crash like crazy and you’re going to get paged at night to come in and try to figure it out because he’ll be at some goddamn “Design Patterns” meetup.”

Spolsky seems to represent a real constituency that is not just dismissive but outright hostile to software development approaches that are not code-centric. What do you say to people who are skeptical about the value of work products that don’t compile?

Grady: You may be surprised to hear that I’m firmly in Joel’s camp. The most important artifact any development team produces is raw, running, naked code. Everything else is secondary or tertiary. However, that is not to say that these other things are inconsequential. Rather, our models, our processes, our design patterns help one to build the right thing at the right time for the right stakeholders.

I think that Grady’s spot on in that both the code-centric camp and the architecture-centric camp tend to throw out what’s good from the other camp. It’s classic binary extremism where things are either black or white to the participants and the color grey doesn’t exist in their minds. Once a rigid and unwavering mindset is firmly established, the blinders are put on and all learning stops. I try to keep this in mind all the time, but the formation of a black/white mindset is hard to detect. It creeps up on you.