Archive

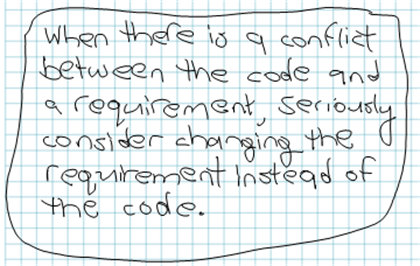

Software Development Cost Saving Tip

It’s easier (read as lower cost) to retest existing code with an “updated” requirement that was wrong or impossible to satisfy than it is to change and retest code in order to match an “unquestioned” requirement.

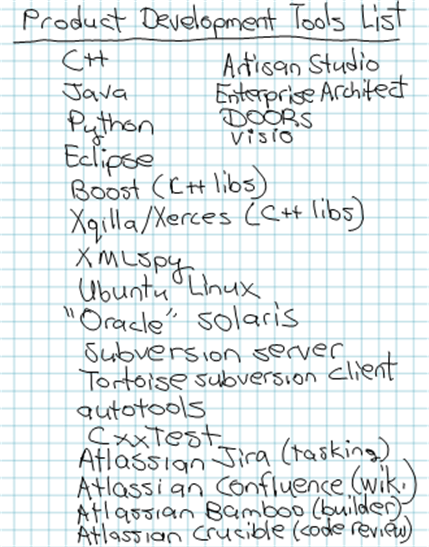

Tools List

Out of the blue, I tried to list all of the tools that we are currently using on our distributed software system development project:

What do you think? Too many tools? Too few tools? Redundant tools? Missing tools? What tools do you use?

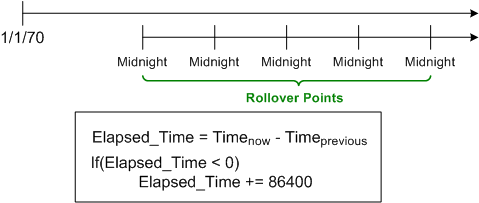

An Epoch Mistake

Let’s start this hypothetical story off with some framing assumptions:

Assume (for a mysterious historical reason nobody knows or cares to explore) that timestamps in a legacy system are always measured in “seconds relative to midnight” instead of “seconds relative to the Unix epoch of 1/1/1970”.

Assume that the system computes many time differences at a six-figure Hz rate during operation to fulfill its mission. Because “seconds relative to midnight” rolls over from 86399 to 0 every 24 hours, the time difference logic has to detect (via a disruptive “if” statement) and compensate for this rollover, lest its output be logically “wrong” once a day.
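To make the rollover annoyance concrete, here is a minimal sketch of the two flavors of time difference logic. The function names are made up for illustration, and the midnight version assumes that the two timestamps are less than 24 hours apart and that `later` really was taken after `earlier`.

```python
SECONDS_PER_DAY = 86_400


def diff_since_midnight(later: float, earlier: float) -> float:
    """Elapsed seconds between two "seconds since midnight" timestamps.

    Hypothetical helper: assumes less than 24 hours elapsed between the two
    readings and that `later` was taken after `earlier`.
    """
    delta = later - earlier
    if delta < 0:
        # `later` wrapped past midnight (86399 -> 0), so add the day back in.
        delta += SECONDS_PER_DAY
    return delta


def diff_since_epoch(later: float, earlier: float) -> float:
    """Elapsed seconds between two "seconds since the Unix epoch" timestamps."""
    # No rollover to detect, so no branch is needed.
    return later - earlier
```

The branch in the first function is the “if” statement that gets paid for on every single difference computation.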

Assume that a “seconds relative to the Unix epoch of 1/1/1970” library (e.g. Boost.Date_Time) satisfies the system’s dynamic range and precision requirements.

Assume that the design of a next generation system is underway and all the time fields in the messages exchanged between the system components are still mysteriously specified as “seconds since midnight” – even though it’s known that the added CPU cycles and annoyance of rollover checking could be avoided with a stroke of the pen.

Assume that the component developers, knowing that they can dispense with the silly rollover checking:

- convert each incoming time field into “seconds since the Unix epoch”,

- use the converted values to perform their internal time difference computations without having to check/compensate for midnight rollover,

- convert back to “seconds since midnight” on output as required.
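The convert-in, compute, convert-out dance in the list above might look something like the sketch below. It is only an illustration under stated assumptions: the function names are hypothetical, midnight is taken to be UTC midnight, leap seconds are ignored (as Unix time does), and the receiver's own clock is assumed to be within 12 hours of the sender's so that the missing day can be guessed.

```python
SECONDS_PER_DAY = 86_400


def to_epoch(seconds_since_midnight: float, receiver_now_epoch: float) -> float:
    """Convert an incoming "seconds since midnight" field to epoch seconds.

    Hypothetical helper: the message carries no date, so the day is inferred
    from the receiver's clock (assumed to be within 12 hours of the sender's).
    """
    midnight = receiver_now_epoch - (receiver_now_epoch % SECONDS_PER_DAY)
    candidate = midnight + seconds_since_midnight
    # Pick the day that places the timestamp closest to the receiver's clock.
    if candidate - receiver_now_epoch > SECONDS_PER_DAY / 2:
        candidate -= SECONDS_PER_DAY
    elif receiver_now_epoch - candidate > SECONDS_PER_DAY / 2:
        candidate += SECONDS_PER_DAY
    return candidate


def to_since_midnight(epoch_seconds: float) -> float:
    """Convert epoch seconds back to "seconds since midnight" for output."""
    return epoch_seconds % SECONDS_PER_DAY


# Usage: two messages that straddle midnight, received 5 seconds after it.
now = 19_000 * SECONDS_PER_DAY + 5
t_before = to_epoch(86_395, now)  # sent 5 seconds before midnight
t_after = to_epoch(5, now)        # sent 5 seconds after midnight
elapsed = t_after - t_before      # plain subtraction, no rollover check
```

Note that the day-guessing branch has not vanished; it has only moved to the input boundary, which is one more reason the conversions are worth specifying away.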

Assume that you know what the next two logical steps are: 1) change the specification of all the time fields in the messages exchanged between the system components from the midnight reference origin to the Unix epoch origin, 2) remove the unnecessary input/output conversions:

Suffice it to say, in orgs where the culture forbids the admission of mistakes (which implicates most orgs?) because the mistake-maker(s) may “look fallible”, next steps like this are rarely taken. That’s one of the reasons why old product warts are propagated forward and new warts are allowed to sprout up in next generation products.

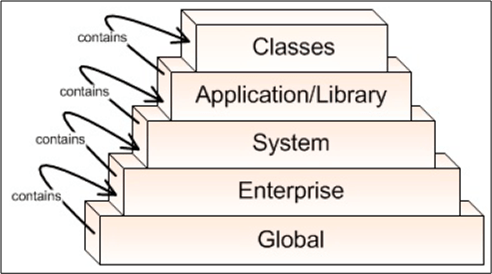

Levels, Components, Relationships

In the Crosstalk Journal, Michael Tarullo has written a nice little article on documenting software architectures. Using the concept of abstract levels (a necessary, but not sufficient, tool for understanding complex systems) and one UML component diagram per level, he presents a lightweight process for capturing big software system architecture decisions out of the ether.

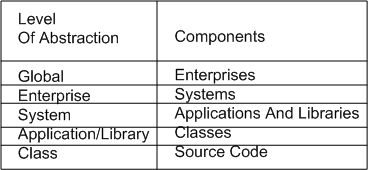

Levels Of Abstraction

Mr. Tarullo’s 5 levels of abstraction are shown below (minor nit: BD00 would have flipped the figure upside down and shown the most abstract level (“Global”) at the top).

Components Within A Level

Since “architecture” focuses on the components of a system and the relationships between them, Michael defines the components of concern within each of his levels as:

(minor nit: because the word “system” is too generic and overused, BD00 would have chosen something like “function” or “service” for the third level instead).

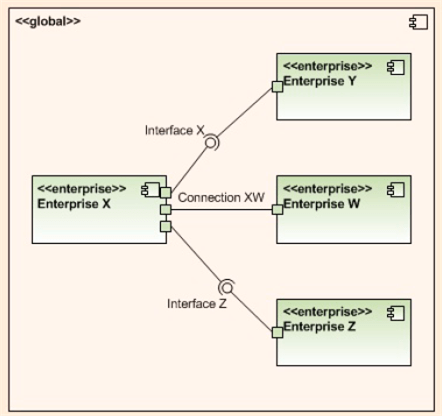

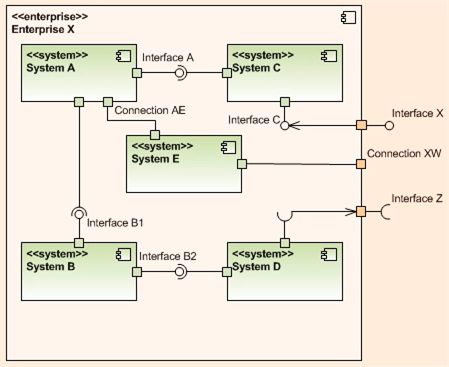

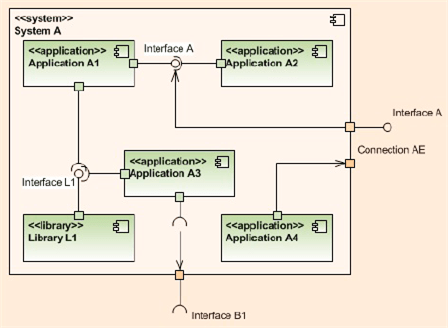

Relationships

Within a given level of abstraction, Mr. Tarullo uses a UML component diagram with ports and ball/socket pairs to model connections and interfaces (provides/requires) and to bind the components together. He also maintains vertical system integrity by connecting adjacent levels together via ports/balls/sockets.

The three UML component diagrams below, one for each of the top, err bottom, three levels of abstraction in his taxonomy, nicely illustrate his lightweight levels plus UML component diagram approach to software architecture capture.

But what about the next 2 levels in the 5 level hierarchy: the Application/Library and Classes levels of the architecture? Mr. Tarullo doesn’t provide documentation examples for those, but it follows that UML class and sequence diagrams can be used for the Application/Library level, while activity diagrams and state machine diagrams can be good choices for the atomic class level.

Providing and vigilantly maintaining a minimal, lightweight stack of UML “blueprint” diagrams like these (supplemented by minimal “hole-filling” text) seems like a lower cost and more effective way to maintain system integrity, visibility, and exposure to critical scrutiny than generating a boatload of DOD template mandated write-once-read-never text documents, no?

Alas, if:

- you and your borg don’t know or care to know UML,

- you and your borg don’t understand or care to understand how to apply the complexity and ambiguity busting concepts of “layering and balancing”,

- your “official” borg process requires the generation of write-once-read-never, War And Peace sized textbook monstrosities,

then you, your product, your customers, and your borg are hosed and destined for an expensive, conflict filled future. (D’oh! I hate when that happens.)

B and S == BS

About a year ago, after a recommendation from management guru Tom Peters, I read Sidney Dekker’s “Just Culture”. I mention this because Nancy Leveson dedicates a chapter to the concept of a “just culture” in her upcoming book “Engineering A Safer World”.

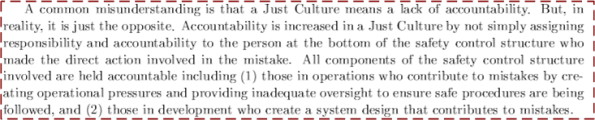

The figure below shows a simple view of the elements and relationships in an example 4-level “safety control structure”. In unjust cultures, when a costly accident occurs, the actions of the low elements on the totem pole, the operator(s) and the physical system, are analyzed to death and the “causes” of the accident are determined.

After the accident investigation is “done”, the following sequence of actions usually occurs:

- Blame and Shame (BS!) are showered upon the operator(s).

- Recommendations for “change” are made to operator training, operational procedures, and the physical system design.

- Business goes back to usual.

- Rinse and repeat.

Note that the level 2 and level 3 elements usually go uninvestigated – even though they are integral, influential forces that affect system operation. So, why do you think that is? Could it be that when an accident occurs, the level 2 and/or level 3 participants have the power to, and do, assume the role of investigator? Could it be that the level 2 and/or level 3 participants, when they don’t/can’t assume the role of investigator, become the “sugar daddies” to a hired band of independent, external investigators?

Scrumming For Dollars

“Systems Engineering with SysML/UML” author Tim Weilkiens recently tweeted:

Tim’s right. Check it out yourself: Scrum Guide – 2011.

Before Tim’s tweet, I didn’t know that “software” wasn’t mentioned in the guide. Initially, I was surprised, but upon further reflection, the tactic makes sense. Scrum creators Ken Schwaber and Jeff Sutherland intentionally left it out because they want to maximize the market for their consulting and training services. Good for them.

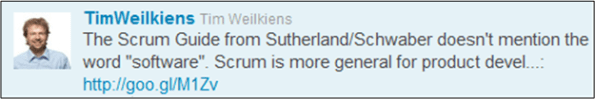

As a byproduct of synthesizing this post, I hacked together a UML class diagram of the Scrum system and I wanted to share it. Whatcha think? A useful model? Errors, omissions? Does it look like Scrum can be applied to the development of non-software products?

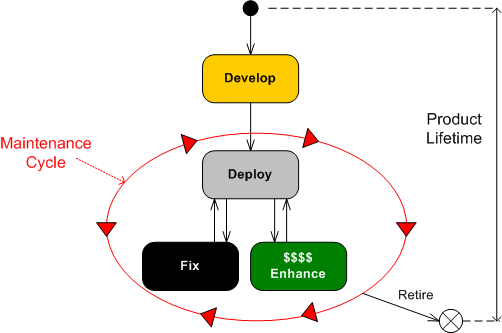

Product Lifetime

The UML state transition diagram below depicts the growth, maturity, and retirement of a large, software-intensive product. There are a bazillion known and unknown factors that influence the longevity and profitability of a system, but the greatest determinant is how effectively the work in the upfront “Develop” state is executed.

If the “Develop” state is executed poorly (underfunded, undermanned, mis-manned, rushed, “pens down”, etc.), then you better hope you’ve got the customer by the balls. If not, you’re gonna spend most of your time transitioning into, and dwelling within, the resource-sucking “Fix” state. If you do have cojones in hand, then you can make the customer(s) pay for the fixes. If you don’t, then you’re hosed. (I hate when that happens.)

If you’ve done a great job in the “Develop” state, then you’re gonna spend most of your time transitioning into and out of the “Enhance” state – keeping your customer delighted and enjoying a steady stream of legitimate revenue. (I love when that happens.)

The challenge is: While you’re in the “Develop” state, how the freak do you know you’re doing a great job of forging a joyous and profitable future? Does being “on schedule” and “under budget” tell you this? Do “checked off requirements/design/code/test reviews” tell you this? Does tracking “earned value” metrics tell you this? What does tell you this? Is it quantifiably measurable?

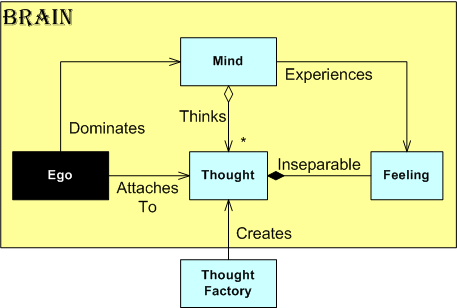

Spiritual UML

Because he is the chosen one, the universe spaketh to BD00 last night: “Go forth my son, and employ the UML to teach the masses the true nature of the mind!”. Fearful of being annihilated if he didn’t comply, BD00 sat down and waited for an infusion of cosmic power to infiltrate his being (to catalyze the process, BD00 primed the pump with a three olive dirty ‘tini and hoped the universe didn’t notice).

With mouse in trembling hand and an empty Visio canvas in front of him, BD00 waited… and waited… and waited. Then suddenly, in mysterious Ouija board fashion, the mouse started moving and clicking away. Click, click, click, click.

After exactly 666 seconds, “revision 0” was 90% done. The secrets of the metaphysical that have eluded the best and brightest over the ages were captured and revealed in the realm of the physical! Lo and behold… the ultimate UML class diagram:

Of course, the “Thought Factory” class is located in China. It efficiently and continuously creates (at rock bottom labor costs) every thought that comes/stays/goes through each of the 7 billion living brains on earth.

The Expense Of Defense

The following “borrowed” snippet from a recent Steve Vinoski QCon talk describes the well worn technique of defensive programming:

Steve is right, no? He goes on to deftly point out the expenses of defensive programming:

Steve is right on the money again, no?

Erlang and the (utterly misnamed) Open Telecom Platform (OTP) were designed to obviate the need for the defensive programming “idiom” style of error detection and handling. The following Erlang/OTP features force programmers to address the unglamorous error detection/handling aspect of a design up front, instead of at the tail end of the project where it’s often considered a nuisance and a second class citizen:

Even in applications where error detection and handling is taken seriously upfront (safety-critical and high availability apps), the time to architect, design, code, and test the equivalent “homegrown” capabilities can equal or exceed the time to develop the app’s “fun” functionality. That is, unless you use Erlang.
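For readers who haven't seen the two styles side by side, here is a rough Python analogy. It is not Erlang and not the OTP API; every name in it is made up, and the `supervise` loop is only a toy stand-in for the general idea of separating the “happy path” worker code from the code that reacts to failures.

```python
from typing import Callable, Iterable, List, Optional, Tuple


def parse_defensively(raw: object) -> Optional[int]:
    """Defensive style: the real work is buried under checks."""
    if raw is None:
        return None
    if not isinstance(raw, str):
        return None
    raw = raw.strip()
    if not raw.isdigit():
        return None
    return int(raw)


def parse(raw: str) -> int:
    """Happy-path style: do the work and let bad input raise."""
    return int(raw)


def supervise(
    worker: Callable[[str], int],
    inputs: Iterable[str],
    max_restarts: int = 3,
) -> Tuple[List[int], int]:
    """Toy supervisor: run the worker, count failures, give up past a limit."""
    results: List[int] = []
    restarts = 0
    for item in inputs:
        try:
            results.append(worker(item))
        except Exception:
            # The worker "crashed" on this item; note it and carry on.
            restarts += 1
            if restarts > max_restarts:
                raise
    return results, restarts


results, restarts = supervise(parse, ["1", "x", "3"])
```

In the second style the error handling policy lives in one place and is decided up front, while the worker stays small enough to read at a glance.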

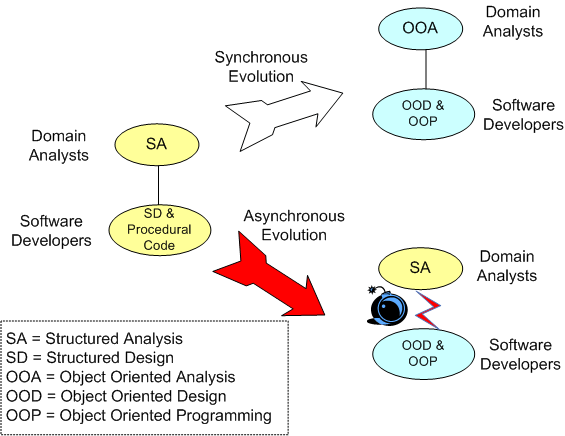

Asynchronous Evolution

In “Engineering A Safer World”, Nancy Leveson asserts that “asynchronous evolution” is a major contributor to costly accidents in socio-technical systems. Asynchronous evolution occurs when one or more parts in a system evolve faster than other parts – causing internal functional and interface mismatches between the parts. As an example, in a friendly fire accident where a pair of F-15 fighters shot down a pair of Black Hawk helicopters, the copter and fighter pilots didn’t communicate by voice because they had different radio technologies on board.

As another example, consider the graphic below. It shows a project team comprised of domain analysts and software developers along with two possible paths of evolution.

Happenstance asynchronous evolution is corrosive to product excellence and org productivity. It underpins much misunderstanding, ambiguity, error, and needless rework. Org controllers that diligently ensure synchronous evolution of the tools/techniques/processes amongst the disciplines that create and build its revenue generating products own a competitive advantage over those that don’t, no?