Archive

Best Actor Award

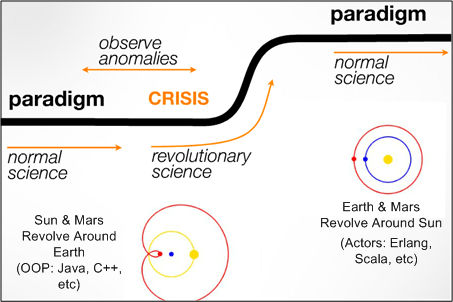

I recently watched (Trifork CTO and Erjang developer) Kresten Krab Thorup give this terrific talk: “Erlang, The Road Movie”. In his presentation, Kresten suggested that the 20+ year reign of the “objects” programming paradigm is sloooowly yielding to the next big problem-solving paradigm: autonomous “actors”. Using Thomas Kuhn’s well-known paradigm-change framework, he presented this slide (which was slightly augmented by BD00):

Kresten opined that the internet catapulted Java to the top of the server-side programming world in the 90s. However, the new problems posed by multi-core, cloud computing, and the increasing need for scalability and fault-tolerance will displace OOP/Java with actor-based languages like Erlang. Erlang has the upper hand because it’s been evolved and battle-tested for over 20 years. It’s patiently waiting in the wings.

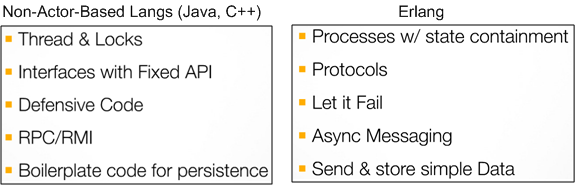

The slide below implies that the methods of OOP-based languages designed to handle post-2000 concurrency and scalability problems are rickety graft-ons, whereas the features and behaviors required to wrestle them into submission are seamlessly baked into Erlang’s core and/or its OTP library.
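For anyone who hasn't bumped into the idea yet, an “actor” is roughly a chunk of private state plus a mailbox, with messages handled one at a time. Here is a minimal sketch in Python (not Erlang, and nothing from Kresten's talk): the `CounterActor` class, its message names, and the `demo` function are all made up for illustration, and a plain thread plus `queue.Queue` stands in for what Erlang provides natively.

```python
import queue
import threading


class CounterActor:
    """Hypothetical actor: private state, a mailbox, one message at a time."""

    def __init__(self):
        self._mailbox = queue.Queue()
        self._count = 0  # only ever touched by the actor's own thread
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def send(self, message, reply_to=None):
        """Drop a message in the mailbox and return immediately."""
        self._mailbox.put((message, reply_to))

    def _run(self):
        # The actor's whole life: take the next message, react, repeat.
        while True:
            message, reply_to = self._mailbox.get()
            if message == "stop":
                break
            if message == "increment":
                self._count += 1
            elif message == "get" and reply_to is not None:
                reply_to.put(self._count)

    def join(self):
        self._thread.join()


def demo():
    actor = CounterActor()
    for _ in range(3):
        actor.send("increment")
    reply = queue.Queue()  # the caller's own little mailbox for the answer
    actor.send("get", reply_to=reply)
    value = reply.get(timeout=5)
    actor.send("stop")
    actor.join()
    return value
```

Calling `demo()` sends three increments and then asks for the count. Note that no locks appear anywhere, because nobody but the actor ever touches `_count`. The supervision and fault-tolerance machinery that OTP layers on top is deliberately left out of this sketch.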

So, what do you think? Is Mr. Thorup’s vision for the future direction of programming correct? Is the paradigm shift underway? If not, what will displace the “object” mindset in the future? Surely, something will, no?

Too much of my Java programs are boilerplate code. – Kresten Krab Thorup

Too much of my C++ code is boilerplate code. – Bulldozer00

Java either steps up, or something else will. – Cameron Purdy

Reasonable Debugging

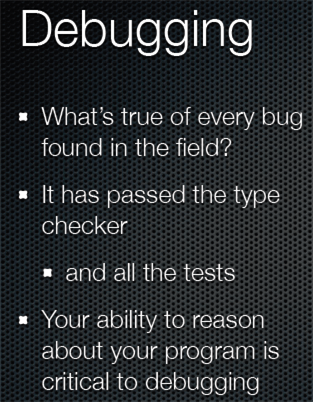

In Rich Hickey’s QCon talk, “Simple Made Easy”, he hoisted this slide:

So, what can enhance one’s ability to “reason about” a program, especially a big, multi-threaded, multi-processing beast that maps onto a heterogeneous hodge-podge network of hardware and operating systems? Obviously, a stellar memory helps, but come on, how many human beings can remember enough detail in a >100K line code base to be able to debug field turds effectively and efficiently?

How about simplicity of design structure (whatever that means)? How about the deliberate and intentional use of a small set of nested, recurring patterns of interaction – both of the GoF kind and/or application-specific ones? Or, shhhh, don’t say it too loudly, how about a set of layered blueprints that allow you and others to mentally “fly” over the software quickly at different levels of detail and from different aspect angles, without having to slog through reams of “flat” code?

Do you, your managers, and/or your colleagues value and celebrate: simplicity of design structure; use of a small set of patterns of interaction; use of a set of blueprints? Do you and they walk the talk? If not, then why not? If so, then good for you, your org, your colleagues, your customers, and your shareholders.

No Lessons Learned

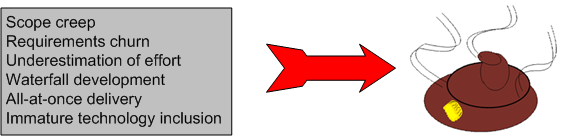

Because I’m fascinated by the causes and ubiquity of socio-technical project explosions, I try to follow technical press reports on the status of big government contracts. Here’s a recent article detailing the demise of the DoD’s Joint Tactical Radio System (JTRS): “How to blow $6 billion on a tech project”.

Even though the reasons for big, software-intensive, multi-technology project failures have been well known for decades, disasters continue to be hatched and cancelled daily around the world by both public and private institutions – except yours, of course.

What follows are some snippets from the Ars Technica article and the JTRS Wikipedia entry. The well-known, well-documented, contributory causes to the JTRS project’s demise are highlighted in bold type.

When JTRS and GMR launched, the services broke out huge wish lists when they drafted their initial requests for proposals on individual JTRS programs. While they narrowed some of these requirements as the programs were consolidated, requirements were constantly revised before, during, and after the design process.

In hindsight, the military badly underestimated the challenges before it.

First and foremost was the software development problem. When JTRS started, software-defined radio (SDR) was still in its infancy. The project’s SCA architecture allowed software to manipulate field-programmable gate arrays (FPGAs) in the radio hardware to reconfigure how its electronics functioned, exposing those FPGAs as CORBA objects. But when development began, hardware implementations of CORBA for FPGAs didn’t really exist in any standard form.

Moving code for a waveform from one set of radio hardware to another didn’t just mean a recompile—it often meant significant rewrites to make it compatible with whatever FPGAs were used in the target radio, then further tweaking to produce an acceptable level of performance. The result: the challenge of core development tasks for each of the initial designs was often grossly underestimated. Some of those issues have been addressed by specialized CORBA middleware, such as PrismTech’s OpenFusion, but the software tools have been long in coming.

When JTRS began, there was no WiFi, no 3G or no 4G wireless, and commercial radio communications was relatively expensive. But the consumer industry didn’t even look at SDR as a way to keep its products relevant in the future. Now, ASIC-based digital signal processors are cheap, and new products also tend to include faster chips and new hardware features; people prefer buying a new $100 WiFi router when some future 802.11z protocol appears instead of buying a $3,000 wireless router today that is “future proofed” (and you can’t really call anything based on CORBA “future proofed”).

“If JTRS had focused on rapid releases and taken a more modular approach, and tested and deployed early, the Army could have had at least 80 percent of what it wanted out of GMR today, instead of what it has now—a certified radio that it will never deploy.”

Having an undefined technical problem is bad enough, but it gets even worse when serious “scope creep” sets in during a 15-year project.

Each of the five sub-programs within JTRS aimed not at an incremental goal, but at delivering everything at once. That was a recipe for disaster.

By 2007 (10 years after start) the JTRS program as a whole had spent billions and billions—without any radios fielded.

In the fall of 2011, after 13 years of toil and $6B of our money wasted, the monster was put out of its misery. It was cancelled in October 2011 by the United States Undersecretary of Defense:

Our assessment is that it is unlikely that products resulting from the JTRS GMR development program will affordably meet Service requirements, and may not meet some requirements at all. Therefore termination is necessary.

And here’s what we, the taxpayers, have to show for the massive investment:

After 13 years in the pipeline, what those users saw was a radio that weighed as much as a drill sergeant, took too long to set up, failed frequently, and didn’t have enough range. (D’oh! and WTF!)

Hindsight-Based

An Attempt At Legitimacy

Regular readers of this bogus blog know that one of my differentiators is the dorky graphics that I use to communicate my wildly distorted and fantastical views of life. But being a lazy ass and unscrupulous dolt, I’ve pulled quite a few graphic clips off the web without asking for permission or giving attribution. Here’s one of my latest DICster faves:

In trying to assuage my guilt for stealing clips, I used tineye.com to track down the talented creator of the artwork – Mr. George Coghill. I e-contacted him a few weeks ago, confessed my sin, and asked him how I could make it right. Alas, I haven’t heard back from him yet.

BTW, I don’t look anything like the DICster icon…..

Scripted Behavior

Since I’m on a mini-roll hoisting excerpts from W. L. Livingston’s D4P book, here’s yet another one (I had to type the example in by hand because it only appears in the print version and not in the pdf. D’oh!):

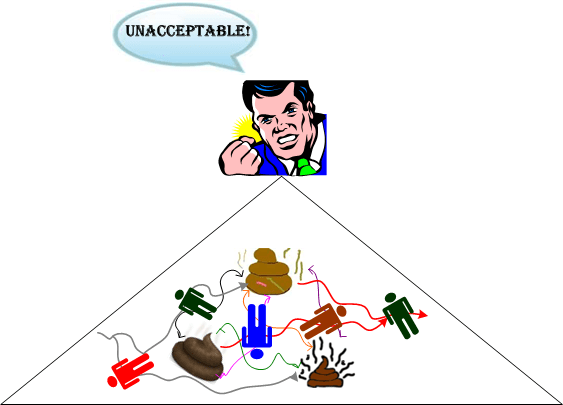

In project review meetings, the whimsical plan, riddled with entropy and misinformation, is used as gospel to measure “actual” progress. Since everyone at the meeting knows the measurement is useless as a control, it becomes an instrument of management to manage the project. Invariably, management directs a get-well plan be devised to get back on the horribly-flawed milestone plan. Of course, the get-well plan is composed in the same toxic way.

The attending executive proclaims “If you don’t get this mess back on schedule by tomorrow, I’ll get somebody who can.” Everyone has heard this proclamation of executive out-of-control. The impact of this act of desperation on the project is wholesale CYA (Cover Your Ass) and subreption. Information available for forecasting progress becomes nothing but calculated lies. That’s where attempts to defy natural law land you.

Matched Vs. Mismatched

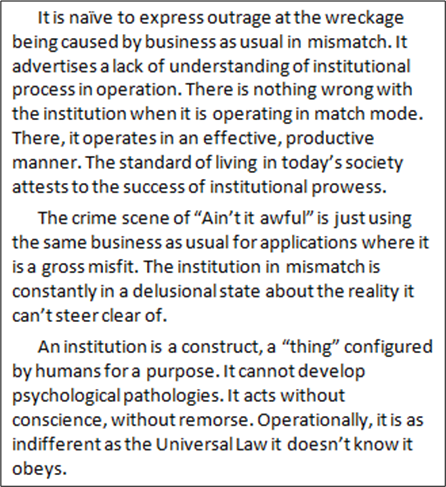

If for some strange reason you wasted some precious time and read yesterday’s post, you might have wondered what this “mismatch” thing is all about. Hopefully, this excerpt from the forthcoming 2012 edition of Bill Livingston’s D4P book (not the layman’s D4P4D) should shed some light on the mystery:

Naive outrage? Lack of understanding? Hmm. Not BD00. He knows everything.

Keystone Koppers

Here’s just one entertaining excerpt from Bill Livingston’s darkly insightful and mind-bending book, “D4P4D”:

The key word in the whole excerpt is “mismatch”. When there is a “match”, all is well, and “business as usual” gets the job done effectively and efficiently.

So, whadya think? Fearful fact? Funny fiction? A touch of both?

A Blank Stare In Return

In a previous life, I once was commiserating with a manager about how difficult and time consuming it was to keep up with technological change in the software development industry. She said “That’s why I went into management”. After sharing a chuckle, I asked her if there were any other reasons for movin’ on up. I received a blank stare in return.

In a previous life, I once was talking to a software lead and hinted that maybe he should do more than watch schedules and dole out tasks (like cutting some code from time to time, keeping the technical documentation in sync with the code, doing some exploratory testing on the code base, or taking on the role of buildmaster). I received a blank stare in return.

In a previous life, I once asked a software lead why he moved out of “coding” and into the periphery of management. He said: “For more money”. When I asked him if there were any other reasons, I received a blank stare in return.

At least they were honest. They could’ve offered up the classic management textbook response: “to take on more responsibility”. Better yet, they could’ve said “to help people do their jobs better” or “to help improve the quality of our processes by reducing red tape and eliminating low value steps”.

So, are they “selfish” people? Nah. This ubiquitous behavior is simply a side effect of how the vast majority of reward and power distribution systems are structured in hierarchical orgs. It’s been that way for 100 years and it looks like it will stay that way for the next 100 years. But then again, maybe not.

Brain-Bustingly Hard

Unsettlingly, I admire the cross-disciplinary work of William L. Livingston because:

- It’s difficult to place into a nice and tidy category (systems thinking? social science? philosophy?).

- It resonates with “something” inside me but it’s brain-bustingly hard to absorb, understand, and re-communicate.

- The breadth of his vocabulary is astonishing.

- He doesn’t give a shit about becoming rich and famous.

- He digs up quotes/paragraphs from obscure, but insightful “mentors” from the past.

As the boxes below (plucked from the D4P4D) show, Gustave Le Bon is one of those insightful mentors, no?

A lot of Mr. Le Bon’s work is available for free online at Project Gutenberg.