Archive

Move Me, Please!

UPDATE: Don’t read this post. It’s wrong, sort of 🙂 Actually, please do read it and then go read John’s comment below along with my reply to him. D’oh!

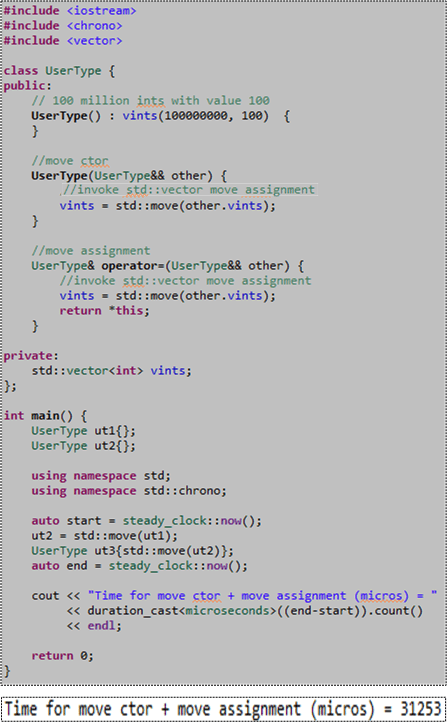

Just because all the STL containers are “move enabled” in C++11, it doesn’t mean you get their increased performance for free when you use them as member variables in your own classes. You don’t. You still have to write your own “move” constructor and “move” assignment operator to obtain the superior performance provided by the STL containers. To illustrate my point, consider the code below and the console output from a typical run of it.

Please note the 30-ish millisecond performance measurement; we’ll come back to it later in this post. Also note that since only the “move” operations are defined for the UserType class, if you attempt to “copy” construct or “copy” assign a UserType object, the compiler will barf on you (because once you declare a move constructor or move assignment operator, the compiler defines the implicitly generated copy operations as deleted):

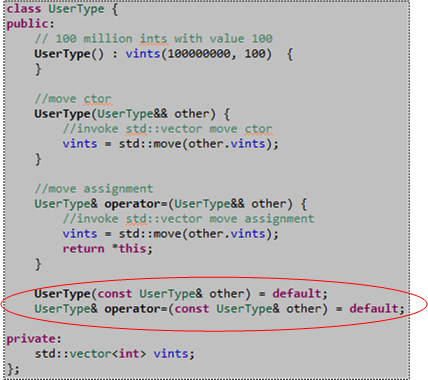

However, if you simply add “default” declarations of the copy ctor and copy assignment operations to the UserType class definition, the compiler will indeed generate their definitions for you and the above code will compile cleanly:

Ok, now remember that 30-ish millisecond performance metric we measured for “move” performance? Let’s compare that number to the performance of a “copy” construct plus “copy” assign pair of operations by adding this code to our main() function just before the return statement:

After compiling and running the code that now measures both “move” and “copy” performance, here’s the result of a typical run:

As expected, we measured a big boost in performance, 5X in this case, by using “moves” instead of old-school “copies”. Having to wrap our args with std::move() to signal the compiler that we want a “move” instead of a “copy” was well worth the effort, no?

Summarizing the gist of this post: please don’t forget to write your own simple “move” operations if you want to leverage the “free” STL container “move” performance gains in the copyable classes you write that contain STL containers. 🙂

NOTE1: I ran the code in this post on my Win 8.1 Lenovo A-730 all-in-one desktop using GCC 4.8/MinGW and MSVC++13. I also ran it on the Coliru online compiler (GCC 4.8). All test runs yielded similar results. W00t!

NOTE2: When I first wrote the “move” member functions for the UserType class, I forgot to wrap other.vints with the overhead-free std::move() function. Thus, I was scratching my (bald) head for a while because I didn’t know why I wasn’t getting any performance boost over the standard copy operations. D’oh!

NOTE3: If you want to copy and paste the code from here into your editor and explore this very moving topic for yourself, here is the non-.png listing:

```cpp
#include <chrono>
#include <iostream>
#include <utility>
#include <vector>

class UserType {
public:
    // 100 million ints with value 100
    UserType() : vints(100000000, 100) {}

    // move ctor: invoke std::vector's move constructor directly
    // (instead of default-constructing vints and then move-assigning)
    UserType(UserType&& other) noexcept : vints(std::move(other.vints)) {}

    // move assignment: invoke std::vector's move assignment
    UserType& operator=(UserType&& other) noexcept {
        vints = std::move(other.vints);
        return *this;
    }

    // explicitly defaulted copy operations (otherwise deleted because
    // the move operations above are user-declared)
    UserType(const UserType& other) = default;
    UserType& operator=(const UserType& other) = default;

private:
    std::vector<int> vints;
};

int main() {
    using namespace std;
    using namespace std::chrono;

    UserType ut1{};
    UserType ut2{};

    auto start = steady_clock::now();
    ut2 = std::move(ut1);
    UserType ut3{std::move(ut2)};
    auto end = steady_clock::now();

    cout << "Time for move ctor + move assignment (micros) = "
         << duration_cast<microseconds>(end - start).count()
         << endl;

    UserType ut4{};
    UserType ut5{};

    start = steady_clock::now();
    ut5 = ut4;
    UserType ut6{ut5};
    end = steady_clock::now();

    cout << "Time for copy ctor + copy assignment (micros) = "
         << duration_cast<microseconds>(end - start).count()
         << endl;

    return 0;
}
```

Space Flights To Alpha Centauri

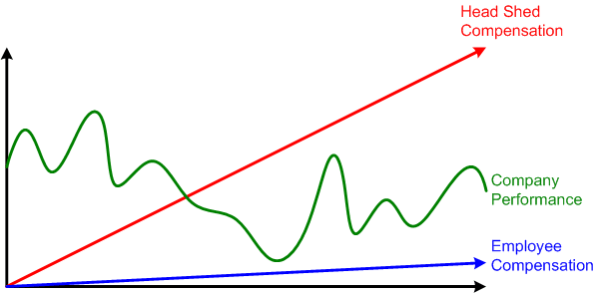

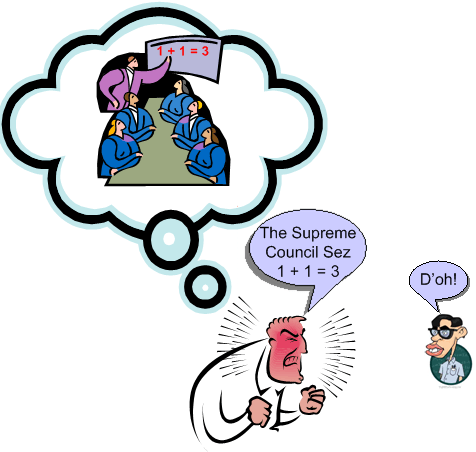

A disgusted reader recently sent me this link regarding yet another textbook example of corpo scam artistry: “Bwin.party proposes new bonus plan for top execs as share price languishes”. Since the board of directors at a borg is usually a crew of yes-men hand-picked by the “senior leadership team”, the article headline should probably read: “Bwin.party executives propose new bonus plan for themselves as share price languishes.”

When pay for performance under the current set of “KPIs” (Key Performance Indicators) stops the money from flowing into the pockets of the head shed aristocracy, the answer is always the same no-brainer. Simply get your board-of-derelicts to lower the bar and champion a new set of bogus KPIs to the powerless and fragmented shareholdership. Ka-ching!

With the old bonus benchmarks now akin to launching manned space flights to Alpha Centauri, Bwin.party is proposing a new scheme that more accurately reflects the company’s lowered expectations. Under this plan, CEO Norbert Teufelberger would pick up a maximum bonus of 550% of his annual base salary of £500k, while chief financial officer Martin Weigold would receive 435% of his £446k annual pay packet. – Steven Stradbrooke

When borgs perform brazen acts of inequity like Bwin.party (“party” is literally true for the execs), the rationalizations they spew to the public are mostly hilarious repetitions recycled from the past:

- Bwin.party says the paydays are necessary because the US market is beginning to open up, and the hordes of US gaming companies looking to move online lack senior management with online know-how.

- The potential for US companies to poach senior execs from experienced European companies represents “the single biggest threat to Bwin.party’s ability to retain its senior management.”

- Bwin.party also suggests its top execs deserve danger pay due to “aggressive enforcement of national laws against senior executives within the industry.”

Danger pay? Bwaaahahhah and WTF! That excuse certainly wasn’t dug up from the past. Ya gotta give the clever board-of-derelicts bonus points for such creative genius: “If ya break the law and damage the company, don’t worry. We’ve got ya covered.” Why not go one step further and give Bwin.party’s employees hazard pay for having to work under such a cast of potentially criminal bozeltines?

To determine if executive compensation has any correlation to company performance, BD00 performed 30 seconds worth of intensive research and plotted the results of his arduous effort for your viewing pleasure:

So, whadya think? Is executive compensation tied to performance over the long haul? Regardless of how you answer the question, ya gotta love capitalism because after all is said, it’s the worst “ism” except for all the other “isms”.

Understood, Manageable, And Known.

Our sophistication continuously puts us ahead of ourselves, creating things we are less and less capable of understanding – Nassim Taleb

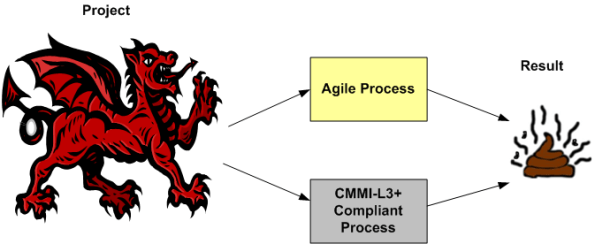

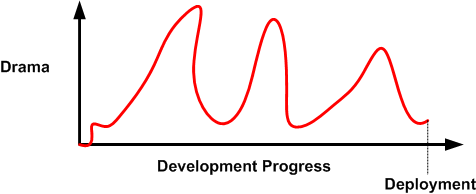

It’s like clockwork. At some time downstream, just about every major weapons system development program runs into cost, schedule, and/or technical performance problems – often all three at once (D’oh!).

Despite what their champions espouse, agile and/or level 3+ CMMI-compliant processes are no match for these beasts. We simply don’t have the know-how (yet?) to build them efficiently. The scope and complexity of these Leviathans overwhelm the puny methods used to build them. Pithy agile tenets like “no specialists”, “co-located team”, and “no titles – we’re all developers” are simply non-applicable in huge, multi-org programs with hundreds of players.

Being a student of big, distributed system development, I try to learn as much about the subject as I can from books, articles, news reports, and personal experience. Thanks to Twitter mate @Riczwest, the most recent troubled weapons system program that I’ve discovered is the F-35 stealth fighter jet. On one side, an independent, outside-of-the-system evaluator concludes:

The latest report by the Pentagon’s chief weapons tester, Michael Gilmore, provides a detailed critique of the F-35’s technical challenges, and focuses heavily on what it calls the “unacceptable” performance of the plane’s software… the aircraft is proving less reliable and harder to maintain than expected, and remains vulnerable to propellant fires sparked by missile strikes.

On the other side of the fence, we have the $392 billion program’s funding steward (the Air Force) and contractor (Lockheed Martin) performing damage control via the classic “we’ve got it under control” spiel:

Of course, we recognize risks still exist in the program, but they are understood and manageable. – Air Force Lieutenant General Chris Bogdan, the Pentagon’s F-35 program chief

The challenges identified are known items and the normal discoveries found in a test program of this size and complexity. – Lockheed spokesman Michael Rein

All of the risks and challenges are understood, manageable, known? WTF! Well, at least Mr. Rein got the “normal” part right.

In spite of all the drama that takes place throughout a large system development program, many (most?) of these big ticket systems do eventually get deployed and they end up serving their users well. It simply takes way more sweat, time, and money than originally estimated to get it done.

The Split

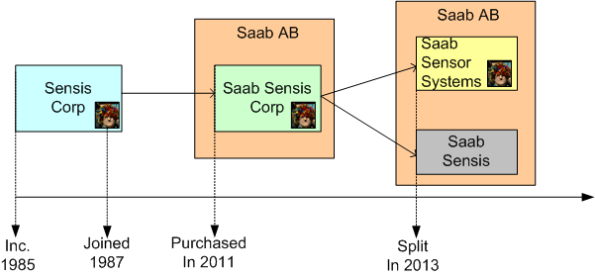

While reflecting on my journey through professional life, I decided to generate a timeline of my travels to date:

After a seven-year stint at GE, I joined Sensis (SENsor Information Systems) Corporation in 1987 as employee #13. For 20+ years, the company flourished and grew until running into financial difficulties in 2009. After choosing Sweden’s Saab AB from a list of suitors as our future parent, we were purchased in 2011 and our name was changed accordingly to Saab Sensis Corp.

Due to the funky national security complications of being a foreign-owned company that does business with the US Department of Defense, it made financial sense to split the group in two – a subgroup that conducts business with the US DoD (Saab Sensor Systems) and one that doesn’t (Saab Sensis). A functional and physical split would lift the DoD security restrictions hampering the non-DoD business efforts of the Saab Sensis group.

After expressing a personal preference to be placed at Saab Sensis, I ended up being assigned to the Saab Sensor Systems group when the split was finalized in the fall of 2013. So far, it has worked out better than I initially thought it would. The high quality of the people and the work is essentially the same between the two groups, but Saab Sensor Systems is roughly half the size of Saab Sensis (to me, the smaller the better). In addition, the near term business outlook for Saab Sensor Systems seems to hold more promise.

I’ve been a lucky bastard throughout my entire work and social lives. I’m grateful for that, and I hope my lucky streak continues.

The Supreme Council Sez

Anyone who conducts an argument by appealing to authority is not using his intelligence; he is just using his memory – Leonardo da Vinci

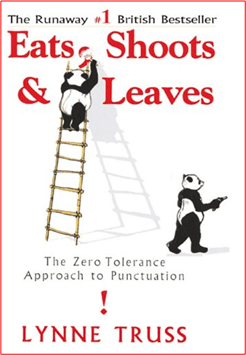

The Commas And Stuff

After watching an interesting documentary on J. D. Salinger, I decided to re-read his monstrously famous book, “The Catcher In The Rye”. Stunningly, I couldn’t find any store online that sold an e-version of the book. I ended up having to buy the dead-trees version.

In an early part of the book, protagonist Holden Caulfield’s roommate, Stradlater, begs Holden to write an English composition for him:

How ’bout writing a composition for me, in English? Just don’t do it too good, is all. That sonuvabitch Hartzell thinks you’re a hot-shot in English, and he knows your my roommate. So I mean don’t stick all the commas and stuff in the right place.

Stradlater was always doing that. He wanted you to think that the only reason he was lousy at writing compositions was because he stuck all the commas in the wrong place.

I laughed my ass off when I read that passage. Ya see, I’ve been blawging for over four years now, and deciding where to place commas in the text has always been a real pain in my keester. I’m constantly finding myself being yanked out of the continuous flow of words and pausing to ask “should I put a freakin’ comma here?”. In addition, when I iterate over a post just prior to queuing it up for publication, I’m always adding, removing, and/or moving commas around. I’d estimate that 20% of my writing time is spent obsessing over comma placement. LOL!

I think that my “comma dilemma” is why I enjoy composing the dorky clipart pix for a blog post much more than concocting the comma-laced text. I find the construction, placement, movement, and connection of images on the e-canvas a more fluid and less disruptive experience.

Another text-based conundrum that I’m constantly bumping into is the annoying decision process associated with the use of the word “that”. But that, is for a future post.

The Non-Absorption Of Variety

Update: Thanks to input from “fatjacques“, the quotes below can be attributed to Jon Seddon

A few days ago, I clipped a bunch of text from an article I read on computer-provided public services and put the words into a Visio scratchpad page. However, I forgot to copy & paste the link to the article. Thus, I can’t give proper attribution to whoever wrote the words and ideas that I’m about to reuse in this post. I’m bummed, but I’m gonna proceed anyway.

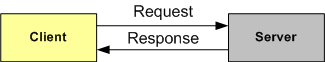

Consider the computer client/server model below. In the simplest case, a client issues a request to the server to fulfill a need. The server subsequently provides a single response back to the client that fulfills the need. The client walks away happy as a clam. Nothing to it, right?

Next, let’s assume that the client needs to issue “k” requests and receive k responses in order for her need to be fulfilled:

From the bottom of the above multi-transaction graphic, you can infer that the service provider can be “implemented” as a person or a software program running on a computer (or a hybrid combo of both).

With the current obsession in government about “reducing costs” (instead of focusing on the real goal of providing services more effectively), more and more public service people are being replaced by computers and the buggy, inflexible software that runs on those cold-hearted, mechanistic beasts.

Since computers obey rules to the letter and, thus, can’t absorb variety in real time, service costs are rising instead of declining. That’s because each person requiring a public service likely has some individual-specific needs. And since the likelihood of a pre-programmed rule set covering the infinitely subtle variety of needs from the citizenry is zero, employing a computer-based service system guarantees that the number of transactions required to consummate a service will be much larger than the number required when dealing with a thinking, reacting service person. What may normally take a single transaction with a flexible, thinking service representative could take 20+ interactions with a rigid, dumbass computer.

But it’s even worse than that. In addition to requiring many more transactions, a rule-based computer service interface may totally fail to complete the service at all. Frustrated clients may simply give up and walk away without having received any value at all. Miserably poor service at a high cost. Bummer.

Good services are local, not industrial, delivered by people, not computers, who give people what they need. – Jon Seddon

Fetch-tini

The 1200 Mile Flex

I am, and always have been, a gadget man. I own an iPhone 5, a Kindle Fire HD, a Livescribe Echo pen, and a Fitbit Flex. Since I purchased it in the spring of 2013, my favorite device has been the Fitbit Flex. That delightful gadget is effortless to use and has a well-thought-out, seamlessly integrated web/phone/device system.

My Flex has helped me shed close to 10 pounds of flab because:

- I now park farther away from driving destinations

- I’ve tacked 10 minutes of treadmill time on to my regular workout

- I frequently scurry off for quick, 10 minute walks at work and home

Recently, I received my first annual report from Fitbit.com:

1200+ miles in less than one year! Sheesh, I never woulda thunk it.

Oh, and even though my friends often hassle me about my utter lack of fashion style, I’ve happily collected every color band currently available for the Flex.