Archive

Exaggerated And Distorted

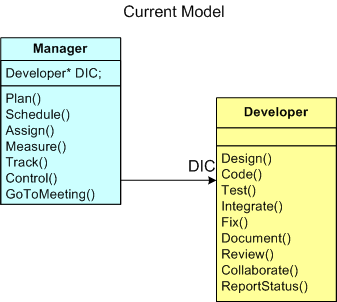

The figure below provides a UML class diagram (“class” is such an appropriate word for this blarticle) model of the Manager-Developer relationship in most software development orgs around the globe. The model is so ubiquitous that you can replace the “Developer” class with a more generic “Knowledge Worker” class. Only the Code(), Test(), and Integrate() behaviors in the “Developer” class need to be modified for increased global applicability.

Everyone knows that this current model of developing software leads to schedule and cost overruns. The bigger the project, the bigger the overruns. D’oh!

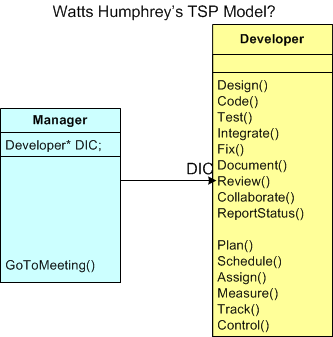

In this article and this interview, Watts Humphrey touts his Team Software Process (TSP) as the cure for the disease. The figure below depicts an exaggerated and distorted model of the manager-developer relationship in Watts’s TSP. Of course, it’s an exaggerated and distorted view because it sprang forth from my twisted and tortured mind. Watts says, and I wholeheartedly agree (I really do!), that the only way to fix the dysfunction bred by the current way of doing things is to push the management activities out of the Manager class and down into the Developer class (can you say “empowerment”, sic?). But wait. What’s wrong with this picture? Is it so distorted and exaggerated that there’s not one grain of truth in it? Decide for yourself.

Even if my model is “corrected” by Watts himself so that the Manager class actually adds value to the revolutionary TSP-based system, do you think it’s pragmatically workable in any org structured as a CCH? Besides reallocating the control tasks from Manager to Developer, is there anything that needs to socially change for the new system to have a chance of decreasing schedule and cost overruns (hint: reallocation of stature and respect)? What about the reward and compensation system? Does that need to change (hint: increased workload/responsibility on one side and decreased workload/responsibility on the other)? How many orgs do you know of that aren’t structured as a crystalized CCH?

Strangely (or not?), Watts doesn’t seem to address these social system implications of his TSP. Maybe he does, but I just haven’t seen his explanations.

Open Loop

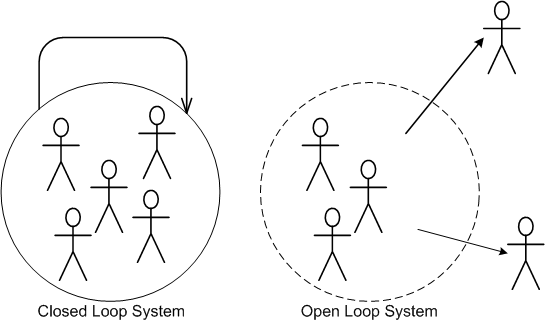

I’m currently working on a really exciting and fun software development project with several highly competent peers. Two of them, however, like to operate open loop and plow ahead with minimal collaboration with the more disciplined (and hence, slower) developers. These dudes not only insert complex designs and code into their components without vetting their ideas with the rest of the team, they have no boundaries. Like elephants in a china shop, they trample all over code in everybody else’s “area of ownership”.

“He who touches the code last, owns it.” — Anonymous

Because of the team cohesion that it encourages, I’m all for shared ownership, but there has to be some nominal boundary to arrest the natural growth in entropy inherent in open loop systems and to ensure local and global conceptual integrity.

Even though these colleagues are rogues, they’re truly very smart. So, I’m learning from them, albeit slooowly since they don’t document very well (surprise!) and I have to laboriously reverse engineer the code they write to understand what the freak they did. Even though feelings aren’t allowed, I “feel” for those dudes who come on board the project and have to extend/maintain our code after we leave for the next best thing.

Emergent Design

I’m somewhat onboard with the agile community’s concept of “emergent design”. But like all techniques/heuristics/rules-of-thumb/idioms, context is everything. In the “emergent design” approach, you start writing code and, via a serendipitous, rapid, high frequency, mistake-making, error-correction process, a good design emerges from the womb – voila! This technique has worked well for me on “small” designs within an already-established architecture, but it is not scalable to global design, new architecture, or cross-cutting system functionality. For these types of efforts, “emergent modeling” may be a more appropriate approach. If you believe this, then you must make the effort to learn how to model, write (or preferably draw) it up, and iterate on it much like you do with writing code. But wait, you don’t do, or ever plan to do, documentation, right? Your code is so self-expressive that you don’t even need to comment it, let alone write external documentation. That crap is for lowly English majors.

To religiously embrace “emergent design” and eschew modeling/documentation for big design efforts is to invite downstream disaster and massive post delivery stakeholder damage. Beware because one of those downstream stakeholders may be you.

PAYGO II

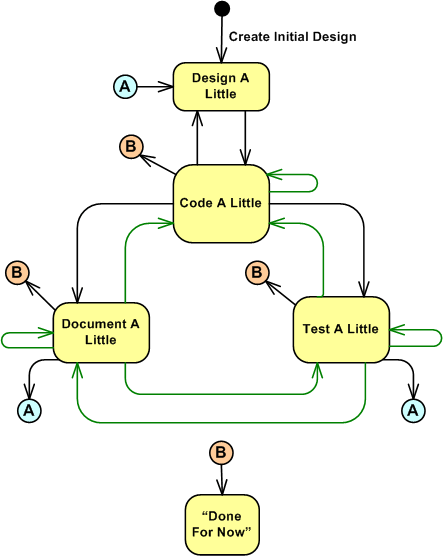

PAYGO stands for “Pay As You Go”. It’s the name of the personal process that I use to create or maintain software. There are five operational states in PAYGO:

- Design A Little

- Code A Little

- Test A Little

- Document A Little

- Done For Now

Yes, the fourth state is called “Document A Little”, and it’s a first class citizen in the PAYGO process. Whatever process you use, if some sort of documentation activity is not an integral part of it, then you might be an incomplete and one dimensional engineer, no?

“…documentation is a love letter that you write to your future self.” – Damian Conway

The UML state transition diagram below models the PAYGO states of operation along with the transitions between them. Even though the diagram indicates that the initial entry into the cyclical and iterative PAYGO process lands on the “Design A Little” state of activity, any state can be the point of entry into the process. Once you’re immersed in the process, you don’t kick out into the “Done For Now” state until your first successful product handoff occurs. Here, successful means that the receiver of your work, be it an end user or a tester or another programmer, is happy with the result. How do you know when that is the case? Simply ask the receiver.

Notice the plethora of transition arcs in the diagram (the green ones are intended to annotate feedback learning loops as opposed to sequential forward movements). Any state can transition into any other state and there is no fixed, well defined set of conditions that need to be satisfied before making any state-to-state leap. The process is fully under your control and you freely choose to move from state to state as “God” (for lack of a better word) uses you as an instrument of creation. If clueless STSJ PWCE BMs issue mindless commands from on high like “pens down” and “no more bug fixing, you’re only allowed to write new code”, you fake it as best you can to avoid punishment and you go where your spirit takes you. If you get caught faking it and get fired, then uh….. soothe your conscience by blaming me.
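For the code-minded, here is a minimal sketch of the state machine described above. It is only my reading of the prose, not a transcription of the diagram: the `PaygoState` and `Paygo` names, the `history` list, and the `receiver_is_happy` flag are all made up for illustration. The one rule it enforces is the handoff gate on “Done For Now”; every other move is left to the developer.

```python
from enum import Enum


class PaygoState(Enum):
    DESIGN_A_LITTLE = "Design A Little"
    CODE_A_LITTLE = "Code A Little"
    TEST_A_LITTLE = "Test A Little"
    DOCUMENT_A_LITTLE = "Document A Little"
    DONE_FOR_NOW = "Done For Now"


class Paygo:
    """Tracks the current PAYGO state; every move is the developer's choice."""

    def __init__(self, entry=PaygoState.DESIGN_A_LITTLE):
        # Any state can serve as the point of entry; the default mirrors the diagram.
        self.state = entry
        self.history = [entry]

    def move_to(self, target, receiver_is_happy=False):
        # No guard conditions between working states: any state may follow any other.
        # The only gate is on "Done For Now", which needs a successful handoff.
        if target is PaygoState.DONE_FOR_NOW and not receiver_is_happy:
            raise ValueError("not done until the receiver of the work says so")
        self.state = target
        self.history.append(target)
        return self.state
```

A session might enter at `PaygoState.TEST_A_LITTLE`, bounce back to `CODE_A_LITTLE`, wander into `DOCUMENT_A_LITTLE`, and only call `move_to(PaygoState.DONE_FOR_NOW, receiver_is_happy=True)` once the receiver has actually been asked.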

The following quote in “The C++ Programming Language” by mentor-from-afar Bjarne Stroustrup triggered this blog post:

In the early years, there was no C++ paper design; design, documentation, and implementation went on simultaneously. There was no “C++ project” either, or a “C++ design committee.” Throughout, C++ evolved to cope with problems encountered by users and as a result of discussions between my friends, my colleagues, and me. – Bjarne Stroustrup

When I read it on my Nth excursion through the book (you’ve made multiple trips through the BS book too, no?), it occurred to me that my man Bjarne uses PAYGO too.

Say STFU to all the mindlessly mechanistic processes from highly-credentialed and well-intentioned luminaries like Watts Humphrey’s PSP (because he wants to transform you into an accountant) and your mandated committee corpo process group (because the chances are that the dudes who wrote the process manuals haven’t written software in decades) and the TDD know-it-alls. Embrace what you discover is the best personal development process for you; be it PAYGO or whatever personal process the universe compels you to embrace. Out of curiosity, what process do you use?

If you’re interested in a higher level overview of the personal PAYGO process in the context of other development processes, you can check out this previous post: PAYGO I. And thanks for listening.

Processes, Threads, Cores, Processors, Nodes

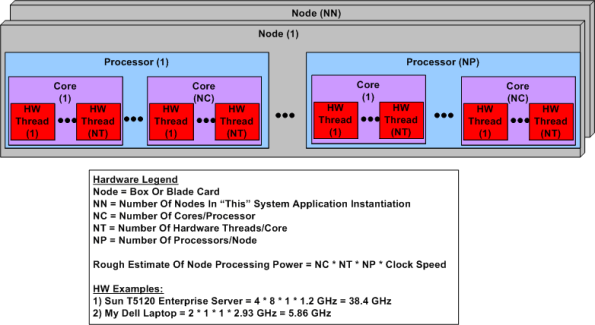

Ahhhhh, the old days. Remember when the venerable CPU was just that, a CPU? No cores, no threads, no multi-CPU servers. The figure below shows a simple model of a modern day symmetric multi-processor, multi-core, multi-thread server. I concocted this model to help myself understand the technology better and thought I would share it.

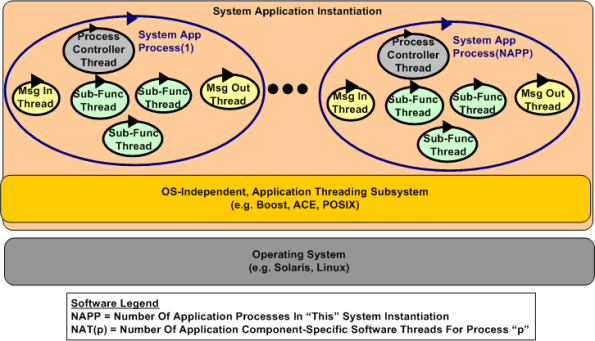

The figure below shows a generic model of a multi-process, multi-threaded, distributed, real-time software application system. Note that even though they’re not shown in the diagram, thread-to-thread and process-to-process interfaces abound. There is no total independence since the collection of running entities comprises an interconnected “system” designed for a purpose.

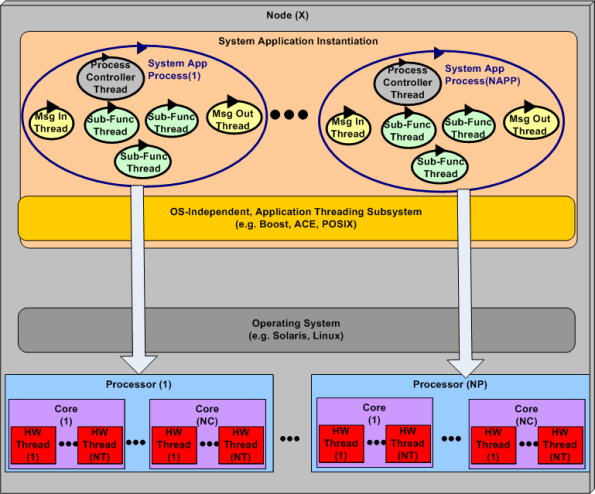

Interesting challenges in big, distributed system design are:

- Determining the number of hardware nodes (NN) required to handle anticipated peak input loads without dropping data because of a lack of processing power.

- The allocation of NAPP application processes to NN nodes (when NAPP > NN).

- The dynamic scheduling and dispatching of software processes and threads to hardware processors, cores, and threads within a node.

The first two bullets above are under the full control of system designers, but not the third one. The integrated hardware/software figure below highlights the third bullet above. The vertical arrows don’t do justice to the software process-thread to hardware processor-core-thread scheduling challenge. Human control over these allocation activities is limited and subservient to the will of the particular operating system selected to run the application. In most cases, setting process and thread priorities is the closest the designer can come to controlling system run-time behavior and performance.
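To make the first two challenges in the list concrete, here is a rough sketch of how a designer might take a first cut at them. Everything in it is an assumption for illustration: the function names, the idea that load and capacity can be boiled down to a single number each, and the choice of plain round-robin placement. Real sizing would account for headroom, failover, and uneven process loads.

```python
import math


def nodes_required(peak_load, per_node_capacity):
    """Smallest node count NN whose combined capacity covers the peak load."""
    if per_node_capacity <= 0:
        raise ValueError("per_node_capacity must be positive")
    return max(1, math.ceil(peak_load / per_node_capacity))


def allocate_round_robin(process_names, node_count):
    """Spread NAPP processes over NN nodes, one at a time, in order."""
    if node_count < 1:
        raise ValueError("node_count must be at least 1")
    allocation = {node: [] for node in range(node_count)}
    for index, name in enumerate(process_names):
        allocation[index % node_count].append(name)
    return allocation
```

With a peak of 10,000 messages per second and nodes that can each handle 2,500, `nodes_required(10000, 2500)` comes out to 4 nodes. What happens to the processes once they land on a node is still the operating system’s call.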

D4P And D4F

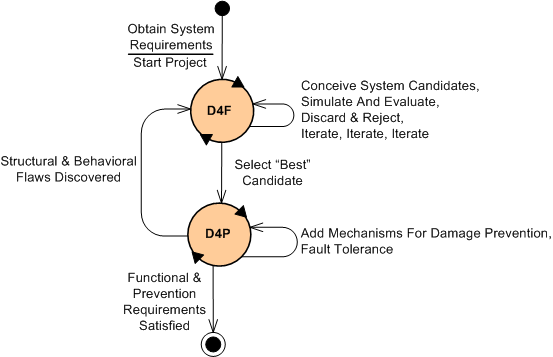

As some of you may know, my friend Bill Livingston recently finished writing his latest book, “Design For Prevention” (D4P). While doodling and wasting time (if you hadn’t noticed, I like to waste time), I concocted an idea for supplementing the D4P with something called “Design For Function” (D4F). The figure below shows, via a state machine diagram, the proposed marriage of the two complementary processes.

After some kind of initial problem definition is formulated by the owner(s) of the problem, the requirements for a “future” socio-technical system whose purpose is to dissolve the problem are recorded and “somehow” awarded to an experienced problem solver in the domain of interest. Once this occurs, the project is kicked off (Whoo Hoo!) and the wheels start churning via entry into the D4F state. In this state, various structures of connected functions are conceived and investigated for fitness of purpose. This iterative process, which includes short-cycle-run-break-fix learning loops via both computer-based and mental simulations, separates the wheat from the chaff and yields an initial “best” design according to some predefined criteria. Of course, adding to the iterative effort is the fact that the requirements will start changing before the ink dries on the initial snapshot.

Once the initial design candidate is selected for further development, the sibling D4P state is entered for the first (but definitely not last) time. In this important but often neglected problem solving system sub-state, the problem solution system candidate is analyzed for failure modes and their attendant consequences. Additional monitoring and control functional structures are then conceived and integrated into the system design to prevent failures and mitigate those failures that can’t be prevented. The goal at this point is to make the system fault tolerant and robust to large, but low probability, external and internal disturbances. Again, iterative simulations are performed as reconnaissance trips into the future to evaluate system effectiveness and robustness before it gets deployed into its environment.

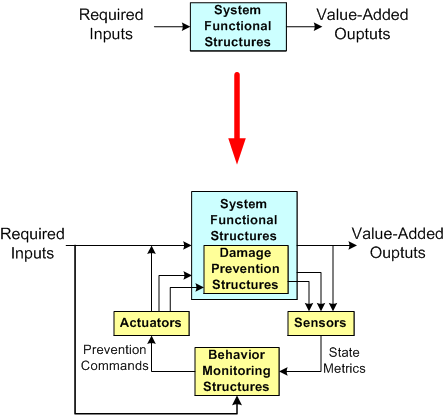

The figure below shows a dorky model of a system design before and after the D4P process has been executed. Notice the necessary added structural and behavioral complexity incorporated into the system as a result of recursively applying the D4P. Also note that the “Behavior Monitoring” structure(s), be they composed of people in a social system or computers in an automated system, or most likely both, need to have an understanding of the primary system goal seeking functions in order to effectively issue damage prevention and mitigation instructions to the various system elements. Also note that these instructions need not only be logically correct, they need to be timely for them to be effective. If the time lag between real-time problem sensing and control actuating is too great (which happens repeatedly and frequently in huge multi-layered command and control hierarchies that don’t have or want an understanding of what goes on down in the dirty boiler room), then the internal/external damage caused by the system can be as devastating as a cheaper, less complex system operating with no damage prevention capability at all.
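The “correct and timely” point can be boiled down to a few lines. The sketch below is purely illustrative: the `Instruction` record, its fields, and the `max_lag_seconds` threshold are my inventions, not part of the D4P. It only shows that a mitigation instruction counts as effective when it is both logically correct and delivered within an acceptable lag after the problem was sensed.

```python
from dataclasses import dataclass


@dataclass
class Instruction:
    action: str          # hypothetical mitigation command
    sensed_at: float     # time the problem was sensed, in seconds
    actuated_at: float   # time the command reached the system element


def is_effective(instruction, logically_correct, max_lag_seconds):
    """An instruction helps only if it is both correct and timely."""
    lag = instruction.actuated_at - instruction.sensed_at
    return logically_correct and 0 <= lag <= max_lag_seconds
```

A correct instruction that shows up five seconds after the damage is done fails the check just like a wrong one, which is the fate of many commands that have to crawl through a deep hierarchy.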

So what do you think? Is this D4F + D4P process viable? A bunch of useless baloney?

Ackoff On Systems Thinking

Russell Ackoff, bless his soul, was a rare, top echelon systems thinker who successfully avoided being assimilated by the borg. Check out the master’s intro to systems thinking in the short series of videos below.

What do you think?

It’s Gotta Be Free!

I love ironies because they make me laugh.

I find it ironic that some software companies will staunchly avoid paying a cent for software components that can drastically lower maintenance costs, but are willing to charge their customers as much as they can get for the application software they produce.

When it comes to development tools and (especially) infrastructure components that directly bind with their application code, everything’s gotta be free! If it’s not, they’ll do one of two things:

- They’ll “roll their own” component/layer even though they have no expertise in the component domain that cries out for the work of specialized experts. In the old days it was device drivers and operating systems. Today, it’s entire layers like distributed messaging systems and database managers.

- They’ll download and jam-fit a crappy, unpolished, sporadically active, open source equivalent into their product – even if a high quality, battle-tested commercial component is available.

Like most things in life, cost is relative, right? If a component costs $100K, the misers will cry out “holy crap, we can’t waste that money”. However, when you look at it as costing less than one programmer year, the situation looks different, no?

How about you? Does this happen, or has it happened in your company?

Ya Gotta Use This!

It’s interesting when people come off a previous project and are assigned to a new, in-progress, software development project. Often, they demand that their new project team adopt a process/procedure/technique/design/architecture (PPTDA) that they themselves used on their previous project.

This can be either good or bad. It can be good if (and it’s a big IF) the alternative PPTDA they are promoting is actually better than the analogous PPTDA currently being employed by the new project team and the cost to integrate the proposed PPTDA into the project environment is less than the additional benefit it brings to the table. It can be really good if the PPTDA has been proven to “work” well and the new project hasn’t progressed past the point where an analogous PPTDA has been decided upon and woven into the fabric of the project.

On the other hand, a newly proposed PPTDA can be bad in these cases:

- The new project team already has an equivalent PPTDA in place and there’s no “objective” proof that the championed PPTDA really does work better.

- The new project team already has an equivalent PPTDA in place and there’s “objective” proof that the championed PPTDA really does work better, but the cost of social disruption to integrate the PPTDA into the project isn’t worth the benefit.

- The new project team doesn’t have an equivalent PPTDA in place yet and the championed PPTDA has “somehow” been proven to be better than other alternatives, but adopting it would require changes to other well-working PPTDAs that the team is using.

Because there’s a bit of subjectivity in the above list and “rank can be pulled” by a so-called superior in order to jam an unworthy PPTDA into a smoothly running project and muck up the works, be wary of kings bearing gifts.