Archive

A Pencast

Check out my “pencast” of the legendary Grady Booch talking about software architecture. In this notes+audio mashup, Grady expounds on the importance of patterns within elegant and robust architectures. Don’t mind my wife and dog’s barking, uh, I mean my dog’s barking and my wife’s talking, in a small segment of the pencast.

Related Articles

- Digital Pen Gives Boring Note Taking a Modern Kick (wired.com)

- Livescribe use models in special education (zdnet.com)

- The future of the pen? (building43.com)

- Livescribe Introduces Next Generation Hardware and Software Smartpen System – Review (geardiary.com)

Alignment

Deterministic, Animated, Social

Unless you object, of course, a system can be defined as an aggregation of interacting parts built by a designer for a purpose. Uber systems thinker Russell Ackoff classified systems into three archetypes: deterministic, animated, and social. The main criterion Ackoff uses for mapping a system into its type is purpose; the purpose of the containing whole and the purpose(s) of the whole’s parts.

The figure below attempts to put the Ackoff “system of system types” 🙂 into graphic form.

Deterministic Systems

In a deterministic system like an automobile, neither the whole nor its parts have self-purposes because there is no “self”. Both the whole and its parts are inanimate objects with fixed machine behavior designed and assembled by a purposeful external entity, like an engineering team. Deterministic systems are designed by men to serve specific, targeted purposes of men. The variety of behavior exhibited by deterministic systems, while possibly being complex in an absolute sense, is dwarfed by the variety of behaviors capable of being manifest by animated or social systems.

Animated Systems

In an animated system, the individual parts don’t have isolated purposes of their own, but the containing whole does. The parts and the whole are inseparably entangled in that the parts require services from the whole and the whole requires services from the parts in order to survive. The non-linear product (not sum) of the interactions of the parts manifest as the external observable behavior of the whole. Any specific behavior of the whole cannot be traced to the behavior of a single specific part. The human being is the ultimate example of an animated system. The heart, lungs, liver, an arm, or a leg have no purposes of their own outside of the human body. The whole body, with the aid of the product of the interactions of its parts produces a virtually infinite range of behaviors. Without some parts, the whole cannot survive (loss of a functioning heart). Without other parts, the behavior of the whole becomes constrained (loss of a functioning leg).

Social Systems

In a social system, the whole and each of its parts have purposes of their own. The larger the system, the greater the number and variety of the purposes. If they aren’t aligned to some degree, the product of the purposes can cause a huge range of externally observed behaviors to be manifest. When the self-purposes of the parts are in total alignment with the whole, the system’s behavior exhibits less variety and greater efficiency at trying to fulfill the whole’s purpose(s). Both internal and external forces continually impose pressure upon the whole and its parts to misalign. Only those designers who can keep the parts’ purposes aligned with the whole’s purpose have any chance of getting the whole to fulfill its purpose.

System And Model Mismatch

Ackoff states that modeling a system of one type with the wrong type for the purpose of improving or replacing it is the cause of epic failures. For example, attempting to model a social system as a deterministic system with an underlying mathematical model causes erroneous actions and decisions to be made by ignoring the purposes of the parts. Human purposes cannot be modeled with equations. Likewise, modeling a social system as an animated system also ignores the purposes of the many parts. These mismatches assume the purposes of the parts align with each other and the purpose of the whole. Bad assumption, no?

Yes I do! No You Don’t!

“Jane, you ignorant slut” – Dan Aykroyd

In this EETimes post, I Don’t Need No Stinkin’ Requirements, Jon Pearson argues for the commencement of coding and testing before the software requirements are well known and recorded. As a counterpoint, Jack Ganssle wrote this rebuttal: I Desperately Need Stinkin Requirements. Because software industry luminaries (especially snake oil peddling methodologists) often assume all software applications fit the same mold, it’s important to note that Pearson and Ganssle are making their cases in the context of real-time embedded systems, not web-based applications. If you think the same development processes apply in both contexts, then I urge you to question that assumption.

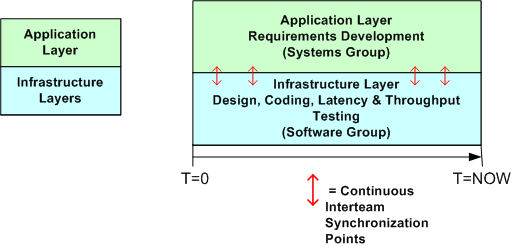

I usually agree with Ganssle on matters of software development, but Pearson also provides a thoughtful argument to start coding as soon as a vague but bounded vision of the global structure and base behavior is formed in your head. On my current project, which is the design and development of a large, distributed real-time sensor that will be embedded within a national infrastructure, a trio of us have started coding, integrating, and testing the infrastructure layer before the application layer requirements have been nailed down to a “comfortable degree of certainty”.

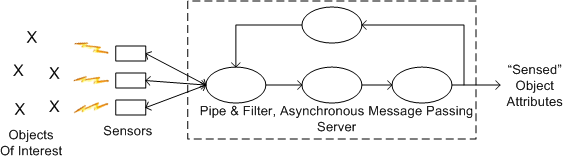

The simple figures below show how we are proceeding at the current moment. The top figure is an astronomically high level, logical view of the pipe-filter archetype that fits the application layer of our system. All processes are multi-threaded and deployable across one or more processor nodes. The bottom figure shows a simplistic 2 layer view of the software architecture and the parallel development effort involving the systems and software engineering teams. Notice that the teams are informally and frequently synchronizing with each other to stay on the same page.
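For readers who haven’t bumped into the pipe-filter archetype before, here is a minimal sketch of the idea in Python. It is not our system’s code: the names `run_filter` and `run_pipeline`, the one-thread-per-filter layout, and the toy filter functions are all assumptions made purely for illustration.

```python
import queue
import threading
from typing import Any, Callable, List

_STOP = object()  # sentinel that tells each filter to shut down


def run_filter(fn: Callable[[Any], Any], inbox: queue.Queue, outbox: queue.Queue) -> None:
    """One filter: read from the upstream pipe, transform, write downstream."""
    while True:
        item = inbox.get()
        if item is _STOP:
            outbox.put(_STOP)  # pass the shutdown signal down the pipeline
            return
        outbox.put(fn(item))


def run_pipeline(filters: List[Callable[[Any], Any]], inputs: List[Any]) -> List[Any]:
    """Connect the filters with queues (the pipes) and run each in its own thread."""
    pipes = [queue.Queue() for _ in range(len(filters) + 1)]
    threads = [
        threading.Thread(target=run_filter, args=(fn, pipes[i], pipes[i + 1]))
        for i, fn in enumerate(filters)
    ]
    for thread in threads:
        thread.start()
    for item in inputs:
        pipes[0].put(item)
    pipes[0].put(_STOP)

    outputs = []
    while True:
        item = pipes[-1].get()
        if item is _STOP:
            break
        outputs.append(item)
    for thread in threads:
        thread.join()
    return outputs


# Two toy filters: double the value, then add one.
result = run_pipeline([lambda x: x * 2, lambda x: x + 1], [1, 2, 3])
```

Because the filters only know about their inbox and outbox, each one could in principle be moved to a different process or node by swapping the in-memory queue for a network transport, which is the property that makes the archetype attractive for distributed systems.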

The main reason we are designing and coding up the infrastructure while the application layer requirements are in flux is that we want to measure important cross-cutting concerns like end-to-end latency, processor loading, and fault tolerance performance before the application layer functionality gets burned into a specific architecture.
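As a rough illustration of what measuring end-to-end latency early can look like, the sketch below pushes messages through a chain of placeholder stages and records the elapsed time per message. The function name `measure_latencies` and the stand-in stages are hypothetical; the point is only that the measurement harness can exist before the real application layer functions do.

```python
import time
from typing import Any, Callable, List


def measure_latencies(stages: List[Callable[[Any], Any]], messages: List[Any]) -> List[float]:
    """Return the end-to-end latency, in seconds, of each message through all stages."""
    latencies = []
    for message in messages:
        start = time.perf_counter()  # timestamp at pipeline ingress
        for stage in stages:
            message = stage(message)
        latencies.append(time.perf_counter() - start)  # elapsed time at egress
    return latencies


# Placeholder stages standing in for application-layer processing not yet written.
placeholder_stages = [lambda m: m, lambda m: m]
latencies = measure_latencies(placeholder_stages, ["msg-1", "msg-2", "msg-3"])
worst_case = max(latencies)
```

Once real processing replaces the placeholders, the same harness reports how much of the latency budget each increment of functionality consumes.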

So, do you think our approach is wasteful? Will it cause massive, downstream rework that could have been avoided if we held off and waited for the requirements to become stable?

To Call, Or To Be Called. THAT Is The Question.

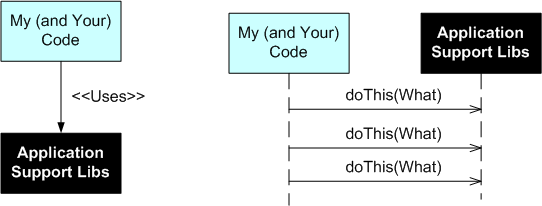

Except for GUIs, I prefer not to use frameworks for application software development. I simply don’t like to be the controllee. I like to be the controller; to be on top so to speak. I don’t like to be called; I’d rather call.

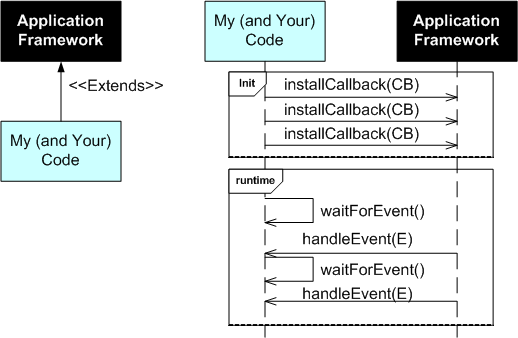

The UML figure below shows a simple class diagram and sequence diagram pair that illustrate how a typical framework and an application interact. On initialization, your code has to install a bunch of CallBack (CB) functions or objects into the framework. After initialization, your code then waits to be called by the framework with information on Events (E) that you’re interested in processing. Your code is subservient to the Framework Gods.
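The callback arrangement described above can be sketched in a few lines of Python. The `Framework` class, its `register` and `run` methods, and the event names are all made up for illustration; they don’t correspond to any particular real framework.

```python
from typing import Callable, Dict, List, Tuple


class Framework:
    """Toy framework (hypothetical) that owns the control flow and calls the app back."""

    def __init__(self) -> None:
        self._callbacks: Dict[str, List[Callable[[str], None]]] = {}

    def register(self, event_type: str, callback: Callable[[str], None]) -> None:
        """Initialization step: the application installs a callback for an event type."""
        self._callbacks.setdefault(event_type, []).append(callback)

    def run(self, events: List[Tuple[str, str]]) -> None:
        """The framework, not the application, decides when application code runs."""
        for event_type, payload in events:
            for callback in self._callbacks.get(event_type, []):
                callback(payload)


handled: List[str] = []


def on_track(payload: str) -> None:
    handled.append(f"track:{payload}")


def on_status(payload: str) -> None:
    handled.append(f"status:{payload}")


framework = Framework()
framework.register("track", on_track)
framework.register("status", on_status)

# From here on, the application just waits to be called.
framework.run([("track", "T1"), ("status", "ok"), ("ignored", "x")])
```

Notice that the application never sees the loop: events it did not register for simply never reach it, and the order of calls is entirely the framework’s business.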

After object-oriented inheritance and programming by difference, frameworks were supposed to be the next silver bullet in software reuse. Well-crafted, niche-based frameworks have their place of course, but they’re not the rage they once were in the 90s. A problem with frameworks, especially homegrown ones, is that in order to be all things to all people, they are often bloated beyond recognition and require steep learning curves. They also often place too much constraint on application developers while at the same time not providing necessary low-level functionality, which the application developer ends up having to write him/herself. Finding out what you can and can’t do under the “inverted control” structure of a framework is often an exercise in frustration. On the other hand, a framework imposes order and consistency across the set of applications written to conform to its operating rules; a boon to keeping maintenance costs down.

The alternative to a framework is a set of interrelated, but not too closely coupled, application domain libraries that the programmer (that’s you and me) can choose from. The UML class and sequence diagram pair below shows how application code interacts with a set of needed and wanted support libraries. Notice who’s on top.
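For contrast with the framework style, here is a minimal sketch of the library style, where the application owns the loop and calls out only when it chooses to. The `main_loop` function is hypothetical, and Python’s standard `json` and `statistics` modules are simply standing in for application domain libraries.

```python
import json
import statistics
from typing import List


def main_loop(raw_messages: List[str]) -> List[float]:
    """Application code owns the control flow and calls libraries when it wants to."""
    results = []
    for raw in raw_messages:
        samples = json.loads(raw)                 # call into a parsing library
        results.append(statistics.mean(samples))  # call into a math library
    return results


averages = main_loop(["[1, 2, 3]", "[10, 20]"])
```

The libraries know nothing about each other or about the loop that drives them, so either one can be swapped out without restructuring the application.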

How about you? Do you like to be on top? What has been your experience working with non-GUI application frameworks? How about system-wide frameworks like CORBA or J2EE?

Go, Go Go!

Rob Pike is one of the Google dudes who created the Go programming language, and he seems to be on a PR blitz to promote his new language. In this interview, “Does the world need another programming language?”, Mr. Pike says:

…the languages in common use today don’t seem to be answering the questions that people want answered. There are niches for new languages in areas that are not well-served by Java, C, C++, JavaScript, or even Python. – Rob Pike

In Making It Big in Software, UML co-creator Grady Booch seems to disagree with Rob:

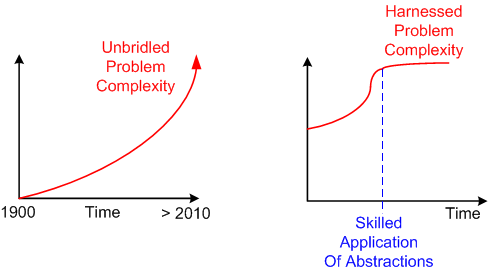

It’s much easier to predict the past than it is the future. If we look over the history of software engineering, it has been one of growing levels of abstraction—and, thus, it’s reasonable to presume that the future will entail rising levels of abstraction as well. We already see this with the advent of domain-specific frameworks and patterns. As for languages, I don’t see any new, interesting languages on the horizon that will achieve the penetration that any one of many contemporary languages has. I held high hopes for aspect-oriented programming, but that domain seems to have reached a plateau. There is tremendous need to for better languages to support massive concurrency, but therein I don’t see any new, potentially dominant languages forthcoming. Rather, the action seems to be in the area of patterns (which raise the level of abstraction). – Grady Booch

I agree with Grady because abstraction is the best tool available to the human mind for managing the explosive growth in complexity that is occurring as we speak. What do you think?

Abstraction is selective ignorance – Andrew Koenig

Continuous Turd Cleanup

Everyone knows that continuous refactoring of the source code is required to keep your code base clean. However, what about continuous cleanup of turd files? Do you iteratively do this unglamorous, janitorial task?

As you develop software and learn how to use one of the exotic new build systems like the autotools set, you will most likely find a bunch of project build files accumulating that are no longer needed. These files will clutter up your source control repository tree and frustrate project newcomers; starting them off with a “bad attitude” toward you and the other people who’ve “laid the groundwork” for them.

If you can’t find the time to diligently keep your source tree clean (“I am too busy!”), then you deserve what you get from your future teammates: disdain and disrespect.

WHAT WE HAVE DONE FOR OURSELVES ALONE DIES WITH US; WHAT WE HAVE DONE FOR OTHERS AND THE WORLD REMAINS AND IS IMMORTAL. – Dan Brown

Cleanliness And Understandability

While adding code to our component library framework yesterday, a colleague and I concocted a two-attribute quality system for evaluating source code: cleanliness and understandability.

When subjectively evaluating the cleanliness attribute of a chunk of code, we pretty much agree on whether it is clean or dirty. The trouble is our difference in evaluating understandability. My obfuscated is his simple. Bummer.

Anomalously Huge Discrepancies

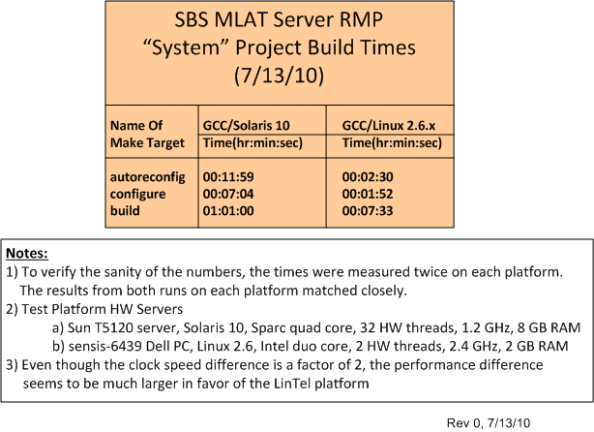

I’m currently having a blast working on the design and development of a distributed, multi-process, multi-threaded, real-time, software system with a small core group of seasoned developers. The system is being constructed and tested on both a Sparc-Solaris 10 server and an Intel-Ubuntu Linux 2.6.x server. As we add functionality and grow the system via an incremental, “chunked” development process, we’re finding anomalously huge discrepancies in system build times between the two platforms.

The table below quantifies what the team has been qualitatively experiencing over the past few months. Originally, our primary build and integration machine was the Solaris 10 server. However, we quickly switched over to the Linux server as soon as we started noticing the performance difference. Now, we only build and test on the Solaris 10 server when we absolutely have to.

The baffling aspect of the situation is that even though the CPU core clock speed difference is only a factor of 2 between the servers, the build time difference is greater than a factor of 5 in favor of the ‘nix box. In addition, we’ve noticed big CPU loading performance differences when we run and test our multi-process, multi-threaded application on the servers – despite the fact that the Sun server has 32, 1.2 GHz, hardware threads to the ‘nix server’s 2, 2.4 GHz, hardware threads. I know there are many hidden hardware and software variables involved, but is Linux that much faster than Solaris? Got any ideas/answers to help me understand WTF is going on here?

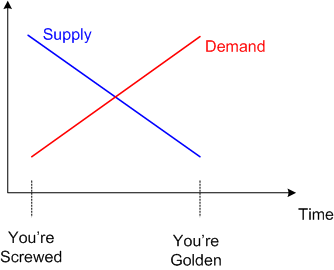

Highly Skilled

Be careful out there. If you acquire deep expertise and develop into a highly skilled worker in a narrow technical domain, you’re walking a tightrope. You may be highly valued by the marketplace today, but if your area of expertise becomes obsolete because of rapid technological change, your career may stall – or worse. On the other hand, if you luckily “choose” your narrow area of expertise correctly, your skillset may be in demand for life. It’s a classic textbook case of supply and demand.

In the software development arena, consider the ancient COBOL and C programming languages. Hundreds of millions of lines of code written in these languages are embedded in thousands of mission-critical systems deployed out in the world. These systems need to be continuously maintained and extended in order to keep their owners in business. History has repeatedly shown that the cost, schedule, and technical risks of updating big software systems written in these languages (or any other language) are huge. Thus, because of the large numbers of systems deployed and the fact that most software engineers leave those languages behind (and stigmatize them), the supply-demand curve will be in your favor if you stick solely to COBOL or C programming out of fear of change. The tradeoff is that you’ll spend your whole career in maintenance, and you’ll rarely, if ever, experience the thrill of developing brand new systems with your old skillset.