Archive

These Guys “Get It”

In the freely downloadable National Academies book, “Critical Code: Software Producibility for Defense”, the dudes who wrote the book “get it”. Check out this rather long snippet and pay close attention to the bolded sentences. If you dare, pay even closer attention to the snarky Bulldozer00 commentary highlighted in RED.

An additional challenge to the DoD is that the split between technical and management roles will result (has already resulted) in leaders who, on moving into management, face the prospect of losing technical excellence and currency over time. This means that their qualifications to lead in architectural decision making (and schedule making) may diminish unless they can couple project management with ongoing architectural leadership and technical engagement. The DoD does not (and legions of private enterprises don’t) have strong technical career paths that build on and advance software expertise with the exception of the service labs. Upward career progression trends leading closer to senior management-focused roles and further away from technical involvement tend to stress general management rather than technical management experience (well, duh! That’s the way status-centric command and control hierarchies are designed.). This is not necessarily the case in technology-intensive roles in industry (not necessarily, but still pervasively). Many (but not nearly enough) of the most senior leaders in the technology industry have technical backgrounds and continue to exercise technical roles and be engaged in technology strategy. Nonetheless, certain DoD software needs remain sufficiently complex and unique and are not covered by the commercial world, and therefore call for internal DoD software expertise. In the DoD, however, as software personnel take on more management responsibility, they have less opportunity and incentive to stay technically current (<- this “feature” is baked into command and control hierarchies where, of course, caste and who-reports-to-who is king – to hell with excellence and what sustains an enterprise’s health and profitability). At the same time, there is an increasing need for an acquisition workforce that has a strong understanding of the challenges in systems engineering and software-intensive systems development. It is particularly critical to have program managers who understand modern software development and systems (If that’s the case, then the DoD and most private enterprises are hosed. D’oh!).

Could it be that unelected, anointed “managers” in DoD and technology industry CLORGs and DYSCOs are still stuck in the 20th century FOSTMA mindset? You know, the UCB where they “feel” they are entitled to higher compensation and stature than the lower caste knowledge workers (architects, designers, programmers, testers, etc.) just because they occupy a higher slot in an anachronistic, and no longer applicable, way of life – no matter what the cost to the whole org’s viability.

In command and control hierarchies, almost everybody is a wanna-be:

“I wanna rise up to the next level so I’ll: make more money, have more freedom, be perceived as more important, and rule over the hapless dudes in my former level“. Nah, that’s not true. BD00 has been drinkin’ too many dirty, really really dirty, martinis.

Properties Of Interest

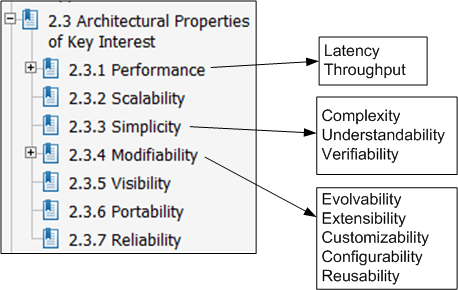

Roy Fielding‘s famous PhD thesis, “Architectural Styles and the Design of Network-based Software Architectures” introduces the REST (REpresentational State Transfer) architectural style for large-scale, distributed, hypermedia-based network applications.

In his thesis, Mr. Fielding defines the non-functional property set that he uses to evaluate various architectural styles against one another:

Of course, there is no universal set of “ilities” definitions that technical stakeholders use to reason about, and evaluate, software architectures. Plus, depending on the application domain one is immersed in, some “ilities” are more important than others. Nevertheless, Mr. Fielding’s set is as good as any other that I’ve seen to date.

When I look at someone’s personal list of “ilities”, I sometimes discover at least one that I’ve never seen before. In Mr. Fielding’s list, the “visibility” property is one such “ility”. Here’s Mr. Fielding’s definition:

Visibility… refers to the ability of a component to monitor or mediate the interaction between two other components.

In a “distributed hypermedia systems” application like the www, visibility impacts several other properties as follows:

Visibility can enable improved performance via shared caching of interactions, scalability through layered services, reliability through reflective monitoring, and security by allowing the interactions to be inspected by mediators (e.g., network firewalls).

I wonder what my next “ility” discovery will be?

Complicated != Complex

For the non-geeks reading this post, the “!=” symbol is the C++ programming language token for “not equal“.

It seems like a lot of people think that classifying something as “complex” is the same as calling it “complicated”, and vice-versa. That conclusion can be, and often is, true, but it can also be false. I associate “complicated” with “not-understandable” – except to a select few experts. I think of “complex” as the equivalent of something like “intricately elegant” and understandable to far more people than just experts.

Let’s take an example to illuminate my viewpoint. Assume that the black box system below functions delightfully. It’s reliable, responsive, easy to learn, and does what its users want without frustrating them in the slightest.

Now, in terms of complicated and complex, consider what the system may look like under the covers:

Of course, most users don’t give a shite what goes on under the covers, but the designing org and its people had better know what does – unless they luckily don’t have any competition to deal with and, hence, have their customers in a vise grip.

You see, at some point in time, the users will want improvements to the system as their needs evolve. If the original team of builders of implementation #1 are the only people who know the (so-called) design well enough to change it without breaking any existing capabilities, then the development org is hosed if those people leave. In effect, the org is held hostage by a small cadre of people. D’oh!

In the complex-complex implementation on the far right, even if the original builders leave the development org, the (relatively) elegant and well thought out design structure facilitates easy on-boarding of replacement builders. As an added bonus, adding features and enhancements to the product is way less costly and risky than it is with the other jaggedly complicated implementations.

So, given the portfolio of products in your org, how would you assess them in terms of the complexity and complicated attributes? If, and it’s probably a big IF, you could publicly communicate your assessment without fear of marginalization, or worse, how many people in your org do you think would publicly agree with your assessment? Uh, how about privately? Would the number of public “agreers” match the number of private “agreers”?

Process, Passion, And Quality

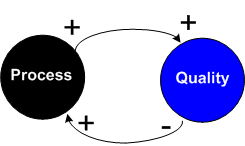

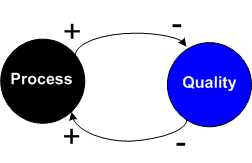

Naive managers (usually those who get drunk on large quantities of 6-sigma, CMMI, ISO-90XX, EVM, and/or PMP kool aid) tend to think of the correlation between process and quality like this:

This cause-effect diagram can be read as “more process imposition leads to more quality; less quality leads to more process imposition“. What’s missing in this simplistic diagram? Could it be something that represents the human element?

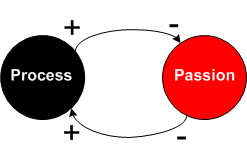

In the blarticle, “Process kills developer passion“, James Turner writes about the human element of “passion“:

…passionate programmers write great code, but process kills passion. Disaffected programmers write poor code, and poor code makes management add more process in an attempt to “make” their programmers write good code. That just makes morale worse, and so on.

If you believe Mr. Turner, then the cause-effect diagram for process and passion is a self-reinforcing loop that may snuff out passion over time:

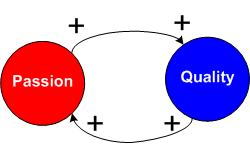

So, what about the relationship between passion and quality? I think that many would agree that it is thus:

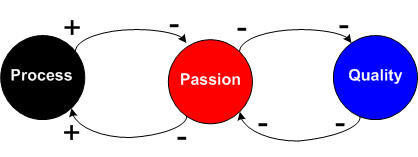

When we integrate the two models above, we get….

Moving from left to right, and then from right to left we read that:

an increase in process triggers a decrease in passion, which triggers a decrease in quality, which triggers a further decrease in passion, which triggers an increase in process imposition. Round and round we go.

If we assume that “passion” is an integral player in the system, but hide it in the above diagram to simulate a common managerial blindspot, the end to end process-quality cause-effect diagram emerges as:

If we compare this derived result to the first naive manager mental model which doesn’t include the messy “passion” element, what’s the difference?
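One way to check the comparison is to treat each arrow as a polarity and multiply the polarities along a path. The sketch below is only my reading of the loop as described in the text, not a reproduction of the diagrams: +1 means the effect moves in the same direction as the cause, -1 means it moves in the opposite direction, and the names `LINKS`, `path_polarity`, and `describe` are made up for illustration.

```python
# Assumed link polarities, read off the text above:
# +1 = effect moves with the cause, -1 = effect moves against it.
LINKS = {
    ("process", "passion"): -1,  # more process -> less passion
    ("passion", "quality"): +1,  # less passion -> less quality
    ("quality", "passion"): +1,  # less quality -> less passion
    ("passion", "process"): -1,  # less passion -> more process
}


def path_polarity(path):
    """Multiply the link polarities along a chain of nodes."""
    sign = 1
    for cause, effect in zip(path, path[1:]):
        sign *= LINKS[(cause, effect)]
    return sign


def describe(cause, effect, sign):
    """Render a polarity as a 'more X -> more/less Y' statement."""
    trend = "more" if sign > 0 else "less"
    return f"more {cause} -> {trend} {effect}"


# Hide "passion" and look only at the end-to-end relationships.
forward = path_polarity(["process", "passion", "quality"])
backward = path_polarity(["quality", "passion", "process"])
loop = path_polarity(["process", "passion", "quality", "passion", "process"])

print(describe("process", "quality", forward))
print(describe("quality", "process", backward))
print("whole loop is", "self-reinforcing" if loop > 0 else "self-correcting")
```

Under these assumed polarities, the backward link matches the naive model (less quality still brings more process), while the forward link comes out with the opposite sign.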

Centralized, Federated, Decentralized

1 Prelude

A colleague on LinkedIn.com pointed me toward this Doug Schmidt, et al, paper: “Evaluating Technologies for Tactical Information Management in Net-Centric Systems“. In it, Doug and crew qualitatively (scalability, availability, configurability) and quantitatively (latency, jitter) evaluate three different architectural implementations of the Object Management Group‘s (OMG) Data Distribution Service (DDS): centralized, federated, and decentralized.

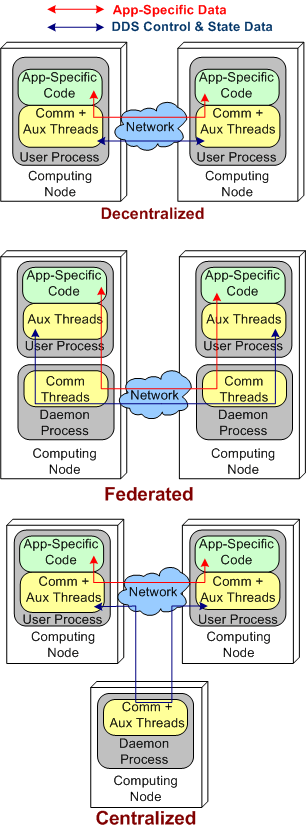

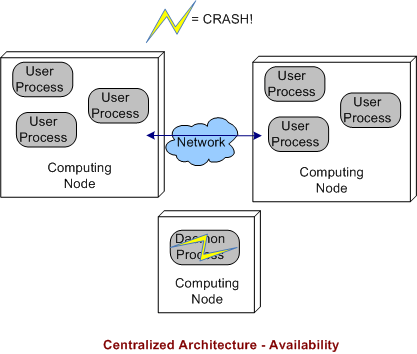

The stacked trio of figures below model the three DDS architecture types. They’re slightly enhanced renderings of the sketches in the paper.

2 Quantitative Comparisons

DDS was specifically designed to meet the demanding latency and jitter (the standard deviation of latency) performance attributes that are characteristic of streaming, Distributed Real-time and Embedded (DRE) systems like defense and air traffic control radars. Unlike in most client-server, request-response systems, if data required for human or computer decision-making is not made available in a timely fashion in these systems, people could die. It’s as simple and potentially horrible as that.
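To make the two metrics concrete, here is a minimal sketch that computes mean latency and jitter (taken, as above, to be the standard deviation of latency) for two sets of samples. The sample values and the `latency_stats` helper are made up for illustration; they are not measurements from the paper.

```python
import statistics


def latency_stats(latencies_us):
    """Return (mean latency, jitter), where jitter is the standard deviation."""
    mean = statistics.fmean(latencies_us)
    jitter = statistics.pstdev(latencies_us)
    return mean, jitter


# Made-up per-message latencies in microseconds.
steady = [100.0, 102.0, 98.0, 101.0, 99.0]
bursty = [60.0, 140.0, 70.0, 150.0, 80.0]

for name, samples in (("steady", steady), ("bursty", bursty)):
    mean, jitter = latency_stats(samples)
    print(f"{name}: mean={mean:.1f} us, jitter={jitter:.1f} us")
```

Both sample sets have the same mean latency, so only the jitter figure tells them apart. That's why the two numbers are reported separately.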

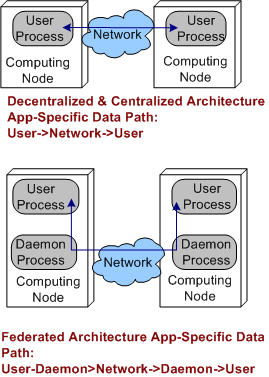

Applying the systems thinking idiom of “purposeful, selective ignorance“, the pics below abstract away the unimportant details of the pics above so that the architecture types can be compared in terms of latency and jitter performance.

By inspecting the figures, it’s a no-brainer, right? The steady-state latency and jitter performance of the decentralized and centralized architectures should exceed that of the federated architecture, because in those two there is no “middleman” daemon for application layer data messages to pass through.

Sure enough, on their two node test fixture (I don’t know why they even bothered with the one node fixture since that really isn’t a “distributed” system in my mind), the Schmidt et al. measurements indicate that the latency/jitter performance of the decentralized and centralized architectures exceeds that of the federated architecture. The performance difference that they measured was on the order of 2X.
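The “middleman” argument boils down to counting the processes that sit in the data path. The sketch below writes that down under my own assumptions: the process names are hypothetical, and I’m assuming that a federated message is relayed by the daemon on both the publishing and the subscribing node. It says nothing about the size of the gap, only about which style has relays at all.

```python
# Assumed data paths for one message between a publisher and a subscriber
# running on different nodes. Process names are hypothetical.
DATA_PATHS = {
    "decentralized": ["publisher", "subscriber"],
    # The central daemon is assumed to stay out of the data path.
    "centralized": ["publisher", "subscriber"],
    # Each node's daemon is assumed to relay the message.
    "federated": ["publisher", "daemon@pub_node", "daemon@sub_node", "subscriber"],
}


def relay_count(architecture):
    """Number of intermediary processes a message passes through."""
    return len(DATA_PATHS[architecture]) - 2


for architecture in DATA_PATHS:
    print(f"{architecture}: {relay_count(architecture)} relay(s) in the data path")
```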

3 Qualitative Comparisons

In all distributed systems, both DRE and Client-Server types, achieving high operational availability is a huge challenge. Hell, when the system goes bust, fuggedaboud the timeliness of the data, no freakin’ work can get done and panic can and usually does set in. D’oh!

With that scary aspect in mind, let’s look at each of the three architectures in terms of their ability to withstand faults.

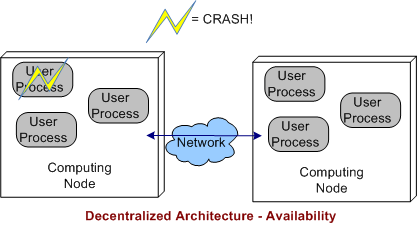

3.1 Decentralized Architecture Availability

In a decentralized architecture, there are no invasive daemons that “leak” into the application plane so we can’t talk about daemon crashes. Thus, right off the bat we can “arguably” say that a decentralized architecture is more resistant to faults than the federated or centralized architectures.

As the picture below shows, when a user application layer process dies, the others can continue to communicate with each other. Depending on what the specific application is required to do during operation, at least some work may be able to still get accomplished even though one or more app components go kaput.

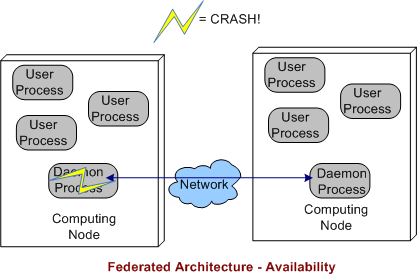

3.2 Federated Architecture Availability

In a federated architecture, when a daemon process dies, a whole node and all the subscriber application user processes running on it are severed from communicating with the user processes running on the other nodes (see the sketch below) in the system. Thus, the federated architecture is “arguably” less fault tolerant than the decentralized and (as we’ll see) centralized architectures. However, through judicious “allocation” of user processes to nodes (the fewer the better – which sort of defeats the purpose of choosing a federation for per node intra-communication performance optimization), some work still may be able to get accomplished when a node’s daemon crashes.

If the node daemons stay viable but a user application dies, then the behavior of a federated architecture, DDS-based system is the same as that of a decentralized architecture.

3.3 Centralized Architecture Availability

Finally, we come to the robustness of a centralized DDS architecture. As shown below, since the single daemon overlord in the system is not (or should not be) involved in inter-process application layer data communications, if it crashes, then the system can continue to do its full workload. When a user process crashes instead of, or in addition to, the daemon, then the system’s behavior is the same as a decentralized architecture.
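The three availability arguments can be replayed with a toy model: a message gets through only if every process on its data path is alive. Everything in the sketch below is an assumption for illustration (the three-node deployment, the process names, and the data paths as I read them from the descriptions above), and it only looks at cross-node traffic.

```python
from itertools import permutations

# Hypothetical deployment: three nodes, two application processes on each.
APPS = {
    "a1": "nodeA", "a2": "nodeA",
    "b1": "nodeB", "b2": "nodeB",
    "c1": "nodeC", "c2": "nodeC",
}


def data_path(architecture, src, dst):
    """Processes a cross-node message is assumed to traverse from src to dst."""
    if architecture == "federated":
        return [src, f"daemon@{APPS[src]}", f"daemon@{APPS[dst]}", dst]
    # Decentralized: no daemons. Centralized: daemon is outside the data path.
    return [src, dst]


def surviving_links(architecture, crashed):
    """Cross-node (src, dst) pairs whose whole data path is still alive."""
    crashed = set(crashed)
    links = []
    for src, dst in permutations(APPS, 2):
        if APPS[src] == APPS[dst]:
            continue  # same-node traffic is not modeled in this sketch
        if crashed.isdisjoint(data_path(architecture, src, dst)):
            links.append((src, dst))
    return links


scenarios = [
    ("decentralized", {"a1"}),
    ("federated", {"daemon@nodeA"}),
    ("federated", {"a1"}),
    ("centralized", {"daemon@central"}),
]
for architecture, crashed in scenarios:
    alive = len(surviving_links(architecture, crashed))
    print(f"{architecture}, crashed={sorted(crashed)}: {alive} of 24 links alive")
```

In this model a crashed application costs the same number of links in every style, a crashed federated daemon takes its whole node’s cross-node links with it, and a crashed central daemon costs none.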

4 BD00 Commentary

Because he works on data streaming DRE radar systems, Jimmy likes, I mean BD00 likes, the DDS pub-sub architectural style over broker-based, distributed communication technologies like C/S CORBA and JMS queues. It should be obvious that the latter technologies are not a good match for high availability and low latency DRE applications. Thus, trying to jam fit a new DRE application into a CORBA or JMS communication platform “just because we have one” is a dumb-ass thing to do and is sure to lead to high downstream maintenance costs and a quicker route to archeosclerosis.

Within the DDS space, BD00 prefers the decentralized architecture over the federated and centralized styles because of the semi-objective conclusions arrived at and documented in this post.

Seven Unsurprising Findings

In the National Academies Press’s “Summary of a Workshop for Software-Intensive Systems and Uncertainty at Scale”, the Committee on Advancing Software-Intensive Systems Producibility lists 7 findings from a review of 40 DoD programs.

- Software requirements are not well defined, traceable, and testable.

- Immature architectures; integration of commercial-off-the-shelf (COTS) products; interoperability; and obsolescence (the need to refresh electronics and hardware).

- Software development processes that are not institutionalized, have missing or incomplete planning documents, and inconsistent reuse strategies.

- Software testing and evaluation that lacks rigor and breadth.

- Lack of realism in compressed or overlapping schedules.

- Lessons learned are not incorporated into successive builds—they are not cumulative.

- Software risks and metrics are not well defined or well managed.

Well gee, do ya think they missed anything? What I’d like to know is what, if anything, they found right with those 40 programs. Anything? Maybe that would help more than ragging on the same issues that have been ragged on for 40 years.

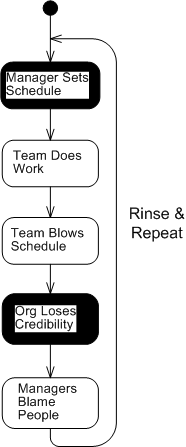

My fave is number five (with number 1 a close second). When schedules concocted by non-technical managers without any historical backing or input from the people who will be doing the work are publicly promised to customers, how can anyone in their right mind assert that they’re “realistic“? The funny thing is, it happens all the time with nary a blink – until the fit hits the shan, of course. D’oh!

Meeting schedules based on historically tracked data and input from team members is challenging enough, but casting an unsubstantiated schedule in stone without an explicit policy of periodically reassessing it on the basis of newly acquired knowledge and learning as a project progresses is pure insanity. Same old, same old.

I love deadlines. I like the whooshing sound they make as they fly by. – Douglas Adams

Pegged

Peg, It will come back to you – Steely Dan

Assume that as a sub-task of redesigning a BBoM chunk of legacy software for scalability (single thread to multiple threads), you have to “do something” with a subset of high performance, but computationally dense and tricky, C procedural code. Let’s call the functionality implemented by this code “peg”.

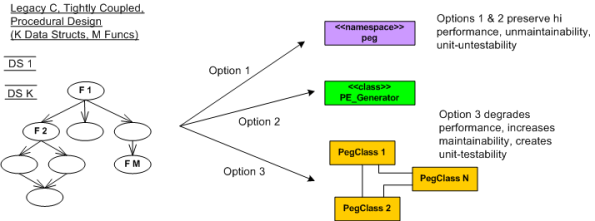

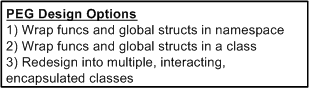

In the figure below, I model the procedural mess that is “peg” on the left as an M function call tree in which many of the functions perform CRUD accesses on a set of K interrelated global data structures. Trust me when I say that K and M are non-trivial, double digit numbers. D’oh!

The way I see it, I have three choices for attacking the monolith:

Options 1 and 2 are slightly different instances of the ultra-conservative “sarcophagus” pattern (remember Chernobyl?). Option 3, which is technically riskier, higher latency, and higher development cost in the short term, will definitely pay off in terms of lower maintenance costs and lower developer anxiety in the long term – if done correctly!

If it were my decision alone (and it should be since I’ll be doing all the coding/testing/documenting/defending/“owning”, no?), I’d choose option 3 without blinking an eye. I wouldn’t blink an eye because I know that “peg” will continue to need to be extended and enhanced for many years into the future – and maintenance costs far exceed initial development costs in all software product life cycles. But alas, I’m just a dumb engineer with no business sense.

Requirements Stability

Over the years, I’ve been assigned to the roles of specifier, designer, documenter, writer, and maintainer of source code for radar sensor systems that are used in safety-critical applications. These sensors get deployed in noisy, interference-infested environments and they must perform at high levels of availability and with great fidelity.

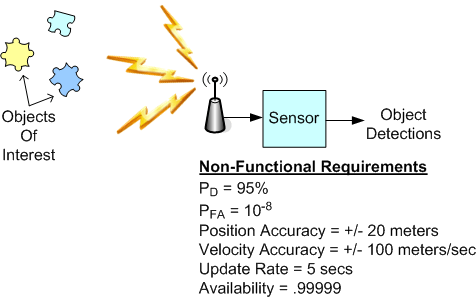

The figure below shows a generic sensor system context diagram along with some typical non-functional requirements (with made-up values) that are critical for customer acceptance. My experience has indicated that once these black-box level requirements are specified, they rarely change. Thus, the agile war cry to continuously “embrace requirements change” may not fully apply to the development of this class of systems, no?

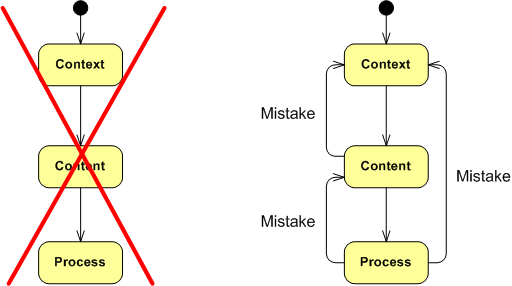

The point I’m trying to make here is to be wary of morphing into a lap-dog zealot for any technique, process, method, or practice – which includes the hallowed “agile” brand. For a long time, my motto (thanks to the work of W. L. Livingston and John Warfield) has been: Context, Content, and then Process (CCP). Synthesize an understanding of the problem context, design the content of the solution (structure and behavior), and only then design the solution construction process – tailored to the context and content. Of course, since mistakes and errors will be made during the journey, backtracking and iterative convergence are expected. Thus, “embrace mistakes, errors, backtracking, and iteration” is my war cry. What’s yours? What’s your org’s?

Traceability Woes

For safety-critical systems deployed in aerospace and defense applications where people’s lives may be at stake, traceability is often talked about but seldom done in a non-superficial manner. Usually, after talking up a storm about how diligent the team will be in tracing concrete components like source code classes/functions/lines and hardware circuits/boards/assemblies up the spec tree to the highest level of abstract system requirements, the trace structure that ends up being put in place is often “whatever we think we can get by with for certification by internal and external regulatory authorities”.

I don’t think companies and teams willfully and maliciously screw up their traceability efforts. It’s just that the pragmatics of diligently maintaining a scrutable traceability structure from ground zero back up into the abstract requirements cloud gets out of hand rather quickly for any system of appreciable size and complexity. The number of parts, types of parts, interconnections between parts, and types of interconnections grows insanely large in the blink of an eye. Manually creating, and more importantly, maintaining, full bottom-to-top traceability evidence in the face of the inevitable change onslaught that’s sure to arise during development becomes a huge problem that nobody seems to openly acknowledge. Thus, “games” are played by both regulators and developers to dance around the reality and pass audits. D’oh!

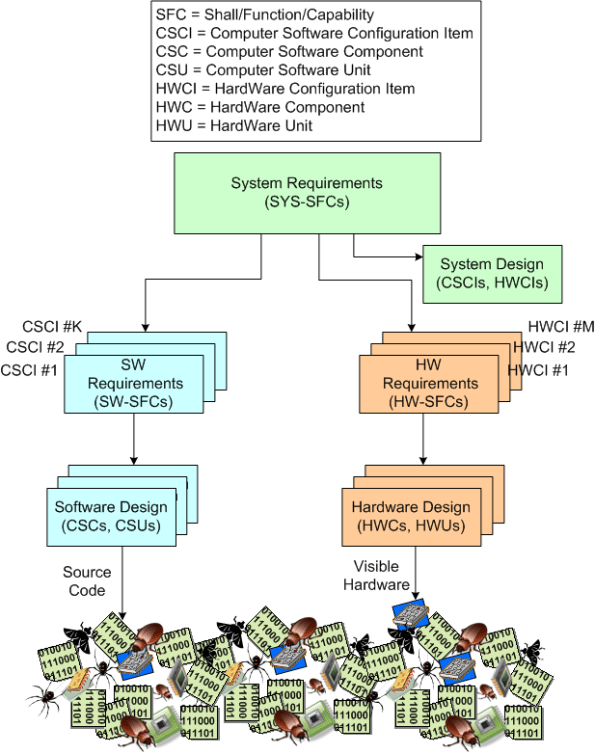

To illustrate the difficulty of the traceability challenge, observe the specification tree below. During the development, the tree (even when agile and iterative feedback loops are included in the process) grows downward and the number of parts that comprise the system explodes.

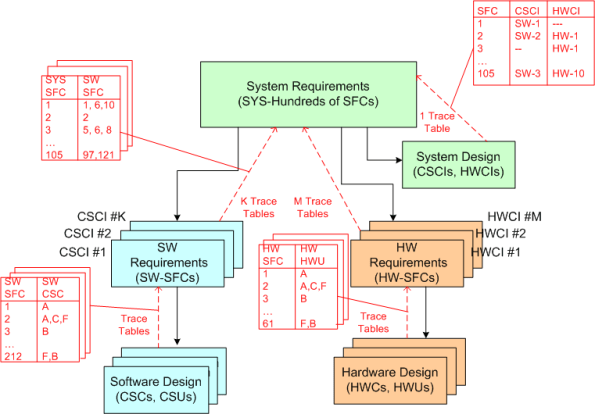

In “theory”, as the tree expands, multiple traceability tables are rigorously created in pay-as-you-go fashion while the info, knowledge, and understanding are still fresh in the minds of the developers. When “done” (<- LOL!), an inverse traceability tree with explicit trace tables connecting levels like the example below is supposed to be in place so that any stakeholder can be 100% certain that the hundreds of requirements at the top have been satisfied by the thousands of “cleanly” interconnected parts on the bottom. Uh yeah, right.
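For a feel of what those trace tables have to answer, here is a toy sketch with two made-up tables linking three levels of a spec tree. All the IDs, file names, and the `parts_for` and `audit` helpers are hypothetical. The audit asks the two questions an inspector would care about: which requirements trace down to no concrete part, and which parts trace up to no requirement.

```python
# Hypothetical trace tables. Each maps an item to the items one level
# down the spec tree that are claimed to implement it.
REQ_TO_DESIGN = {
    "SYS-1": ["DES-1", "DES-2"],
    "SYS-2": ["DES-3"],
    "SYS-3": [],  # nobody traced this one
}
DESIGN_TO_PART = {
    "DES-1": ["tracker.cpp"],
    "DES-2": [],  # design element with no parts yet
    "DES-3": ["filter.cpp", "fpga_board"],
}
ALL_PARTS = {"tracker.cpp", "filter.cpp", "fpga_board", "debug_hack.cpp"}


def parts_for(requirement):
    """Follow the trace tables from one requirement down to concrete parts."""
    parts = set()
    for design_item in REQ_TO_DESIGN.get(requirement, []):
        parts.update(DESIGN_TO_PART.get(design_item, []))
    return parts


def audit():
    """Return (requirements with no parts, parts with no requirement)."""
    unsatisfied = sorted(r for r in REQ_TO_DESIGN if not parts_for(r))
    traced = set().union(*(parts_for(r) for r in REQ_TO_DESIGN))
    orphans = sorted(ALL_PARTS - traced)
    return unsatisfied, orphans


unsatisfied, orphans = audit()
print("requirements with no parts:", unsatisfied)
print("parts with no requirement:", orphans)
```

With three requirements and four parts the check is trivial. The pain described above comes from keeping tables like these accurate as the hundreds of requirements and thousands of parts keep changing.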

SysML Support For Requirements Modeling

“To communicate requirements, someone has to write them down.” – Scott Berkun

Prolific author Gerald Weinberg once said something like: “don’t write about what you know, write about what you want to know”. With that in mind, this post is an introduction to the requirements modeling support that’s built into the OMG’s Systems Modeling Language (SysML). Well, it’s sort of an intro. You see, I know a little about the requirements modeling features of SysML, but not a lot. Thus, since I “want to know” more, I’m going to write about them, damn it! 🙂

SysML Requirements Support Overview

Unlike the UML, which was designed as a complexity-conquering job performance aid for software developers, the SysML profile of UML was created to aid systems engineers during the definition and design of multi-technology systems that may or may not include software components (but which interesting systems don’t include software?). Thus, besides the well known Use Case diagram (which was snatched “as is” from the UML) employed for capturing and communicating functional requirements, the SysML defines the following features for capturing both functional and non-functional requirements:

- a stereotyped classifier for a requirement

- a requirements diagram

- six types of relationships that involve a requirement on at least one end of the association.

The Requirement Classifier

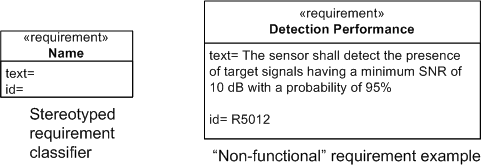

The figure below shows the SysML stereotyped classifier model element for a requirement. In SysML, a requirement has two properties: a unique “id” and a free form “text” field. Note that the example on the right models a “non-functional” requirement – something a use case diagram wasn’t intended to capture easily.

One purpose for capturing requirements in a graphic “box” symbol is so that inter-box relationships can be viewed in various logically “chunked“, 2-dimensional views – a capability that most linear, text-based requirements management tools are not at all good at.

Requirement Relationships

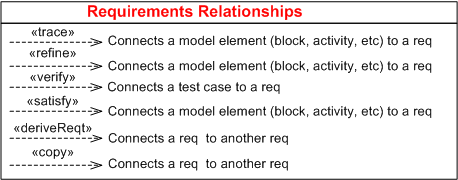

In addition to the requirement classifier, the SysML enumerates 6 different types of requirement relationships:

A SysML requirement modeling element must appear on at least one side of these relationships with the exception of <<deriveReqt>> and <<copy>>, which both need a requirement on both sides of the connection.
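The rule above is easy to state as a check. The sketch below is not SysML tooling, just plain Python with made-up class and function names. It models a requirement with the two properties mentioned earlier (“id” and “text”) and tests which ends of a relationship are requirements. The post itself names only <<deriveReqt>> and <<copy>>; the other four stereotype names in `RELATIONSHIPS` are my assumption about what the six are.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    """A requirement with the two properties described above."""
    id: str
    text: str


@dataclass(frozen=True)
class ModelElement:
    """Any non-requirement element, such as a block or a test case."""
    name: str


# Assumed set of six stereotypes; only deriveReqt and copy are named in the post.
RELATIONSHIPS = {"deriveReqt", "copy", "satisfy", "verify", "refine", "trace"}
REQUIREMENT_ON_BOTH_ENDS = {"deriveReqt", "copy"}


def is_well_formed(kind, client, supplier):
    """Check the 'requirement on at least one end / on both ends' rule."""
    if kind not in RELATIONSHIPS:
        raise ValueError(f"unknown relationship: {kind}")
    ends = [isinstance(client, Requirement), isinstance(supplier, Requirement)]
    if kind in REQUIREMENT_ON_BOTH_ENDS:
        return all(ends)
    return any(ends)


# Made-up example elements.
range_req = Requirement("R1", "The sensor shall detect targets out to 100 km.")
power_req = Requirement("R1.1", "The transmitter shall radiate at least 10 kW.")
transmitter = ModelElement("Transmitter")

print(is_well_formed("deriveReqt", power_req, range_req))
print(is_well_formed("satisfy", transmitter, power_req))
print(is_well_formed("copy", transmitter, range_req))
```

The first two relationships pass the check; the third fails because a <<copy>> needs a requirement on both ends.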

Rather than try to write down semi-formal definitions for each relationship in isolation, I’m gonna punt and just show them in an example requirement diagram in the next section.

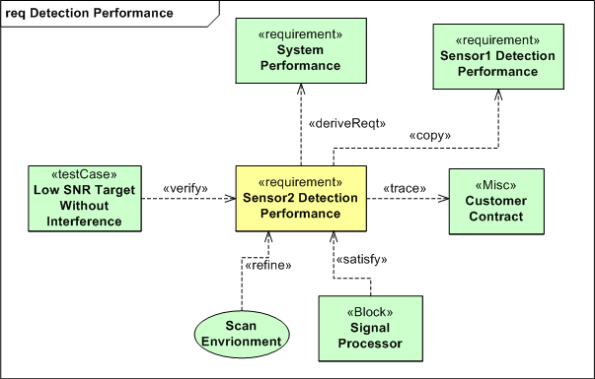

The Requirement Diagram

The figure below shows all six requirement relationships in action on one requirement diagram. Since I’ve spent too much time on this post already (a.k.a. I’m lazy) and one of the goals of SysML (and other graphical modeling languages) is to replace lots of linear words with 2D figures that convey more meaning than a rambling 1D text description, I’m not going to walk through the details. So, as Linda Richman says, “tawk amongst yawselves“.

References

1) A Practical Guide to SysML: The Systems Modeling Language – Sanford Friedenthal, Alan Moore, Rick Steiner

2) Systems Engineering with SysML/UML: Modeling, Analysis, Design – Tim Weilkiens