Archive

“As-Built” Vs “Build-To”

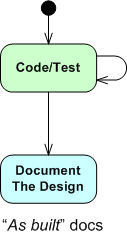

The figure below shows one (way too common) approach for developing computer programs. From an “understanding” in your head, you dive straight into the coding state and stay there until the program is “done”. When it finally is “done”: 1) you load the code into a reverse engineering tool, 2) you press a button, and, voila, 3) your program’s “As-Built” documentation is generated.

For trivial programs where you can hold the entire design in your head, this technique can be efficient and can work quite well. However, for non-trivial programs, it can easily lead to unmaintainable BBoMs (Big Balls of Mud). The problem is that the design stays “buried” in the code until after the fact, when it is finally exposed for scrutiny via the auto-generated “as-built” documentation.

With a dumb-ass reverse engineering tool that doesn’t “understand” context or where the pain points in a design are, the auto-generated documentation is often an overly detailed, unintelligible camouflage in which a reviewer/maintainer can’t see the forest for the trees. But hey, you can happily tick off the documentation item on your process checklist.

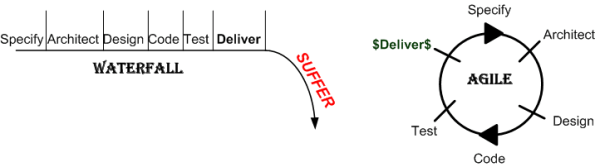

Two alternative, pay-as-you-go (paygo) types of development approaches are shown below. During development, the “build-to” design documentation and the code are produced cohesively, by hand. Only the important design constructs are recorded, so they aren’t buried in a mass of detail and can be scrutinized/reviewed/error-corrected in real time during development, not just after the fact.

I find that I learn more from the act of doing the documentation than from pushing an “auto-generate” button after the fact. During the effort, the documentation often speaks to me: “there’s something wrong with the design here, fix me”.

Design is an intimate act of communication between the creator and the created – Unknown

Of course, for developers, especially one-dimensional extreme agilista types who have no desire to “do documentation” or learn UML, the emergence of reverse engineering tools has been a godsend. Bummer for the programmer, the org he/she works for, the customer, and the code.

Deliverance

Alarmin’ Larman

Recently, I listened to an interview with Craig Larman on the topic of “Scaling Scrum To Large Organizations”.

Here is what I liked about Mr. Larman’s talk:

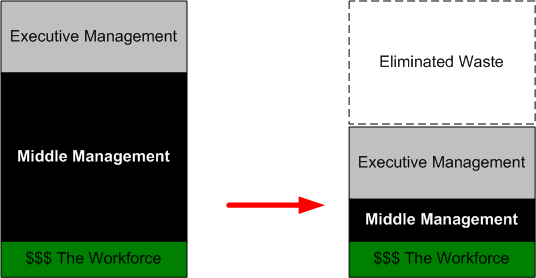

- He didn’t use the term “management”. He repeatedly used the term “overhead management” to emphasize the wastefulness of maintaining these self-important middle org layers.

- He predicted that the dream of retaining high-paying “spreadsheet and Microsoft Project overhead management jobs” in developed countries, designed to watch over subservient code monkeys in low-labor-rate, third-world countries, would not come to fruition.

- He predicted that the wholesale adoption of agile processes like “Scrum” and “Lean” would lead to the demise of the “project manager” and “program manager” roles in software projects.

- He recommended ignoring ISO and CMMI certifications and assessments. Roll up your sleeves and directly read/inspect the source code of any software company you’re considering partnering with or buying from.

There is one thing that bothered me about his talk. I’m not a Scrum process expert, but I don’t understand the difference between the cute-sounding “Scrum Coach/Scrum Master” roles and the more formal-sounding “Project Manager/Program Manager” roles. Even assuming that there’s a huge difference, I can foresee clever overhead managers donning the mask of “Scrum Master” and still behaving as self-important, know-it-all, command-and-control freaks. Of course, they can only get away with preserving the unproductive status quo (while espousing otherwise) if the executives they work for are clueless dolts.

If you’re so inclined, give the talk a listen and report your thoughts back here.

Findings And Recommendations

The National Academy of Sciences is a private, nonprofit, self-perpetuating society of distinguished scholars engaged in scientific and engineering research, dedicated to the furtherance of science and technology and to their use for the general welfare. Upon the authority of the charter granted to it by the Congress in 1863, the Academy has a mandate that requires it to advise the federal government on scientific and technical matters.

Via the publicly funded National Academies mailing list, I found out that the book “Critical Code: Software Producibility for Defense” was available for free pdf download. Despite being committee written, the book is chock full of good “findings” and “recommendations” that are applicable not only to the DoD but also to laggard commercial companies.

Not surprisingly, because of the exponentially growing size of software-centric systems and the need for interoperability, “architecture” plays a prominent role in the book. Here are some of my favorite committee findings and recommendations:

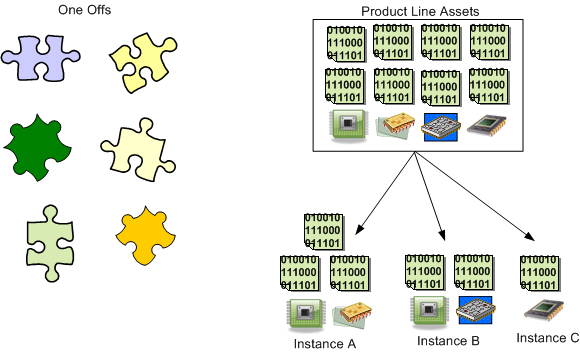

Finding 3-1: Industry leaders attend to software architecture as a first-order decision, and many follow a product-line strategy based on commitment to the most essential common software architectural elements and ecosystem structures.

Finding 3-2: The technology for definition and management of software architecture is sufficiently mature, with widespread adoption in industry. These approaches are ready for adoption by the DoD, assuming that a framework of incentives can be created in acquisition and development efforts.

Recommendation 3-2: This committee reiterates the past Defense Science Board recommendations that the DoD follow an architecture driven acquisition strategy, and, where appropriate, use the software architecture as the basis for a product-line approach and for larger-scale systems potentially involving multiple lead contractors.

The DoD-funded Software Engineering Institute, located at Carnegie Mellon University, has produced a lot of good work on software product lines. Jan Bosch’s “Design and Use of Software Architectures: Adopting and Evolving a Product-Line Approach” and Chapters 14 and 15 in Bass, Clements, and Kazman’s “Software Architecture in Practice” are excellent sources of information. The stunning CelsiusTech case study really drove the point home for me.

My Erlang Learning Status – IV

I haven’t progressed at all toward my previously stated goal of learning how to program idiomatically in Erlang. I’m still at the same point in the two books (“Erlang And OTP In Action”; “Erlang Programming”) that I’m using to learn the language, and I’m finding it hard to pick them up and move forward.

I’m still a big fan (from afar) of Erlang and the Erlang community, but my initial excitement over discovering this terrific language has waned quite a bit. I think it’s because:

- I work in C++ every day

- C++11 is upon us and learning it has moved up to number 1 on my priority list.

- There are no Erlang projects in progress or in the planning stages where I work. Most people don’t even know the language exists.

Because of the excuses, uh, reasons above, I’ve lowered my expectations. I’ve changed my goal from “learning to program idiomatically” in Erlang to finishing reading the two terrific books that I have at my disposal.

Note: If you’re interested in reading my previous Erlang learning status reports, here are the links:

Doc Maturity?

In his classic, highly referenced, but much-vilified paper, “Managing The Development Of Large Software Systems”, Winston Royce says:

Occasionally I am called upon to review the progress of other software design efforts. My first step is to investigate the state of the documentation. If the documentation is in serious default my first recommendation is simple. Replace project management. (D’oh!)

Before going further, let me establish the “context” of the commentary that follows so that I can make a feeble attempt to divert attacks from any extremist agilistas who may be reading this post. Like Mr. Royce, I will be talking about computationally intensive software intended for use in these and other closely related application domains:

spacecraft mission planning, commanding, and post-flight analysis

Ok, are we set to move on with this shared contextual understanding? Well then, let’s go!

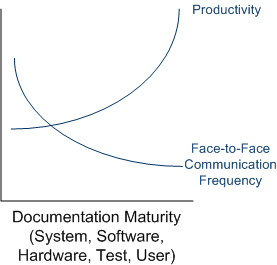

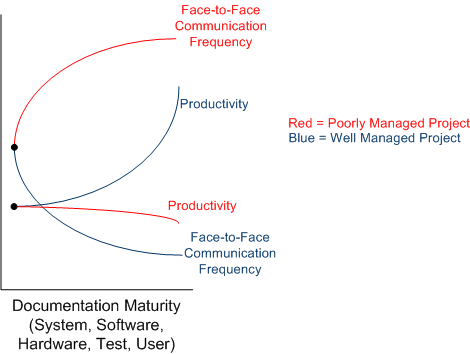

The figure below shows what should happen on a well-run, large, defense/aerospace industry system development project. As the recorded info artifact set matures (see the post-post note below), productivity increases and the need for face-to-face communication decreases (but never drops to zero, as it does in the “over-the-wall” syndrome).

Using this graph as a reference point, here’s what often happens, no?

On big, complex projects, mature but incomplete/inconsistent/ambiguous documentation tends to cause an unmanageable increase in face-to-face communication frequency (and decrease in face-to-face communication quality) as schedule-pressured people frantically try to figure out what needs to be built and what elements they’re personally responsible for creating, building, and integrating into the beast. Since more and more time is spent communicating inefficiently, less time is available for productive work. In the worst case, productivity plummets to zero and all subsequent investment dollars go down the rat hole…. until the project “leaders” figure out how to terminate the mess without losing face.

Note: Since “maturity” normally implies “high quality”, and that’s not what I’m trying to convey on the x axis, I tried to concoct a more meaningful x axis label. “Documentation Quantity” came to mind, but that doesn’t fit the bill either because it would imply that quantity == quality in the first reference graph. Got any suggestions for improvement?

CMMI Insanity

This CMMI stuff is getting absurd. There seems to be a CMMI sub-category for everything now: CMMI-DEV, CMMI-Services, CMMI-Acquisition, CMMI-Integration, CMMI-People…. yada yada, yada. It’s typical bureaucratic bloat that causes companies to spend precious money and time on impressing hot-shot, non-doer auditors while simultaneously dropping boat anchors on their teams…. instead of creating products. Some contracts require a minimum level of assessment by an external rating agency in order to win the job.

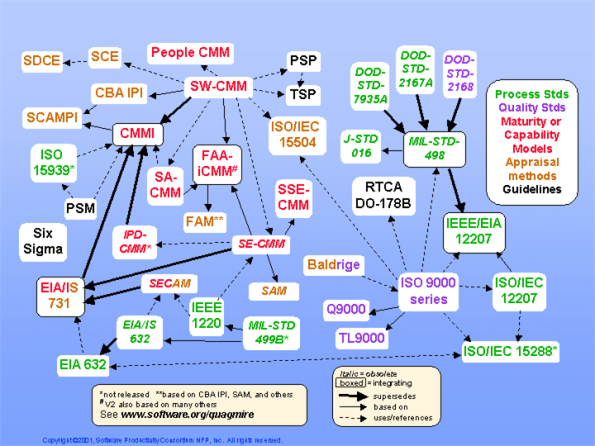

Let’s crank it up a notch and see who else knows “what’s good for everybody”. For your viewing displeasure, I present the quag(mire) map:

Which certification/assessment bureaucratic borgs hold your company hostage by demanding a ransom in return for a favorable assessment?

Distributed Functions, Objects, And Data

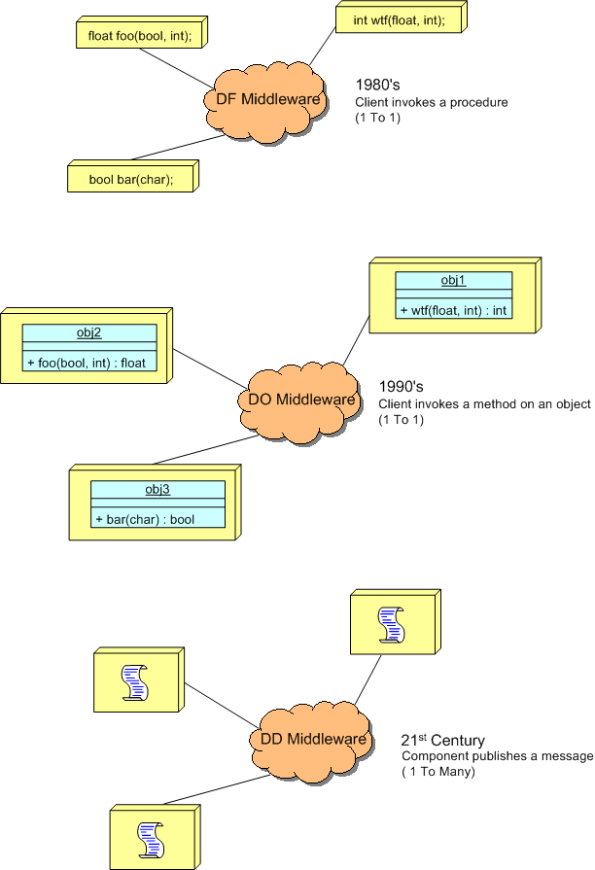

During a discussion on LinkedIn.com, the following distributed system communication architectural “styles” came up:

- DF == Distributed Functions

- DO == Distributed Objects

- DD == Distributed Data

I felt the need to draw a picture of them, so here it is:

The DF and DO styles are point-to-point, client-server oriented. In DF, client functions invoke functions on remotely located servers; in DO, client object methods invoke the methods of objects living on remotely located servers.
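To make the DF/DO contrast concrete, here is a minimal in-process sketch. It assumes a hypothetical `Server` class standing in for the remote node; no real network or RPC library is involved. A DF client names a remote function, while a DO client holds a proxy handle to a remote object and calls its methods. In both cases the client knows the server’s identity and signatures and initiates every exchange.

```python
from typing import Any, Callable, Dict, Optional


class Server:
    """Hypothetical remote node hosting free functions (DF) and objects (DO)."""

    def __init__(self) -> None:
        self.functions: Dict[str, Callable[..., Any]] = {}
        self.objects: Dict[str, Any] = {}

    def handle(self, target: str, method: Optional[str], args: tuple) -> Any:
        # A DF request names only a function; a DO request names an object and a method.
        if method is None:
            return self.functions[target](*args)
        return getattr(self.objects[target], method)(*args)


class Counter:
    """Server-side object whose methods DO clients invoke remotely."""

    def __init__(self) -> None:
        self.value = 0

    def increment(self, by: int) -> int:
        self.value += by
        return self.value


class RemoteProxy:
    """DO-style client handle: every method call is forwarded to one server object."""

    def __init__(self, server: Server, object_id: str) -> None:
        self._server = server
        self._object_id = object_id

    def __getattr__(self, method: str) -> Callable[..., Any]:
        def call(*args: Any) -> Any:
            # A real system would marshal this request and send it over the wire.
            return self._server.handle(self._object_id, method, args)
        return call


def remote_call(server: Server, function: str, *args: Any) -> Any:
    """DF-style client stub: invoke a named function on the server."""
    return server.handle(function, None, args)


server = Server()
server.functions["add"] = lambda a, b: a + b
server.objects["counter-1"] = Counter()

print(remote_call(server, "add", 2, 3))  # DF: prints 5
counter = RemoteProxy(server, "counter-1")
print(counter.increment(4))              # DO: prints 4
print(counter.increment(1))              # DO: prints 5 (state lives on the server)
```

Note the coupling: the client must know the server object, the function or method names, and their signatures before it can communicate at all.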

The DD style is many-to-many, publisher-subscriber oriented. A publisher can be considered a sort of server and subscribers can be considered clients. The biggest difference is that instead of being client-triggered, communication is server-triggered in DD systems. When new data is available, it is published out onto the net for all subscribers to consume. The components in a DD system are more loosely coupled than those in DF and DO systems in that publishers don’t need to know anything (no handles or method signatures) about subscribers or vice versa: data is king. Nevertheless, there are applications where each of the three styles excels over the other two.
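For comparison with DF and DO, here is a minimal in-process publish-subscribe sketch of the DD style. The `DataBus` class and the topic names are hypothetical; real DD middleware (for example, implementations of the OMG DDS standard) adds networking, discovery, and QoS on top of this idea. Publishers and subscribers share only a topic name and the data format, and delivery is triggered by the publisher when new data appears.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List

Handler = Callable[[Any], None]


class DataBus:
    """Hypothetical many-to-many bus: topics decouple publishers from subscribers."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, sample: Any) -> int:
        # Server-triggered: new data is pushed to every current subscriber of the topic.
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(sample)
        return len(handlers)


bus = DataBus()
display_log: List[Any] = []
archive_log: List[Any] = []

# Two subscribers that know nothing about who publishes "track" data.
bus.subscribe("track", display_log.append)
bus.subscribe("track", archive_log.append)

# The publisher knows only the topic name, not the subscribers.
delivered = bus.publish("track", {"id": 7, "range_km": 12.5})
print(delivered)                        # prints 2
print(bus.publish("weather", "rain"))   # prints 0: no subscribers, publisher unaffected
```

Adding a third subscriber to "track" requires no change to the publisher, which is the loose coupling the paragraph above describes.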

Too Much Pressure

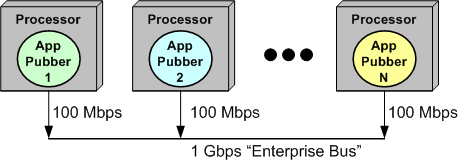

The figure below models an N node, “Enterprise Bus” (EB) connected, software-centric system.

Even though the “shared” EB supplies a raw, theoretical bandwidth of 1 Gbps to its connected user set, contention for frequent but temporary control of the bus among its clients increases as the number of message publisher (pubber) clients increases. Thus, the total effective bandwidth of the system as a whole decreases below the raw 1 Gbps rate as its utilization increases.

In the example, instead of accommodating up to ten 100 Mbps clients, the increasing “back pressure” exerted by the bus under the duress imposed by multiple, “talky” (you know, wife-like) clients will throttle the per-pubber message send rate to well below 100 Mbps.
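The arithmetic behind the example can be sketched with a toy fair-share model. This is not a measurement or a real bus model: the `efficiency` parameter (the fraction of the raw 1 Gbps that survives contention and arbitration overhead) is a hypothetical input, and the effective bandwidth is assumed to be split evenly among the active pubbers. With ten pubbers and perfect efficiency, each gets exactly 100 Mbps; any contention loss pushes every pubber below that.

```python
def per_pubber_rate_mbps(raw_mbps: float, pubbers: int, demand_mbps: float,
                         efficiency: float) -> float:
    """Toy fair-share model of a shared bus.

    efficiency is a hypothetical fraction in (0, 1] of the raw bandwidth left
    after contention; in practice it falls as utilization rises and must be measured.
    """
    if pubbers <= 0:
        raise ValueError("need at least one pubber")
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    effective_mbps = raw_mbps * efficiency
    # A pubber never sends faster than it wants to, nor faster than its even share.
    return min(demand_mbps, effective_mbps / pubbers)


# Ten 100 Mbps pubbers on a 1 Gbps bus at a few assumed efficiency levels.
for eff in (1.0, 0.8, 0.6):
    print(eff, per_pubber_rate_mbps(1000.0, 10, 100.0, eff))
```

The model only shows why "10 x 100 Mbps = 1 Gbps" is a best case; the real efficiency curve for a given bus and traffic mix has to come from testing.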

I recently found this out the hard way – through time-consuming testing and making sense out of the “unexpected” results I was getting. Bummer.

Cpp, Java, Go, Scala Performance

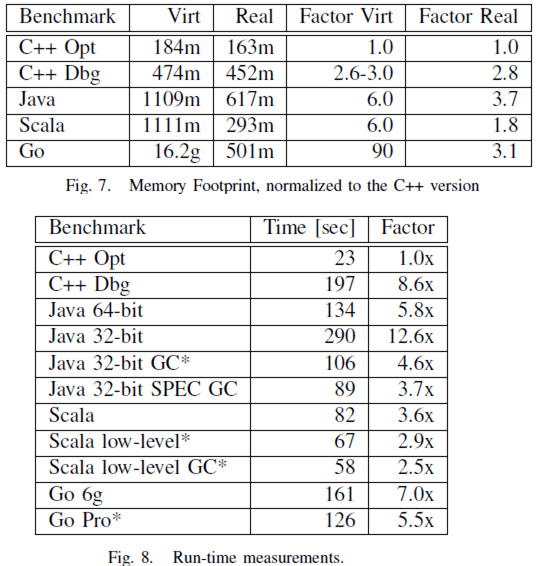

I recently stumbled upon this interesting paper on programming language performance from Google researcher Robert Hundt: “Loop Recognition in C++/Java/Go/Scala”. You can read the details if you’d like, but here are the abstract, the conclusions, and a couple of the many performance tables in the report.

[Images not preserved: Abstract; Conclusions; Runtime Memory Footprint And Performance Tables]

Of course, whenever you read anything that’s potentially controversial, consider the sources and their agendas. I am a C++ programmer.